Using Adapters at Hugging Face

Note: Adapters has replaced the adapter-transformers library and is fully compatible in terms of model weights. See here for more.

Adapters is an add-on library to 🤗 transformers for efficiently fine-tuning pre-trained language models using adapters and other parameter-efficient methods.

Adapters also provides various methods for composition of adapter modules during training and inference.

You can learn more about this in the Adapters paper.

Exploring Adapters on the Hub

You can find Adapters models by filtering at the left of the models page. Some adapter models can be found in the Adapter Hub repository. Models from both sources are aggregated on the AdapterHub website.

Installation

To get started, you can refer to the AdapterHub installation guide. You can also use the following one-line install through pip:

    pip install adapters

Using existing models
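Before loading any adapters, it can help to confirm that the package is importable in the current environment. The following is a minimal sketch using only the Python standard library; the helper name is_importable is hypothetical and not part of Adapters.

```python
import importlib.util


def is_importable(module_name):
    """Return True if the import system can locate `module_name`."""
    # find_spec() looks the module up without importing it.
    return importlib.util.find_spec(module_name) is not None


if is_importable("adapters"):
    print("adapters is available")
else:
    print("adapters is missing; install it with: pip install adapters")
```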

For a full guide on loading pre-trained adapters, we recommend checking out the official guide.

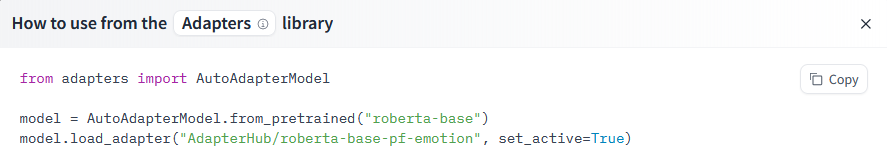

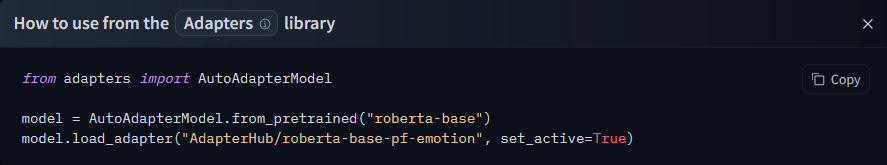

As a brief summary, a full setup consists of three steps:

1. Load a base transformers model with the AutoAdapterModel class provided by Adapters.
2. Use the load_adapter() method to load and add an adapter.
3. Activate the adapter via active_adapters (for inference) or activate and set it as trainable via train_adapter() (for training). Make sure to also check out composition of adapters.

    from adapters import AutoAdapterModel

    # 1. Load the base model with adapter support
    model = AutoAdapterModel.from_pretrained("FacebookAI/roberta-base")
    # 2. Load the adapter and add it to the model
    adapter_name = model.load_adapter("AdapterHub/roberta-base-pf-imdb")
    # 3. Activate the adapter for inference
    model.active_adapters = adapter_name
    # or, for training: model.train_adapter(adapter_name)

You can also use list_adapters to find all adapter models programmatically:

    from adapters import list_adapters

    # source can be "ah" (AdapterHub), "hf" (hf.co) or None (for both, default)
    adapter_infos = list_adapters(source="hf", model_name="FacebookAI/roberta-base")

If you want to see how to load a specific model, you can click Use in Adapters and you will be given a working snippet to load it!
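The three setup steps from this section can be wrapped in a small helper that switches between inference and training. This is only a sketch: setup_adapter and _FakeModel are hypothetical names, and the stand-in class exists so the example runs without downloading any weights. The helper relies only on load_adapter(), active_adapters and train_adapter(), as described in the steps.

```python
def setup_adapter(model, adapter_id, training=False):
    """Load an adapter into `model`, activate it, and return its name."""
    # Step 2: load the adapter and add it to the model.
    adapter_name = model.load_adapter(adapter_id)
    if training:
        # Step 3 (training): activate the adapter and set it as trainable.
        model.train_adapter(adapter_name)
    else:
        # Step 3 (inference): only activate the adapter.
        model.active_adapters = adapter_name
    return adapter_name


class _FakeModel:
    """Hypothetical stand-in for a model loaded with AutoAdapterModel."""

    def __init__(self):
        self.active_adapters = None
        self.trainable = None

    def load_adapter(self, adapter_id):
        # Pretend the adapter is registered under the last path component.
        return adapter_id.split("/")[-1]

    def train_adapter(self, adapter_name):
        self.active_adapters = adapter_name
        self.trainable = adapter_name


inference_model = _FakeModel()
name = setup_adapter(inference_model, "AdapterHub/roberta-base-pf-imdb")

training_model = _FakeModel()
setup_adapter(training_model, "AdapterHub/roberta-base-pf-imdb", training=True)
```

With a real model, the same helper would be called on the object returned by AutoAdapterModel.from_pretrained().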

Sharing your models

For a full guide on sharing models with Adapters, we recommend checking out the official guide.

You can share your adapter by using the push_adapter_to_hub method from a model that already contains an adapter.

    model.push_adapter_to_hub(
        "my-awesome-adapter",  # name of the repository to create on the Hub
        "awesome_adapter",  # name of the adapter inside the model
        adapterhub_tag="sentiment/imdb",
        datasets_tag="imdb",
    )

This command creates a repository with an automatically generated model card and all necessary metadata.
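Once pushed, the adapter can be loaded again by its repository id. The sketch below wraps the upload and returns that id, under the assumption that the repository is created in the uploading user's own namespace as username/repository-name. The names share_adapter, _RecordingModel and "my-username" are hypothetical; the stand-in class records the call instead of uploading anything.

```python
def share_adapter(model, username, repo_name, adapter_name, datasets_tag=None):
    """Push an adapter and return the repository id to load it from later."""
    model.push_adapter_to_hub(repo_name, adapter_name, datasets_tag=datasets_tag)
    # Assumption: the repository lives under the user's own namespace.
    return f"{username}/{repo_name}"


class _RecordingModel:
    """Hypothetical stand-in that records the push instead of uploading."""

    def __init__(self):
        self.pushed = []

    def push_adapter_to_hub(self, repo_name, adapter_name, **kwargs):
        self.pushed.append((repo_name, adapter_name, kwargs))


model = _RecordingModel()
repo_id = share_adapter(
    model, "my-username", "my-awesome-adapter", "awesome_adapter", datasets_tag="imdb"
)
print(repo_id)
```

With a real model, the returned id could then be passed to load_adapter() in another session.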

Additional resources

- Adapters repository

- Adapters docs

- Adapters paper

- Integration with Hub docs