ImageGPT

Overview

The ImageGPT model was proposed in Generative Pretraining from Pixels by Mark Chen, Alec Radford, Rewon Child, Jeffrey Wu, Heewoo Jun, David Luan, Ilya Sutskever. ImageGPT (iGPT) is a GPT-2-like model trained to predict the next pixel value, allowing for both unconditional and conditional image generation.

The abstract from the paper is the following:

Inspired by progress in unsupervised representation learning for natural language, we examine whether similar models can learn useful representations for images. We train a sequence Transformer to auto-regressively predict pixels, without incorporating knowledge of the 2D input structure. Despite training on low-resolution ImageNet without labels, we find that a GPT-2 scale model learns strong image representations as measured by linear probing, fine-tuning, and low-data classification. On CIFAR-10, we achieve 96.3% accuracy with a linear probe, outperforming a supervised Wide ResNet, and 99.0% accuracy with full fine-tuning, matching the top supervised pre-trained models. We are also competitive with self-supervised benchmarks on ImageNet when substituting pixels for a VQVAE encoding, achieving 69.0% top-1 accuracy on a linear probe of our features.

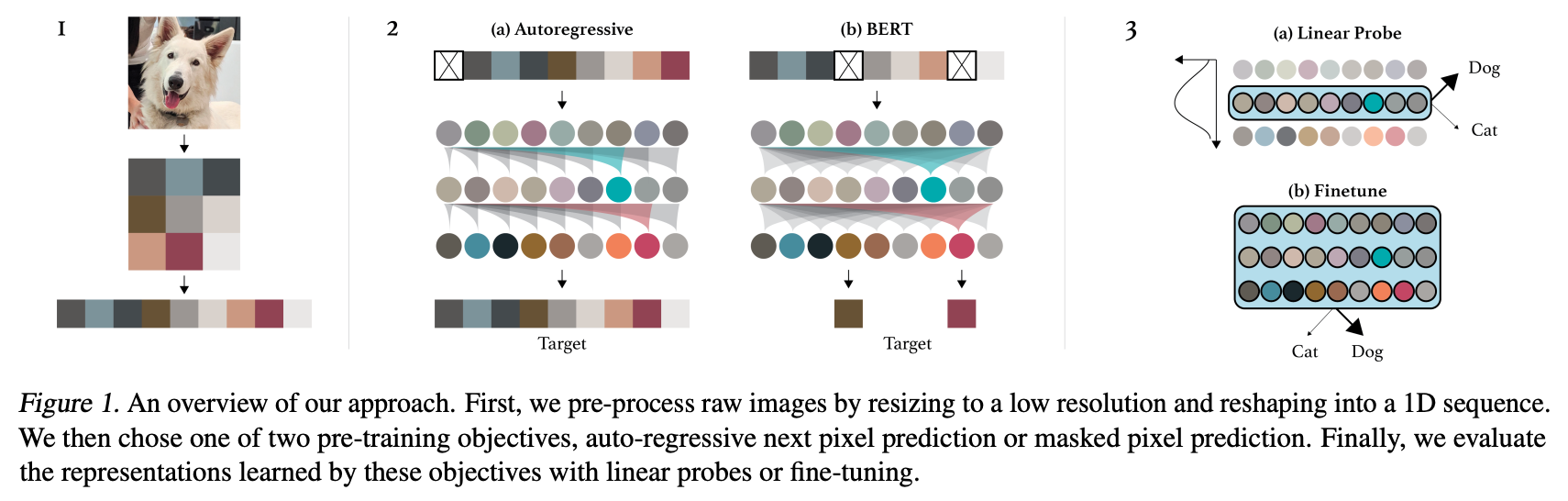

Summary of the approach. Taken from the [original paper](https://cdn.openai.com/papers/Generative_Pretraining_from_Pixels_V2.pdf).

This model was contributed by nielsr, based on this issue. The original code can be found here.

Tips:

- Demo notebooks for ImageGPT can be found here.

- ImageGPT is almost exactly the same as GPT-2, with the exception that a different activation function is used (namely “quick gelu”), and the layer normalization layers don’t mean center the inputs. ImageGPT also doesn’t have tied input- and output embeddings.

- As the time and memory requirements of the attention mechanism of Transformers scale quadratically with the sequence length, the authors pre-trained ImageGPT on smaller input resolutions, such as 32x32 and 64x64. However, feeding a sequence of 32x32x3=3072 tokens with values in 0..255 into a Transformer is still prohibitively expensive. Therefore, the authors applied k-means clustering to the (R,G,B) pixel values with k=512. This way, we only have a 32*32 = 1024-long sequence, but now of integers in the range 0..511. So we are shrinking the sequence length at the cost of a bigger embedding matrix. In other words, the vocabulary size of ImageGPT is 512, + 1 for a special “start of sentence” (SOS) token, used at the beginning of every sequence. One can use ImageGPTFeatureExtractor to prepare images for the model.

- Despite being pre-trained entirely unsupervised (i.e. without the use of any labels), ImageGPT produces fairly performant image features useful for downstream tasks, such as image classification. The authors showed that the features in the middle of the network are the most performant, and can be used as-is to train a linear model (such as a sklearn logistic regression model). This is also referred to as “linear probing”. Features can be easily obtained by forwarding the image through the model with output_hidden_states=True and then average-pooling the hidden states at whatever layer you like.

- Alternatively, one can further fine-tune the entire model on a downstream dataset, similar to BERT. For this, you can use ImageGPTForImageClassification.

- ImageGPT comes in different sizes: ImageGPT-small, ImageGPT-medium and ImageGPT-large. The authors also trained an XL variant, which they did not release. The differences in size, as reported in the paper, are summarized in the following table:

| Model variant | Layers | Hidden size | Params |
|---|---|---|---|
| ImageGPT-small | 24 | 512 | 76M |
| ImageGPT-medium | 36 | 1024 | 455M |
| ImageGPT-large | 48 | 1536 | 1.4B |
| ImageGPT-XL (not released) | 60 | 3072 | 6.8B |
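The color-quantization step described in the tips above can be sketched as a nearest-centroid lookup. This is only a minimal illustration, not the library's implementation: the four-color `palette` is a made-up stand-in for the 512 k-means clusters, and `squared_distance` and `quantize` are hypothetical helper names.

```python
# Hypothetical 4-color palette standing in for the 512 k-means clusters.
palette = [
    (0, 0, 0),        # token 0: black
    (255, 0, 0),      # token 1: red
    (0, 255, 0),      # token 2: green
    (255, 255, 255),  # token 3: white
]


def squared_distance(a, b):
    """Squared Euclidean distance between two (R, G, B) triples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(pixels, clusters):
    """Map each (R, G, B) pixel to the index of its nearest cluster."""
    return [
        min(range(len(clusters)), key=lambda i: squared_distance(p, clusters[i]))
        for p in pixels
    ]


# A 2x2 "image" flattened in raster order: 4 pixels become 4 tokens.
image = [(10, 5, 0), (250, 20, 10), (3, 240, 12), (245, 250, 251)]
tokens = quantize(image, palette)

# Sequence lengths for a 32x32 RGB image, without and with quantization.
raw_length = 32 * 32 * 3    # one token per channel value
quantized_length = 32 * 32  # one token per pixel
```

Each pixel collapses to a single integer token, which is why the sequence is three times shorter than the raw channel-by-channel encoding.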

ImageGPTConfig

class transformers.ImageGPTConfig

( vocab_size = 513, n_positions = 1024, n_embd = 512, n_layer = 24, n_head = 8, n_inner = None, activation_function = 'quick_gelu', resid_pdrop = 0.1, embd_pdrop = 0.1, attn_pdrop = 0.1, layer_norm_epsilon = 1e-05, initializer_range = 0.02, scale_attn_weights = True, use_cache = True, tie_word_embeddings = False, scale_attn_by_inverse_layer_idx = False, reorder_and_upcast_attn = False, **kwargs )

Parameters

- vocab_size (int, optional, defaults to 513) — Vocabulary size of the ImageGPT model. Defines the number of different tokens that can be represented by the input_ids passed when calling ImageGPTModel or TFImageGPTModel.

- n_positions (int, optional, defaults to 32*32) — The maximum sequence length that this model might ever be used with. Typically set this to something large just in case (e.g., 512 or 1024 or 2048).

- n_embd (int, optional, defaults to 512) — Dimensionality of the embeddings and hidden states.

- n_layer (int, optional, defaults to 24) — Number of hidden layers in the Transformer encoder.

- n_head (int, optional, defaults to 8) — Number of attention heads for each attention layer in the Transformer encoder.

- n_inner (int, optional, defaults to None) — Dimensionality of the inner feed-forward layers. None will set it to 4 times n_embd.

- activation_function (str, optional, defaults to "quick_gelu") — Activation function (can be one of the activation functions defined in src/transformers/activations.py).

- resid_pdrop (float, optional, defaults to 0.1) — The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

- embd_pdrop (float, optional, defaults to 0.1) — The dropout ratio for the embeddings.

- attn_pdrop (float, optional, defaults to 0.1) — The dropout ratio for the attention.

- layer_norm_epsilon (float, optional, defaults to 1e-5) — The epsilon to use in the layer normalization layers.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- scale_attn_weights (bool, optional, defaults to True) — Scale attention weights by dividing by sqrt(hidden_size).

- use_cache (bool, optional, defaults to True) — Whether or not the model should return the last key/values attentions (not used by all models).

- scale_attn_by_inverse_layer_idx (bool, optional, defaults to False) — Whether to additionally scale attention weights by 1 / (layer_idx + 1).

- reorder_and_upcast_attn (bool, optional, defaults to False) — Whether to scale keys (K) prior to computing attention (dot-product) and upcast attention dot-product/softmax to float() when training with mixed precision.

This is the configuration class to store the configuration of an ImageGPTModel or a TFImageGPTModel. It is used to instantiate an ImageGPT model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the ImageGPT small architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.
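Two of the differences from GPT-2 mentioned in the tips, the “quick gelu” activation (the default activation_function) and layer normalization without mean centering, can be illustrated with a small pure-Python sketch. The formulas below follow the commonly used definitions (x · sigmoid(1.702 · x) and a root-mean-square normalization) and should be read as assumptions, not as a copy of the library code; the function names are hypothetical.

```python
import math


def quick_gelu(x):
    """Quick GELU approximation: x * sigmoid(1.702 * x)."""
    return x / (1.0 + math.exp(-1.702 * x))


def layer_norm_no_centering(values, weight=None, eps=1e-5):
    """Scale by the root mean square without subtracting the mean first."""
    mean_square = sum(v * v for v in values) / len(values)
    scale = 1.0 / math.sqrt(mean_square + eps)
    if weight is None:
        weight = [1.0] * len(values)
    return [v * scale * w for v, w in zip(values, weight)]


hidden = [1.0, 2.0, 3.0, 4.0]
activated = [quick_gelu(h) for h in hidden]
normalized = layer_norm_no_centering(hidden)
```

Because the mean is not subtracted, the normalized vector keeps a non-zero mean while its mean square is rescaled to roughly 1.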

Example:

>>> from transformers import ImageGPTModel, ImageGPTConfig

>>> # Initializing a ImageGPT configuration

>>> configuration = ImageGPTConfig()

>>> # Initializing a model from the configuration

>>> model = ImageGPTModel(configuration)

>>> # Accessing the model configuration

>>> configuration = model.config

ImageGPTFeatureExtractor

class transformers.ImageGPTFeatureExtractor

( clusters, do_resize = True, size = 32, resample = 2, do_normalize = True, **kwargs )

Parameters

- clusters (np.ndarray) — The color clusters to use, as a np.ndarray of shape (n_clusters, 3).

- do_resize (bool, optional, defaults to True) — Whether to resize the input to a certain size.

- size (int or Tuple(int), optional, defaults to 32) — Resize the input to the given size. If a tuple is provided, it should be (width, height). If only an integer is provided, then the input will be resized to (size, size). Only has an effect if do_resize is set to True.

- resample (int, optional, defaults to PIL.Image.BILINEAR) — An optional resampling filter. This can be one of PIL.Image.NEAREST, PIL.Image.BOX, PIL.Image.BILINEAR, PIL.Image.HAMMING, PIL.Image.BICUBIC or PIL.Image.LANCZOS. Only has an effect if do_resize is set to True.

- do_normalize (bool, optional, defaults to True) — Whether or not to normalize the input to the range between -1 and +1.

Constructs an ImageGPT feature extractor. This feature extractor can be used to resize images to a smaller resolution (such as 32x32 or 64x64), normalize them and finally color quantize them to obtain sequences of “pixel values” (color clusters).
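The normalization step maps 8-bit channel values into the range -1 to +1, and the generation example for ImageGPTForCausalImageModeling inverts it with `127.5 * (clusters[s] + 1.0)`. A sketch of that round trip follows. It assumes normalization is `value / 127.5 - 1`, which is inferred from the inverse used in that example and not taken from the feature extractor's source. The helper names are hypothetical.

```python
def normalize(value):
    """Map an 8-bit channel value (0..255) to the range [-1, 1]."""
    return value / 127.5 - 1.0


def denormalize(value):
    """Invert `normalize` and round back to an 8-bit integer."""
    return int(round(127.5 * (value + 1.0)))


channel_values = [0, 64, 128, 255]
normalized = [normalize(v) for v in channel_values]
restored = [denormalize(n) for n in normalized]
```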

This feature extractor inherits from FeatureExtractionMixin which contains most of the main methods. Users should refer to this superclass for more information regarding those methods.

__call__

( images: typing.Union[PIL.Image.Image, numpy.ndarray, ForwardRef('torch.Tensor'), typing.List[PIL.Image.Image], typing.List[numpy.ndarray], typing.List[ForwardRef('torch.Tensor')]], return_tensors: typing.Union[str, transformers.file_utils.TensorType, NoneType] = None, **kwargs ) → BatchFeature

Parameters

- images (PIL.Image.Image, np.ndarray, torch.Tensor, List[PIL.Image.Image], List[np.ndarray], List[torch.Tensor]) — The image or batch of images to be prepared. Each image can be a PIL image, NumPy array or PyTorch tensor. In case of a NumPy array/PyTorch tensor, each image should be of shape (C, H, W), where C is the number of channels, and H and W are the image height and width.

- return_tensors (str or TensorType, optional, defaults to 'np') — If set, will return tensors of a particular framework. Acceptable values are:
  - 'tf': Return TensorFlow tf.constant objects.
  - 'pt': Return PyTorch torch.Tensor objects.
  - 'np': Return NumPy np.ndarray objects.
  - 'jax': Return JAX jnp.ndarray objects.

Returns

A BatchFeature with the following fields:

- pixel_values — Pixel values to be fed to a model, of shape (batch_size, num_channels, height, width).

Main method to prepare for the model one or several image(s).

NumPy arrays and PyTorch tensors are converted to PIL images when resizing, so it is most efficient to pass PIL images.

ImageGPTModel

class transformers.ImageGPTModel

( config )

Parameters

- config (ImageGPTConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare ImageGPT Model transformer outputting raw hidden-states without any specific head on top.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( input_ids = None, past_key_values = None, attention_mask = None, token_type_ids = None, position_ids = None, head_mask = None, inputs_embeds = None, encoder_hidden_states = None, encoder_attention_mask = None, use_cache = None, output_attentions = None, output_hidden_states = None, return_dict = None, **kwargs ) → transformers.modeling_outputs.BaseModelOutputWithPastAndCrossAttentions or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, sequence_length)) — input_ids_length = sequence_length if past_key_values is None else past_key_values[0][0].shape[-2] (sequence_length of input past key value states). Indices of input sequence tokens in the vocabulary.

  If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids.

  Indices can be obtained using ImageGPTFeatureExtractor. See ImageGPTFeatureExtractor.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.

- token_type_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix.

  If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (torch.LongTensor of shape (batch_size, sequence_length), optional) — Labels for language modeling. Note that the labels are shifted inside the model, i.e. you can set labels = input_ids. Indices are selected in [-100, 0, ..., config.vocab_size]. All labels set to -100 are ignored (masked); the loss is only computed for labels in [0, ..., config.vocab_size].

Returns

transformers.modeling_outputs.BaseModelOutputWithPastAndCrossAttentions or tuple(torch.FloatTensor)

A transformers.modeling_outputs.BaseModelOutputWithPastAndCrossAttentions or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ImageGPTConfig) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

  If past_key_values is used, only the last hidden-state of the sequences of shape (batch_size, 1, hidden_size) is output.

- past_key_values (tuple(tuple(torch.FloatTensor)), optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of tuple(torch.FloatTensor) of length config.n_layers, with each tuple having 2 tensors of shape (batch_size, num_heads, sequence_length, embed_size_per_head) and optionally, if config.is_encoder_decoder=True, 2 additional tensors of shape (batch_size, num_heads, encoder_sequence_length, embed_size_per_head).

  Contains pre-computed hidden-states (key and values in the self-attention blocks and optionally, if config.is_encoder_decoder=True, in the cross-attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.

- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True and config.add_cross_attention=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights of the decoder’s cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.

The ImageGPTModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from transformers import ImageGPTFeatureExtractor, ImageGPTModel

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = ImageGPTFeatureExtractor.from_pretrained("openai/imagegpt-small")

>>> model = ImageGPTModel.from_pretrained("openai/imagegpt-small")

>>> inputs = feature_extractor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> last_hidden_states = outputs.last_hidden_state

ImageGPTForCausalImageModeling

class transformers.ImageGPTForCausalImageModeling

( config )

Parameters

- config (ImageGPTConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The ImageGPT Model transformer with a language modeling head on top (a linear layer; unlike in GPT-2, its weights are not tied to the input embeddings by default, since tie_word_embeddings defaults to False).

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( input_ids = None, past_key_values = None, attention_mask = None, token_type_ids = None, position_ids = None, head_mask = None, inputs_embeds = None, encoder_hidden_states = None, encoder_attention_mask = None, labels = None, use_cache = None, output_attentions = None, output_hidden_states = None, return_dict = None, **kwargs ) → transformers.modeling_outputs.CausalLMOutputWithCrossAttentions or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, sequence_length)) — input_ids_length = sequence_length if past_key_values is None else past_key_values[0][0].shape[-2] (sequence_length of input past key value states). Indices of input sequence tokens in the vocabulary.

  If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids.

  Indices can be obtained using ImageGPTFeatureExtractor. See ImageGPTFeatureExtractor.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.

- token_type_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix.

  If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (torch.LongTensor of shape (batch_size, sequence_length), optional) — Labels for language modeling. Note that the labels are shifted inside the model, i.e. you can set labels = input_ids. Indices are selected in [-100, 0, ..., config.vocab_size]. All labels set to -100 are ignored (masked); the loss is only computed for labels in [0, ..., config.vocab_size].
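The note that labels are shifted inside the model means the scores at position t are compared against the token at position t + 1, and targets set to -100 are skipped. The pure-Python sketch below illustrates that convention on a toy vocabulary of 3 tokens. The helper names are hypothetical and this shows the idea only, not the library's loss code.

```python
import math

IGNORE_INDEX = -100  # labels with this value do not contribute to the loss


def log_softmax(logits):
    """Numerically stable log-softmax over one list of scores."""
    peak = max(logits)
    log_total = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return [x - log_total for x in logits]


def shifted_lm_loss(logits, labels):
    """Mean cross-entropy where logits[t] is scored against labels[t + 1]."""
    losses = []
    for step_logits, target in zip(logits[:-1], labels[1:]):
        if target == IGNORE_INDEX:
            continue
        losses.append(-log_softmax(step_logits)[target])
    return sum(losses) / len(losses) if losses else 0.0


input_ids = [2, 0, 1]
# Uniform scores at every position: each scored prediction costs log(3).
uniform_logits = [[0.0, 0.0, 0.0] for _ in input_ids]
loss = shifted_lm_loss(uniform_logits, labels=input_ids)

# Masking the final target leaves a single scored position.
masked_loss = shifted_lm_loss(uniform_logits, labels=[2, 0, IGNORE_INDEX])
```

Passing the same sequence as both input and labels works because the shift happens inside the loss: the first label is never used as a target, and the last position's scores are never evaluated.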

Returns

transformers.modeling_outputs.CausalLMOutputWithCrossAttentions or tuple(torch.FloatTensor)

A transformers.modeling_outputs.CausalLMOutputWithCrossAttentions or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ImageGPTConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Language modeling loss (for next-token prediction).

- logits (torch.FloatTensor of shape (batch_size, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.

- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Cross attentions weights after the attention softmax, used to compute the weighted average in the cross-attention heads.

- past_key_values (tuple(tuple(torch.FloatTensor)), optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of torch.FloatTensor tuples of length config.n_layers, with each tuple containing the cached key, value states of the self-attention and the cross-attention layers if the model is used in an encoder-decoder setting. Only relevant if config.is_decoder = True.

  Contains pre-computed hidden-states (key and values in the attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

The ImageGPTForCausalImageModeling forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from transformers import ImageGPTFeatureExtractor, ImageGPTForCausalImageModeling

>>> import torch

>>> import matplotlib.pyplot as plt

>>> import numpy as np

>>> feature_extractor = ImageGPTFeatureExtractor.from_pretrained("openai/imagegpt-small")

>>> model = ImageGPTForCausalImageModeling.from_pretrained("openai/imagegpt-small")

>>> device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

>>> model.to(device)

>>> # unconditional generation of 8 images

>>> batch_size = 8

>>> context = torch.full((batch_size, 1), model.config.vocab_size - 1)  # initialize with SOS token

>>> context = context.to(device)  # already a tensor, so only move it to the device

>>> output = model.generate(

...     input_ids=context, max_length=model.config.n_positions + 1, temperature=1.0, do_sample=True, top_k=40

... )

>>> clusters = feature_extractor.clusters

>>> n_px = feature_extractor.size

>>> samples = output[:, 1:].cpu().detach().numpy()

>>> samples_img = [

...     np.reshape(np.rint(127.5 * (clusters[s] + 1.0)), [n_px, n_px, 3]).astype(np.uint8) for s in samples

... ]  # convert color cluster tokens back to pixels

>>> f, axes = plt.subplots(1, batch_size, dpi=300)

>>> for img, ax in zip(samples_img, axes):

...     ax.axis("off")

...     ax.imshow(img)

ImageGPTForImageClassification

class transformers.ImageGPTForImageClassification

( config )

Parameters

- config (ImageGPTConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The ImageGPT Model transformer with an image classification head on top (linear layer). ImageGPTForImageClassification average-pools the hidden states in order to do the classification.
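Average pooling here means taking the mean of the hidden states over the sequence dimension, which leaves one feature vector per image for a linear layer to classify. The sketch below uses plain Python lists. The helper names, toy sizes and weights are invented for illustration and are not taken from the model.

```python
def average_pool(hidden_states):
    """Mean over the sequence axis of a (sequence_length, hidden_size) list."""
    sequence_length = len(hidden_states)
    hidden_size = len(hidden_states[0])
    return [
        sum(token[d] for token in hidden_states) / sequence_length
        for d in range(hidden_size)
    ]


def linear(features, weights, bias):
    """One logit per class: dot(weights[c], features) + bias[c]."""
    return [
        sum(w * f for w, f in zip(row, features)) + b
        for row, b in zip(weights, bias)
    ]


# Toy hidden states: 3 tokens, hidden size 2.
hidden_states = [[1.0, 4.0], [2.0, 5.0], [3.0, 6.0]]
pooled = average_pool(hidden_states)

# Hypothetical 2-class classification head.
logits = linear(pooled, weights=[[1.0, 0.0], [0.0, 1.0]], bias=[0.0, -1.0])
```

The same pooling applies to linear probing: pool the hidden states of a chosen layer, then fit any linear classifier on the pooled vectors.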

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( input_ids = None, past_key_values = None, attention_mask = None, token_type_ids = None, position_ids = None, head_mask = None, inputs_embeds = None, labels = None, use_cache = None, output_attentions = None, output_hidden_states = None, return_dict = None, **kwargs ) → transformers.modeling_outputs.SequenceClassifierOutputWithPast or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, sequence_length)) — input_ids_length = sequence_length if past_key_values is None else past_key_values[0][0].shape[-2] (sequence_length of input past key value states). Indices of input sequence tokens in the vocabulary.

  If past_key_values is used, only input_ids that do not have their past calculated should be passed as input_ids.

  Indices can be obtained using ImageGPTFeatureExtractor. See ImageGPTFeatureExtractor.__call__() for details.

- past_key_values (Tuple[Tuple[torch.Tensor]] of length config.n_layers) — Contains precomputed hidden-states (key and values in the attention blocks) as computed by the model (see past_key_values output below). Can be used to speed up sequential decoding. The input_ids which have their past given to this model should not be passed as input_ids as they have already been computed.

- attention_mask (torch.FloatTensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.

- token_type_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in [0, 1]:
  - 0 corresponds to a sentence A token,
  - 1 corresponds to a sentence B token.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:
  - 1 indicates the head is not masked,
  - 0 indicates the head is masked.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix.

  If past_key_values is used, optionally only the last inputs_embeds have to be input (see past_key_values).

- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (torch.LongTensor of shape (batch_size,), optional) — Labels for computing the sequence classification/regression loss. Indices should be in [0, ..., config.num_labels - 1]. If config.num_labels == 1, a regression loss is computed (Mean-Square loss). If config.num_labels > 1, a classification loss is computed (Cross-Entropy).
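The rule in the labels description (a mean-square loss when config.num_labels == 1, cross-entropy otherwise) can be sketched for a single example as follows. The function name and toy values are hypothetical, and the code only illustrates the selection rule, not the model's actual loss computation.

```python
import math


def classification_head_loss(logits, label, num_labels):
    """Pick the loss for a single example based on `num_labels`."""
    if num_labels == 1:
        # Regression: squared error between the single score and the target.
        return (logits[0] - label) ** 2
    # Classification: cross-entropy of the target class.
    peak = max(logits)
    log_total = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_total - logits[label]


regression_loss = classification_head_loss([2.5], label=2.0, num_labels=1)
classification_loss = classification_head_loss([0.0, 0.0], label=1, num_labels=2)
```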

Returns

transformers.modeling_outputs.SequenceClassifierOutputWithPast or tuple(torch.FloatTensor)

A transformers.modeling_outputs.SequenceClassifierOutputWithPast or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ImageGPTConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Classification (or regression if config.num_labels==1) loss.

- logits (torch.FloatTensor of shape (batch_size, config.num_labels)) — Classification (or regression if config.num_labels==1) scores (before SoftMax).

- past_key_values (tuple(tuple(torch.FloatTensor)), optional, returned when use_cache=True is passed or when config.use_cache=True) — Tuple of tuple(torch.FloatTensor) of length config.n_layers, with each tuple having 2 tensors of shape (batch_size, num_heads, sequence_length, embed_size_per_head).

  Contains pre-computed hidden-states (key and values in the self-attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The ImageGPTForImageClassification forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from transformers import ImageGPTFeatureExtractor, ImageGPTForImageClassification

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = ImageGPTFeatureExtractor.from_pretrained("openai/imagegpt-small")

>>> model = ImageGPTForImageClassification.from_pretrained("openai/imagegpt-small")

>>> inputs = feature_extractor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> logits = outputs.logits