UPerNet

This model was released on 2018-07-26 and added to Hugging Face Transformers on 2023-01-16.

Overview

The UPerNet model was proposed in Unified Perceptual Parsing for Scene Understanding by Tete Xiao, Yingcheng Liu, Bolei Zhou, Yuning Jiang, Jian Sun. UPerNet is a general framework to effectively segment a wide range of concepts from images, leveraging any vision backbone like ConvNeXt or Swin.

The abstract from the paper is the following:

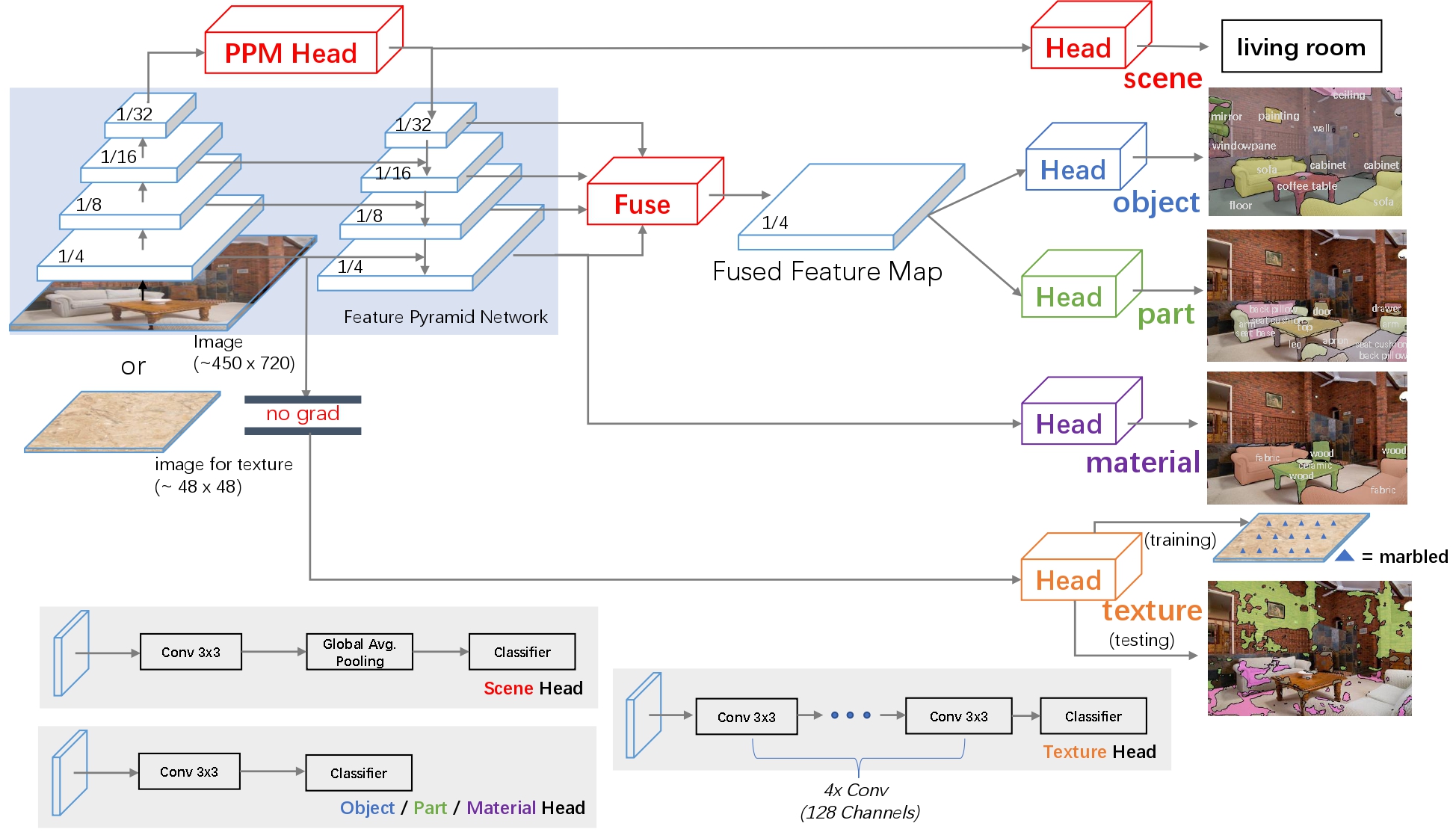

Humans recognize the visual world at multiple levels: we effortlessly categorize scenes and detect objects inside, while also identifying the textures and surfaces of the objects along with their different compositional parts. In this paper, we study a new task called Unified Perceptual Parsing, which requires the machine vision systems to recognize as many visual concepts as possible from a given image. A multi-task framework called UPerNet and a training strategy are developed to learn from heterogeneous image annotations. We benchmark our framework on Unified Perceptual Parsing and show that it is able to effectively segment a wide range of concepts from images. The trained networks are further applied to discover visual knowledge in natural scenes.

UPerNet framework. Taken from the original paper.

UPerNet framework. Taken from the original paper. This model was contributed by nielsr. The original code is based on OpenMMLab’s mmsegmentation here.

Usage examples

UPerNet is a general framework for semantic segmentation. It can be used with any vision backbone, like so:

from transformers import SwinConfig, UperNetConfig, UperNetForSemanticSegmentation

backbone_config = SwinConfig(out_features=["stage1", "stage2", "stage3", "stage4"])

config = UperNetConfig(backbone_config=backbone_config)

model = UperNetForSemanticSegmentation(config)

To use another vision backbone, like ConvNeXt, simply instantiate the model with the appropriate backbone:

from transformers import ConvNextConfig, UperNetConfig, UperNetForSemanticSegmentation

backbone_config = ConvNextConfig(out_features=["stage1", "stage2", "stage3", "stage4"])

config = UperNetConfig(backbone_config=backbone_config)

model = UperNetForSemanticSegmentation(config)

Note that this will randomly initialize all the weights of the model.

Resources

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with UPerNet.

- Demo notebooks for UPerNet can be found here.

- UperNetForSemanticSegmentation is supported by this example script and notebook.

- See also: Semantic segmentation task guide

If you’re interested in submitting a resource to be included here, please feel free to open a Pull Request and we’ll review it! The resource should ideally demonstrate something new instead of duplicating an existing resource.

UperNetConfig

class transformers.UperNetConfig

( backbone_config = None, backbone = None, use_pretrained_backbone = False, use_timm_backbone = False, backbone_kwargs = None, hidden_size = 512, initializer_range = 0.02, pool_scales = [1, 2, 3, 6], use_auxiliary_head = True, auxiliary_loss_weight = 0.4, auxiliary_in_channels = None, auxiliary_channels = 256, auxiliary_num_convs = 1, auxiliary_concat_input = False, loss_ignore_index = 255, **kwargs )

Parameters

- backbone_config (PretrainedConfig or dict, optional, defaults to ResNetConfig()) — The configuration of the backbone model.

- backbone (str, optional) — Name of backbone to use when backbone_config is None. If use_pretrained_backbone is True, this will load the corresponding pretrained weights from the timm or transformers library. If use_pretrained_backbone is False, this loads the backbone’s config and uses that to initialize the backbone with random weights.

- use_pretrained_backbone (bool, optional, defaults to False) — Whether to use pretrained weights for the backbone.

- use_timm_backbone (bool, optional, defaults to False) — Whether to load backbone from the timm library. If False, the backbone is loaded from the transformers library.

- backbone_kwargs (dict, optional) — Keyword arguments to be passed to AutoBackbone when loading from a checkpoint, e.g. {'out_indices': (0, 1, 2, 3)}. Cannot be specified if backbone_config is set.

- hidden_size (int, optional, defaults to 512) — The number of hidden units in the convolutional layers.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- pool_scales (tuple[int], optional, defaults to [1, 2, 3, 6]) — Pooling scales used in the Pooling Pyramid Module applied on the last feature map.

- use_auxiliary_head (bool, optional, defaults to True) — Whether to use an auxiliary head during training.

- auxiliary_loss_weight (float, optional, defaults to 0.4) — Weight of the cross-entropy loss of the auxiliary head.

- auxiliary_channels (int, optional, defaults to 256) — Number of channels to use in the auxiliary head.

- auxiliary_num_convs (int, optional, defaults to 1) — Number of convolutional layers to use in the auxiliary head.

- auxiliary_concat_input (bool, optional, defaults to False) — Whether to concatenate the output of the auxiliary head with the input before the classification layer.

- loss_ignore_index (int, optional, defaults to 255) — The index that is ignored by the loss function.
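To make pool_scales more concrete, the following is a minimal sketch of pyramid pooling in plain PyTorch. It is an illustration under stated assumptions, not the library's implementation: the pyramid_pool helper and the tensor sizes are hypothetical, and the per-scale convolutions that a full pooling pyramid module would normally apply are omitted. Each scale s average-pools the last feature map down to an s x s grid; the pooled maps are then upsampled back and concatenated with the input.

```python
import torch
import torch.nn.functional as F


def pyramid_pool(feature_map, pool_scales=(1, 2, 3, 6)):
    """Hypothetical helper: pool to each scale, upsample back, concatenate."""
    height, width = feature_map.shape[-2:]
    outputs = [feature_map]
    for scale in pool_scales:
        # Average-pool the feature map down to a (scale x scale) grid.
        pooled = F.adaptive_avg_pool2d(feature_map, output_size=scale)
        # Upsample back to the input resolution so all maps can be concatenated.
        upsampled = F.interpolate(
            pooled, size=(height, width), mode="bilinear", align_corners=False
        )
        outputs.append(upsampled)
    # Concatenate along the channel dimension.
    return torch.cat(outputs, dim=1)


# (batch, channels, height, width); sizes chosen only for illustration.
features = torch.randn(1, 8, 16, 16)
fused = pyramid_pool(features)
print(fused.shape)  # channel count is 8 * (1 + number of scales)
```

Coarser scales (such as 1) summarize global context, while finer scales (such as 6) keep more spatial detail, which is why several scales are combined.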

This is the configuration class to store the configuration of a UperNetForSemanticSegmentation. It is used to instantiate a UperNet model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the UperNet openmmlab/upernet-convnext-tiny architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.

Examples:

>>> from transformers import UperNetConfig, UperNetForSemanticSegmentation

>>> # Initializing a configuration

>>> configuration = UperNetConfig()

>>> # Initializing a model (with random weights) from the configuration

>>> model = UperNetForSemanticSegmentation(configuration)

>>> # Accessing the model configuration

>>> configuration = model.config

UperNetForSemanticSegmentation

class transformers.UperNetForSemanticSegmentation

( config )

Parameters

- config (UperNetConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

UperNet framework leveraging any vision backbone e.g. for ADE20k, CityScapes.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads, etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: typing.Optional[torch.Tensor] = None, output_attentions: typing.Optional[bool] = None, output_hidden_states: typing.Optional[bool] = None, labels: typing.Optional[torch.Tensor] = None, return_dict: typing.Optional[bool] = None ) → transformers.modeling_outputs.SemanticSegmenterOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.Tensor of shape (batch_size, num_channels, image_size, image_size), optional) — The tensors corresponding to the input images. Pixel values can be obtained using SegformerImageProcessor. See SegformerImageProcessor.__call__() for details (processor_class uses SegformerImageProcessor for processing images).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- labels (torch.LongTensor of shape (batch_size, height, width), optional) — Ground truth semantic segmentation maps for computing the loss. Indices should be in [0, ..., config.num_labels - 1]. If config.num_labels > 1, a classification loss is computed (Cross-Entropy).

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.
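As a rough illustration of how labels enter the loss, here is a small sketch in plain PyTorch. It assumes, based on the descriptions of auxiliary_loss_weight and loss_ignore_index in UperNetConfig, that the auxiliary cross-entropy is scaled by the weight and added to the main cross-entropy, and that pixels labeled with the ignore index are skipped. The random tensors stand in for the outputs of the two heads; none of this is taken from the library's source.

```python
import torch
import torch.nn.functional as F

num_labels = 3
ignore_index = 255           # mirrors the loss_ignore_index default
auxiliary_loss_weight = 0.4  # mirrors the auxiliary_loss_weight default

torch.manual_seed(0)
# Stand-ins for the main and auxiliary head outputs:
# shape (batch_size, num_labels, height, width).
logits = torch.randn(2, num_labels, 4, 4)
auxiliary_logits = torch.randn(2, num_labels, 4, 4)

# Labels have shape (batch_size, height, width) and integer class indices.
labels = torch.randint(0, num_labels, (2, 4, 4))
labels[:, 0, :] = ignore_index  # mark the first row of pixels as unlabeled

# Pixels equal to ignore_index do not contribute to either loss term.
main_loss = F.cross_entropy(logits, labels, ignore_index=ignore_index)
auxiliary_loss = F.cross_entropy(auxiliary_logits, labels, ignore_index=ignore_index)

# Assumed combination: main loss plus the weighted auxiliary loss.
total_loss = main_loss + auxiliary_loss_weight * auxiliary_loss
print(total_loss.item())
```

Because ignored pixels are dropped before averaging, changing the logits at those positions leaves the loss unchanged, which is convenient for datasets with unlabeled regions.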

Returns

transformers.modeling_outputs.SemanticSegmenterOutput or tuple(torch.FloatTensor)

A transformers.modeling_outputs.SemanticSegmenterOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (UperNetConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Classification (or regression if config.num_labels==1) loss.

- logits (torch.FloatTensor of shape (batch_size, config.num_labels, logits_height, logits_width)) — Classification scores for each pixel.

  The logits returned do not necessarily have the same size as the pixel_values passed as inputs. This is to avoid doing two interpolations and losing some quality when a user needs to resize the logits to the original image size as post-processing. You should always check your logits shape and resize as needed.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, patch_size, hidden_size).

  Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, patch_size, sequence_length).

  Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.
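Since the logits may not match the input resolution, a common post-processing step is to resize them to the original image size and take the per-pixel argmax. The sketch below shows one way to do this in plain PyTorch. The random tensor is a stand-in for outputs.logits, and both its shape and original_size are made-up values for illustration.

```python
import torch
import torch.nn.functional as F

# Stand-in for outputs.logits: (batch_size, num_labels, logits_height, logits_width).
logits = torch.randn(1, 150, 128, 128)

# (height, width) of the hypothetical original image.
original_size = (480, 640)

# Always check the logits shape before deciding whether to resize.
print(logits.shape[-2:])

# Resize the class scores once, directly to the target resolution.
upsampled_logits = F.interpolate(
    logits, size=original_size, mode="bilinear", align_corners=False
)

# Pick the highest-scoring class per pixel; drop the batch dimension.
segmentation_map = upsampled_logits.argmax(dim=1)[0]
print(segmentation_map.shape)
```

Resizing the logits before the argmax, rather than resizing the predicted label map, keeps the interpolation on continuous scores instead of on discrete class indices.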

The UperNetForSemanticSegmentation forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from transformers import AutoImageProcessor, UperNetForSemanticSegmentation

>>> from PIL import Image

>>> from huggingface_hub import hf_hub_download

>>> image_processor = AutoImageProcessor.from_pretrained("openmmlab/upernet-convnext-tiny")

>>> model = UperNetForSemanticSegmentation.from_pretrained("openmmlab/upernet-convnext-tiny")

>>> filepath = hf_hub_download(

... repo_id="hf-internal-testing/fixtures_ade20k", filename="ADE_val_00000001.jpg", repo_type="dataset"

... )

>>> image = Image.open(filepath).convert("RGB")

>>> inputs = image_processor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> logits = outputs.logits # shape (batch_size, num_labels, height, width)

>>> list(logits.shape)

[1, 150, 512, 512]