KOSMOS-2

This model was released on 2023-06-26 and added to Hugging Face Transformers on 2023-10-30.

Overview

The KOSMOS-2 model was proposed in Kosmos-2: Grounding Multimodal Large Language Models to the World by Zhiliang Peng, Wenhui Wang, Li Dong, Yaru Hao, Shaohan Huang, Shuming Ma, Furu Wei.

KOSMOS-2 is a Transformer-based causal language model and is trained using the next-word prediction task on a web-scale dataset of grounded image-text pairs GRIT. The spatial coordinates of the bounding boxes in the dataset are converted to a sequence of location tokens, which are appended to their respective entity text spans (for example, a snowman followed by <patch_index_0044><patch_index_0863>). The data format is similar to “hyperlinks” that connect the object regions in an image to their text span in the corresponding caption.
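As a rough illustration of how a pair of location tokens can map back to a box, the sketch below decodes two patch indices into normalized (x1, y1, x2, y2) coordinates. It assumes a 32 x 32 grid (1024 location tokens, numbered row by row) and places each corner at the center of the referenced cell. The helper name patch_pair_to_box is hypothetical, and the library's own post-processing may treat edge cases (for example, both tokens pointing at the same cell) differently.

```python
def patch_pair_to_box(top_left_idx, bottom_right_idx, grid_size=32):
    """Decode two patch indices into a normalized (x1, y1, x2, y2) box.

    Assumption: indices enumerate a grid_size x grid_size grid row by row,
    and each corner sits at the center of its cell.
    """
    cell = 1.0 / grid_size

    def cell_center(idx):
        row, col = divmod(idx, grid_size)
        return (col + 0.5) * cell, (row + 0.5) * cell

    x1, y1 = cell_center(top_left_idx)
    x2, y2 = cell_center(bottom_right_idx)
    return (x1, y1, x2, y2)


# <patch_index_0044><patch_index_0863>, the tokens attached to "a snowman"
snowman_box = patch_pair_to_box(44, 863)
print(snowman_box)  # (0.390625, 0.046875, 0.984375, 0.828125)
```

Under these assumptions the decoded box agrees with the coordinates reported for "a snowman" in the usage example on this page.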

The abstract from the paper is the following:

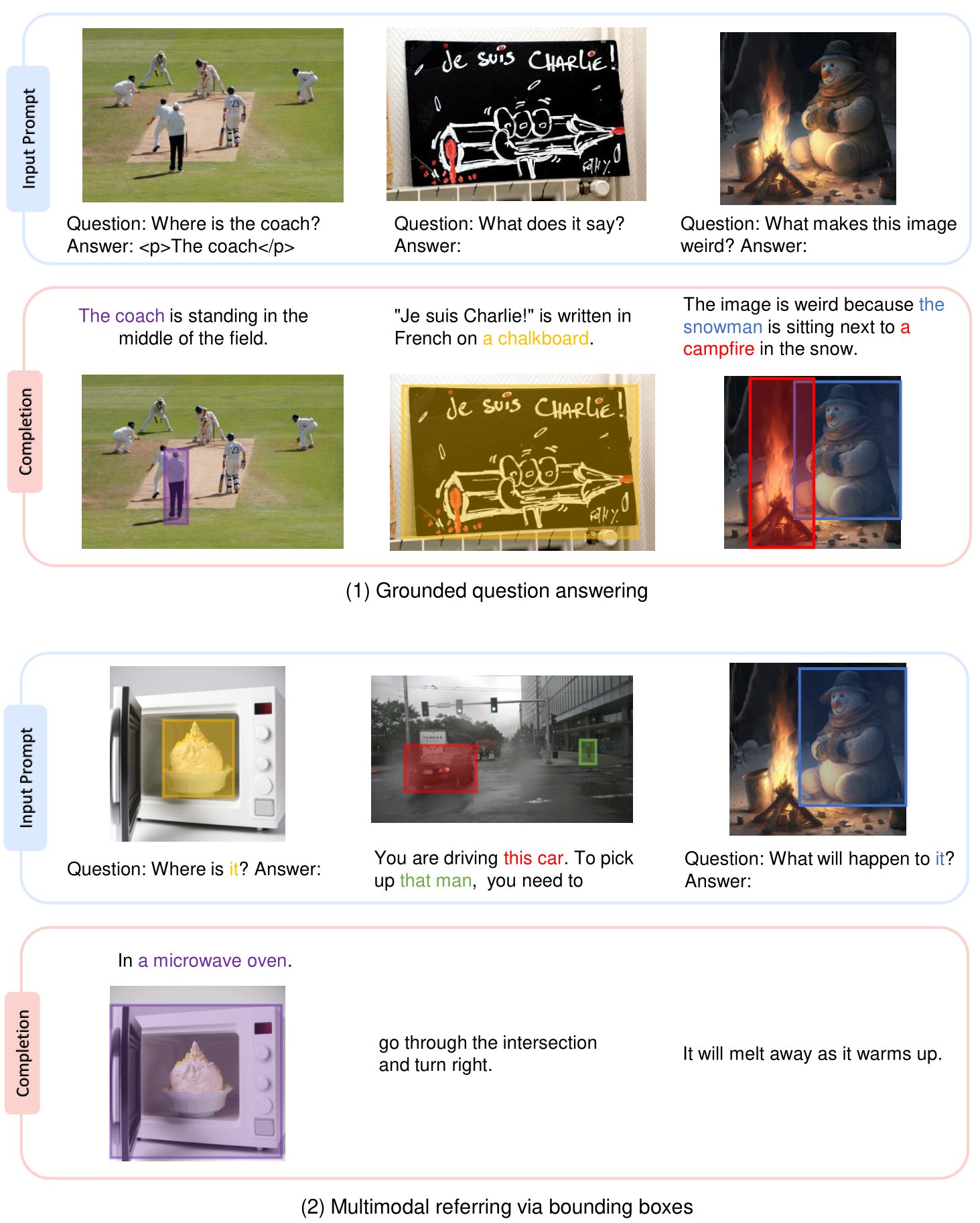

We introduce Kosmos-2, a Multimodal Large Language Model (MLLM), enabling new capabilities of perceiving object descriptions (e.g., bounding boxes) and grounding text to the visual world. Specifically, we represent refer expressions as links in Markdown, i.e., “[text span](bounding boxes)”, where object descriptions are sequences of location tokens. Together with multimodal corpora, we construct large-scale data of grounded image-text pairs (called GrIT) to train the model. In addition to the existing capabilities of MLLMs (e.g., perceiving general modalities, following instructions, and performing in-context learning), Kosmos-2 integrates the grounding capability into downstream applications. We evaluate Kosmos-2 on a wide range of tasks, including (i) multimodal grounding, such as referring expression comprehension, and phrase grounding, (ii) multimodal referring, such as referring expression generation, (iii) perception-language tasks, and (iv) language understanding and generation. This work lays out the foundation for the development of Embodiment AI and sheds light on the big convergence of language, multimodal perception, action, and world modeling, which is a key step toward artificial general intelligence. Code and pretrained models are available at https://aka.ms/kosmos-2.

Overview of tasks that KOSMOS-2 can handle. Taken from the original paper.

Example

>>> from PIL import Image
>>> import requests
>>> from transformers import AutoProcessor, Kosmos2ForConditionalGeneration

>>> model = Kosmos2ForConditionalGeneration.from_pretrained("microsoft/kosmos-2-patch14-224")
>>> processor = AutoProcessor.from_pretrained("microsoft/kosmos-2-patch14-224")

>>> url = "https://huggingface.co/microsoft/kosmos-2-patch14-224/resolve/main/snowman.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> prompt = "<grounding> An image of"

>>> inputs = processor(text=prompt, images=image, return_tensors="pt")

>>> generated_ids = model.generate(
...     pixel_values=inputs["pixel_values"],
...     input_ids=inputs["input_ids"],
...     attention_mask=inputs["attention_mask"],
...     image_embeds=None,
...     image_embeds_position_mask=inputs["image_embeds_position_mask"],
...     use_cache=True,
...     max_new_tokens=64,
... )
>>> generated_text = processor.batch_decode(generated_ids, skip_special_tokens=True)[0]
>>> processed_text = processor.post_process_generation(generated_text, cleanup_and_extract=False)
>>> processed_text
'<grounding> An image of<phrase> a snowman</phrase><object><patch_index_0044><patch_index_0863></object> warming himself by<phrase> a fire</phrase><object><patch_index_0005><patch_index_0911></object>.'

>>> caption, entities = processor.post_process_generation(generated_text)
>>> caption
'An image of a snowman warming himself by a fire.'

>>> entities
[('a snowman', (12, 21), [(0.390625, 0.046875, 0.984375, 0.828125)]), ('a fire', (41, 47), [(0.171875, 0.015625, 0.484375, 0.890625)])]

This model was contributed by Yih-Dar SHIEH. The original code can be found here.

Kosmos2Config

class transformers.Kosmos2Config

( text_config = None, vision_config = None, latent_query_num = 64, **kwargs )

Parameters

- text_config (dict, optional) — Dictionary of configuration options used to initialize Kosmos2TextConfig.
- vision_config (dict, optional) — Dictionary of configuration options used to initialize Kosmos2VisionConfig.
- latent_query_num (int, optional, defaults to 64) — The number of latent query tokens that represent the image features used in the text decoder component.
- kwargs (optional) — Dictionary of keyword arguments.

This is the configuration class to store the configuration of a Kosmos2Model. It is used to instantiate a KOSMOS-2 model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the KOSMOS-2 microsoft/kosmos-2-patch14-224 architecture.

Example:

>>> from transformers import Kosmos2Config, Kosmos2Model

>>> # Initializing a Kosmos-2 kosmos-2-patch14-224 style configuration
>>> configuration = Kosmos2Config()

>>> # Initializing a model (with random weights) from the kosmos-2-patch14-224 style configuration
>>> model = Kosmos2Model(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

Kosmos2ImageProcessor

Kosmos2Processor

class transformers.Kosmos2Processor

( image_processor, tokenizer, num_patch_index_tokens = 1024, *kwargs )

Parameters

- image_processor (CLIPImageProcessor) — An instance of CLIPImageProcessor. The image processor is a required input.
- tokenizer (XLMRobertaTokenizerFast) — An instance of XLMRobertaTokenizerFast. The tokenizer is a required input.
- num_patch_index_tokens (int, optional, defaults to 1024) — The number of tokens that represent patch indices.

Constructs a KOSMOS-2 processor which wraps a KOSMOS-2 image processor and a KOSMOS-2 tokenizer into a single processor.

Kosmos2Processor offers all the functionalities of CLIPImageProcessor and some functionalities of XLMRobertaTokenizerFast. See the docstrings of __call__() and decode() for more information.

__call__

( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), list['PIL.Image.Image'], list[numpy.ndarray], list['torch.Tensor'], NoneType] = None, text: typing.Union[str, list[str]] = None, **kwargs: typing_extensions.Unpack[transformers.models.kosmos2.processing_kosmos2.Kosmos2ProcessorKwargs] )

Parameters

- bboxes (Union[list[tuple[int]], list[tuple[float]], list[list[tuple[int]]], list[list[tuple[float]]]], optional) — The bounding boxes associated with texts.
- num_image_tokens (int, optional, defaults to 64) — The number of (consecutive) places that are used to mark the placeholders to store image information. This should be the same as latent_query_num in the instance of Kosmos2Config you are using.
- first_image_token_id (int, optional) — The token id that will be used for the first place of the subsequence that is reserved to store image information. If unset, will default to self.tokenizer.unk_token_id + 1.
- add_eos_token (bool, defaults to False) — Whether or not to include the EOS token id in the encoding when add_special_tokens=True.

This method uses the CLIPImageProcessor.__call__() method to prepare image(s) for the model, and XLMRobertaTokenizerFast.__call__() to prepare text for the model.

Please refer to the docstring of the above two methods for more information.

The rest of this documentation shows the arguments specific to Kosmos2Processor.
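To make the role of num_image_tokens and first_image_token_id more concrete, here is a minimal sketch of how a run of consecutive placeholder ids and a matching image_embeds_position_mask could be laid out. The token ids, the begin/end-of-image markers, and the helper name build_image_placeholders are all made up for illustration; in practice the processor builds the real sequence for you.

```python
def build_image_placeholders(text_ids, num_image_tokens=64, first_image_token_id=1000,
                             bos_id=0, boi_id=2, eoi_id=3):
    """Prepend a block of image placeholders to text ids and build the position mask.

    All ids are hypothetical; only the layout idea is illustrated:
    [bos] [begin-of-image] [placeholder * num_image_tokens] [end-of-image] [text...]
    """
    placeholder_ids = list(range(first_image_token_id, first_image_token_id + num_image_tokens))
    input_ids = [bos_id, boi_id] + placeholder_ids + [eoi_id] + list(text_ids)
    # 1 marks positions that receive image features, 0 marks ordinary text positions.
    mask = [0, 0] + [1] * num_image_tokens + [0] + [0] * len(text_ids)
    return input_ids, mask


input_ids, image_embeds_position_mask = build_image_placeholders([11, 12, 13])
print(len(input_ids), sum(image_embeds_position_mask))  # 70 64
```

The number of ones in the mask equals num_image_tokens, which is why that value should agree with latent_query_num in the configuration.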

Kosmos2Model

class transformers.Kosmos2Model

( config: Kosmos2Config )

Parameters

- config (Kosmos2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

KOSMOS-2 Model for generating text and image features. The model consists of a vision encoder and a language model.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads, etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: typing.Optional[torch.Tensor] = None, input_ids: typing.Optional[torch.Tensor] = None, image_embeds_position_mask: typing.Optional[torch.Tensor] = None, attention_mask: typing.Optional[torch.Tensor] = None, past_key_values: typing.Optional[transformers.cache_utils.Cache] = None, image_embeds: typing.Optional[torch.Tensor] = None, inputs_embeds: typing.Optional[torch.Tensor] = None, position_ids: typing.Optional[torch.Tensor] = None, use_cache: typing.Optional[bool] = None, output_attentions: typing.Optional[bool] = None, output_hidden_states: typing.Optional[bool] = None, interpolate_pos_encoding: bool = False, return_dict: typing.Optional[bool] = None, **kwargs: typing_extensions.Unpack[transformers.modeling_flash_attention_utils.FlashAttentionKwargs] ) → transformers.models.kosmos2.modeling_kosmos2.Kosmos2ModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.Tensor of shape (batch_size, num_channels, image_size, image_size), optional) — The tensors corresponding to the input images. Pixel values can be obtained using image_processor_class. See image_processor_class.__call__ for details (processor_class uses image_processor_class for processing images).
- input_ids (torch.Tensor of shape (batch_size, sequence_length), optional) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default.

  Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.
- image_embeds_position_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to indicate the location in a sequence to insert the image features. Mask values selected in [0, 1]:
  - 1 for places where to put the image features,
  - 0 for places that are not for image features (i.e. for text tokens).
- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.
- past_key_values (Cache, optional) — Pre-computed hidden-states (key and values in the self-attention blocks and in the cross-attention blocks) that can be used to speed up sequential decoding. This typically consists in the past_key_values returned by the model at a previous stage of decoding, when use_cache=True or config.use_cache=True.

  Only Cache instance is allowed as input, see our kv cache guide. If no past_key_values are passed, DynamicCache will be initialized by default.

  The model will output the same cache format that is fed as input.

  If past_key_values are used, the user is expected to input only unprocessed input_ids (those that don’t have their past key value states given to this model) of shape (batch_size, unprocessed_length) instead of all input_ids of shape (batch_size, sequence_length).
- image_embeds (torch.FloatTensor of shape (batch_size, latent_query_num, hidden_size), optional) — Sequence of hidden-states at the output of Kosmos2ImageToTextProjection.
- inputs_embeds (torch.Tensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix.
- position_ids (torch.Tensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence tokens in the position embeddings. Selected in the range [0, config.n_positions - 1].
- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- interpolate_pos_encoding (bool, defaults to False) — Whether to interpolate the pre-trained position encodings.
- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.
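The note on past_key_values in the list above can be pictured with plain lists: once a cache already covers the first cached_length positions, only the remaining ids need to be fed in. The helper below is a hypothetical illustration of that slicing, not library code, and the token ids are made up.

```python
def unprocessed_input_ids(input_ids, cached_length):
    """Return, per batch row, only the ids not yet covered by the cache."""
    return [row[cached_length:] for row in input_ids]


# Batch of one sequence with 6 tokens; suppose the cache already holds the first 5.
full_input_ids = [[0, 17, 42, 8, 99, 23]]
step_input_ids = unprocessed_input_ids(full_input_ids, cached_length=5)
print(step_input_ids)  # [[23]]
```

Here the shape goes from (batch_size, sequence_length) = (1, 6) to (batch_size, unprocessed_length) = (1, 1).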

Returns

transformers.models.kosmos2.modeling_kosmos2.Kosmos2ModelOutput or tuple(torch.FloatTensor)

A transformers.models.kosmos2.modeling_kosmos2.Kosmos2ModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (Kosmos2Config) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional, defaults to None) — Sequence of hidden-states at the output of the last layer of the model.
- past_key_values (Cache, optional, returned when use_cache=True is passed or when config.use_cache=True) — It is a Cache instance. For more details, see our kv cache guide.

  Contains pre-computed hidden-states (key and values in the self-attention blocks and optionally if config.is_encoder_decoder=True in the cross-attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.
- hidden_states (tuple[torch.FloatTensor], optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.
- attentions (tuple[torch.FloatTensor], optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.
- image_embeds (torch.FloatTensor of shape (batch_size, latent_query_num, hidden_size), optional) — Sequence of hidden-states at the output of Kosmos2ImageToTextProjection.
- projection_attentions (tuple(torch.FloatTensor), optional) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights given by Kosmos2ImageToTextProjection, after the attention softmax, used to compute the weighted average in the self-attention heads.
- vision_model_output (BaseModelOutputWithPooling, optional) — The output of the Kosmos2VisionModel.

The Kosmos2Model forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from PIL import Image
>>> import requests
>>> from transformers import AutoProcessor, Kosmos2Model

>>> model = Kosmos2Model.from_pretrained("microsoft/kosmos-2-patch14-224")
>>> processor = AutoProcessor.from_pretrained("microsoft/kosmos-2-patch14-224")

>>> url = "https://huggingface.co/microsoft/kosmos-2-patch14-224/resolve/main/snowman.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> text = (
...     "<grounding> An image of<phrase> a snowman</phrase><object><patch_index_0044><patch_index_0863>"
...     "</object> warming himself by<phrase> a fire</phrase><object><patch_index_0005><patch_index_0911>"
...     "</object>"
... )

>>> inputs = processor(text=text, images=image, return_tensors="pt", add_eos_token=True)

>>> last_hidden_state = model(
...     pixel_values=inputs["pixel_values"],
...     input_ids=inputs["input_ids"],
...     attention_mask=inputs["attention_mask"],
...     image_embeds_position_mask=inputs["image_embeds_position_mask"],
... ).last_hidden_state
>>> list(last_hidden_state.shape)
[1, 91, 2048]

Kosmos2ForConditionalGeneration

class transformers.Kosmos2ForConditionalGeneration

( config: Kosmos2Config )

Parameters

- config (Kosmos2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

KOSMOS-2 Model for generating text and bounding boxes given an image. The model consists of a vision encoder and a language model.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads, etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: typing.Optional[torch.Tensor] = None, input_ids: typing.Optional[torch.Tensor] = None, image_embeds_position_mask: typing.Optional[torch.Tensor] = None, attention_mask: typing.Optional[torch.Tensor] = None, past_key_values: typing.Optional[transformers.cache_utils.Cache] = None, image_embeds: typing.Optional[torch.Tensor] = None, inputs_embeds: typing.Optional[torch.Tensor] = None, position_ids: typing.Optional[torch.Tensor] = None, labels: typing.Optional[torch.LongTensor] = None, use_cache: typing.Optional[bool] = None, output_attentions: typing.Optional[bool] = None, output_hidden_states: typing.Optional[bool] = None, logits_to_keep: typing.Union[int, torch.Tensor] = 0, **kwargs: typing_extensions.Unpack[transformers.utils.generic.TransformersKwargs] ) → transformers.models.kosmos2.modeling_kosmos2.Kosmos2ForConditionalGenerationModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.Tensor of shape (batch_size, num_channels, image_size, image_size), optional) — The tensors corresponding to the input images. Pixel values can be obtained using image_processor_class. See image_processor_class.__call__ for details (processor_class uses image_processor_class for processing images).
- input_ids (torch.Tensor of shape (batch_size, sequence_length), optional) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default.

  Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.
- image_embeds_position_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to indicate the location in a sequence to insert the image features. Mask values selected in [0, 1]:
  - 1 for places where to put the image features,
  - 0 for places that are not for image features (i.e. for text tokens).
- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:
  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.
- past_key_values (Cache, optional) — Pre-computed hidden-states (key and values in the self-attention blocks and in the cross-attention blocks) that can be used to speed up sequential decoding. This typically consists in the past_key_values returned by the model at a previous stage of decoding, when use_cache=True or config.use_cache=True.

  Only Cache instance is allowed as input, see our kv cache guide. If no past_key_values are passed, DynamicCache will be initialized by default.

  The model will output the same cache format that is fed as input.

  If past_key_values are used, the user is expected to input only unprocessed input_ids (those that don’t have their past key value states given to this model) of shape (batch_size, unprocessed_length) instead of all input_ids of shape (batch_size, sequence_length).
- image_embeds (torch.FloatTensor of shape (batch_size, latent_query_num, hidden_size), optional) — Sequence of hidden-states at the output of Kosmos2ImageToTextProjection.
- inputs_embeds (torch.Tensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing input_ids you can choose to directly pass an embedded representation. This is useful if you want more control over how to convert input_ids indices into associated vectors than the model’s internal embedding lookup matrix.
- position_ids (torch.Tensor of shape (batch_size, sequence_length), optional) — Indices of positions of each input sequence tokens in the position embeddings. Selected in the range [0, config.n_positions - 1].
- labels (torch.LongTensor of shape (batch_size, sequence_length), optional) — Labels for computing the left-to-right language modeling loss (next word prediction). Indices should be in [-100, 0, ..., config.vocab_size] (see input_ids docstring). Tokens with indices set to -100 are ignored (masked), the loss is only computed for the tokens with labels in [0, ..., config.vocab_size].
- use_cache (bool, optional) — If set to True, past_key_values key value states are returned and can be used to speed up decoding (see past_key_values).
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- logits_to_keep (Union[int, torch.Tensor], defaults to 0) — If an int, compute logits for the last logits_to_keep tokens. If 0, calculate logits for all input_ids (special case). Only last token logits are needed for generation, and calculating them only for that token can save memory, which becomes pretty significant for long sequences or large vocabulary size. If a torch.Tensor, must be 1D corresponding to the indices to keep in the sequence length dimension. This is useful when using packed tensor format (single dimension for batch and sequence length).
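The logits_to_keep semantics described above can be mimicked on a plain list of per-position values. This sketch assumes the integer case behaves like a negative slice, which is one way to see why 0 ends up selecting every position. The helper select_positions is hypothetical, and the list stands in for the sequence dimension of the hidden states.

```python
def select_positions(sequence, logits_to_keep=0):
    """Pick the positions for which logits would be computed."""
    if isinstance(logits_to_keep, int):
        # slice(-0, None) is slice(0, None), so 0 keeps the whole sequence.
        return sequence[slice(-logits_to_keep, None)]
    # Otherwise treat the argument as an explicit 1D collection of indices.
    return [sequence[i] for i in logits_to_keep]


positions = ["t0", "t1", "t2", "t3"]
print(select_positions(positions, 0))       # ['t0', 't1', 't2', 't3']
print(select_positions(positions, 1))       # ['t3']
print(select_positions(positions, [0, 2]))  # ['t0', 't2']
```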

Returns

transformers.models.kosmos2.modeling_kosmos2.Kosmos2ForConditionalGenerationModelOutput or tuple(torch.FloatTensor)

A transformers.models.kosmos2.modeling_kosmos2.Kosmos2ForConditionalGenerationModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (Kosmos2Config) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Language modeling loss (for next-token prediction).
- logits (torch.FloatTensor of shape (batch_size, sequence_length, config.vocab_size)) — Prediction scores of the language modeling head (scores for each vocabulary token before SoftMax).
- past_key_values (Cache, optional, returned when use_cache=True is passed or when config.use_cache=True) — It is a Cache instance. For more details, see our kv cache guide.

  Contains pre-computed hidden-states (key and values in the self-attention blocks and optionally if config.is_encoder_decoder=True in the cross-attention blocks) that can be used (see past_key_values input) to speed up sequential decoding.
- hidden_states (tuple[torch.FloatTensor], optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.
- attentions (tuple[torch.FloatTensor], optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.
- image_embeds (torch.FloatTensor of shape (batch_size, latent_query_num, hidden_size), optional) — Sequence of hidden-states at the output of Kosmos2ImageToTextProjection.
- projection_attentions (tuple(torch.FloatTensor), optional) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights given by Kosmos2ImageToTextProjection, after the attention softmax, used to compute the weighted average in the self-attention heads.
- vision_model_output (BaseModelOutputWithPooling, optional) — The output of the Kosmos2VisionModel.

The Kosmos2ForConditionalGeneration forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from PIL import Image
>>> import requests
>>> from transformers import AutoProcessor, Kosmos2ForConditionalGeneration

>>> model = Kosmos2ForConditionalGeneration.from_pretrained("microsoft/kosmos-2-patch14-224")
>>> processor = AutoProcessor.from_pretrained("microsoft/kosmos-2-patch14-224")

>>> url = "https://huggingface.co/microsoft/kosmos-2-patch14-224/resolve/main/snowman.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> prompt = "<grounding> An image of"

>>> inputs = processor(text=prompt, images=image, return_tensors="pt")

>>> generated_ids = model.generate(
...     pixel_values=inputs["pixel_values"],
...     input_ids=inputs["input_ids"],
...     attention_mask=inputs["attention_mask"],
...     image_embeds=None,
...     image_embeds_position_mask=inputs["image_embeds_position_mask"],
...     use_cache=True,
...     max_new_tokens=64,
... )
>>> generated_text = processor.batch_decode(generated_ids, skip_special_tokens=True)[0]
>>> processed_text = processor.post_process_generation(generated_text, cleanup_and_extract=False)
>>> processed_text
'<grounding> An image of<phrase> a snowman</phrase><object><patch_index_0044><patch_index_0863></object> warming himself by<phrase> a fire</phrase><object><patch_index_0005><patch_index_0911></object>.'

>>> caption, entities = processor.post_process_generation(generated_text)
>>> caption
'An image of a snowman warming himself by a fire.'

>>> entities
[('a snowman', (12, 21), [(0.390625, 0.046875, 0.984375, 0.828125)]), ('a fire', (41, 47), [(0.171875, 0.015625, 0.484375, 0.890625)])]
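The boxes in entities lie in the [0, 1] range, so drawing them on the image requires scaling by its size. A minimal sketch follows, assuming each box is (x1, y1, x2, y2) and using a made-up 640 x 640 image size; entities_to_pixel_boxes is a hypothetical helper, not part of the library.

```python
def entities_to_pixel_boxes(entities, width, height):
    """Scale normalized (x1, y1, x2, y2) boxes to integer pixel coordinates."""
    results = []
    for phrase, _span, boxes in entities:
        for x1, y1, x2, y2 in boxes:
            pixel_box = (round(x1 * width), round(y1 * height),
                         round(x2 * width), round(y2 * height))
            results.append((phrase, pixel_box))
    return results


# The entities returned in the example above.
entities = [
    ("a snowman", (12, 21), [(0.390625, 0.046875, 0.984375, 0.828125)]),
    ("a fire", (41, 47), [(0.171875, 0.015625, 0.484375, 0.890625)]),
]
pixel_boxes = entities_to_pixel_boxes(entities, width=640, height=640)
for phrase, box in pixel_boxes:
    print(phrase, box)
```

The middle element of each entity is the character span of the phrase in the caption, which this sketch ignores.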