TheBloke's LLM work is generously supported by a grant from andreessen horowitz (a16z)

GenZ 13B v2 - GPTQ

- Model creator: Bud

- Original model: GenZ 13B v2

Description

This repo contains GPTQ model files for Bud's GenZ 13B v2.

Multiple GPTQ parameter permutations are provided; see Provided Files below for details of the options provided, their parameters, and the software used to create them.

Repositories available

- AWQ model(s) for GPU inference.

- GPTQ models for GPU inference, with multiple quantisation parameter options.

- 2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference

- Bud's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions

Prompt template: User-Assistant-Newlines

### User:

{prompt}

### Assistant:
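As a minimal sketch, the template above can be filled in with a small helper. The function name `build_prompt` is illustrative and not part of any library, and the exact blank-line placement is an assumption based on the template as shown here.

```python
def build_prompt(user_message: str) -> str:
    """Wrap a user message in the User-Assistant-Newlines template."""
    # Assumed layout: user turn, one blank line, then the assistant header.
    return f"### User:\n{user_message}\n\n### Assistant:\n"


print(build_prompt("Tell me about AI"))
```

The returned string is what gets tokenised and sent to the model; the model's reply is expected to follow the final `### Assistant:` line.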

Provided files, and GPTQ parameters

Multiple quantisation parameters are provided, to allow you to choose the best one for your hardware and requirements.

Each separate quant is in a different branch. See below for instructions on fetching from different branches.

Most GPTQ files are made with AutoGPTQ. Mistral models are currently made with Transformers.

Explanation of GPTQ parameters

- Bits: The bit size of the quantised model.

- GS: GPTQ group size. Higher numbers use less VRAM, but have lower quantisation accuracy. "None" means no grouping (it appears as -1 in branch names such as gptq-8bit--1g-actorder_True) and uses the least VRAM.

- Act Order: True or False. Also known as desc_act. True results in better quantisation accuracy. Some GPTQ clients have had issues with models that use Act Order plus Group Size, but this is generally resolved now.

- Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 0.01 is default, but 0.1 results in slightly better accuracy.

- GPTQ dataset: The calibration dataset used during quantisation. Using a dataset more appropriate to the model's training can improve quantisation accuracy. Note that the GPTQ calibration dataset is not the same as the dataset used to train the model - please refer to the original model repo for details of the training dataset(s).

- Sequence Length: The length of the dataset sequences used for quantisation. Ideally this is the same as the model sequence length. For some very long sequence models (16+K), a lower sequence length may have to be used. Note that a lower sequence length does not limit the sequence length of the quantised model. It only impacts the quantisation accuracy on longer inference sequences.

- ExLlama Compatibility: Whether this file can be loaded with ExLlama, which currently only supports Llama models in 4-bit.
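To make the group-size trade-off concrete, here is a rough back-of-the-envelope sketch of storage cost per weight. It assumes each group stores one fp16 scale and one zero-point of the same width as the weights; real GPTQ file layouts differ in detail, so treat the numbers as an approximation rather than a description of the actual files. The function name is illustrative.

```python
from typing import Optional


def effective_bits_per_weight(bits: int, group_size: Optional[int],
                              scale_bits: int = 16) -> float:
    """Approximate storage cost per weight, including per-group metadata."""
    if group_size is None:
        # No grouping: metadata is shared across a whole column, so its
        # per-weight cost is treated as negligible in this sketch.
        return float(bits)
    # Assumption: one scale (scale_bits) plus one zero-point (bits) per group.
    return bits + (scale_bits + bits) / group_size


for gs in (32, 64, 128, None):
    print(f"4-bit, group size {gs}: {effective_bits_per_weight(4, gs):.3f} bits/weight")
```

Smaller groups carry more metadata per weight, which is why a 32g file is larger than a 128g file at the same bit width.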

| Branch | Bits | GS | Act Order | Damp % | GPTQ Dataset | Seq Len | Size | ExLlama | Desc |
|---|---|---|---|---|---|---|---|---|---|
| main | 4 | 128 | Yes | 0.1 | wikitext | 4096 | 7.26 GB | Yes | 4-bit, with Act Order and group size 128g. Uses even less VRAM than 64g, but with slightly lower accuracy. |
| gptq-4bit-32g-actorder_True | 4 | 32 | Yes | 0.1 | wikitext | 4096 | 8.00 GB | Yes | 4-bit, with Act Order and group size 32g. Gives highest possible inference quality, with maximum VRAM usage. |
| gptq-8bit--1g-actorder_True | 8 | None | Yes | 0.1 | wikitext | 4096 | 13.36 GB | No | 8-bit, with Act Order. No group size, to lower VRAM requirements. |
| gptq-8bit-128g-actorder_True | 8 | 128 | Yes | 0.1 | wikitext | 4096 | 13.65 GB | No | 8-bit, with group size 128g for higher inference quality and with Act Order for even higher accuracy. |
| gptq-8bit-32g-actorder_True | 8 | 32 | Yes | 0.1 | wikitext | 4096 | 14.54 GB | No | 8-bit, with group size 32g and Act Order for maximum inference quality. |
| gptq-4bit-64g-actorder_True | 4 | 64 | Yes | 0.1 | wikitext | 4096 | 7.51 GB | Yes | 4-bit, with Act Order and group size 64g. Uses less VRAM than 32g, but with slightly lower accuracy. |
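The branch names in the table encode the quantisation parameters. The sketch below decodes them; the `parse_branch` helper and the `QuantParams` type are hypothetical conveniences written for this example, not part of any library, and the naming pattern is inferred from the branches listed above.

```python
import re
from typing import NamedTuple, Optional


class QuantParams(NamedTuple):
    bits: int
    group_size: Optional[int]  # None means no grouping (-1 in the branch name)
    act_order: bool


_BRANCH_RE = re.compile(r"^gptq-(\d+)bit-(-?\d+)g-actorder_(True|False)$")


def parse_branch(branch: str) -> QuantParams:
    """Decode a branch name such as 'gptq-4bit-32g-actorder_True'."""
    if branch == "main":
        # Per the table above, main is 4-bit, 128g, with Act Order.
        return QuantParams(4, 128, True)
    match = _BRANCH_RE.match(branch)
    if match is None:
        raise ValueError(f"Unrecognised branch name: {branch!r}")
    bits, group_size = int(match.group(1)), int(match.group(2))
    return QuantParams(bits, None if group_size == -1 else group_size,
                       match.group(3) == "True")


print(parse_branch("gptq-8bit--1g-actorder_True"))
```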

How to download, including from branches

In text-generation-webui

To download from the main branch, enter TheBloke/genz-13B-v2-GPTQ in the "Download model" box.

To download from another branch, add :branchname to the end of the download name, eg TheBloke/genz-13B-v2-GPTQ:gptq-4bit-32g-actorder_True

From the command line

I recommend using the huggingface-hub Python library:

pip3 install huggingface-hub

To download the main branch to a folder called genz-13B-v2-GPTQ:

mkdir genz-13B-v2-GPTQ

huggingface-cli download TheBloke/genz-13B-v2-GPTQ --local-dir genz-13B-v2-GPTQ --local-dir-use-symlinks False

To download from a different branch, add the --revision parameter:

mkdir genz-13B-v2-GPTQ

huggingface-cli download TheBloke/genz-13B-v2-GPTQ --revision gptq-4bit-32g-actorder_True --local-dir genz-13B-v2-GPTQ --local-dir-use-symlinks False
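The same download can be done from Python. This is a minimal sketch using `snapshot_download` from the huggingface-hub library; the wrapper names `download_kwargs` and `download` are illustrative, and the library is imported lazily so the argument helper can be inspected without it installed.

```python
from typing import Dict

REPO_ID = "TheBloke/genz-13B-v2-GPTQ"


def download_kwargs(branch: str = "main",
                    local_dir: str = "genz-13B-v2-GPTQ") -> Dict[str, str]:
    """Build the arguments for snapshot_download for a given branch."""
    return {"repo_id": REPO_ID, "revision": branch, "local_dir": local_dir}


def download(branch: str = "main", local_dir: str = "genz-13B-v2-GPTQ") -> str:
    """Download one branch of the repo and return the local folder path."""
    # Lazy import: requires `pip3 install huggingface-hub`.
    from huggingface_hub import snapshot_download
    return snapshot_download(**download_kwargs(branch, local_dir))


# Example (triggers a multi-GB download, so it is left commented out):
# download("gptq-4bit-32g-actorder_True")
```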

More advanced huggingface-cli download usage

If you remove the --local-dir-use-symlinks False parameter, the files will instead be stored in the central Huggingface cache directory (default location on Linux is: ~/.cache/huggingface), and symlinks will be added to the specified --local-dir, pointing to their real location in the cache. This allows for interrupted downloads to be resumed, and allows you to quickly clone the repo to multiple places on disk without triggering a download again. The downside, and the reason why I don't list that as the default option, is that the files are then hidden away in a cache folder and it's harder to know where your disk space is being used, and to clear it up if/when you want to remove a downloaded model.

The cache location can be changed with the HF_HOME environment variable, and/or the --cache-dir parameter to huggingface-cli.

For more documentation on downloading with huggingface-cli, please see: HF -> Hub Python Library -> Download files -> Download from the CLI.

To accelerate downloads on fast connections (1Gbit/s or higher), install hf_transfer:

pip3 install hf_transfer

And set environment variable HF_HUB_ENABLE_HF_TRANSFER to 1:

mkdir genz-13B-v2-GPTQ

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/genz-13B-v2-GPTQ --local-dir genz-13B-v2-GPTQ --local-dir-use-symlinks False

Windows Command Line users: You can set the environment variable by running set HF_HUB_ENABLE_HF_TRANSFER=1 before the download command.

With git (not recommended)

To clone a specific branch with git, use a command like this:

git clone --single-branch --branch gptq-4bit-32g-actorder_True https://huggingface.co/TheBloke/genz-13B-v2-GPTQ

Note that using Git with HF repos is strongly discouraged. It will be much slower than using huggingface-hub, and will use twice as much disk space as it has to store the model files twice (it stores every byte both in the intended target folder, and again in the .git folder as a blob.)

How to easily download and use this model in text-generation-webui.

Please make sure you're using the latest version of text-generation-webui.

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

- Click the Model tab.

- Under Download custom model or LoRA, enter TheBloke/genz-13B-v2-GPTQ.

- To download from a specific branch, enter for example TheBloke/genz-13B-v2-GPTQ:gptq-4bit-32g-actorder_True - see Provided Files above for the list of branches for each option.

- Click Download.

- The model will start downloading. Once it's finished it will say "Done".

- In the top left, click the refresh icon next to Model.

- In the Model dropdown, choose the model you just downloaded: genz-13B-v2-GPTQ

- The model will automatically load, and is now ready for use!

- If you want any custom settings, set them and then click Save settings for this model followed by Reload the Model in the top right.

- Note that you do not need to and should not set manual GPTQ parameters any more. These are set automatically from the file quantize_config.json.

- Once you're ready, click the Text Generation tab and enter a prompt to get started!

Serving this model from Text Generation Inference (TGI)

It's recommended to use TGI version 1.1.0 or later. The official Docker container is: ghcr.io/huggingface/text-generation-inference:1.1.0

Example Docker parameters:

--model-id TheBloke/genz-13B-v2-GPTQ --port 3000 --quantize gptq --max-input-length 3696 --max-total-tokens 4096 --max-batch-prefill-tokens 4096
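The two length limits above interact: with these example values, a prompt that uses the full 3696 input tokens leaves 400 tokens for generation. The sketch below works out that budget. It assumes TGI rejects prompts longer than --max-input-length and requires prompt tokens plus new tokens to fit within --max-total-tokens; the helper name is illustrative.

```python
MAX_INPUT_LENGTH = 3696   # from --max-input-length above
MAX_TOTAL_TOKENS = 4096   # from --max-total-tokens above


def max_new_tokens_budget(prompt_tokens: int,
                          max_input_length: int = MAX_INPUT_LENGTH,
                          max_total_tokens: int = MAX_TOTAL_TOKENS) -> int:
    """Return the largest max_new_tokens that fits for a given prompt length."""
    if prompt_tokens > max_input_length:
        raise ValueError(
            f"Prompt has {prompt_tokens} tokens, limit is {max_input_length}")
    return max_total_tokens - prompt_tokens


print(max_new_tokens_budget(3696))
print(max_new_tokens_budget(1000))
```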

Example Python code for interfacing with TGI (requires huggingface-hub 0.17.0 or later):

pip3 install huggingface-hub

from huggingface_hub import InferenceClient

endpoint_url = "https://your-endpoint-url-here"

prompt = "Tell me about AI"
prompt_template=f'''### User:
{prompt}

### Assistant:
'''

client = InferenceClient(endpoint_url)

# Send the templated prompt, not the raw prompt, so the model sees the expected format
response = client.text_generation(prompt_template,
                                  max_new_tokens=128,
                                  do_sample=True,
                                  temperature=0.7,
                                  top_p=0.95,
                                  top_k=40,
                                  repetition_penalty=1.1)

print(f"Model output: {response}")

How to use this GPTQ model from Python code

Install the necessary packages

Requires: Transformers 4.33.0 or later, Optimum 1.12.0 or later, and AutoGPTQ 0.4.2 or later.

pip3 install transformers optimum

pip3 install auto-gptq --extra-index-url https://huggingface.github.io/autogptq-index/whl/cu118/ # Use cu117 if on CUDA 11.7

If you have problems installing AutoGPTQ using the pre-built wheels, install it from source instead:

pip3 uninstall -y auto-gptq

git clone https://github.com/PanQiWei/AutoGPTQ

cd AutoGPTQ

git checkout v0.4.2

pip3 install .

You can then use the following code

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_name_or_path = "TheBloke/genz-13B-v2-GPTQ"

# To use a different branch, change revision
# For example: revision="gptq-4bit-32g-actorder_True"
model = AutoModelForCausalLM.from_pretrained(model_name_or_path,
                                             device_map="auto",
                                             trust_remote_code=False,
                                             revision="main")

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

prompt = "Tell me about AI"
prompt_template=f'''### User:
{prompt}

### Assistant:
'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()
output = model.generate(inputs=input_ids, temperature=0.7, do_sample=True, top_p=0.95, top_k=40, max_new_tokens=512)
print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

print("*** Pipeline:")
pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    max_new_tokens=512,
    do_sample=True,
    temperature=0.7,
    top_p=0.95,
    top_k=40,
    repetition_penalty=1.1
)

print(pipe(prompt_template)[0]['generated_text'])
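The text returned by the pipeline includes the prompt as well as the reply. As a small sketch, the helper below keeps only the assistant's part. It assumes the output contains the `### Assistant:` marker from the prompt template, and it cuts the reply if the model starts writing another `### User:` turn; `extract_response` is an illustrative name, not a library function.

```python
ASSISTANT_MARKER = "### Assistant:"
USER_MARKER = "### User:"


def extract_response(generated_text: str) -> str:
    """Return only the assistant's reply from a templated generation."""
    head, sep, tail = generated_text.partition(ASSISTANT_MARKER)
    # If the marker is missing, fall back to the whole text.
    reply = tail if sep else head
    # Drop anything after a follow-on user turn the model may have invented.
    reply = reply.split(USER_MARKER, 1)[0]
    return reply.strip()


sample = "### User:\nTell me about AI\n\n### Assistant:\nAI is a broad field.\n"
print(extract_response(sample))
```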

Compatibility

The files provided are tested to work with AutoGPTQ, both via Transformers and using AutoGPTQ directly. They should also work with Occ4m's GPTQ-for-LLaMa fork.

ExLlama is compatible with Llama and Mistral models in 4-bit. Please see the Provided Files table above for per-file compatibility.

Huggingface Text Generation Inference (TGI) is compatible with all GPTQ models.

Discord

For further support, and discussions on these models and AI in general, join us at:

Thanks, and how to contribute

Thanks to the chirper.ai team!

Thanks to Clay from gpus.llm-utils.org!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

- Patreon: https://patreon.com/TheBlokeAI

- Ko-Fi: https://ko-fi.com/TheBlokeAI

Special thanks to: Aemon Algiz.

Patreon special mentions: Pierre Kircher, Stanislav Ovsiannikov, Michael Levine, Eugene Pentland, Andrey, 준교 김, Randy H, Fred von Graf, Artur Olbinski, Caitlyn Gatomon, terasurfer, Jeff Scroggin, James Bentley, Vadim, Gabriel Puliatti, Harry Royden McLaughlin, Sean Connelly, Dan Guido, Edmond Seymore, Alicia Loh, subjectnull, AzureBlack, Manuel Alberto Morcote, Thomas Belote, Lone Striker, Chris Smitley, Vitor Caleffi, Johann-Peter Hartmann, Clay Pascal, biorpg, Brandon Frisco, sidney chen, transmissions 11, Pedro Madruga, jinyuan sun, Ajan Kanaga, Emad Mostaque, Trenton Dambrowitz, Jonathan Leane, Iucharbius, usrbinkat, vamX, George Stoitzev, Luke Pendergrass, theTransient, Olakabola, Swaroop Kallakuri, Cap'n Zoog, Brandon Phillips, Michael Dempsey, Nikolai Manek, danny, Matthew Berman, Gabriel Tamborski, alfie_i, Raymond Fosdick, Tom X Nguyen, Raven Klaugh, LangChain4j, Magnesian, Illia Dulskyi, David Ziegler, Mano Prime, Luis Javier Navarrete Lozano, Erik Bjäreholt, 阿明, Nathan Dryer, Alex, Rainer Wilmers, zynix, TL, Joseph William Delisle, John Villwock, Nathan LeClaire, Willem Michiel, Joguhyik, GodLy, OG, Alps Aficionado, Jeffrey Morgan, ReadyPlayerEmma, Tiffany J. Kim, Sebastain Graf, Spencer Kim, Michael Davis, webtim, Talal Aujan, knownsqashed, John Detwiler, Imad Khwaja, Deo Leter, Jerry Meng, Elijah Stavena, Rooh Singh, Pieter, SuperWojo, Alexandros Triantafyllidis, Stephen Murray, Ai Maven, ya boyyy, Enrico Ros, Ken Nordquist, Deep Realms, Nicholas, Spiking Neurons AB, Elle, Will Dee, Jack West, RoA, Luke @flexchar, Viktor Bowallius, Derek Yates, Subspace Studios, jjj, Toran Billups, Asp the Wyvern, Fen Risland, Ilya, NimbleBox.ai, Chadd, Nitin Borwankar, Emre, Mandus, Leonard Tan, Kalila, K, Trailburnt, S_X, Cory Kujawski

Thank you to all my generous patrons and donors!

And thank you again to a16z for their generous grant.

Original model card: Bud's GenZ 13B v2

~ GenZ ~

Democratizing access to LLMs for the open-source community.

Let's advance AI, together.

Introduction 🎉

Welcome to GenZ, an advanced Large Language Model (LLM) fine-tuned on the foundation of Meta's open-source Llama V2 13B parameter model. At Bud Ecosystem, we believe in the power of open-source collaboration to drive the advancement of technology at an accelerated pace. Our vision is to democratize access to fine-tuned LLMs, and to that end, we will be releasing a series of models across different parameter counts (7B, 13B, and 70B) and quantizations (32-bit and 4-bit) for the open-source community to use, enhance, and build upon.

The smaller quantization version of our models makes them more accessible, enabling their use even on personal computers. This opens up a world of possibilities for developers, researchers, and enthusiasts to experiment with these models and contribute to the collective advancement of language model technology.

GenZ isn't just a powerful text generator—it's a sophisticated AI assistant, capable of understanding and responding to user prompts with high-quality responses. We've taken the robust capabilities of Llama V2 and fine-tuned them to offer a more user-focused experience. Whether you're seeking informative responses or engaging interactions, GenZ is designed to deliver.

And this isn't the end. It's just the beginning of a journey towards creating more advanced, more efficient, and more accessible language models. We invite you to join us on this exciting journey. 🚀

Milestone Releases 🏁

[27 July 2023] GenZ-13B V2 (ggml) : Announcing our GenZ-13B v2 with ggml. This variant of GenZ can run inference on a CPU alone, without needing a GPU. Download the model from HuggingFace.

[27 July 2023] GenZ-13B V2 (4-bit) : Announcing our GenZ-13B v2 with 4-bit quantisation, which enables inference with much less GPU memory than the 32-bit variant. Download the model from HuggingFace.

[26 July 2023] GenZ-13B V2 : We're excited to announce the release of our GenZ 13B v2 model, a step forward with improved evaluation results compared to v1. Experience the advancements by downloading the model from HuggingFace.

[20 July 2023] GenZ-13B : We marked an important milestone with the release of the GenZ 13B model. The journey began here, and you can partake in it by downloading the model from Hugging Face.

Getting Started on Hugging Face 🤗

Getting up and running with our models on Hugging Face is a breeze. Follow these steps:

1️⃣ : Import necessary modules

Start by importing the necessary modules from the ‘transformers’ library and ‘torch’.

import torch

from transformers import AutoTokenizer, AutoModelForCausalLM

2️⃣ : Load the tokenizer and the model

Next, load up the tokenizer and the model for ‘budecosystem/genz-13b-v2’ from Hugging Face using the ‘from_pretrained’ method.

tokenizer = AutoTokenizer.from_pretrained("budecosystem/genz-13b-v2", trust_remote_code=True)

model = AutoModelForCausalLM.from_pretrained("budecosystem/genz-13b-v2", torch_dtype=torch.bfloat16)

3️⃣ : Generate responses

Now that you have the model and tokenizer, you're ready to generate responses. Here's how you can do it:

inputs = tokenizer("The meaning of life is", return_tensors="pt")

sample = model.generate(**inputs, max_length=128)

print(tokenizer.decode(sample[0]))

In this example, "The meaning of life is" is the prompt used for inference. You can replace it with any string you like.

Want to interact with the model in a more intuitive way? We have a Gradio interface set up for that. Head over to our GitHub page, clone the repository, and run the ‘generate.py’ script to try it out. Happy experimenting! 😄

Fine-tuning 🎯

You can adapt the model further by fine-tuning it with our provided finetune.py script. Here's an example command:

python finetune.py \
   --model_name meta-llama/Llama-2-13b \
   --data_path dataset.json \
   --output_dir output \
   --trust_remote_code \
   --prompt_column instruction \
   --response_column output \
   --pad_token_id 50256
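The command above names `instruction` as the prompt column and `output` as the response column. The sketch below shows one plausible shape for dataset.json under that naming. The exact file layout finetune.py expects (a JSON list versus JSON Lines, for instance) is not stated here, so this is an assumption to check against the script; the example rows and the helper name are made up for illustration.

```python
import json
from typing import Dict, List

# Hypothetical example rows using the column names from the command above.
EXAMPLE_ROWS: List[Dict[str, str]] = [
    {"instruction": "Summarise: The cat sat on the mat all day.",
     "output": "A cat stayed on a mat for the whole day."},
    {"instruction": "Translate to French: Good morning.",
     "output": "Bonjour."},
]


def to_dataset_json(rows: List[Dict[str, str]]) -> str:
    """Serialise rows as a JSON list (assumed format, see note above)."""
    for row in rows:
        if set(row) != {"instruction", "output"}:
            raise ValueError(f"Unexpected columns: {sorted(row)}")
    return json.dumps(rows, ensure_ascii=False, indent=2)


print(to_dataset_json(EXAMPLE_ROWS))
# To write the file used by --data_path:
# open("dataset.json", "w", encoding="utf-8").write(to_dataset_json(EXAMPLE_ROWS))
```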

Bonus: Colab Notebooks 📚 (WIP)

Looking for an even simpler way to get started with GenZ? We've got you covered. We've prepared a pair of detailed Colab notebooks - one for Inference and one for Fine-tuning. These notebooks come pre-filled with all the information and code you'll need. All you'll have to do is run them!

Keep an eye out for these notebooks. They'll be added to the repository soon!

Why Use GenZ? 💡

You might be wondering, "Why should I choose GenZ over a pretrained model?" The answer lies in the extra mile we've gone to fine-tune our models.

While pretrained models are undeniably powerful, GenZ brings something extra to the table. We've fine-tuned it with curated datasets, which means it has additional skills and capabilities beyond what a pretrained model can offer. Whether you need it for a simple task or a complex project, GenZ is up for the challenge.

What's more, we are committed to continuously enhancing GenZ. We believe in the power of constant learning and improvement. That's why we'll be regularly fine-tuning our models with various curated datasets to make them even better. Our goal is to reach the state of the art and beyond - and we're committed to staying the course until we get there.

But don't just take our word for it. We've provided detailed evaluations and performance details in a later section, so you can see the difference for yourself.

Choose GenZ and join us on this journey. Together, we can push the boundaries of what's possible with large language models.

Model Card for GenZ 13B 📄

Here's a quick overview of everything you need to know about GenZ 13B.

Model Details:

- Developed by: Bud Ecosystem

- Base pretrained model type: Llama V2 13B

- Model Architecture: GenZ 13B, fine-tuned on Llama V2 13B, is an auto-regressive language model that employs an optimized transformer architecture. The fine-tuning process for GenZ 13B leveraged Supervised Fine-Tuning (SFT).

- License: The model is available for commercial use under a custom commercial license. For more information, please visit: Meta AI Model and Library Downloads

Intended Use 💼

When we created GenZ 13B, we had a clear vision of how it could be used to push the boundaries of what's possible with large language models. We also understand the importance of using such models responsibly. Here's a brief overview of the intended and out-of-scope uses for GenZ 13B.

Direct Use

GenZ 13B is designed to be a powerful tool for research on large language models. It's also an excellent foundation for further specialization and fine-tuning for specific use cases, such as:

- Text summarization

- Text generation

- Chatbot creation

- And much more!

Out-of-Scope Use 🚩

While GenZ 13B is versatile, there are certain uses that are out of scope:

- Production use without adequate assessment of risks and mitigation

- Any use cases which may be considered irresponsible or harmful

- Use in any manner that violates applicable laws or regulations, including trade compliance laws

- Use in any other way that is prohibited by the Acceptable Use Policy and Licensing Agreement for Llama 2

Remember, GenZ 13B, like any large language model, is trained on large-scale corpora representative of the web, and may therefore carry the stereotypes and biases commonly encountered online.

Recommendations 🧠

We recommend that users of GenZ 13B consider fine-tuning it for the specific set of tasks of interest. Appropriate precautions and guardrails should be taken for any production use. Using GenZ 13B responsibly is key to unlocking its full potential while maintaining a safe and respectful environment.

Training Details 📚

When fine-tuning GenZ 13B, we took a meticulous approach to ensure we were building on the solid base of the pretrained Llama V2 13B model in the most effective way. Here's a look at the key details of our training process:

Fine-Tuning Training Data

For the fine-tuning process, we used a carefully curated mix of datasets. These included data from OpenAssistant, an instruction fine-tuning dataset, and Thought Source for the Chain Of Thought (CoT) approach. This diverse mix of data sources helped us enhance the model's capabilities across a range of tasks.

Fine-Tuning Procedure

We performed full-parameter fine-tuning using Supervised Fine-Tuning (SFT). This was carried out on 4 A100 80GB GPUs, and the process took under 100 hours. To make the process more efficient, we used DeepSpeed's ZeRO-3 optimization.

Tokenizer

We used the SentencePiece tokenizer during the fine-tuning process. This tokenizer is known for its capability to handle open-vocabulary language tasks efficiently.

Hyperparameters

Here are the hyperparameters we used for fine-tuning:

| Hyperparameter | Value |
|---|---|
| Warmup Ratio | 0.04 |
| Learning Rate Scheduler Type | Cosine |
| Learning Rate | 2e-5 |
| Number of Training Epochs | 3 |
| Per Device Training Batch Size | 4 |
| Gradient Accumulation Steps | 4 |
| Precision | FP16 |
| Optimizer | AdamW |
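To show how these values combine, here is a short sketch. The effective batch size multiplies the per-device batch size, the gradient accumulation steps, and the 4 GPUs mentioned under Fine-Tuning Procedure. The schedule function uses the common linear-warmup then cosine-decay form; whether training used exactly this form is an assumption, and the total step count in the example is made up.

```python
import math


def effective_batch_size(per_device: int = 4, grad_accum: int = 4,
                         num_gpus: int = 4) -> int:
    """Sequences contributing to each optimizer step."""
    return per_device * grad_accum * num_gpus


def lr_at(step: int, total_steps: int, peak_lr: float = 2e-5,
          warmup_ratio: float = 0.04) -> float:
    """Linear warmup to peak_lr, then cosine decay to zero (assumed form)."""
    warmup_steps = round(total_steps * warmup_ratio)
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


print("Effective batch size:", effective_batch_size())
for step in (0, 20, 40, 520, 1000):  # 1000 total steps is a made-up example
    print(step, lr_at(step, total_steps=1000))
```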

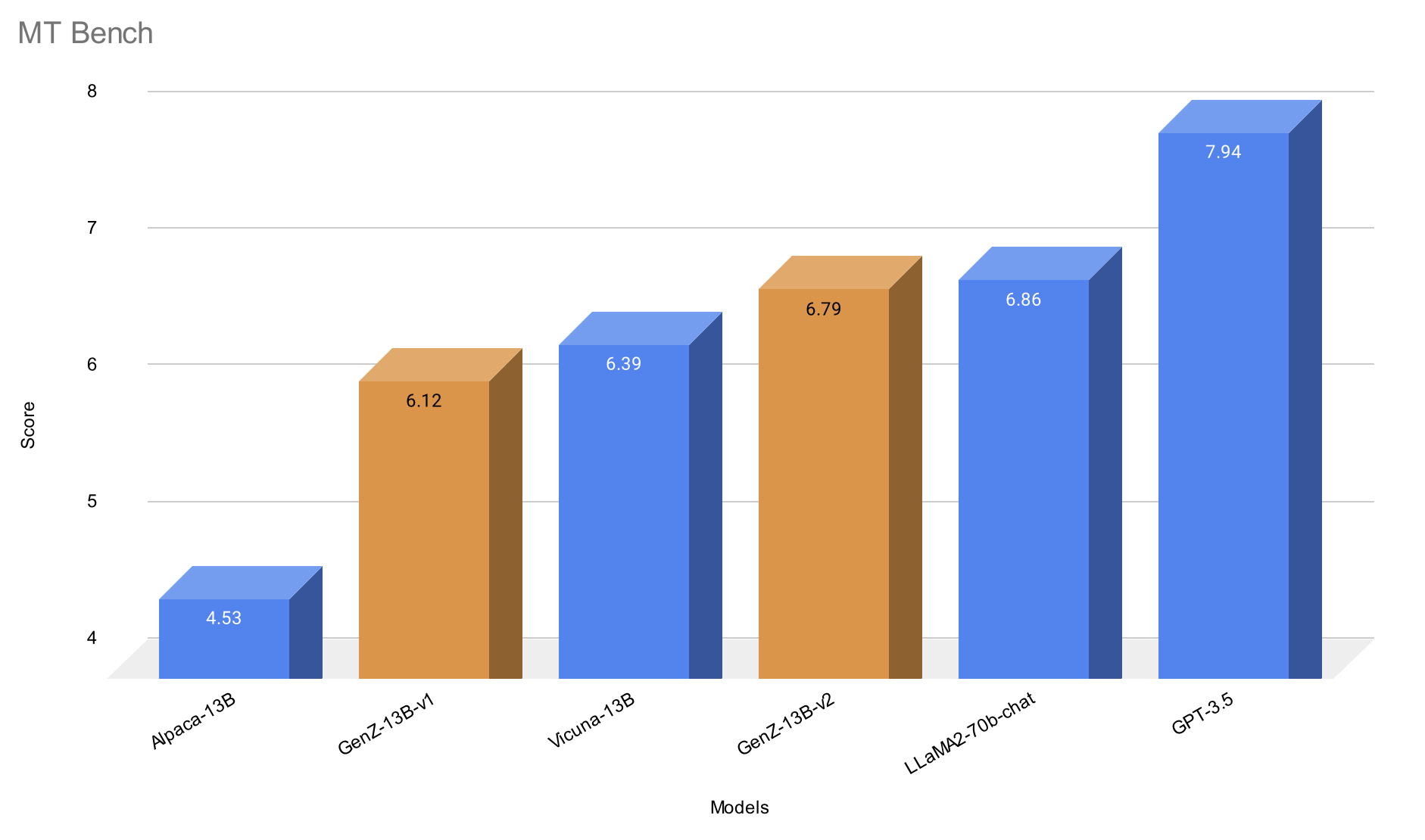

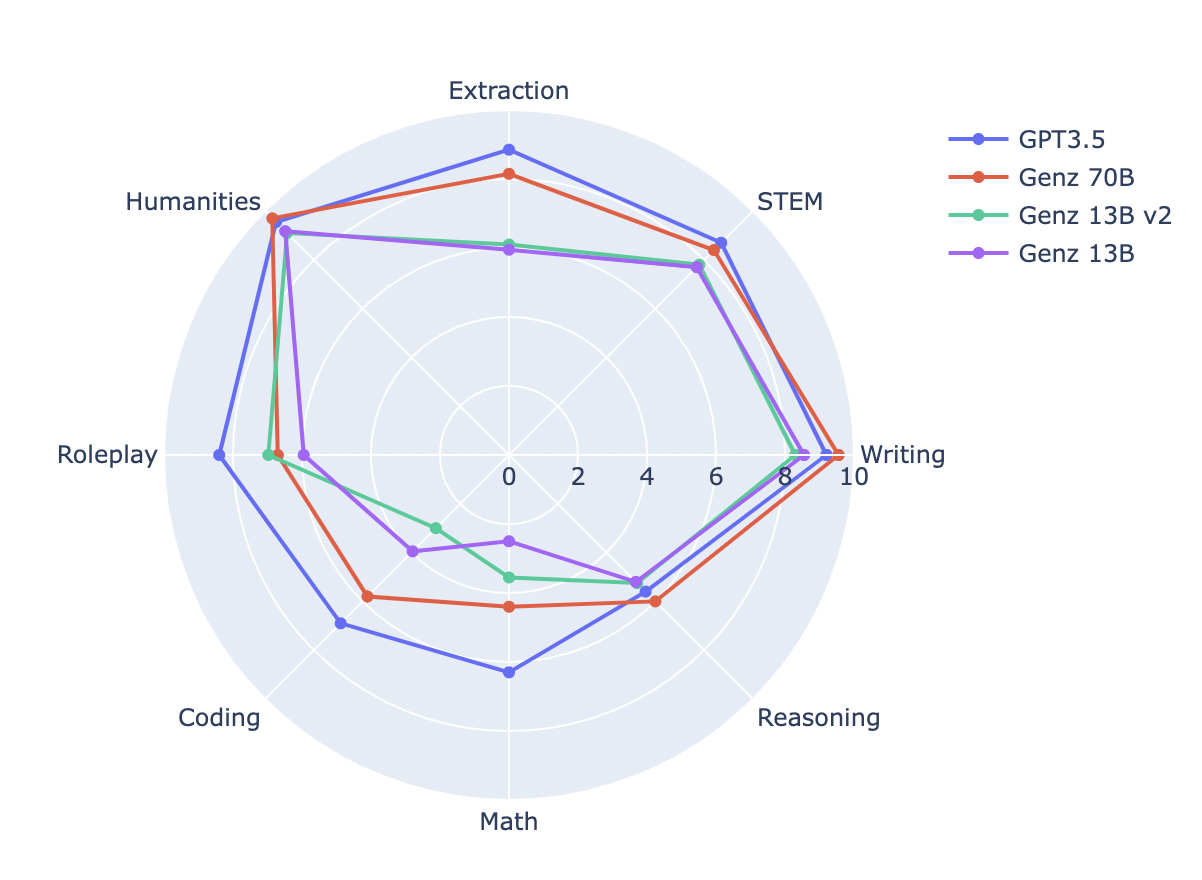

Evaluations 🎯

Evaluating our model is a key part of our fine-tuning process. It helps us understand how our model is performing and how it stacks up against other models. Here's a look at some of the key evaluations for GenZ 13B:

Benchmark Comparison

We've compared GenZ V1 with V2 to understand the improvements our fine-tuning has achieved.

| Model Name | MT Bench | Vicuna Bench | MMLU | Human Eval | Hellaswag | BBH |
|---|---|---|---|---|---|---|
| Genz 13B | 6.12 | 86.1 | 53.62 | 17.68 | 77.38 | 37.76 |
| Genz 13B v2 | 6.79 | 87.2 | 53.68 | 21.95 | 77.48 | 38.1 |

MT Bench Score

A key evaluation metric we use is the MT Bench score. This score provides a comprehensive assessment of our model's performance across a range of tasks.

We're proud to say that our model performs close to the Llama-70B-chat model on MT Bench and ranks at the top of the list among 13B models.

In the transition from GenZ V1 to V2, we noticed some fascinating performance shifts. While we saw a slight dip in coding performance, two other areas, Roleplay and Math, saw noticeable improvements.

Looking Ahead 👀

We're excited about the journey ahead with GenZ. We're committed to continuously improving and enhancing our models, and we're excited to see what the open-source community will build with them. We believe in the power of collaboration, and we can't wait to see what we can achieve together.

Remember, we're just getting started. This is just the beginning of a journey that we believe will revolutionize the world of large language models. We invite you to join us on this exciting journey. Together, we can push the boundaries of what's possible with AI. 🚀

Check the GitHub for the code -> GenZ
