nekomata-14b-pfn-qfin-inst-merge

Model Description

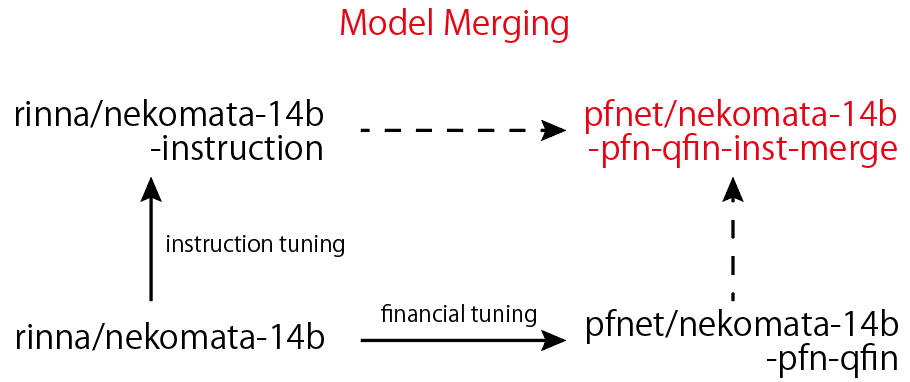

nekomata-14b-pfn-qfin-inst-merge is a model merged from rinna/nekomata-14b, rinna/nekomata-14b-instruction, and pfnet/nekomata-14b-pfn-qfin. It is the instruction model and is suited to generating answers to instructions. The model is released under the Tongyi Qianwen LICENSE AGREEMENT.

The research article will also be released later.

Benchmarking

The benchmark scores were obtained with the Japanese Language Model Financial Evaluation Harness. All tasks were evaluated 0-shot.

using default prompts

| Task | Metric | nekomata-14b | nekomata-14b-pfn-qfin |
|---|---|---|---|
| chabsa | f1 | 0.7381 | 0.7428 |
| cma_basics | acc | 0.4737 ± 0.0821 | 0.5263 ± 0.0821 |
| cpa_audit | acc | 0.1608 ± 0.0184 | 0.1633 ± 0.0186 |
| fp2 | acc | 0.3389 ± 0.0217 | 0.3642 ± 0.0221 |
| security_sales_1 | acc | 0.4561 ± 0.0666 | 0.5614 ± 0.0663 |
| OVER ALL | | 0.4335 | 0.4716 |
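The OVER ALL row appears to be the unweighted mean of the five task scores. The source does not state this; it is an inference from the numbers. The sketch below recomputes it for the two columns of the table above, with the scores copied from that table.

```python
from statistics import mean

# Per-task scores copied from the first "default prompts" table.
# Assumption: OVER ALL is a plain average over tasks (not stated in the source).
scores = {
    "nekomata-14b": {
        "chabsa": 0.7381,
        "cma_basics": 0.4737,
        "cpa_audit": 0.1608,
        "fp2": 0.3389,
        "security_sales_1": 0.4561,
    },
    "nekomata-14b-pfn-qfin": {
        "chabsa": 0.7428,
        "cma_basics": 0.5263,
        "cpa_audit": 0.1633,
        "fp2": 0.3642,
        "security_sales_1": 0.5614,
    },
}

# Average the five task scores per model and round to four decimals.
overall = {model: round(mean(tasks.values()), 4) for model, tasks in scores.items()}
print(overall)
# {'nekomata-14b': 0.4335, 'nekomata-14b-pfn-qfin': 0.4716}
```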

using default prompts

| Task | Metric | nekomata-14b-instruction | OURS |
|---|---|---|---|
| chabsa | f1 | 0.8963 | 0.8429 |
| cma_basics | acc | 0.5000 ± 0.0822 | 0.5789 ± 0.0812 |
| cpa_audit | acc | 0.1859 ± 0.0195 | 0.2136 ± 0.0206 |
| fp2 | acc | 0.3642 ± 0.0221 | 0.3579 ± 0.0220 |
| security_sales_1 | acc | 0.5088 ± 0.0668 | 0.4737 ± 0.0667 |
| OVER ALL | | 0.4910 | 0.4939 |

using prompts v0.3 (instruction prompts)

| Task | Metric | nekomata-14b-instruction | OURS |
|---|---|---|---|
| chabsa | f1 | 0.8658 | 0.8620 |
| cma_basics | acc | 0.4737 ± 0.0821 | 0.5000 ± 0.0822 |
| cpa_audit | acc | 0.2085 ± 0.0204 | 0.2060 ± 0.0203 |
| fp2 | acc | 0.3663 ± 0.0221 | 0.3663 ± 0.0221 |
| security_sales_1 | acc | 0.5263 ± 0.0667 | 0.5614 ± 0.0663 |
| OVER ALL | | 0.4881 | 0.4991 |
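When reading per-task gaps, it can help to compare them with the reported ± values. The sketch below is a rough check only. It assumes the ± column is a standard error (the source does not say) and treats the two models' scores as independent, which is a simplification because both are evaluated on the same questions. The function name `gap_in_standard_errors` is hypothetical.

```python
import math


def gap_in_standard_errors(score_a: float, se_a: float, score_b: float, se_b: float) -> float:
    """Return (score_b - score_a) divided by the combined standard error.

    Assumption: the two errors are independent, so they combine in quadrature.
    """
    combined_se = math.hypot(se_a, se_b)
    return (score_b - score_a) / combined_se


# security_sales_1 under prompts v0.3: first model column vs. OURS.
ratio = gap_in_standard_errors(0.5263, 0.0667, 0.5614, 0.0663)
print(f"gap = {ratio:.2f} combined standard errors")
```

Under these assumptions, a ratio well below 1 suggests that the gap on that task is small relative to the reported uncertainty.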

Usage

Install the required libraries as follows:

python -m pip install numpy sentencepiece torch transformers accelerate transformers_stream_generator tiktoken einops

Execute the following Python code:

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("pfnet/nekomata-14b-pfn-qfin-inst-merge", trust_remote_code=True)

# Use GPU with bf16 (recommended for supported devices)
# model = AutoModelForCausalLM.from_pretrained("pfnet/nekomata-14b-pfn-qfin-inst-merge", device_map="auto", trust_remote_code=True, bf16=True)

# Use GPU with fp16
# model = AutoModelForCausalLM.from_pretrained("pfnet/nekomata-14b-pfn-qfin-inst-merge", device_map="auto", trust_remote_code=True, fp16=True)

# Use GPU with fp32
# model = AutoModelForCausalLM.from_pretrained("pfnet/nekomata-14b-pfn-qfin-inst-merge", device_map="auto", trust_remote_code=True, fp32=True)

# Use CPU
# model = AutoModelForCausalLM.from_pretrained("pfnet/nekomata-14b-pfn-qfin-inst-merge", device_map="cpu", trust_remote_code=True)

# Automatically select device and precision
model = AutoModelForCausalLM.from_pretrained("pfnet/nekomata-14b-pfn-qfin-inst-merge", device_map="auto", trust_remote_code=True)

text = """以下は、タスクを説明する指示と、文脈のある入力の組み合わせです。要求を適切に満たす応答を書きなさい。

### 指示:

次の質問に答えてください。

### 入力:

デリバティブ取引のリスク管理について教えてください。

### 応答:"""

# Tokenize the prompt and move it to the model's device
input_ids = tokenizer(text, return_tensors="pt").input_ids.to(model.device)

# Generate without tracking gradients
with torch.no_grad():
    generated_tokens = model.generate(
        inputs=input_ids,
        max_new_tokens=512,
        do_sample=False,
        temperature=1.0,
        repetition_penalty=1.1,
        top_k=50,
        pad_token_id=tokenizer.pad_token_id,
        bos_token_id=tokenizer.bos_token_id,
        eos_token_id=tokenizer.eos_token_id
    )[0]

# Decode the output and print only the text after the response marker
generated_text = tokenizer.decode(generated_tokens)
print(generated_text.split("### 応答:")[1])

# デリバティブ取引では、原資産の価格変動が大きく影響します。そのため、リスク管理においては、原資産の価格変動リスクを適切にコントロールすることが重要となります。具体的には、ポジションサイズやレバレッジの制限、ヘッジ手法の活用などが挙げられます。また、市場情報の収集・分析を行い、適切なタイミングでポジションを調整することも大切です。さらに、取引先の信用リスクにも注意が必要であり、相手方の財務状況や信用度などを確認し、必要に応じて保証や担保を設定することが求められます。以上のようなリスク管理を行うことで、デリバティブ取引における損失リスクを最小化することができます。ただし、リスク管理はあくまで自己責任であるため、十分な知識と経験を持つことが望まれます。また、金融機関等の専門家によるアドバイスを受けることも有効です。以上が、デリバティブ取引におけるリスク管理の概要です。詳細については、金融庁や日本証券業協会などの公式サイトをご参照ください。
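The prompt in the example above follows a fixed layout: a preamble, then instruction, input, and response markers. A minimal sketch of assembling that layout for other questions is shown below. The helper names `build_prompt` and `extract_response` are hypothetical, and the blank-line separator is an assumption taken from the listing as shown; adjust `sep` if the intended template uses single newlines.

```python
PREAMBLE = "以下は、タスクを説明する指示と、文脈のある入力の組み合わせです。要求を適切に満たす応答を書きなさい。"
RESPONSE_MARKER = "### 応答:"


def build_prompt(instruction: str, input_text: str, sep: str = "\n\n") -> str:
    """Assemble a prompt in the layout used by the usage example.

    Assumption: sections are joined by `sep`; the exact whitespace of the
    original template is not confirmed by the source.
    """
    parts = [PREAMBLE, "### 指示:", instruction, "### 入力:", input_text, RESPONSE_MARKER]
    return sep.join(parts)


def extract_response(generated_text: str) -> str:
    """Return the text after the first response marker, as the example does with split()."""
    return generated_text.split(RESPONSE_MARKER, 1)[1].strip()


prompt = build_prompt(
    "次の質問に答えてください。",
    "デリバティブ取引のリスク管理について教えてください。",
)
print(prompt)
```

The resulting string can be passed to the tokenizer in place of `text`, and `extract_response` can be applied to the decoded output.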

Model Details

- Model size: 14B

- Context length: 2048

- Developed by: Preferred Networks, Inc.

- Model type: Causal decoder-only

- Language(s): Japanese and English

- License: Tongyi Qianwen LICENSE AGREEMENT
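With a context length of 2048, long prompts leave less room for generation. The sketch below shows one way to clip a requested `max_new_tokens` to what still fits. It assumes the context window counts prompt and generated tokens together, as is typical for decoder-only models; the helper name `clip_new_tokens` is hypothetical.

```python
CONTEXT_LENGTH = 2048  # context length from the model details list


def clip_new_tokens(prompt_tokens: int, max_new_tokens: int = 512,
                    context_length: int = CONTEXT_LENGTH) -> int:
    """Return how many new tokens fit after the prompt, never below zero.

    Assumption: prompt and generated tokens share one context window.
    """
    if prompt_tokens < 0 or max_new_tokens < 0:
        raise ValueError("token counts must be non-negative")
    available = context_length - prompt_tokens
    return max(0, min(max_new_tokens, available))


print(clip_new_tokens(100))   # short prompt: the full 512 tokens fit
print(clip_new_tokens(1800))  # long prompt: only 248 tokens remain
print(clip_new_tokens(3000))  # prompt already exceeds the window: 0
```

In the usage example, `input_ids.shape[1]` would give the prompt token count to pass in.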

Bias, Risks, and Limitations

nekomata-14b-pfn-qfin-inst-merge is a new technology that carries risks with use. Testing conducted to date has been in English and Japanese, and has not covered, nor could it cover, all scenarios. For these reasons, as with all LLMs, the potential outputs of nekomata-14b-pfn-qfin-inst-merge cannot be predicted in advance, and the model may in some instances produce inaccurate, biased, or otherwise objectionable responses to user prompts. This model is not designed for legal, tax, investment, financial, or other advice. Therefore, before deploying any applications of nekomata-14b-pfn-qfin-inst-merge, developers should perform safety testing and tuning tailored to their specific applications of the model.

How to cite

TBD

Contributors

Preferred Networks, Inc.

- Masanori Hirano

- Kentaro Imajo

License

Tongyi Qianwen LICENSE AGREEMENT