Finetuning Overview:

Model Used: google/gemma-2-2b-it

Dataset: cfilt/iitb-english-hindi

Dataset Insights:

The IIT Bombay English-Hindi corpus contains an English-Hindi parallel corpus as well as a monolingual Hindi corpus, both collected from various sources. It has been used in the Workshop on Asian Language Translation (WAT) Shared Task since 2016 for the Hindi-to-English and English-to-Hindi language pairs, and as a pivot language pair for Hindi-to-Japanese and Japanese-to-Hindi translation.

Finetuning Details:

Using MonsterAPI's LLM finetuner, this finetuning run:

- Was cost-effective.

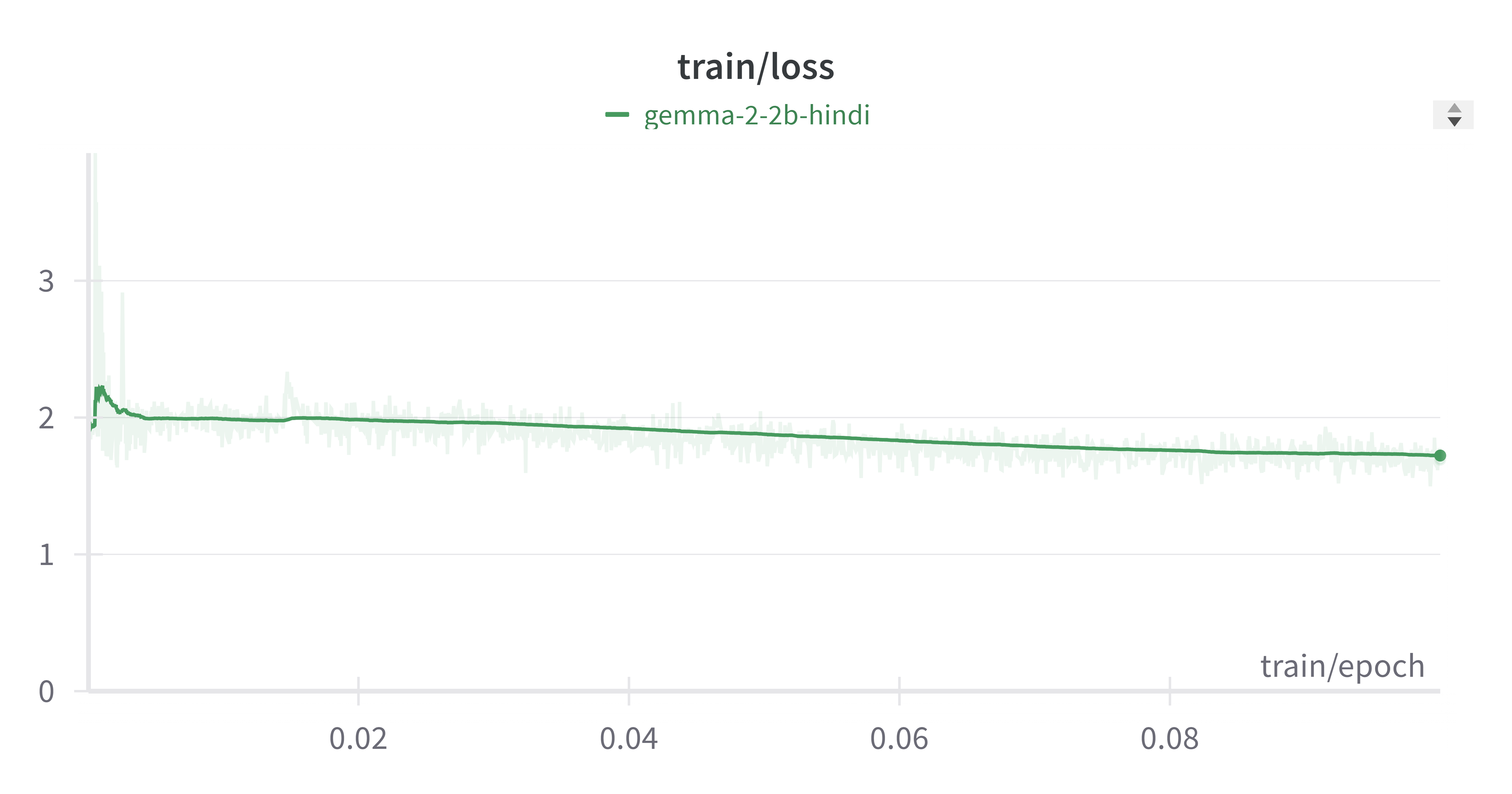

- Completed in 1 hour and 33 minutes for 0.1 epochs.

- Cost $1.91 for the entire process.
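To put the reported duration and cost in perspective, the short sketch below derives an hourly rate from the figures above. The extrapolation to a full epoch assumes cost and time scale linearly with the number of epochs, which is an assumption for illustration and not a measured result.

```python
# Figures reported in this card.
DURATION_MINUTES = 1 * 60 + 33  # 1 hour and 33 minutes
TOTAL_COST_USD = 1.91
EPOCHS_RUN = 0.1


def hourly_rate(cost_usd: float, minutes: float) -> float:
    """Average cost per hour of finetuning."""
    return cost_usd / (minutes / 60)


def extrapolate(cost_usd: float, minutes: float,
                epochs_run: float, target_epochs: float) -> tuple[float, float]:
    """Linear extrapolation of cost and time (an assumption, not a measurement)."""
    scale = target_epochs / epochs_run
    return cost_usd * scale, minutes * scale


rate = hourly_rate(TOTAL_COST_USD, DURATION_MINUTES)
full_cost, full_minutes = extrapolate(
    TOTAL_COST_USD, DURATION_MINUTES, EPOCHS_RUN, target_epochs=1.0
)

print(f"Average rate: {rate:.2f} USD/hour")
print(f"Estimated full epoch: {full_cost:.2f} USD, {full_minutes / 60:.1f} hours")
```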

Hyperparameters & Additional Details:

- Epochs: 0.1

- Total Finetuning Cost: $1.91

- Model Path: google/gemma-2-2b-it

- Learning Rate: 0.001

- Data Split: 100% Train

- Gradient Accumulation Steps: 16
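The sketch below shows how gradient accumulation and a fractional epoch count translate into an effective batch size and a number of optimizer steps. The card does not report the per-device batch size, the device count, or the number of training examples actually used, so `per_device_batch`, `num_devices`, and `num_examples` are hypothetical values chosen only for illustration.

```python
import math

# Values reported in this card.
GRADIENT_ACCUMULATION_STEPS = 16
EPOCHS = 0.1


def effective_batch_size(per_device_batch: int,
                         accumulation_steps: int = GRADIENT_ACCUMULATION_STEPS,
                         num_devices: int = 1) -> int:
    """Examples contributing to each optimizer update."""
    return per_device_batch * accumulation_steps * num_devices


def optimizer_steps(num_examples: int, per_device_batch: int,
                    epochs: float = EPOCHS,
                    accumulation_steps: int = GRADIENT_ACCUMULATION_STEPS,
                    num_devices: int = 1) -> int:
    """Optimizer updates needed to cover `epochs` passes over the data."""
    examples_seen = num_examples * epochs
    batch = effective_batch_size(per_device_batch, accumulation_steps, num_devices)
    return math.ceil(examples_seen / batch)


# Hypothetical inputs: neither value is stated in this card.
per_device_batch = 4
num_examples = 100_000

print(effective_batch_size(per_device_batch))           # examples per update
print(optimizer_steps(num_examples, per_device_batch))  # updates for 0.1 epochs
```

With a fractional epoch such as 0.1, the model sees only about a tenth of the training examples, so the step count is correspondingly small.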

Prompt Template:

<bos><start_of_turn>user

{PROMPT}<end_of_turn>

<start_of_turn>model

{OUTPUT} <end_of_turn>

<eos>
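As a minimal sketch, the template above can be filled in with plain string formatting. It assumes the template lines are separated by single newlines, and the helper names `build_training_example` and `build_inference_prompt` are hypothetical. The example instruction text is likewise illustrative, since the card does not specify how prompts were phrased.

```python
# Template as given in this card, assuming single newlines between lines.
TRAIN_TEMPLATE = (
    "<bos><start_of_turn>user\n"
    "{PROMPT}<end_of_turn>\n"
    "<start_of_turn>model\n"
    "{OUTPUT} <end_of_turn>\n"
    "<eos>"
)


def build_training_example(prompt: str, output: str) -> str:
    """Fill both slots, as done for a supervised training example."""
    return TRAIN_TEMPLATE.format(PROMPT=prompt, OUTPUT=output)


def build_inference_prompt(prompt: str) -> str:
    """Stop right after the model turn opens so the model writes the output."""
    return (
        "<bos><start_of_turn>user\n"
        f"{prompt}<end_of_turn>\n"
        "<start_of_turn>model\n"
    )


# Hypothetical instruction wording, for illustration only.
example = build_training_example("Translate to Hindi: Hello", "नमस्ते")
print(example)
print(build_inference_prompt("Translate to Hindi: Hello"))
```

Depending on tokenizer settings, `<bos>` may already be added automatically during tokenization. In that case, drop it from the string to avoid a duplicated token.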

license: apache-2.0
