This model was developed by the LLM research consortium of (주)미디어그룹사람과숲 and (주)마커.

The license is cc-by-nc-sa-4.0.

KoT-platypus2

CoT + KO-platypus2 = KoT-platypus2

Model Details

Model Developers: Kyujin Han (kyujinpy)

Input: The model accepts text only.

Output: The model generates text only.

Model Architecture

KoT-platypus2-13B is an auto-regressive language model based on the LLaMA2 transformer architecture.

Repo Link

Github KoT-platypus: KoT-platypus2

Base Model

KO-Platypus2-13B

More details (GitHub repo): CoT-llama2

More details (GitHub repo): KO-Platypus2

Training Dataset

The model was trained on KoCoT_2000, which was created by translating kaist-CoT with DeepL.

Training was run on a single A100 40GB GPU in Colab.

Training Hyperparameters

| Hyperparameter | Value |
|---|---|
| batch_size | 64 |
| micro_batch_size | 1 |
| Epochs | 15 |
| learning_rate | 1e-5 |
| cutoff_len | 4096 |
| lr_scheduler | linear |
| base_model | kyujinpy/KO-Platypus2-13B |
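With batch_size 64 and micro_batch_size 1, the effective batch is presumably reached through gradient accumulation. The sketch below assumes the convention used by common LLaMA fine-tuning scripts, where the number of accumulation steps is batch_size divided by micro_batch_size. The function name is illustrative and is not taken from the author's training code.

```python
def gradient_accumulation_steps(batch_size: int, micro_batch_size: int) -> int:
    """Number of micro-batches accumulated before each optimizer step.

    Assumption: effective batch = micro_batch_size * accumulation steps,
    as in common LLaMA fine-tuning scripts. Not confirmed by this model card.
    """
    if micro_batch_size <= 0 or batch_size % micro_batch_size != 0:
        raise ValueError("batch_size must be a positive multiple of micro_batch_size")
    return batch_size // micro_batch_size


# Values from the hyperparameter table above.
steps = gradient_accumulation_steps(batch_size=64, micro_batch_size=1)
print(steps)
```

Under this assumption, each optimizer update would see 64 sequences of up to 4096 tokens while only one sequence is held in GPU memory per forward pass.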

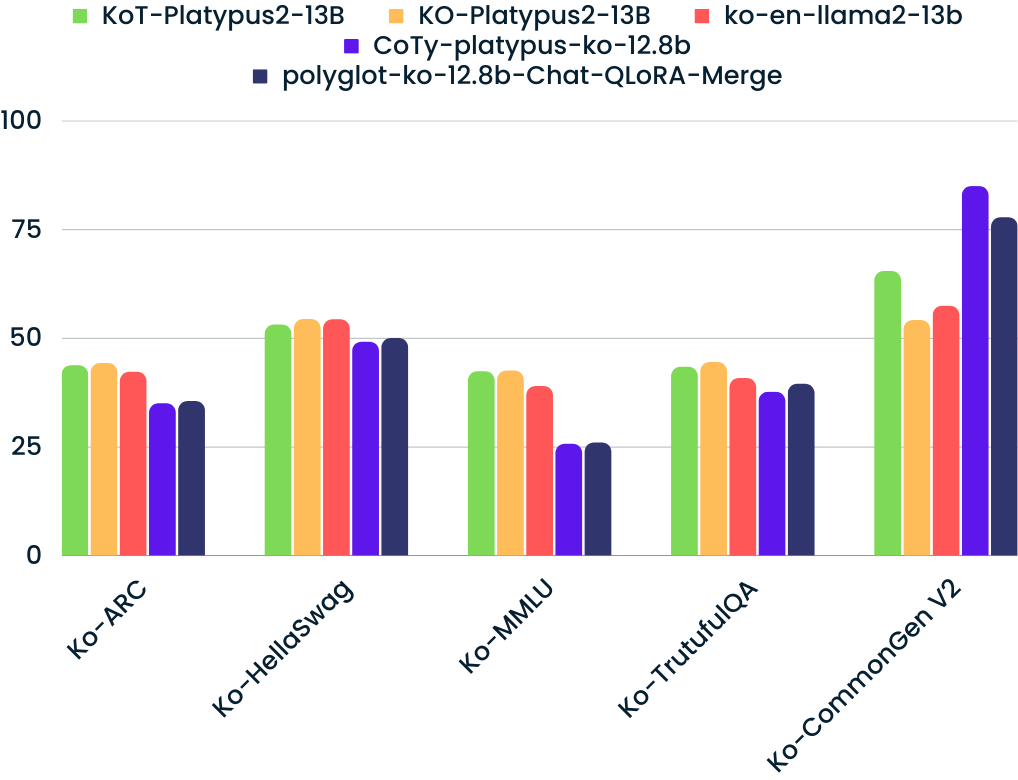

Model Benchmark

KO-LLM leaderboard

- Scores follow the Open KO-LLM Leaderboard.

| Model | Average | Ko-ARC | Ko-HellaSwag | Ko-MMLU | Ko-TruthfulQA | Ko-CommonGen V2 |
|---|---|---|---|---|---|---|
| KoT-Platypus2-13B(ours) | 49.55 | 43.69 | 53.05 | 42.29 | 43.34 | 65.38 |
| KO-Platypus2-13B | 47.90 | 44.20 | 54.31 | 42.47 | 44.41 | 54.11 |
| hyunseoki/ko-en-llama2-13b | 46.68 | 42.15 | 54.23 | 38.90 | 40.74 | 57.39 |
| MarkrAI/kyujin-CoTy-platypus-ko-12.8b | 46.44 | 34.98 | 49.11 | 25.68 | 37.59 | 84.86 |
| momo/polyglot-ko-12.8b-Chat-QLoRA-Merge | 45.71 | 35.49 | 49.93 | 25.97 | 39.43 | 77.70 |

Compared with the top 4 SOTA models. (updated: 10/07)
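The Average column appears to be the unweighted mean of the five task scores. The sketch below checks that assumption against the first two rows of the table. The dictionary and function names are illustrative.

```python
# Task scores copied from the benchmark table, in column order:
# Ko-ARC, Ko-HellaSwag, Ko-MMLU, Ko-TruthfulQA, Ko-CommonGen V2.
scores = {
    "KoT-Platypus2-13B": [43.69, 53.05, 42.29, 43.34, 65.38],
    "KO-Platypus2-13B": [44.20, 54.31, 42.47, 44.41, 54.11],
}


def average(task_scores: list[float]) -> float:
    """Unweighted mean, rounded to two decimals like the table."""
    return round(sum(task_scores) / len(task_scores), 2)


for name, task_scores in scores.items():
    print(name, average(task_scores))
```

The reported averages are computed by the leaderboard from unrounded scores, so recomputing them from the rounded table values can differ in the last digit for some rows.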

Implementation Code

    # Load KoT-platypus2-13B and its tokenizer
    from transformers import AutoModelForCausalLM, AutoTokenizer
    import torch

    repo = "kyujinpy/KoT-platypus2-13B"

    # float16 weights, placed automatically across the available devices
    model = AutoModelForCausalLM.from_pretrained(
        repo,
        return_dict=True,
        torch_dtype=torch.float16,
        device_map='auto'
    )
    tokenizer = AutoTokenizer.from_pretrained(repo)
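Once the model and tokenizer are loaded, text can be generated with `generate`. The following is a minimal sketch, not the author's inference code. The Alpaca-style prompt template in `build_prompt` is an assumption (this card does not state a prompt format), and `build_prompt`, `generate_answer`, and `max_new_tokens=256` are illustrative choices. Using `device_map="auto"` also assumes the `accelerate` package is installed.

```python
def build_prompt(instruction: str) -> str:
    # Assumed Alpaca-style template; the model card does not specify one.
    return f"### Instruction:\n{instruction}\n\n### Response:\n"


def generate_answer(instruction: str,
                    repo: str = "kyujinpy/KoT-platypus2-13B",
                    max_new_tokens: int = 256) -> str:
    # Heavy imports are kept local so build_prompt can be used without them.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(repo)
    model = AutoModelForCausalLM.from_pretrained(
        repo, torch_dtype=torch.float16, device_map="auto"
    )

    inputs = tokenizer(build_prompt(instruction), return_tensors="pt").to(model.device)
    with torch.no_grad():
        output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Drop the prompt tokens and decode only the newly generated part.
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate_answer("...")` downloads the 13B checkpoint on first use, so it needs a GPU with enough memory for float16 weights.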

Readme format: beomi/llama-2-ko-7b
