Model description

ShiftViT is a variation of the Vision Transformer (ViT) where the attention operation has been replaced with a shifting operation.

The ShiftViT model was proposed in the paper When Shift Operation Meets Vision Transformer: An Extremely Simple Alternative to Attention Mechanism. Vision Transformers have recently become very popular for computer vision problems, and many researchers attribute their success to the attention layers. With ShiftViT, the authors try to show that ViTs can reach SoTA results even without the attention operation.

Model Architecture

The architecture of ShiftViT is inspired by the paper Swin Transformer: Hierarchical Vision Transformer using Shifted Windows.

The authors propose a modular architecture with 4 stages. Each stage operates at its own spatial resolution, which makes the architecture hierarchical.

Figure 1: The entire architecture of ShiftViT. (Source)
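To make "each stage works on its own spatial size" concrete, the sketch below computes the feature-map shape seen by each of the 4 stages. It assumes Swin-style downsampling (a 4x4 patch embedding before stage 1, then patch merging that halves height and width and doubles channels before each later stage); the input size of 224 and embedding width of 96 are hypothetical values chosen for illustration, not settings of this CIFAR-10 model.

```python
def stage_shapes(image_size, patch_size=4, embed_dim=96, num_stages=4):
    """Return (height, width, channels) of the feature map processed by each stage.

    Assumes Swin-style downsampling: a patch_size x patch_size patch embedding
    before stage 1, then patch merging (halve H and W, double C) before every
    later stage.
    """
    h = w = image_size // patch_size
    c = embed_dim
    shapes = [(h, w, c)]
    for _ in range(num_stages - 1):
        # Patch merging: spatial resolution halves, channel width doubles.
        h, w, c = h // 2, w // 2, c * 2
        shapes.append((h, w, c))
    return shapes


print(stage_shapes(224))
# [(56, 56, 96), (28, 28, 192), (14, 14, 384), (7, 7, 768)]
```

Each stage therefore trades spatial resolution for channel width, which is what produces the hierarchy.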

Each stage in the ShiftViT architecture is built from Shift Blocks, as shown in Fig. 2.

As shown in Fig. 2, the Shift Block comprises the following:

- Shift Operation

- Layer Normalization

- MLP Layer: a stack of densely connected layers
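The composition of a Shift Block can be sketched in NumPy for a single (H, W, C) feature map. This is a minimal illustration under stated assumptions: the residual connection around layer normalization and the MLP, the two-layer MLP with a GELU activation, and the channel expansion ratio of 2 are assumptions rather than details given in this card. The helper names are hypothetical, and the identity function in the usage example is only a placeholder for the real shift operation.

```python
import numpy as np


def layer_norm(x, eps=1e-5):
    """Normalize each position's channel vector to zero mean and unit variance
    (learned scale and shift omitted for brevity)."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def gelu(x):
    """tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


def mlp(x, w1, b1, w2, b2):
    """Two densely connected layers: expand the channels, apply GELU, project back."""
    return gelu(x @ w1 + b1) @ w2 + b2


def shift_block(x, shift_fn, params):
    """Shift -> LayerNorm -> MLP, with an (assumed) residual around LayerNorm + MLP."""
    shifted = shift_fn(x)
    return shifted + mlp(layer_norm(shifted), *params)


rng = np.random.default_rng(0)
h, w, c, expand = 8, 8, 12, 2  # hypothetical sizes
params = (
    rng.normal(0.0, 0.02, (c, c * expand)), np.zeros(c * expand),
    rng.normal(0.0, 0.02, (c * expand, c)), np.zeros(c),
)
x = rng.normal(size=(h, w, c))
y = shift_block(x, shift_fn=lambda t: t, params=params)  # identity stands in for the shift
print(y.shape)  # (8, 8, 12)
```

Because the shift itself has no parameters, all learnable weights in this sketch live in the MLP (and, in a full implementation, in the layer normalization's scale and shift).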

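How the shift operation works:

- Split the channels into groups.

- Shift each of the first four splits in its respective direction (left, right, up, and down), padding the vacated border.

- After shifting and padding, concatenate the channel groups back together. The remaining splits are left unchanged.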
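The three steps above can be sketched in NumPy for a single (H, W, C) feature map. The number of channel groups (num_div=12), the one-pixel shift, and zero padding are assumptions made for illustration, and the helper names shift_operation and _shift are hypothetical.

```python
import numpy as np


def _shift(t, dy, dx):
    """Translate an (H, W, C) array by (dy, dx) pixels, zero-filling vacated cells."""
    h, w, _ = t.shape
    out = np.zeros_like(t)
    # Destination and source slices along each spatial axis.
    ys_dst = slice(max(dy, 0), h + min(dy, 0))
    ys_src = slice(max(-dy, 0), h + min(-dy, 0))
    xs_dst = slice(max(dx, 0), w + min(dx, 0))
    xs_src = slice(max(-dx, 0), w + min(-dx, 0))
    out[ys_dst, xs_dst] = t[ys_src, xs_src]
    return out


def shift_operation(x, num_div=12, shift_pixel=1):
    """Split channels into num_div groups, shift the first four, concatenate back."""
    splits = np.split(x, num_div, axis=-1)  # requires C % num_div == 0
    s = shift_pixel
    splits[0] = _shift(splits[0], 0, -s)  # left
    splits[1] = _shift(splits[1], 0, s)   # right
    splits[2] = _shift(splits[2], -s, 0)  # up
    splits[3] = _shift(splits[3], s, 0)   # down
    # Groups 4..num_div-1 pass through untouched.
    return np.concatenate(splits, axis=-1)


x = np.arange(4 * 4 * 12, dtype=float).reshape(4, 4, 12)
y = shift_operation(x)
print(y.shape)  # (4, 4, 12)
```

Only a fraction of the channels moves (here 4 of 12 groups), so each position mixes in information from its four neighbours at no parameter or FLOP cost.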

Intended uses

This ShiftViT model is trained for image classification.

However, the ShiftViT architecture can be used for a variety of visual recognition tasks. The authors of the ShiftViT paper tested the model on the following tasks:

- Image Classification on the ImageNet-1k dataset

- Object Detection on the COCO dataset

- Semantic Segmentation on the ADE20K dataset

Training and evaluation data

The model is trained on CIFAR-10, a popular image classification dataset of 32x32 color images belonging to the following 10 classes:

| Classes |
|---|
| airplane |
| automobile |
| bird |
| cat |
| deer |
| dog |
| frog |
| horse |
| ship |
| truck |
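The standard CIFAR-10 integer labels 0 to 9 follow the order of the table above (airplane = 0, ..., truck = 9). Assuming the model's 10 outputs use that same order, a batch of predicted scores can be decoded into class names as sketched below; decode_predictions is a hypothetical helper, not part of any library.

```python
import numpy as np

# Assumed: output index i corresponds to CIFAR-10 label i, in the order listed above.
CIFAR10_CLASSES = [
    "airplane", "automobile", "bird", "cat", "deer",
    "dog", "frog", "horse", "ship", "truck",
]


def decode_predictions(scores):
    """Map a (batch, 10) array of class scores to class-name strings."""
    scores = np.asarray(scores)
    return [CIFAR10_CLASSES[i] for i in scores.argmax(axis=-1)]


print(decode_predictions([[0.1, 0.0, 0.2, 2.5, 0.0, 0.3, 0.0, 0.0, 0.0, 0.0]]))  # ['cat']
```

Taking the argmax works the same whether the model outputs logits or probabilities.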

The numbers of samples used for training and evaluation are:

- Training samples: 40000

- Validation samples: 10000

- Testing samples: 10000
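CIFAR-10 ships with 50,000 training images and 10,000 test images, so the counts above are consistent with holding out 10,000 of the official training images for validation. A minimal sketch of such a split over image indices follows; whether the split was positional or shuffled is not stated here, so both options (and the helper name split_train_val) are assumptions.

```python
import numpy as np


def split_train_val(pool_size=50_000, num_val=10_000, seed=None):
    """Split indices of CIFAR-10's official training set into train/validation subsets.

    With seed=None the split is positional (first 40,000 for training, last
    10,000 for validation); pass a seed to shuffle first. Both behaviours are
    assumptions, not necessarily what was used for this model.
    """
    idx = np.arange(pool_size)
    if seed is not None:
        np.random.default_rng(seed).shuffle(idx)
    cut = pool_size - num_val
    return idx[:cut], idx[cut:]


train_idx, val_idx = split_train_val()
print(len(train_idx), len(val_idx))  # 40000 10000
```

The 10,000 official test images stay untouched and serve as the test set.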

Training procedure

- Data Preparation:

  - Data Augmentation: The augmentation steps used for data preparation include rescaling, resizing, cropping, and horizontal flipping.

- Building the ShiftViT Model:

  - The steps for constructing the ShiftViT model are covered in detail in this Keras example.

- Model Training:

The model is then trained using the following config:

| Training Config | Value |
|---|---|
| Optimizer | AdamW |
| Loss Function | sparse_categorical_crossentropy |
| Metric | Accuracy |
| Epochs | 5 |

- Model Testing:

After training, the model achieves an accuracy of ~90% on the test data.
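The augmentation steps listed under Data Preparation (rescaling, resizing, cropping, and horizontal flipping) can be sketched in NumPy for a single image. This is a swapped-in illustration, not the Keras preprocessing layers used by the original example: the sizes resize_to=52 and crop_to=48 are hypothetical, nearest-neighbour indexing stands in for a proper interpolating resize, and augment is a hypothetical helper name.

```python
import numpy as np


def augment(image, resize_to=52, crop_to=48, rng=None):
    """Rescale -> resize -> random crop -> random horizontal flip for one (H, W, 3) uint8 image."""
    rng = np.random.default_rng() if rng is None else rng
    x = image.astype(np.float32) / 255.0  # rescale pixel values to [0, 1]
    h, w, _ = x.shape
    # Nearest-neighbour resize (stand-in for bilinear resizing).
    rows = (np.arange(resize_to) * h / resize_to).astype(int)
    cols = (np.arange(resize_to) * w / resize_to).astype(int)
    x = x[rows][:, cols]
    # Random crop of size crop_to x crop_to.
    top = rng.integers(0, resize_to - crop_to + 1)
    left = rng.integers(0, resize_to - crop_to + 1)
    x = x[top:top + crop_to, left:left + crop_to]
    # Horizontal flip with probability 0.5.
    if rng.random() < 0.5:
        x = x[:, ::-1]
    return x


img = np.random.default_rng(0).integers(0, 256, size=(32, 32, 3), dtype=np.uint8)
out = augment(img, rng=np.random.default_rng(1))
print(out.shape)  # (48, 48, 3)
```

Resizing slightly above the crop size before cropping lets each epoch see a randomly offset view of every image.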

Training hyperparameters

The following hyperparameters were used during training:

| Hyperparameter | Value |
|---|---|
| name | AdamW |
| learning_rate.class_name | WarmUpCosine |
| learning_rate.config.lr_start | 1e-05 |
| learning_rate.config.lr_max | 0.001 |
| learning_rate.config.total_steps | 312 |
| learning_rate.config.warmup_steps | 46 |
| decay | 0.0 |
| beta_1 | 0.9 |
| beta_2 | 0.999 |
| epsilon | 1e-07 |
| amsgrad | False |
| weight_decay | 1e-04 |
| exclude_from_weight_decay | None |
| training_precision | float32 |
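The table names a WarmUpCosine learning-rate schedule but not its formula. A common form, assumed here, is a linear warm-up from lr_start to lr_max over warmup_steps, followed by cosine decay to zero at total_steps. The sketch below plugs in the values from the table (46 warm-up steps is roughly 15% of the 312 total steps); the function name warmup_cosine is hypothetical.

```python
import math


def warmup_cosine(step, lr_start=1e-5, lr_max=1e-3, warmup_steps=46, total_steps=312):
    """Learning rate at a given step: linear warm-up, then cosine decay to zero (assumed form)."""
    if step > total_steps:
        return 0.0
    if step < warmup_steps:
        # Linear ramp from lr_start towards lr_max.
        return lr_start + (lr_max - lr_start) * step / warmup_steps
    # Cosine decay from lr_max at the end of warm-up down to 0 at total_steps.
    progress = (step - warmup_steps) / (total_steps - warmup_steps)
    return 0.5 * lr_max * (1.0 + math.cos(math.pi * progress))


for s in (0, 23, 46, 179, 312):
    print(s, warmup_cosine(s))
```

The short warm-up keeps early AdamW updates small while the optimizer's moment estimates are still noisy.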

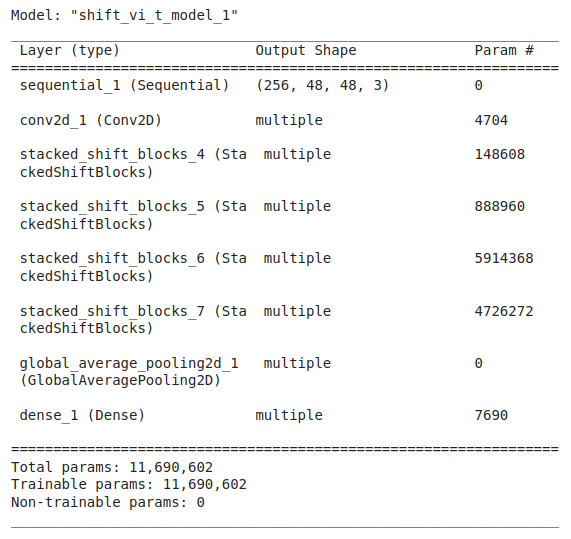

Model Plot

Credits:

- HF Contribution: Shivalika Singh

- Full credit to the original Keras example by Aritra Roy Gosthipaty and Ritwik Raha

- Check out the demo space here
