OWLv2

This model was released on 2023-06-16 and added to Hugging Face Transformers on 2023-10-13.

Overview

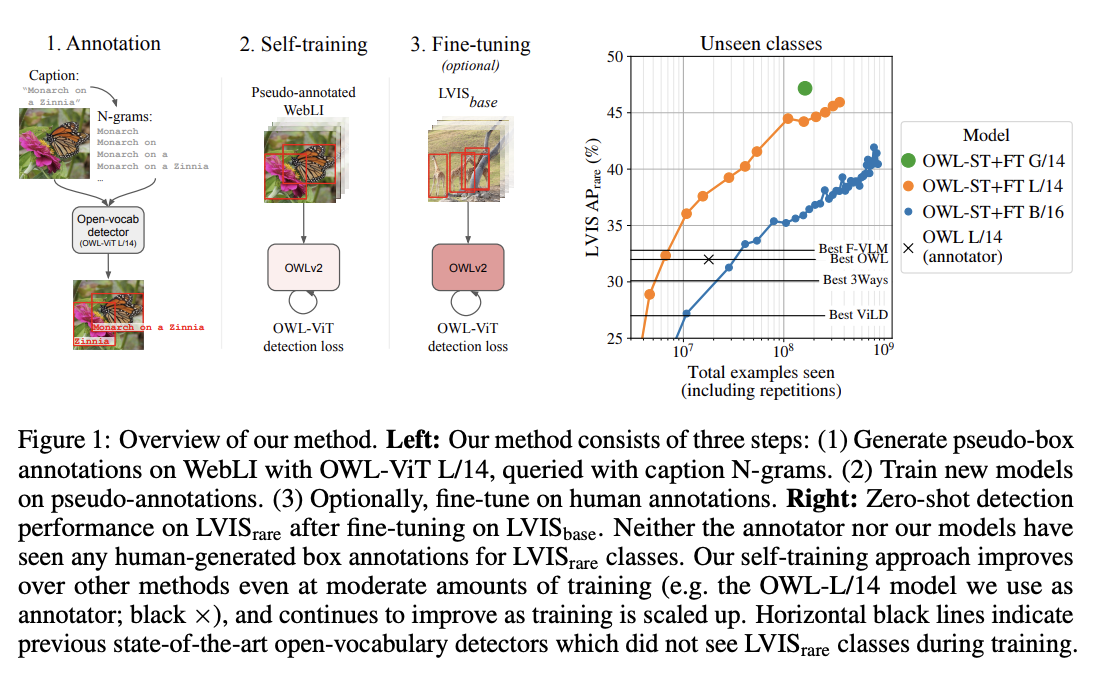

OWLv2 was proposed in Scaling Open-Vocabulary Object Detection by Matthias Minderer, Alexey Gritsenko, and Neil Houlsby. OWLv2 scales up OWL-ViT using self-training, in which an existing detector generates pseudo-box annotations on image-text pairs. This results in large gains over the previous state of the art for zero-shot object detection.

The abstract from the paper is the following:

Open-vocabulary object detection has benefited greatly from pretrained vision-language models, but is still limited by the amount of available detection training data. While detection training data can be expanded by using Web image-text pairs as weak supervision, this has not been done at scales comparable to image-level pretraining. Here, we scale up detection data with self-training, which uses an existing detector to generate pseudo-box annotations on image-text pairs. Major challenges in scaling self-training are the choice of label space, pseudo-annotation filtering, and training efficiency. We present the OWLv2 model and OWL-ST self-training recipe, which address these challenges. OWLv2 surpasses the performance of previous state-of-the-art open-vocabulary detectors already at comparable training scales (~10M examples). However, with OWL-ST, we can scale to over 1B examples, yielding further large improvement: With an L/14 architecture, OWL-ST improves AP on LVIS rare classes, for which the model has seen no human box annotations, from 31.2% to 44.6% (43% relative improvement). OWL-ST unlocks Web-scale training for open-world localization, similar to what has been seen for image classification and language modelling.

OWLv2 high-level overview. Taken from the original paper.

This model was contributed by nielsr. The original code can be found here.

Usage example

OWLv2 is, just like its predecessor OWL-ViT, a zero-shot text-conditioned object detection model. OWL-ViT uses CLIP as its multi-modal backbone, with a ViT-like Transformer to get visual features and a causal language model to get the text features. To use CLIP for detection, OWL-ViT removes the final token pooling layer of the vision model and attaches a lightweight classification and box head to each transformer output token. Open-vocabulary classification is enabled by replacing the fixed classification layer weights with the class-name embeddings obtained from the text model. The authors first train CLIP from scratch and fine-tune it end-to-end with the classification and box heads on standard detection datasets using a bipartite matching loss. One or multiple text queries per image can be used to perform zero-shot text-conditioned object detection.
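The open-vocabulary classification step described above can be illustrated with a minimal sketch: each image token embedding is compared against each text query embedding, and the similarity acts as the class logit. The function names and the toy 2-D embeddings are hypothetical, and the sketch omits details the real class head may apply (learned projections, logit shift and scale).

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length (a zero vector is returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def open_vocab_logits(token_embeds, query_embeds):
    """Score every image token against every text query with cosine similarity."""
    tokens = [l2_normalize(t) for t in token_embeds]
    queries = [l2_normalize(q) for q in query_embeds]
    return [[sum(a * b for a, b in zip(t, q)) for q in queries] for t in tokens]


# Toy 2-D embeddings (hypothetical values, not real model outputs)
token_embeds = [[1.0, 0.0], [0.0, 2.0], [3.0, 1.0]]
query_embeds = [[2.0, 0.0], [0.0, 1.0]]  # e.g. "a photo of a cat", "a photo of a dog"

logits = open_vocab_logits(token_embeds, query_embeds)
# Index of the best-matching query for each image token
best_query_per_token = [max(range(len(row)), key=row.__getitem__) for row in logits]
print(best_query_per_token)  # [0, 1, 0]
```

Because the "classifier weights" are just text embeddings, changing the set of detectable classes only requires encoding new query strings.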

Owlv2ImageProcessor can be used to resize (or rescale) and normalize images for the model and CLIPTokenizer is used to encode the text. Owlv2Processor wraps Owlv2ImageProcessor and CLIPTokenizer into a single instance to both encode the text and prepare the images. The following example shows how to perform object detection using Owlv2Processor and Owlv2ForObjectDetection.

>>> import requests
>>> from PIL import Image
>>> import torch
>>> from transformers import Owlv2Processor, Owlv2ForObjectDetection

>>> processor = Owlv2Processor.from_pretrained("google/owlv2-base-patch16-ensemble")
>>> model = Owlv2ForObjectDetection.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)
>>> text_labels = [["a photo of a cat", "a photo of a dog"]]
>>> inputs = processor(text=text_labels, images=image, return_tensors="pt")
>>> outputs = model(**inputs)

>>> # Target image sizes (height, width) to rescale box predictions [batch_size, 2]
>>> target_sizes = torch.tensor([(image.height, image.width)])
>>> # Convert outputs (bounding boxes and class logits) to Pascal VOC format (xmin, ymin, xmax, ymax)
>>> results = processor.post_process_grounded_object_detection(
...     outputs=outputs, target_sizes=target_sizes, threshold=0.1, text_labels=text_labels
... )
>>> # Retrieve predictions for the first image for the corresponding text queries
>>> result = results[0]
>>> boxes, scores, text_labels = result["boxes"], result["scores"], result["text_labels"]
>>> for box, score, text_label in zip(boxes, scores, text_labels):
...     box = [round(i, 2) for i in box.tolist()]
...     print(f"Detected {text_label} with confidence {round(score.item(), 3)} at location {box}")
Detected a photo of a cat with confidence 0.614 at location [341.67, 23.39, 642.32, 371.35]
Detected a photo of a cat with confidence 0.665 at location [6.75, 51.96, 326.62, 473.13]

Resources

- A demo notebook on using OWLv2 for zero- and one-shot (image-guided) object detection can be found here.

- Zero-shot object detection task guide

The architecture of OWLv2 is identical to OWL-ViT, however the object detection head now also includes an objectness classifier, which predicts the (query-agnostic) likelihood that a predicted box contains an object (as opposed to background). The objectness score can be used to rank or filter predictions independently of text queries. Usage of OWLv2 is identical to OWL-ViT with a new, updated image processor (Owlv2ImageProcessor).
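As a minimal sketch of how a query-agnostic objectness score can rank predictions, the snippet below converts per-box objectness logits to probabilities and keeps the top-k boxes. The logit values and the helper name `top_k_by_objectness` are hypothetical. In Transformers the detection output appears to expose these logits as `objectness_logits`, but treat that attribute name as an assumption and check the output class before relying on it.

```python
import math


def sigmoid(x):
    """Map a logit to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def top_k_by_objectness(objectness_logits, k):
    """Return (index, probability) pairs for the k boxes most likely to contain an object."""
    probs = [sigmoid(v) for v in objectness_logits]
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(i, probs[i]) for i in order[:k]]


# Hypothetical objectness logits for five predicted boxes
objectness_logits = [-2.0, 0.5, 3.0, -0.1, 1.2]
top = top_k_by_objectness(objectness_logits, k=2)
print([i for i, _ in top])  # [2, 4]
```

Since this ranking does not involve any text query, it can be used to pick candidate boxes before deciding what to call them.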

Owlv2Config

class transformers.Owlv2Config

( text_config = None vision_config = None projection_dim = 512 logit_scale_init_value = 2.6592 return_dict = True **kwargs )

Parameters

- text_config (dict, optional) — Dictionary of configuration options used to initialize Owlv2TextConfig.

- vision_config (dict, optional) — Dictionary of configuration options used to initialize Owlv2VisionConfig.

- projection_dim (int, optional, defaults to 512) — Dimensionality of text and vision projection layers.

- logit_scale_init_value (float, optional, defaults to 2.6592) — The initial value of the logit_scale parameter. Default is used as per the original OWLv2 implementation.

- return_dict (bool, optional, defaults to True) — Whether or not the model should return a dictionary. If False, returns a tuple.

- kwargs (optional) — Dictionary of keyword arguments.

Owlv2Config is the configuration class to store the configuration of an Owlv2Model. It is used to instantiate an OWLv2 model according to the specified arguments, defining the text model and vision model configs. Instantiating a configuration with the defaults will yield a similar configuration to that of the OWLv2 google/owlv2-base-patch16 architecture.

Configuration objects inherit from PreTrainedConfig and can be used to control the model outputs. Read the documentation from PreTrainedConfig for more information.

Owlv2TextConfig

class transformers.Owlv2TextConfig

( vocab_size = 49408 hidden_size = 512 intermediate_size = 2048 num_hidden_layers = 12 num_attention_heads = 8 max_position_embeddings = 16 hidden_act = 'quick_gelu' layer_norm_eps = 1e-05 attention_dropout = 0.0 initializer_range = 0.02 initializer_factor = 1.0 pad_token_id = 0 bos_token_id = 49406 eos_token_id = 49407 **kwargs )

Parameters

- vocab_size (int, optional, defaults to 49408) — Vocabulary size of the OWLv2 text model. Defines the number of different tokens that can be represented by the input_ids passed when calling Owlv2TextModel.

- hidden_size (int, optional, defaults to 512) — Dimensionality of the encoder layers and the pooler layer.

- intermediate_size (int, optional, defaults to 2048) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the Transformer encoder.

- num_hidden_layers (int, optional, defaults to 12) — Number of hidden layers in the Transformer encoder.

- num_attention_heads (int, optional, defaults to 8) — Number of attention heads for each attention layer in the Transformer encoder.

- max_position_embeddings (int, optional, defaults to 16) — The maximum sequence length that this model might ever be used with. Typically set this to something large just in case (e.g., 512 or 1024 or 2048).

- hidden_act (str or function, optional, defaults to "quick_gelu") — The non-linear activation function (function or string) in the encoder and pooler. If string, "gelu", "relu", "selu", "gelu_new" and "quick_gelu" are supported.

- layer_norm_eps (float, optional, defaults to 1e-05) — The epsilon used by the layer normalization layers.

- attention_dropout (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- initializer_factor (float, optional, defaults to 1.0) — A factor for initializing all weight matrices (should be kept to 1, used internally for initialization testing).

- pad_token_id (int, optional, defaults to 0) — The id of the padding token in the input sequences.

- bos_token_id (int, optional, defaults to 49406) — The id of the beginning-of-sequence token in the input sequences.

- eos_token_id (int, optional, defaults to 49407) — The id of the end-of-sequence token in the input sequences.

This is the configuration class to store the configuration of an Owlv2TextModel. It is used to instantiate an Owlv2 text encoder according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the Owlv2 google/owlv2-base-patch16 architecture.

Configuration objects inherit from PreTrainedConfig and can be used to control the model outputs. Read the documentation from PreTrainedConfig for more information.

Example:

>>> from transformers import Owlv2TextConfig, Owlv2TextModel

>>> # Initializing an Owlv2TextConfig with google/owlv2-base-patch16 style configuration
>>> configuration = Owlv2TextConfig()

>>> # Initializing an Owlv2TextModel (with random weights) from the google/owlv2-base-patch16 style configuration
>>> model = Owlv2TextModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

Owlv2VisionConfig

class transformers.Owlv2VisionConfig

( hidden_size = 768 intermediate_size = 3072 num_hidden_layers = 12 num_attention_heads = 12 num_channels = 3 image_size = 768 patch_size = 16 hidden_act = 'quick_gelu' layer_norm_eps = 1e-05 attention_dropout = 0.0 initializer_range = 0.02 initializer_factor = 1.0 **kwargs )

Parameters

- hidden_size (int, optional, defaults to 768) — Dimensionality of the encoder layers and the pooler layer.

- intermediate_size (int, optional, defaults to 3072) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the Transformer encoder.

- num_hidden_layers (int, optional, defaults to 12) — Number of hidden layers in the Transformer encoder.

- num_attention_heads (int, optional, defaults to 12) — Number of attention heads for each attention layer in the Transformer encoder.

- num_channels (int, optional, defaults to 3) — Number of channels in the input images.

- image_size (int, optional, defaults to 768) — The size (resolution) of each image.

- patch_size (int, optional, defaults to 16) — The size (resolution) of each patch.

- hidden_act (str or function, optional, defaults to "quick_gelu") — The non-linear activation function (function or string) in the encoder and pooler. If string, "gelu", "relu", "selu", "gelu_new" and "quick_gelu" are supported.

- layer_norm_eps (float, optional, defaults to 1e-05) — The epsilon used by the layer normalization layers.

- attention_dropout (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- initializer_factor (float, optional, defaults to 1.0) — A factor for initializing all weight matrices (should be kept to 1, used internally for initialization testing).

This is the configuration class to store the configuration of an Owlv2VisionModel. It is used to instantiate an OWLv2 image encoder according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the OWLv2 google/owlv2-base-patch16 architecture.
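The relationship between image_size and patch_size can be made concrete with a little arithmetic. The sketch below assumes a square input split into non-overlapping square patches, with one box prediction per patch token (as the usage section describes for the detection heads). The helper name `num_patch_tokens` is hypothetical.

```python
def num_patch_tokens(image_size, patch_size):
    """Number of non-overlapping patches (and hence per-token predictions) for a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    per_side = image_size // patch_size
    return per_side * per_side


# Defaults documented above: image_size=768, patch_size=16
print(num_patch_tokens(768, 16))  # 2304
# A 960 x 960 input (the image processor's default size) with the same patch size
print(num_patch_tokens(960, 16))  # 3600
```

Doubling the resolution at a fixed patch size quadruples the number of tokens, which is worth keeping in mind for memory use.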

Configuration objects inherit from PreTrainedConfig and can be used to control the model outputs. Read the documentation from PreTrainedConfig for more information.

Example:

>>> from transformers import Owlv2VisionConfig, Owlv2VisionModel

>>> # Initializing an Owlv2VisionConfig with google/owlv2-base-patch16 style configuration
>>> configuration = Owlv2VisionConfig()

>>> # Initializing an Owlv2VisionModel (with random weights) from the google/owlv2-base-patch16 style configuration
>>> model = Owlv2VisionModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

Owlv2ImageProcessor

class transformers.Owlv2ImageProcessor

( do_rescale: bool = True rescale_factor: typing.Union[int, float] = 0.00392156862745098 do_pad: bool = True do_resize: bool = True size: typing.Optional[dict[str, int]] = None resample: Resampling = <Resampling.BILINEAR: 2> do_normalize: bool = True image_mean: typing.Union[float, list[float], NoneType] = None image_std: typing.Union[float, list[float], NoneType] = None **kwargs )

Parameters

- do_rescale (bool, optional, defaults to True) — Whether to rescale the image by the specified scale rescale_factor. Can be overridden by do_rescale in the preprocess method.

- rescale_factor (int or float, optional, defaults to 1/255) — Scale factor to use if rescaling the image. Can be overridden by rescale_factor in the preprocess method.

- do_pad (bool, optional, defaults to True) — Whether to pad the image to a square with gray pixels on the bottom and the right. Can be overridden by do_pad in the preprocess method.

- do_resize (bool, optional, defaults to True) — Controls whether to resize the image’s (height, width) dimensions to the specified size. Can be overridden by do_resize in the preprocess method.

- size (dict[str, int], optional, defaults to {"height": 960, "width": 960}) — Size to resize the image to. Can be overridden by size in the preprocess method.

- resample (PILImageResampling, optional, defaults to Resampling.BILINEAR) — Resampling method to use if resizing the image. Can be overridden by resample in the preprocess method.

- do_normalize (bool, optional, defaults to True) — Whether to normalize the image. Can be overridden by the do_normalize parameter in the preprocess method.

- image_mean (float or list[float], optional, defaults to OPENAI_CLIP_MEAN) — Mean to use if normalizing the image. This is a float or list of floats the length of the number of channels in the image. Can be overridden by the image_mean parameter in the preprocess method.

- image_std (float or list[float], optional, defaults to OPENAI_CLIP_STD) — Standard deviation to use if normalizing the image. This is a float or list of floats the length of the number of channels in the image. Can be overridden by the image_std parameter in the preprocess method.

Constructs an OWLv2 image processor.
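To make the parameters above more tangible, here is a rough pure-Python sketch of three of the steps on a tiny image: rescaling by 1/255, padding to a square with gray pixels on the bottom and right, and per-channel normalization. It is an illustration under stated assumptions, not the library's implementation: the step order, the gray fill value of 0.5, and the mean/std constants (the commonly published CLIP statistics) are assumptions, and the resize step is omitted.

```python
# Assumed values of OPENAI_CLIP_MEAN / OPENAI_CLIP_STD (commonly published CLIP statistics)
CLIP_MEAN = [0.48145466, 0.4578275, 0.40821073]
CLIP_STD = [0.26862954, 0.26130258, 0.27577711]


def rescale(image, factor=1 / 255):
    """Scale pixel values; image is an H x W x C nested list with values in 0..255."""
    return [[[v * factor for v in px] for px in row] for row in image]


def pad_to_square(image, fill=0.5):
    """Pad on the right and bottom with a constant (gray) value until H == W."""
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    side = max(height, width)
    padded = [row + [[fill] * channels for _ in range(side - width)] for row in image]
    padded += [[[fill] * channels for _ in range(side)] for _ in range(side - height)]
    return padded


def normalize(image, mean, std):
    """Subtract the per-channel mean and divide by the per-channel standard deviation."""
    return [[[(v - m) / s for v, m, s in zip(px, mean, std)] for px in row] for row in image]


# A 1 x 3 RGB image: one red, one green and one blue pixel
image = [[[255, 0, 0], [0, 255, 0], [0, 0, 255]]]
out = normalize(pad_to_square(rescale(image)), CLIP_MEAN, CLIP_STD)
print(len(out), len(out[0]))  # 3 3
```

Padding before resizing keeps the aspect ratio of the original content intact, which is why box coordinates later need to be interpreted relative to the padded square.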

preprocess

( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), list['PIL.Image.Image'], list[numpy.ndarray], list['torch.Tensor']] do_pad: typing.Optional[bool] = None do_resize: typing.Optional[bool] = None size: typing.Optional[dict[str, int]] = None do_rescale: typing.Optional[bool] = None rescale_factor: typing.Optional[float] = None do_normalize: typing.Optional[bool] = None image_mean: typing.Union[float, list[float], NoneType] = None image_std: typing.Union[float, list[float], NoneType] = None return_tensors: typing.Union[str, transformers.utils.generic.TensorType, NoneType] = None data_format: ChannelDimension = <ChannelDimension.FIRST: 'channels_first'> input_data_format: typing.Union[str, transformers.image_utils.ChannelDimension, NoneType] = None )

Parameters

- images (ImageInput) — Image to preprocess. Expects a single or batch of images with pixel values ranging from 0 to 255. If passing in images with pixel values between 0 and 1, set do_rescale=False.

- do_pad (bool, optional, defaults to self.do_pad) — Whether to pad the image to a square with gray pixels on the bottom and the right.

- do_resize (bool, optional, defaults to self.do_resize) — Whether to resize the image.

- size (dict[str, int], optional, defaults to self.size) — Size to resize the image to.

- do_rescale (bool, optional, defaults to self.do_rescale) — Whether to rescale the image values to the range [0, 1].

- rescale_factor (float, optional, defaults to self.rescale_factor) — Rescale factor to rescale the image by if do_rescale is set to True.

- do_normalize (bool, optional, defaults to self.do_normalize) — Whether to normalize the image.

- image_mean (float or list[float], optional, defaults to self.image_mean) — Image mean.

- image_std (float or list[float], optional, defaults to self.image_std) — Image standard deviation.

- return_tensors (str or TensorType, optional) — The type of tensors to return. Can be one of:
  - Unset: Return a list of np.ndarray.
  - TensorType.PYTORCH or 'pt': Return a batch of type torch.Tensor.
  - TensorType.NUMPY or 'np': Return a batch of type np.ndarray.

- data_format (ChannelDimension or str, optional, defaults to ChannelDimension.FIRST) — The channel dimension format for the output image. Can be one of:
  - "channels_first" or ChannelDimension.FIRST: image in (num_channels, height, width) format.
  - "channels_last" or ChannelDimension.LAST: image in (height, width, num_channels) format.
  - Unset: Use the channel dimension format of the input image.

- input_data_format (ChannelDimension or str, optional) — The channel dimension format for the input image. If unset, the channel dimension format is inferred from the input image. Can be one of:
  - "channels_first" or ChannelDimension.FIRST: image in (num_channels, height, width) format.
  - "channels_last" or ChannelDimension.LAST: image in (height, width, num_channels) format.
  - "none" or ChannelDimension.NONE: image in (height, width) format.

Preprocess an image or batch of images.

post_process_object_detection

( outputs: Owlv2ObjectDetectionOutput threshold: float = 0.1 target_sizes: typing.Union[transformers.utils.generic.TensorType, list[tuple], NoneType] = None ) → list[Dict]

Parameters

- outputs (Owlv2ObjectDetectionOutput) — Raw outputs of the model.

- threshold (float, optional, defaults to 0.1) — Score threshold to keep object detection predictions.

- target_sizes (torch.Tensor or list[tuple[int, int]], optional) — Tensor of shape (batch_size, 2) or list of tuples (tuple[int, int]) containing the target size (height, width) of each image in the batch. If unset, predictions will not be resized.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the following keys:

- “scores”: The confidence scores for each predicted box on the image.

- “labels”: Indexes of the classes predicted by the model on the image.

- “boxes”: Image bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.

Converts the raw output of Owlv2ForObjectDetection into final bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.
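The conversion described here can be sketched in plain Python for a single image: take the best query per box, turn its logit into a score, drop boxes below the threshold, and convert normalized center-format boxes to absolute corner coordinates. This is a simplified illustration, not the library's code. In particular, the assumption that raw boxes are normalized (center_x, center_y, width, height) and that they are scaled by the longer image side (because the image was padded to a square) should be checked against the implementation.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def post_process(logits, boxes, target_size, threshold=0.1):
    """logits: per-box list of per-query logits; boxes: normalized (cx, cy, w, h) tuples."""
    height, width = target_size
    # Assumption: the image was padded to a square, so both axes share one scale factor
    scale = max(height, width)
    results = []
    for box_logits, (cx, cy, w, h) in zip(logits, boxes):
        label = max(range(len(box_logits)), key=box_logits.__getitem__)
        score = sigmoid(box_logits[label])
        if score <= threshold:
            continue  # discard low-confidence predictions
        corners = [
            (cx - w / 2) * scale,  # top_left_x
            (cy - h / 2) * scale,  # top_left_y
            (cx + w / 2) * scale,  # bottom_right_x
            (cy + h / 2) * scale,  # bottom_right_y
        ]
        results.append({"score": score, "label": label, "box": corners})
    return results


# Hypothetical raw predictions for two boxes and two text queries
logits = [[2.0, -1.0], [-5.0, -4.0]]
boxes = [(0.5, 0.5, 0.2, 0.4), (0.1, 0.1, 0.1, 0.1)]
detections = post_process(logits, boxes, target_size=(480, 640))
print(len(detections), detections[0]["label"])  # 1 0
```

Only the first box survives: its best logit maps to a score of about 0.88, while the second box's best score (about 0.02) falls under the 0.1 threshold.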

post_process_image_guided_detection

( outputs threshold = 0.0 nms_threshold = 0.3 target_sizes = None ) → list[Dict]

Parameters

- outputs (Owlv2ImageGuidedObjectDetectionOutput) — Raw outputs of the model.

- threshold (float, optional, defaults to 0.0) — Minimum confidence threshold to use to filter out predicted boxes.

- nms_threshold (float, optional, defaults to 0.3) — IoU threshold for non-maximum suppression of overlapping boxes.

- target_sizes (torch.Tensor, optional) — Tensor of shape (batch_size, 2) where each entry is the (height, width) of the corresponding image in the batch. If set, predicted normalized bounding boxes are rescaled to the target sizes. If left to None, predictions will not be unnormalized.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the scores, labels and boxes for an image in the batch as predicted by the model. All labels are set to None as Owlv2ForObjectDetection.image_guided_detection performs one-shot object detection.

Converts the output of Owlv2ForObjectDetection.image_guided_detection() into the format expected by the COCO api.
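The role of nms_threshold can be illustrated with a generic greedy non-maximum suppression routine. This is a textbook sketch, not the library's exact procedure (which may, for example, zero out the scores of suppressed boxes rather than drop them). The function names are hypothetical, and boxes are assumed to be in (x_min, y_min, x_max, y_max) format.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.3):
    """Keep the highest-scoring boxes, skipping any box that overlaps a kept one too much."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [[0, 0, 10, 10], [1, 1, 11, 11], [20, 20, 30, 30]]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # [0, 2]
```

The second box overlaps the first with an IoU of 81/119 (about 0.68), which exceeds 0.3, so it is suppressed. The third box does not overlap either and is kept.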

Owlv2ImageProcessorFast

class transformers.Owlv2ImageProcessorFast

( **kwargs: typing_extensions.Unpack[transformers.processing_utils.ImagesKwargs] )

Constructs a fast Owlv2 image processor.

preprocess

( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), list['PIL.Image.Image'], list[numpy.ndarray], list['torch.Tensor']] *args **kwargs: typing_extensions.Unpack[transformers.processing_utils.ImagesKwargs] ) → BatchFeature

Parameters

- images (Union[PIL.Image.Image, numpy.ndarray, torch.Tensor, list['PIL.Image.Image'], list[numpy.ndarray], list['torch.Tensor']]) — Image to preprocess. Expects a single or batch of images with pixel values ranging from 0 to 255. If passing in images with pixel values between 0 and 1, set do_rescale=False.

- do_convert_rgb (bool, optional) — Whether to convert the image to RGB.

- do_resize (bool, optional) — Whether to resize the image.

- size (Annotated[Union[int, list[int], tuple[int, ...], dict[str, int], NoneType], None]) — Describes the maximum input dimensions to the model.

- crop_size (Annotated[Union[int, list[int], tuple[int, ...], dict[str, int], NoneType], None]) — Size of the output image after applying center_crop.

- resample (Annotated[Union[PILImageResampling, int, NoneType], None]) — Resampling filter to use if resizing the image. This can be one of the enum PILImageResampling. Only has an effect if do_resize is set to True.

- do_rescale (bool, optional) — Whether to rescale the image.

- rescale_factor (float, optional) — Rescale factor to rescale the image by if do_rescale is set to True.

- do_normalize (bool, optional) — Whether to normalize the image.

- image_mean (Union[float, list[float], tuple[float, ...], NoneType]) — Image mean to use for normalization. Only has an effect if do_normalize is set to True.

- image_std (Union[float, list[float], tuple[float, ...], NoneType]) — Image standard deviation to use for normalization. Only has an effect if do_normalize is set to True.

- do_pad (bool, optional) — Whether to pad the image. Padding is done either to the largest size in the batch or to a fixed square size per image. The exact padding strategy depends on the model.

- pad_size (Annotated[Union[int, list[int], tuple[int, ...], dict[str, int], NoneType], None]) — The size in {"height": int, "width": int} to pad the images to. Must be larger than any image size provided for preprocessing. If pad_size is not provided, images will be padded to the largest height and width in the batch. Applied only when do_pad=True.

- do_center_crop (bool, optional) — Whether to center crop the image.

- data_format (Union[str, ~image_utils.ChannelDimension, NoneType]) — Only ChannelDimension.FIRST is supported. Added for compatibility with slow processors.

- input_data_format (Union[str, ~image_utils.ChannelDimension, NoneType]) — The channel dimension format for the input image. If unset, the channel dimension format is inferred from the input image. Can be one of:
  - "channels_first" or ChannelDimension.FIRST: image in (num_channels, height, width) format.
  - "channels_last" or ChannelDimension.LAST: image in (height, width, num_channels) format.
  - "none" or ChannelDimension.NONE: image in (height, width) format.

- device (Annotated[str, None], optional) — The device to process the images on. If unset, the device is inferred from the input images.

- return_tensors (Annotated[Union[str, ~utils.generic.TensorType, NoneType], None]) — Returns stacked tensors if set to 'pt', otherwise returns a list of tensors.

- disable_grouping (bool, optional) — Whether to disable grouping of images by size to process them individually and not in batches. If None, will be set to True if the images are on CPU, and False otherwise. This choice is based on empirical observations, as detailed here: https://github.com/huggingface/transformers/pull/38157

Returns

BatchFeature

- data (dict) — Dictionary of lists/arrays/tensors returned by the call method (‘pixel_values’, etc.).

- tensor_type (Union[None, str, TensorType], optional) — You can give a tensor_type here to convert the lists of integers into PyTorch/NumPy tensors at initialization.

post_process_object_detection

( outputs: Owlv2ObjectDetectionOutput threshold: float = 0.1 target_sizes: typing.Union[transformers.utils.generic.TensorType, list[tuple], NoneType] = None ) → list[Dict]

Parameters

- outputs (Owlv2ObjectDetectionOutput) — Raw outputs of the model.

- threshold (float, optional, defaults to 0.1) — Score threshold to keep object detection predictions.

- target_sizes (torch.Tensor or list[tuple[int, int]], optional) — Tensor of shape (batch_size, 2) or list of tuples (tuple[int, int]) containing the target size (height, width) of each image in the batch. If unset, predictions will not be resized.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the following keys:

- “scores”: The confidence scores for each predicted box on the image.

- “labels”: Indexes of the classes predicted by the model on the image.

- “boxes”: Image bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.

Converts the raw output of Owlv2ForObjectDetection into final bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.

post_process_image_guided_detection

( outputs threshold = 0.0 nms_threshold = 0.3 target_sizes = None ) → list[Dict]

Parameters

- outputs (Owlv2ImageGuidedObjectDetectionOutput) — Raw outputs of the model.

- threshold (float, optional, defaults to 0.0) — Minimum confidence threshold to use to filter out predicted boxes.

- nms_threshold (float, optional, defaults to 0.3) — IoU threshold for non-maximum suppression of overlapping boxes.

- target_sizes (torch.Tensor, optional) — Tensor of shape (batch_size, 2) where each entry is the (height, width) of the corresponding image in the batch. If set, predicted normalized bounding boxes are rescaled to the target sizes. If left to None, predictions will not be unnormalized.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the scores, labels and boxes for an image in the batch as predicted by the model. All labels are set to None as Owlv2ForObjectDetection.image_guided_detection performs one-shot object detection.

Converts the output of Owlv2ForObjectDetection.image_guided_detection() into the format expected by the COCO api.

Owlv2Processor

class transformers.Owlv2Processor

( image_processor tokenizer **kwargs )

Constructs an Owlv2 processor which wraps Owlv2ImageProcessor/Owlv2ImageProcessorFast and CLIPTokenizer/CLIPTokenizerFast into a single processor that inherits both the image processor and tokenizer functionalities. See __call__() and decode() for more information.

__call__

( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), list['PIL.Image.Image'], list[numpy.ndarray], list['torch.Tensor'], NoneType] = None text: typing.Union[str, list[str], list[list[str]]] = None **kwargs: typing_extensions.Unpack[transformers.models.owlv2.processing_owlv2.Owlv2ProcessorKwargs] ) → BatchFeature

Parameters

- images (PIL.Image.Image, np.ndarray, torch.Tensor, list[PIL.Image.Image], list[np.ndarray], list[torch.Tensor]) — The image or batch of images to be prepared. Each image can be a PIL image, NumPy array or PyTorch tensor. Both channels-first and channels-last formats are supported.

- text (str, list[str], list[list[str]]) — The sequence or batch of sequences to be encoded. Each sequence can be a string or a list of strings (pretokenized string). If the sequences are provided as list of strings (pretokenized), you must set is_split_into_words=True (to lift the ambiguity with a batch of sequences).

- query_images (PIL.Image.Image, np.ndarray, torch.Tensor, list[PIL.Image.Image], list[np.ndarray], list[torch.Tensor]) — The query image to be prepared, one query image is expected per target image to be queried. Each image can be a PIL image, NumPy array or PyTorch tensor. In case of a NumPy array/PyTorch tensor, each image should be of shape (C, H, W), where C is a number of channels, H and W are image height and width.

- return_tensors (str or TensorType, optional) — If set, will return tensors of a particular framework. Acceptable values are:
  - 'pt': Return PyTorch torch.Tensor objects.
  - 'np': Return NumPy np.ndarray objects.

Returns

A BatchFeature with the following fields:

- input_ids — List of token ids to be fed to a model. Returned when text is not None.

- attention_mask — List of indices specifying which tokens should be attended to by the model (when return_attention_mask=True or if “attention_mask” is in self.model_input_names and if text is not None).

- pixel_values — Pixel values to be fed to a model. Returned when images is not None.

- query_pixel_values — Pixel values of the query images to be fed to a model. Returned when query_images is not None.

Main method to prepare one or several text(s) and image(s) for the model. This method forwards the text and kwargs arguments to CLIPTokenizerFast’s __call__() if text is not None to encode the text. To prepare the image(s), this method forwards the images and kwargs arguments to Owlv2ImageProcessor’s __call__() if images is not None. Please refer to the docstrings of the above two methods for more information.
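When text is given as a nested list (one list of queries per image), images in the same batch may have different numbers of queries, yet the encoded batch needs a uniform shape. The sketch below shows one way such a batch could be made rectangular before tokenization. The helper `pad_queries` and the single-space padding query are assumptions for illustration; the processor's actual mechanism may differ.

```python
def pad_queries(text, pad_query=" "):
    """Pad every image's query list to the length of the longest one."""
    max_num_queries = max(len(queries) for queries in text)
    return [list(queries) + [pad_query] * (max_num_queries - len(queries)) for queries in text]


# Two images: the first has two queries, the second only one
text = [["a photo of a cat", "a photo of a dog"], ["a photo of a remote"]]
padded = pad_queries(text)
print([len(queries) for queries in padded])  # [2, 2]
```

With every image carrying the same number of queries, the per-image class logits can share one tensor shape, and padded slots can simply be ignored downstream.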

post_process_grounded_object_detection

( outputs: Owlv2ObjectDetectionOutput threshold: float = 0.1 target_sizes: typing.Union[transformers.utils.generic.TensorType, list[tuple], NoneType] = None text_labels: typing.Optional[list[list[str]]] = None ) → list[Dict]

Parameters

- outputs (Owlv2ObjectDetectionOutput) — Raw outputs of the model.

- threshold (float, optional, defaults to 0.1) — Score threshold to keep object detection predictions.

- target_sizes (torch.Tensor or list[tuple[int, int]], optional) — Tensor of shape (batch_size, 2) or list of tuples (tuple[int, int]) containing the target size (height, width) of each image in the batch. If unset, predictions will not be resized.

- text_labels (list[list[str]], optional) — List of lists of text labels for each image in the batch. If unset, “text_labels” in output will be set to None.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the following keys:

- “scores”: The confidence scores for each predicted box on the image.

- “labels”: Indexes of the classes predicted by the model on the image.

- “boxes”: Image bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.

- “text_labels”: The text labels for each predicted bounding box on the image.

Converts the raw output of Owlv2ForObjectDetection into final bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.
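The extra “text_labels” key amounts to looking up each predicted label index in the list of queries for the corresponding image. A minimal sketch of that lookup follows, with a hypothetical helper name and hand-written inputs standing in for real post-processed results.

```python
def add_text_labels(results, text_labels=None):
    """Attach the query string for every predicted label index, per image."""
    output = []
    for image_index, result in enumerate(results):
        if text_labels is None:
            names = None  # mirrors the documented behaviour when text_labels is unset
        else:
            names = [text_labels[image_index][label] for label in result["labels"]]
        output.append({**result, "text_labels": names})
    return output


# Hypothetical post-processed results for one image with two detections
results = [{"scores": [0.61, 0.67], "labels": [0, 0], "boxes": [[341, 23, 642, 371], [7, 52, 327, 473]]}]
text_labels = [["a photo of a cat", "a photo of a dog"]]

labelled = add_text_labels(results, text_labels)
print(labelled[0]["text_labels"])  # ['a photo of a cat', 'a photo of a cat']
```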

post_process_image_guided_detection

( outputs: Owlv2ImageGuidedObjectDetectionOutput threshold: float = 0.0 nms_threshold: float = 0.3 target_sizes: typing.Union[transformers.utils.generic.TensorType, list[tuple], NoneType] = None ) → list[Dict]

Parameters

- outputs (Owlv2ImageGuidedObjectDetectionOutput) — Raw outputs of the model.

- threshold (float, optional, defaults to 0.0) — Minimum confidence threshold to use to filter out predicted boxes.

- nms_threshold (float, optional, defaults to 0.3) — IoU threshold for non-maximum suppression of overlapping boxes.

- target_sizes (torch.Tensor, optional) — Tensor of shape (batch_size, 2) where each entry is the (height, width) of the corresponding image in the batch. If set, predicted normalized bounding boxes are rescaled to the target sizes. If left to None, predictions will not be unnormalized.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the following keys:

- “scores”: The confidence scores for each predicted box on the image.

- “boxes”: Image bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.

- “labels”: Set to None.

Converts the output of Owlv2ForObjectDetection.image_guided_detection() into the format expected by the COCO api.
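The nms_threshold parameter is an IoU threshold for non-maximum suppression. As a rough illustration of what that means, the following is a generic greedy NMS sketch in plain Python. The function names `iou` and `greedy_nms` and the sample boxes are hypothetical, and the library's own implementation may differ in its details.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax0, ay0, ax1, ay1 = a
    bx0, by0, bx1, by1 = b
    inter_w = max(0.0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0.0, min(ay1, by1) - max(ay0, by0))
    inter = inter_w * inter_h
    union = (ax1 - ax0) * (ay1 - ay0) + (bx1 - bx0) * (by1 - by0) - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, nms_threshold=0.3):
    """Return indices of boxes kept after greedy non-maximum suppression."""
    # Visit boxes from highest to lowest score.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[j]) <= nms_threshold for j in keep):
            keep.append(i)
    return keep


# Hypothetical boxes: the first two overlap heavily, the third is separate.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = greedy_nms(boxes, scores, nms_threshold=0.3)
print(kept)  # [0, 2]
```

A lower nms_threshold suppresses more aggressively, since boxes with even modest overlap are then treated as duplicates.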

Owlv2Model

class transformers.Owlv2Model

< source >( config: Owlv2Config )

Parameters

- config (Owlv2Config) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare Owlv2 Model outputting raw hidden-states without any specific head on top.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads, etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

< source >( input_ids: typing.Optional[torch.LongTensor] = None pixel_values: typing.Optional[torch.FloatTensor] = None attention_mask: typing.Optional[torch.Tensor] = None return_loss: typing.Optional[bool] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None interpolate_pos_encoding: bool = False return_base_image_embeds: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.models.owlv2.modeling_owlv2.Owlv2Output or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, image_size, image_size), optional) — The tensors corresponding to the input images. Pixel values can be obtained using Owlv2ImageProcessor. See Owlv2ImageProcessor.__call__() for details (Owlv2Processor uses Owlv2ImageProcessor for processing images).

- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,

  - 0 for tokens that are masked.

- return_loss (bool, optional) — Whether or not to return the contrastive loss.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- interpolate_pos_encoding (bool, defaults to False) — Whether to interpolate the pre-trained position encodings.

- return_base_image_embeds (bool, optional) — Whether or not to return the base image embeddings.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.owlv2.modeling_owlv2.Owlv2Output or tuple(torch.FloatTensor)

A transformers.models.owlv2.modeling_owlv2.Owlv2Output or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (Owlv2Config) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when return_loss is True) — Contrastive loss for image-text similarity.

- logits_per_image (torch.FloatTensor of shape (image_batch_size, text_batch_size)) — The scaled dot product scores between image_embeds and text_embeds. This represents the image-text similarity scores.

- logits_per_text (torch.FloatTensor of shape (text_batch_size, image_batch_size)) — The scaled dot product scores between text_embeds and image_embeds. This represents the text-image similarity scores.

- text_embeds (torch.FloatTensor of shape (batch_size * num_max_text_queries, output_dim)) — The text embeddings obtained by applying the projection layer to the pooled output of Owlv2TextModel.

- image_embeds (torch.FloatTensor of shape (batch_size, output_dim)) — The image embeddings obtained by applying the projection layer to the pooled output of Owlv2VisionModel.

- text_model_output (BaseModelOutputWithPooling, defaults to None) — The output of the Owlv2TextModel.

- vision_model_output (BaseModelOutputWithPooling, defaults to None) — The output of the Owlv2VisionModel.
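The relationship between image_embeds, text_embeds and logits_per_image can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the model's code: the embeddings are made-up two-dimensional vectors, and the L2 normalization and the `logit_scale` value are assumptions about what "scaled dot product" refers to. The helper names are hypothetical.

```python
import math


def l2_normalize(vector):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def similarity_logits(image_embeds, text_embeds, logit_scale=1.0):
    """Scaled dot products, one row per image and one column per text."""
    images = [l2_normalize(v) for v in image_embeds]
    texts = [l2_normalize(v) for v in text_embeds]
    return [
        [logit_scale * sum(a * b for a, b in zip(img, txt)) for txt in texts]
        for img in images
    ]


def softmax(row):
    """Numerically stable softmax over one row of logits."""
    top = max(row)
    exps = [math.exp(x - top) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


# One hypothetical image embedding and two hypothetical text embeddings.
image_embeds = [[1.0, 0.0]]
text_embeds = [[2.0, 0.0], [0.0, 3.0]]

logits_per_image = similarity_logits(image_embeds, text_embeds, logit_scale=10.0)
probs = [softmax(row) for row in logits_per_image]
print(logits_per_image)  # [[10.0, 0.0]]
```

With these inputs, logits_per_text would simply be the transpose of logits_per_image, which matches the shapes listed above.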

The Owlv2Model forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> from PIL import Image

>>> import requests

>>> from transformers import AutoProcessor, Owlv2Model

>>> model = Owlv2Model.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> processor = AutoProcessor.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> inputs = processor(text=[["a photo of a cat", "a photo of a dog"]], images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> logits_per_image = outputs.logits_per_image # this is the image-text similarity score

>>> probs = logits_per_image.softmax(dim=1) # we can take the softmax to get the label probabilities

get_text_features

< source >( input_ids: Tensor attention_mask: typing.Optional[torch.Tensor] = None ) → text_features (torch.FloatTensor of shape (batch_size, output_dim))

Parameters

- input_ids (torch.LongTensor of shape (batch_size * num_max_text_queries, sequence_length)) — Indices of input sequence tokens in the vocabulary. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details. What are input IDs?

- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,

  - 0 for tokens that are masked.
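As an illustration of the attention_mask convention described above, the sketch below pads token ID sequences of different lengths and builds the matching mask. The function `pad_and_mask`, the token IDs and the `pad_token_id` value are all hypothetical; in practice the processor produces input_ids and attention_mask for you.

```python
def pad_and_mask(sequences, pad_token_id=0):
    """Right-pad sequences to a common length and build the attention mask."""
    max_len = max(len(seq) for seq in sequences)
    input_ids, attention_mask = [], []
    for seq in sequences:
        n_pad = max_len - len(seq)
        input_ids.append(list(seq) + [pad_token_id] * n_pad)
        # 1 marks a real token, 0 marks a padding position.
        attention_mask.append([1] * len(seq) + [0] * n_pad)
    return input_ids, attention_mask


# Two hypothetical tokenized text queries of different lengths.
ids, mask = pad_and_mask([[5, 6, 7], [8]])
print(ids)   # [[5, 6, 7], [8, 0, 0]]
print(mask)  # [[1, 1, 1], [1, 0, 0]]
```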

Returns

text_features (torch.FloatTensor of shape (batch_size, output_dim))

The text embeddings obtained by applying the projection layer to the pooled output of Owlv2TextModel.

Examples:

>>> import torch

>>> from transformers import AutoProcessor, Owlv2Model

>>> model = Owlv2Model.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> processor = AutoProcessor.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> inputs = processor(

...     text=[["a photo of a cat", "a photo of a dog"], ["photo of an astronaut"]], return_tensors="pt"

... )

>>> with torch.inference_mode():

...     text_features = model.get_text_features(**inputs)

get_image_features

< source >( pixel_values: Tensor interpolate_pos_encoding: bool = False ) → image_features (torch.FloatTensor of shape (batch_size, output_dim))

Parameters

- pixel_values (torch.Tensor of shape (batch_size, num_channels, image_size, image_size)) — The tensors corresponding to the input images. Pixel values can be obtained using Owlv2ImageProcessor. See Owlv2ImageProcessor.__call__() for details (Owlv2Processor uses Owlv2ImageProcessor for processing images).

- interpolate_pos_encoding (bool, defaults to False) — Whether to interpolate the pre-trained position encodings.

Returns

image_features (torch.FloatTensor of shape (batch_size, output_dim))

The image embeddings obtained by applying the projection layer to the pooled output of Owlv2VisionModel.

Examples:

>>> import torch

>>> from transformers.image_utils import load_image

>>> from transformers import AutoProcessor, Owlv2Model

>>> model = Owlv2Model.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> processor = AutoProcessor.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = load_image(url)

>>> inputs = processor(images=image, return_tensors="pt")

>>> with torch.inference_mode():

...     image_features = model.get_image_features(**inputs)

Owlv2TextModel

forward

< source >( input_ids: Tensor attention_mask: typing.Optional[torch.Tensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size * num_max_text_queries, sequence_length)) — Indices of input sequence tokens in the vocabulary. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details. What are input IDs?

- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,

  - 0 for tokens that are masked.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

A transformers.modeling_outputs.BaseModelOutputWithPooling or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (Owlv2Config) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

- pooler_output (torch.FloatTensor of shape (batch_size, hidden_size)) — Last layer hidden-state of the first token of the sequence (classification token) after further processing through the layers used for the auxiliary pretraining task. E.g. for BERT-family of models, this returns the classification token after processing through a linear layer and a tanh activation function. The linear layer weights are trained from the next sentence prediction (classification) objective during pretraining.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The Owlv2TextModel forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> from transformers import AutoProcessor, Owlv2TextModel

>>> model = Owlv2TextModel.from_pretrained("google/owlv2-base-patch16")

>>> processor = AutoProcessor.from_pretrained("google/owlv2-base-patch16")

>>> inputs = processor(

...     text=[["a photo of a cat", "a photo of a dog"], ["photo of an astronaut"]], return_tensors="pt"

... )

>>> outputs = model(**inputs)

>>> last_hidden_state = outputs.last_hidden_state

>>> pooled_output = outputs.pooler_output # pooled (EOS token) states

Owlv2VisionModel

forward

< source >( pixel_values: typing.Optional[torch.FloatTensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None interpolate_pos_encoding: bool = False return_dict: typing.Optional[bool] = None ) → transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, image_size, image_size), optional) — The tensors corresponding to the input images. Pixel values can be obtained using Owlv2ImageProcessor. See Owlv2ImageProcessor.__call__() for details (Owlv2Processor uses Owlv2ImageProcessor for processing images).

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- interpolate_pos_encoding (bool, defaults to False) — Whether to interpolate the pre-trained position encodings.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

A transformers.modeling_outputs.BaseModelOutputWithPooling or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (Owlv2Config) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

- pooler_output (torch.FloatTensor of shape (batch_size, hidden_size)) — Last layer hidden-state of the first token of the sequence (classification token) after further processing through the layers used for the auxiliary pretraining task. E.g. for BERT-family of models, this returns the classification token after processing through a linear layer and a tanh activation function. The linear layer weights are trained from the next sentence prediction (classification) objective during pretraining.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The Owlv2VisionModel forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> from PIL import Image

>>> import requests

>>> from transformers import AutoProcessor, Owlv2VisionModel

>>> model = Owlv2VisionModel.from_pretrained("google/owlv2-base-patch16")

>>> processor = AutoProcessor.from_pretrained("google/owlv2-base-patch16")

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> inputs = processor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> last_hidden_state = outputs.last_hidden_state

>>> pooled_output = outputs.pooler_output # pooled CLS states

Owlv2ForObjectDetection

forward

< source >( input_ids: Tensor pixel_values: FloatTensor attention_mask: typing.Optional[torch.Tensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None interpolate_pos_encoding: bool = False return_dict: typing.Optional[bool] = None ) → transformers.models.owlv2.modeling_owlv2.Owlv2ObjectDetectionOutput or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size * num_max_text_queries, sequence_length), optional) — Indices of input sequence tokens in the vocabulary. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details. What are input IDs?

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, image_size, image_size)) — The tensors corresponding to the input images. Pixel values can be obtained using Owlv2ImageProcessor. See Owlv2ImageProcessor.__call__() for details (Owlv2Processor uses Owlv2ImageProcessor for processing images).

- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,

  - 0 for tokens that are masked.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the last hidden state. See text_model_last_hidden_state and vision_model_last_hidden_state under returned tensors for more detail.

- interpolate_pos_encoding (bool, defaults to False) — Whether to interpolate the pre-trained position encodings.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.owlv2.modeling_owlv2.Owlv2ObjectDetectionOutput or tuple(torch.FloatTensor)

A transformers.models.owlv2.modeling_owlv2.Owlv2ObjectDetectionOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (Owlv2Config) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels are provided) — Total loss as a linear combination of a negative log-likelihood (cross-entropy) for class prediction and a bounding box loss. The latter is defined as a linear combination of the L1 loss and the generalized scale-invariant IoU loss.

- loss_dict (Dict, optional) — A dictionary containing the individual losses. Useful for logging.

- logits (torch.FloatTensor of shape (batch_size, num_patches, num_queries)) — Classification logits (including no-object) for all queries.

- objectness_logits (torch.FloatTensor of shape (batch_size, num_patches, 1)) — The objectness logits of all image patches. OWLv2 represents images as a set of image patches where the total number of patches is (image_size / patch_size)**2.

- pred_boxes (torch.FloatTensor of shape (batch_size, num_patches, 4)) — Normalized boxes coordinates for all queries, represented as (center_x, center_y, width, height). These values are normalized in [0, 1], relative to the size of each individual image in the batch (disregarding possible padding). You can use post_process_object_detection() to retrieve the unnormalized bounding boxes.

- text_embeds (torch.FloatTensor of shape (batch_size, num_max_text_queries, output_dim)) — The text embeddings obtained by applying the projection layer to the pooled output of Owlv2TextModel.

- image_embeds (torch.FloatTensor of shape (batch_size, patch_size, patch_size, output_dim)) — Pooled output of Owlv2VisionModel. OWLv2 represents images as a set of image patches and computes image embeddings for each patch.

- class_embeds (torch.FloatTensor of shape (batch_size, num_patches, hidden_size)) — Class embeddings of all image patches. OWLv2 represents images as a set of image patches where the total number of patches is (image_size / patch_size)**2.

- text_model_output (BaseModelOutputWithPooling, defaults to None) — The output of the Owlv2TextModel.

- vision_model_output (BaseModelOutputWithPooling, defaults to None) — The output of the Owlv2VisionModel.
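The num_patches dimension in the shapes above follows from the formula (image_size / patch_size)**2. A small worked example, assuming an image_size of 960 and a patch_size of 16 purely for illustration (the real values come from the checkpoint's configuration), and using a hypothetical helper `num_patches`:

```python
def num_patches(image_size, patch_size):
    """Number of patches for a square image split into square patches."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be a multiple of patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side * patches_per_side


# Assumed illustrative values; check the model configuration for real ones.
total = num_patches(image_size=960, patch_size=16)
print(total)  # 3600
```

Under these assumptions, logits would have shape (batch_size, 3600, num_queries) and pred_boxes would have shape (batch_size, 3600, 4), that is, one candidate box per image patch.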

The Owlv2ForObjectDetection forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> import requests

>>> from PIL import Image

>>> import torch

>>> from transformers import Owlv2Processor, Owlv2ForObjectDetection

>>> processor = Owlv2Processor.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> model = Owlv2ForObjectDetection.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> text_labels = [["a photo of a cat", "a photo of a dog"]]

>>> inputs = processor(text=text_labels, images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> # Target image sizes (height, width) to rescale box predictions [batch_size, 2]

>>> target_sizes = torch.tensor([(image.height, image.width)])

>>> # Convert outputs (bounding boxes and class logits) to Pascal VOC format (xmin, ymin, xmax, ymax)

>>> results = processor.post_process_grounded_object_detection(

... outputs=outputs, target_sizes=target_sizes, threshold=0.1, text_labels=text_labels

... )

>>> # Retrieve predictions for the first image for the corresponding text queries

>>> result = results[0]

>>> boxes, scores, text_labels = result["boxes"], result["scores"], result["text_labels"]

>>> for box, score, text_label in zip(boxes, scores, text_labels):

...     box = [round(i, 2) for i in box.tolist()]

...     print(f"Detected {text_label} with confidence {round(score.item(), 3)} at location {box}")

Detected a photo of a cat with confidence 0.614 at location [341.67, 23.39, 642.32, 371.35]

Detected a photo of a cat with confidence 0.665 at location [6.75, 51.96, 326.62, 473.13]

image_guided_detection

< source >( pixel_values: FloatTensor query_pixel_values: typing.Optional[torch.FloatTensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None interpolate_pos_encoding: bool = False return_dict: typing.Optional[bool] = None ) → transformers.models.owlv2.modeling_owlv2.Owlv2ImageGuidedObjectDetectionOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, image_size, image_size)) — The tensors corresponding to the input images. Pixel values can be obtained using Owlv2ImageProcessor. See Owlv2ImageProcessor.__call__() for details (Owlv2Processor uses Owlv2ImageProcessor for processing images).

- query_pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values of query image(s) to be detected. Pass in one query image per target image.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- interpolate_pos_encoding (bool, defaults to False) — Whether to interpolate the pre-trained position encodings.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.owlv2.modeling_owlv2.Owlv2ImageGuidedObjectDetectionOutput or tuple(torch.FloatTensor)

A transformers.models.owlv2.modeling_owlv2.Owlv2ImageGuidedObjectDetectionOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (Owlv2Config) and inputs.

- logits (torch.FloatTensor of shape (batch_size, num_patches, num_queries)) — Classification logits (including no-object) for all queries.

- image_embeds (torch.FloatTensor of shape (batch_size, patch_size, patch_size, output_dim)) — Pooled output of Owlv2VisionModel. OWLv2 represents images as a set of image patches and computes image embeddings for each patch.

- query_image_embeds (torch.FloatTensor of shape (batch_size, patch_size, patch_size, output_dim)) — Pooled output of Owlv2VisionModel. OWLv2 represents images as a set of image patches and computes image embeddings for each patch.

- target_pred_boxes (torch.FloatTensor of shape (batch_size, num_patches, 4)) — Normalized boxes coordinates for all queries, represented as (center_x, center_y, width, height). These values are normalized in [0, 1], relative to the size of each individual target image in the batch (disregarding possible padding). You can use post_process_object_detection() to retrieve the unnormalized bounding boxes.

- query_pred_boxes (torch.FloatTensor of shape (batch_size, num_patches, 4)) — Normalized boxes coordinates for all queries, represented as (center_x, center_y, width, height). These values are normalized in [0, 1], relative to the size of each individual query image in the batch (disregarding possible padding). You can use post_process_object_detection() to retrieve the unnormalized bounding boxes.

- class_embeds (torch.FloatTensor of shape (batch_size, num_patches, hidden_size)) — Class embeddings of all image patches. OWLv2 represents images as a set of image patches where the total number of patches is (image_size / patch_size)**2.

- text_model_output (BaseModelOutputWithPooling, defaults to None) — The output of the Owlv2TextModel.

- vision_model_output (BaseModelOutputWithPooling, defaults to None) — The output of the Owlv2VisionModel.

Examples:

>>> import requests

>>> from PIL import Image

>>> import torch

>>> from transformers import AutoProcessor, Owlv2ForObjectDetection

>>> processor = AutoProcessor.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> model = Owlv2ForObjectDetection.from_pretrained("google/owlv2-base-patch16-ensemble")

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> query_url = "http://images.cocodataset.org/val2017/000000001675.jpg"

>>> query_image = Image.open(requests.get(query_url, stream=True).raw)

>>> inputs = processor(images=image, query_images=query_image, return_tensors="pt")

>>> # forward pass

>>> with torch.no_grad():

...     outputs = model.image_guided_detection(**inputs)

>>> target_sizes = torch.Tensor([image.size[::-1]])

>>> # Convert outputs (bounding boxes and class logits) to Pascal VOC format (xmin, ymin, xmax, ymax)

>>> results = processor.post_process_image_guided_detection(

... outputs=outputs, threshold=0.9, nms_threshold=0.3, target_sizes=target_sizes

... )

>>> i = 0 # Retrieve predictions for the first image

>>> boxes, scores = results[i]["boxes"], results[i]["scores"]

>>> for box, score in zip(boxes, scores):

...     box = [round(i, 2) for i in box.tolist()]

...     print(f"Detected similar object with confidence {round(score.item(), 3)} at location {box}")

Detected similar object with confidence 0.938 at location [327.31, 54.94, 547.39, 268.06]

Detected similar object with confidence 0.959 at location [5.78, 360.65, 619.12, 366.39]

Detected similar object with confidence 0.902 at location [2.85, 360.01, 627.63, 380.8]

Detected similar object with confidence 0.985 at location [176.98, -29.45, 672.69, 182.83]

Detected similar object with confidence 1.0 at location [6.53, 14.35, 624.87, 470.82]

Detected similar object with confidence 0.998 at location [579.98, 29.14, 615.49, 489.05]

Detected similar object with confidence 0.985 at location [206.15, 10.53, 247.74, 466.01]

Detected similar object with confidence 0.947 at location [18.62, 429.72, 646.5, 457.72]

Detected similar object with confidence 0.996 at location [523.88, 20.69, 586.84, 483.18]

Detected similar object with confidence 0.998 at location [3.39, 360.59, 617.29, 499.21]

Detected similar object with confidence 0.969 at location [4.47, 449.05, 614.5, 474.76]

Detected similar object with confidence 0.966 at location [31.44, 463.65, 654.66, 471.07]

Detected similar object with confidence 0.924 at location [30.93, 468.07, 635.35, 475.39]
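Some of the boxes printed above extend slightly beyond the image, for example with a negative y coordinate. If downstream code needs boxes that lie inside the image, one option is to clip them. The following is a minimal sketch in plain Python; the helper `clip_box` is hypothetical, and the 640 x 480 image size is assumed here for illustration.

```python
def clip_box(box, image_size):
    """Clamp an (x_min, y_min, x_max, y_max) box to the image bounds.

    image_size is (width, height), the order returned by PIL's Image.size.
    """
    width, height = image_size
    x_min, y_min, x_max, y_max = box
    return (
        min(max(x_min, 0.0), float(width)),
        min(max(y_min, 0.0), float(height)),
        min(max(x_max, 0.0), float(width)),
        min(max(y_max, 0.0), float(height)),
    )


# A box taken from the printed output, with an assumed image size.
clipped = clip_box((176.98, -29.45, 672.69, 182.83), image_size=(640, 480))
print(clipped)  # (176.98, 0.0, 640.0, 182.83)
```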