Textual Inversion

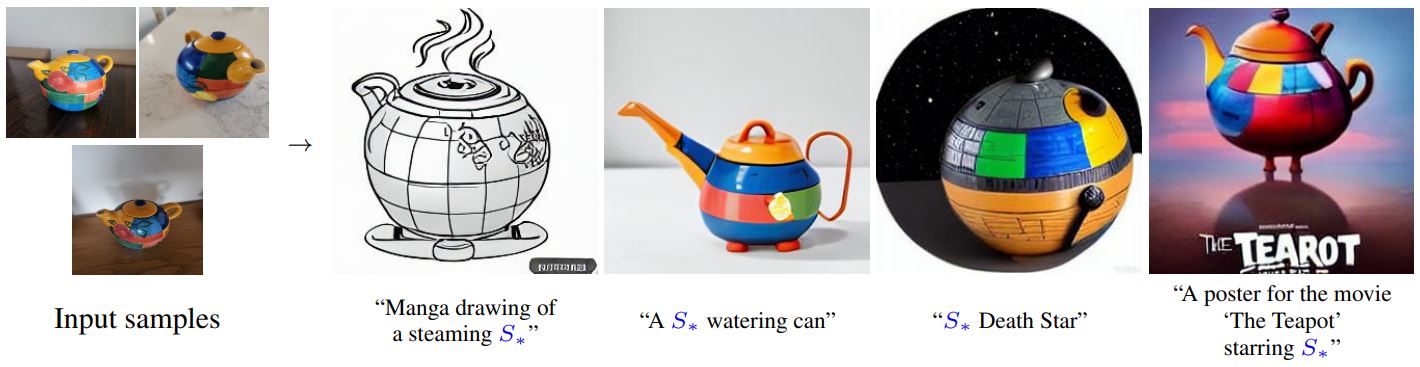

Textual Inversion is a technique for capturing novel concepts from a small number of example images in a way that can later be used to control text-to-image pipelines. It does so by learning new ‘words’ in the embedding space of the pipeline’s text encoder. These special words can then be used within text prompts to achieve very fine-grained control of the resulting images.

This technique was introduced in An Image is Worth One Word: Personalizing Text-to-Image Generation using Textual Inversion. The paper demonstrated the concept using a latent diffusion model but the idea has since been applied to other variants such as Stable Diffusion.

How It Works

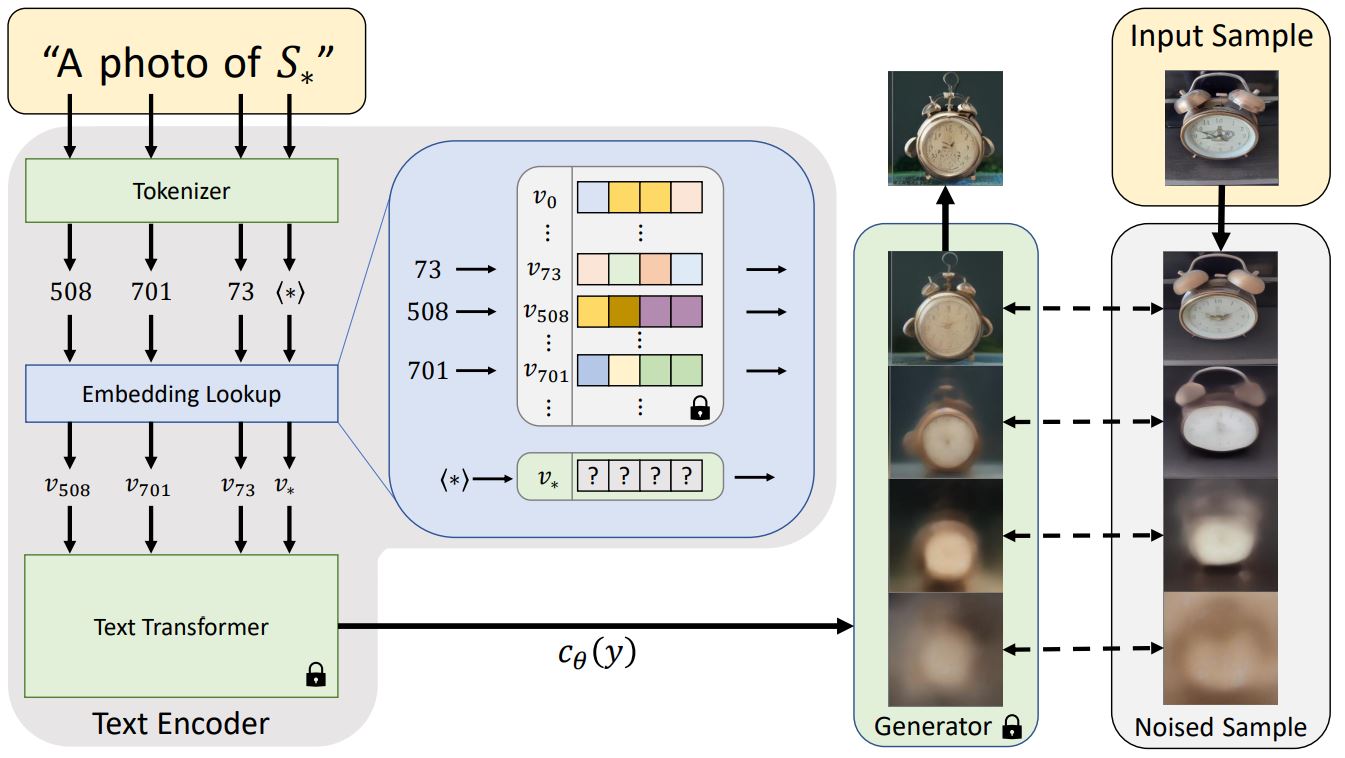

Before a text prompt can be used in a diffusion model, it must first be processed into a numerical representation. This typically involves tokenizing the text, converting each token to an embedding and then feeding those embeddings through a model (typically a transformer) whose output will be used as the conditioning for the diffusion model.
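The three stages described above (tokenize, embed, encode) can be sketched with a toy model. This is only an illustration under stated assumptions: the vocabulary, the whitespace `tokenize` helper, and the tiny PyTorch encoder are hypothetical stand-ins, not the tokenizer or text encoder that a real pipeline ships with.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical toy vocabulary; real pipelines use a learned subword tokenizer.
vocab = {"<pad>": 0, "a": 1, "photo": 2, "of": 3, "cat": 4, "toy": 5}


def tokenize(prompt: str) -> torch.Tensor:
    """Map whitespace-separated words to token ids (toy stand-in for a tokenizer)."""
    ids = [vocab[word] for word in prompt.lower().split()]
    return torch.tensor([ids])  # shape: (batch=1, sequence_length)


embed_dim = 16
token_embedding = nn.Embedding(len(vocab), embed_dim)  # token id -> vector
encoder = nn.TransformerEncoder(
    nn.TransformerEncoderLayer(
        d_model=embed_dim, nhead=4, dim_feedforward=32, batch_first=True
    ),
    num_layers=1,
)
encoder.eval()  # disable dropout so the output is deterministic

token_ids = tokenize("a photo of a toy")
with torch.no_grad():
    embeddings = token_embedding(token_ids)  # one vector per token
    conditioning = encoder(embeddings)       # contextualized vectors

print(token_ids.tolist(), tuple(conditioning.shape))
```

The `conditioning` tensor has one vector per input token; in a real pipeline a tensor of this kind is what the diffusion model receives as its text conditioning.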

Textual inversion learns a new token embedding (v* in the diagram above). A prompt that includes a token mapped to this new embedding is used, together with a noised version of one or more training images, as input to the generator model, which attempts to predict the denoised version of the image. The embedding is optimized based on how well the model performs this task: an embedding that better captures the object or style shown in the training images gives the diffusion model more useful information and thus results in a lower denoising loss. After many steps (typically several thousand) with a variety of prompt and image variants, the learned embedding should capture the essence of the new concept being taught.
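The core idea, optimizing a single new embedding row while everything else stays frozen, can be sketched in a few lines of PyTorch. This is a toy illustration, not the training script: the vocabulary, the linear `denoiser`, and the fixed `target` vector are hypothetical stand-ins for the text encoder, the diffusion model, and the denoising target. Masking the gradient is one possible way to keep the other rows fixed.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Hypothetical toy vocabulary and embedding table (stand-in for the text encoder's).
vocab = {"a": 0, "photo": 1, "of": 2, "toy": 3}
embed_dim = 8
embedding = nn.Embedding(len(vocab), embed_dim)

# Add the placeholder token, initialized from the "toy" embedding.
placeholder_id = len(vocab)
vocab["<cat-toy>"] = placeholder_id
init_row = embedding.weight.data[vocab["toy"]].clone().unsqueeze(0)
embedding = nn.Embedding.from_pretrained(
    torch.cat([embedding.weight.data, init_row]), freeze=False
)

# Frozen stand-in for "text encoder + diffusion model".
denoiser = nn.Linear(embed_dim, embed_dim)
for param in denoiser.parameters():
    param.requires_grad_(False)

target = torch.randn(embed_dim)  # stand-in for the denoising target
prompt_ids = torch.tensor([vocab[w] for w in "a photo of <cat-toy>".split()])

# Mask that keeps only the gradient of the placeholder row.
keep = torch.zeros(len(vocab), 1)
keep[placeholder_id] = 1.0

original = embedding.weight.detach().clone()
optimizer = torch.optim.SGD(embedding.parameters(), lr=1.0)

losses = []
for step in range(200):
    conditioning = embedding(prompt_ids).mean(dim=0)
    loss = F.mse_loss(denoiser(conditioning), target)
    optimizer.zero_grad()
    loss.backward()
    embedding.weight.grad *= keep  # zero the gradient of every other token
    optimizer.step()
    losses.append(loss.item())

print(f"loss: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

Only the `<cat-toy>` row changes during the loop; the embeddings of the existing tokens and the stand-in model are left untouched, which mirrors why a learned concept can be shared as a single small vector.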

Usage

To train your own textual inversions, see the example script here.

There is also a notebook for training.

In addition to using concepts you have trained yourself, there is a community-created collection of trained textual inversions in the new Stable Diffusion public concepts library, which you can also use from the inference notebook. Over time this will hopefully grow into a useful resource as more examples are added.

Example: Running locally

The textual_inversion.py script here shows how to implement the training procedure and adapt it for Stable Diffusion.

Installing the dependencies

Before running the scripts, make sure to install the library’s training dependencies:

pip install diffusers[training] accelerate transformers

And initialize an 🤗Accelerate environment with:

accelerate config

Cat toy example

You need to accept the model license before downloading or using the weights. In this example we’ll use model version v1-4, so you’ll need to visit its card, read the license and tick the checkbox if you agree.

You have to be a registered user on the 🤗 Hugging Face Hub, and you’ll also need to use an access token for the code to work. For more information on access tokens, please refer to this section of the documentation.

Run the following command to authenticate your token:

huggingface-cli login

If you have already cloned the repo, then you won’t need to go through these steps. You can simply remove the --use_auth_token argument from the following command.

Now let’s get our dataset. Download 3-4 images from here and save them in a directory. This will be our training data.

Then launch the training with:

export MODEL_NAME="CompVis/stable-diffusion-v1-4"
export DATA_DIR="path-to-dir-containing-images"

accelerate launch textual_inversion.py \
  --pretrained_model_name_or_path=$MODEL_NAME --use_auth_token \
  --train_data_dir=$DATA_DIR \
  --learnable_property="object" \
  --placeholder_token="<cat-toy>" --initializer_token="toy" \
  --resolution=512 \
  --train_batch_size=1 \
  --gradient_accumulation_steps=4 \
  --max_train_steps=3000 \
  --learning_rate=5.0e-04 --scale_lr \
  --lr_scheduler="constant" \
  --lr_warmup_steps=0 \
  --output_dir="textual_inversion_cat"

A full training run takes ~1 hour on one V100 GPU.
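To see how the batch-related flags in the command above interact, the arithmetic can be worked through in a few lines. This sketch assumes that `--scale_lr` multiplies the base learning rate by the batch size, the number of gradient accumulation steps, and the number of processes; that is an assumption about the script's behavior, so check the script itself before relying on it. The helper names are hypothetical.

```python
def effective_batch_size(train_batch_size: int,
                         gradient_accumulation_steps: int,
                         num_processes: int = 1) -> int:
    """Number of samples that contribute to one optimizer update."""
    return train_batch_size * gradient_accumulation_steps * num_processes


def scaled_learning_rate(base_lr: float,
                         train_batch_size: int,
                         gradient_accumulation_steps: int,
                         num_processes: int = 1) -> float:
    """Learning rate after scaling (assumed behavior of --scale_lr)."""
    return base_lr * effective_batch_size(
        train_batch_size, gradient_accumulation_steps, num_processes
    )


# Values from the command above, on a single GPU.
batch = effective_batch_size(train_batch_size=1, gradient_accumulation_steps=4)
lr = scaled_learning_rate(5.0e-04, train_batch_size=1, gradient_accumulation_steps=4)

print(batch, lr)
```

Under this assumption, the single-GPU command trains with an effective batch of 4 images per update and a learning rate of 2e-3 rather than the 5e-4 written on the command line.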

Inference

Once you have trained a model using the above command, you can run inference with the StableDiffusionPipeline. Make sure to include the placeholder_token in your prompt.

import torch
from torch import autocast
from diffusers import StableDiffusionPipeline

# Directory passed as --output_dir during training
model_id = "path-to-your-trained-model"
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16).to("cuda")

# The prompt must contain the placeholder token used during training
prompt = "A <cat-toy> backpack"

with autocast("cuda"):
    image = pipe(prompt, num_inference_steps=50, guidance_scale=7.5).images[0]

image.save("cat-backpack.png")