| url | repository_url | labels_url | comments_url | events_url | html_url | id | node_id | number | title | user | labels | state | locked | assignee | assignees | milestone | comments | created_at | updated_at | closed_at | author_association | active_lock_reason | body | reactions | timeline_url | performed_via_github_app | draft | pull_request | is_pull_request |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/3452 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3452/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3452/comments | https://api.github.com/repos/huggingface/datasets/issues/3452/events | https://github.com/huggingface/datasets/issues/3452 | 1,083,803,178 | I_kwDODunzps5AmYYq | 3,452 | why the stratify option is omitted from test_train_split function? | {

"login": "j-sieger",

"id": 9985334,

"node_id": "MDQ6VXNlcjk5ODUzMzQ=",

"avatar_url": "https://avatars.githubusercontent.com/u/9985334?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/j-sieger",

"html_url": "https://github.com/j-sieger",

"followers_url": "https://api.github.com/users/j-sieger/followers",

"following_url": "https://api.github.com/users/j-sieger/following{/other_user}",

"gists_url": "https://api.github.com/users/j-sieger/gists{/gist_id}",

"starred_url": "https://api.github.com/users/j-sieger/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/j-sieger/subscriptions",

"organizations_url": "https://api.github.com/users/j-sieger/orgs",

"repos_url": "https://api.github.com/users/j-sieger/repos",

"events_url": "https://api.github.com/users/j-sieger/events{/privacy}",

"received_events_url": "https://api.github.com/users/j-sieger/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | open | false | null | [] | null | [] | 1,639,823,867,000 | 1,639,823,867,000 | null | NONE | null | Why is the stratify option omitted from the `train_test_split` function?

Is there any other way to implement stratification when splitting the dataset? It is an important point to consider when splitting a dataset. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3452/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3452/timeline | null | null | null | false |
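As the question notes, `train_test_split` here offers no stratification option. One possible workaround, sketched below under the assumption that the label column fits in memory as a Python list: compute stratified index lists yourself, then pass each one to `Dataset.select`. `stratified_split_indices` is a hypothetical helper, not part of `datasets`.

```python
import random
from collections import defaultdict


def stratified_split_indices(labels, test_size=0.2, seed=42):
    """Return (train_indices, test_indices) so that each label keeps roughly
    its original proportion in both parts.

    Hypothetical helper for illustration; not part of the `datasets` API.
    """
    if not 0.0 < test_size < 1.0:
        raise ValueError("test_size must be a float in (0, 1)")
    rng = random.Random(seed)

    # Group row indices by their label value.
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)

    train, test = [], []
    for label in sorted(by_label, key=repr):  # deterministic label order
        idxs = by_label[label][:]
        rng.shuffle(idxs)
        # Per-label test count; Python's round() rounds halves to even.
        n_test = round(len(idxs) * test_size)
        test.extend(idxs[:n_test])
        train.extend(idxs[n_test:])
    return sorted(train), sorted(test)
```

For example, with `labels = dataset["label"]` (assuming a column named `label`), `dataset.select(train_idx)` and `dataset.select(test_idx)` would give the two splits. Rounding happens per label, so the overall test fraction can differ slightly from `test_size` when classes are small.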

https://api.github.com/repos/huggingface/datasets/issues/3450 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3450/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3450/comments | https://api.github.com/repos/huggingface/datasets/issues/3450/events | https://github.com/huggingface/datasets/issues/3450 | 1,083,450,158 | I_kwDODunzps5AlCMu | 3,450 | Unexpected behavior doing Split + Filter | {

"login": "jbrachat",

"id": 26432605,

"node_id": "MDQ6VXNlcjI2NDMyNjA1",

"avatar_url": "https://avatars.githubusercontent.com/u/26432605?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jbrachat",

"html_url": "https://github.com/jbrachat",

"followers_url": "https://api.github.com/users/jbrachat/followers",

"following_url": "https://api.github.com/users/jbrachat/following{/other_user}",

"gists_url": "https://api.github.com/users/jbrachat/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jbrachat/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jbrachat/subscriptions",

"organizations_url": "https://api.github.com/users/jbrachat/orgs",

"repos_url": "https://api.github.com/users/jbrachat/repos",

"events_url": "https://api.github.com/users/jbrachat/events{/privacy}",

"received_events_url": "https://api.github.com/users/jbrachat/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [] | null | [] | 1,639,760,439,000 | 1,639,761,479,000 | null | NONE | null | ## Describe the bug

I observed unexpected behavior when applying `train_test_split` followed by `filter` on a dataset: elements of the training split end up in the test split after applying `filter`.

## Steps to reproduce the bug

```python
from datasets import Dataset
import pandas as pd

dic = {'x': [1, 2, 3, 4, 5, 6, 7, 8, 9], 'y': ['q', 'w', 'e', 'r', 't', 'y', 'u', 'i', 'o']}
df = pd.DataFrame.from_dict(dic)
dataset = Dataset.from_pandas(df)

split_dataset = dataset.train_test_split(test_size=0.5, shuffle=False, seed=42)
train_dataset = split_dataset["train"]
eval_dataset = split_dataset["test"]

eval_dataset_2 = eval_dataset.filter(lambda example: example['x'] % 2 == 0)
print(eval_dataset['x'])
print(eval_dataset_2['x'])
```

One observes that elements in `eval_dataset_2` actually come from the training dataset.

## Expected results

The filtered eval dataset should only contain elements from the original eval dataset.
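To make the expected behavior concrete: if a split is modeled as a view that holds an indices mapping over shared rows, `filter` must evaluate the predicate through that mapping, so its result is always a subset of the view it was called on. The sketch below is a toy model (hypothetical `IndexedView` and `train_test_split_no_shuffle`, not the `datasets` internals) and does not claim to identify the cause of the bug.

```python
import math


class IndexedView:
    """Toy table view: `rows` is shared storage and `indices` maps view
    positions to storage positions. Hypothetical, for illustration only."""

    def __init__(self, rows, indices=None):
        self.rows = rows
        self.indices = list(range(len(rows))) if indices is None else list(indices)

    def __len__(self):
        return len(self.indices)

    def column(self, name):
        return [self.rows[i][name] for i in self.indices]

    def filter(self, predicate):
        # Evaluate the predicate on this view's rows only and keep their
        # storage indices, so the result is a subset of this view.
        kept = [i for i in self.indices if predicate(self.rows[i])]
        return IndexedView(self.rows, kept)


def train_test_split_no_shuffle(view, test_size):
    # Deterministic split: the first rows go to train, the rest to test.
    n_test = math.ceil(len(view) * test_size)
    n_train = len(view) - n_test
    return (IndexedView(view.rows, view.indices[:n_train]),
            IndexedView(view.rows, view.indices[n_train:]))
```

In this toy model, the 9-row example splits into a 5-row test view with `x = [5, 6, 7, 8, 9]`, and filtering it for even `x` yields `[6, 8]`, none of which belong to the train view.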

## Actual results

Specify the actual results or traceback.

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.12.1

- Platform: Windows 10

- Python version: 3.7

- PyArrow version: 5.0.0

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3450/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3450/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3449 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3449/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3449/comments | https://api.github.com/repos/huggingface/datasets/issues/3449/events | https://github.com/huggingface/datasets/issues/3449 | 1,083,373,018 | I_kwDODunzps5AkvXa | 3,449 | Add `__add__()`, `__iadd__()` and similar to `Dataset` class | {

"login": "sgraaf",

"id": 8904453,

"node_id": "MDQ6VXNlcjg5MDQ0NTM=",

"avatar_url": "https://avatars.githubusercontent.com/u/8904453?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/sgraaf",

"html_url": "https://github.com/sgraaf",

"followers_url": "https://api.github.com/users/sgraaf/followers",

"following_url": "https://api.github.com/users/sgraaf/following{/other_user}",

"gists_url": "https://api.github.com/users/sgraaf/gists{/gist_id}",

"starred_url": "https://api.github.com/users/sgraaf/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sgraaf/subscriptions",

"organizations_url": "https://api.github.com/users/sgraaf/orgs",

"repos_url": "https://api.github.com/users/sgraaf/repos",

"events_url": "https://api.github.com/users/sgraaf/events{/privacy}",

"received_events_url": "https://api.github.com/users/sgraaf/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | open | false | null | [] | null | [] | 1,639,754,951,000 | 1,639,754,951,000 | null | NONE | null | **Is your feature request related to a problem? Please describe.**

No.

**Describe the solution you'd like**

I would like to be able to concatenate datasets as follows:

```python
>>> raw_datasets["train"] += raw_datasets["validation"]
```

... instead of using `concatenate_datasets()`:

```python
>>> raw_datasets["train"] = concatenate_datasets([raw_datasets["train"], raw_datasets["validation"]])
>>> del raw_datasets["validation"]
```

**Describe alternatives you've considered**

Well, I have considered `concatenate_datasets()` 😀

**Additional context**

N.a.
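The request relies on a Python detail worth making explicit: when a class defines `__add__` but not `__iadd__`, `a += b` falls back to `a = a + b`, so `raw_datasets["train"] += raw_datasets["validation"]` would simply rebind the dictionary entry to a new object. A minimal sketch on a hypothetical column-oriented `ToyDataset` (not `datasets.Dataset`), where `__add__` returns a new object, much as `concatenate_datasets()` returns a new dataset:

```python
class ToyDataset:
    """Hypothetical column-oriented dataset used only to illustrate operator
    overloading; this is not `datasets.Dataset`."""

    def __init__(self, columns):
        # Copy each column so instances never share mutable lists.
        self.columns = {name: list(values) for name, values in columns.items()}

    def __len__(self):
        return len(next(iter(self.columns.values()), []))

    def __add__(self, other):
        if not isinstance(other, ToyDataset):
            return NotImplemented
        if self.columns.keys() != other.columns.keys():
            raise ValueError("datasets must have the same columns to be concatenated")
        # Build a new object; neither operand is modified.
        return ToyDataset(
            {name: self.columns[name] + other.columns[name] for name in self.columns}
        )

    # No __iadd__ on purpose: `a += b` then falls back to `a = a + b`.
```

Defining only `__add__` keeps the objects effectively immutable: any other reference to the old train split still sees the original rows.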

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3449/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3449/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3448 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3448/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3448/comments | https://api.github.com/repos/huggingface/datasets/issues/3448/events | https://github.com/huggingface/datasets/issues/3448 | 1,083,231,080 | I_kwDODunzps5AkMto | 3,448 | JSONDecodeError with HuggingFace dataset viewer | {

"login": "kathrynchapman",

"id": 57716109,

"node_id": "MDQ6VXNlcjU3NzE2MTA5",

"avatar_url": "https://avatars.githubusercontent.com/u/57716109?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/kathrynchapman",

"html_url": "https://github.com/kathrynchapman",

"followers_url": "https://api.github.com/users/kathrynchapman/followers",

"following_url": "https://api.github.com/users/kathrynchapman/following{/other_user}",

"gists_url": "https://api.github.com/users/kathrynchapman/gists{/gist_id}",

"starred_url": "https://api.github.com/users/kathrynchapman/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/kathrynchapman/subscriptions",

"organizations_url": "https://api.github.com/users/kathrynchapman/orgs",

"repos_url": "https://api.github.com/users/kathrynchapman/repos",

"events_url": "https://api.github.com/users/kathrynchapman/events{/privacy}",

"received_events_url": "https://api.github.com/users/kathrynchapman/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | open | false | null | [] | null | [

"Hi ! I think the issue comes from the dataset_infos.json file: it has the \"flat\" field twice.\r\n\r\nCan you try deleting this file and regenerating it please ?",

"Thanks! That fixed that, but now I am getting:\r\nServer Error\r\nStatus code: 400\r\nException: KeyError\r\nMessage: 'feature'\r\n\r\nI checked the dataset_infos.json and pubmed_neg.py script, I don't use 'feature' anywhere as a key. Is the dataset viewer expecting that I do?"

] | 1,639,745,561,000 | 1,639,750,374,000 | null | NONE | null | ## Dataset viewer issue for 'pubmed_neg'

**Link:** https://huggingface.co/datasets/IGESML/pubmed_neg

I am getting the error:

Status code: 400

Exception: JSONDecodeError

Message: Expecting property name enclosed in double quotes: line 61 column 2 (char 1202)

I have checked all files - I am not using single quotes anywhere. Not sure what is causing this issue.
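Python's `json` module raises "Expecting property name enclosed in double quotes" for single-quoted keys, and a trailing comma before a closing brace also raises `JSONDecodeError` (with this same message in many Python versions). Duplicate keys, by contrast, do not raise at all: the last value silently wins. A minimal stdlib sketch (hypothetical helper `find_json_problems`) for checking a file such as `dataset_infos.json` locally:

```python
import json


def find_json_problems(text):
    """Return human-readable problems found in a JSON document: the first
    syntax error with its line/column, or else any duplicated object keys."""
    duplicates = []

    def check_pairs(pairs):
        # object_pairs_hook receives every key/value pair, including
        # duplicates that a plain json.loads would silently collapse.
        seen = set()
        for key, _ in pairs:
            if key in seen:
                duplicates.append(key)
            seen.add(key)
        return dict(pairs)

    try:
        json.loads(text, object_pairs_hook=check_pairs)
    except json.JSONDecodeError as err:
        return [f"line {err.lineno} column {err.colno}: {err.msg}"]
    return [f"duplicate key: {key!r}" for key in duplicates]
```

Usage: `find_json_problems(open("dataset_infos.json", encoding="utf-8").read())` returns an empty list for a well-formed file without duplicated keys.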

Am I the one who added this dataset ? Yes

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3448/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3448/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3447 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3447/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3447/comments | https://api.github.com/repos/huggingface/datasets/issues/3447/events | https://github.com/huggingface/datasets/issues/3447 | 1,082,539,790 | I_kwDODunzps5Ahj8O | 3,447 | HF_DATASETS_OFFLINE=1 didn't stop datasets.builder from downloading | {

"login": "dunalduck0",

"id": 51274745,

"node_id": "MDQ6VXNlcjUxMjc0NzQ1",

"avatar_url": "https://avatars.githubusercontent.com/u/51274745?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/dunalduck0",

"html_url": "https://github.com/dunalduck0",

"followers_url": "https://api.github.com/users/dunalduck0/followers",

"following_url": "https://api.github.com/users/dunalduck0/following{/other_user}",

"gists_url": "https://api.github.com/users/dunalduck0/gists{/gist_id}",

"starred_url": "https://api.github.com/users/dunalduck0/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/dunalduck0/subscriptions",

"organizations_url": "https://api.github.com/users/dunalduck0/orgs",

"repos_url": "https://api.github.com/users/dunalduck0/repos",

"events_url": "https://api.github.com/users/dunalduck0/events{/privacy}",

"received_events_url": "https://api.github.com/users/dunalduck0/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [] | null | [

"Hi ! Indeed it says \"downloading and preparing\" but in your case it didn't need to download anything since you used local files (it would have thrown an error otherwise). I think we can improve the logging to make it clearer in this case",

"@lhoestq Thank you for explaining. I am sorry but I was not clear about my intention. I didn't want to kill internet traffic; I wanted to kill all write activity. In other words, you can imagine that my storage has only read access but crashes on write.\r\n\r\nWhen run_clm.py is invoked with the same parameters, the hash in the cache directory \"datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/...\" doesn't change, and my job can load cached data properly. This is great.\r\n\r\nUnfortunately, when params change (which happens sometimes), the hash changes and the old cache is invalid. datasets builder would create a new cache directory with the new hash and create JSON builder there, even though every JSON builder is the same. I didn't find a way to avoid such behavior.\r\n\r\nThis problem can be resolved when using datasets.map() for tokenizing and grouping text. This function allows me to specify output filenames with --cache_file_names, so that the cached files are always valid.\r\n\r\nThis is the code that I used to freeze cache filenames for tokenization. I wish I could do the same to datasets.load_dataset()\r\n```\r\n tokenized_datasets = raw_datasets.map(\r\n tokenize_function,\r\n batched=True,\r\n num_proc=data_args.preprocessing_num_workers,\r\n remove_columns=column_names,\r\n load_from_cache_file=not data_args.overwrite_cache,\r\n desc=\"Running tokenizer on dataset\",\r\n cache_file_names={k: os.path.join(model_args.cache_dir, f'{k}-tokenized') for k in raw_datasets},\r\n )\r\n```"

] | 1,639,680,673,000 | 1,639,782,442,000 | null | NONE | null | ## Describe the bug

According to https://huggingface.co/docs/datasets/loading_datasets.html#loading-a-dataset-builder, setting HF_DATASETS_OFFLINE to 1 should make datasets "run in full offline mode". It didn't work for me. At the very beginning, datasets still tried to download the "custom data configuration" for JSON, even though I had already run the program once and cached all data into the same --cache_dir.

"Downloading" is not an issue when running on a local disk, but it often crashes on cloud storage because (1) multiple GPU processes try to access the same file, AND (2) FileLocker fails to synchronize all processes due to storage throttling. 99% of the time, when the main process releases FileLocker, the file is not actually ready for access in cloud storage, which triggers "FileNotFound" errors in all other processes. Another way to resolve the problem would be to use more reliable cloud storage, but that's out of scope here.

## Steps to reproduce the bug

```bash
export HF_DATASETS_OFFLINE=1
python run_clm.py --model_name_or_path=models/gpt-j-6B --train_file=trainpy.v2.train.json --validation_file=trainpy.v2.eval.json --cache_dir=datacache/trainpy.v2
```

## Expected results

datasets should stop all "downloading" behavior and reuse the cached JSON configuration. I think the problem is that part of the cache directory path, "default-471372bed4b51b53", is generated from the parameters, so it changes whenever some parameters change. I didn't find a way to use a fixed path that ensures datasets reuses the cached data every time.
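The suffix in `default-471372bed4b51b53` behaves like a deterministic hash of the configuration rather than a random value: the same parameters map to the same directory, and any changed parameter maps to a new one. A hypothetical sketch of that idea (`config_id` is illustrative and is not the hashing code `datasets` actually uses):

```python
import hashlib
import json


def config_id(name, params):
    """Hypothetical: derive a cache-directory suffix from builder parameters.
    Illustrates a deterministic hash; not the scheme `datasets` uses."""
    # sort_keys makes the serialization, and therefore the hash,
    # independent of dictionary insertion order.
    payload = json.dumps(params, sort_keys=True, default=str)
    digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]
    return f"{name}-{digest}"
```

Under this model, pinning the cache location means pinning every parameter that feeds the hash, which matches the observation that an unchanged command line reuses the same directory.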

## Actual results

The logging shows datasets are still downloading into "datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426".

```
12/16/2021 10:25:59 - WARNING - datasets.builder - Using custom data configuration default-471372bed4b51b53
12/16/2021 10:25:59 - INFO - datasets.builder - Generating dataset json (datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426)
Downloading and preparing dataset json/default to datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426...
100%|██████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 17623.13it/s]
12/16/2021 10:25:59 - INFO - datasets.utils.download_manager - Downloading took 0.0 min
12/16/2021 10:26:00 - INFO - datasets.utils.download_manager - Checksum Computation took 0.0 min
100%|███████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 1206.99it/s]
12/16/2021 10:26:00 - INFO - datasets.utils.info_utils - Unable to verify checksums.
12/16/2021 10:26:00 - INFO - datasets.builder - Generating split train
12/16/2021 10:26:01 - INFO - datasets.builder - Generating split validation
12/16/2021 10:26:02 - INFO - datasets.utils.info_utils - Unable to verify splits sizes.
Dataset json downloaded and prepared to datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426. Subsequent calls will reuse this data.
100%|█████████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 53.54it/s]
```

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.16.1

- Platform: Linux

- Python version: 3.8.10

- PyArrow version: 6.0.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3447/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3447/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3445 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3445/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3445/comments | https://api.github.com/repos/huggingface/datasets/issues/3445/events | https://github.com/huggingface/datasets/issues/3445 | 1,082,370,968 | I_kwDODunzps5Ag6uY | 3,445 | question | {

"login": "BAKAYOKO0232",

"id": 38075175,

"node_id": "MDQ6VXNlcjM4MDc1MTc1",

"avatar_url": "https://avatars.githubusercontent.com/u/38075175?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/BAKAYOKO0232",

"html_url": "https://github.com/BAKAYOKO0232",

"followers_url": "https://api.github.com/users/BAKAYOKO0232/followers",

"following_url": "https://api.github.com/users/BAKAYOKO0232/following{/other_user}",

"gists_url": "https://api.github.com/users/BAKAYOKO0232/gists{/gist_id}",

"starred_url": "https://api.github.com/users/BAKAYOKO0232/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/BAKAYOKO0232/subscriptions",

"organizations_url": "https://api.github.com/users/BAKAYOKO0232/orgs",

"repos_url": "https://api.github.com/users/BAKAYOKO0232/repos",

"events_url": "https://api.github.com/users/BAKAYOKO0232/events{/privacy}",

"received_events_url": "https://api.github.com/users/BAKAYOKO0232/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | open | false | null | [] | null | [

"Hi ! What's your question ?"

] | 1,639,670,220,000 | 1,639,749,168,000 | null | NONE | null | ## Dataset viewer issue for '*name of the dataset*'

**Link:** *link to the dataset viewer page*

*short description of the issue*

Am I the one who added this dataset ? Yes-No

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3445/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3445/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3444 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3444/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3444/comments | https://api.github.com/repos/huggingface/datasets/issues/3444/events | https://github.com/huggingface/datasets/issues/3444 | 1,082,078,961 | I_kwDODunzps5Afzbx | 3,444 | Align the Dataset and IterableDataset processing API | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"Yes I agree, these should be as aligned as possible. Maybe we can also check the feedback in the survey at http://hf.co/oss-survey and see if people mentioned related things on the API (in particular if we go the breaking change way, it would be good to be sure we are taking the right direction for the community).",

"I like this proposal.\r\n\r\n> There is also an important difference in terms of behavior:\r\nDataset.map adds new columns (with dict.update)\r\nBUT\r\nIterableDataset discards previous columns (it overwrites the dict)\r\nIMO the two methods should have the same behavior. This would be an important breaking change though.\r\n\r\n> The main breaking change would be the change of behavior of IterableDataset.map, because currently it discards all the previous columns instead of keeping them.\r\n\r\nYes, this behavior of `IterableDataset.map` was surprising to me the first time I used it because I was expecting the same behavior as `Dataset.map`, so I'm OK with the breaking change here.\r\n\r\n> IterableDataset only supports \"torch\" (it misses tf, jax, pandas, arrow) and is missing the parameters: columns, output_all_columns and format_kwargs\r\n\r\n\\+ it's also missing the actual formatting code (we return unformatted tensors)\r\n> We could have a completely aligned map method if both methods were lazy by default, but this is a very big breaking change so I'm not sure we can consider doing that.\r\n\r\n> For information, TFDS does lazy map by default, and has an additional .cache() method.\r\n\r\nIf I understand this part correctly, the idea would be for `Dataset.map` to behave similarly to `Dataset.with_transform` (lazy processing) and to have an option to cache processed data (with `.cache()`). This idea is really nice because it can also be applied to `IterableDataset` to fix https://github.com/huggingface/datasets/issues/3142 (again we get the aligned APIs). However, this change would break a lot of things, so I'm still not sure if this is a step in the right direction (maybe it's OK for Datasets 2.0?) 
\r\n> If the two APIs are more aligned it would be awesome for the examples in transformers, and it would create a satisfactory experience for users that want to switch from one mode to the other.\r\n\r\nYes, it would be amazing to have an option to easily switch between these two modes.\r\n\r\nI agree with the rest.\r\n",

"> If I understand this part correctly, the idea would be for Dataset.map to behave similarly to Dataset.with_transform (lazy processing) and to have an option to cache processed data (with .cache()). This idea is really nice because it can also be applied to IterableDataset to fix #3142 (again we get the aligned APIs). However, this change would break a lot of things, so I'm still not sure if this is a step in the right direction (maybe it's OK for Datasets 2.0?)\r\n\r\nYea this is too big of a change in my opinion. Anyway it's fine as it is right now with streaming=lazy and regular=eager."

] | 1,639,653,971,000 | 1,639,664,831,000 | null | MEMBER | null | ## Intro

Currently the two classes have two distinct APIs for processing:

### The `.map()` method

Both have these parameters in common: function, batched, batch_size

- IterableDataset is missing these parameters:

with_indices, with_rank, input_columns, drop_last_batch, remove_columns, features, disable_nullable, fn_kwargs, num_proc

- Dataset also has additional parameters that are exclusive, due to caching:

keep_in_memory, load_from_cache_file, cache_file_name, writer_batch_size, suffix_template, new_fingerprint

- There is also an important difference in terms of behavior:

**Dataset.map adds new columns** (with dict.update)

BUT

**IterableDataset discards previous columns** (it overwrites the dict)

IMO the two methods should have the same behavior. This would be an important breaking change though.

- Dataset.map is eager while IterableDataset.map is lazy
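The column-handling difference described above can be modeled with plain dicts. A sketch of the two semantics (hypothetical helpers `map_update` and `map_overwrite`; both return eager lists so that only the column behavior differs, not laziness):

```python
def map_update(examples, fn):
    """Dataset.map-style, as described above: fn's output is merged into
    each example with dict.update, so existing columns are kept."""
    out = []
    for example in examples:
        updated = dict(example)  # copy so the input example is not mutated
        updated.update(fn(example))
        out.append(updated)
    return out


def map_overwrite(examples, fn):
    """IterableDataset.map-style, as described above: fn's output replaces
    the example, so columns not returned by fn are discarded."""
    return [fn(example) for example in examples]
```

With a function that only returns `{"n_words": ...}`, the first helper keeps the original `text` column while the second drops it, which is exactly the breaking change discussed below.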

### The `.shuffle()` method

- Both have an optional seed parameter, but IterableDataset requires a mandatory parameter buffer_size to control the size of the local buffer used for approximate shuffling.

- IterableDataset is missing the parameter generator

- Also Dataset has exclusive parameters due to caching: keep_in_memory, load_from_cache_file, indices_cache_file_name, writer_batch_size, new_fingerprint
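The mandatory `buffer_size` exists because a stream cannot be permuted in place. The general technique, similar in spirit to `tf.data`'s buffered shuffle, keeps a fixed-size buffer and emits random elements from it. A sketch of that technique (hypothetical `buffered_shuffle`; not claimed to match the library's exact implementation):

```python
import random


def buffered_shuffle(iterable, buffer_size, seed=None):
    """Approximately shuffle a stream while holding only `buffer_size` items
    in memory; larger buffers give output closer to a uniform permutation."""
    if buffer_size < 1:
        raise ValueError("buffer_size must be >= 1")
    rng = random.Random(seed)
    buffer = []
    for item in iterable:
        if len(buffer) < buffer_size:
            buffer.append(item)  # fill the buffer first
            continue
        # Emit a random buffered item and put the new item in its slot.
        i = rng.randrange(buffer_size)
        yield buffer[i]
        buffer[i] = item
    # Stream exhausted: flush the remaining items in random order.
    rng.shuffle(buffer)
    yield from buffer
```

With `buffer_size=1` the stream comes out in its original order, which shows why the shuffle is only approximate for small buffers.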

### The `.with_format()` method

- IterableDataset only supports "torch" (it misses tf, jax, pandas, arrow) and is missing the parameters: columns, output_all_columns and format_kwargs

### Other methods

- Both have the same `remove_columns` method

- IterableDataset is missing: cast, cast_column, filter, rename_column, rename_columns, class_encode_column, flatten, prepare_for_task, train_test_split, shard

- Some other methods are missing but we can discuss them: set_transform, formatted_as, with_transform

- And others don't really make sense for an iterable dataset: select, sort, add_column, add_item

- Dataset is missing skip and take, which IterableDataset implements.
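For a stream, `skip` and `take` amount to lazily slicing an iterator, which is why they fit IterableDataset naturally. A sketch with `itertools.islice` (hypothetical `skip`/`take` helpers over any iterable, not the library's methods):

```python
from itertools import islice


def take(iterable, n):
    """Lazily yield only the first n examples."""
    return islice(iterable, n)


def skip(iterable, n):
    """Lazily drop the first n examples and yield the rest."""
    return islice(iterable, n, None)
```

On a map-style Dataset, a similar effect could be approximated with `select`, e.g. `dataset.select(range(n))` for `take`.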

## Questions

I think it would be nice to be able to switch easily between streaming and regular datasets, without significantly changing the processing code.

1. What should and shouldn't be aligned between these two APIs?

IMO the minimum is to align the main processing methods.

It would mean breaking the current `IterableDataset.map` to give it the same behavior as `Dataset.map` (add columns with dict.update), and adding multiprocessing as well as the missing parameters.

It would also mean implementing the missing methods: cast, cast_column, filter, rename_column, rename_columns, class_encode_column, flatten, prepare_for_task, train_test_split, shard

2. What are the breaking changes for IterableDataset?

The main breaking change would be the change of behavior of `IterableDataset.map`, because currently it discards all the previous columns instead of keeping them.

3. Shall we also make some changes to regular datasets?

I agree the simplest option would be to have the exact same methods for both Dataset and IterableDataset. However, this is probably not a good idea because it would prevent users from getting the best of each. That's why we can keep some aspects of regular datasets as they are:

- keep the eager Dataset.map with caching

- keep the with_transform method for lazy processing

- keep Dataset.select (it could also be added to IterableDataset even though it's not recommended)

We could have a completely aligned `map` method if both methods were lazy by default, but this is a very big breaking change so I'm not sure we can consider doing that.

For information, TFDS does lazy map by default, and has an additional `.cache()` method.
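To illustrate the lazy-by-default alternative mentioned above (a lazy map plus an explicit `.cache()`), here is a toy class; `LazyMapped` is hypothetical and is neither a `datasets` nor a TFDS API:

```python
class LazyMapped:
    """Hypothetical lazy map: `fn` runs only when the data is iterated.
    `.cache()` materializes the results once so later passes reuse them."""

    def __init__(self, source, fn):
        self._source = source  # must be re-iterable (e.g. a list or range)
        self._fn = fn
        self._cached = None

    def __iter__(self):
        if self._cached is not None:
            return iter(self._cached)
        # Without a cache, every pass recomputes fn on the fly.
        return (self._fn(x) for x in self._source)

    def cache(self):
        if self._cached is None:
            self._cached = list(self)  # one full pass to materialize
        return self
```

The trade-off is the one discussed above: lazy mapping costs nothing up front but recomputes on each pass, while caching pays once and then reuses the results, which is close to what the eager `Dataset.map` does today.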

## Opinions?

I'd love to gather some opinions about this here. If the two APIs are more aligned it would be awesome for the examples in `transformers`, and it would create a satisfactory experience for users that want to switch from one mode to the other.

cc @mariosasko @albertvillanova @thomwolf @patrickvonplaten @sgugger | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3444/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3444/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3441 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3441/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3441/comments | https://api.github.com/repos/huggingface/datasets/issues/3441/events | https://github.com/huggingface/datasets/issues/3441 | 1,081,571,784 | I_kwDODunzps5Ad3nI | 3,441 | Add QuALITY dataset | {

"login": "lewtun",

"id": 26859204,

"node_id": "MDQ6VXNlcjI2ODU5MjA0",

"avatar_url": "https://avatars.githubusercontent.com/u/26859204?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lewtun",

"html_url": "https://github.com/lewtun",

"followers_url": "https://api.github.com/users/lewtun/followers",

"following_url": "https://api.github.com/users/lewtun/following{/other_user}",

"gists_url": "https://api.github.com/users/lewtun/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lewtun/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lewtun/subscriptions",

"organizations_url": "https://api.github.com/users/lewtun/orgs",

"repos_url": "https://api.github.com/users/lewtun/repos",

"events_url": "https://api.github.com/users/lewtun/events{/privacy}",

"received_events_url": "https://api.github.com/users/lewtun/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 2067376369,

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request",

"name": "dataset request",

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset"

}

] | open | false | null | [] | null | [] | 1,639,607,179,000 | 1,639,607,179,000 | null | MEMBER | null | ## Adding a Dataset

- **Name:** QuALITY

- **Description:** A challenging question-answering dataset with very long contexts (Twitter [thread](https://twitter.com/sleepinyourhat/status/1471225421794529281?s=20))

- **Paper:** No ArXiv link yet, but draft is [here](https://github.com/nyu-mll/quality/blob/main/quality_preprint.pdf)

- **Data:** GitHub repo [here](https://github.com/nyu-mll/quality)

- **Motivation:** This dataset would serve as a nice way to benchmark long-range Transformer models like BigBird, Longformer and their descendants. In particular, it would be very interesting to see how the S4 model fares on this, given its impressive performance on the Long Range Arena.

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3441/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3441/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3440 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3440/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3440/comments | https://api.github.com/repos/huggingface/datasets/issues/3440/events | https://github.com/huggingface/datasets/issues/3440 | 1,081,528,426 | I_kwDODunzps5AdtBq | 3,440 | datasets keeps reading from cached files, although I disabled it | {

"login": "dorost1234",

"id": 79165106,

"node_id": "MDQ6VXNlcjc5MTY1MTA2",

"avatar_url": "https://avatars.githubusercontent.com/u/79165106?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/dorost1234",

"html_url": "https://github.com/dorost1234",

"followers_url": "https://api.github.com/users/dorost1234/followers",

"following_url": "https://api.github.com/users/dorost1234/following{/other_user}",

"gists_url": "https://api.github.com/users/dorost1234/gists{/gist_id}",

"starred_url": "https://api.github.com/users/dorost1234/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/dorost1234/subscriptions",

"organizations_url": "https://api.github.com/users/dorost1234/orgs",

"repos_url": "https://api.github.com/users/dorost1234/repos",

"events_url": "https://api.github.com/users/dorost1234/events{/privacy}",

"received_events_url": "https://api.github.com/users/dorost1234/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [] | null | [

"Hi ! What version of `datasets` are you using ? Can you also provide the logs you get before it raises the error ?"

] | 1,639,603,582,000 | 1,639,668,747,000 | null | NONE | null | ## Describe the bug

Hi,

I am trying to prevent the `datasets` library from using cached files, but I get the error below when it tries to read them. I tried the following:

```python
from datasets import set_caching_enabled

set_caching_enabled(False)
```

I also forced a redownload:

```python
download_mode='force_redownload'
```

Neither has worked so far. This runs on a cluster, and on some of the machines it still reads from the cached files. I would really appreciate any idea on how to disable caching completely @lhoestq

Many thanks

```
  File "run_clm.py", line 496, in <module>
    main()
  File "run_clm.py", line 419, in main
    train_result = trainer.train(resume_from_checkpoint=checkpoint)
  File "/users/dara/codes/fewshot/debug/fewshot/third_party/trainers/trainer.py", line 943, in train
    self._maybe_log_save_evaluate(tr_loss, model, trial, epoch, ignore_keys_for_eval)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/transformers/trainer.py", line 1445, in _maybe_log_save_evaluate
    metrics = self.evaluate(ignore_keys=ignore_keys_for_eval)
  File "/users/dara/codes/fewshot/debug/fewshot/third_party/trainers/trainer.py", line 172, in evaluate
    output = self.eval_loop(
  File "/users/dara/codes/fewshot/debug/fewshot/third_party/trainers/trainer.py", line 241, in eval_loop
    metrics = self.compute_pet_metrics(eval_datasets, model, self.extra_info[metric_key_prefix], task=task)
  File "/users/dara/codes/fewshot/debug/fewshot/third_party/trainers/trainer.py", line 268, in compute_pet_metrics
    centroids = self._compute_per_token_train_centroids(model, task=task)
  File "/users/dara/codes/fewshot/debug/fewshot/third_party/trainers/trainer.py", line 353, in _compute_per_token_train_centroids
    data = get_label_samples(self.get_per_task_train_dataset(task), label)
  File "/users/dara/codes/fewshot/debug/fewshot/third_party/trainers/trainer.py", line 350, in get_label_samples
    return dataset.filter(lambda example: int(example['labels']) == label)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 470, in wrapper
    out: Union["Dataset", "DatasetDict"] = func(self, *args, **kwargs)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/fingerprint.py", line 406, in wrapper
    out = func(self, *args, **kwargs)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 2519, in filter
    indices = self.map(
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 2036, in map
    return self._map_single(
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 503, in wrapper
    out: Union["Dataset", "DatasetDict"] = func(self, *args, **kwargs)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 470, in wrapper
    out: Union["Dataset", "DatasetDict"] = func(self, *args, **kwargs)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/fingerprint.py", line 406, in wrapper
    out = func(self, *args, **kwargs)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 2248, in _map_single
    return Dataset.from_file(cache_file_name, info=info, split=self.split)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 654, in from_file
    return cls(
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/arrow_dataset.py", line 593, in __init__
    self.info.features = self.info.features.reorder_fields_as(inferred_features)
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/features/features.py", line 1092, in reorder_fields_as
    return Features(recursive_reorder(self, other))
  File "/users/dara/conda/envs/multisuccess/lib/python3.8/site-packages/datasets/features/features.py", line 1081, in recursive_reorder
    raise ValueError(f"Keys mismatch: between {source} and {target}" + stack_position)
ValueError: Keys mismatch: between {'indices': Value(dtype='uint64', id=None)} and {'candidates_ids': Sequence(feature=Value(dtype='null', id=None), length=-1, id=None), 'labels': Value(dtype='int64', id=None), 'attention_mask': Sequence(feature=Value(dtype='int8', id=None), length=-1, id=None), 'input_ids': Sequence(feature=Value(dtype='int32', id=None), length=-1, id=None), 'extra_fields': {}, 'task': Value(dtype='string', id=None)}
```

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version:

- Platform: linux

- Python version: 3.8.12

- PyArrow version: 6.0.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3440/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3440/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3434 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3434/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3434/comments | https://api.github.com/repos/huggingface/datasets/issues/3434/events | https://github.com/huggingface/datasets/issues/3434 | 1,080,917,446 | I_kwDODunzps5AbX3G | 3,434 | Add The People's Speech | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 2067376369,

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request",

"name": "dataset request",

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset"

},

{

"id": 2725241052,

"node_id": "MDU6TGFiZWwyNzI1MjQxMDUy",

"url": "https://api.github.com/repos/huggingface/datasets/labels/speech",

"name": "speech",

"color": "d93f0b",

"default": false,

"description": ""

}

] | open | false | null | [] | null | [] | 1,639,567,281,000 | 1,639,567,281,000 | null | CONTRIBUTOR | null | ## Adding a Dataset

- **Name:** The People's Speech

- **Description:** A massive English-language dataset of audio transcriptions of full sentences.

- **Paper:** https://openreview.net/pdf?id=R8CwidgJ0yT

- **Data:** https://mlcommons.org/en/peoples-speech/

- **Motivation:** With over 30,000 hours of speech, this dataset is the largest and most diverse freely available English speech recognition corpus today.

[This article](https://thegradient.pub/new-datasets-to-democratize-speech-recognition-technology-2/) may be useful when working on the dataset.

cc: @anton-l

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3434/reactions",

"total_count": 3,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 3,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3434/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3433 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3433/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3433/comments | https://api.github.com/repos/huggingface/datasets/issues/3433/events | https://github.com/huggingface/datasets/issues/3433 | 1,080,910,724 | I_kwDODunzps5AbWOE | 3,433 | Add Multilingual Spoken Words dataset | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 2067376369,

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request",

"name": "dataset request",

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset"

},

{

"id": 2725241052,

"node_id": "MDU6TGFiZWwyNzI1MjQxMDUy",

"url": "https://api.github.com/repos/huggingface/datasets/labels/speech",

"name": "speech",

"color": "d93f0b",

"default": false,

"description": ""

}

] | open | false | null | [] | null | [] | 1,639,566,884,000 | 1,639,566,884,000 | null | MEMBER | null | ## Adding a Dataset

- **Name:** Multilingual Spoken Words

- **Description:** Multilingual Spoken Words Corpus is a large and growing audio dataset of spoken words in 50 languages for academic research and commercial applications in keyword spotting and spoken term search, licensed under CC-BY 4.0. The dataset contains more than 340,000 keywords, totaling 23.4 million 1-second spoken examples (over 6,000 hours).

Read more: https://mlcommons.org/en/news/spoken-words-blog/

- **Paper:** https://datasets-benchmarks-proceedings.neurips.cc/paper/2021/file/fe131d7f5a6b38b23cc967316c13dae2-Paper-round2.pdf

- **Data:** https://mlcommons.org/en/multilingual-spoken-words/

- **Motivation:**
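As a quick consistency check of the figures in the description, 23.4 million one-second examples amount to:

$$
\frac{23.4 \times 10^{6} \times 1\,\mathrm{s}}{3600\,\mathrm{s/h}} = 6500\,\mathrm{h},
$$

which matches the stated "over 6,000 hours".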

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3433/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3433/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3431 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3431/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3431/comments | https://api.github.com/repos/huggingface/datasets/issues/3431/events | https://github.com/huggingface/datasets/issues/3431 | 1,079,866,083 | I_kwDODunzps5AXXLj | 3,431 | Unable to resolve any data file after loading once | {

"login": "Fischer-love-fish",

"id": 84694183,

"node_id": "MDQ6VXNlcjg0Njk0MTgz",

"avatar_url": "https://avatars.githubusercontent.com/u/84694183?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Fischer-love-fish",

"html_url": "https://github.com/Fischer-love-fish",

"followers_url": "https://api.github.com/users/Fischer-love-fish/followers",

"following_url": "https://api.github.com/users/Fischer-love-fish/following{/other_user}",

"gists_url": "https://api.github.com/users/Fischer-love-fish/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Fischer-love-fish/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Fischer-love-fish/subscriptions",

"organizations_url": "https://api.github.com/users/Fischer-love-fish/orgs",

"repos_url": "https://api.github.com/users/Fischer-love-fish/repos",

"events_url": "https://api.github.com/users/Fischer-love-fish/events{/privacy}",

"received_events_url": "https://api.github.com/users/Fischer-love-fish/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [] | 1,639,494,135,000 | 1,639,494,135,000 | null | NONE | null | when I rerun my program, it occurs this error

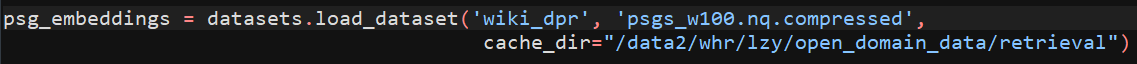

" Unable to resolve any data file that matches '['**train*']' at /data2/whr/lzy/open_domain_data/retrieval/wiki_dpr with any supported extension ['csv', 'tsv', 'json', 'jsonl', 'parquet', 'txt', 'zip']", so how could i deal with this problem?

thx.

And below is my code .

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3431/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3431/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3425 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3425/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3425/comments | https://api.github.com/repos/huggingface/datasets/issues/3425/events | https://github.com/huggingface/datasets/issues/3425 | 1,078,598,140 | I_kwDODunzps5AShn8 | 3,425 | Getting configs names takes too long | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [

{

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"maybe related to https://github.com/huggingface/datasets/issues/2859\r\n",

"It looks like it's currently calling `HfFileSystem.ls()` ~8 times at the root and for each subdirectory:\r\n- \"\"\r\n- \"en.noblocklist\"\r\n- \"en.noclean\"\r\n- \"en\"\r\n- \"multilingual\"\r\n- \"realnewslike\"\r\n\r\nCurrently `ls` is slow because it iterates on all the files inside the repository.\r\n\r\nAn easy optimization would be to cache the result of each call to `ls`.\r\nWe can also optimize `ls` by using a tree structure per directory instead of a list of all the files.\r\n",

"ok\r\n"

] | 1,639,405,677,000 | 1,639,407,213,000 | null | CONTRIBUTOR | null |

## Steps to reproduce the bug

```python

from datasets import get_dataset_config_names

get_dataset_config_names("allenai/c4")

```

## Expected results

I would expect to get the answer quickly, in less than 10 s at most.

## Actual results

It takes about 45s on my environment

## Environment info

- `datasets` version: 1.16.1

- Platform: Linux-5.11.0-1022-aws-x86_64-with-glibc2.31

- Python version: 3.9.6

- PyArrow version: 4.0.1 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3425/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3425/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3423 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3423/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3423/comments | https://api.github.com/repos/huggingface/datasets/issues/3423/events | https://github.com/huggingface/datasets/issues/3423 | 1,078,049,638 | I_kwDODunzps5AQbtm | 3,423 | data duplicate when setting num_works > 1 with streaming data | {

"login": "cloudyuyuyu",

"id": 16486492,

"node_id": "MDQ6VXNlcjE2NDg2NDky",

"avatar_url": "https://avatars.githubusercontent.com/u/16486492?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/cloudyuyuyu",

"html_url": "https://github.com/cloudyuyuyu",

"followers_url": "https://api.github.com/users/cloudyuyuyu/followers",

"following_url": "https://api.github.com/users/cloudyuyuyu/following{/other_user}",

"gists_url": "https://api.github.com/users/cloudyuyuyu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/cloudyuyuyu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/cloudyuyuyu/subscriptions",

"organizations_url": "https://api.github.com/users/cloudyuyuyu/orgs",

"repos_url": "https://api.github.com/users/cloudyuyuyu/repos",

"events_url": "https://api.github.com/users/cloudyuyuyu/events{/privacy}",

"received_events_url": "https://api.github.com/users/cloudyuyuyu/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

},

{

"id": 3287858981,

"node_id": "MDU6TGFiZWwzMjg3ODU4OTgx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/streaming",

"name": "streaming",

"color": "fef2c0",

"default": false,

"description": ""

}

] | open | false | null | [] | null | [

"Hi ! Thanks for reporting :)\r\n\r\nWhen using a PyTorch's data loader with `num_workers>1` and an iterable dataset, each worker streams the exact same data by default, resulting in duplicate data when iterating using the data loader.\r\n\r\nWe can probably fix this in `datasets` by checking `torch.utils.data.get_worker_info()` which gives the worker id if it happens.",

"> Hi ! Thanks for reporting :)\r\n> \r\n> When using a PyTorch's data loader with `num_workers>1` and an iterable dataset, each worker streams the exact same data by default, resulting in duplicate data when iterating using the data loader.\r\n> \r\n> We can probably fix this in `datasets` by checking `torch.utils.data.get_worker_info()` which gives the worker id if it happens.\r\nHi ! Thanks for reply\r\n\r\nDo u have some plans to fix the problem?\r\n",

"Isn’t that somehow a bug on PyTorch side? (Just asking because this behavior seems quite general and maybe not what would be intended)",

"From PyTorch's documentation [here](https://pytorch.org/docs/stable/data.html#dataset-types):\r\n\r\n> When using an IterableDataset with multi-process data loading. The same dataset object is replicated on each worker process, and thus the replicas must be configured differently to avoid duplicated data. See [IterableDataset](https://pytorch.org/docs/stable/data.html#torch.utils.data.IterableDataset) documentations for how to achieve this.\r\n\r\nIt looks like an intended behavior from PyTorch\r\n\r\nAs suggested in the [docstring of the IterableDataset class](https://pytorch.org/docs/stable/data.html#torch.utils.data.IterableDataset), we could pass a `worker_init_fn` to the DataLoader to fix this. It could be called `streaming_worker_init_fn` for example.\r\n\r\nHowever, while this solution works, I'm worried that many users simply don't know about this parameter and just start their training with duplicate data without knowing it. That's why I'm more in favor of integrating the check on the worker id directly in `datasets` in our implementation of `IterableDataset.__iter__`."

] | 1,639,366,997,000 | 1,639,479,210,000 | null | NONE | null | ## Describe the bug

The data is repeated `num_workers` times when we call `load_dataset` with `streaming=True` and set `num_workers > 1` when constructing the `DataLoader`.

## Steps to reproduce the bug

```python
# Sample code to reproduce the bug
import os
import shutil

import numpy as np
import pandas as pd
from datasets import load_dataset
from torch.utils.data import DataLoader
from tqdm import tqdm

NUM_OF_USER = 1000000
NUM_OF_ACTION = 50000
NUM_OF_SEQUENCE = 10000
NUM_OF_FILES = 32
NUM_OF_WORKERS = 16

if __name__ == "__main__":
    # Start from a clean directory (ignore_errors avoids a crash on the first run).
    shutil.rmtree("./dataset", ignore_errors=True)
    os.makedirs("./dataset", exist_ok=True)

    # Write NUM_OF_FILES CSV shards of random (imei, sequence) pairs.
    for i in range(NUM_OF_FILES):
        sequence_data = pd.DataFrame(
            {
                "imei": np.random.randint(1, NUM_OF_USER, size=NUM_OF_SEQUENCE),
                "sequence": np.random.randint(1, NUM_OF_ACTION, size=NUM_OF_SEQUENCE),
            }
        )
        sequence_data.to_csv(f"./dataset/sequence_data_{i}.csv", index=False)

    # Stream the CSV shards as a torch-formatted iterable dataset.
    dataset = load_dataset(
        "csv",
        data_files=[os.path.join("./dataset", file) for file in os.listdir("./dataset") if file.endswith(".csv")],
        split="train",
        streaming=True,
    ).with_format("torch")

    data_loader = DataLoader(dataset, batch_size=1024, num_workers=NUM_OF_WORKERS)

    # Collect every batch; with the bug, each row appears NUM_OF_WORKERS times.
    result = pd.DataFrame()
    for i, batch in tqdm(enumerate(data_loader)):
        result = pd.concat([result, pd.DataFrame(batch)], axis=0)
    result.to_csv(f"num_work_{NUM_OF_WORKERS}.csv", index=False)
```

## Expected results

The data is not duplicated.

## Actual results

Each example appears `NUM_OF_WORKERS` times (16 here).
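The maintainers' comments on this issue explain that PyTorch replicates an iterable dataset in every worker process, so each replica streams everything unless it is configured differently, and they propose checking `torch.utils.data.get_worker_info()` inside `IterableDataset.__iter__`. The sketch below simulates that idea with the standard library only. `shard_for_worker` and `simulate_loader` are hypothetical helpers, and splitting the stream by file is an assumption, not the `datasets` implementation.

```python
from typing import List, Sequence


def shard_for_worker(files: Sequence[str], worker_id: int, num_workers: int) -> List[str]:
    """Round-robin split: worker k streams files k, k + num_workers, k + 2 * num_workers, ..."""
    if num_workers < 1 or not 0 <= worker_id < num_workers:
        raise ValueError("expected 0 <= worker_id < num_workers")
    return list(files[worker_id::num_workers])


def simulate_loader(files: Sequence[str], num_workers: int, shard: bool = True) -> List[str]:
    """Concatenate what each simulated worker would stream.

    With shard=False every worker replica streams all files, which reproduces
    the duplication reported above. In a real torch IterableDataset.__iter__,
    worker_id and num_workers would come from torch.utils.data.get_worker_info()
    (which returns None in the main process, i.e. a single worker).
    """
    streamed: List[str] = []
    for worker_id in range(num_workers):
        if shard:
            streamed.extend(shard_for_worker(files, worker_id, num_workers))
        else:
            streamed.extend(files)
    return streamed


if __name__ == "__main__":
    files = [f"sequence_data_{i}.csv" for i in range(32)]
    print(len(simulate_loader(files, 16, shard=False)))  # 512: every file 16 times
    print(len(simulate_loader(files, 16)))               # 32: every file once
```

Splitting by file only balances well when there are at least as many files as workers; with fewer files, some workers would stream nothing.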

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.14.0

- Platform: transformers==4.11.3

- Python version: 3.8

- PyArrow version:

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3423/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3423/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3422 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3422/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3422/comments | https://api.github.com/repos/huggingface/datasets/issues/3422/events | https://github.com/huggingface/datasets/issues/3422 | 1,078,022,619 | I_kwDODunzps5AQVHb | 3,422 | Error about load_metric | {

"login": "jiacheng-ye",

"id": 30772464,

"node_id": "MDQ6VXNlcjMwNzcyNDY0",

"avatar_url": "https://avatars.githubusercontent.com/u/30772464?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jiacheng-ye",

"html_url": "https://github.com/jiacheng-ye",

"followers_url": "https://api.github.com/users/jiacheng-ye/followers",

"following_url": "https://api.github.com/users/jiacheng-ye/following{/other_user}",

"gists_url": "https://api.github.com/users/jiacheng-ye/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jiacheng-ye/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jiacheng-ye/subscriptions",

"organizations_url": "https://api.github.com/users/jiacheng-ye/orgs",

"repos_url": "https://api.github.com/users/jiacheng-ye/repos",

"events_url": "https://api.github.com/users/jiacheng-ye/events{/privacy}",

"received_events_url": "https://api.github.com/users/jiacheng-ye/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [] | null | [

"Hi ! I wasn't able to reproduce your error.\r\n\r\nCan you try to clear your cache at `~/.cache/huggingface/modules` and try again ?"

] | 1,639,363,791,000 | 1,639,507,463,000 | null | NONE | null | ## Describe the bug

```
  File "/opt/conda/lib/python3.8/site-packages/datasets/load.py", line 1371, in load_metric
    metric = metric_cls(
TypeError: 'NoneType' object is not callable
```

## Steps to reproduce the bug

```python
from datasets import load_metric

metric = load_metric("glue", "sst2")
```
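A maintainer's reply to this issue suggests clearing the cache at `~/.cache/huggingface/modules` and retrying. A minimal sketch of doing that from Python; `clear_modules_cache` is a hypothetical helper, and the default path is taken from that reply, so treat it as an assumption if your setup relocates the Hugging Face cache.

```python
import shutil
from pathlib import Path

# Path from the maintainer's reply; may differ if the cache has been relocated.
DEFAULT_MODULES_CACHE = Path.home() / ".cache" / "huggingface" / "modules"


def clear_modules_cache(cache_dir: Path = DEFAULT_MODULES_CACHE) -> bool:
    """Delete the cached modules directory; return True if a directory was removed."""
    cache_dir = Path(cache_dir)
    if not cache_dir.is_dir():
        return False
    shutil.rmtree(cache_dir)
    return True
```

After calling `clear_modules_cache()`, rerunning `load_metric("glue", "sst2")` should fetch and import the metric script again instead of reusing a possibly broken cached copy.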

## Environment info

- `datasets` version: 1.16.1

- Platform: Linux-4.15.0-161-generic-x86_64-with-glibc2.10

- Python version: 3.8.3

- PyArrow version: 6.0.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3422/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3422/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3419 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3419/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3419/comments | https://api.github.com/repos/huggingface/datasets/issues/3419/events | https://github.com/huggingface/datasets/issues/3419 | 1,077,350,974 | I_kwDODunzps5ANxI- | 3,419 | `.to_json` is extremely slow after `.select` | {

"login": "eladsegal",

"id": 13485709,

"node_id": "MDQ6VXNlcjEzNDg1NzA5",

"avatar_url": "https://avatars.githubusercontent.com/u/13485709?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/eladsegal",

"html_url": "https://github.com/eladsegal",

"followers_url": "https://api.github.com/users/eladsegal/followers",

"following_url": "https://api.github.com/users/eladsegal/following{/other_user}",

"gists_url": "https://api.github.com/users/eladsegal/gists{/gist_id}",

"starred_url": "https://api.github.com/users/eladsegal/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/eladsegal/subscriptions",

"organizations_url": "https://api.github.com/users/eladsegal/orgs",

"repos_url": "https://api.github.com/users/eladsegal/repos",

"events_url": "https://api.github.com/users/eladsegal/events{/privacy}",

"received_events_url": "https://api.github.com/users/eladsegal/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [] | null | [

"Hi! It's slower indeed because a dataset on which `select`/`shard`/`train_test_split`/`shuffle` has been called has to do additional steps to retrieve the data of the dataset table in the right order.\r\n\r\nIndeed, if you call `dataset.select([0, 5, 10])`, the underlying table of the dataset is not altered to keep the examples at index 0, 5, and 10. Instead, an indices mapping is added on top of the table that says that the first example is at index 0, the second at index 5 and the last one at index 10.\r\n\r\nTherefore accessing the examples of the dataset is slower because of the additional step that uses the indices mapping.\r\n\r\nThe step that takes the most time is to query the dataset table from a list of indices here:\r\n\r\nhttps://github.com/huggingface/datasets/blob/047dc756ed20fbf06e6bcaf910464aba0e20610a/src/datasets/formatting/formatting.py#L61-L63\r\n\r\nIn your case it can be made significantly faster by checking if the indices are contiguous. If they're contiguous, we could pass a Python `slice` or `range` instead of a list of integers to `_query_table`. This way `_query_table` will do only one lookup to get the queried batch instead of `batch_size` lookups.\r\n\r\nGiven that calling `select` with contiguous indices is a common use case, I'm in favor of implementing such an optimization :)\r\nLet me know what you think",

"Hi, thanks for the response!\r\nI still don't understand why it is so much slower than iterating and saving:\r\n```python\r\nfrom datasets import load_dataset\r\n\r\noriginal = load_dataset(\"squad\", split=\"train\")\r\noriginal.to_json(\"from_original.json\") # Takes 0 seconds\r\n\r\nselected_subset1 = original.select([i for i in range(len(original))])\r\nselected_subset1.to_json(\"from_select1.json\") # Takes 99 seconds\r\n\r\nselected_subset2 = original.select([i for i in range(int(len(original) / 2))])\r\nselected_subset2.to_json(\"from_select2.json\") # Takes 47 seconds\r\n\r\nselected_subset3 = original.select([i for i in range(len(original)) if i % 2 == 0])\r\nselected_subset3.to_json(\"from_select3.json\") # Takes 49 seconds\r\n\r\nimport json\r\nimport time\r\ndef fast_to_json(dataset, path):\r\n start = time.time()\r\n with open(path, mode=\"w\") as f:\r\n for example in dataset:\r\n f.write(json.dumps(example, separators=(',', ':')) + \"\\n\")\r\n end = time.time()\r\n print(f\"Saved {len(dataset)} examples to {path} in {end - start} seconds.\")\r\n\r\nfast_to_json(original, \"from_original_fast.json\")\r\nfast_to_json(selected_subset1, \"from_select1_fast.json\")\r\nfast_to_json(selected_subset2, \"from_select2_fast.json\")\r\nfast_to_json(selected_subset3, \"from_select3_fast.json\")\r\n```\r\n```\r\nSaved 87599 examples to from_original_fast.json in 8 seconds.\r\nSaved 87599 examples to from_select1_fast.json in 10 seconds.\r\nSaved 43799 examples to from_select2_fast.json in 6 seconds.\r\nSaved 43800 examples to from_select3_fast.json in 5 seconds.\r\n```",

"There are slight differences between what you're doing and what `to_json` is actually doing.\r\nIn particular `to_json` currently converts batches of rows (as an arrow table) to a pandas dataframe, and then to JSON Lines. From your benchmark it looks like it's faster if we don't use pandas.\r\n\r\nThanks for investigating, I think we can optimize `to_json` significantly thanks to your test.",

"Thanks for your observations, @eladsegal! I spent some time with this and tried different approaches. It turns out that https://github.com/huggingface/datasets/blob/bb13373637b1acc55f8a468a8927a56cf4732230/src/datasets/io/json.py#L100 is causing the problem when we use `to_json` after `select`. This happens when the `indices` parameter in `query_table` is not `None` (if it is `None`, `to_json` should work as expected).\r\n\r\nTo circumvent this problem, I found that instead of going Arrow Table -> Pandas -> JSON, we can go directly to JSON by using `to_pydict()`, which is a little slower than the current approach, but at least `select` works properly now. Let me know what you think, @lhoestq, @eladsegal!"

] | 1,639,186,591,000 | 1,639,816,971,000 | null | CONTRIBUTOR | null | ## Describe the bug

Saving a dataset to JSON with `to_json` is extremely slow after using `.select` on the original dataset.

## Steps to reproduce the bug

```python
from datasets import load_dataset

original = load_dataset("squad", split="train")
original.to_json("from_original.json") # Takes 0 seconds

selected_subset1 = original.select([i for i in range(len(original))])
selected_subset1.to_json("from_select1.json") # Takes 212 seconds

selected_subset2 = original.select([i for i in range(int(len(original) / 2))])
selected_subset2.to_json("from_select2.json") # Takes 90 seconds
```
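
The maintainer's reply above suggests two mitigations: detecting when the `select` indices are contiguous so the table can be read with one slice instead of one lookup per index, and writing JSON Lines straight from column data (the `to_pydict()` route) instead of going through pandas. The sketch below illustrates both ideas in plain Python on toy data. `as_contiguous_range` and `pydict_to_jsonl` are hypothetical helper names, not part of the `datasets` API; an actual fix would live in the library's internal query and JSON-writer code.

```python
import io
import json
from typing import Dict, List, Optional, Sequence, TextIO


def as_contiguous_range(indices: Sequence[int]) -> Optional[range]:
    """Hypothetical helper: return an equivalent range if `indices` are
    consecutive ascending ints, else None.

    A range can be served by a single slice of the underlying table
    instead of one lookup per index.
    """
    if len(indices) == 0:
        return None
    start = indices[0]
    for offset, value in enumerate(indices):
        # Contiguous means indices[i] == start + i at every position i.
        if value != start + offset:
            return None
    return range(start, start + len(indices))


def pydict_to_jsonl(batch: Dict[str, List], fh: TextIO) -> int:
    """Hypothetical helper: write a column-oriented batch (the dict-of-lists
    shape that pyarrow's Table.to_pydict() returns) as JSON Lines, without
    pandas. Returns the number of rows written."""
    columns = list(batch)
    num_rows = len(batch[columns[0]]) if columns else 0
    for i in range(num_rows):
        # Rebuild one row as {column_name: value} and emit it compactly.
        row = {name: batch[name][i] for name in columns}
        fh.write(json.dumps(row, separators=(",", ":")) + "\n")
    return num_rows


# Usage on toy data (no `datasets` install needed):
print(as_contiguous_range([0, 1, 2, 3]))  # range(0, 4)
print(as_contiguous_range([0, 5, 10]))    # None
buf = io.StringIO()
pydict_to_jsonl({"id": [1, 2], "text": ["a", "b"]}, buf)
print(buf.getvalue(), end="")
```

Non-contiguous selections such as `[0, 5, 10]` would still fall back to per-index lookups, so the range check only helps contiguous cases like `selected_subset1` and `selected_subset2` in the reproduction.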

## Environment info


- `datasets` version: master (https://github.com/huggingface/datasets/commit/6090f3cfb5c819f441dd4a4bb635e037c875b044)

- Platform: Linux-4.4.0-19041-Microsoft-x86_64-with-glibc2.27

- Python version: 3.9.7

- PyArrow version: 6.0.0

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3419/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3419/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3416 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3416/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3416/comments | https://api.github.com/repos/huggingface/datasets/issues/3416/events | https://github.com/huggingface/datasets/issues/3416 | 1,076,868,771 | I_kwDODunzps5AL7aj | 3,416 | disaster_response_messages unavailable | {

"login": "sacdallago",

"id": 6240943,

"node_id": "MDQ6VXNlcjYyNDA5NDM=",

"avatar_url": "https://avatars.githubusercontent.com/u/6240943?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/sacdallago",

"html_url": "https://github.com/sacdallago",

"followers_url": "https://api.github.com/users/sacdallago/followers",

"following_url": "https://api.github.com/users/sacdallago/following{/other_user}",

"gists_url": "https://api.github.com/users/sacdallago/gists{/gist_id}",

"starred_url": "https://api.github.com/users/sacdallago/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sacdallago/subscriptions",

"organizations_url": "https://api.github.com/users/sacdallago/orgs",

"repos_url": "https://api.github.com/users/sacdallago/repos",

"events_url": "https://api.github.com/users/sacdallago/events{/privacy}",

"received_events_url": "https://api.github.com/users/sacdallago/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | closed | false | null | [] | null | [

"Hi, thanks for reporting! This is a duplicate of https://github.com/huggingface/datasets/issues/3240. We are working on a fix.\r\n\r\n"

] | 1,639,144,157,000 | 1,639,492,709,000 | null | NONE | null | ## Dataset viewer issue for '*disaster_response_messages*'

**Link:** https://huggingface.co/datasets/disaster_response_messages

Dataset unavailable. Link dead: https://datasets.appen.com/appen_datasets/disaster_response_data/disaster_response_messages_training.csv

Am I the one who added this dataset? No

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3416/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3416/timeline | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3415 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3415/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3415/comments | https://api.github.com/repos/huggingface/datasets/issues/3415/events | https://github.com/huggingface/datasets/issues/3415 | 1,076,472,534 | I_kwDODunzps5AKarW | 3,415 | Non-deterministic tests: CI tests randomly fail | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,