VAGO solutions SauerkrautLM-Phi-3-medium

Introducing SauerkrautLM-Phi-3-medium – our Sauerkraut version of the powerful unsloth/Phi-3-medium-4k-instruct!

- Aligned with DPO using Spectrum QLoRA (by Eric Hartford, Lucas Atkins, Fernando Fernandes Neto and David Golchinfar) targeting 50% of the layers.

Table of Contents

- Overview of all SauerkrautLM-Phi-3-medium

- Model Details

- Evaluation

- Disclaimer

- Contact

- Collaborations

- Acknowledgement

All SauerkrautLM-Phi-3-medium

| Model | HF | EXL2 | GGUF | AWQ |
|---|---|---|---|---|
| SauerkrautLM-Phi-3-medium | Link | coming soon | coming soon | coming soon |

Model Details

SauerkrautLM-Phi-3-medium

- Model Type: SauerkrautLM-Phi-3-medium is a fine-tuned model based on unsloth/Phi-3-medium-4k-instruct

- Language(s): German, English

- License: MIT

- Contact: VAGO solutions

Training procedure:

- We trained this model with Spectrum QLoRA DPO fine-tuning for 1 epoch on 70k samples, targeting 50% of the layers with a high learning rate of 5e-04. This relatively high learning rate was feasible because only selected layers were trained; had we applied this rate to all layers, the gradients would have exploded.
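The idea of training only a fraction of the layers can be illustrated with a minimal sketch. It assumes each layer has already been assigned a score (Spectrum ranks layers by a signal-to-noise ratio); the function name, layer names, and scores below are hypothetical and are not taken from the Spectrum code or from our training run.

```python
def select_target_layers(score_by_layer, fraction=0.5):
    """Return the names of the top `fraction` of layers, ranked by score."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    # Highest score first
    ranked = sorted(score_by_layer, key=score_by_layer.get, reverse=True)
    keep = max(1, int(len(ranked) * fraction))
    return set(ranked[:keep])


# Hypothetical per-layer scores, for illustration only
example_scores = {
    "layers.0.mlp": 0.91,
    "layers.1.mlp": 0.12,
    "layers.2.mlp": 0.57,
    "layers.3.mlp": 0.33,
}

targeted = select_target_layers(example_scores, fraction=0.5)
frozen = set(example_scores) - targeted  # these layers would stay untrained
```

In a real setup, the layers in `targeted` would receive gradient updates and the ones in `frozen` would keep their pretrained weights.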

Fine-Tuning Details

- Epochs: 1

- Data Size: 70,000 samples

- Targeted Layers: 50%

- Learning Rate: 5e-04

- Warm-up Ratio: 0.03

The strategy of targeting only half of the layers also enabled us to use a very low warm-up ratio of 0.03, contributing to the overall stability of the fine-tuning process.
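To make the warm-up ratio concrete, here is a rough sketch of how a ratio of 0.03 translates into optimizer steps. The effective batch size of 32 is an assumption chosen purely for illustration (the batch size is not stated here), and the linear ramp is one common warm-up shape, not necessarily the exact schedule we used.

```python
import math


def warmup_steps(num_samples, effective_batch_size, epochs=1, warmup_ratio=0.03):
    """Return (total optimizer steps, warm-up steps) for a training run."""
    total_steps = math.ceil(num_samples / effective_batch_size) * epochs
    return total_steps, math.ceil(total_steps * warmup_ratio)


def lr_at_step(step, warmup, peak_lr=5e-4):
    """Linear ramp to peak_lr during warm-up; any later decay is not modeled."""
    if warmup <= 0 or step >= warmup:
        return peak_lr
    return peak_lr * (step + 1) / warmup


# effective_batch_size=32 is a hypothetical value, not from the training run
total, warmup = warmup_steps(70_000, effective_batch_size=32)
print(total, warmup)
```

Under this assumed batch size, only a small share of the run is spent ramping up before the full learning rate of 5e-04 applies.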

Results

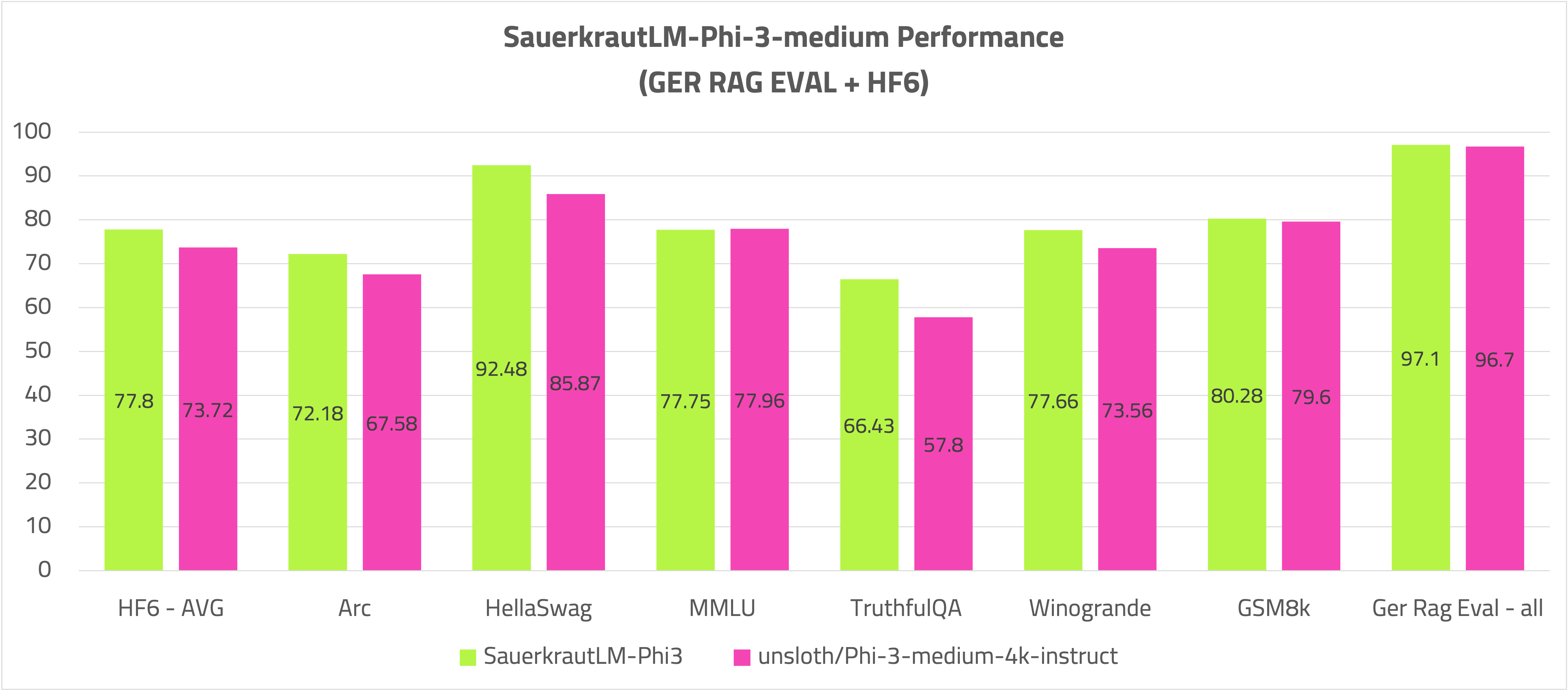

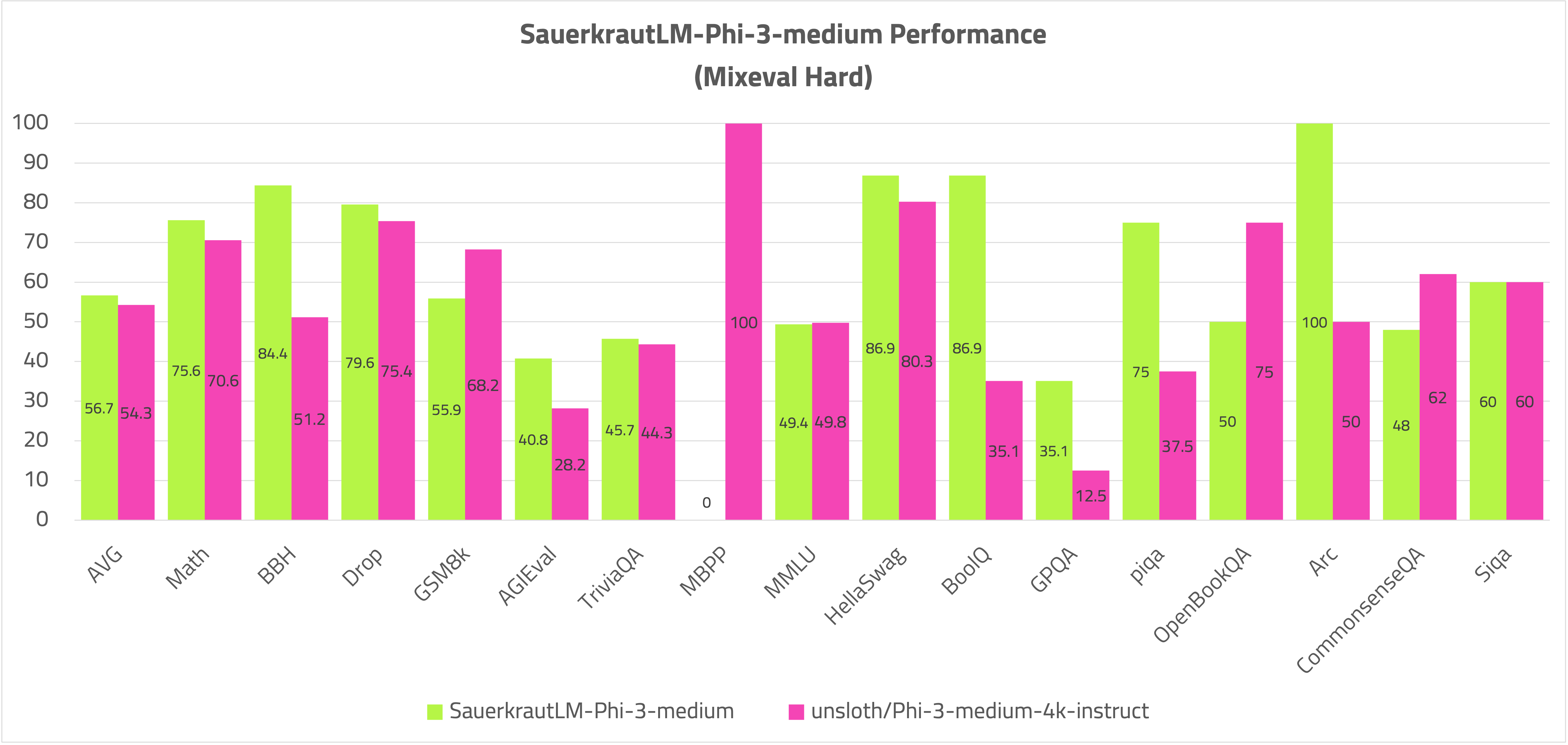

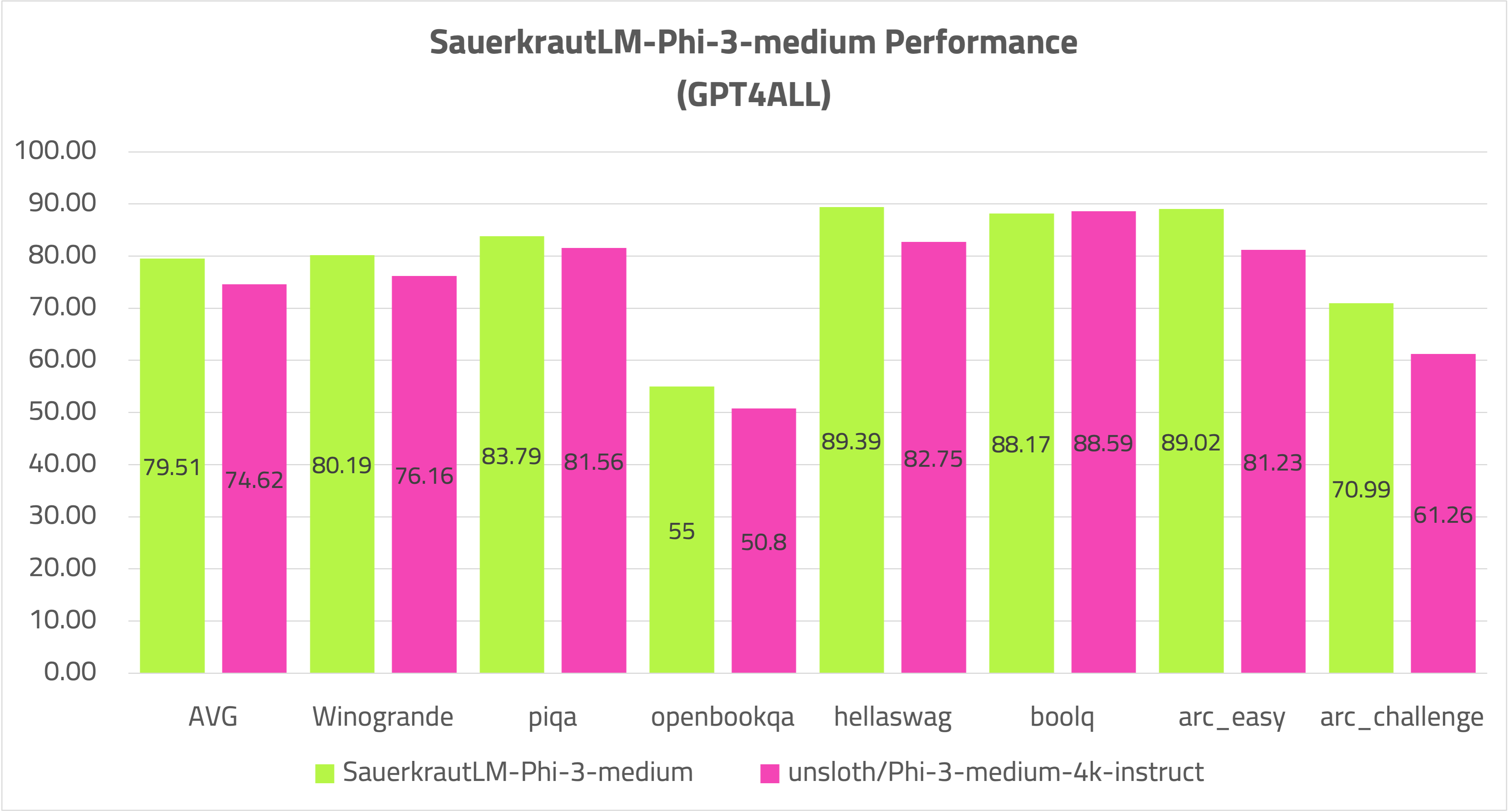

This fine-tuning approach resulted in a noticeable improvement in the model's reasoning capabilities. We evaluated the model on a variety of benchmark suites, including the newly introduced MixEval, which shows a 96% correlation with Chatbot Arena. MixEval uses regularly updated test data, providing a reliable benchmark for model performance.

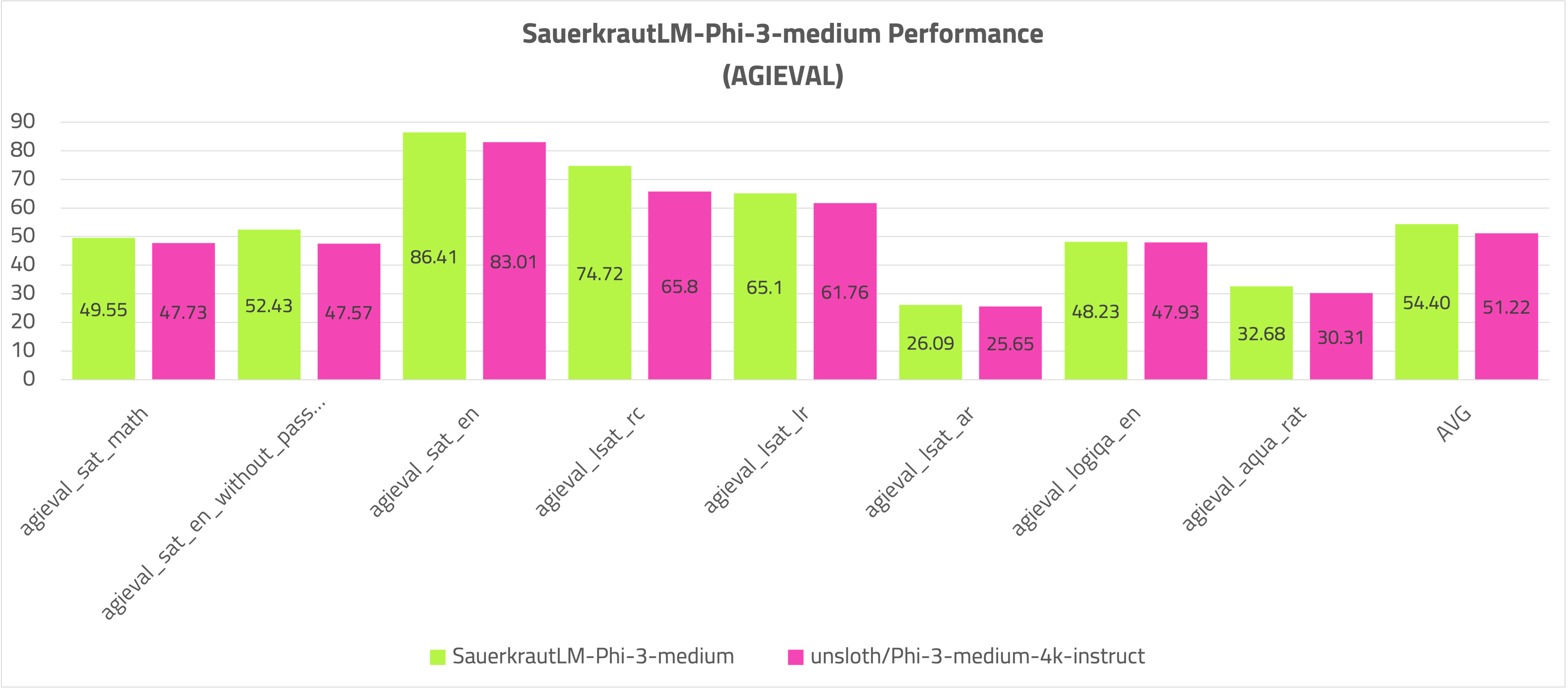

Evaluation

Open LLM Leaderboard and German RAG:

AGIEval

Disclaimer

We must inform users that despite our best efforts in data cleansing, the possibility of uncensored content slipping through cannot be entirely ruled out, and we cannot guarantee consistently appropriate behavior. If you encounter any issues or come across inappropriate content, we kindly request that you inform us through the contact information provided. Additionally, it is essential to understand that the licensing of these models does not constitute legal advice. We are not responsible for the actions of third parties who use our models.

Contact

If you are interested in customized LLMs for business applications, please get in contact with us via our websites. We are also grateful for your feedback and suggestions.

Collaborations

We are also actively seeking support and investment for our startup, VAGO solutions, where we continuously advance the development of robust language models designed to address a diverse range of purposes and requirements. If the prospect of collaboratively navigating future challenges excites you, we warmly invite you to reach out to us at VAGO solutions.

Acknowledgement

Many thanks to unsloth and Microsoft for providing such a valuable model to the open-source community.
