Qwen2.5-7B-Gutenberg-KTO

This model is fine-tuned on Gutenberg datasets using the KTO strategy. This is my first time using KTO, and I'm not sure how the model actually performs.

Unlike large companies that remove accessories such as chargers and cables from their packaging, I have achieved real environmental protection by actually reducing energy consumption, rather than shifting costs to consumers.

Check out the GGUF version here: Orion-zhen/Qwen2.5-7B-Gutenberg-KTO-Q6_K-GGUF

Details

Platform

I randomly grabbed some rubbish from a second-hand market and built a PC

I carefully selected various pieces of dedicated hardware and built an incomparable home server, which I named the Great Server:

- CPU: Intel Core i3-4160

- Memory: 8 GB DDR3, single channel

- GPU: Tesla P4, 75 W TDP, boasting eco-friendly energy consumption

- Disk: 1TB M.2 NVME, PCIe 4.0

Training

To practice eco-friendly training, I used several methods, including Adam-mini, QLoRA and Unsloth, to minimize VRAM and energy usage and to speed up training.
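As a rough illustration of why 4-bit quantization matters on this hardware, the sketch below estimates the memory needed for the base model's weights alone. The parameter count (about 7.6 billion) and the 8 GB VRAM budget of the Tesla P4 are assumptions not stated in this card, and the estimate ignores activations, LoRA adapters, optimizer state and quantization overhead, so real usage is higher.

```python
# Back-of-the-envelope weight memory estimate (weights only).
# ASSUMPTION: ~7.6e9 parameters for the base model; not stated in this card.
ASSUMED_PARAMS = 7.6e9
# ASSUMPTION: the Tesla P4 has 8 GB of VRAM; not stated in this card.
ASSUMED_VRAM_GIB = 8.0


def weight_memory_gib(num_params: float, bits_per_param: float) -> float:
    """Return the size of the raw weights in GiB at the given precision."""
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / 1024**3


fp16_gib = weight_memory_gib(ASSUMED_PARAMS, 16)  # half-precision weights
nf4_gib = weight_memory_gib(ASSUMED_PARAMS, 4)    # 4-bit weights, as in QLoRA

print(f"fp16 weights:  {fp16_gib:.1f} GiB")
print(f"4-bit weights: {nf4_gib:.1f} GiB")
print(f"4-bit weights fit in VRAM: {nf4_gib < ASSUMED_VRAM_GIB}")
```

Under these assumptions the half-precision weights alone would not fit on the card, while the 4-bit weights leave headroom for the adapters and activations.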

- dataset: Orion-zhen/kto-gutenberg

- epoch: 2

- gradient accumulation: 8

- batch size: 1

- KTO pref beta: 0.1
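To make the settings above more concrete, here is a minimal sketch of what they imply. It is not the training code used for this model. It assumes a single GPU (so the effective batch size is batch size times gradient accumulation) and uses the per-example KTO loss in its simplest form, with the desirable and undesirable weights both set to 1. The names `TrainSettings` and `kto_loss` are made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class TrainSettings:
    """Hyperparameters listed in this card (hypothetical container)."""
    epochs: int = 2
    gradient_accumulation: int = 8
    batch_size: int = 1
    beta: float = 0.1

    @property
    def effective_batch_size(self) -> int:
        # ASSUMPTION: one GPU, so no extra data-parallel factor.
        return self.batch_size * self.gradient_accumulation


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def kto_loss(log_ratio: float, reference_point: float,
             desirable: bool, beta: float = 0.1) -> float:
    """Per-example KTO loss with both class weights assumed to be 1.

    log_ratio: log pi_theta(y|x) - log pi_ref(y|x) for the completion.
    reference_point: the KL-based baseline the reward is compared against.
    """
    margin = log_ratio - reference_point
    if not desirable:
        # Undesirable completions are rewarded for falling below the baseline.
        margin = -margin
    return 1.0 - sigmoid(beta * margin)


settings = TrainSettings()
print("effective batch size:", settings.effective_batch_size)
print("desirable, log_ratio=5:  ", round(kto_loss(5.0, 0.0, True, settings.beta), 4))
print("undesirable, log_ratio=5:", round(kto_loss(5.0, 0.0, False, settings.beta), 4))
```

A small beta such as 0.1 flattens the sigmoid, so each example moves the policy only gently away from the reference model.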

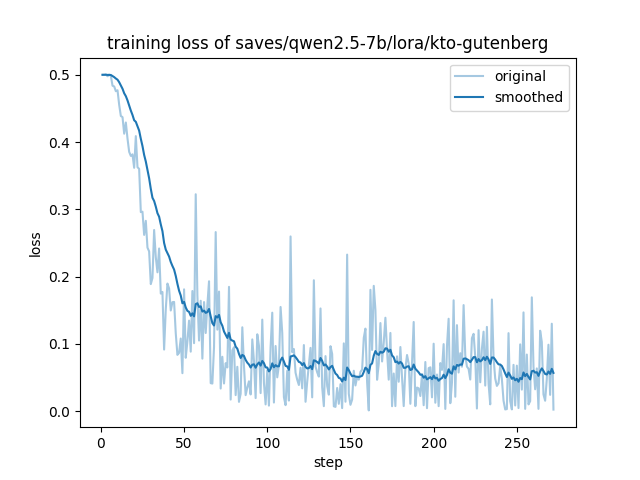

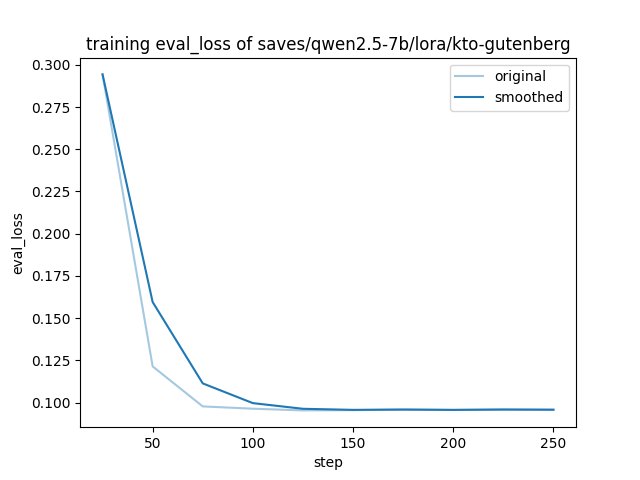

Train log
