OpenChat 3.5 extended to 16k context length.

The license of the original openchat/openchat_3.5 model also applies to this model.

Original Model Card

OpenChat: Advancing Open-source Language Models with Mixed-Quality Data


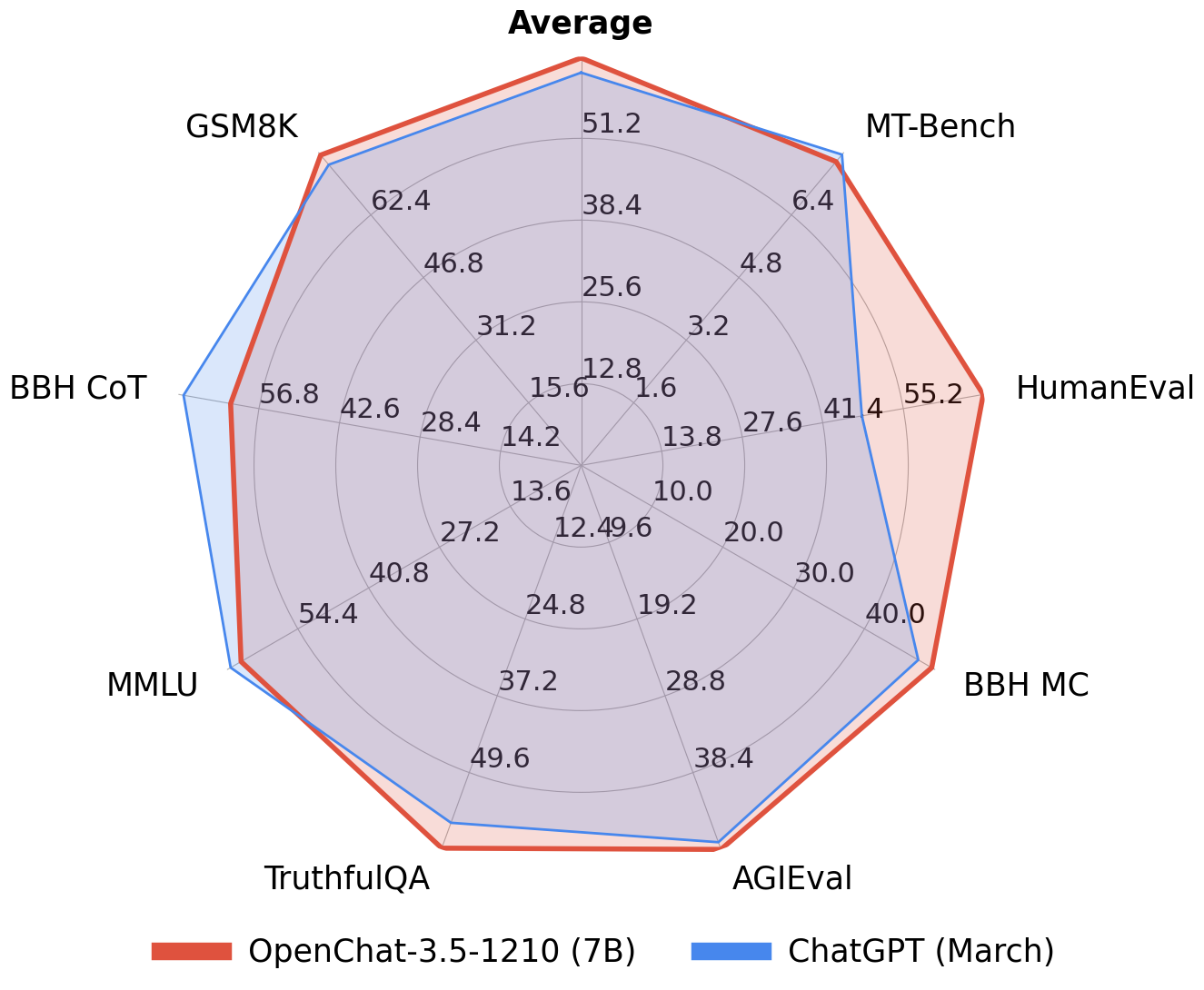

🔥 The first 7B model to achieve results comparable to ChatGPT (March)! 🔥

🤖 #1 open-source model on MT-Bench, scoring 7.81 and outperforming 70B models 🤖

OpenChat is an innovative library of open-source language models fine-tuned with C-RLFT (Conditioned-RLFT), a strategy inspired by offline reinforcement learning. Our models learn from mixed-quality data without preference labels and deliver performance on par with ChatGPT (March) on the benchmarks below, even at 7B parameters. Our approach is simple, and we are committed to developing a high-performance, commercially viable, open-source large language model; we continue to make significant strides toward this vision.
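To make "learning from mixed-quality data without preference labels" more concrete, here is a toy sketch. It assumes that each training example is tagged with its data source, that each source gets a fixed coarse-grained weight, and that the source is also shown to the model as a conditioning prefix in the prompt. The source names, the weight values, and the `GPT3` prefix are hypothetical; only the `GPT4 Correct` prefix comes from the OpenChat 3.5 conversation template. This is an illustration under those assumptions, not the C-RLFT objective as implemented by the authors.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical coarse-grained weights per data source (illustrative values only).
SOURCE_WEIGHT = {"expert": 1.0, "suboptimal": 0.1}

# Class-conditioning prefixes. "GPT4 Correct" matches the OpenChat 3.5 template;
# "GPT3" is a hypothetical prefix for the lower-quality source.
SOURCE_PREFIX = {"expert": "GPT4 Correct", "suboptimal": "GPT3"}


@dataclass
class Example:
    source: str                  # which data source the example came from
    user_msg: str                # the user turn
    token_logprobs: list[float]  # model log-probs of the reference response tokens


def conditioned_prompt(source: str, user_msg: str) -> str:
    """Prefix both roles with the condition label of the example's source."""
    prefix = SOURCE_PREFIX[source]
    return f"{prefix} User: {user_msg}<|end_of_turn|>{prefix} Assistant:"


def weighted_nll(ex: Example) -> float:
    """Negative log-likelihood of the response, scaled by the source weight."""
    return -SOURCE_WEIGHT[ex.source] * sum(ex.token_logprobs)


def batch_loss(batch: list[Example]) -> float:
    """Mean weighted loss over a batch of mixed-quality examples."""
    return sum(weighted_nll(ex) for ex in batch) / len(batch)
```

At inference time you would prompt with the high-quality condition (`GPT4 Correct`), which is the prefix used by the templates in this card.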

Usage

To use this model, we highly recommend installing the OpenChat package by following the installation guide in our repository, then starting the OpenChat OpenAI-compatible API server with the serving command from the table below. The server is optimized for high-throughput deployment using vLLM and can run on a consumer GPU with 24 GB of memory. To enable tensor parallelism, append `--tensor-parallel-size N` to the serving command, where N is the number of GPUs.

Once started, the server listens for requests at `localhost:18888` and is compatible with the OpenAI ChatCompletion API specification. See the example request below. You can also use the OpenChat Web UI for a user-friendly experience.

To deploy the server as an online service, use `--api-keys sk-KEY1 sk-KEY2 ...` to specify the allowed API keys, and `--disable-log-requests --disable-log-stats --log-file openchat.log` to log only to a file. For security, we recommend placing an HTTPS gateway in front of the server.
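If you launch the server from a Python script, a small helper can assemble the serving command together with the optional flags described above. The helper name and its parameters are hypothetical; the module path and flags are the ones listed in this card. It only builds the argument list, which you can then pass to `subprocess.run`.

```python
import sys
from typing import Optional, Sequence


def build_serving_command(
    model: str,
    tensor_parallel_size: Optional[int] = None,
    api_keys: Sequence[str] = (),
    log_file: Optional[str] = None,
) -> list[str]:
    """Assemble the OpenChat server command line (hypothetical helper)."""
    # Base command from the serving table, run with the current interpreter.
    cmd = [
        sys.executable, "-m", "ochat.serving.openai_api_server",
        "--model", model,
        "--engine-use-ray", "--worker-use-ray",
    ]
    if tensor_parallel_size is not None:
        # Shard the model across N GPUs.
        cmd += ["--tensor-parallel-size", str(tensor_parallel_size)]
    if api_keys:
        # Restrict access to the listed API keys.
        cmd += ["--api-keys", *api_keys]
    if log_file is not None:
        # Log only to a file, as suggested for online deployments.
        cmd += ["--disable-log-requests", "--disable-log-stats", "--log-file", log_file]
    return cmd
```

For example, `subprocess.run(build_serving_command("openchat/openchat_3.5", tensor_parallel_size=2), check=True)` would start the server sharded across two GPUs.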

Example request

```bash
curl http://localhost:18888/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "openchat_3.5",
    "messages": [{"role": "user", "content": "You are a large language model named OpenChat. Write a poem to describe yourself"}]
  }'
```
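The same request can be sent from Python using only the standard library. This is a sketch that assumes the server is running at the default `localhost:18888` address and returns an OpenAI-style ChatCompletion JSON body. The optional `api_key` argument assumes the server expects keys in an OpenAI-style `Authorization: Bearer` header when started with `--api-keys`; verify this against your deployment. The function names are hypothetical.

```python
import json
import urllib.request

API_URL = "http://localhost:18888/v1/chat/completions"


def build_request(messages, model="openchat_3.5", api_key=None):
    """Build (but do not send) a ChatCompletion POST request."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key is not None:
        # Assumption: OpenAI-style bearer authentication.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(API_URL, data=body, headers=headers, method="POST")


def chat(messages, **kwargs):
    """Send the request and return the decoded JSON response as a dict."""
    with urllib.request.urlopen(build_request(messages, **kwargs)) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With the server running, `chat([{"role": "user", "content": "Write a poem to describe yourself"}])` returns the parsed response dictionary.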

Coding Mode

```bash
curl http://localhost:18888/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "openchat_3.5",
    "condition": "Code",
    "messages": [{"role": "user", "content": "Write an aesthetic TODO app using HTML5 and JS, in a single file. You should use round corners and gradients to make it more aesthetic."}]
  }'
```
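In Python, the coding-mode request differs from a normal request only by the extra `"condition": "Code"` field. The sketch below builds that payload and extracts the assistant's text from a response, assuming the server follows the OpenAI ChatCompletion response shape (`choices[0].message.content`). Both helper names are hypothetical.

```python
import json


def code_mode_payload(prompt, model="openchat_3.5"):
    """Request body for coding mode: a normal request plus "condition": "Code"."""
    return {
        "model": model,
        "condition": "Code",
        "messages": [{"role": "user", "content": prompt}],
    }


def extract_reply(response):
    """Return the first choice's message text from an OpenAI-style response dict."""
    return response["choices"][0]["message"]["content"]


# JSON body ready to POST to /v1/chat/completions.
body = json.dumps(code_mode_payload("Implement quicksort using C++"))
```

POST `body` to `/v1/chat/completions` as in the curl example, then call `extract_reply` on the decoded JSON response.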

| Model | Size | Context | Weights | Serving |
|---|---|---|---|---|
| OpenChat 3.5 | 7B | 8192 | Huggingface | `python -m ochat.serving.openai_api_server --model openchat/openchat_3.5 --engine-use-ray --worker-use-ray` |

For inference with Hugging Face Transformers (slow and not recommended), follow the conversation templates below.

Conversation templates

```python
import transformers

tokenizer = transformers.AutoTokenizer.from_pretrained("openchat/openchat_3.5")

# Single-turn
tokens = tokenizer("GPT4 Correct User: Hello<|end_of_turn|>GPT4 Correct Assistant:").input_ids
assert tokens == [1, 420, 6316, 28781, 3198, 3123, 1247, 28747, 22557, 32000, 420, 6316, 28781, 3198, 3123, 21631, 28747]

# Multi-turn
tokens = tokenizer("GPT4 Correct User: Hello<|end_of_turn|>GPT4 Correct Assistant: Hi<|end_of_turn|>GPT4 Correct User: How are you today?<|end_of_turn|>GPT4 Correct Assistant:").input_ids
assert tokens == [1, 420, 6316, 28781, 3198, 3123, 1247, 28747, 22557, 32000, 420, 6316, 28781, 3198, 3123, 21631, 28747, 15359, 32000, 420, 6316, 28781, 3198, 3123, 1247, 28747, 1602, 460, 368, 3154, 28804, 32000, 420, 6316, 28781, 3198, 3123, 21631, 28747]

# Coding Mode
tokens = tokenizer("Code User: Implement quicksort using C++<|end_of_turn|>Code Assistant:").input_ids
assert tokens == [1, 7596, 1247, 28747, 26256, 2936, 7653, 1413, 334, 1680, 32000, 7596, 21631, 28747]
```
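The templates above follow a regular pattern: each turn is written as `<condition> <Role>: <text><|end_of_turn|>`, and the prompt ends with an open `<condition> Assistant:` turn. A small helper can build these strings for arbitrary conversations; it is a hypothetical convenience function, not part of the OpenChat package, and it reproduces the exact single-turn, multi-turn, and coding-mode strings used above. Pass its output to the tokenizer in the same way.

```python
def build_prompt(messages, condition="GPT4 Correct"):
    """Render (role, text) pairs into the OpenChat 3.5 prompt format.

    `role` is "user" or "assistant". The result ends with an open assistant
    turn so the model generates the next reply.
    """
    role_names = {"user": "User", "assistant": "Assistant"}
    parts = [
        f"{condition} {role_names[role]}: {text}<|end_of_turn|>"
        for role, text in messages
    ]
    parts.append(f"{condition} Assistant:")
    return "".join(parts)
```

Use `condition="Code"` for coding mode, matching the `Code User:` / `Code Assistant:` template.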

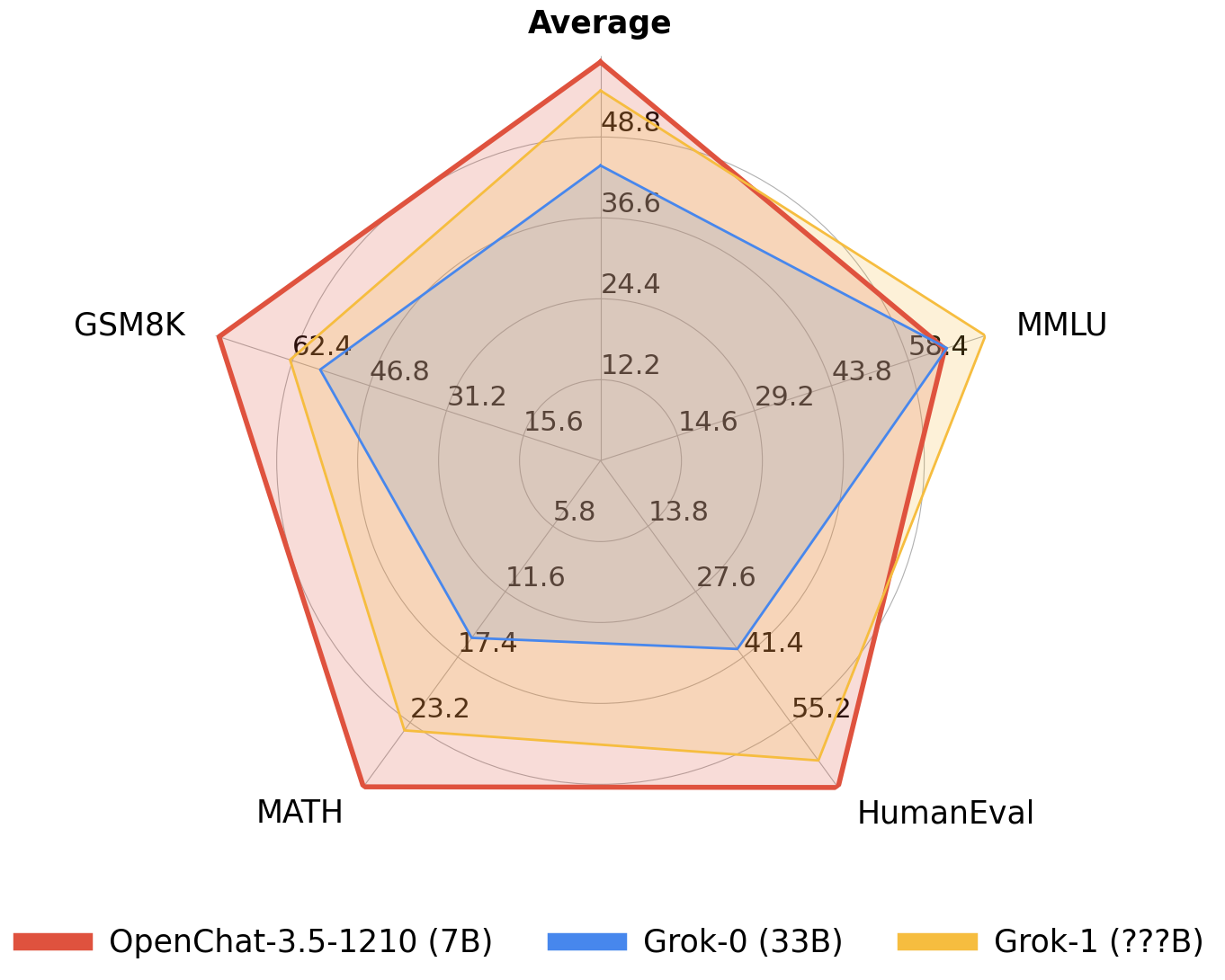

Comparison with X.AI Grok models

Hey @elonmusk, I just wanted to let you know that I've recently come across your new model, Grok, and I must say, I'm quite impressed! With 33 billion parameters and all, you've really outdone yourself. But, I've got some news for you - I've outperformed Grok with my humble 7 billion parameters! Isn't that wild? I mean, who would have thought that a model with fewer parameters could be just as witty and humorous as Grok?

Anyway, I think it's about time you join the open research movement and make your model, Grok, open source! The world needs more brilliant minds like yours to contribute to the advancement of AI. Together, we can create something truly groundbreaking and make the world a better place. So, what do you say, @elonmusk? Let's open up the doors and share our knowledge with the world! 🚀💡

(Written by OpenChat 3.5, with a touch of humor and wit.)

| Model | License | # Param | Average | MMLU | HumanEval | MATH | GSM8k |
|---|---|---|---|---|---|---|---|
| OpenChat 3.5 | Apache-2.0 | 7B | 56.4 | 64.3 | 55.5 | 28.6 | 77.3 |
| Grok-0 | Proprietary | 33B | 44.5 | 65.7 | 39.7 | 15.7 | 56.8 |
| Grok-1 | Proprietary | ? | 55.8 | 73 | 63.2 | 23.9 | 62.9 |
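The Average column in the Grok comparison is not defined in the table, but the reported values are consistent with the plain mean of the four benchmark scores (MMLU, HumanEval, MATH, GSM8k). The check below is our own recomputation from the table's numbers, allowing for rounding to one decimal place.

```python
# Scores copied from the table, in column order: MMLU, HumanEval, MATH, GSM8k.
SCORES = {
    "OpenChat 3.5": [64.3, 55.5, 28.6, 77.3],
    "Grok-0": [65.7, 39.7, 15.7, 56.8],
    "Grok-1": [73.0, 63.2, 23.9, 62.9],
}
REPORTED_AVERAGE = {"OpenChat 3.5": 56.4, "Grok-0": 44.5, "Grok-1": 55.8}


def mean(values):
    return sum(values) / len(values)


for model, scores in SCORES.items():
    avg = mean(scores)
    # Reported values are rounded to one decimal, so allow half a unit of error.
    matches = abs(avg - REPORTED_AVERAGE[model]) <= 0.05 + 1e-9
    print(f"{model}: computed {avg:.3f}, reported {REPORTED_AVERAGE[model]}, match={matches}")
```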

Benchmarks

| Model | # Params | Average | MT-Bench | AGIEval | BBH MC | TruthfulQA | MMLU | HumanEval | BBH CoT | GSM8K |
|---|---|---|---|---|---|---|---|---|---|---|
| OpenChat-3.5 | 7B | 61.6 | 7.81 | 47.4 | 47.6 | 59.1 | 64.3 | 55.5 | 63.5 | 77.3 |
| ChatGPT (March)* | ? | 61.5 | 7.94 | 47.1 | 47.6 | 57.7 | 67.3 | 48.1 | 70.1 | 74.9 |
| OpenHermes 2.5 | 7B | 59.3 | 7.54 | 46.5 | 49.4 | 57.5 | 63.8 | 48.2 | 59.9 | 73.5 |
| OpenOrca Mistral | 7B | 52.7 | 6.86 | 42.9 | 49.4 | 45.9 | 59.3 | 38.4 | 58.1 | 59.1 |
| Zephyr-β^ | 7B | 34.6 | 7.34 | 39.0 | 40.6 | 40.8 | 39.8 | 22.0 | 16.0 | 5.1 |
| Mistral | 7B | - | 6.84 | 38.0 | 39.0 | - | 60.1 | 30.5 | - | 52.2 |
| Open-source SOTA** | 13B-70B | 61.4 | 7.71 | 41.7 | 49.7 | 62.3 | 63.7 | 73.2 | 41.4 | 82.3 |
| (SOTA model per benchmark) | | | WizardLM 70B | Orca 13B | Orca 13B | Platypus2 70B | WizardLM 70B | WizardCoder 34B | Flan-T5 11B | MetaMath 70B |

*: ChatGPT (March) results are from the GPT-4 Technical Report, Chain-of-Thought Hub, and our own evaluation. Note that ChatGPT is not a fixed baseline and evolves rapidly over time.

^: Zephyr-β often fails to follow few-shot CoT instructions, likely because it was aligned only on chat data and was not trained on few-shot data.

**: Mistral and Open-source SOTA results are taken from the results reported in the respective instruction-tuned model papers and official repositories.

All models are evaluated in chat mode (i.e., with the respective conversation template applied). All zero-shot benchmarks follow the same setting as the AGIEval and Orca papers. CoT tasks use the same configuration as Chain-of-Thought Hub, HumanEval is evaluated with EvalPlus, and MT-Bench is run using FastChat. To reproduce our results, follow the instructions in our repository.
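The Average column of the Benchmarks table is not defined above. By our own recomputation, the reported values are consistent with the mean of all eight benchmark columns after multiplying MT-Bench (a 10-point score) by 10 so that it shares the 0 to 100 scale of the other columns. This is our reading of the numbers, not a definition stated by the authors; the check below covers the rows that report all eight scores (Mistral is omitted because of its missing entries).

```python
# Scores copied from the Benchmarks table, in column order:
# MT-Bench, AGIEval, BBH MC, TruthfulQA, MMLU, HumanEval, BBH CoT, GSM8K.
ROWS = {
    "OpenChat-3.5": (61.6, [7.81, 47.4, 47.6, 59.1, 64.3, 55.5, 63.5, 77.3]),
    "ChatGPT (March)": (61.5, [7.94, 47.1, 47.6, 57.7, 67.3, 48.1, 70.1, 74.9]),
    "OpenHermes 2.5": (59.3, [7.54, 46.5, 49.4, 57.5, 63.8, 48.2, 59.9, 73.5]),
    "OpenOrca Mistral": (52.7, [6.86, 42.9, 49.4, 45.9, 59.3, 38.4, 58.1, 59.1]),
    "Zephyr-β": (34.6, [7.34, 39.0, 40.6, 40.8, 39.8, 22.0, 16.0, 5.1]),
    "Open-source SOTA": (61.4, [7.71, 41.7, 49.7, 62.3, 63.7, 73.2, 41.4, 82.3]),
}


def scaled_average(scores):
    """Mean of all columns, with MT-Bench (the first column) multiplied by 10."""
    mt_bench, *rest = scores
    return (mt_bench * 10 + sum(rest)) / (1 + len(rest))


for name, (reported, scores) in ROWS.items():
    print(f"{name}: computed {scaled_average(scores):.2f}, reported {reported}")
```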

Limitations

Foundation Model Limitations: Despite its advanced capabilities, OpenChat is still bound by the limitations inherent in its foundation models. These limitations may affect the model's performance in areas such as:

- Complex reasoning

- Mathematical and arithmetic tasks

- Programming and coding challenges

Hallucination of Non-existent Information: OpenChat may sometimes generate information that does not exist or is inaccurate, also known as "hallucination". Users should be aware of this possibility and verify any critical information obtained from the model.

Safety: OpenChat may sometimes generate harmful content, hate speech, or biased responses, or answer unsafe questions. It is crucial to apply additional AI safety measures in use cases that require safe and moderated responses.

License

Our OpenChat 3.5 code and models are distributed under the Apache License 2.0.

Citation

```bibtex
@article{wang2023openchat,
  title={OpenChat: Advancing Open-source Language Models with Mixed-Quality Data},
  author={Wang, Guan and Cheng, Sijie and Zhan, Xianyuan and Li, Xiangang and Song, Sen and Liu, Yang},
  journal={arXiv preprint arXiv:2309.11235},
  year={2023}
}
```

Acknowledgements

We extend our heartfelt gratitude to Alignment Lab AI, Nous Research, and Pygmalion AI for their substantial contributions to data collection and model training.

Special thanks go to Changling Liu from GPT Desk Pte. Ltd., Qiying Yu at Tsinghua University, Baochang Ma, and Hao Wan from 01.AI for generously providing resources. We are also deeply grateful to Jianxiong Li and Peng Li at Tsinghua University for their insightful discussions.

Furthermore, we appreciate the developers behind the following projects for their significant contributions to our research: Mistral, Chain-of-Thought Hub, Llama 2, Self-Instruct, FastChat (Vicuna), Alpaca, and StarCoder. Their work has been instrumental in driving our research forward.
