LAION LeoLM: Linguistically Enhanced Open Language Model

Meet LeoLM-Mistral, the first open and commercially available German Foundation Language Model built on Mistral 7B.

Our models extend the capabilities of Llama-2 and Mistral into German through continued pretraining on a large corpus of German-language and mostly locality-specific text.

Thanks to a compute grant on HessianAI's new supercomputer 42, we release three foundation models trained with an 8k context length:

- LeoLM/leo-mistral-hessianai-7b under Apache 2.0

- LeoLM/leo-hessianai-7b and LeoLM/leo-hessianai-13b under the Llama-2 community license (70b also coming soon! 👀)

With this release, we hope to bring a new wave of opportunities to German open-source and commercial LLM research and accelerate adoption.

Read our blog post or our paper (preprint coming soon) for more details!

A project by Björn Plüster and Christoph Schuhmann in collaboration with LAION and HessianAI.

Model Details

- Finetuned from: mistralai/Mistral-7B-v0.1

- Model type: Causal decoder-only transformer language model

- Language: English and German

- License: Apache 2.0

- Contact: LAION Discord or Björn Plüster

Use in 🤗Transformers

First, install the direct dependencies:

```bash
pip install transformers torch accelerate
```

For faster inference with FlashAttention-2, also install these optional dependencies:

```bash
pip install packaging ninja
pip install flash-attn
```
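Because FlashAttention-2 is optional, a loading script may want to request it only when the package is actually installed. The sketch below assumes transformers 4.36 or later, where `from_pretrained` accepts `attn_implementation="flash_attention_2"`. The helper name `attention_kwargs` is hypothetical, and it only checks that the `flash_attn` module can be found, not that your GPU supports it.

```python
import importlib.util


def attention_kwargs(prefer_flash: bool = True) -> dict:
    """Return extra from_pretrained kwargs (hypothetical helper).

    Requests FlashAttention-2 only if the `flash_attn` package can be found;
    otherwise returns an empty dict so transformers uses its default attention.
    """
    if prefer_flash and importlib.util.find_spec("flash_attn") is not None:
        return {"attn_implementation": "flash_attention_2"}
    return {}


if __name__ == "__main__":
    print(attention_kwargs())
```

The result can be unpacked into the loading call, e.g. `AutoModelForCausalLM.from_pretrained(model_id, device_map="auto", torch_dtype=torch.bfloat16, **attention_kwargs())`.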

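Then load the model with 🤗 Transformers:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

model_id = "LeoLM/leo-mistral-hessianai-7b"

# Load the tokenizer and the model in bfloat16, placing layers on available devices
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    device_map="auto",
    torch_dtype=torch.bfloat16,
    attn_implementation="flash_attention_2",  # optional: needs flash-attn and transformers >= 4.36; remove otherwise
)
```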
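The models were trained with an 8k context length, so when generating it can help to cap `max_new_tokens` so that prompt and completion stay inside that window. This is a minimal sketch assuming "8k" means 8,192 tokens; `generation_budget` and `CONTEXT_LENGTH` are hypothetical names, not part of any library.

```python
CONTEXT_LENGTH = 8192  # assumption: "8k context length" interpreted as 8,192 tokens


def generation_budget(prompt_tokens: int, requested_new_tokens: int,
                      context_length: int = CONTEXT_LENGTH) -> int:
    """Clamp requested_new_tokens so that prompt + output fits in the context window."""
    if prompt_tokens < 0 or requested_new_tokens < 0:
        raise ValueError("token counts must be non-negative")
    remaining = context_length - prompt_tokens
    if remaining <= 0:
        raise ValueError(
            f"prompt has {prompt_tokens} tokens, which fills the "
            f"{context_length}-token context window"
        )
    return min(requested_new_tokens, remaining)


if __name__ == "__main__":
    print(generation_budget(8000, 512))  # 192
    print(generation_budget(100, 512))   # 512
```

With a tokenizer from `AutoTokenizer.from_pretrained("LeoLM/leo-mistral-hessianai-7b")` and the loaded model, this could be used as `inputs = tokenizer(prompt, return_tensors="pt").to(model.device)` followed by `model.generate(**inputs, max_new_tokens=generation_budget(inputs["input_ids"].shape[-1], 512))`.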

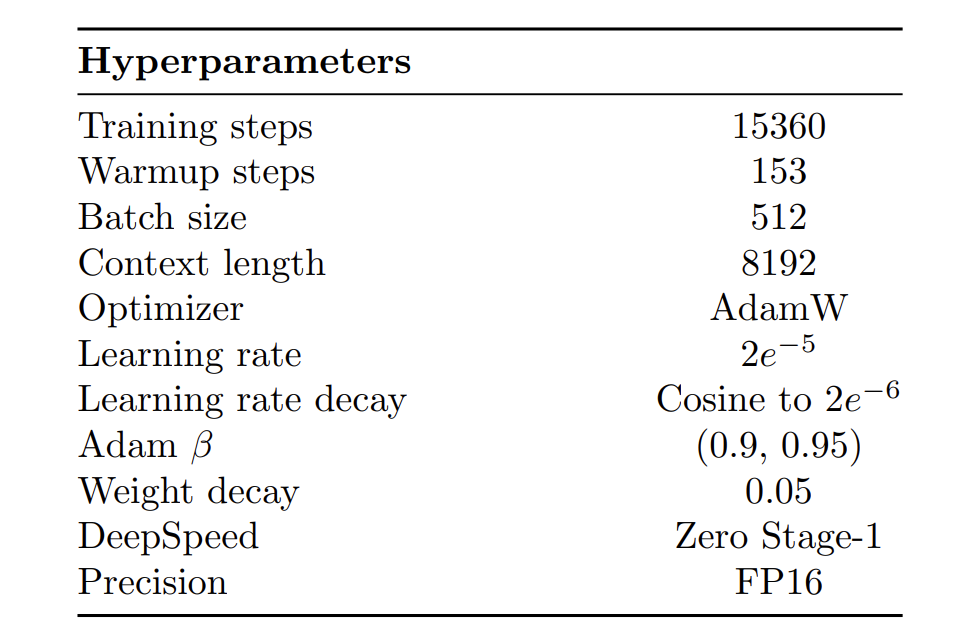

Training parameters

Note that for Mistral training, we changed the learning rate to 1e-5, decaying to 1e-6. We also used ZeRO stage 3 and the bfloat16 dtype.
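The two training settings mentioned above could be expressed roughly as follows. This is a sketch under stated assumptions: the DeepSpeed config fragment contains only the ZeRO stage and the bfloat16 flag named here, and the cosine shape of the decay from 1e-5 to 1e-6 (with no warmup) is an assumption, since the schedule type is not stated. `ds_config` and `cosine_lr` are hypothetical names.

```python
import json
import math

# Hypothetical DeepSpeed config fragment: only ZeRO stage 3 and bfloat16
# come from the text; batch sizes, optimizer, etc. are deliberately omitted.
ds_config = {
    "bf16": {"enabled": True},
    "zero_optimization": {"stage": 3},
}

PEAK_LR = 1e-5   # starting learning rate from the text
FINAL_LR = 1e-6  # final learning rate from the text


def cosine_lr(step: int, total_steps: int) -> float:
    """Assumed cosine decay from PEAK_LR to FINAL_LR over total_steps (no warmup)."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return FINAL_LR + (PEAK_LR - FINAL_LR) * cosine


if __name__ == "__main__":
    print(json.dumps(ds_config, indent=2))
    for step in (0, 500, 1000):
        print(step, f"{cosine_lr(step, 1000):.2e}")
```

With the Hugging Face `Trainer`, a dict like `ds_config` can be passed as `TrainingArguments(deepspeed=ds_config, bf16=True, ...)`.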

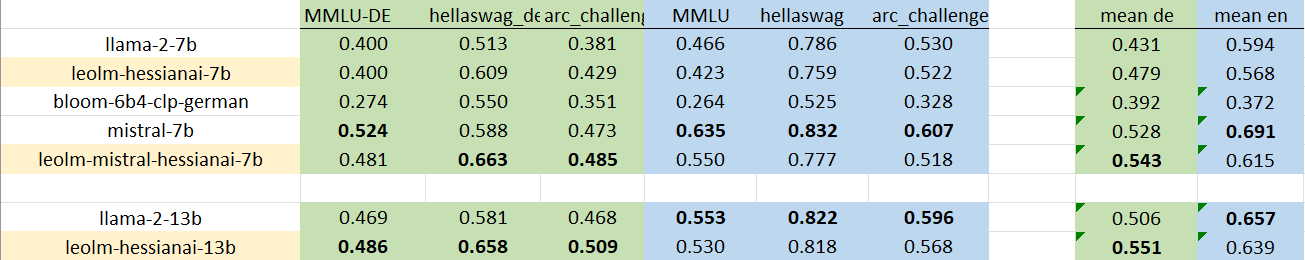

Benchmarks
