# 🏠 MoTCoder

• 🤗 Data • 🤗 Model • 🐱 Code • 📃 Paper

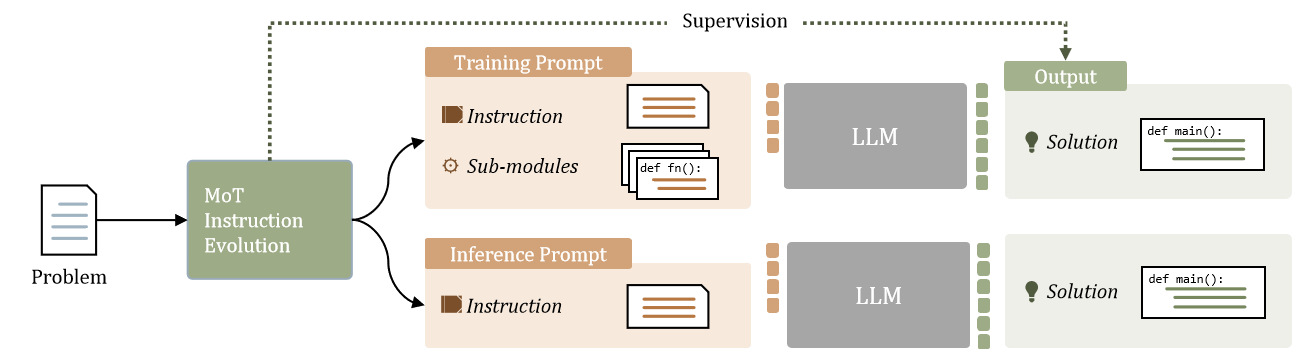

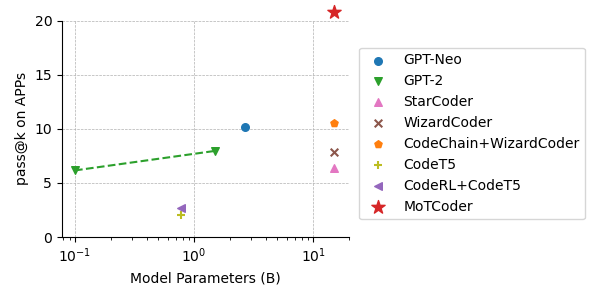

Large Language Models (LLMs) have shown impressive capabilities on straightforward programming tasks, but their performance tends to falter on more challenging programming problems. We observe that conventional models often generate solutions as monolithic code blocks, which limits their effectiveness on intricate questions. To overcome this limitation, we present Modular-of-Thought Coder (MoTCoder), trained with a new Modular-of-Thought (MoT) instruction-tuning framework that encourages the decomposition of tasks into logical sub-tasks and sub-modules. Our experiments show that, by building and using sub-modules, MoTCoder significantly improves both the modularity and the correctness of generated solutions, yielding relative pass@1 improvements of 12.9% on APPS and 9.43% on CodeContests.
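This card does not show MoTCoder's actual output or prompt format. As a hypothetical illustration of the modular style described above (not the model's real output), here is a toy problem, the total length covered by a set of integer intervals, solved as small sub-modules that a top-level function composes, instead of as one monolithic block. The names `merge_intervals`, `covered_length`, and `solve` are illustrative only.

```python
from typing import List, Tuple

Interval = Tuple[int, int]


# Sub-module 1: sort the intervals and merge any that overlap or touch.
def merge_intervals(intervals: List[Interval]) -> List[Interval]:
    merged: List[Interval] = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            # Overlaps (or touches) the last merged interval: extend it.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


# Sub-module 2: total length of a list of disjoint intervals.
def covered_length(intervals: List[Interval]) -> int:
    return sum(end - start for start, end in intervals)


# Top level: compose the sub-modules rather than writing one large loop.
def solve(intervals: List[Interval]) -> int:
    return covered_length(merge_intervals(intervals))


if __name__ == "__main__":
    print(solve([(1, 3), (2, 6), (8, 10)]))  # 7
```

Each sub-module can be read and tested in isolation, which is the property the MoT framework aims to encourage in generated solutions.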

## Performance

### Performance on APPS

| Model | Size | k (pass@k) | Introductory | Interview | Competition | All |
|---|---|---|---|---|---|---|
| CodeT5 | 770M | 1 | 6.60 | 1.03 | 0.30 | 2.00 |
| GPT-Neo | 2.7B | 1 | 14.68 | 9.85 | 6.54 | 10.15 |
| | | 5 | 19.89 | 13.19 | 9.90 | 13.87 |
| GPT-2 | 0.1B | 1 | 5.64 | 6.93 | 4.37 | 6.16 |
| | | 5 | 13.81 | 10.97 | 7.03 | 10.75 |
| | 1.5B | 1 | 7.40 | 9.11 | 5.05 | 7.96 |
| | | 5 | 16.86 | 13.84 | 9.01 | 13.48 |
| GPT-3 | 175B | 1 | 0.57 | 0.65 | 0.21 | 0.55 |
| StarCoder | 15B | 1 | 7.25 | 6.89 | 4.08 | 6.40 |
| WizardCoder | 15B | 1 | 26.04 | 4.21 | 0.81 | 7.90 |
| MoTCoder | 15B | 1 | 33.80 | 19.70 | 11.09 | 20.80 |
| text-davinci-002 | - | 1 | - | - | - | 7.48 |
| code-davinci-002 | - | 1 | 29.30 | 6.40 | 2.50 | 10.20 |
| GPT3.5 | - | 1 | 48.00 | 19.42 | 5.42 | 22.33 |
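The card does not say how the "All" column is aggregated. Assuming it is the problem-count-weighted mean over the APPS test split (1,000 introductory, 3,000 interview, and 1,000 competition problems, as described in the APPS benchmark paper), the following sketch recomputes it from the per-difficulty scores. `APPS_TEST_SIZES` and `apps_overall` are hypothetical names introduced here.

```python
from typing import Dict

# Assumption: problem counts per difficulty in the APPS test split.
APPS_TEST_SIZES: Dict[str, int] = {
    "introductory": 1000,
    "interview": 3000,
    "competition": 1000,
}


def apps_overall(scores: Dict[str, float]) -> float:
    """Problem-count-weighted mean of per-difficulty scores (hypothetical helper)."""
    total = sum(APPS_TEST_SIZES.values())
    weighted = sum(scores[level] * count for level, count in APPS_TEST_SIZES.items())
    return weighted / total


# Per-difficulty pass@1 scores copied from the APPS table.
rows: Dict[str, Dict[str, float]] = {
    "MoTCoder 15B": {"introductory": 33.80, "interview": 19.70, "competition": 11.09},
    "WizardCoder 15B": {"introductory": 26.04, "interview": 4.21, "competition": 0.81},
    "StarCoder 15B": {"introductory": 7.25, "interview": 6.89, "competition": 4.08},
}

if __name__ == "__main__":
    for name, scores in rows.items():
        print(f"{name}: {apps_overall(scores):.2f}")
```

Run as written, this prints 20.80, 7.90, and 6.40, matching the "All" column for these rows, which is consistent with (but does not prove) the weighting assumption. Because interview problems make up 60% of the split, the interview score dominates the overall number.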

### Performance on CodeContests

| Model | Size | Revision | Val pass@1 | Val pass@5 | Test pass@1 | Test pass@5 | Average pass@1 | Average pass@5 |
|---|---|---|---|---|---|---|---|---|
| code-davinci-002 | - | - | - | - | 1.00 | - | 1.00 | - |
| code-davinci-002 + CodeT | - | 5 | - | - | 3.20 | - | 3.20 | - |
| WizardCoder | 15B | - | 1.11 | 3.18 | 1.98 | 3.27 | 1.55 | 3.23 |
| WizardCoder + CodeChain | 15B | 5 | 2.35 | 3.29 | 2.48 | 3.30 | 2.42 | 3.30 |
| MoTCoder | 15B | - | 2.39 | 7.69 | 6.18 | 12.73 | 4.29 | 10.21 |
| GPT3.5 | - | - | 6.81 | 16.23 | 5.82 | 11.16 | 6.32 | 13.70 |
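The card reports pass@1 and pass@5 but does not state how they were computed or how many samples were drawn per problem. A common choice is the unbiased estimator from the Codex paper (Chen et al., 2021): with n samples per problem, c of them correct, pass@k = 1 - C(n - c, k) / C(n, k), averaged over problems. The sketch below implements that estimator under this assumption; `pass_at_k`, `benchmark_pass_at_k`, and the sample counts are hypothetical.

```python
from math import comb
from typing import Iterable, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples drawn, c of them correct."""
    if not 0 <= c <= n:
        raise ValueError("need 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= n")
    # Probability that a random size-k subset of the n samples contains at
    # least one correct sample. comb(n - c, k) is 0 when fewer than k samples
    # are incorrect, which yields 1.0.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: Iterable[Tuple[int, int]], k: int) -> float:
    """Mean pass@k over problems, in percent; `results` holds (n, c) per problem."""
    scores = [pass_at_k(n, c, k) for n, c in results]
    if not scores:
        raise ValueError("results must not be empty")
    return 100.0 * sum(scores) / len(scores)


if __name__ == "__main__":
    # Hypothetical counts: three problems, 10 samples each.
    results = [(10, 0), (10, 1), (10, 10)]
    print(f"pass@1: {benchmark_pass_at_k(results, k=1):.2f}")  # pass@1: 36.67
    print(f"pass@5: {benchmark_pass_at_k(results, k=5):.2f}")  # pass@5: 50.00
```

Using exact binomial coefficients from `math.comb` avoids the high variance of simply checking the first k samples; for very large n, a product form of the same ratio is often used instead to keep the numbers small.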
