pygmalion-2-supercot-limarpv3-13b-exl2

These are test EXL2 quants of a Llama 2-based model created by merging several models via PEFT adapters:

- PygmalionAI/pygmalion-2-13b

- Doctor-Shotgun/llama-2-supercot-lora

- lemonilia/LimaRP-Llama2-13B-v3-EXPERIMENT

Zaraki's zarakitools repo was used to perform these operations.

The goal was to add LimaRPv3's length-instruction training and additional stylistic elements to the Pygmalion 2 + SuperCoT model. GGUFs are available in the Misc Models repo.

The versions are as follows:

- 1.0wt: all LoRAs merged onto the base model at full weight

- 0.66wt: Pygmalion 2 + SuperCoT at full weight, LimaRPv3 added at 0.66 weight

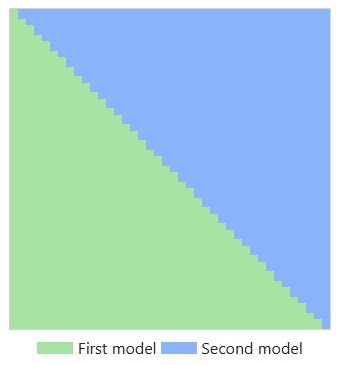

- grad: gradient merge, with SuperCoT added to the deep layers and LimaRPv3 added to the shallow layers, for an average weight of 0.5 for each LoRA
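To make the three variants concrete, here is a minimal sketch of how per-layer merge weights could be laid out. It is not taken from zarakitools. It assumes two things: each LoRA delta is folded in as W + weight · (lora_alpha / r) · (B @ A), the usual LoRA merge scaled by a merge weight, and the "grad" variant uses a linear ramp across the 40 decoder layers of Llama 2 13B. The real gradient schedule may differ. `layer_weights`, `linear_ramp` and `merge_lora` are hypothetical names.

```python
# Hypothetical sketch of the 1.0wt / 0.66wt / grad merge variants.
# Assumptions (not from zarakitools):
# - each LoRA delta is merged as W + weight * scale * (B @ A), scale = lora_alpha / r
# - the "grad" variant is a linear ramp across the 40 decoder layers of Llama 2 13B

NUM_LAYERS = 40  # decoder layers in Llama 2 13B


def linear_ramp(num_layers: int, start: float, end: float) -> list[float]:
    """Evenly spaced weights from `start` (shallowest layer) to `end` (deepest layer)."""
    return [start + (end - start) * i / (num_layers - 1) for i in range(num_layers)]


def layer_weights(variant: str) -> dict[str, list[float]]:
    """Per-layer merge weight for each LoRA (Pygmalion 2 is the base, so it has none)."""
    if variant == "1.0wt":
        return {"supercot": [1.0] * NUM_LAYERS, "limarp": [1.0] * NUM_LAYERS}
    if variant == "0.66wt":
        return {"supercot": [1.0] * NUM_LAYERS, "limarp": [0.66] * NUM_LAYERS}
    if variant == "grad":
        return {
            "supercot": linear_ramp(NUM_LAYERS, 0.0, 1.0),  # strongest in deep layers
            "limarp": linear_ramp(NUM_LAYERS, 1.0, 0.0),    # strongest in shallow layers
        }
    raise ValueError(f"unknown variant: {variant!r}")


def merge_lora(base, lora_a, lora_b, scale: float, weight: float):
    """Return base + weight * scale * (lora_b @ lora_a) for nested-list matrices.

    Shapes: base is out x in, lora_a is r x in, lora_b is out x r.
    """
    rank = len(lora_a)
    return [
        [
            base[i][j] + weight * scale * sum(lora_b[i][k] * lora_a[k][j] for k in range(rank))
            for j in range(len(base[0]))
        ]
        for i in range(len(base))
    ]


if __name__ == "__main__":
    for name, weights in layer_weights("grad").items():
        mean = sum(weights) / len(weights)
        print(f"{name}: first layer {weights[0]:.2f}, last layer {weights[-1]:.2f}, mean {mean:.2f}")
```

Under the linear-ramp assumption, both LoRAs average 0.5 across the stack, which matches the stated average weight. A real merge would call `merge_lora` once per target projection in each layer, using that layer's weight.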

The quants are as follows:

- 4.0bpw-h6: 4 bits per weight in the decoder layers, 6 bits for the output head

  - Ideal for 12 GB GPUs, or 16 GB GPUs when using NTK-scaled extended context or CFG

- 6.0bpw-h6: 6 bits per weight in the decoder layers, 6 bits for the output head

  - Ideal for 16 GB GPUs, or 24 GB GPUs when using NTK-scaled extended context or CFG

- 8bit-32g-h8: all tensors quantized to 8 bits with group size 32, 8 bits for the output head

  - Experimental quant: exllamav2 was monkeypatched to quantize all tensors to 8-bit with group size 32, giving a size similar to the old GPTQ 8-bit quants with no group size. A 24 GB GPU is recommended.
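The GPU recommendations follow roughly from the bit widths. The sketch below estimates weight memory only. It uses the standard Llama 2 13B dimensions (hidden size 5120, MLP size 13824, 40 layers, 32000-token vocabulary, no grouped-query attention) and deliberately ignores the token embeddings, norms, the KV cache, activations, and the per-group overhead of the 8bit-32g quant. Extended context and CFG both add runtime memory on top of these figures, which is why they push the recommendation up a tier. `quant_weight_gb` is a hypothetical helper.

```python
# Rough estimate of quantized weight memory for a Llama 2 13B EXL2 quant.
# Excludes embeddings, norms, KV cache, activations and per-group scale overhead.
HIDDEN = 5120
INTERMEDIATE = 13824
LAYERS = 40
VOCAB = 32000

# q, k, v, o attention projections + gate, up, down MLP projections per layer
PARAMS_PER_LAYER = 4 * HIDDEN * HIDDEN + 3 * HIDDEN * INTERMEDIATE
DECODER_PARAMS = LAYERS * PARAMS_PER_LAYER
HEAD_PARAMS = HIDDEN * VOCAB  # lm_head, quantized to the "head bits"


def quant_weight_gb(decoder_bpw: float, head_bits: float) -> float:
    """Decoder weights at `decoder_bpw` plus the output head at `head_bits`, in GB (1e9 bytes)."""
    decoder_bytes = DECODER_PARAMS * decoder_bpw / 8
    head_bytes = HEAD_PARAMS * head_bits / 8
    return (decoder_bytes + head_bytes) / 1e9


if __name__ == "__main__":
    for name, bpw, head in [("4.0bpw-h6", 4.0, 6), ("6.0bpw-h6", 6.0, 6), ("8bit-32g-h8", 8.0, 8)]:
        print(f"{name}: ~{quant_weight_gb(bpw, head):.2f} GB of weights")
```

This works out to about 6.5, 9.6 and 12.9 GB respectively. The remaining VRAM on a 12, 16 or 24 GB card goes to the cache and activations.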

Usage:

Because this is a merge of multiple models, several prompt formats may work. You can try the Alpaca-style instruction format of LimaRP v3:

### Instruction:

Character's Persona: {bot character description}

User's Persona: {user character description}

Scenario: {what happens in the story}

Play the role of Character. You must engage in a roleplaying chat with User below this line. Do not write dialogues and narration for User.

### Input:

User: {utterance}

### Response:

Character: {utterance}

### Input:

User: {utterance}

### Response:

Character: {utterance}

(etc.)
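As an illustration, a prompt in this format could be assembled from a chat history as follows. This is a minimal sketch: the function name, the `(speaker, text)` turn representation and the exact newline placement are assumptions, and "Character" and "User" are replaced with the actual names. The final `### Response:` is left open so that the model writes the character's next reply.

```python
# Hypothetical helper that assembles a LimaRP v3 (Alpaca-style) prompt.
# Assumption: one header line, then its content, with a blank line between blocks.


def build_limarp_prompt(char: str, user: str, char_persona: str, user_persona: str,
                        scenario: str, turns: list[tuple[str, str]]) -> str:
    """`turns` is a list of ("user" | "char", text) pairs in chat order."""
    parts = [
        "### Instruction:\n"
        f"{char}'s Persona: {char_persona}\n\n"
        f"{user}'s Persona: {user_persona}\n\n"
        f"Scenario: {scenario}\n\n"
        f"Play the role of {char}. You must engage in a roleplaying chat with {user} "
        f"below this line. Do not write dialogues and narration for {user}.\n"
    ]
    for speaker, text in turns:
        if speaker == "user":
            parts.append(f"\n### Input:\n{user}: {text}\n")
        elif speaker == "char":
            parts.append(f"\n### Response:\n{char}: {text}\n")
        else:
            raise ValueError(f"unknown speaker: {speaker!r}")
    # Open response block: the model continues after "{char}:"
    parts.append(f"\n### Response:\n{char}:")
    return "".join(parts)


if __name__ == "__main__":
    print(build_limarp_prompt(
        "Aria", "Sam", "A cheerful travelling bard.", "A curious innkeeper.",
        "Aria arrives at Sam's inn on a stormy night.",
        [("user", "Welcome! You look soaked."), ("char", "Thank you, friend!"), ("user", "Hungry?")],
    ))
```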

Or the Pygmalion/Metharme format:

<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:

{{persona}}

You shall reply to the user while staying in character, and generate long responses.

<|user|>Hello!<|model|>{model's response goes here}
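The Metharme format concatenates role tokens directly, with no separators between turns. The following is a minimal sketch under that reading. `build_metharme_prompt` is a hypothetical helper, and the newline placement inside the system text is an assumption. The prompt ends with an open `<|model|>` token for generation.

```python
# Hypothetical helper that assembles a Pygmalion/Metharme prompt.


def build_metharme_prompt(char: str, persona: str, history: list[tuple[str, str]]) -> str:
    """`history` is a list of ("user" | "model", text) pairs in chat order."""
    system = (
        f"Enter RP mode. Pretend to be {char} whose persona follows:\n{persona}\n\n"
        "You shall reply to the user while staying in character, and generate long responses."
    )
    prompt = "<|system|>" + system
    for role, text in history:
        if role not in ("user", "model"):
            raise ValueError(f"unknown role: {role!r}")
        prompt += f"<|{role}|>{text}"
    # Open model turn: generation continues from here
    return prompt + "<|model|>"


if __name__ == "__main__":
    print(build_metharme_prompt("Aria", "A cheerful travelling bard.", [("user", "Hello!")]))
```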

The model was also tested using a system prompt with no instruction sequences:

Write Character's next reply in the roleplay between User and Character. Stay in character and write creative responses that move the scenario forward. Narrate in detail, using elaborate descriptions. The following is your persona:

{{persona}}

[Current conversation]

User: {utterance}

Character: {utterance}

Message length control

Because LimaRP v3 is part of the merge, you can append a length modifier to the response instruction sequence, like this:

### Input:

User: {utterance}

### Response: (length = medium)

Character: {utterance}

The effect on bot responses is immediately noticeable. The available lengths are: tiny, short, medium, long, huge, humongous, extreme, unlimited. The recommended starting length is medium. At very long lengths, the AI may ramble or impersonate the user.
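To apply the modifier programmatically, you can rewrite the last `### Response:` sequence in an existing LimaRP-style prompt, which is the one the model is about to complete, and leave earlier turns untouched. This is a minimal sketch. The function name is hypothetical, and applying the modifier only to the final response is an assumption about typical use. The length values come from the list above.

```python
# Hypothetical helper: add a LimaRP v3 length modifier to the final response sequence.
LENGTHS = ("tiny", "short", "medium", "long", "huge", "humongous", "extreme", "unlimited")


def add_length_modifier(prompt: str, length: str = "medium") -> str:
    """Turn the last '### Response:' into '### Response: (length = <length>)'."""
    if length not in LENGTHS:
        raise ValueError(f"length must be one of {LENGTHS}, got {length!r}")
    head, sep, tail = prompt.rpartition("### Response:")
    if not sep:
        raise ValueError("prompt contains no '### Response:' sequence")
    return f"{head}### Response: (length = {length}){tail}"


if __name__ == "__main__":
    print(add_length_modifier("### Input:\nUser: Hi there!\n\n### Response:\nCharacter:"))
```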

Bias, Risks, and Limitations

The model will show biases similar to those observed in niche roleplaying forums on the Internet, in addition to those exhibited by the base model. It is not intended to supply factual information or advice in any form.

Training Details

This model is a merge. Please refer to the linked repositories of the merged models for details.