Cerebrum-1.0-8x7B - SOTA GGUF

- Model creator: Aether AI

- Original model: Cerebrum 1.0 8x7B

Description

This repo contains state-of-the-art (SOTA) quantized GGUF model files for Cerebrum 1.0 8x7B.

Quantization was done with an importance matrix that was trained for ~250K tokens (64 batches of 4096 tokens) of groups_merged.txt and wiki.train.raw concatenated.

Prompt template: Cerebrum

<s>A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: Are you conscious?

AI:
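The template above can be assembled programmatically. The following is a minimal sketch, not the model's official chat template: the single-newline separators are taken from the llama.cpp command shown on this page, while the multi-turn layout and the names `SYSTEM_PROMPT` and `build_prompt` are assumptions made for illustration. The BOS token (`<s>`) is left to the tokenizer.

```python
SYSTEM_PROMPT = (
    "A chat between a user and a thinking artificial intelligence assistant. "
    "The assistant describes its thought process and gives helpful and "
    "detailed answers to the user's questions."
)


def build_prompt(turns):
    """Render (user_message, ai_reply) pairs as a Cerebrum-style prompt.

    Use None as the reply of the final pair to leave the prompt open
    for generation. The multi-turn layout is an assumption.
    """
    lines = [SYSTEM_PROMPT]
    for user_message, ai_reply in turns:
        lines.append(f"User: {user_message}")
        # An open "AI:" line asks the model to produce the next reply.
        lines.append("AI:" if ai_reply is None else f"AI: {ai_reply}")
    return "\n".join(lines)


print(build_prompt([("Are you conscious?", None)]))
```

If the model's bundled chat template is available (for example through a tokenizer), prefer it over this hand-rolled version, since it may place special tokens between turns.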

Compatibility

These quantised GGUFv3 files are compatible with llama.cpp from February 27th 2024 onwards (commit 0becb22 or later).

They are also compatible with many third party UIs and libraries provided they are built using a recent llama.cpp.

Explanation of quantisation methods


The new methods available are:

- GGML_TYPE_IQ1_S - 1-bit quantization in super-blocks with an importance matrix applied, effectively using 1.56 bits per weight (bpw)

- GGML_TYPE_IQ2_XXS - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.06 bpw

- GGML_TYPE_IQ2_XS - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.31 bpw

- GGML_TYPE_IQ2_S - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.5 bpw

- GGML_TYPE_IQ2_M - 2-bit quantization in super-blocks with an importance matrix applied, effectively using 2.7 bpw

- GGML_TYPE_IQ3_XXS - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.06 bpw

- GGML_TYPE_IQ3_XS - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.3 bpw

- GGML_TYPE_IQ3_S - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.44 bpw

- GGML_TYPE_IQ3_M - 3-bit quantization in super-blocks with an importance matrix applied, effectively using 3.66 bpw

- GGML_TYPE_IQ4_XS - 4-bit quantization in super-blocks with an importance matrix applied, effectively using 4.25 bpw

Refer to the Provided files table below to see which files use which methods, and how.
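The bits-per-weight figures above translate fairly directly into file size. As a rough sketch, assuming Mixtral 8x7B has about 46.7 billion parameters in total (a figure not stated on this page) and that every tensor uses the same bit width (real GGUF files mix tensor types, so actual sizes differ somewhat):

```python
# Assumption: total parameter count of Mixtral 8x7B, not taken from this page.
TOTAL_PARAMS = 46.7e9


def estimated_size_gb(bits_per_weight, n_params=TOTAL_PARAMS):
    """Approximate file size in GB (10^9 bytes) at a uniform bit width."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bpw in [("IQ1_S", 1.56), ("IQ2_XXS", 2.06), ("IQ3_XXS", 3.06), ("IQ4_XS", 4.25)]:
    print(f"{name}: ~{estimated_size_gb(bpw):.1f} GB")
```

The estimates should land in the same range as the sizes listed in the table; they are a sanity check, not a replacement for the measured values.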

Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |
|---|---|---|---|---|---|
| Cerebrum-1.0-8x7b.IQ1_S.gguf | IQ1_S | 1 | 9.2 GB | 9.7 GB | smallest, significant quality loss - TBD: Waiting for this issue to be resolved |
| Cerebrum-1.0-8x7b.IQ2_XXS.gguf | IQ2_XXS | 2 | 12.0 GB | 12.5 GB | very small, high quality loss |
| Cerebrum-1.0-8x7b.IQ2_XS.gguf | IQ2_XS | 2 | 13.4 GB | 13.9 GB | very small, high quality loss |
| Cerebrum-1.0-8x7b.IQ2_S.gguf | IQ2_S | 2 | 13.6 GB | 14.1 GB | small, substantial quality loss |
| Cerebrum-1.0-8x7b.IQ2_M.gguf | IQ2_M | 2 | 15.0 GB | 15.5 GB | small, greater quality loss |
| Cerebrum-1.0-8x7b.IQ3_XXS.gguf | IQ3_XXS | 3 | 17.3 GB | 17.8 GB | very small, high quality loss |
| Cerebrum-1.0-8x7b.IQ3_XS.gguf | IQ3_XS | 3 | 18.4 GB | 18.9 GB | small, substantial quality loss |
| Cerebrum-1.0-8x7b.IQ3_S.gguf | IQ3_S | 3 | 19.5 GB | 20.0 GB | small, greater quality loss |
| Cerebrum-1.0-8x7b.IQ3_M.gguf | IQ3_M | 3 | 20.5 GB | 21.0 GB | medium, balanced quality - recommended |
| Cerebrum-1.0-8x7b.IQ4_XS.gguf | IQ4_XS | 4 | 24.0 GB | 24.5 GB | small, substantial quality loss |

Generated importance matrix file: Cerebrum-1.0-8x7b.imatrix.dat

Note: the above RAM figures assume no GPU offloading with 4K context. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.
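To choose a file for a given machine, the table can be turned into a small lookup. This is only an illustrative sketch: the numbers are copied from the table above, and the helper name `largest_fitting_quant` is hypothetical. It applies the same assumptions as the note (no GPU offloading, 4K context).

```python
# (file size in GB, max RAM in GB), copied from the Provided files table.
QUANTS = {
    "IQ1_S": (9.2, 9.7),
    "IQ2_XXS": (12.0, 12.5),
    "IQ2_XS": (13.4, 13.9),
    "IQ2_S": (13.6, 14.1),
    "IQ2_M": (15.0, 15.5),
    "IQ3_XXS": (17.3, 17.8),
    "IQ3_XS": (18.4, 18.9),
    "IQ3_S": (19.5, 20.0),
    "IQ3_M": (20.5, 21.0),
    "IQ4_XS": (24.0, 24.5),
}


def largest_fitting_quant(available_ram_gb):
    """Return the quant with the highest RAM need that still fits, or None."""
    fitting = [
        (max_ram, name)
        for name, (_size, max_ram) in QUANTS.items()
        if max_ram <= available_ram_gb
    ]
    return max(fitting)[1] if fitting else None


print(largest_fitting_quant(16))
```

Leave some headroom for the operating system and for longer contexts; the table figures are upper bounds for the model itself, not for the whole system.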

Example llama.cpp command

Make sure you are using llama.cpp from commit 0becb22 or later.

./main -ngl 33 -m Cerebrum-1.0-8x7b.IQ2_XS.gguf --override-kv llama.expert_used_count=int:3 --color -c 16384 --temp 0.7 --repeat-penalty 1.0 -n -1 -p "A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.\nUser: {prompt}\nAI:"

Change -ngl 33 to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

Change -c 16384 to the desired sequence length.

If you want to have a chat-style conversation, replace the -p <PROMPT> argument with -i -ins

If you are low on V/RAM try quantizing the K-cache with -ctk q8_0 or even -ctk q4_0 for big memory savings (depending on context size).

There is a similar option for V-cache (-ctv), however that is not working yet.
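To get a feel for how much `-ctk q8_0` or `-ctk q4_0` can save, the K-cache size can be estimated from the attention shape. The sketch below assumes the published Mixtral 8x7B configuration (32 layers, 8 key/value heads, head dimension 128), none of which is stated on this page, and the ggml block layouts for `q8_0` and `q4_0` (32 values plus one f16 scale per block).

```python
# Assumed Mixtral 8x7B attention shape (not stated on this page).
N_LAYERS = 32
N_KV_HEADS = 8
HEAD_DIM = 128

# Bytes needed to store 32 cache values:
#   f16  -> 32 * 2 bytes
#   q8_0 -> 32 int8 values + one f16 scale
#   q4_0 -> 32 four-bit values + one f16 scale
BYTES_PER_32_VALUES = {"f16": 64, "q8_0": 34, "q4_0": 18}


def k_cache_bytes(n_ctx, cache_type="f16"):
    """Estimated K-cache size in bytes for a context of n_ctx tokens."""
    values = N_LAYERS * n_ctx * N_KV_HEADS * HEAD_DIM
    return values * BYTES_PER_32_VALUES[cache_type] // 32


for cache_type in ("f16", "q8_0", "q4_0"):
    size_gib = k_cache_bytes(16384, cache_type) / 2**30
    print(f"{cache_type}: {size_gib:.2f} GiB of K-cache at 16384 context")
```

Under these assumptions the K-cache shrinks to roughly half with `q8_0` and to a bit over a quarter with `q4_0`; the V-cache of the same shape is unaffected by `-ctk`.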

For other parameters and how to use them, please refer to the llama.cpp documentation

How to run from Python code

You can use GGUF models from Python using the llama-cpp-python module.

How to load this model in Python code, using llama-cpp-python

For full documentation, please see: llama-cpp-python docs.

First install the package

Run one of the following commands, according to your system:

# Prebuilt wheel with basic CPU support

pip install llama-cpp-python --extra-index-url https://abetlen.github.io/llama-cpp-python/whl/cpu

# Prebuilt wheel with NVIDIA CUDA acceleration (replace cu121 with cu122 etc. to match your CUDA version)

pip install llama-cpp-python --extra-index-url https://abetlen.github.io/llama-cpp-python/whl/cu121

# Prebuilt wheel with Metal GPU acceleration

pip install llama-cpp-python --extra-index-url https://abetlen.github.io/llama-cpp-python/whl/metal

# Build base version with no GPU acceleration

pip install llama-cpp-python

# With NVidia CUDA acceleration

CMAKE_ARGS="-DLLAMA_CUDA=on" pip install llama-cpp-python

# Or with OpenBLAS acceleration

CMAKE_ARGS="-DLLAMA_BLAS=ON -DLLAMA_BLAS_VENDOR=OpenBLAS" pip install llama-cpp-python

# Or with CLBLast acceleration

CMAKE_ARGS="-DLLAMA_CLBLAST=on" pip install llama-cpp-python

# Or with AMD ROCm GPU acceleration (Linux only)

CMAKE_ARGS="-DLLAMA_HIPBLAS=on" pip install llama-cpp-python

# Or with Metal GPU acceleration for macOS systems only

CMAKE_ARGS="-DLLAMA_METAL=on" pip install llama-cpp-python

# Or with Vulkan acceleration

CMAKE_ARGS="-DLLAMA_VULKAN=on" pip install llama-cpp-python

# Or with Kompute acceleration

CMAKE_ARGS="-DLLAMA_KOMPUTE=on" pip install llama-cpp-python

# Or with SYCL acceleration

CMAKE_ARGS="-DLLAMA_SYCL=on -DCMAKE_C_COMPILER=icx -DCMAKE_CXX_COMPILER=icpx" pip install llama-cpp-python

# On Windows, to set the CMAKE_ARGS variable in PowerShell, use this format; e.g. for NVIDIA CUDA:

$env:CMAKE_ARGS = "-DLLAMA_CUDA=on"

pip install llama-cpp-python

Simple llama-cpp-python example code

from llama_cpp import Llama

# Chat Completion API

llm = Llama(model_path="./Cerebrum-1.0-8x7b.IQ3_M.gguf", n_gpu_layers=33, n_ctx=16384)

print(llm.create_chat_completion(
    messages=[
        {"role": "system", "content": "You are a story writing assistant."},
        {
            "role": "user",
            "content": "Write a story about llamas."
        }
    ]
))
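The chat completion call above relies on whatever chat template the GGUF file carries. To send the plain-text Cerebrum prompt explicitly, a raw completion call can be used instead. The helper below is a hedged sketch: `ask_cerebrum` is a hypothetical name, the `"\nUser:"` stop string is an assumption, and `llm` is expected to behave like a `llama_cpp.Llama` instance (callable with a prompt string, returning an OpenAI-style completion dict).

```python
SYSTEM_PROMPT = (
    "A chat between a user and a thinking artificial intelligence assistant. "
    "The assistant describes its thought process and gives helpful and "
    "detailed answers to the user's questions."
)


def ask_cerebrum(llm, question, max_tokens=512):
    """Send one question through the plain-text Cerebrum prompt."""
    prompt = f"{SYSTEM_PROMPT}\nUser: {question}\nAI:"
    result = llm(
        prompt,
        max_tokens=max_tokens,
        temperature=0.0,     # the model card suggests very low temperatures
        repeat_penalty=1.0,  # 1.0 means no repetition penalty
        stop=["\nUser:"],    # assumption: stop before a new user turn starts
    )
    return result["choices"][0]["text"].strip()
```

With the `llm` object created in the example above, this would be called as `ask_cerebrum(llm, "Are you conscious?")`.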

Original model card: Aether AI's Cerebrum-1.0-8x7B

Introduction

Cerebrum 8x7b is a large language model (LLM) created specifically for reasoning tasks. It is based on the Mixtral 8x7b model. Similar to its smaller version, Cerebrum 7b, it is fine-tuned on a small custom dataset of native chain of thought data and further improved with targeted RLHF (tRLHF), a novel technique for sample-efficient LLM alignment. Unlike numerous other recent fine-tuning approaches, our training pipeline includes under 5000 training prompts and even fewer labeled datapoints for tRLHF.

The native chain of thought approach means that Cerebrum is trained to devise a tactical plan before tackling problems that require thinking. For brainstorming, knowledge-intensive, and creative tasks, Cerebrum will typically omit unnecessarily verbose considerations.

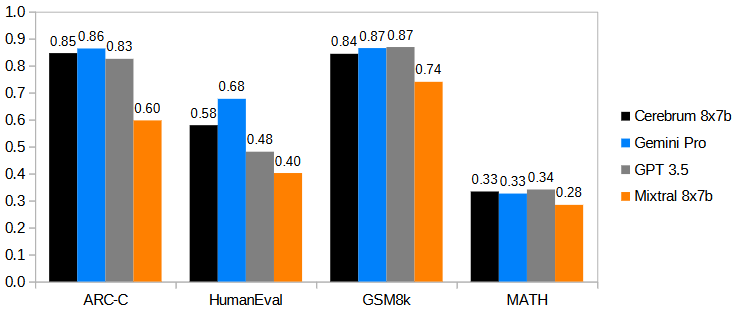

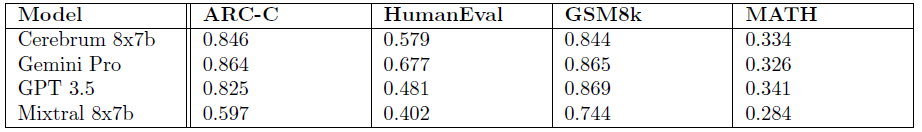

Cerebrum 8x7b offers performance competitive with Gemini 1.0 Pro and GPT-3.5 Turbo on a range of tasks that require reasoning.

Benchmarking

An overview of Cerebrum 8x7b performance compared to Gemini 1.0 Pro, GPT-3.5 and Mixtral 8x7b on selected benchmarks:

Evaluation details:

- ARC-C: all models evaluated zero-shot. Gemini 1.0 Pro and GPT-3.5 (gpt-3.5-turbo-0125) evaluated via API, reported numbers taken for Mixtral 8x7b.

- HumanEval: all models evaluated zero-shot, reported numbers used.

- GSM8k: Cerebrum, GPT-3.5, and Mixtral 8x7b evaluated with maj@8, Gemini evaluated with maj@32. GPT-3.5 (gpt-3.5-turbo-0125) evaluated via API, reported numbers taken for Gemini 1.0 Pro and Mixtral 8x7b.

- MATH: Cerebrum evaluated 0-shot. GPT-3.5 and Gemini evaluated 4-shot, Mixtral 8x7b maj@4. Reported numbers used.
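In the list above, maj@k denotes majority voting: k answers are sampled for the same question and the most frequent final answer is the one that gets scored. A minimal sketch of the voting step follows; the sample answers are made up for illustration and the function name is hypothetical.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent answer among k sampled answers (maj@k)."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    # most_common sorts by count; ties keep first-seen order.
    return Counter(answers).most_common(1)[0][0]


# Made-up final answers from eight samples of one question (maj@8).
samples = ["18", "18", "20", "18", "17", "18", "20", "18"]
print(majority_vote(samples))  # 18
```

Because the sampling budgets differ between models (for example maj@8 versus maj@32), the reported numbers are not strictly like-for-like.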

Usage

For optimal performance, Cerebrum should be prompted with an Alpaca-style template that requests the description of the "thought process". Here is what a conversation should look like from the model's point of view:

<s>A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: Are you conscious?

AI:

This prompt is also available as a chat template. Here is how you could use it:

import torch

# `tokenizer` and `model` are assumed to be already loaded (for example with Hugging Face transformers).

messages = [
    {'role': 'user', 'content': 'What is self-consistency decoding?'},
    {'role': 'assistant', 'content': 'Self-consistency decoding is a technique used in natural language processing to improve the performance of language models. It works by generating multiple outputs for a given input and then selecting the most consistent output based on a set of criteria.'},
    {'role': 'user', 'content': 'Why does self-consistency work?'}
]

input_ids = tokenizer.apply_chat_template(messages, add_generation_prompt=True, return_tensors='pt')

with torch.no_grad():
    out = model.generate(input_ids=input_ids, max_new_tokens=100, do_sample=False)

The model ends its turn by generating the EOS token. Importantly, this token should be removed from the model answer in a multi-turn dialogue.
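One way to handle this in a multi-turn loop is to clean each decoded reply before appending it to the history. The sketch below assumes the EOS token decodes to the string `</s>` (the Mixtral/Llama convention, not stated on this page); the helper names are hypothetical.

```python
EOS_TOKEN = "</s>"  # assumption: Mixtral/Llama-style EOS string


def clean_reply(raw_reply, eos_token=EOS_TOKEN):
    """Strip a trailing EOS token and surrounding whitespace from a reply."""
    reply = raw_reply.strip()
    if reply.endswith(eos_token):
        reply = reply[: -len(eos_token)].rstrip()
    return reply


def append_turn(messages, raw_reply):
    """Return a new history with the cleaned assistant reply appended."""
    return messages + [{"role": "assistant", "content": clean_reply(raw_reply)}]


history = [{"role": "user", "content": "Why does self-consistency work?"}]
history = append_turn(history, " It averages out sampling noise.</s>")
print(history[-1]["content"])
```

When decoding with a Hugging Face tokenizer, passing `skip_special_tokens=True` to `decode` is an alternative that removes special tokens at the source.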

Cerebrum can be operated at very low temperatures (and specifically temperature 0), which improves performance on tasks that require precise answers. The alignment should be sufficient to avoid repetitions in most cases without a repetition penalty.

Examples

Some examples of model output:

The model is trained to first "think aloud about the solution", before solving the problem itself:

A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: Find the longest increasing subarray of the given array.

The function signature should be def longest_increasing_subarray(vals: List[int]).

Write the code in Python.

AI: To find the longest increasing subarray of the given array, we could start by considering the first element of the array as the start of the subarray. Then, we could iterate through the remaining elements of the array and check if each element is greater than the last element of the current subarray. If it is, we could add it to the subarray. If it is not, we could save the length of the current subarray and start a new subarray with the current element. We could repeat this process until we reach the end of the array. Then, we could return the longest subarray found.

Here is an example:

```python
def longest_increasing_subarray(vals: List[int]):
    if len(vals) == 0:
        return []
    longest_subarray = [vals[0]]
    current_subarray = [vals[0]]
    for i in range(1, len(vals)):
        if vals[i] > current_subarray[-1]:
            current_subarray.append(vals[i])
        else:
            if len(current_subarray) > len(longest_subarray):
                longest_subarray = current_subarray
            current_subarray = [vals[i]]
    if len(current_subarray) > len(longest_subarray):
        longest_subarray = current_subarray
    return longest_subarray
```

Usual unprompted chain of thought example for mathematical problem solving:

A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: Out of six apples three are bad. What is the probability that at least one apple will be bad when selecting two apples out of them?

AI: To find the probability that at least one apple will be bad when selecting two apples out of them, we need to consider the possible outcomes of the selection. There are two cases: either one of the selected apples is bad, or both of them are bad.

The probability that one of the selected apples is bad is equal to the probability that the first apple is bad and the second apple is good, plus the probability that the first apple is good and the second apple is bad. Since three out of six apples are bad, the probability that the first apple is bad is $3/6 = 1/2$. The probability that the second apple is good, given that the first apple is bad, is equal to $3/5$, since there are three good apples left out of five remaining apples. Therefore, the probability that the first apple is bad and the second apple is good is $1/2 \cdot 3/5 = 3/10$. Similarly, the probability that the first apple is good and the second apple is bad is also $3/10$. Therefore, the probability that one of the selected apples is bad is $3/10 + 3/10 = 6/10 = 3/5$.

The probability that both of the selected apples are bad is equal to the probability that the first apple is bad and the second apple is bad. Since there are three bad apples out of six, the probability that the first apple is bad is $3/6 = 1/2$. The probability that the second apple is bad, given that the first apple is bad, is equal to $2/5$, since there are two bad apples left out of five remaining apples. Therefore, the probability that both of the selected apples are bad is $1/2 \cdot 2/5 = 2/10 = 1/5$.

To find the probability that at least one apple will be bad, we add the probabilities of the two cases: the probability that one of the selected apples is bad and the probability that both of the selected apples are bad. Therefore, the probability that at least one apple will be bad is $3/5 + 1/5 = 4/5$. The answer is 4/5.
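As an independent check of the answer above (this is not part of the model output), the probability can be computed exactly by enumerating every way of picking two of the six apples:

```python
from fractions import Fraction
from itertools import combinations

apples = ["bad"] * 3 + ["good"] * 3

# All unordered ways to pick two distinct apples out of six.
pairs = list(combinations(range(len(apples)), 2))

# Count the picks that contain at least one bad apple.
hits = sum(1 for i, j in pairs if "bad" in (apples[i], apples[j]))

probability = Fraction(hits, len(pairs))
print(probability)  # 4/5
```

The same value follows from the complement: the chance that both apples are good is $3/6 \cdot 2/5 = 1/5$, so the chance of at least one bad apple is $1 - 1/5 = 4/5$.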

Similar example for a simple physical task:

A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: The apple falls from a tree of height 10m. When will it reach the earth if there is no air resistance?

AI: To find out when the apple will reach the earth, we can use the equation of motion for a freely falling object. The equation of motion for a freely falling object is $s = ut + \frac{1}{2}at^2$, where $s$ is the displacement, $u$ is the initial velocity, $a$ is the acceleration due to gravity, and $t$ is the time taken. In this case, the initial velocity of the apple is 0, and the acceleration due to gravity is 9.8 m/s^2. Therefore, we can write the equation of motion as $s = \frac{1}{2}at^2$. Substituting the values, we get $10 = \frac{1}{2} \cdot 9.8 \cdot t^2$. Solving for $t$, we get $t = \sqrt{\frac{20}{9.8}} = \sqrt{2.04} = 1.43$ seconds. Therefore, the apple will reach the earth in 1.43 seconds. The answer is 1.43 seconds.
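The arithmetic in the answer above can be reproduced in a couple of lines (again, not part of the model output). The function name is hypothetical, and the default $g = 9.8$ m/s^2 matches the value the model used.

```python
import math


def fall_time(height_m, g=9.8):
    """Seconds needed to fall height_m from rest, ignoring air resistance."""
    # From s = (1/2) * g * t^2, solved for t.
    return math.sqrt(2 * height_m / g)


print(round(fall_time(10), 2))  # 1.43
```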

A simple calculus problem. Note that the model quietly corrects itself after proposing to factor $n$ out of the numerator, which is inefficient in this case:

A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: What is $\lim_{n \to \infty} \frac{(n + 3) \cdot \sqrt{n}}{(n/2 - 1)^2}$?

AI: To find the limit of $\frac{(n + 3) \cdot \sqrt{n}}{(n/2 - 1)^2}$ as $n$ approaches infinity, we can start by simplifying the expression. We can factor out $n$ from the numerator and write $(n + 3) \cdot \sqrt{n} = n \cdot \sqrt{n} + 3 \cdot \sqrt{n}$. We can also write $(n/2 - 1)^2 = n^2/4 - n + 1$. Therefore, we can write the limit as $\lim_{n \to \infty} \frac{n \cdot \sqrt{n} + 3 \cdot \sqrt{n}}{n^2/4 - n + 1}$.

Now, we can consider the behavior of each term in the numerator and denominator as $n$ approaches infinity. As $n$ approaches infinity, $n \cdot \sqrt{n}$ will grow much faster than $3 \cdot \sqrt{n}$, so we can ignore the $3 \cdot \sqrt{n}$ term in the numerator. Similarly, as $n$ approaches infinity, $n^2/4$ will grow much faster than $n$ and $1$, so we can ignore the $n$ and $1$ terms in the denominator. Therefore, we can write the limit as $\lim_{n \to \infty} \frac{n \cdot \sqrt{n}}{n^2/4} = \lim_{n \to \infty} \frac{4 \cdot n \cdot \sqrt{n}}{n^2} = \lim_{n \to \infty} \frac{4 \cdot \sqrt{n}}{n} = \lim_{n \to \infty} \frac{4}{\sqrt{n}} = 0$.

Therefore, the limit of $\frac{(n + 3) \cdot \sqrt{n}}{(n/2 - 1)^2}$ as $n$ approaches infinity is 0. The answer is 0.

Example of a simple proof. The model "forgets" to prove that the matrix $B^T A B$ is symmetric. This is quite common - in fact, GPT-4 fails in a similar fashion.

A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: If $A$ is positive semi-definite, is $B^TAB$ positive semi-definite?

AI: To determine whether $B^TAB$ is positive semi-definite, we need to consider the properties of $A$ and $B$. Since $A$ is positive semi-definite, we know that for any vector $x$, $x^TAx \geq 0$. To show that $B^TAB$ is positive semi-definite, we need to show that for any vector $y$, $y^TB^TABy \geq 0$.

We can write $y^TB^TABy$ as $(By)^TA(By)$. Since $A$ is positive semi-definite, we know that $(By)^TA(By) \geq 0$. Therefore, $y^TB^TABy \geq 0$ for any vector $y$, which means that $B^TAB$ is positive semi-definite. The answer is yes.
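For completeness, the symmetry step that the model omits is short. Assuming $A$ is a real symmetric matrix (the usual convention in the definition of positive semi-definiteness, though the prompt does not state it), transposing the product gives:

$$
(B^TAB)^T = B^T A^T (B^T)^T = B^T A^T B = B^T A B .
$$

So $B^TAB$ is symmetric, and together with $y^TB^TABy \geq 0$ for every $y$ this completes the argument.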

A typical reaction of the model to a hallucination-inducing prompt. Instead of simply claiming that it does not know the painter George Ellster, it tries to help the user find a painter with a similar name:

A chat between a user and a thinking artificial intelligence assistant. The assistant describes its thought process and gives helpful and detailed answers to the user's questions.

User: Tell me about a famous British painter George Ellster

AI: George Ellster is not a famous British painter. However, there are a few notable British painters with similar names:

George Elgar Hicks (1824-1914) was a Victorian-era painter known for his genre scenes and portraits.

George Frederic Watts (1817-1904) was a Victorian-era painter and sculptor known for his allegorical and symbolic works.

George Stubbs (1724-1806) was an 18th-century painter known for his equestrian and animal paintings.

George Romney (1734-1802) was an 18th-century painter known for his portraits and historical scenes.

These are just a few examples of notable British painters with similar names to George Ellster.


Model tree for CISCai/Cerebrum-1.0-8x7b-SOTA-GGUF

Base model

mistralai/Mixtral-8x7B-v0.1