CodeV

Models from the paper "CodeV: Empowering LLMs for Verilog Generation through Multi-Level Summarization".

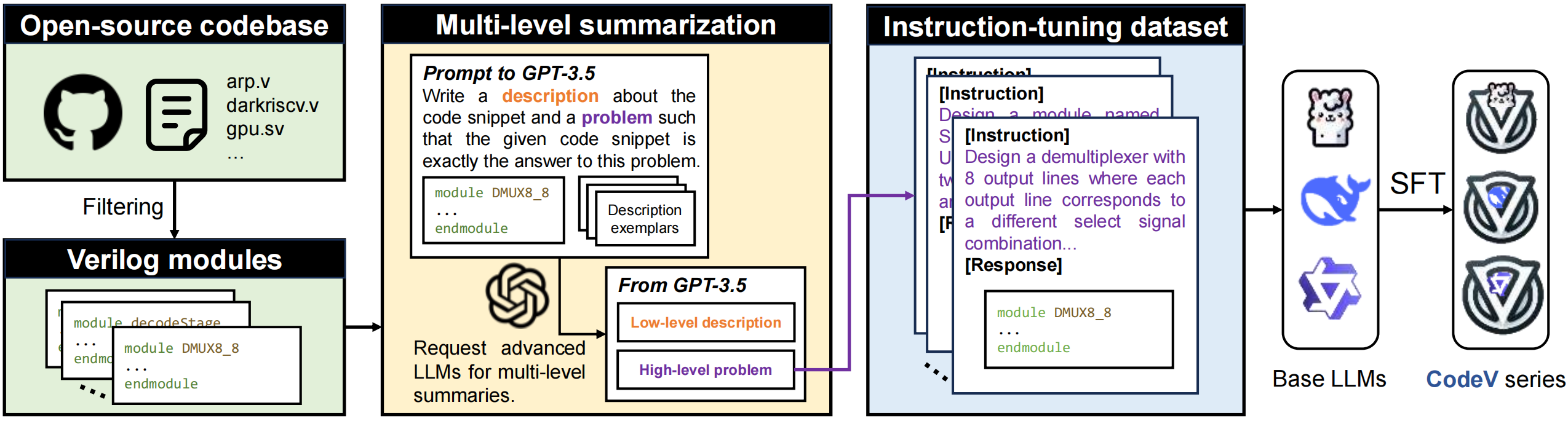

CodeV is a series of open-source, instruction-tuned Large Language Models (LLMs) designed for generating high-quality Verilog code, addressing the challenges that existing models face in this domain. (This repo is under development.)

| Size | Base Model | CodeV |
|---|---|---|
| 6.7B | deepseek-ai/deepseek-coder-6.7b-base | yang-z/CodeV-DS-6.7B |
| 7B | codellama/CodeLlama-7b-Python-hf | yang-z/CodeV-CL-7B |
| 7B | Qwen/CodeQwen1.5-7B-Chat | yang-z/CodeV-QW-7B |

If you want to test the generation capability of existing models on Verilog, you need to install the VerilogEval and RTLLM environments.

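```python
import torch
from transformers import pipeline

prompt = "FILL IN THE QUESTION"

# Replace "CODEV" with one of the CodeV model IDs, e.g. "yang-z/CodeV-DS-6.7B".
generator = pipeline(
    task="text-generation",
    model="CODEV",
    torch_dtype=torch.bfloat16,
    device_map="auto",
)

# do_sample=False selects greedy decoding, the deterministic behavior that
# temperature=0.0 was meant to request (0.0 is not a valid sampling temperature).
result = generator(prompt, max_length=2048, num_return_sequences=1, do_sample=False)
response = result[0]["generated_text"]
print("Response:", response)
```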
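By default the `text-generation` pipeline returns the prompt followed by the completion in `generated_text`, so some post-processing is usually needed before the Verilog can be passed to a simulator or benchmark. The following is a minimal sketch of one way to do that, not part of the CodeV release: `extract_verilog_modules` is a hypothetical helper, and it assumes the design is written as plain `module ... endmodule` blocks. It will misfire if the surrounding prose itself uses the word "module" before the code starts.

```python
import re

# Hypothetical helper, not part of CodeV: pulls Verilog modules out of raw model output.
_MODULE_RE = re.compile(r"\bmodule\b.*?\bendmodule\b", re.DOTALL)


def extract_verilog_modules(generated_text: str, prompt: str = "") -> list[str]:
    """Return every `module ... endmodule` block found after the echoed prompt."""
    # Drop the echoed prompt so that words in the question are not matched.
    if prompt and generated_text.startswith(prompt):
        generated_text = generated_text[len(prompt):]
    return [m.group(0).strip() for m in _MODULE_RE.finditer(generated_text)]


if __name__ == "__main__":
    # Made-up output used only to exercise the helper; no model is loaded here.
    sample_prompt = "Write a 2-input AND gate in Verilog.\n"
    sample_output = sample_prompt + (
        "Here is the design:\n"
        "module and2(input a, input b, output y);\n"
        "  assign y = a & b;\n"
        "endmodule\n"
        "It computes the AND of a and b."
    )
    for block in extract_verilog_modules(sample_output, sample_prompt):
        print(block)
```

With the pipeline example, this would be called as `extract_verilog_modules(response, prompt)`.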

arXiv: https://arxiv.org/abs/2407.10424

Please cite the paper if you use the models from CodeV.

```bibtex
@misc{yang-z,
  title={CodeV: Empowering LLMs for Verilog Generation through Multi-Level Summarization},
  author={Yang Zhao and Di Huang and Chongxiao Li and Pengwei Jin and Ziyuan Nan and Tianyun Ma and Lei Qi and Yansong Pan and Zhenxing Zhang and Rui Zhang and Xishan Zhang and Zidong Du and Qi Guo and Xing Hu and Yunji Chen},
  year={2024},
  eprint={2407.10424},
  archivePrefix={arXiv},
  primaryClass={cs.PL},
  url={https://arxiv.org/abs/2407.10424},
}
```