bert-base-uncased-e_CARE

This model is a fine-tuned version of bert-base-uncased, trained on the e-CARE dataset for a multiple-choice task.

It achieves the following results on the evaluation set (these match the final training epoch):

- Loss: 1.7677

- Accuracy: 0.7212

Model description

For more information on how it was created, check out the following link: https://github.com/DunnBC22/NLP_Projects/blob/main/Multiple%20Choice/e-CARE/e_CARE_Multiple_Choice_Using_BERT.ipynb

Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.
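For readers who want to try the checkpoint, the following is a minimal sketch of multiple-choice inference with the Transformers library. It assumes the repository name `DunnBC22/bert-base-uncased-e_CARE` and assumes that each input is encoded as a (premise, candidate) pair; the linked notebook may format inputs differently (for example, by including the cause/effect question), so treat the helper names `build_choice_pairs` and `predict` as illustrative rather than as the author's method.

```python
from typing import List, Tuple

# Repository name as shown on the model page (assumed to be the loadable model id).
MODEL_ID = "DunnBC22/bert-base-uncased-e_CARE"


def build_choice_pairs(premise: str, choices: List[str]) -> Tuple[List[str], List[str]]:
    """Repeat the premise once per candidate so each (premise, choice) pair is scored."""
    return [premise] * len(choices), list(choices)


def predict(premise: str, choices: List[str]) -> int:
    """Return the index of the highest-scoring choice (downloads the model on first use)."""
    # Imported lazily so the helper above works without torch/transformers installed.
    import torch
    from transformers import AutoModelForMultipleChoice, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForMultipleChoice.from_pretrained(MODEL_ID)
    model.eval()

    firsts, seconds = build_choice_pairs(premise, choices)
    enc = tokenizer(firsts, seconds, padding=True, truncation=True, return_tensors="pt")
    # Multiple-choice heads expect tensors of shape (batch, num_choices, seq_len).
    batch = {name: tensor.unsqueeze(0) for name, tensor in enc.items()}
    with torch.no_grad():
        logits = model(**batch).logits  # shape: (1, num_choices)
    return int(logits.argmax(dim=-1).item())
```

A call such as `predict("The ground is wet.", ["It rained overnight.", "The sun was shining."])` would return `0` or `1`; the example sentences are made up for illustration and are not taken from the dataset.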

Training and evaluation data

Dataset Source: https://huggingface.co/datasets/12ml/e-CARE

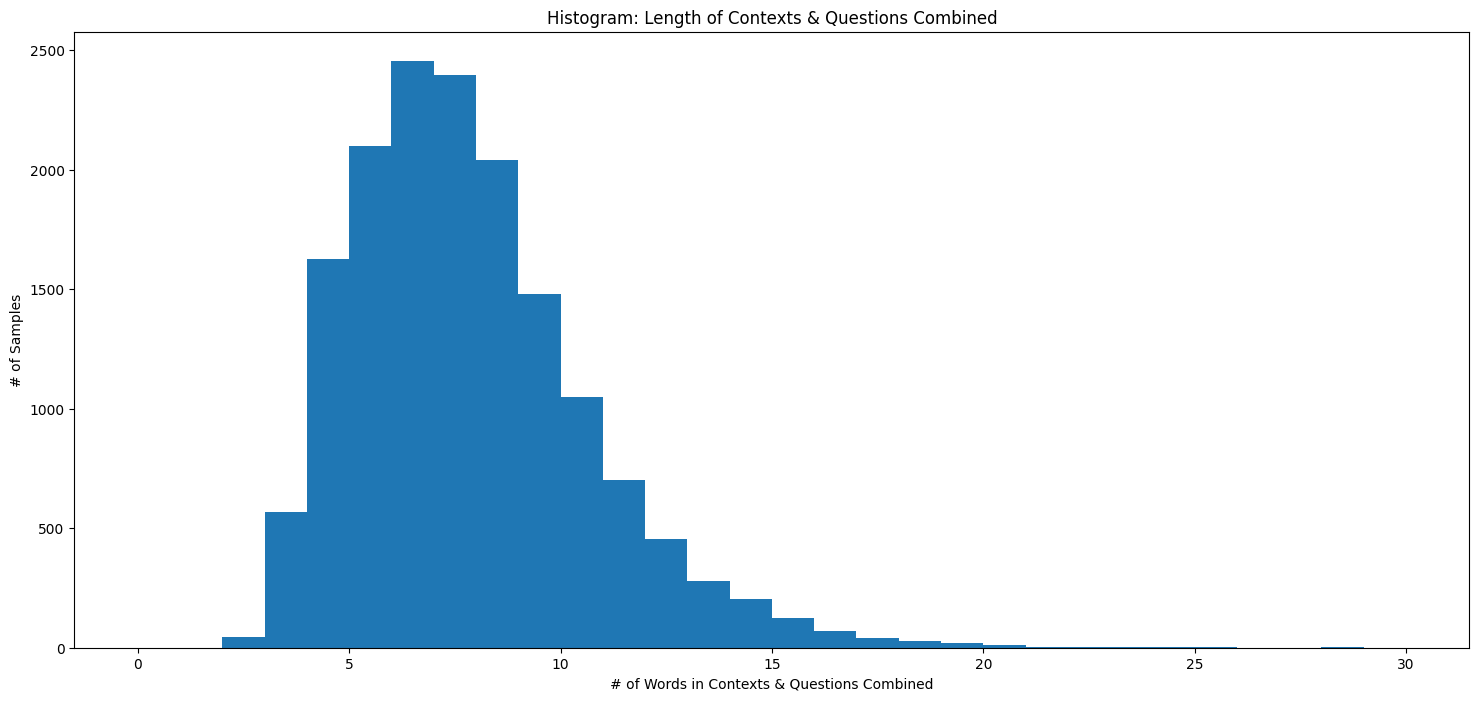

Histogram of Input Lengths

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5
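To make the hyperparameters above more concrete, here is a small sketch of the arithmetic they imply. It assumes 1571 optimizer steps per epoch (taken from the Step column of the training results), a single device with no gradient accumulation, and zero warmup steps; none of those three assumptions is stated in this card. The `linear_lr` function is a hypothetical re-implementation of a linear decay schedule, not code from the training notebook.

```python
LEARNING_RATE = 5e-05   # from the hyperparameter list
TRAIN_BATCH_SIZE = 8    # from the hyperparameter list
NUM_EPOCHS = 5          # from the hyperparameter list
STEPS_PER_EPOCH = 1571  # from the Step column of the training results
WARMUP_STEPS = 0        # assumption: warmup is not listed in this card


def linear_lr(step: int, total_steps: int,
              base_lr: float = LEARNING_RATE,
              warmup_steps: int = WARMUP_STEPS) -> float:
    """Learning rate under linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


total_steps = STEPS_PER_EPOCH * NUM_EPOCHS
# Upper bound on training examples per epoch (the last batch may be partial).
max_train_examples = STEPS_PER_EPOCH * TRAIN_BATCH_SIZE

print(f"total steps: {total_steps}, at most {max_train_examples} training examples")
for epoch in range(NUM_EPOCHS + 1):
    step = epoch * STEPS_PER_EPOCH
    print(f"epoch {epoch}: step {step:>4}, lr {linear_lr(step, total_steps):.2e}")
```

Under these assumptions the schedule spans 7855 steps, and the learning rate drops by one fifth of its initial value per epoch until it reaches zero at the end of training.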

Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|---|---|---|---|---|
| 0.5637 | 1.0 | 1571 | 0.5282 | 0.7244 |
| 0.345 | 2.0 | 3142 | 0.6667 | 0.7320 |
| 0.1098 | 3.0 | 4713 | 1.3113 | 0.7257 |
| 0.0212 | 4.0 | 6284 | 1.8194 | 0.7225 |
| 0.0185 | 5.0 | 7855 | 1.7677 | 0.7212 |
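The table shows training loss falling steadily while validation loss rises after the first epoch, a pattern that is often read as overfitting. The short sketch below copies the table rows and picks out the epochs with the best accuracy and the lowest validation loss. The variable names are illustrative, and nothing here is taken from the training notebook.

```python
# (training_loss, epoch, step, validation_loss, accuracy), copied from the table above
RESULTS = [
    (0.5637, 1, 1571, 0.5282, 0.7244),
    (0.3450, 2, 3142, 0.6667, 0.7320),
    (0.1098, 3, 4713, 1.3113, 0.7257),
    (0.0212, 4, 6284, 1.8194, 0.7225),
    (0.0185, 5, 7855, 1.7677, 0.7212),
]

best_by_accuracy = max(RESULTS, key=lambda row: row[4])
best_by_val_loss = min(RESULTS, key=lambda row: row[3])
final = RESULTS[-1]

print(f"best accuracy: {best_by_accuracy[4]:.4f} at epoch {best_by_accuracy[1]}")
print(f"lowest validation loss: {best_by_val_loss[3]:.4f} at epoch {best_by_val_loss[1]}")
print(f"final epoch: accuracy {final[4]:.4f}, validation loss {final[3]:.4f}")
```

The best accuracy (0.7320) occurs at epoch 2 and the lowest validation loss (0.5282) at epoch 1, whereas the headline metrics correspond to epoch 5. Whether an earlier checkpoint was saved is not stated in this card.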

Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.2

- Tokenizers 0.13.3


Model tree for DunnBC22/bert-base-uncased-e_CARE

- Base model: google-bert/bert-base-uncased