---
language:
- en
license: apache-2.0
library_name: transformers
tags:
- mergekit
- merge
base_model:
- arcee-ai/Virtuoso-Small
- CultriX/SeQwence-14B-EvolMerge
- CultriX/Qwen2.5-14B-Wernicke
- sthenno-com/miscii-14b-1028
- underwoods/medius-erebus-magnum-14b
- sometimesanotion/lamarck-14b-prose-model_stock
- sometimesanotion/lamarck-14b-reason-model_stock
metrics:
- accuracy
pipeline_tag: text-generation
model-index:
- name: Lamarck-14B-v0.3
  results:
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: IFEval (0-Shot)
      type: HuggingFaceH4/ifeval
      args:
        num_few_shot: 0
    metrics:
    - type: inst_level_strict_acc and prompt_level_strict_acc
      value: 50.32
      name: strict accuracy
    source:
      url: >-
        https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=sometimesanotion/Lamarck-14B-v0.3
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: BBH (3-Shot)
      type: BBH
      args:
        num_few_shot: 3
    metrics:
    - type: acc_norm
      value: 51.27
      name: normalized accuracy
    source:
      url: >-
        https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=sometimesanotion/Lamarck-14B-v0.3
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: MATH Lvl 5 (4-Shot)
      type: hendrycks/competition_math
      args:
        num_few_shot: 4
    metrics:
    - type: exact_match
      value: 32.4
      name: exact match
    source:
      url: >-
        https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=sometimesanotion/Lamarck-14B-v0.3
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: GPQA (0-shot)
      type: Idavidrein/gpqa
      args:
        num_few_shot: 0
    metrics:
    - type: acc_norm
      value: 18.46
      name: acc_norm
    source:
      url: >-
        https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=sometimesanotion/Lamarck-14B-v0.3
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: MuSR (0-shot)
      type: TAUR-Lab/MuSR
      args:
        num_few_shot: 0
    metrics:
    - type: acc_norm
      value: 18
      name: acc_norm
    source:
      url: >-
        https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=sometimesanotion/Lamarck-14B-v0.3
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: MMLU-PRO (5-shot)
      type: TIGER-Lab/MMLU-Pro
      config: main
      split: test
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 49.01
      name: accuracy
    source:
      url: >-
        https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=sometimesanotion/Lamarck-14B-v0.3
      name: Open LLM Leaderboard
new_version: sometimesanotion/Lamarck-14B-v0.4-Qwenvergence
---

---

# merge

Lamarck-14B is the product of a multi-stage merge that emphasizes [arcee-ai/Virtuoso-Small](https://huggingface.co/arcee-ai/Virtuoso-Small) in the early and finishing layers, places a strong emphasis on reasoning in the middle layers, and ends balanced somewhat towards Virtuoso again.

For GGUFs, [mradermacher/Lamarck-14B-v0.3-i1-GGUF](https://huggingface.co/mradermacher/Lamarck-14B-v0.3-i1-GGUF) has you covered. Thank you @mradermacher!
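For the unquantized weights, the front matter lists `transformers` as the library and `text-generation` as the pipeline. The following is a minimal sketch of loading the model that way, not a tested recipe from the author. It assumes the repository ID `sometimesanotion/Lamarck-14B-v0.3` (taken from the leaderboard links), that the tokenizer ships a chat template as Qwen2.5-based models usually do, and that `accelerate` is installed for `device_map="auto"`. The helper names `build_messages` and `generate` are hypothetical.

```python
# Assumed repository ID, taken from the leaderboard URLs in this card.
MODEL_ID = "sometimesanotion/Lamarck-14B-v0.3"


def build_messages(user_prompt, system_prompt=None):
    """Build a chat-style message list (hypothetical helper)."""
    messages = []
    if system_prompt is not None:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": user_prompt})
    return messages


def generate(user_prompt, max_new_tokens=256):
    """Load the model and generate a reply (hypothetical helper).

    Imports are done lazily so that the helpers above can be used
    without transformers installed.
    """
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID,
        torch_dtype="auto",  # use the dtype stored in the checkpoint
        device_map="auto",   # assumption: accelerate is installed
    )
    # Assumption: the tokenizer provides a chat template.
    inputs = tokenizer.apply_chat_template(
        build_messages(user_prompt),
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Decode only the newly generated tokens, not the prompt.
    prompt_length = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output_ids[0][prompt_length:], skip_special_tokens=True)
```

Calling `generate("Summarize the idea behind model merging.")` downloads the full 14B checkpoint, so it needs substantial disk space and memory.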

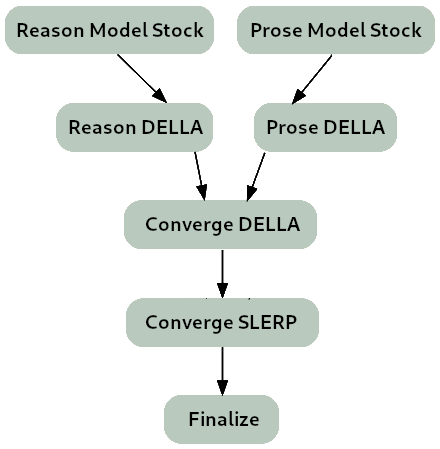

**The merge strategy of Lamarck 0.3 can be summarized as:**

- Two model_stocks commence specialized branches for reasoning and prose quality.

- For refinement on both model_stocks, DELLA merges re-emphasize selected ancestors.

- For smooth instruction following, a SLERP merge combines Virtuoso with a DELLA merge of the two branches, in which reasoning and prose quality are balanced.

- For finalization and normalization, a TIES merge is applied.
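To illustrate the SLERP step and the idea of a per-layer gradient, here is a small sketch of spherical linear interpolation on plain Python lists. It is an illustration of the general technique only, not mergekit's implementation and not the configuration used for Lamarck. The function `layer_gradient` and its values (`edge_t`, `middle_t`) are hypothetical, chosen to mimic an interpolation weight that favors one model at the first and last layers and the other model in the middle.

```python
import math


def slerp(t, v0, v1, eps=1e-8):
    """Spherically interpolate between vectors v0 (t=0) and v1 (t=1)."""
    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    if norm0 < eps or norm1 < eps:
        # A zero vector has no direction: fall back to linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    cos_theta = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    cos_theta = max(-1.0, min(1.0, cos_theta))  # guard against rounding error
    theta = math.acos(cos_theta)
    sin_theta = math.sin(theta)
    if abs(sin_theta) < eps:
        # Nearly parallel vectors: linear interpolation is numerically safer.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    w0 = math.sin((1 - t) * theta) / sin_theta
    w1 = math.sin(t * theta) / sin_theta
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


def layer_gradient(num_layers, edge_t=0.1, middle_t=0.7):
    """Hypothetical per-layer t: edge_t at both ends, middle_t at the center."""
    ts = []
    for i in range(num_layers):
        position = i / (num_layers - 1) if num_layers > 1 else 0.0
        bump = math.sin(math.pi * position)  # 0 at the edges, 1 in the middle
        ts.append(edge_t + (middle_t - edge_t) * bump)
    return ts


if __name__ == "__main__":
    a = [1.0, 0.0]  # stand-in for one model's weights in a layer
    b = [0.0, 1.0]  # stand-in for the other model's weights
    for layer, t in enumerate(layer_gradient(5)):
        print(layer, round(t, 3), [round(x, 3) for x in slerp(t, a, b)])
```

With `t` near 0 the result stays close to the first model and with `t` near 1 it moves to the second, which is how a gradient over layers can shift emphasis between models across the depth of the network.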

### Thanks go to:

- @arcee-ai's team for the ever-capable mergekit, and the exceptional Virtuoso Small model.

- @CultriX for the helpful examples of memory-efficient sliced merges and evolutionary merging. Their contribution of tinyevals on version 0.1 of Lamarck did much to validate the hypotheses of the DELLA->SLERP gradient process used here.

- The authors behind the capable models that appear in the model_stock.

### Models Merged

**Top influences:** These ancestors serve as base models and are present in the model_stocks, and they are also heavily re-emphasized in the DELLA and SLERP merges.

- **[arcee-ai/Virtuoso-Small](https://huggingface.co/arcee-ai/Virtuoso-Small)** - A brand new model from Arcee, refined from the notable cross-architecture Llama-to-Qwen distillation [arcee-ai/SuperNova-Medius](https://huggingface.co/arcee-ai/SuperNova-Medius). The first two layers are nearly exclusively from Virtuoso. It has proven to be a well-rounded performer, and contributes a noticeable boost to the model's prose quality.

- **[CultriX/SeQwence-14B-EvolMerge](https://huggingface.co/CultriX/SeQwence-14B-EvolMerge)** - A top contender on reasoning benchmarks.

**Reason:** Virtuoso Small is the strongest influence on starting and ending layers, but there are other contributions between:

- **[CultriX/Qwen2.5-14B-Wernicke](https://huggingface.co/CultriX/Qwen2.5-14B-Wernicke)** - A top performer for ARC and GPQA, Wernicke is re-emphasized in small but highly-ranked portions of the model.

- **[VAGOsolutions/SauerkrautLM-v2-14b-DPO](https://huggingface.co/VAGOsolutions/SauerkrautLM-v2-14b-DPO)** - This model's influence is understated, but aids BBH and coding capability.

**Prose:** The prose module is applied gently, yet its effect on Lamarck 0.3's prose quality is noticeable. A DELLA merge re-emphasizes the contributions of two models in particular:

- **[sthenno-com/miscii-14b-1028](https://huggingface.co/sthenno-com/miscii-14b-1028)**

- **[underwoods/medius-erebus-magnum-14b](https://huggingface.co/underwoods/medius-erebus-magnum-14b)**

**Model stock:** Two model_stock merges, specialized for specific aspects of performance, are used to mildly influence a large range of the model.

- **[sometimesanotion/lamarck-14b-reason-model_stock](https://huggingface.co/sometimesanotion/lamarck-14b-reason-model_stock)**

- **[sometimesanotion/lamarck-14b-prose-model_stock](https://huggingface.co/sometimesanotion/lamarck-14b-prose-model_stock)** - This brings in a little influence from [EVA-UNIT-01/EVA-Qwen2.5-14B-v0.2](https://huggingface.co/EVA-UNIT-01/EVA-Qwen2.5-14B-v0.2), [oxyapi/oxy-1-small](https://huggingface.co/oxyapi/oxy-1-small), and [allura-org/TQ2.5-14B-Sugarquill-v1](https://huggingface.co/allura-org/TQ2.5-14B-Sugarquill-v1).

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_sometimesanotion__Lamarck-14B-v0.3).

| Metric              | Value |
|---------------------|------:|
| Avg.                | 36.58 |
| IFEval (0-Shot)     | 50.32 |
| BBH (3-Shot)        | 51.27 |
| MATH Lvl 5 (4-Shot) | 32.40 |
| GPQA (0-shot)       | 18.46 |
| MuSR (0-shot)       | 18.00 |
| MMLU-PRO (5-shot)   | 49.01 |
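
The Avg. row is consistent with an unweighted mean of the six benchmark scores. A quick check, assuming that is how the leaderboard computes it:

```python
# Scores copied from the table in this section.
scores = {
    "IFEval (0-Shot)": 50.32,
    "BBH (3-Shot)": 51.27,
    "MATH Lvl 5 (4-Shot)": 32.40,
    "GPQA (0-shot)": 18.46,
    "MuSR (0-shot)": 18.00,
    "MMLU-PRO (5-shot)": 49.01,
}

# Unweighted mean across all six benchmarks.
average = sum(scores.values()) / len(scores)
print(f"Avg. {average:.2f}")  # prints "Avg. 36.58"
```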