Fork of stabilityai/stable-diffusion-2-inpainting

Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input. For more information about how Stable Diffusion works, see 🤗's "Stable Diffusion with 🧨 Diffusers" blog post.

For more information about the model, its license, and its limitations, check the original model card at stabilityai/stable-diffusion-2-inpainting.

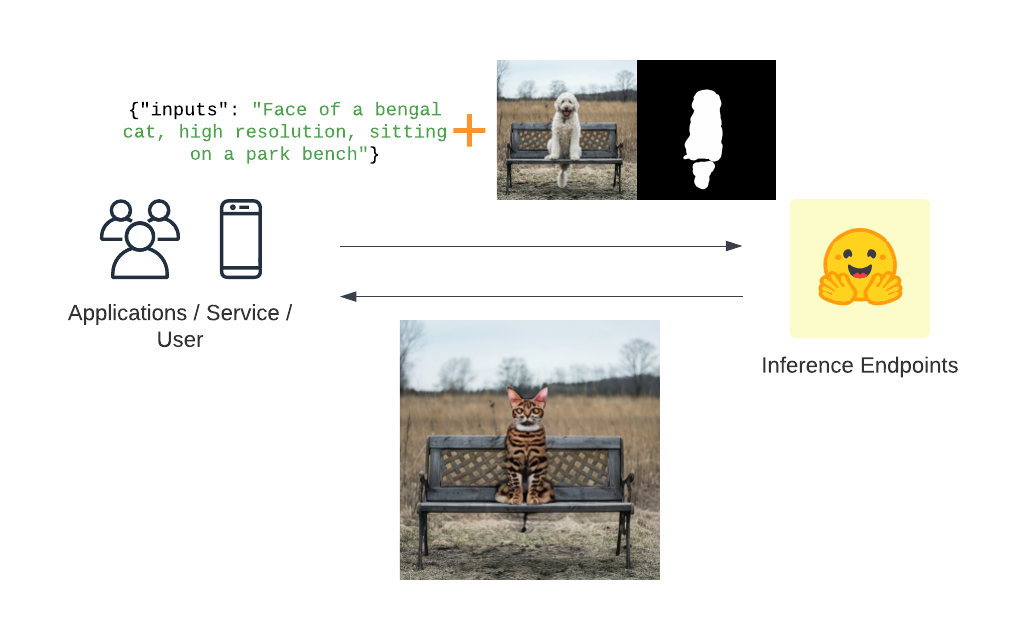

This repository implements a custom handler for the text-guided image-inpainting task for 🤗 Inference Endpoints. The code for the customized pipeline is in `handler.py`.
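For orientation, Inference Endpoints loads a custom `handler.py` that defines an `EndpointHandler` class with `__init__(self, path="")` and `__call__(self, data)`. The sketch below is *not* this repository's `handler.py`. It only illustrates how such a handler might unpack an inpainting request (an `inputs` prompt plus base64-encoded `image` and `mask_image`). The extra `pipeline` constructor argument is a hypothetical addition so the parsing logic can run without downloading a model. A real handler would instead load the pipeline from `path` (for example with diffusers) and decode the bytes into PIL images.

```python
import base64
from typing import Any, Callable, Dict, Optional


class EndpointHandler:
    """Minimal skeleton of a custom Inference Endpoints handler (sketch only)."""

    def __init__(self, path: str = "", pipeline: Optional[Callable[..., Any]] = None):
        # Hypothetical: the pipeline is injected here. A real handler.py
        # would load the inpainting pipeline from `path` instead.
        self.path = path
        self.pipeline = pipeline

    def __call__(self, data: Dict[str, Any]) -> Any:
        # The prompt travels under the "inputs" key.
        prompt = data.get("inputs")
        if not prompt:
            raise ValueError("payload must contain a non-empty 'inputs' prompt")
        try:
            # Both images arrive as base64 strings; validate=True rejects
            # characters outside the base64 alphabet.
            image_bytes = base64.b64decode(data["image"], validate=True)
            mask_bytes = base64.b64decode(data["mask_image"], validate=True)
        except KeyError as err:
            raise ValueError(f"payload is missing {err.args[0]!r}") from err
        if self.pipeline is None:
            raise RuntimeError("no pipeline loaded")
        # Hand the decoded raw bytes to the (hypothetical) pipeline callable.
        return self.pipeline(prompt=prompt, image=image_bytes, mask_image=mask_bytes)
```

Keeping validation in `__call__` means a malformed request fails with a clear message before any GPU work starts.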

The repository also includes a notebook that shows how to create `handler.py`.

Expected request payload (`image` and `mask_image` are base64-encoded PNG images; the strings below are shortened):

```json
{
  "inputs": "A prompt used for image generation",
  "image": "iVBORw0KGgoAAAANSUhEUgAAAgAAAAIACAIAAAB7GkOtAAAABGdBTUEAALGPC",
  "mask_image": "iVBORw0KGgoAAAANSUhEUgAAAgAAAAIACAIAAAB7GkOtAAAABGdBTUEAALGPC"
}
```
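To produce a body of that shape yourself, base64-encode the raw file bytes and decode the result to an ASCII string so it can be embedded in JSON. The standard-library sketch below uses a hypothetical helper name, `build_payload`. Letting `json.dumps` serialize the dict also guarantees valid JSON, which, unlike a Python dict literal, must not contain a trailing comma after the last field.

```python
import base64
import json


def build_payload(prompt: str, image_bytes: bytes, mask_bytes: bytes) -> str:
    """Serialize an inpainting request body as JSON text (hypothetical helper)."""
    body = {
        "inputs": prompt,
        # base64 output is pure ASCII, so decoding it to str is lossless.
        "image": base64.b64encode(image_bytes).decode("ascii"),
        "mask_image": base64.b64encode(mask_bytes).decode("ascii"),
    }
    return json.dumps(body)
```

For example, `build_payload(prompt, open("dog.png", "rb").read(), open("mask_dog.png", "rb").read())` yields a string that can be sent as the request body with `Content-Type: application/json`.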

Below is an example of how to send a request using Python and `requests`.

Run Request

```python
import base64
from io import BytesIO

import requests as r
from PIL import Image

ENDPOINT_URL = ""  # URL of your deployed Inference Endpoint
HF_TOKEN = ""  # your Hugging Face access token


# helper image utils
def encode_image(image_path):
    """Read an image file and return its contents as a base64 string."""
    with open(image_path, "rb") as i:
        b64 = base64.b64encode(i.read())
    return b64.decode("utf-8")


def predict(prompt, image, mask_image):
    image = encode_image(image)
    mask_image = encode_image(mask_image)

    # prepare sample payload
    payload = {"inputs": prompt, "image": image, "mask_image": mask_image}

    # headers
    headers = {
        "Authorization": f"Bearer {HF_TOKEN}",
        "Content-Type": "application/json",
        "Accept": "image/png",  # important to get an image back
    }

    response = r.post(ENDPOINT_URL, headers=headers, json=payload)
    # Raise on HTTP errors instead of failing later inside Image.open.
    response.raise_for_status()
    img = Image.open(BytesIO(response.content))
    return img


prediction = predict(
    prompt="Face of a bengal cat, high resolution, sitting on a park bench",
    image="dog.png",
    mask_image="mask_dog.png",
)
```

Expected output: `prediction` is a PIL `Image` decoded from the PNG returned by the endpoint.
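The example reads a ready-made `mask_dog.png`. If you need a simple mask, the standard-library sketch below writes an 8-bit grayscale PNG that is white inside a rectangle and black elsewhere. It assumes the handler forwards the mask to diffusers' Stable Diffusion inpainting pipeline, which repaints white pixels and keeps black ones, and that the mask should match the input image's size. `make_rect_mask_png` and its `box` argument are hypothetical names. As a side effect, the sketch shows why every sample value in the payload starts with `iVBORw0KGgoAAAANSUhEUg`: that prefix is the base64 encoding of the PNG signature plus the start of the `IHDR` chunk.

```python
import struct
import zlib


def _chunk(kind: bytes, data: bytes) -> bytes:
    # PNG chunk layout: 4-byte length, 4-byte type, data, CRC-32 over type + data.
    crc = zlib.crc32(kind + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + kind + data + struct.pack(">I", crc)


def make_rect_mask_png(width: int, height: int, box: tuple) -> bytes:
    """Return a grayscale PNG: 255 (white) inside `box`, 0 (black) elsewhere.

    `box` is (left, top, right, bottom) in pixels; right and bottom are exclusive.
    """
    left, top, right, bottom = box
    rows = []
    for y in range(height):
        row = bytearray(width)  # one byte per pixel, all black
        if top <= y < bottom:
            for x in range(max(left, 0), min(right, width)):
                row[x] = 255
        rows.append(b"\x00" + bytes(row))  # each scanline starts with filter type 0
    # IHDR: width, height, bit depth 8, color type 0 (grayscale),
    # compression 0, filter method 0, no interlacing.
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 0, 0, 0, 0)
    return (
        b"\x89PNG\r\n\x1a\n"
        + _chunk(b"IHDR", ihdr)
        + _chunk(b"IDAT", zlib.compress(b"".join(rows)))
        + _chunk(b"IEND", b"")
    )
```

For example, `open("mask_dog.png", "wb").write(make_rect_mask_png(512, 512, (128, 128, 384, 384)))` marks the central square of a 512×512 image for repainting.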
