cmd_product_matcher_steel

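This model is a fine-tuned version of microsoft/deberta-v3-xsmall, trained on product aliases, edits, bill of lading (BoL) history, and additional data. It classifies product descriptions into the nine classes listed under Per-Class Metrics.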

Evaluation Results

Overall Metrics

- Loss: 0.0838
- Accuracy: 0.9800
- Macro F1 Score: 0.9627
- Weighted F1 Score: 0.9800
- Macro Precision: 0.9605
- Macro Recall: 0.9649
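Assuming the standard definitions (as used by scikit-learn's `classification_report`), the macro scores are unweighted means over the K = 9 classes in the per-class table, while the weighted F1 weights each class k by its support n_k. Macro precision and macro recall are the analogous unweighted means of P_k and R_k.

$$
F_{1,k} = \frac{2 P_k R_k}{P_k + R_k}, \qquad
\text{Macro-}F_1 = \frac{1}{K}\sum_{k=1}^{K} F_{1,k}, \qquad
\text{Weighted-}F_1 = \frac{\sum_{k=1}^{K} n_k F_{1,k}}{\sum_{k=1}^{K} n_k}
$$

Under these definitions the small Scrap class (F1 0.8844) pulls the macro F1 below the weighted F1, which is dominated by the large Irrelevant, Steel Pipes, and Steel Coils classes.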

Per-Class Metrics

| Class | Precision | Recall | F1-score | Support (samples) |
|---|---|---|---|---|
| Irrelevant | 0.9830 | 0.9715 | 0.9772 | 66024 |
| Scrap | 0.8921 | 0.8769 | 0.8844 | 2291 |
| Steel Bars/Billets | 0.9818 | 0.9896 | 0.9857 | 14903 |
| Steel Beams | 0.9502 | 0.9854 | 0.9675 | 2731 |
| Steel Coils | 0.9874 | 0.9858 | 0.9866 | 30880 |
| Steel Pipes | 0.9852 | 0.9937 | 0.9895 | 58042 |
| Steel Plate | 0.9462 | 0.9512 | 0.9487 | 7748 |
| Steel Rods | 0.9500 | 0.9641 | 0.9570 | 6760 |
| Steel Slab | 0.9688 | 0.9663 | 0.9676 | 772 |
| Accuracy: 0.9800 | | | | |
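As a consistency check, the overall scores can be recomputed from the per-class table. This is an illustrative sketch, assuming the overall metrics are plain (macro) and support-weighted averages of the per-class values; because it works from the rounded four-decimal figures above, results may differ from the reported ones in the last digit. The names `PER_CLASS`, `f1_from_pr`, and `summarize` are hypothetical helpers, not part of the model.

```python
# Per-class values copied from the table: (precision, recall, f1, support)
PER_CLASS = {
    "Irrelevant": (0.9830, 0.9715, 0.9772, 66024),
    "Scrap": (0.8921, 0.8769, 0.8844, 2291),
    "Steel Bars/Billets": (0.9818, 0.9896, 0.9857, 14903),
    "Steel Beams": (0.9502, 0.9854, 0.9675, 2731),
    "Steel Coils": (0.9874, 0.9858, 0.9866, 30880),
    "Steel Pipes": (0.9852, 0.9937, 0.9895, 58042),
    "Steel Plate": (0.9462, 0.9512, 0.9487, 7748),
    "Steel Rods": (0.9500, 0.9641, 0.9570, 6760),
    "Steel Slab": (0.9688, 0.9663, 0.9676, 772),
}


def f1_from_pr(p, r):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    return 2 * p * r / (p + r) if p + r else 0.0


def summarize(per_class):
    """Macro averages (unweighted) and support-weighted F1 over all classes."""
    rows = list(per_class.values())
    n = len(rows)
    total = sum(s for _, _, _, s in rows)
    return {
        "macro_precision": sum(p for p, _, _, _ in rows) / n,
        "macro_recall": sum(r for _, r, _, _ in rows) / n,
        "macro_f1": sum(f for _, _, f, _ in rows) / n,
        "weighted_f1": sum(f * s for _, _, f, s in rows) / total,
        "support": total,
    }


if __name__ == "__main__":
    # Compare each reported F1 with the harmonic mean of its P and R.
    for name, (p, r, f1, _) in PER_CLASS.items():
        print(f"{name:20s} reported={f1:.4f} recomputed={f1_from_pr(p, r):.4f}")
    for key, value in summarize(PER_CLASS).items():
        print(key, round(value, 4))
```

Run against the table, the recomputed macro F1, macro precision, macro recall, and weighted F1 agree with the overall metrics to within rounding, and the supports sum to 190,151 samples.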

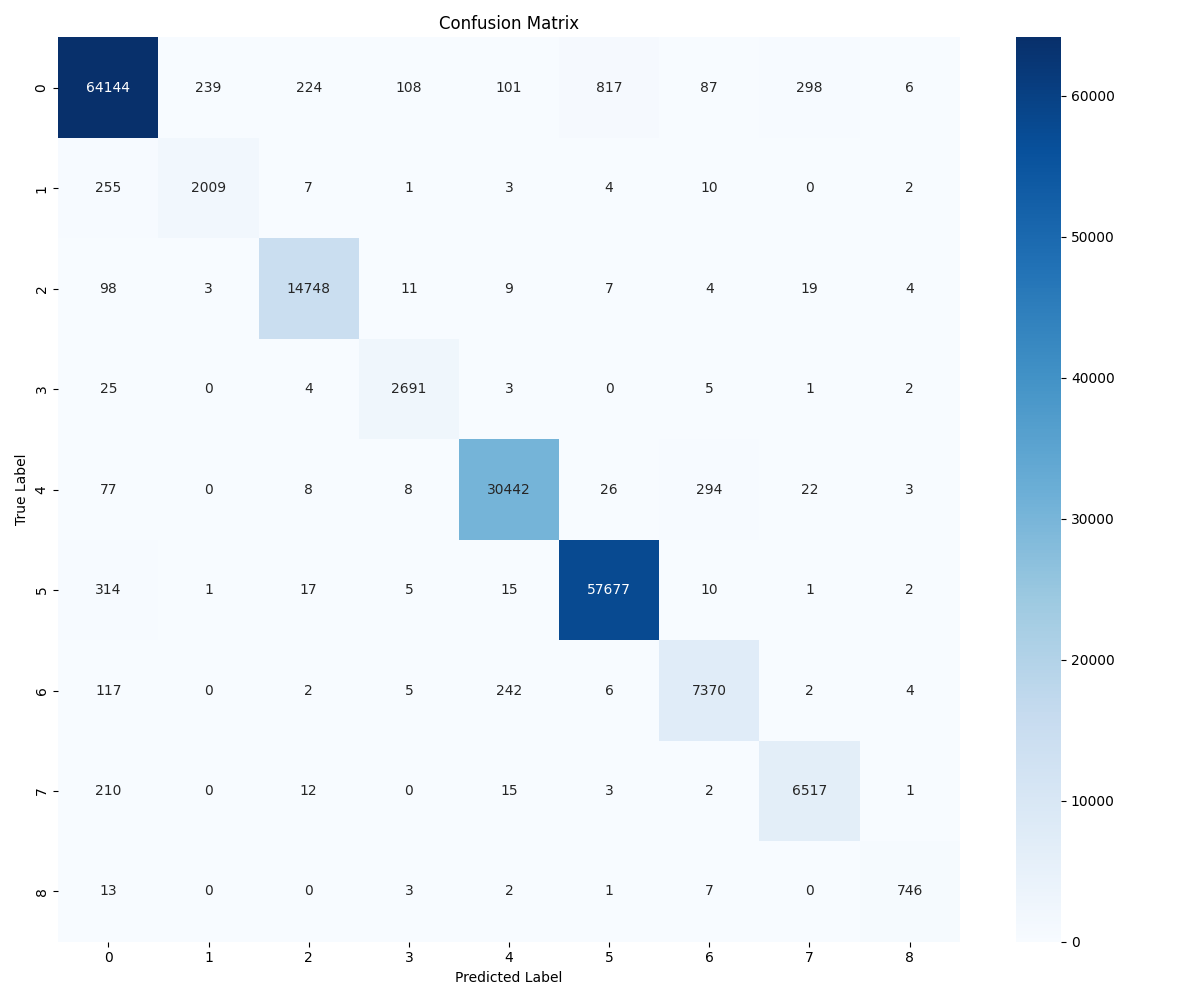

Confusion Matrix

Usage

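```python
import torch
from transformers import AutoTokenizer, AutoModelForSequenceClassification

model_id = "pbaath/cmd_product_matcher_steel"
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForSequenceClassification.from_pretrained(model_id)
model.eval()  # disable dropout for inference

# Example usage: classify a single product description
text = "STEEL BARS ASTM 4959, 23,000 MT"
inputs = tokenizer(text, return_tensors="pt", truncation=True)

with torch.no_grad():
    outputs = model(**inputs)

predicted_id = outputs.logits.argmax(-1).item()
# id2label gives the class name if label names were saved in the model config
print(model.config.id2label[predicted_id])
```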
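The usage example returns only the top class. If a confidence score is also wanted, one option is to apply a softmax to the logits. The sketch below does this in plain Python so it runs without downloading the model; `decode_logits` is a hypothetical helper, and the three-class mapping and logits in the example are made up for illustration (the model itself has nine classes, and its real mapping, if saved, is in `model.config.id2label`).

```python
import math


def decode_logits(logits, id2label):
    """Return (label, probability) for the highest-scoring class.

    `logits` is one row of raw scores, e.g. outputs.logits[0].tolist().
    `id2label` maps class indices to names, e.g. model.config.id2label.
    """
    # Subtract the max logit before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Index of the most probable class (first one wins on ties).
    best = max(range(len(probs)), key=probs.__getitem__)
    return id2label[best], probs[best]


# Hypothetical 3-class mapping and logits, for illustration only.
example_labels = {0: "Irrelevant", 1: "Steel Bars/Billets", 2: "Steel Pipes"}
label, prob = decode_logits([0.1, 3.2, -1.0], example_labels)
print(label, round(prob, 3))
```

If the model config was saved without label names, `id2label` falls back to generic names such as `LABEL_0`, and the mapping to the class names in the table would have to come from the authors.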


Model tree for pbaath/cmd_product_matcher_steel

- Base model: microsoft/deberta-v3-xsmall
- Fine-tuned: this model