Llama-3-6B-Instruct-pruned

Experimental

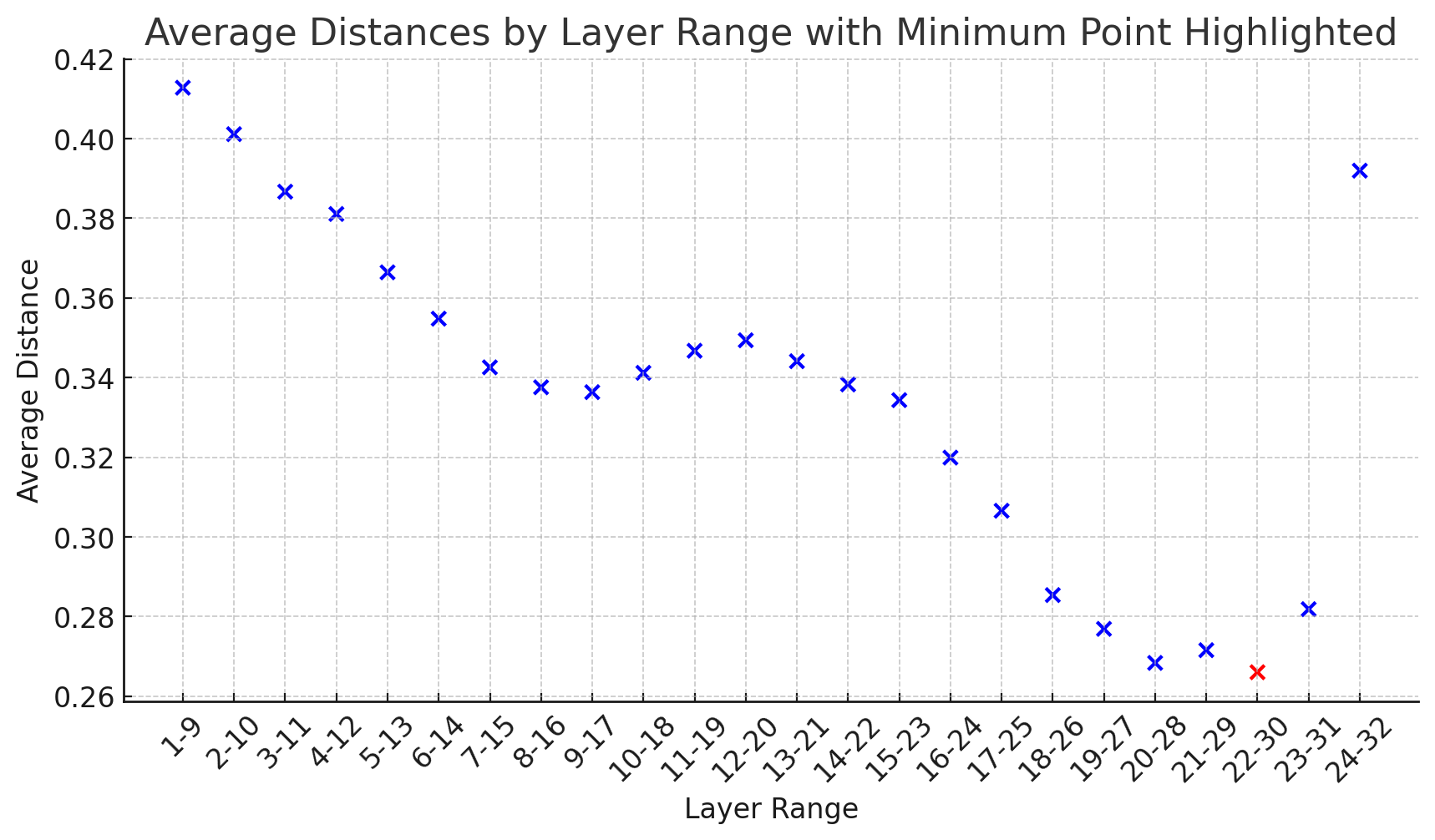

I used PruneMe to find the block of layers with the minimal average distance. Thank you for the awesome toolkit, @arcee-ai!

It shows that pruning layers 22-30 is the best option, but I'm worried about the drastic change between layers 22 and 23.
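To make "minimal average distance" concrete, here is a toy sketch of the idea. It assumes the angular distance between a block's input and output hidden states, as described in the layer-pruning paper. It is not PruneMe's actual code: `angular_distance`, `best_block`, and the tiny two-dimensional `states` data are all hypothetical names and values made up for illustration.

```python
import math

def angular_distance(a, b):
    """Angular distance in [0, 1]: arccos(cosine similarity) / pi."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos = dot / (norm_a * norm_b)
    cos = max(-1.0, min(1.0, cos))  # clamp against floating-point rounding
    return math.acos(cos) / math.pi

def best_block(layer_states, block_size):
    """Find the block whose input and output representations are closest.

    layer_states[l][i] is the hidden vector of sample i at depth l.
    Returns (start_index, mean_distance) for the best block.
    """
    best = None
    for start in range(len(layer_states) - block_size):
        before = layer_states[start]
        after = layer_states[start + block_size]
        mean = sum(angular_distance(a, b) for a, b in zip(before, after)) / len(before)
        if best is None or mean < best[1]:
            best = (start, mean)
    return best

# Toy data (made up): one sample, five depths, 2-d hidden vectors.
states = [
    [[1.0, 0.0]],
    [[0.0, 1.0]],
    [[0.0, 1.0]],
    [[0.1, 1.0]],
    [[1.0, 1.0]],
]
start, mean = best_block(states, block_size=2)
print(start, round(mean, 4))
```

A block with a small mean distance changes the representation little, which is why it is a candidate for removal. A large jump right at the block boundary, like the one between layers 22 and 23 here, is not captured by this single number.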

Disclaimer

I haven't done any post-training yet (the 'healing' process that the paper suggests). I plan to do it later, but there is no guarantee at all.

This is a merge of pre-trained language models created using mergekit.

Merge Details

Merge Method

This model was merged using the passthrough merge method.

Models Merged

The following models were included in the merge:

- meta-llama/Meta-Llama-3-8B-Instruct

Configuration

The following YAML configuration was used to produce this model:

    dtype: bfloat16
    merge_method: passthrough
    slices:
    - sources:
      - layer_range: [0, 21]
        model:
          model:
            path: meta-llama/Meta-Llama-3-8B-Instruct
    - sources:
      - layer_range: [29, 32]
        model:
          model:
            path: meta-llama/Meta-Llama-3-8B-Instruct
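As a quick sanity check on the configuration, the sketch below counts which decoder layers survive the merge. It assumes that mergekit treats `layer_range` as half-open (start included, end excluded) and that the base model has 32 decoder layers. The variable names are hypothetical and this does not call mergekit.

```python
# Slices copied from the configuration.
# Assumption: layer_range is half-open, i.e. [start, end).
slices = [(0, 21), (29, 32)]
TOTAL_LAYERS = 32  # decoder layers in Meta-Llama-3-8B-Instruct

# Zero-based indices of the layers that are copied into the pruned model.
kept = [i for start, end in slices for i in range(start, end)]
kept_set = set(kept)

# Everything not covered by a slice is dropped.
dropped = [i for i in range(TOTAL_LAYERS) if i not in kept_set]

print(f"kept {len(kept)} layers, dropped {len(dropped)}: {dropped}")
```

Under those assumptions, 24 layers are kept and the eight layers with zero-based indices 21 through 28 are dropped.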
