Model description

This repo contains the model and the notebook for natural language image search with a Dual Encoder.

Full credits go to Khalid Salama

Reproduced by Vu Minh Chien

Motivation: build a dual encoder (also known as a two-tower) neural network model to search for images using natural language. The model is inspired by the CLIP approach, introduced by Alec Radford et al. The idea is to train a vision encoder and a text encoder jointly to project the representation of images and their captions into the same embedding space, such that the caption embeddings are located near the embeddings of the images they describe.
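Once images and captions share an embedding space, searching reduces to ranking image embeddings by their similarity to the query embedding. The following is a minimal sketch of that ranking step only, not the training code from the notebook. The toy three-dimensional vectors, the file names, and the helper `search_images` are hypothetical and stand in for the outputs of the trained vision and text encoders; cosine similarity is assumed as the similarity measure.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    a, b = l2_normalize(a), l2_normalize(b)
    return sum(x * y for x, y in zip(a, b))


def search_images(query_embedding, image_embeddings, k=2):
    """Return the k (name, score) pairs most similar to the query embedding."""
    scored = [
        (name, cosine_similarity(query_embedding, emb))
        for name, emb in image_embeddings.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings; in practice these come from the vision encoder.
image_embeddings = {
    "dog.jpg": [0.9, 0.1, 0.0],
    "beach.jpg": [0.1, 0.9, 0.2],
    "city.jpg": [0.0, 0.2, 0.9],
}

# Hypothetical text-encoder output for a query such as "a dog in a park".
query_embedding = [0.8, 0.2, 0.1]

results = search_images(query_embedding, image_embeddings, k=2)
for name, score in results:
    print(f"{name}: {score:.3f}")
```

With a trained model, the image embeddings would typically be computed once and stored, so that each query only requires one pass through the text encoder plus the similarity ranking.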

Training and evaluation data

The MS-COCO dataset was used to train the dual encoder model. MS-COCO contains over 82,000 images, each of which has at least 5 different caption annotations. The dataset is usually used for image captioning tasks, but in this tutorial, the image-caption pairs were used to train the dual encoder model for image search.

In this example, the training set size is set to 30,000 images, which is about 36% of the dataset.

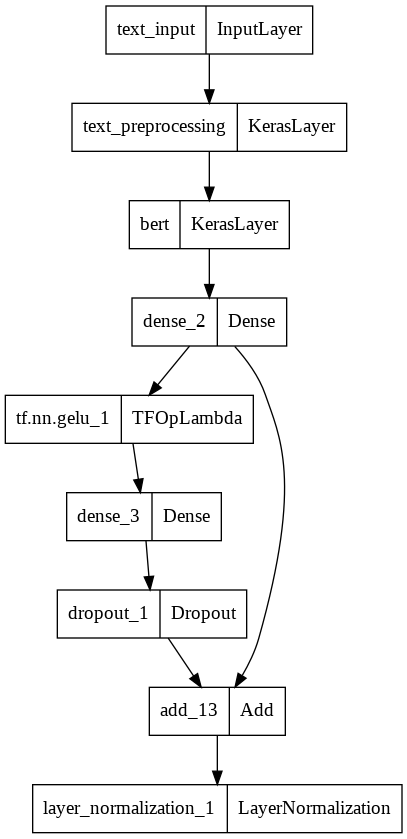

Model Plot
