Links for Reference

- Repository: https://github.com/kaistAI/LangBridge

- Paper: LangBridge: Multilingual Reasoning Without Multilingual Supervision

- Point of Contact: dkyoon@kaist.ac.kr

TL;DR

🤔 LMs good at reasoning are mostly English-centric (MetaMath, Orca 2, etc.).

😃 Let’s adapt them to solve multilingual tasks, BUT without using multilingual data!

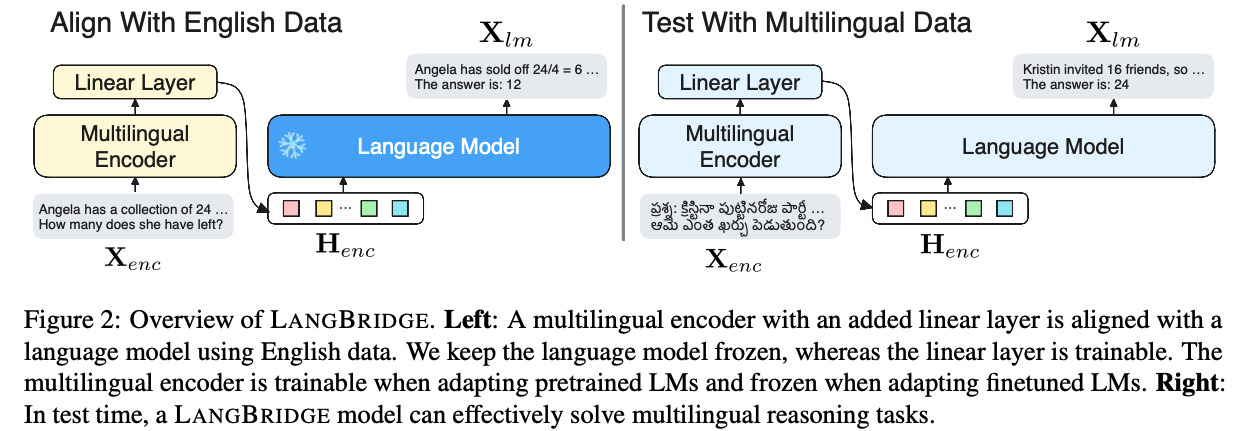

LangBridge “bridges” the mT5 encoder and the target LM while using only English data. At test time, LangBridge models can solve multilingual reasoning tasks effectively.

Usage

This is the tokenizer used for the encoder models of LangBridge. LangBridge models require two tokenizers: one for the encoder model and one for the language model. To the best of our knowledge, there is no way to upload two tokenizers for a single model, so this separate repository was created.
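As a rough illustration of the two-tokenizer setup described above, the sketch below loads both tokenizers and keeps them together. It assumes the Hugging Face `transformers` library; the helper `load_tokenizer_pair`, the `TokenizerPair` class, and both repository IDs are illustrative assumptions rather than part of LangBridge, so check the actual names against the GitHub repository.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional

# Assumed repository IDs, for illustration only; verify them before use.
ENCODER_TOKENIZER_REPO = "kaist-ai/langbridge_encoder_tokenizer"
LM_TOKENIZER_REPO = "kaist-ai/metamath-langbridge-9b"


@dataclass
class TokenizerPair:
    """Hypothetical container for the two tokenizers a LangBridge model needs."""
    encoder: Any  # tokenizer for the multilingual (mT5) encoder
    lm: Any       # tokenizer for the target language model


def load_tokenizer_pair(
    encoder_repo: str = ENCODER_TOKENIZER_REPO,
    lm_repo: str = LM_TOKENIZER_REPO,
    loader: Optional[Callable[[str], Any]] = None,
) -> TokenizerPair:
    """Load the encoder and LM tokenizers from two separate repositories.

    `loader` defaults to `AutoTokenizer.from_pretrained`; it is a parameter
    only so the function can be exercised without network access.
    """
    if loader is None:
        # Imported lazily so the module itself has no hard dependency.
        from transformers import AutoTokenizer
        loader = AutoTokenizer.from_pretrained
    return TokenizerPair(encoder=loader(encoder_repo), lm=loader(lm_repo))
```

Calling `load_tokenizer_pair()` with no arguments would download both tokenizers; the encoder tokenizer handles the multilingual input, while the LM tokenizer is the one associated with the target language model.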

Please refer to the GitHub repository for detailed usage examples.

Related Models

Check out other LangBridge models.

We have:

- Llama 2

- Llemma

- MetaMath

- Code Llama

- Orca 2

Citation

If you find the following model helpful, please consider citing our paper!

BibTeX:

@misc{yoon2024langbridge,
      title={LangBridge: Multilingual Reasoning Without Multilingual Supervision},
      author={Dongkeun Yoon and Joel Jang and Sungdong Kim and Seungone Kim and Sheikh Shafayat and Minjoon Seo},
      year={2024},
      eprint={2401.10695},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}