[ECCV 2024] OneRestore: A Universal Restoration Framework for Composite Degradation


Yu Guo† , Yuan Gao† , Yuxu Lu, Huilin Zhu, Ryan Wen Liu* , Shengfeng He*

(† Co-first Author, * Corresponding Author)

European Conference on Computer Vision

Abstract: In real-world scenarios, image impairments often manifest as composite degradations, presenting a complex interplay of elements such as low light, haze, rain, and snow. Despite this reality, existing restoration methods typically target isolated degradation types, thereby falling short in environments where multiple degrading factors coexist. To bridge this gap, our study proposes a versatile imaging model that consolidates four physical corruption paradigms to accurately represent complex, composite degradation scenarios. In this context, we propose OneRestore, a novel transformer-based framework designed for adaptive, controllable scene restoration. The proposed framework leverages a unique cross-attention mechanism, merging degraded scene descriptors with image features, allowing for nuanced restoration. Our model allows versatile input scene descriptors, ranging from manual text embeddings to automatic extractions based on visual attributes. Our methodology is further enhanced through a composite degradation restoration loss, using extra degraded images as negative samples to fortify model constraints. Comparative results on synthetic and real-world datasets demonstrate OneRestore as a superior solution, significantly advancing the state-of-the-art in addressing complex, composite degradations.

News 🚀

- 2024.09.07: Hugging Face Demo is released.

- 2024.09.05: Video and poster are released.

- 2024.09.04: Code for data synthesis is released.

- 2024.07.27: Code for multi-GPU training is released.

- 2024.07.20: New Website has been created.

- 2024.07.10: Paper is released on ArXiv.

- 2024.07.07: Code and Dataset are released.

- 2024.07.02: OneRestore is accepted by ECCV2024.

Network Architecture

Quick Start

Install

- python 3.7

- cuda 11.7

# git clone this repository

git clone https://github.com/gy65896/OneRestore.git

cd OneRestore

# create new anaconda env

conda create -n onerestore python=3.7

conda activate onerestore

# download ckpts

# (manual step) put embedder_model.tar and onerestore_cdd-11.tar in the ./ckpts folder

# install pytorch (Take cuda 11.7 as an example to install torch 1.13)

pip install torch==1.13.0+cu117 torchvision==0.14.0+cu117 torchaudio==0.13.0 --extra-index-url https://download.pytorch.org/whl/cu117

# install other packages

pip install -r requirements.txt

pip install gensim

Pretrained Models

Please download our pre-trained models and put them in ./ckpts.

| Model | Description |

|---|---|

| embedder_model.tar | Text/Visual Embedder trained on our CDD-11. |

| onerestore_cdd-11.tar | OneRestore trained on our CDD-11. |

| onerestore_real.tar | OneRestore trained on our CDD-11 for Real Scenes. |

| onerestore_lol.tar | OneRestore trained on LOL (low light enhancement benchmark). |

| onerestore_reside_ots.tar | OneRestore trained on RESIDE-OTS (image dehazing benchmark). |

| onerestore_rain1200.tar | OneRestore trained on Rain1200 (image deraining benchmark). |

| onerestore_snow100k.tar | OneRestore trained on Snow100k-L (image desnowing benchmark). |

Inference

We provide two sample images in ./image for quick inference:

python test.py --embedder-model-path ./ckpts/embedder_model.tar --restore-model-path ./ckpts/onerestore_cdd-11.tar --input ./image/ --output ./output/ --concat

You can also input the prompt to perform controllable restoration. For example:

python test.py --embedder-model-path ./ckpts/embedder_model.tar --restore-model-path ./ckpts/onerestore_cdd-11.tar --prompt low_haze --input ./image/ --output ./output/ --concat
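
The prompt in the example above follows the same naming pattern as the CDD-11 degradation folders (single types joined with underscores in the order low, haze, rain/snow). The sketch below composes such a name from a set of single degradations. `build_prompt` is a hypothetical helper, not part of the repository, and treating the 11 folder names as the accepted `--prompt` values is an assumption.

```python
# The 11 names below are the CDD-11 degradation folder names from this README.
# Assumption: these are also the values accepted by --prompt.
CDD11_TYPES = [
    "low", "haze", "rain", "snow",
    "low_haze", "low_rain", "low_snow", "haze_rain", "haze_snow",
    "low_haze_rain", "low_haze_snow",
]

# Ordering used by the folder names: low, then haze, then rain or snow.
_ORDER = ["low", "haze", "rain", "snow"]


def build_prompt(degradations):
    """Join single degradations into a CDD-11 style name (hypothetical helper)."""
    selected = set(degradations)
    unknown = selected - set(_ORDER)
    if unknown:
        raise ValueError(f"unknown degradation(s): {sorted(unknown)}")
    name = "_".join(d for d in _ORDER if d in selected)
    if name not in CDD11_TYPES:
        raise ValueError(f"{name!r} is not one of the 11 CDD-11 types")
    return name
```

For instance, `build_prompt(["haze", "low"])` yields the `low_haze` string used in the command above, while a combination such as rain plus snow is rejected because CDD-11 has no such type.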

Training

Prepare Dataset

We provide the download link of our Composite Degradation Dataset with 11 types of degradation (CDD-11).

Prepare the train and test datasets as follows:

./data/

|--train

| |--clear

| | |--000001.png

| | |--000002.png

| |--low

| |--haze

| |--rain

| |--snow

| |--low_haze

| |--low_rain

| |--low_snow

| |--haze_rain

| |--haze_snow

| |--low_haze_rain

| |--low_haze_snow

|--test
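
Before training, it may help to confirm that a split directory contains the clear folder and all 11 degradation folders from the tree above. The following is a minimal sketch using only the standard library. `missing_subdirs` is a hypothetical helper, and the idea that the test split mirrors the train layout is an assumption.

```python
from pathlib import Path

# Folder names taken from the directory tree in this README.
EXPECTED_SUBDIRS = [
    "clear",
    "low", "haze", "rain", "snow",
    "low_haze", "low_rain", "low_snow", "haze_rain", "haze_snow",
    "low_haze_rain", "low_haze_snow",
]


def missing_subdirs(split_dir):
    """Return the expected sub-folders that are absent from split_dir."""
    root = Path(split_dir)
    return [name for name in EXPECTED_SUBDIRS if not (root / name).is_dir()]


if __name__ == "__main__":
    # Assumption: the dataset was unpacked to ./data/train as shown above.
    missing = missing_subdirs("./data/train")
    print("missing folders:", missing if missing else "none")
```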

Train Model

1. Train Text/Visual Embedder by

python train_Embedder.py --train-dir ./data/CDD-11_train --test-dir ./data/CDD-11_test --check-dir ./ckpts --batch 256 --num-workers 0 --epoch 200 --lr 1e-4 --lr-decay 50

2. Remove the optimizer weights in the Embedder model file by

python remove_optim.py --type Embedder --input-file ./ckpts/embedder_model.tar --output-file ./ckpts/embedder_model.tar

3. Generate the dataset.h5 file for training OneRestore by

python makedataset.py --train-path ./data/CDD-11_train --data-name dataset.h5 --patch-size 256 --stride 200
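
To get a feel for how `--patch-size 256 --stride 200` affects the size of dataset.h5, the sketch below counts patches for a plain sliding window without padding. Whether makedataset.py crops in exactly this way is an assumption, and the function names are hypothetical.

```python
def patches_per_axis(size, patch_size=256, stride=200):
    """Number of window positions along one axis (no padding)."""
    if size < patch_size:
        return 0
    return (size - patch_size) // stride + 1


def patches_per_image(height, width, patch_size=256, stride=200):
    """Total patches cut from one image by a sliding window."""
    rows = patches_per_axis(height, patch_size, stride)
    cols = patches_per_axis(width, patch_size, stride)
    return rows * cols


if __name__ == "__main__":
    # Illustrative size only; the real image resolution is not stated here.
    print(patches_per_image(720, 1080))
```

Under these assumptions a 1080x720 image gives 3 rows by 5 columns of patches, and neighbouring patches overlap by 56 pixels because the stride is smaller than the patch size.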

4. Train OneRestore model by

- Single GPU

python train_OneRestore_single-gpu.py --embedder-model-path ./ckpts/embedder_model.tar --save-model-path ./ckpts --train-input ./dataset.h5 --test-input ./data/CDD-11_test --output ./result/ --epoch 120 --bs 4 --lr 1e-4 --adjust-lr 30 --num-works 4

- Multiple GPUs

Assuming you train the OneRestore model on 4 GPUs (e.g., 0, 1, 2, and 3), you can use the following command. Note that nproc_per_node must equal the number of GPUs, and that the device list must not contain spaces.

CUDA_VISIBLE_DEVICES=0,1,2,3 torchrun --nproc_per_node=4 train_OneRestore_multi-gpu.py --embedder-model-path ./ckpts/embedder_model.tar --save-model-path ./ckpts --train-input ./dataset.h5 --test-input ./data/CDD-11_test --output ./result/ --epoch 120 --bs 4 --lr 1e-4 --adjust-lr 30 --num-works 4
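
The device list has to be a single shell token (commas without spaces) for the environment assignment to apply to torchrun, and its length has to match `--nproc_per_node`. The sketch below derives both from one string so they cannot drift apart. `torchrun_prefix` is a hypothetical helper, not part of the repository.

```python
def parse_visible_devices(value):
    """Split a device list such as '0, 1, 2, 3' into clean IDs."""
    return [item.strip() for item in value.split(",") if item.strip()]


def torchrun_prefix(value):
    """Build the launch prefix with a matching process count."""
    devices = parse_visible_devices(value)
    if not devices:
        raise ValueError("no GPU IDs given")
    joined = ",".join(devices)  # no spaces, so the shell sees one token
    return f"CUDA_VISIBLE_DEVICES={joined} torchrun --nproc_per_node={len(devices)}"


if __name__ == "__main__":
    print(torchrun_prefix("0, 1, 2, 3"))
```

The printed prefix can be followed by `train_OneRestore_multi-gpu.py` and the same arguments as in the command above.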

5. Remove the optimizer weights in the OneRestore model file by

python remove_optim.py --type OneRestore --input-file ./ckpts/onerestore_model.tar --output-file ./ckpts/onerestore_model.tar

Customize your own composite degradation dataset

1. Prepare raw data

- Collect your own clear images.

- Generate the depth map based on MegaDepth.

- Generate the light map based on LIME.

- Generate the rain mask database based on RainStreakGen.

- Download the snow mask database from Snow100k.

A generated example is as follows:

[Figure: example Clear Image, Depth Map, Light Map, Rain Mask, and Snow Mask]

(Note: The rain and snow masks do not require strict alignment with the image.)

- Prepare the dataset as follows:

./syn_data/

|--data

| |--clear

| | |--000001.png

| | |--000002.png

| |--depth_map

| | |--000001.png

| | |--000002.png

| |--light_map

| | |--000001.png

| | |--000002.png

| |--rain_mask

| | |--aaaaaa.png

| | |--bbbbbb.png

| |--snow_mask

| | |--cccccc.png

| | |--dddddd.png

|--out

2. Generate composite degradation images

- low+haze+rain

python syn_data.py --hq-file ./data/clear/ --light-file ./data/light_map/ --depth-file ./data/depth_map/ --rain-file ./data/rain_mask/ --snow-file ./data/snow_mask/ --out-file ./out/ --low --haze --rain

- low+haze+snow

python syn_data.py --hq-file ./data/clear/ --light-file ./data/light_map/ --depth-file ./data/depth_map/ --rain-file ./data/rain_mask/ --snow-file ./data/snow_mask/ --out-file ./out/ --low --haze --snow

(Note: The degradation types can be customized according to specific needs.)

[Figure: Clear Image, low+haze+rain, and low+haze+snow examples]
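
To illustrate why a depth map is needed for the haze component, the sketch below applies the standard atmospheric scattering model, I = J·t + A·(1 − t) with t = exp(−β·d), to a single pixel value in [0, 1]. This is a generic textbook formulation; whether syn_data.py uses exactly this form is an assumption, and the `beta` and `airlight` defaults are hypothetical values chosen for illustration.

```python
import math


def transmission(depth, beta=1.0):
    """Transmission t = exp(-beta * depth); deeper pixels transmit less."""
    return math.exp(-beta * depth)


def add_haze(pixel, depth, airlight=0.8, beta=1.0):
    """Blend a clear pixel with the airlight according to scene depth.

    pixel and airlight are intensities in [0, 1]; depth is non-negative.
    """
    t = transmission(depth, beta)
    return pixel * t + airlight * (1.0 - t)


if __name__ == "__main__":
    for depth in (0.0, 0.5, 2.0):
        print(depth, round(add_haze(0.2, depth), 4))
```

At zero depth the pixel is unchanged, and as depth grows the value approaches the airlight, which is the washed-out look of distant objects in haze.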

Performance

CDD-11

| Types | Methods | Venue & Year | PSNR ↑ | SSIM ↑ | #Params |

|---|---|---|---|---|---|

| Input | Input | - | 16.00 | 0.6008 | - |

| One-to-One | MIRNet | ECCV2020 | 25.97 | 0.8474 | 31.79M |

| One-to-One | MPRNet | CVPR2021 | 25.47 | 0.8555 | 15.74M |

| One-to-One | MIRNetv2 | TPAMI2022 | 25.37 | 0.8335 | 5.86M |

| One-to-One | Restormer | CVPR2022 | 26.99 | 0.8646 | 26.13M |

| One-to-One | DGUNet | CVPR2022 | 26.92 | 0.8559 | 17.33M |

| One-to-One | NAFNet | ECCV2022 | 24.13 | 0.7964 | 17.11M |

| One-to-One | SRUDC | ICCV2023 | 27.64 | 0.8600 | 6.80M |

| One-to-One | Fourmer | ICML2023 | 23.44 | 0.7885 | 0.55M |

| One-to-One | OKNet | AAAI2024 | 26.33 | 0.8605 | 4.72M |

| One-to-Many | AirNet | CVPR2022 | 23.75 | 0.8140 | 8.93M |

| One-to-Many | TransWeather | CVPR2022 | 23.13 | 0.7810 | 21.90M |

| One-to-Many | WeatherDiff | TPAMI2023 | 22.49 | 0.7985 | 82.96M |

| One-to-Many | PromptIR | NIPS2023 | 25.90 | 0.8499 | 38.45M |

| One-to-Many | WGWSNet | CVPR2023 | 26.96 | 0.8626 | 25.76M |

| One-to-Composite | OneRestore | ECCV2024 | 28.47 | 0.8784 | 5.98M |

| One-to-Composite | OneRestore† | ECCV2024 | 28.72 | 0.8821 | 5.98M |

Indicator calculation code and numerical results can be downloaded here.
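
For readers unfamiliar with the PSNR column, the sketch below shows the usual definition, PSNR = 10·log10(MAX² / MSE), on flat lists of pixel values. It is a generic illustration and not the authors' indicator code, which may differ in details such as color space or data range.

```python
import math


def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists."""
    if len(reference) != len(restored) or not reference:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((r - o) ** 2 for r, o in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    clear = [100, 100, 100, 100]
    output = [110, 90, 100, 100]
    print(round(psnr(clear, output), 2))
```

Higher values mean the restored image is closer to the clear reference, which is why the table marks PSNR with an upward arrow.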

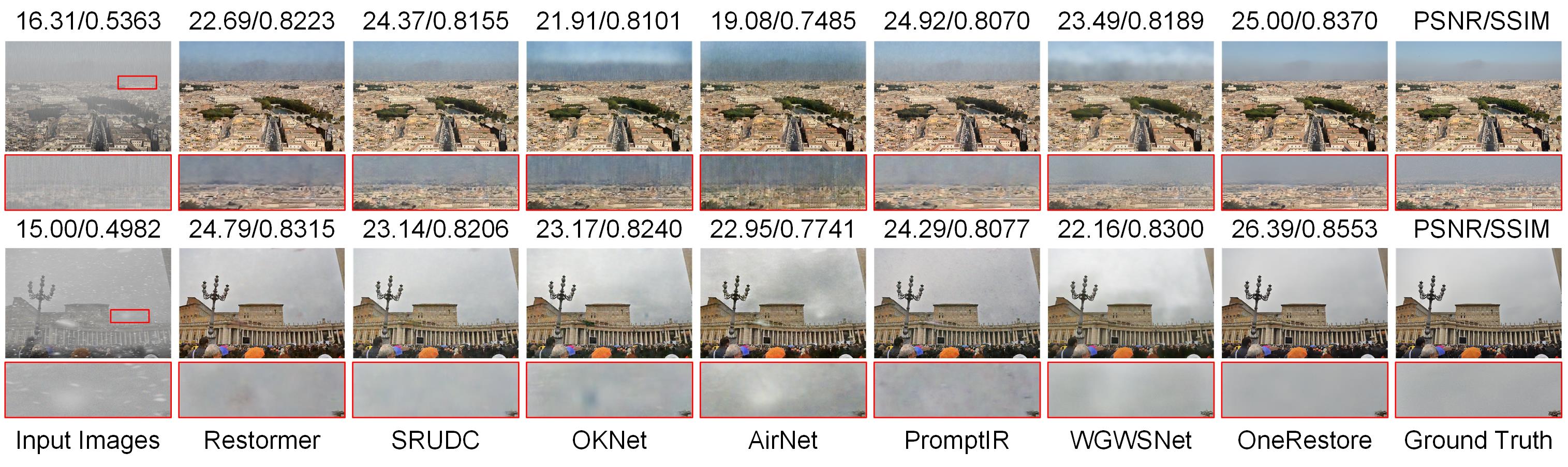

Real Scene

Controllability

Citation

@inproceedings{guo2024onerestore,

title={OneRestore: A Universal Restoration Framework for Composite Degradation},

author={Guo, Yu and Gao, Yuan and Lu, Yuxu and Zhu, Huilin and Liu, Ryan Wen and He, Shengfeng},

booktitle={European Conference on Computer Vision},

year={2024}

}

If you have any questions, please get in touch with me (guoyu65896@gmail.com).
