
Zero-Shot Text-to-Video Generation


Overview

Text2Video-Zero: Text-to-Image Diffusion Models are Zero-Shot Video Generators by Levon Khachatryan, Andranik Movsisyan, Vahram Tadevosyan, Roberto Henschel, Zhangyang Wang, Shant Navasardyan, Humphrey Shi.

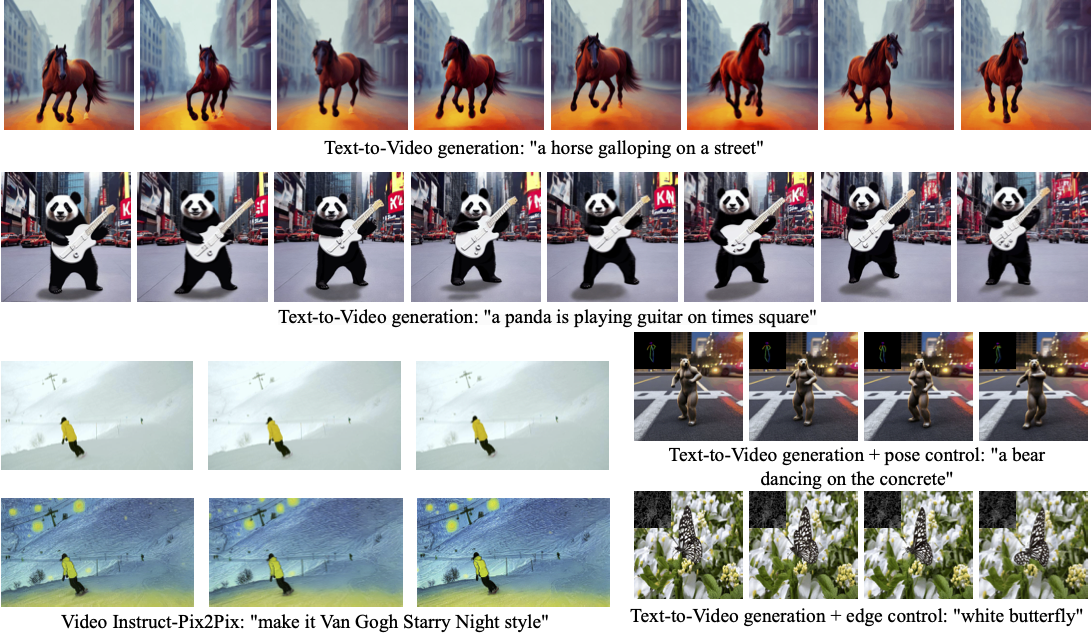

Our method Text2Video-Zero enables zero-shot video generation using either

- A textual prompt, or

- A prompt combined with guidance from poses or edges, or

- Video Instruct-Pix2Pix, i.e., instruction-guided video editing.

Results are temporally consistent and closely follow the guidance and textual prompts.

The abstract of the paper is the following:

Recent text-to-video generation approaches rely on computationally heavy training and require large-scale video datasets. In this paper, we introduce a new task of zero-shot text-to-video generation and propose a low-cost approach (without any training or optimization) by leveraging the power of existing text-to-image synthesis methods (e.g., Stable Diffusion), making them suitable for the video domain. Our key modifications include (i) enriching the latent codes of the generated frames with motion dynamics to keep the global scene and the background time consistent; and (ii) reprogramming frame-level self-attention using a new cross-frame attention of each frame on the first frame, to preserve the context, appearance, and identity of the foreground object. Experiments show that this leads to low overhead, yet high-quality and remarkably consistent video generation. Moreover, our approach is not limited to text-to-video synthesis but is also applicable to other tasks such as conditional and content-specialized video generation, and Video Instruct-Pix2Pix, i.e., instruction-guided video editing. As experiments show, our method performs comparably or sometimes better than recent approaches, despite not being trained on additional video data.

Resources:

Available Pipelines:

| Pipeline | Tasks | Demo |
|---|---|---|
| TextToVideoZeroPipeline | Zero-shot Text-to-Video Generation | 🤗 Space |

Usage example

Text-To-Video

To generate a video from a prompt, run the following Python code:

import torch
import imageio
from diffusers import TextToVideoZeroPipeline

model_id = "runwayml/stable-diffusion-v1-5"
pipe = TextToVideoZeroPipeline.from_pretrained(model_id, torch_dtype=torch.float16).to("cuda")

prompt = "A panda is playing guitar on times square"
result = pipe(prompt=prompt).images
# Frames are floats in [0, 1]; convert them to 8-bit images before writing the video
result = [(r * 255).astype("uint8") for r in result]
imageio.mimsave("video.mp4", result, fps=4)

You can change these parameters in the pipeline call:

- Motion field strength (see the paper, Sect. 3.3.1): motion_field_strength_x and motion_field_strength_y. Default: motion_field_strength_x=12, motion_field_strength_y=12

- T and T' (see the paper, Sect. 3.3.1): t0 and t1 in the range {0, ..., num_inference_steps - 1}, with t0 < t1. Default: t0=44, t1=47

- Video length: video_length, the number of frames to be generated. Default: video_length=8
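The following rough NumPy sketch builds intuition for motion_field_strength_x and motion_field_strength_y. It illustrates the idea in Sect. 3.3.1: every frame starts from the first frame's latent code, translated by an offset that grows linearly with the frame index. It is a simplification, not the pipeline's code. The helper translate_latents is hypothetical. It assumes the strengths are pixel offsets at 512x512 resolution (hence the division by a VAE scale factor of 8). It also uses wrap-around integer shifts (np.roll) where the paper describes a warping operation, and it omits the step where the paper applies this translation between the timesteps selected by t0 and t1.

```python
import numpy as np


def translate_latents(first_latent, video_length, strength_x=12.0, strength_y=12.0, vae_scale_factor=8):
    """Return video_length copies of first_latent, each shifted a bit further.

    first_latent: array of shape (channels, height, width).
    Frame k is shifted by k * strength / vae_scale_factor latent cells per axis,
    rounded half up. np.roll wraps content around the border (a simplification).
    """
    frames = []
    for k in range(video_length):
        dx = int(np.floor(k * strength_x / vae_scale_factor + 0.5))
        dy = int(np.floor(k * strength_y / vae_scale_factor + 0.5))
        # axis 1 is the vertical (y) axis, axis 2 the horizontal (x) axis
        frames.append(np.roll(first_latent, shift=(dy, dx), axis=(1, 2)))
    return np.stack(frames)  # shape: (video_length, channels, height, width)


# Example: 8 frames from a 4x64x64 latent, i.e. a 512x512 image
latent = np.random.default_rng(0).standard_normal((4, 64, 64))
video_latents = translate_latents(latent, video_length=8)
print(video_latents.shape)  # (8, 4, 64, 64)
```

Larger strengths move the content further per frame. With the defaults of 12, each frame moves by 1.5 latent cells (12 pixels at 512x512) along each axis relative to the previous one under this sketch's assumptions.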

Text-To-Video with Pose Control

To generate a video from a prompt with additional pose control:

Download a demo video

from huggingface_hub import hf_hub_download

filename = "__assets__/poses_skeleton_gifs/dance1_corr.mp4"
repo_id = "PAIR/Text2Video-Zero"
video_path = hf_hub_download(repo_type="space", repo_id=repo_id, filename=filename)

Read video containing extracted pose images

from PIL import Image
import imageio

reader = imageio.get_reader(video_path, "ffmpeg")
frame_count = 8
pose_images = [Image.fromarray(reader.get_data(i)) for i in range(frame_count)]

To extract poses from an actual video, read the ControlNet documentation.

Run StableDiffusionControlNetPipeline with our custom attention processor

import imageio
import numpy as np
import torch
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel
from diffusers.pipelines.text_to_video_synthesis.pipeline_text_to_video_zero import CrossFrameAttnProcessor

model_id = "runwayml/stable-diffusion-v1-5"
controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-openpose", torch_dtype=torch.float16)
pipe = StableDiffusionControlNetPipeline.from_pretrained(
    model_id, controlnet=controlnet, torch_dtype=torch.float16
).to("cuda")

# Set the attention processor (batch_size=2: unconditional + conditional copies under classifier-free guidance)
pipe.unet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))
pipe.controlnet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))

# Fix latents for all frames: one 1x4x64x64 noise tensor (512x512 output) shared by every frame
latents = torch.randn((1, 4, 64, 64), device="cuda", dtype=torch.float16).repeat(len(pose_images), 1, 1, 1)

prompt = "Darth Vader dancing in a desert"
result = pipe(prompt=[prompt] * len(pose_images), image=pose_images, latents=latents).images
# Convert the PIL frames to arrays before writing the video
result = [np.array(frame) for frame in result]
imageio.mimsave("video.mp4", result, fps=4)
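The batch_size argument of CrossFrameAttnProcessor counts how many copies of the video share one UNet batch. With classifier-free guidance and a single prompt, the unconditional and conditional frames are stacked together, hence batch_size=2. The NumPy sketch below illustrates cross-frame attention under that layout (single head, no projection matrices). The batch is split into batch_size chunks, and every frame's queries attend to the keys and values of the first frame of its own chunk. The function names are hypothetical, and this is an illustration of the idea, not the processor's source code.

```python
import numpy as np


def softmax(x, axis=-1):
    # numerically stable softmax
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_frame_attention(q, k, v, batch_size):
    """Attention where each frame uses the keys/values of the first frame in its chunk.

    q, k, v: arrays of shape (batch_size * video_length, tokens, dim), laid out chunk
    by chunk, e.g. [uncond frames..., cond frames...] under classifier-free guidance.
    """
    total, tokens, dim = k.shape
    if total % batch_size != 0:
        raise ValueError("batch dimension must be a multiple of batch_size")
    video_length = total // batch_size
    # (batch_size, video_length, tokens, dim)
    k = k.reshape(batch_size, video_length, tokens, dim)
    v = v.reshape(batch_size, video_length, tokens, dim)
    # replace every frame's keys/values with those of frame 0 of the same chunk
    k = np.repeat(k[:, :1], video_length, axis=1).reshape(total, tokens, dim)
    v = np.repeat(v[:, :1], video_length, axis=1).reshape(total, tokens, dim)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(dim)
    return softmax(scores) @ v
```

Because every frame reads appearance information from the same first frame, the foreground identity tends to stay consistent across frames. This is the effect the abstract attributes to cross-frame attention.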

Text-To-Video with Edge Control

To generate a video from a prompt with additional edge control, follow the steps described above for pose-guided generation, using the Canny edge ControlNet model instead.

Video Instruct-Pix2Pix

To perform text-guided video editing (with InstructPix2Pix):

Download a demo video

from huggingface_hub import hf_hub_download

filename = "__assets__/pix2pix video/camel.mp4"
repo_id = "PAIR/Text2Video-Zero"
video_path = hf_hub_download(repo_type="space", repo_id=repo_id, filename=filename)

Read video from path

from PIL import Image
import imageio

reader = imageio.get_reader(video_path, "ffmpeg")
frame_count = 8
video = [Image.fromarray(reader.get_data(i)) for i in range(frame_count)]

Run StableDiffusionInstructPix2PixPipeline with our custom attention processor

import imageio
import numpy as np
import torch
from diffusers import StableDiffusionInstructPix2PixPipeline
from diffusers.pipelines.text_to_video_synthesis.pipeline_text_to_video_zero import CrossFrameAttnProcessor

model_id = "timbrooks/instruct-pix2pix"
pipe = StableDiffusionInstructPix2PixPipeline.from_pretrained(model_id, torch_dtype=torch.float16).to("cuda")
# batch_size=3: InstructPix2Pix evaluates three guidance branches per frame
pipe.unet.set_attn_processor(CrossFrameAttnProcessor(batch_size=3))

prompt = "make it Van Gogh Starry Night style"
result = pipe(prompt=[prompt] * len(video), image=video).images
# Convert the PIL frames to arrays before writing the video
result = [np.array(frame) for frame in result]
imageio.mimsave("edited_video.mp4", result, fps=4)
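Here batch_size=3 because StableDiffusionInstructPix2PixPipeline evaluates the UNet on three copies of each frame. The sketch below shows how three such noise predictions are combined using the InstructPix2Pix guidance rule. instruct_pix2pix_guidance is a hypothetical helper. image_guidance_scale mirrors the pipeline argument of the same name, and its default of 1.5 is assumed here.

```python
import numpy as np


def instruct_pix2pix_guidance(eps_text, eps_image, eps_uncond, guidance_scale=7.5, image_guidance_scale=1.5):
    """Combine the three noise predictions made for one frame (sketch).

    eps_text:   conditioned on both the edit instruction and the input frame
    eps_image:  conditioned on the input frame only
    eps_uncond: conditioned on neither
    """
    return (
        eps_uncond
        + guidance_scale * (eps_text - eps_image)
        + image_guidance_scale * (eps_image - eps_uncond)
    )
```

Setting both scales to 1 reduces the result to eps_text. Raising image_guidance_scale pulls the output toward the input frame, and raising guidance_scale pulls it toward the instruction.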

DreamBooth specialization

The Text-To-Video, Text-To-Video with Pose Control, and Text-To-Video with Edge Control methods can run with custom DreamBooth models, as shown below for the Canny edge ControlNet model and the Avatar-style DreamBooth model:

Download a demo video

from huggingface_hub import hf_hub_download

filename = "__assets__/canny_videos_mp4/girl_turning.mp4"
repo_id = "PAIR/Text2Video-Zero"
video_path = hf_hub_download(repo_type="space", repo_id=repo_id, filename=filename)

Read video from path

from PIL import Image
import imageio

reader = imageio.get_reader(video_path, "ffmpeg")
frame_count = 8
# The frames of this demo video are used directly as the Canny edge conditioning images
canny_edges = [Image.fromarray(reader.get_data(i)) for i in range(frame_count)]

Run StableDiffusionControlNetPipeline with a custom-trained DreamBooth model

import imageio
import numpy as np
import torch
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel
from diffusers.pipelines.text_to_video_synthesis.pipeline_text_to_video_zero import CrossFrameAttnProcessor

# Set the model id to the custom DreamBooth model
model_id = "PAIR/text2video-zero-controlnet-canny-avatar"
controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)
pipe = StableDiffusionControlNetPipeline.from_pretrained(
    model_id, controlnet=controlnet, torch_dtype=torch.float16
).to("cuda")

# Set the attention processor
pipe.unet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))
pipe.controlnet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))

# Fix latents for all frames
latents = torch.randn((1, 4, 64, 64), device="cuda", dtype=torch.float16).repeat(len(canny_edges), 1, 1, 1)

prompt = "oil painting of a beautiful girl avatar style"
result = pipe(prompt=[prompt] * len(canny_edges), image=canny_edges, latents=latents).images
# Convert the PIL frames to arrays before writing the video
result = [np.array(frame) for frame in result]
imageio.mimsave("video.mp4", result, fps=4)

You can find some available DreamBooth-trained models with this link.

TextToVideoZeroPipeline

class diffusers.TextToVideoZeroPipeline

( vae: AutoencoderKL, text_encoder: CLIPTextModel, tokenizer: CLIPTokenizer, unet: UNet2DConditionModel, scheduler: KarrasDiffusionSchedulers, safety_checker: StableDiffusionSafetyChecker, feature_extractor: CLIPImageProcessor, requires_safety_checker: bool = True )

Parameters

- vae (AutoencoderKL): Variational Auto-Encoder (VAE) model to encode and decode images to and from latent representations.

- text_encoder (CLIPTextModel): Frozen text encoder. Stable Diffusion uses the text portion of CLIP, specifically the clip-vit-large-patch14 variant.

- tokenizer (CLIPTokenizer): Tokenizer of class CLIPTokenizer.

- unet (UNet2DConditionModel): Conditional U-Net architecture to denoise the encoded image latents.

- scheduler (SchedulerMixin): A scheduler to be used in combination with unet to denoise the encoded image latents. Can be one of DDIMScheduler, LMSDiscreteScheduler, or PNDMScheduler.

- safety_checker (StableDiffusionSafetyChecker): Classification module that estimates whether generated images could be considered offensive or harmful. Please refer to the model card for details.

- feature_extractor (CLIPImageProcessor): Model that extracts features from generated images to be used as inputs for the safety_checker.

Pipeline for zero-shot text-to-video generation using Stable Diffusion.

This pipeline inherits from StableDiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all pipelines (such as downloading or saving, or running on a particular device).

__call__

( prompt: typing.Union[str, typing.List[str]], video_length: typing.Optional[int] = 8, height: typing.Optional[int] = None, width: typing.Optional[int] = None, num_inference_steps: int = 50, guidance_scale: float = 7.5, negative_prompt: typing.Union[str, typing.List[str], NoneType] = None, num_videos_per_prompt: typing.Optional[int] = 1, eta: float = 0.0, generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None, latents: typing.Optional[torch.FloatTensor] = None, motion_field_strength_x: float = 12, motion_field_strength_y: float = 12, output_type: typing.Optional[str] = 'tensor', return_dict: bool = True, callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None, callback_steps: typing.Optional[int] = 1, t0: int = 44, t1: int = 47 ) → ~pipelines.text_to_video_synthesis.TextToVideoPipelineOutput

Parameters

- prompt (str or List[str], optional): The prompt or prompts to guide the image generation. If not defined, one has to pass prompt_embeds instead.

- video_length (int, optional, defaults to 8): The number of generated video frames.

- height (int, optional, defaults to self.unet.config.sample_size * self.vae_scale_factor): The height in pixels of the generated image.

- width (int, optional, defaults to self.unet.config.sample_size * self.vae_scale_factor): The width in pixels of the generated image.

- num_inference_steps (int, optional, defaults to 50): The number of denoising steps. More denoising steps usually lead to a higher-quality image at the expense of slower inference.

- guidance_scale (float, optional, defaults to 7.5): Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- negative_prompt (str or List[str], optional): The prompt or prompts not to guide the image generation. If not defined, one has to pass negative_prompt_embeds instead. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- num_videos_per_prompt (int, optional, defaults to 1): The number of videos to generate per prompt.

- eta (float, optional, defaults to 0.0): Corresponds to parameter eta (η) in the DDIM paper: https://arxiv.org/abs/2010.02502. Only applies to schedulers.DDIMScheduler; ignored for others.

- generator (torch.Generator or List[torch.Generator], optional): One or a list of torch generator(s) to make generation deterministic.

- latents (torch.FloatTensor, optional): Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.

- output_type (str, optional, defaults to "numpy"): The output format of the generated image. Choose between "latent" and "numpy".

- return_dict (bool, optional, defaults to True): Whether or not to return a TextToVideoPipelineOutput instead of a plain tuple.

- callback (Callable, optional): A function that will be called every callback_steps steps during inference. The function will be called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).

- callback_steps (int, optional, defaults to 1): The frequency at which the callback function will be called. If not specified, the callback will be called at every step.

- motion_field_strength_x (float, optional, defaults to 12): Strength of motion in the generated video along the x-axis. See the paper, Sect. 3.3.1.

- motion_field_strength_y (float, optional, defaults to 12): Strength of motion in the generated video along the y-axis. See the paper, Sect. 3.3.1.

- t0 (int, optional, defaults to 44): Timestep t0. Should be in the range [0, num_inference_steps - 1]. See the paper, Sect. 3.3.1.

- t1 (int, optional, defaults to 47): Timestep t1. Should be in the range [t0 + 1, num_inference_steps - 1]. See the paper, Sect. 3.3.1.
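The defaults t0=44 and t1=47 are tied to num_inference_steps=50. Lowering the step count therefore requires lowering t0 and t1 as well, to stay within the ranges documented above. The sketch below is a small hypothetical helper (not part of diffusers). It checks those ranges and returns keyword arguments for a call such as pipe(prompt=prompt, **kwargs).

```python
def text2video_zero_kwargs(num_inference_steps=50, t0=44, t1=47, video_length=8,
                           motion_field_strength_x=12.0, motion_field_strength_y=12.0):
    """Hypothetical helper: validate the documented ranges, return TextToVideoZeroPipeline kwargs."""
    last = num_inference_steps - 1
    if not 0 <= t0 <= last:
        raise ValueError(f"t0 must be in [0, {last}], got {t0}")
    if not t0 + 1 <= t1 <= last:
        raise ValueError(f"t1 must be in [{t0 + 1}, {last}], got {t1}")
    if video_length < 1:
        raise ValueError(f"video_length must be at least 1, got {video_length}")
    return {
        "num_inference_steps": num_inference_steps,
        "t0": t0,
        "t1": t1,
        "video_length": video_length,
        "motion_field_strength_x": motion_field_strength_x,
        "motion_field_strength_y": motion_field_strength_y,
    }


# 25 steps: the 50-step defaults would be rejected, so t0/t1 are scaled down too
kwargs = text2video_zero_kwargs(num_inference_steps=25, t0=22, t1=23)
# result = pipe(prompt="A panda is playing guitar on times square", **kwargs).images
```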

Returns

~pipelines.text_to_video_synthesis.TextToVideoPipelineOutput

The output contains an ndarray of the generated images when output_type != 'latent' (otherwise the latent codes of the generated images), and a list of bools denoting whether the corresponding generated image likely represents "not-safe-for-work" (nsfw) content, according to the safety_checker.

Function invoked when calling the pipeline for generation.

backward_loop

( latents, timesteps, prompt_embeds, guidance_scale, callback, callback_steps, num_warmup_steps, extra_step_kwargs, cross_attention_kwargs = None ) → latents

Parameters

- callback (Callable, optional): A function that will be called every callback_steps steps during inference. The function will be called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).

- callback_steps (int, optional, defaults to 1): The frequency at which the callback function will be called. If not specified, the callback will be called at every step.

- extra_step_kwargs: Extra keyword arguments passed to the scheduler's step function.

- cross_attention_kwargs: A kwargs dictionary that, if specified, is passed along to the attention processor.

- num_warmup_steps: Number of warmup steps.

Returns

latents

Latents output by the backward process at time timesteps[-1].

Perform the backward process given a list of time steps.
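The backward process can be pictured as repeated denoising steps combined with classifier-free guidance. The sketch below assumes a DDIM scheduler with eta = 0 and replaces the UNet with a hypothetical predict_noise callable. It illustrates the update rule and is not the backward_loop implementation, which presumably works through whichever scheduler the pipeline is configured with.

```python
import numpy as np


def ddim_step(x_t, eps, alpha_bar_t, alpha_bar_prev):
    """Deterministic DDIM update (eta = 0) from x_t to the previous, less noisy timestep."""
    # estimate the clean sample, then re-noise it to the previous noise level
    x0_pred = (x_t - np.sqrt(1.0 - alpha_bar_t) * eps) / np.sqrt(alpha_bar_t)
    return np.sqrt(alpha_bar_prev) * x0_pred + np.sqrt(1.0 - alpha_bar_prev) * eps


def backward_loop_sketch(latents, alpha_bars, predict_noise, guidance_scale=7.5):
    """Run DDIM from alpha_bars[0] to alpha_bars[-1] with classifier-free guidance.

    alpha_bars: cumulative alpha products, ordered from the noisiest step to the cleanest.
    predict_noise(x, i, conditional) -> noise estimate (hypothetical stand-in for the UNet).
    """
    for i in range(len(alpha_bars) - 1):
        eps_uncond = predict_noise(latents, i, False)
        eps_cond = predict_noise(latents, i, True)
        # classifier-free guidance: w = guidance_scale
        eps = eps_uncond + guidance_scale * (eps_cond - eps_uncond)
        latents = ddim_step(latents, eps, alpha_bars[i], alpha_bars[i + 1])
    return latents
```

If the noise predictor were perfect, stepping down to alpha_bar = 1 would recover the clean sample exactly. This makes the sketch easy to sanity-check.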

forward_loop

( x_t0, t0, t1, generator ) → x_t1

Returns

x_t1

Forward process applied to x_t0 from time t0 to t1.

Perform the DDPM forward process from time t0 to t1. This is the same as adding noise with the corresponding variance.
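Adding noise with the corresponding variance means sampling from q(x_t1 | x_t0) = N(sqrt(ᾱ_t1 / ᾱ_t0) · x_t0, (1 - ᾱ_t1 / ᾱ_t0) · I), where ᾱ_t is the cumulative product of the scheduler's alphas. The NumPy sketch below implements that jump, with the noise passed in explicitly so the result is reproducible. forward_jump is a hypothetical name. In this sketch t0 and t1 index the training noise schedule directly, which may differ from how the pipeline maps its t0/t1 arguments to timesteps.

```python
import numpy as np


def forward_jump(x_t0, alpha_bars, t0, t1, noise):
    """Sample x_t1 from q(x_t1 | x_t0) for t1 >= t0, given a standard-normal noise array.

    alpha_bars[t] is the cumulative product of (1 - beta) up to step t, so the
    fraction of signal variance kept from t0 to t1 is alpha_bars[t1] / alpha_bars[t0].
    """
    if t1 < t0:
        raise ValueError("t1 must not be smaller than t0")
    ratio = alpha_bars[t1] / alpha_bars[t0]
    return np.sqrt(ratio) * x_t0 + np.sqrt(1.0 - ratio) * noise


# Example with a linear beta schedule over 1000 training steps (assumed for illustration)
betas = np.linspace(1e-4, 0.02, 1000)
alpha_bars = np.cumprod(1.0 - betas)
rng = np.random.default_rng(0)
x_t0 = rng.standard_normal((4, 64, 64))
x_t1 = forward_jump(x_t0, alpha_bars, t0=881, t1=921, noise=rng.standard_normal(x_t0.shape))
```

A single jump like this is equivalent in distribution to applying the per-step DDPM noising t1 - t0 times, which is why no loop is needed.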