I present my magnum opus of LLM merges for 2023. This is a monster of a model, created by merging eight copies of sonya-medium into one mixture of experts.

Enjoy! ;)

Config:

    base_model: dillfrescott/sonya-medium
    gate_mode: hidden
    dtype: bfloat16
    experts:
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
      - source_model: dillfrescott/sonya-medium
        positive_prompts: [""]
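Since the config lists the same expert eight times with empty prompts, it can also be generated rather than typed by hand. Below is a minimal sketch of that idea in plain Python. The helper name `build_moe_config` and its parameters are hypothetical and not part of any merge tool. The key names are copied from the config above, and the two-space YAML nesting under `experts:` is an assumption about the intended layout.

```python
# Hypothetical helper: rebuilds the config text shown above.
# Uses only string formatting, so no YAML library is required.

def build_moe_config(model: str = "dillfrescott/sonya-medium",
                     num_experts: int = 8) -> str:
    """Return config text with `num_experts` identical expert entries."""
    lines = [
        f"base_model: {model}",
        "gate_mode: hidden",
        "dtype: bfloat16",
        "experts:",
    ]
    for _ in range(num_experts):
        # Every expert is the same source model with an empty positive prompt.
        lines.append(f"  - source_model: {model}")
        lines.append('    positive_prompts: [""]')
    return "\n".join(lines) + "\n"


config_text = build_moe_config()

if __name__ == "__main__":
    print(config_text)
```

Changing `num_experts` or `model` gives a different variant of the same layout. Whether a given merge tool accepts the result depends on that tool's config schema.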

P.S. Be careful with K quants of this model as they may be glitched!

The cover image is heavily inspired by Muzan Kibutsuji from Demon Slayer.

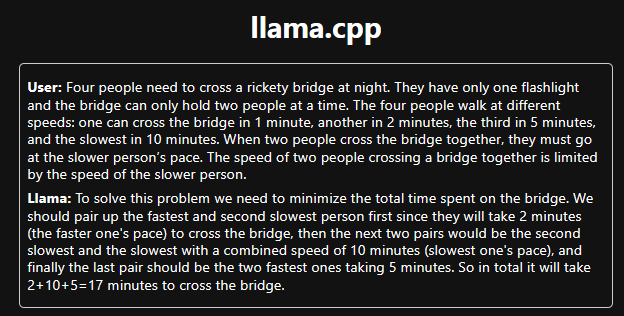

Example outputs and reasoning. (The following outputs come from a q4_0 quantized version, so the unquantized model would likely perform even better):
