Controlnet - Inpainting dreamer

This ControlNet has been trained for inpainting and outpainting.

It is an early alpha version, made while experimenting to learn more about ControlNet.

Want to support this kind of work and the development of this model? Feel free to buy me a coffee!

It is designed for Stable Diffusion XL and should work with any model based on it.

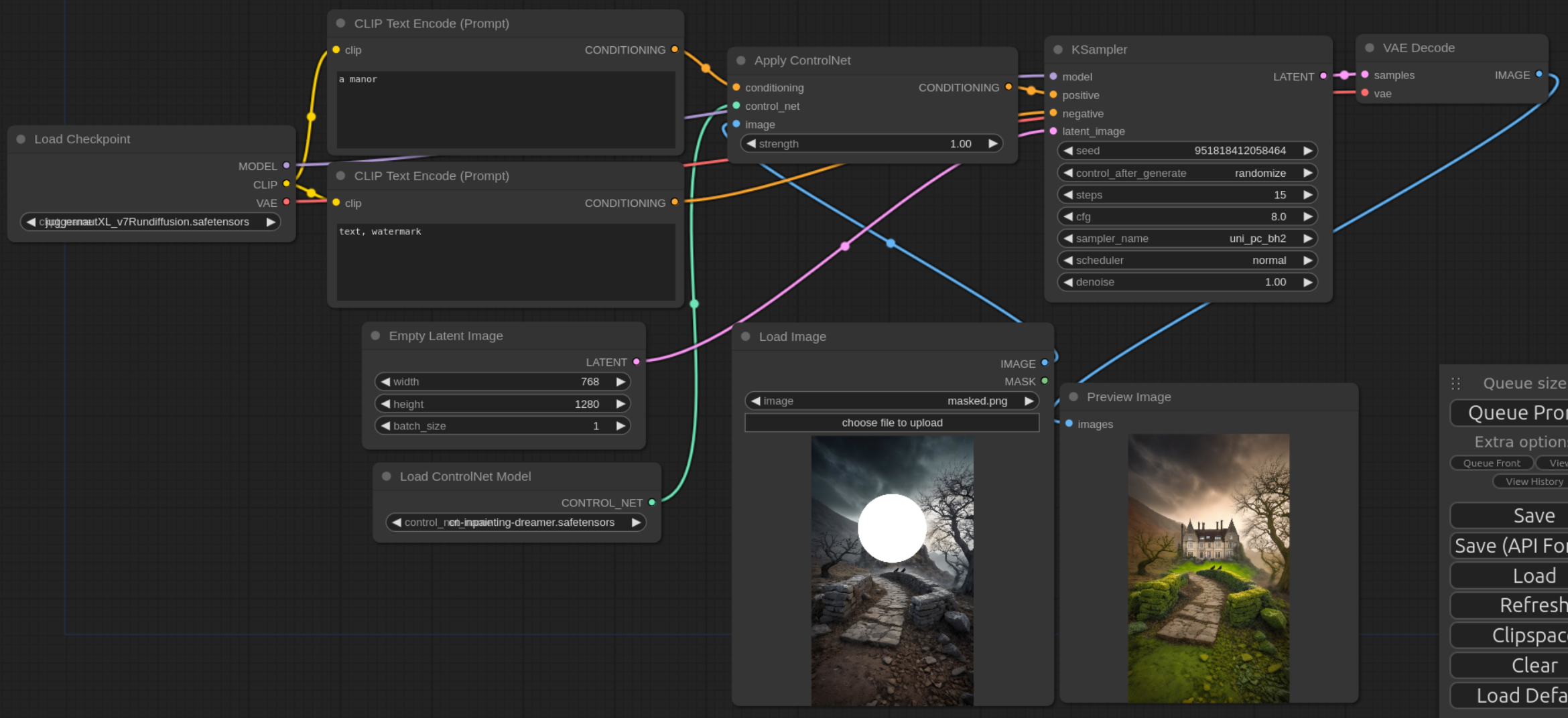

Use the image to inpaint or outpaint as the ControlNet input in a txt2img pipeline, with denoising set to 1.0. The area to inpaint or outpaint should be colored solid white.
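A minimal sketch of preparing that control image, assuming Pillow is used for image handling. The helper names `make_outpaint_control` and `make_inpaint_control` are hypothetical, and the inpaint region is a plain rectangle purely for illustration; any shape works as long as it is painted solid white.

```python
from PIL import Image, ImageDraw

WHITE = (255, 255, 255)


def make_outpaint_control(image: Image.Image, left: int = 0, top: int = 0,
                          right: int = 0, bottom: int = 0) -> Image.Image:
    """Pad `image` with solid white; the white border is the area to outpaint."""
    src = image.convert("RGB")
    canvas = Image.new("RGB", (src.width + left + right, src.height + top + bottom), WHITE)
    # Place the original picture so that the requested margins stay white
    canvas.paste(src, (left, top))
    return canvas


def make_inpaint_control(image: Image.Image, box: tuple[int, int, int, int]) -> Image.Image:
    """Paint the rectangle `box` = (x0, y0, x1, y1) solid white; that area gets inpainted."""
    control = image.convert("RGB")  # convert() returns a copy, so the input is left untouched
    ImageDraw.Draw(control).rectangle(box, fill=WHITE)
    return control
```

For example, `make_outpaint_control(photo, left=256, right=256)` widens a photo by 512 pixels, leaving white bands on both sides for the model to fill.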

Depending on the prompts, the rest of the image might be kept as is or modified more or less.

Model Details

- Developed by: Destitech

- Model type: ControlNet

- License: The CreativeML OpenRAIL M license is an Open RAIL M license, adapted from the work that BigScience and the RAIL Initiative are jointly carrying out in the area of responsible AI licensing. See also the article about the BLOOM Open RAIL license on which our license is based.

Released Checkpoints

- Model link
- Model link - fp16 version, built by OzzyGT

Usage with Diffusers

OzzyGT wrote a really good guide on using this model for outpainting; give it a try here!

A big thank you to him for showing me how to name the files for diffusers compatibility, and for the fp16 version. You should be able to load both the normal and the fp16 version this way:

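```python
import torch
from diffusers import ControlNetModel

# variant="fp16" loads the fp16 weight files; drop it to load the normal weight files instead
controlnet = ControlNetModel.from_pretrained(
    "destitech/controlnet-inpaint-dreamer-sdxl",
    torch_dtype=torch.float16,
    variant="fp16",
)
```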
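A sketch of a full txt2img run with diffusers, assuming `StableDiffusionXLControlNetPipeline` and the `stabilityai/stable-diffusion-xl-base-1.0` checkpoint as the base model (any SDXL-based model should do). The helper names `load_pipeline` and `generate`, the step count, and the conditioning scale are hypothetical, illustrative choices rather than values recommended by the model author. A txt2img pipeline starts from pure noise, which matches the 1.0 denoising mentioned above.

```python
def load_pipeline(device: str = "cuda"):
    """Build an SDXL txt2img pipeline with the Inpainting Dreamer ControlNet attached."""
    # Imports live inside the function so defining these helpers does not require torch/diffusers
    import torch
    from diffusers import ControlNetModel, StableDiffusionXLControlNetPipeline

    controlnet = ControlNetModel.from_pretrained(
        "destitech/controlnet-inpaint-dreamer-sdxl",
        torch_dtype=torch.float16,
        variant="fp16",
    )
    # Assumption: official SDXL base checkpoint; swap in any SDXL-based model
    pipe = StableDiffusionXLControlNetPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0",
        controlnet=controlnet,
        torch_dtype=torch.float16,
        variant="fp16",
    )
    return pipe.to(device)


def generate(pipe, control_image, prompt: str, steps: int = 30, scale: float = 1.0):
    """Fill the solid-white areas of `control_image` according to `prompt`."""
    result = pipe(
        prompt=prompt,
        image=control_image,                  # control image with white areas to fill
        num_inference_steps=steps,            # illustrative value
        controlnet_conditioning_scale=scale,  # illustrative value
        width=control_image.width,            # keep the output size equal to the control image
        height=control_image.height,
    )
    return result.images[0]
```

SDXL pipelines expect width and height to be multiples of 8, so size the control image accordingly.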

Usage with ComfyUI

More examples
