---
tags:
- yi
- moe
license: apache-2.0
---

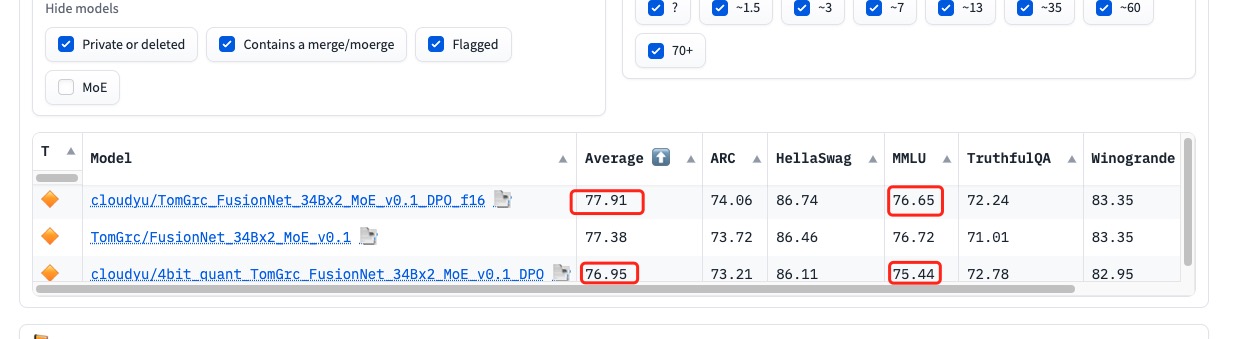

This is a 4-bit, DPO fine-tuned version of the MoE model TomGrc/FusionNet_34Bx2_MoE_v0.1.

DPO Trainer

TRL supports the DPO Trainer for training language models from preference data, as described in the paper Direct Preference Optimization: Your Language Model is Secretly a Reward Model by Rafailov et al., 2023.