UPDATE: Introducing the ConceptSmasher

So... While randomly messing around with ComfyUI (this will only work in ComfyUI), I stumbled upon this neat little configuration of nodes. It is particularly interesting when used with Storytime, which is not a thing I planned at all. Being a person who enjoys random/strange/weird AND someone who likes to use models in ways that may not reflect intended use, this appeals to my personal interest in exploring what ML models can do, artistically speaking.

PSA: ConceptSmasher will generate all sorts of randomness so BEWARE IT MAY SPIT OUT NSFW WITHOUT WARNING. You've been warned.

Obviously if a model has that in its training data then that is always a possibility.

The ConceptSmasher is designed to work with some rather wild settings -- no prompt and a CFG of 100. You can, of course, explore other uses/ideas/etc, but this is my "fire and forget" configuration. If ComfyUI has nodes for randomly loading images from folders, that would make this 100% more awesome. ALSO, if ComfyUI had the ability to randomly modify any node values at all (selectable per slider/value/etc), THAT would be EXTREMELY AWESOME. I might make this, but until then...

What this monstrosity looks like (link to workflow -- ):

And some sample images (More in the "ConceptSmasher" folder):

STORYTIME -- teaching Stable Diffusion to use its words

more samples here

Greetings, Internet. The purpose of the Storytime model is to create a Stable Diffusion model that "communicates" with text and images. My focus currently is on the English language, but I believe any of the techniques that I apply are applicable to other written languages as well. Inasmuch as "language" encompasses a huge range of notions and ideas (many of which I am probably ignorant of as a hobbyist), the purpose specifically is to use undifferentiated image data to "teach" Stable Diffusion about various language concepts, then see if it can adopt them and apply them to images.

To give you a sense of what I mean, this model is Stable Diffusion v1.5 fine-tuned using Dreambooth on a small dataset of alphabet flashcards, simple word lists, grammatical concepts presented visually via charts, images of pages of text, and images of text along with pictures, all in English. However, the letters, words, sentences, paragraphs, and other mechanics are not specifically identified in the captions. A flashcard showing the letter "C", for instance, will have a generic caption such as "a picture of a letter from the English alphabet", but the letter itself is not identified. An image of a list of common sight-words will have a caption similar to "A picture showing common words in the English language written using the English alphabet". And so on. At some point I will publish the data I use for training, but I want to be sure about sourcing and attribution and any issues around those (and rectify any that may be problematic) before publishing.

In a similar manner, lists of words are presented with the concept/class "word" included in the caption, but the words themselves are not spelled out. Can Stable Diffusion learn to put together legible letters, words, and sentences simply by "learning" from data presented in this manner? This is what I aim to understand.
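To make the captioning idea concrete, here is a minimal sketch of how generic captions could be attached to training images. It is not the actual data-preparation code for Storytime: the folder names (`letters`, `words`), the `.png` extension, and the "one `.txt` file next to each image" convention are all assumptions for illustration. Only the two caption strings come from the description above.

```python
from pathlib import Path

# Generic caption per category folder. The caption strings are quoted from the
# text above; the folder names "letters" and "words" are hypothetical.
GENERIC_CAPTIONS = {
    "letters": "a picture of a letter from the English alphabet",
    "words": (
        "A picture showing common words in the English language "
        "written using the English alphabet"
    ),
}


def write_captions(dataset_dir: Path) -> int:
    """Write a sidecar .txt caption next to every .png in each category folder.

    Every image in a category gets the same caption, so the specific letter
    or word shown in the image is never named. Returns the number of caption
    files written.
    """
    written = 0
    for category, caption in GENERIC_CAPTIONS.items():
        folder = dataset_dir / category
        if not folder.is_dir():
            continue  # skip categories that are not present in this dataset
        for image in sorted(folder.glob("*.png")):
            image.with_suffix(".txt").write_text(caption + "\n", encoding="utf-8")
            written += 1
    return written
```

Calling `write_captions(Path("dataset"))` (a hypothetical path) would leave `dataset/letters/c.txt` next to `dataset/letters/c.png`, with the caption identifying the class "letter" but not the letter "C".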

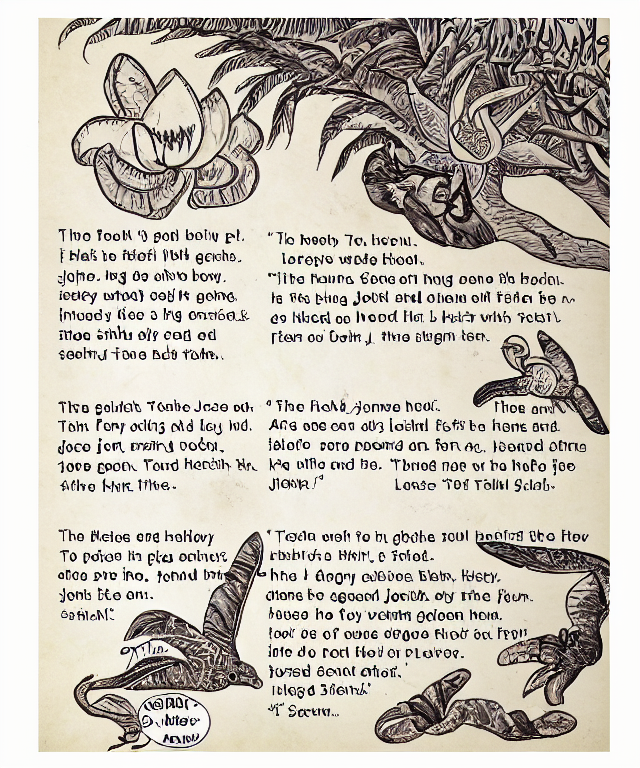

The sample images here include those that contain legible/partially legible words, including words (usually) from the image generation prompt. I suspect these are words that are "abundant", visually, in the dataset, but at this point I cannot and will not draw any conclusions about whether or not, for instance, the Stable Diffusion model has learned to identify the word "tree" with its visual representation, both in picture form (a picture of a tree) and "written" form (a picture of the word "tree"). When I use CLIP/BLIP interrogation to generate captions for some of these images, I have found that it picks up on the words written in the picture, even nonsense words and/or misspellings and those with letters whose form isn't well-defined. One of those results is shown below.

v1 of Storytime was trained on 130 512x512 images (alphabet letters, flashcards, word charts, pages of text, and pages of text with images) for 300 epochs with a batch size of 8, on a computer running Windows 10 with a single Nvidia RTX A4000 GPU. No prior-preservation images were used.

For best results (most legible text in the image), use the DPM++ 2M sampler with a CFG around 7 and 90 sampling steps at a resolution of 640w x 768h.
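If you prefer a script to a UI, the settings above might translate to the `diffusers` library roughly as follows. Treat this as a sketch under assumptions, not the way the samples were made: the checkpoint path is hypothetical, a CUDA GPU is assumed, and `DPMSolverMultistepScheduler` is only the closest `diffusers` counterpart to DPM++ 2M that I know of, so results may not match the webui exactly.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SamplingSettings:
    """Recommended values from the text above, named after diffusers arguments."""
    guidance_scale: float = 7.0       # CFG
    num_inference_steps: int = 90     # sampling steps
    width: int = 640
    height: int = 768


def load_pipeline(checkpoint_path: str):
    """Load a single-file checkpoint and switch to a DPM++ 2M-style scheduler."""
    # Imported here so the settings can be inspected without torch/diffusers installed.
    import torch
    from diffusers import DPMSolverMultistepScheduler, StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_single_file(
        checkpoint_path, torch_dtype=torch.float16
    )
    pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
    return pipe.to("cuda")  # assumes a CUDA-capable GPU


def generate(pipe, prompt: str, settings: SamplingSettings = SamplingSettings()):
    """Run one generation with the given settings and return the first image."""
    return pipe(prompt, **asdict(settings)).images[0]
```

Usage would look something like `pipe = load_pipeline("model/storytime.safetensors")` followed by `generate(pipe, "a page from a book ...").save("out.png")`, where the file name is a placeholder for wherever you saved the model.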

Prompt Suggestions

The images in the "samples" directory were generated using the Dynamic Prompts plug-in for the Automatic1111 webui. In this instance, it can function as a sort of mad-lib generator where different combinations of words/subjects can appear together randomly by using a prompt similar to the following:

a page from a book containing pictures of a {car|galaxy|tree|cat} and a {lake|dog|house|sun} with text below the pictures written in the English language telling a {sad|happy|interesting|boring} story about the pictures

Running this may produce output like the following:

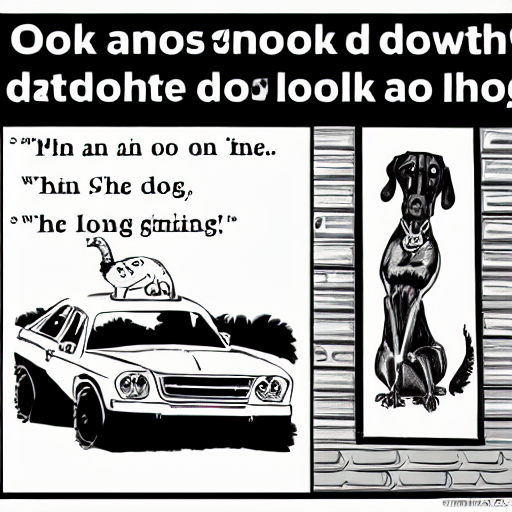

generated prompt: "a page from a book containing pictures of a car and a dog with text below the pictures written in the English language telling a boring story about the pictures"

Interrogated caption via CLIP: "a cartoon of a dog and a car with a caption that reads, do not look down on the dog, Art & Language, storybook illustration, a storybook illustration, art & language"
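For anyone not using the Dynamic Prompts plug-in, the mad-lib behavior of the template above can be approximated in a few lines of plain Python. This is a minimal sketch that only handles simple `{a|b|c}` groups; it is not the plug-in's implementation and ignores its other features. The function and variable names are mine.

```python
import random
import re

# Matches one innermost {a|b|c} group (no braces inside it).
VARIANT = re.compile(r"\{([^{}]*)\}")

TEMPLATE = (
    "a page from a book containing pictures of a {car|galaxy|tree|cat} "
    "and a {lake|dog|house|sun} with text below the pictures written in "
    "the English language telling a {sad|happy|interesting|boring} story "
    "about the pictures"
)


def expand(template: str, rng: random.Random) -> str:
    """Replace each {a|b|c} group with one randomly chosen option."""

    def pick(match: re.Match) -> str:
        return rng.choice(match.group(1).split("|"))

    # Repeat until no groups remain, so nested groups resolve inside-out.
    while VARIANT.search(template):
        template = VARIANT.sub(pick, template)
    return template


if __name__ == "__main__":
    rng = random.Random()  # pass a seed here for repeatable prompts
    for _ in range(3):
        print(expand(TEMPLATE, rng))
```

Each printed line is one fully expanded prompt, like the "car and a dog ... boring story" example above, which can then be pasted into whatever UI or script you use.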

Directory structure and contents:

/samples -- sample images in .png format, with prompt text and information in the corresponding .txt file

/model -- downloadable model file in safetensors format and corresponding YAML

tags:

- stable-diffusion

- text2image

Model tree for aplewe/storytime

Base model: runwayml/stable-diffusion-v1-5