---
license: other
license_name: seallms
license_link: https://huggingface.co/SeaLLMs/SeaLLM-13B-Chat/blob/main/LICENSE
language:
- en
- zh
- vi
- id
- th
- ms
- km
- lo
- my
- tl
tags:
- multilingual
- sea
---

SeaLLM-7B-v2.5 - Large Language Models for Southeast Asia

**This repository is a modified version of SeaLLMs/SeaLLM-7B-v2.5.**

We offer SeaLLM-7B-v2.5-AWQ, a 4-bit AWQ-quantized version of SeaLLMs/SeaLLM-7B-v2.5 that is compatible with vLLM.


🔥[HOT] SeaLLMs project now has a dedicated website - damo-nlp-sg.github.io/SeaLLMs

We introduce SeaLLM-7B-v2.5, the state-of-the-art multilingual LLM for Southeast Asian (SEA) languages 🇬🇧 🇨🇳 🇻🇳 🇮🇩 🇹🇭 🇲🇾 🇰🇭 🇱🇦 🇲🇲 🇵🇭. It is the most significant upgrade since SeaLLM-13B: at half the size, it delivers stronger performance across diverse multilingual tasks, including world knowledge, math reasoning, and instruction following.

Highlights

- SeaLLM-7B-v2.5 outperforms GPT-3.5 and achieves 7B SOTA on most multilingual knowledge benchmarks for SEA languages (MMLU, M3Exam & VMLU).

- It achieves 79.0 and 34.9 on GSM8K and MATH, surpassing GPT-3.5 in MATH.

Release and DEMO

- DEMO:

  - SeaLLMs/SeaLLM-7B-v2.5.

  - SeaLLMs/SeaLLM-7B | SeaLMMM-7B - Experimental multimodal SeaLLM.

- Technical report: Arxiv: SeaLLMs - Large Language Models for Southeast Asia.

- Model weights:

- Run locally:

  - LM-studio:
    - SeaLLM-7B-v2.5-q4_0-chatml with ChatML template (`<eos>` token changed to `<|im_end|>`)
    - SeaLLM-7B-v2.5-q4_0 - must use SeaLLM-7B-v2.5 chat format.
  - MLX for Apple Silicon: SeaLLMs/SeaLLM-7B-v2.5-mlx-quantized

- Previous models:

Terms of Use and License: By using our released weights, codes, and demos, you agree to and comply with the terms and conditions specified in our SeaLLMs Terms Of Use.

Disclaimer: We must note that even though the weights, codes, and demos are released in an open manner, similar to other pre-trained language models, and despite our best efforts in red teaming and safety fine-tuning and enforcement, our models come with potential risks, including but not limited to inaccurate, misleading or potentially harmful generation. Developers and stakeholders should perform their own red teaming and provide related security measures before deployment, and they must abide by and comply with local governance and regulations. In no event shall the authors be held liable for any claim, damages, or other liability arising from the use of the released weights, codes, or demos.

The logo was generated by DALL-E 3.

What's new since SeaLLM-7B-v2?

- SeaLLM-7B-v2.5 was built on top of Gemma-7b and underwent large-scale SFT and carefully designed alignment.

Evaluation

Multilingual World Knowledge

We evaluate models on 3 benchmarks following the recommended default setups: 5-shot MMLU for En, 3-shot M3Exam (M3e) for En, Zh, Vi, Id, Th, and zero-shot VMLU for Vi.

| Model | Langs | En MMLU | En M3e | Zh M3e | Vi M3e | Vi VMLU | Id M3e | Th M3e |
|---|---|---|---|---|---|---|---|---|
| GPT-3.5 | Multi | 68.90 | 75.46 | 60.20 | 58.64 | 46.32 | 49.27 | 37.41 |
| Vistral-7B-chat | Mono | 56.86 | 67.00 | 44.56 | 54.33 | 50.03 | 36.49 | 25.27 |
| Qwen1.5-7B-chat | Multi | 61.00 | 52.07 | 81.96 | 43.38 | 45.02 | 24.29 | 20.25 |
| SailorLM | Multi | 52.72 | 59.76 | 67.74 | 50.14 | --- | 39.53 | 37.73 |
| SeaLLM-7B-v2 | Multi | 61.89 | 70.91 | 55.43 | 51.15 | 45.74 | 42.25 | 35.52 |
| SeaLLM-7B-v2.5 | Multi | 64.05 | 76.87 | 62.54 | 63.11 | 53.30 | 48.64 | 46.86 |

Zero-shot CoT Multilingual Math Reasoning

| Model | GSM8K en | MATH en | GSM8K zh | MATH zh | GSM8K vi | MATH vi | GSM8K id | MATH id | GSM8K th | MATH th |
|---|---|---|---|---|---|---|---|---|---|---|
| GPT-3.5 | 80.8 | 34.1 | 48.2 | 21.5 | 55 | 26.5 | 64.3 | 26.4 | 35.8 | 18.1 |
| Qwen-14B-chat | 61.4 | 18.4 | 41.6 | 11.8 | 33.6 | 3.6 | 44.7 | 8.6 | 22 | 6.0 |
| Vistral-7b-chat | 48.2 | 12.5 | 48.7 | 3.1 |  |  |  |  |  |  |
| Qwen1.5-7B-chat | 56.8 | 15.3 | 40.0 | 2.7 | 37.7 | 9 | 36.9 | 7.7 | 21.9 | 4.7 |
| SeaLLM-7B-v2 | 78.2 | 27.5 | 53.7 | 17.6 | 69.9 | 23.8 | 71.5 | 24.4 | 59.6 | 22.4 |
| SeaLLM-7B-v2.5 | 78.5 | 34.9 | 51.3 | 22.1 | 72.3 | 30.2 | 71.5 | 30.1 | 62.0 | 28.4 |

Baselines were evaluated using their respective chat-template and system prompts (Qwen1.5-7B-chat, Vistral).

Zero-shot MGSM

SeaLLM-7B-v2.5 also outperforms GPT-3.5 and Qwen-14B on the multilingual MGSM for Thai.

| Model | MGSM-Zh | MGSM-Th |
|---|---|---|
| ChatGPT (reported) | 61.2 | 47.2 |
| Qwen-14B-chat | 59.6 | 28 |
| SeaLLM-7B-v2 | 64.8 | 62.4 |
| SeaLLM-7B-v2.5 | 58.0 | 64.8 |

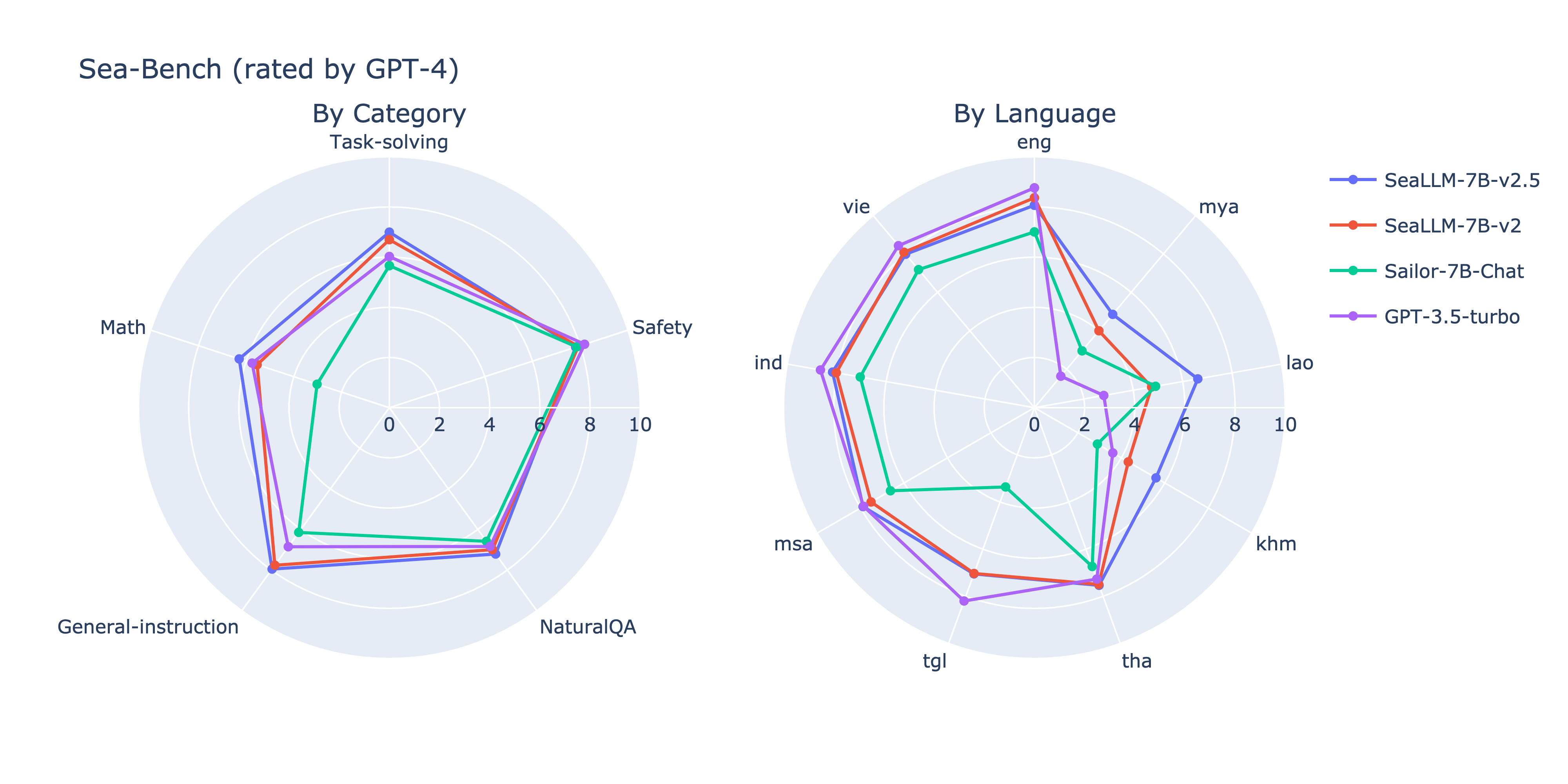

Sea-Bench

Usage

IMPORTANT NOTICE for using the model

- `<bos>` must be at the start of the prompt. If your code's tokenizer does not prepend `<bos>` by default, you MUST prepend it to the prompt yourself; otherwise, the model will not work!
- Repetition penalty (e.g., in llama.cpp, ollama, LM-studio) must be set to 1; otherwise, generation will degenerate!
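
The first requirement above can be checked in plain Python. The following is a minimal sketch, not part of the official SeaLLM code: the helper names `ensure_single_bos` and `has_single_leading_bos` are hypothetical, and the text-level helper is only meant for pipelines whose tokenizer does not add `<bos>` on its own (if the tokenizer already prepends it, adding it in the text as well would produce two).

```python
BOS = "<bos>"  # BOS marker as written in this model card


def ensure_single_bos(prompt: str, bos: str = BOS) -> str:
    """Return `prompt` with exactly one leading BOS marker (hypothetical helper).

    Use only when the tokenizer does NOT prepend BOS itself.
    """
    # Drop any BOS markers already at the front, then add exactly one back.
    while prompt.startswith(bos):
        prompt = prompt[len(bos):]
    return bos + prompt


def has_single_leading_bos(tokens: list, bos: str = BOS) -> bool:
    """Check a token list: BOS must be first and must not appear again."""
    return len(tokens) > 0 and tokens[0] == bos and bos not in tokens[1:]


raw_prompt = "<|im_start|>user\nHello world<eos>\n<|im_start|>assistant\n"
fixed_prompt = ensure_single_bos(raw_prompt)
print(fixed_prompt.startswith(BOS))
```

`has_single_leading_bos` is intended for the output of `tokenizer.convert_ids_to_tokens(...)`, so the same check works whether `<bos>` was added by the tokenizer or by hand.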

Instruction format

# ! WARNING, if your code's tokenizer does not prepend <bos> by default,
# You MUST prepend <bos> into the prompt yourself, otherwise, it would not work!
prompt = """<|im_start|>system
You are a helpful assistant.<eos>
<|im_start|>user
Hello world<eos>
<|im_start|>assistant
Hi there, how can I help?<eos>"""

# <|im_start|> is not a special token.
# Transformers chat_template should be consistent with vLLM format below.

# ! ENSURE 1 and only 1 bos `<bos>` at the beginning of sequence
# `tokenizer` is the model's tokenizer, loaded as in the transformers example below.
print(tokenizer.convert_ids_to_tokens(tokenizer.encode(prompt)))

Using transformers's chat_template

Install the latest transformers (>4.40)

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

device = "cuda"  # the device to load the model onto

# use bfloat16 to ensure the best performance.
# device_map already places the model on `device`, so no extra model.to(device) is needed.
model = AutoModelForCausalLM.from_pretrained("SorawitChok/SeaLLM-7B-v2.5-AWQ", torch_dtype=torch.bfloat16, device_map=device)
tokenizer = AutoTokenizer.from_pretrained("SorawitChok/SeaLLM-7B-v2.5-AWQ")

messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Hello world"},
    {"role": "assistant", "content": "Hi there, how can I help you today?"},
    {"role": "user", "content": "Explain general relativity in details."}
]

# Build the prompt ids with the chat template and inspect the resulting tokens.
encodeds = tokenizer.apply_chat_template(messages, return_tensors="pt", add_generation_prompt=True)
print(tokenizer.convert_ids_to_tokens(encodeds[0]))

model_inputs = encodeds.to(device)

generated_ids = model.generate(model_inputs, max_new_tokens=1000, do_sample=True, pad_token_id=tokenizer.pad_token_id)
decoded = tokenizer.batch_decode(generated_ids)
print(decoded[0])
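
The decoded string printed above contains the whole conversation, not just the new answer. As a minimal sketch, assuming the decoded text keeps the `<|im_start|>assistant` prefix and the `<eos>` marker exactly as written in the instruction format, the last reply could be pulled out with a small helper. The name `extract_last_reply` is hypothetical and not part of transformers.

```python
ASSISTANT_PREFIX = "<|im_start|>assistant\n"  # turn prefix from the instruction format
EOS = "<eos>"  # end-of-turn marker from the instruction format


def extract_last_reply(decoded: str) -> str:
    """Return the text of the final assistant turn (hypothetical helper)."""
    # Keep only what follows the last assistant prefix.
    _, found, tail = decoded.rpartition(ASSISTANT_PREFIX)
    if not found:
        return ""  # no assistant turn present in the text
    # Cut at the first end-of-turn marker, if generation reached one.
    reply, _, _ = tail.partition(EOS)
    return reply.strip()


sample = (
    "<bos><|im_start|>user\nHello world<eos>\n"
    "<|im_start|>assistant\nHi there, how can I help?<eos>"
)
print(extract_last_reply(sample))
```

An alternative that avoids string parsing is to slice off the prompt tokens before decoding, since `generate` returns the prompt ids followed by the new ids.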

Using vLLM

from vllm import LLM, SamplingParams

TURN_TEMPLATE = "<|im_start|>{role}\n{content}<eos>\n"
TURN_PREFIX = "<|im_start|>{role}\n"

def seallm_chat_convo_format(conversations, add_assistant_prefix: bool, system_prompt=None):
    # conversations: list of dict with key `role` and `content` (openai format)
    if conversations[0]['role'] != 'system' and system_prompt is not None:
        conversations = [{"role": "system", "content": system_prompt}] + conversations
    text = ''
    for turn_id, turn in enumerate(conversations):
        prompt = TURN_TEMPLATE.format(role=turn['role'], content=turn['content'])
        text += prompt
    if add_assistant_prefix:
        # Open an assistant turn so the model continues from there.
        prompt = TURN_PREFIX.format(role='assistant')
        text += prompt
    return text

sparams = SamplingParams(temperature=0.1, max_tokens=1024, stop=['<eos>', '<|im_start|>'])
llm = LLM("SorawitChok/SeaLLM-7B-v2.5-AWQ", quantization="awq")

message = [
    {"role": "user", "content": "Explain general relativity in details."}
]
prompt = seallm_chat_convo_format(message, True)
# Pass the sampling parameters defined above (`sparams`).
gen = llm.generate(prompt, sparams)

print(gen[0].outputs[0].text)

Acknowledgement to Our Linguists

We would like to express our special thanks to our professional and native linguists, Tantong Champaiboon, Nguyen Ngoc Yen Nhi and Tara Devina Putri, who helped build, evaluate, and fact-check our sampled pretraining and SFT dataset and who evaluated our models across different aspects, especially safety.

Citation

If you find our project useful, we hope you would kindly star our repo and cite our work as follows.

Corresponding Author: l.bing@alibaba-inc.com

Author list and order will change!

`*` and `^` are equal contributions.

@article{damonlpsg2023seallm,

author = {Xuan-Phi Nguyen*, Wenxuan Zhang*, Xin Li*, Mahani Aljunied*, Weiwen Xu, Hou Pong Chan,

Zhiqiang Hu, Chenhui Shen^, Yew Ken Chia^, Xingxuan Li, Jianyu Wang,

Qingyu Tan, Liying Cheng, Guanzheng Chen, Yue Deng, Sen Yang,

Chaoqun Liu, Hang Zhang, Lidong Bing},

title = {SeaLLMs - Large Language Models for Southeast Asia},

year = 2023,

Eprint = {arXiv:2312.00738},

}