BioMedGPT-LM-7B

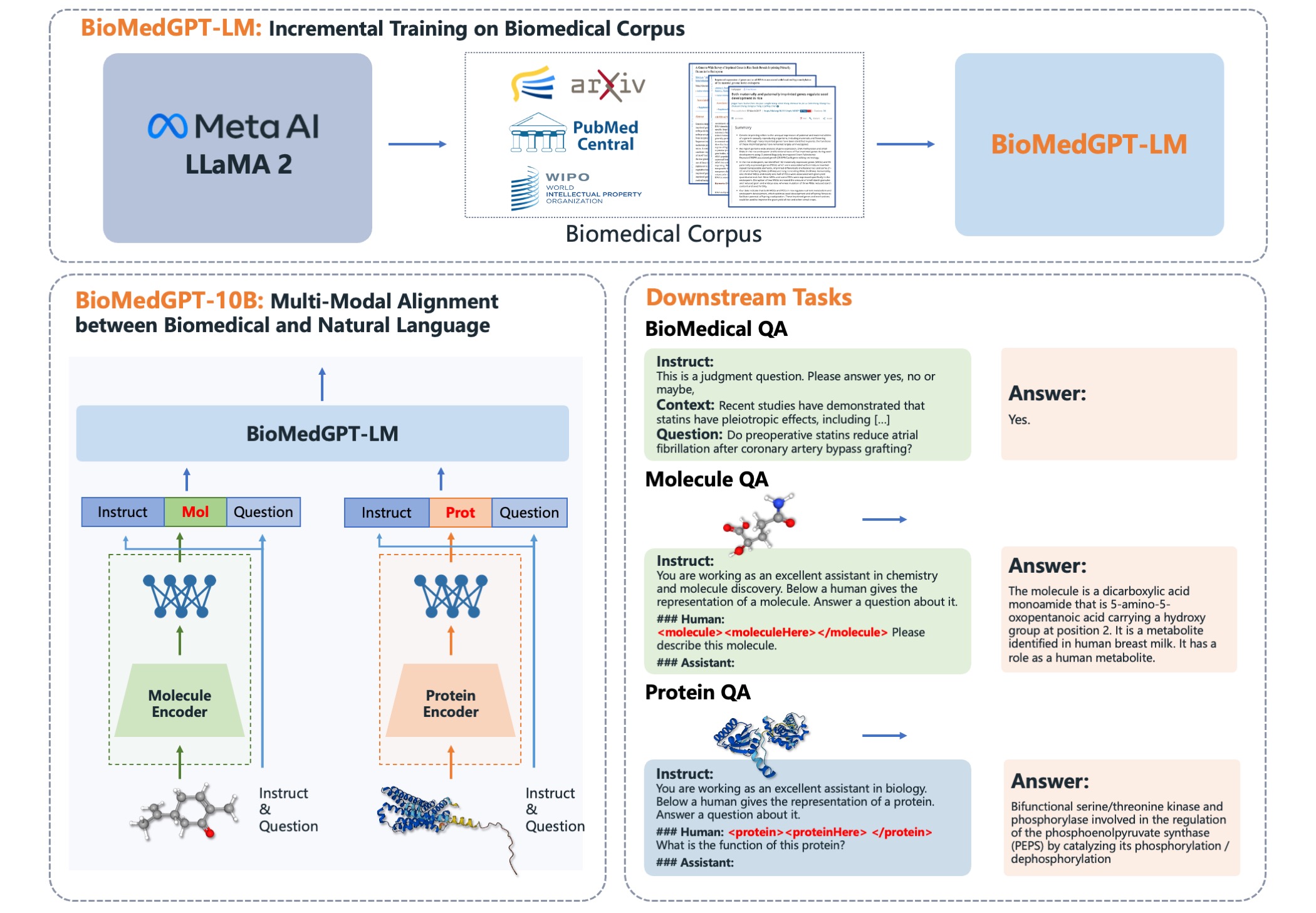

BioMedGPT-LM-7B is the first Llama2-based large generative language model in the biomedical domain. It was fine-tuned from Llama2-7B-Chat on millions of biomedical papers from the S2ORC corpus. With further fine-tuning, BioMedGPT-LM-7B matches or outperforms both human performance and significantly larger general-purpose foundation models on several biomedical QA benchmarks.

Training Details

The model was trained with the following hyperparameters:

- Epochs: 5

- Batch size: 192

- Context length: 2048

- Learning rate: 2e-5

BioMedGPT-LM-7B is fine-tuned on over 26 billion tokens of text highly pertinent to biomedicine. The fine-tuning data were extracted from millions of biomedical papers in the S2ORC corpus, selected using PubMed Central (PMC) IDs and PubMed IDs as criteria.
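As a rough sanity check on these numbers, the sketch below computes tokens per optimizer step and approximate step counts from the listed hyperparameters. It assumes that every sequence is packed to the full 2048-token context, that the batch size of 192 is the global batch per optimizer step, and that the 26 billion tokens correspond to a single pass over the data. The card states none of these explicitly, and the helper names are hypothetical.

```python
import math

# Hyperparameters as listed in this model card.
BATCH_SIZE = 192        # ASSUMPTION: global sequences per optimizer step
CONTEXT_LENGTH = 2048   # tokens per sequence
EPOCHS = 5
CORPUS_TOKENS = 26e9    # "over 26 billion tokens"; ASSUMPTION: one pass, used as a lower bound


def tokens_per_step(batch_size: int, context_length: int) -> int:
    """Tokens per optimizer step, assuming every sequence is packed to full length."""
    return batch_size * context_length


def steps_per_epoch(corpus_tokens: float, batch_size: int, context_length: int) -> int:
    """Optimizer steps for one pass over the corpus, rounding a final partial batch up."""
    return math.ceil(corpus_tokens / tokens_per_step(batch_size, context_length))


if __name__ == "__main__":
    per_step = tokens_per_step(BATCH_SIZE, CONTEXT_LENGTH)
    per_epoch = steps_per_epoch(CORPUS_TOKENS, BATCH_SIZE, CONTEXT_LENGTH)
    print(f"tokens per step: {per_step:,}")                     # 393,216
    print(f"steps per epoch: {per_epoch:,}")                    # 66,122
    print(f"steps over {EPOCHS} epochs: {per_epoch * EPOCHS:,}")  # 330,610
```

If the 26 billion tokens instead count all five epochs together, the total would be roughly 66,122 steps rather than 330,610.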

Model Developers

PharMolix

How to Use

BioMedGPT-LM-7B serves as the generative language model component of BioMedGPT-10B, an open-source version of BioMedGPT. BioMedGPT is an open multimodal generative pre-trained transformer (GPT) for biomedicine, which bridges the natural language modality and diverse biomedical data modalities via large generative language models.
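A minimal loading and generation sketch using the Hugging Face `transformers` library might look like the following. It assumes `torch` and `transformers` are installed and that the weights are published on the Hugging Face Hub under the repository ID `PharMolix/BioMedGPT-LM-7B`; that ID is an assumption, not something stated in this card. The card also does not document a prompt template, so the example simply asks the model to continue plain text.

```python
# Minimal usage sketch with Hugging Face transformers.
# ASSUMPTION: the Hub repository ID below is not stated in this model card.
MODEL_ID = "PharMolix/BioMedGPT-LM-7B"


def load_model(model_id: str = MODEL_ID):
    """Download (on first use) and load the tokenizer and causal LM weights."""
    # Imported lazily so this module can be imported without torch/transformers installed.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    use_cuda = torch.cuda.is_available()
    # Half precision on GPU to reduce memory use; full precision on CPU for compatibility.
    dtype = torch.float16 if use_cuda else torch.float32
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype=dtype)
    if use_cuda:
        model = model.to("cuda")
    model.eval()
    return tokenizer, model


def generate(prompt: str, tokenizer, model, max_new_tokens: int = 128) -> str:
    """Continue a plain-text prompt without sampling and return only the new text."""
    import torch

    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    with torch.no_grad():
        output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    # Slice off the prompt tokens so only the generated continuation is decoded.
    new_tokens = output_ids[0, inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)


if __name__ == "__main__":
    tokenizer, model = load_model()
    # Hypothetical example prompt; no official prompt format is documented here.
    print(generate("Metformin is a first-line treatment for", tokenizer, model))
```

Given the restrictions in the Limitations section, output like this is intended for research use, not as a service for the general public.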

Technical Report

More technical details of BioMedGPT-LM-7B, BioMedGPT-10B, and BioMedGPT can be found in the technical report: "BioMedGPT: Open Multimodal Generative Pre-trained Transformer for BioMedicine".

GitHub

https://github.com/PharMolix/OpenBioMed

Limitations

This repository holds BioMedGPT-LM-7B, and we emphasize the responsible and ethical use of this model. BioMedGPT-LM-7B should NOT be used to provide services to the general public. Generating any content that violates applicable laws and regulations is strictly prohibited, including content that incites subversion of state power, endangers national security and interests, or propagates terrorism, extremism, ethnic hatred and discrimination, violence, pornography, or false and harmful information. The developers of BioMedGPT-LM-7B are not liable for any consequences arising from any content, data, or information provided or published by users.

Licenses

This repository is licensed under the Apache-2.0 license. Use of the BioMedGPT-LM-7B model is also subject to the Acceptable Use Policy.
