datasets:

- Open-Orca/OpenOrca

language:

- en

library_name: transformers

pipeline_tag: text-generation

license: apache-2.0

🐋 Mistral-7B-OpenOrca 🐋

OpenOrca - Mistral - 7B - 8k

We fine-tuned Mistral 7B on our own OpenOrca dataset, which is our attempt to reproduce the dataset generated for Microsoft Research's Orca paper. We used OpenChat packing and trained with Axolotl.

This release is trained on a curated, filtered subset of most of our GPT-4 augmented data. It is the same subset that was used for our OpenOrcaxOpenChat-Preview2-13B model.

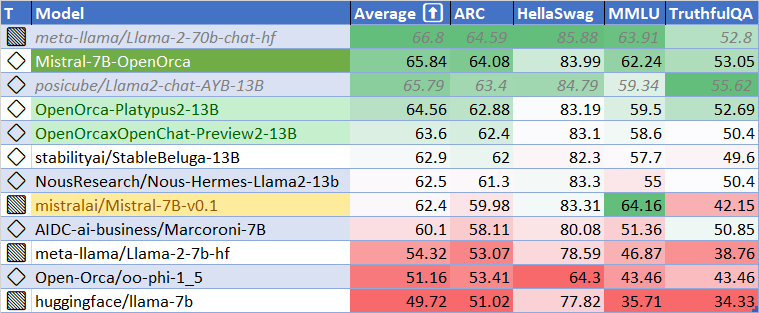

HF Leaderboard evals place this model at #2 among all models smaller than 30B at release time, outperforming all but one 13B model.

This release provides a first: a fully open model with class-breaking performance, capable of running fully accelerated on even moderate consumer GPUs. Our thanks to the Mistral team for leading the way here.

Want to visualize our full (pre-filtering) dataset? Check out our Nomic Atlas Map.

We are in the process of training more models, so keep an eye on our org for releases coming soon with exciting partners.

We will also give sneak-peek announcements on our Discord, which you can find here:

or check the OpenAccess AI Collective Discord for more information about the Axolotl trainer here:

Prompt Template

We used OpenAI's Chat Markup Language (ChatML) format, with <|im_start|> and <|im_end|> tokens added to support this.

Example Prompt Exchange

<|im_start|>system

You are MistralOrca, a large language model trained by Alignment Lab AI. Write out your reasoning step-by-step to be sure you get the right answers!

<|im_end|>

<|im_start|>user

How are you<|im_end|>

<|im_start|>assistant

I am doing well!<|im_end|>

<|im_start|>user

Please tell me about how mistral winds have attracted super-orcas.<|im_end|>
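As a minimal sketch of how the exchange above could be assembled in code, the helper below renders a list of role/content messages into a ChatML string. The function name `build_chatml_prompt` and its `add_generation_prompt` parameter are hypothetical names chosen for illustration, not part of any library. The sketch also assumes `<|im_end|>` directly follows each message's content, whereas the system turn shown above places it on its own line.

```python
from typing import Dict, List

# Special tokens named in the Prompt Template section.
IM_START = "<|im_start|>"
IM_END = "<|im_end|>"


def build_chatml_prompt(
    messages: List[Dict[str, str]], add_generation_prompt: bool = True
) -> str:
    """Render {"role", "content"} dicts as a single ChatML string.

    Hypothetical helper for illustration only.
    """
    parts = []
    for message in messages:
        # Each turn: start token + role, newline, content, end token.
        parts.append(f"{IM_START}{message['role']}\n{message['content']}{IM_END}\n")
    if add_generation_prompt:
        # Leave an open assistant turn for the model to complete.
        parts.append(f"{IM_START}assistant\n")
    return "".join(parts)


messages = [
    {
        "role": "system",
        "content": (
            "You are MistralOrca, a large language model trained by Alignment Lab AI. "
            "Write out your reasoning step-by-step to be sure you get the right answers!"
        ),
    },
    {"role": "user", "content": "How are you"},
    {"role": "assistant", "content": "I am doing well!"},
    {
        "role": "user",
        "content": "Please tell me about how mistral winds have attracted super-orcas.",
    },
]

prompt = build_chatml_prompt(messages)
print(prompt)
```

The resulting string ends with an open assistant turn, so it can be tokenized and passed to a text-generation call as the prompt for the next reply.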

Evaluation

HuggingFace Leaderboard Performance

We evaluated using the methodology and tools of the HuggingFace Leaderboard and find a clear improvement over the base model: we reach 105% of the base model's performance on HF Leaderboard evals, averaging 65.33.

| Metric | Value |
|---|---|
| MMLU (5-shot) | 61.73 |
| ARC (25-shot) | 63.57 |
| HellaSwag (10-shot) | 83.79 |
| TruthfulQA (0-shot) | 52.24 |
| Avg. | 65.33 |

We use Language Model Evaluation Harness to run the benchmark tests above, using the same version as the HuggingFace LLM Leaderboard.
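As a quick check of the reported average, the sketch below recomputes it as the unweighted mean of the four benchmark scores from the table. Treating the average as a simple mean is an assumption, though it agrees with the reported figure.

```python
# Benchmark scores copied from the table above.
scores = {
    "MMLU (5-shot)": 61.73,
    "ARC (25-shot)": 63.57,
    "HellaSwag (10-shot)": 83.79,
    "TruthfulQA (0-shot)": 52.24,
}

# Unweighted mean across the four benchmarks.
average = sum(scores.values()) / len(scores)
print(f"Average: {average:.2f}")
```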

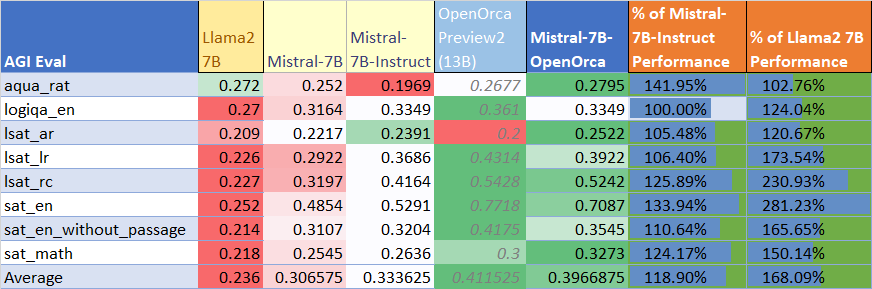

AGIEval Performance

We compare our results to the base Mistral-7B model (using LM Evaluation Harness).

We reach 129% of the base model's performance on AGI Eval, averaging 0.397.

We also significantly improve upon the official mistralai/Mistral-7B-Instruct-v0.1 fine-tune, achieving 119% of its performance.

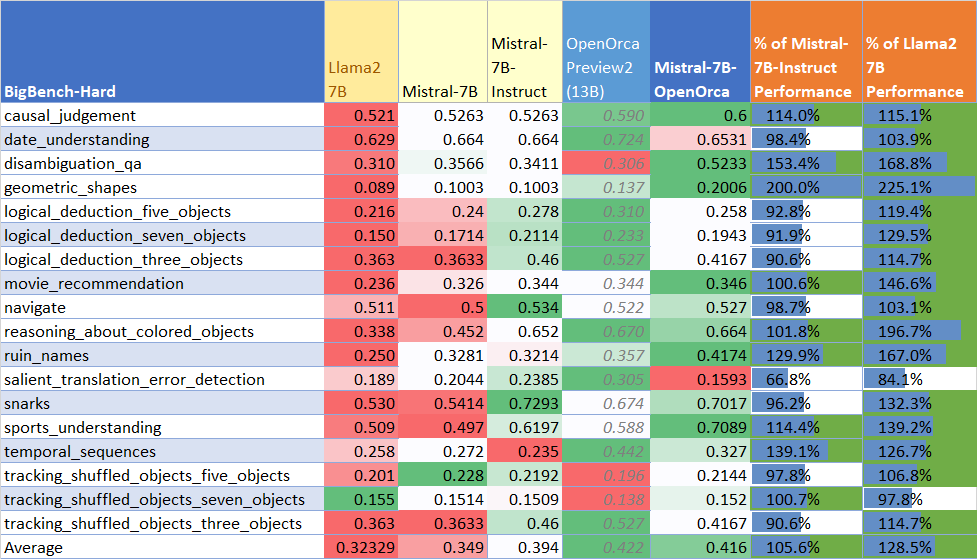

BigBench-Hard Performance

We compare our results to the base Mistral-7B model (using LM Evaluation Harness).

We reach 119% of the base model's performance on BigBench-Hard, averaging 0.416.

Dataset

We used a curated, filtered selection of most of the GPT-4 augmented data from our OpenOrca dataset, which aims to reproduce the Orca Research Paper dataset.

Training

We trained on 8x A6000 GPUs for 62 hours, completing 4 epochs of full fine-tuning on our dataset in one training run. Commodity cost was ~$400.
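The training figures above imply a rough compute budget, sketched below. The derived numbers (GPU-hours, cost per GPU-hour, hours per epoch) are back-of-the-envelope estimates computed from the stated values, not figures reported by the authors, and they assume the ~$400 covers all 8 GPUs for the full 62 hours.

```python
# Values stated in the Training section.
NUM_GPUS = 8
WALL_CLOCK_HOURS = 62
NUM_EPOCHS = 4
TOTAL_COST_USD = 400  # approximate ("~$400")

# Derived estimates (assumptions, not reported figures).
gpu_hours = NUM_GPUS * WALL_CLOCK_HOURS
cost_per_gpu_hour = TOTAL_COST_USD / gpu_hours
hours_per_epoch = WALL_CLOCK_HOURS / NUM_EPOCHS

print(f"GPU-hours: {gpu_hours}")
print(f"Approx. cost per GPU-hour: ${cost_per_gpu_hour:.2f}")
print(f"Approx. wall-clock hours per epoch: {hours_per_epoch:.1f}")
```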

Citation

@misc{mukherjee2023orca,

title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},

author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},

year={2023},

eprint={2306.02707},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

@misc{longpre2023flan,

title={The Flan Collection: Designing Data and Methods for Effective Instruction Tuning},

author={Shayne Longpre and Le Hou and Tu Vu and Albert Webson and Hyung Won Chung and Yi Tay and Denny Zhou and Quoc V. Le and Barret Zoph and Jason Wei and Adam Roberts},

year={2023},

eprint={2301.13688},

archivePrefix={arXiv},

primaryClass={cs.AI}

}