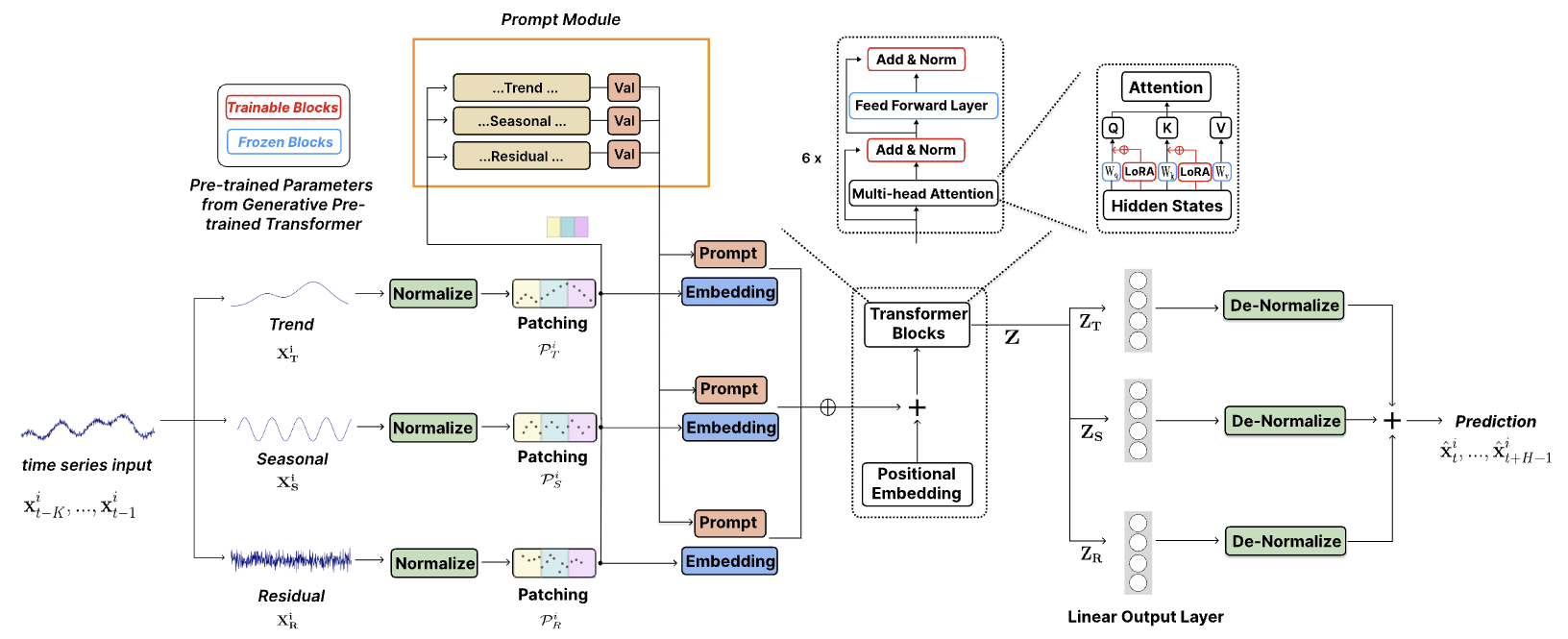

TEMPO: Prompt-based Generative Pre-trained Transformer for Time Series Forecasting

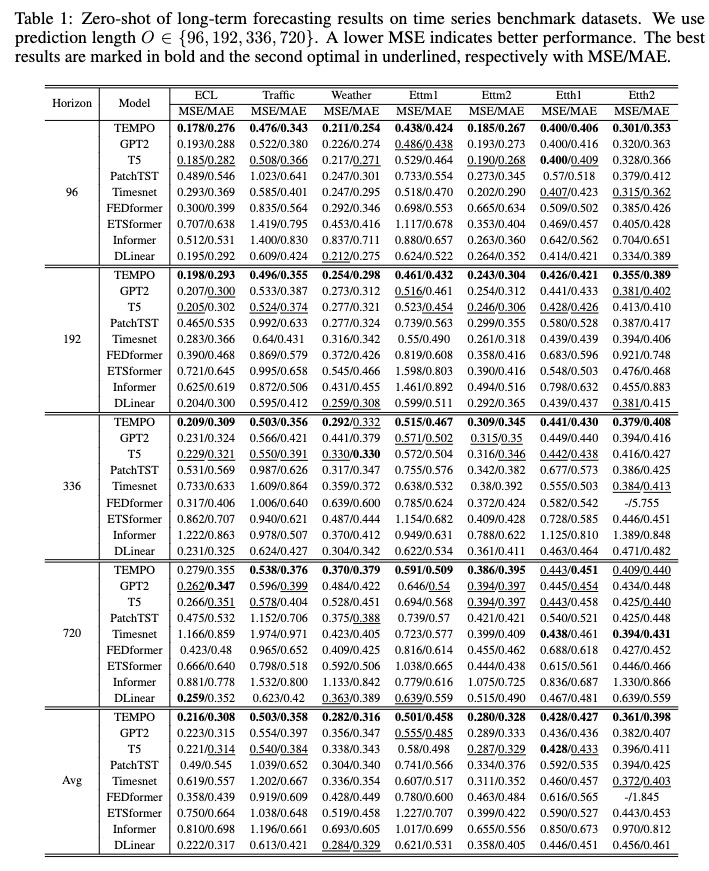

This is the official code for ["TEMPO: Prompt-based Generative Pre-trained Transformer for Time Series Forecasting (ICLR 2024)"]. TEMPO (v1.0) is one of the very first open-source time series foundation models for forecasting tasks.

💡 Demos

1. Reproducing zero-shot experiments on ETTh2:

You can reproduce the zero-shot experiments on ETTh2 [here on Colab].

2. Zero-shot experiments on a custom dataset:

The following Colab notebook demonstrates how to build a custom dataset and run inference directly with our pre-trained foundation model: [Colab]
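The Colab notebook itself is not reproduced here. As a minimal sketch of what building a custom dataset might involve, the snippet below slices a 1-D series into input/target windows. The 336-step input and 96-step horizon mirror the script demo in this README; the function name `make_windows`, the `stride` parameter, and the synthetic series are illustrative assumptions, not part of the TEMPO codebase.

```python
import numpy as np


def make_windows(series, input_len=336, pred_len=96, stride=96):
    """Slice a 1-D series into (input, target) pairs (hypothetical helper)."""
    series = np.asarray(series, dtype=np.float32)
    if series.ndim != 1:
        raise ValueError("expected a 1-D series")
    total = input_len + pred_len
    if len(series) < total:
        raise ValueError(f"need at least {total} points, got {len(series)}")
    # Each window starts `stride` steps after the previous one.
    starts = range(0, len(series) - total + 1, stride)
    inputs = np.stack([series[s:s + input_len] for s in starts])
    targets = np.stack([series[s + input_len:s + total] for s in starts])
    return inputs, targets


# Synthetic stand-in for a real column of your own data (assumption).
t = np.arange(1000)
rng = np.random.default_rng(0)
custom_series = np.sin(2 * np.pi * t / 24) + 0.1 * rng.normal(size=t.size)

inputs, targets = make_windows(custom_series)
print(inputs.shape, targets.shape)
```

Each row of `inputs` could then be passed to the model one at a time, with the matching row of `targets` held back for comparison against the forecast.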

🔧 Hands-on: Using Foundation Model

1. Download the repo

git clone git@github.com:DC-research/TEMPO.git

2. [Optional] Download the model and config file via commands

huggingface-cli download Melady/TEMPO config.json --local-dir ./TEMPO/TEMPO_checkpoints

huggingface-cli download Melady/TEMPO TEMPO-80M_v1.pth --local-dir ./TEMPO/TEMPO_checkpoints

huggingface-cli download Melady/TEMPO TEMPO-80M_v2.pth --local-dir ./TEMPO/TEMPO_checkpoints
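If you prefer to fetch the same files from Python, the `huggingface_hub` package provides `hf_hub_download`. The sketch below assumes that package is installed; the helper name `download_checkpoints` and its defaults are illustrative and are not part of the TEMPO repository. The repo ID, file names, and target directory are taken from the commands above.

```python
from pathlib import Path

REPO_ID = "Melady/TEMPO"
CHECKPOINT_FILES = ["config.json", "TEMPO-80M_v1.pth", "TEMPO-80M_v2.pth"]


def download_checkpoints(local_dir="./TEMPO/TEMPO_checkpoints", files=CHECKPOINT_FILES):
    """Download the listed files into local_dir (hypothetical helper)."""
    # Imported lazily so this module can be loaded without huggingface_hub.
    from huggingface_hub import hf_hub_download

    target = Path(local_dir)
    target.mkdir(parents=True, exist_ok=True)
    return [
        hf_hub_download(repo_id=REPO_ID, filename=name, local_dir=str(target))
        for name in files
    ]


# Uncomment to fetch the files (requires network access):
# for path in download_checkpoints():
#     print(path)
```

Passing a shorter `files` list, for example only `config.json` and one checkpoint, avoids downloading both model versions.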

3. Build the environment

conda create -n tempo python=3.8

conda activate tempo

cd TEMPO

pip install -r requirements.txt

4. Script Demo

A streamlined example showing how to perform forecasting with TEMPO:

# Third-party library imports
import numpy as np
import torch
from numpy.random import choice

# Local imports
from models.TEMPO import TEMPO

model = TEMPO.load_pretrained_model(
    device=torch.device('cuda:0' if torch.cuda.is_available() else 'cpu'),
    repo_id="Melady/TEMPO",
    filename="TEMPO-80M_v1.pth",
    cache_dir="./checkpoints/TEMPO_checkpoints"
)

input_data = np.random.rand(336)  # Random input data

with torch.no_grad():
    predicted_values = model.predict(input_data, pred_length=96)

print("Predicted values:")
print(predicted_values)

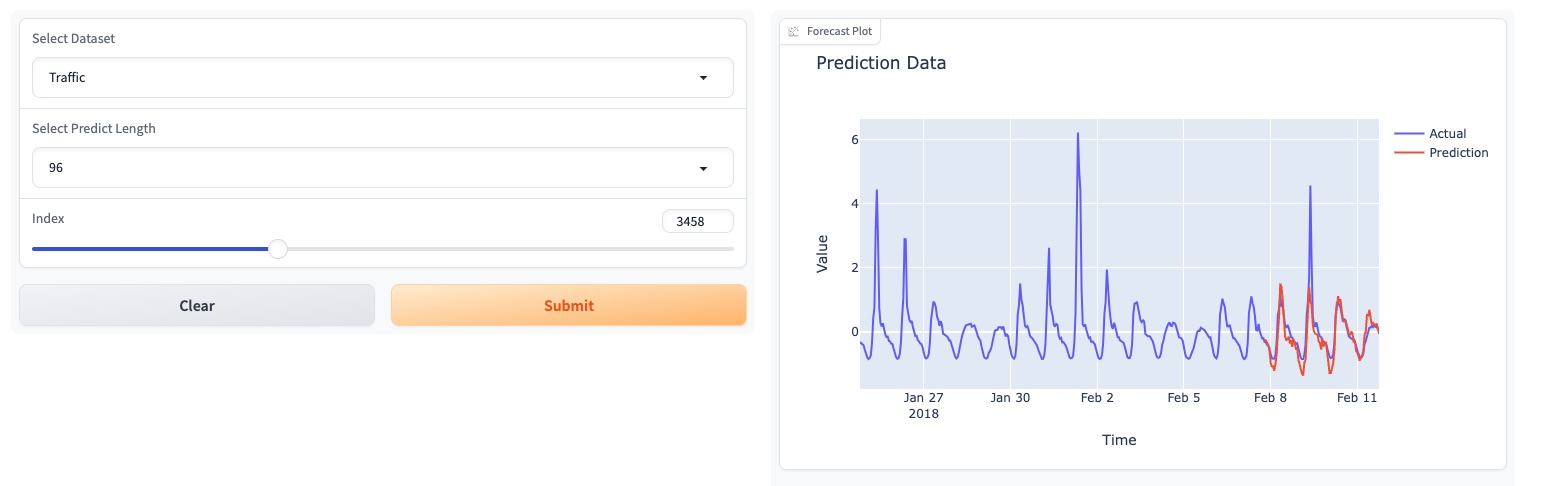
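The script demo feeds random values to the model. For real data you would typically take the most recent 336 observations of your series. The README does not say whether `model.predict` normalizes its input internally, so the sketch below standardizes the window as a precaution and keeps the statistics so forecasts can be mapped back to the original scale. The helpers `prepare_window` and `restore_scale` and the synthetic `history` series are hypothetical illustrations, not TEMPO APIs.

```python
import numpy as np


def prepare_window(series, input_len=336, eps=1e-8):
    """Take the last input_len points and z-score them (hypothetical helper)."""
    series = np.asarray(series, dtype=np.float64)
    if len(series) < input_len:
        raise ValueError(f"need at least {input_len} points, got {len(series)}")
    window = series[-input_len:]
    mean, std = window.mean(), window.std()
    scaled = (window - mean) / (std + eps)
    return scaled, mean, std


def restore_scale(values, mean, std, eps=1e-8):
    """Invert the z-scoring applied by prepare_window."""
    return np.asarray(values) * (std + eps) + mean


# Synthetic stand-in for your own measurements (assumption).
history = 50.0 + 10.0 * np.sin(np.arange(500) / 12.0)
scaled, mean, std = prepare_window(history)

# `scaled` could replace the random input in the script demo, e.g.
# model.predict(scaled, pred_length=96), and the forecast could then be
# passed through restore_scale(forecast, mean, std).
roundtrip = restore_scale(scaled, mean, std)
print(scaled.shape, np.allclose(roundtrip, history[-336:]))
```

If the model already handles normalization, the extra standardization is unnecessary; compare forecasts with and without it on held-out data before relying on either.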

5. Online demo

Please try our foundation model demo [here].

🔨 Advanced Practice: Full Training Workflow!

We also updated our models on HuggingFace: [Melady/TEMPO].

1. Get Data

Download the data from [Google Drive] or [Baidu Drive] and place it in the folder ./dataset. You can also download the STL results from [Google Drive] and place them in the folder ./stl.

2. Run Scripts

2.1 Pre-Training Stage

bash [ecl, etth1, etth2, ettm1, ettm2, traffic, weather].sh

2.2 Test/ Inference Stage

After training, you can test the TEMPO model under the zero-shot setting:

bash [ecl, etth1, etth2, ettm1, ettm2, traffic, weather]_test.sh

Pre-trained Models

You can download the pre-trained model from [Google Drive] and then run the test script for fun.

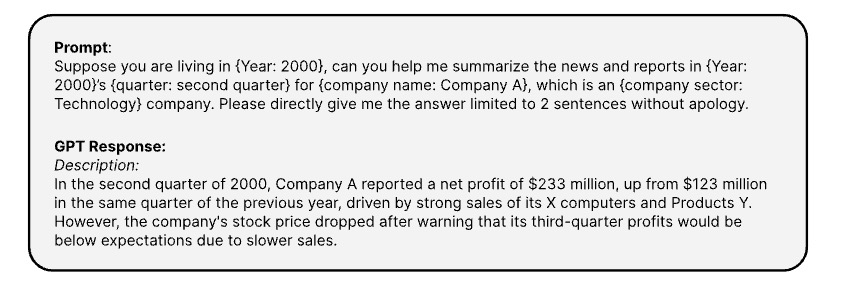

TETS dataset

Here are the prompts used to generate the corresponding textual information for the time series via the [OPENAI ChatGPT-3.5 API]:

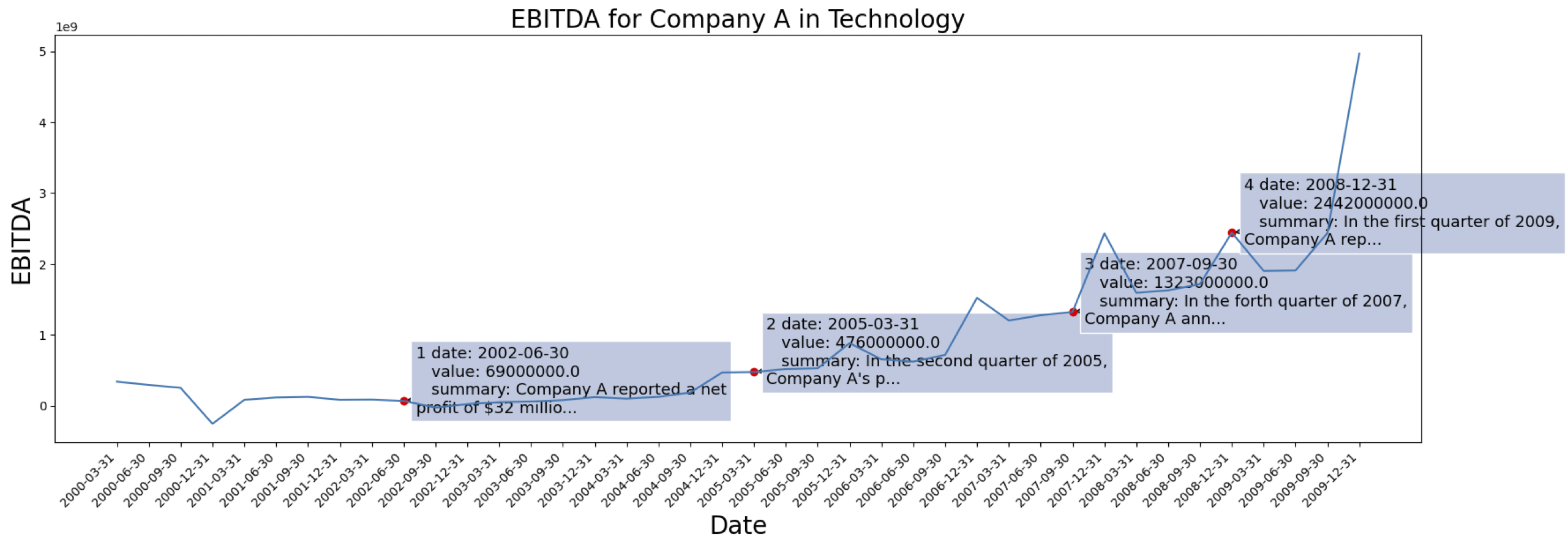

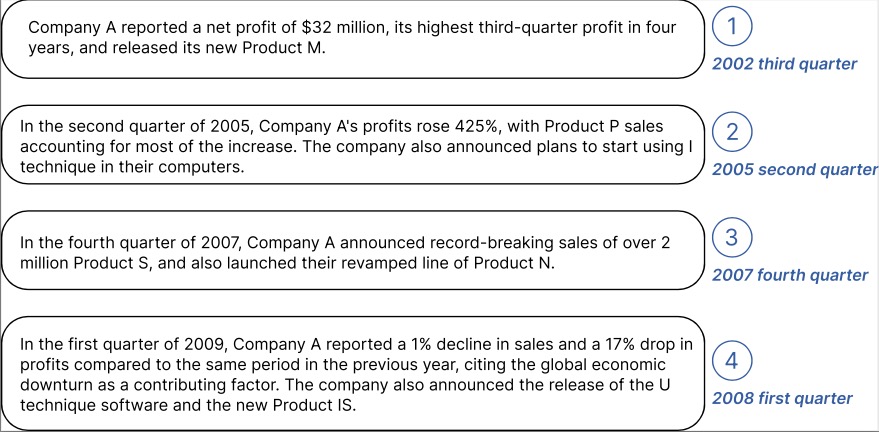

The time series data come from the [S&P 500]. Here is the EBITDA case for one company from the dataset:

Example of generated contextual information for the Company marked above:

You can download the processed data, including the GPT2 text embeddings, from [TETS].

🚀 News

Oct 2024: 🚀 We've streamlined our code structure, enabling users to download the pre-trained model and perform zero-shot inference with a single line of code! Check out our demo for more details. Our model's download count on HuggingFace is now trackable!

Jun 2024: 🚀 We added Colab demos for reproducing the zero-shot experiments. We also added a demo that builds a custom dataset and runs inference directly with our pre-trained foundation model: Colab

May 2024: 🚀 TEMPO has launched a GUI-based online demo, allowing users to directly interact with our foundation model!

May 2024: 🚀 TEMPO published the 80M pre-trained foundation model on HuggingFace!

May 2024: 🧪 We added the code for pre-training TEMPO models and running inference with them. You can find a pre-training script demo in this folder. We also added a script for the inference demo.

Mar 2024: 📈 Released TETS dataset from S&P 500 used in multimodal experiments in TEMPO.

Mar 2024: 🧪 TEMPO published the project code and the pre-trained checkpoint online!

Jan 2024: 🚀 The TEMPO paper was accepted by ICLR!

Oct 2023: 🚀 The TEMPO paper was released on arXiv!

⏳ Upcoming Features

- [✅] Parallel pre-training pipeline

- [] Probabilistic forecasting

- [] Multimodal dataset

- [] Multimodal pre-training script

Contact

Feel free to contact DefuCao@USC.EDU / YanLiu.CS@USC.EDU if you’re interested in applying TEMPO to your real-world application.

Cite our work

@inproceedings{

cao2024tempo,

title={{TEMPO}: Prompt-based Generative Pre-trained Transformer for Time Series Forecasting},

author={Defu Cao and Furong Jia and Sercan O Arik and Tomas Pfister and Yixiang Zheng and Wen Ye and Yan Liu},

booktitle={The Twelfth International Conference on Learning Representations},

year={2024},

url={https://openreview.net/forum?id=YH5w12OUuU}

}

@article{

Jia_Wang_Zheng_Cao_Liu_2024,

title={GPT4MTS: Prompt-based Large Language Model for Multimodal Time-series Forecasting},

volume={38},

url={https://ojs.aaai.org/index.php/AAAI/article/view/30383},

DOI={10.1609/aaai.v38i21.30383},

number={21},

journal={Proceedings of the AAAI Conference on Artificial Intelligence},

author={Jia, Furong and Wang, Kevin and Zheng, Yixiang and Cao, Defu and Liu, Yan},

year={2024}, month={Mar.}, pages={23343-23351}

}


Model tree for Melady/TEMPO

Base model

openai-community/gpt2