GGUFs

Quantized versions of this model are available:

- https://huggingface.co/HaileyStorm/llama3-5.4b-instruct-Q8_0-GGUF

- https://huggingface.co/HaileyStorm/llama3-5.4b-instruct-Q6_K-GGUF

- https://huggingface.co/HaileyStorm/llama3-5.4b-instruct-Q5_K_M-GGUF

- https://huggingface.co/HaileyStorm/llama3-5.4b-instruct-Q4_0-GGUF

Pruned & Tuned

This is a "merge" of pre-trained language models created using mergekit. It is a prune of Meta-Llama-3-8B-Instruct from 32 layers down to 20, or about 5.4B parameters, which is roughly 67% of the original's size. Mostly, this is a test of (significant) pruning and healing of an instruct-tuned model.
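Keeping 20 of 32 layers gives about 67% of the parameters, not 62.5%, because the token embeddings and output head are not pruned. The sketch below reproduces that arithmetic. It assumes the published Meta-Llama-3-8B architecture values (hidden size 4096, MLP size 14336, 8 key/value heads of dimension 128, vocabulary 128256, untied embeddings, no biases). Those values come from that model's config, not from this card.

```python
# Parameter count for a Llama-3-8B-shaped decoder with a variable number of layers.
# Assumed architecture values, taken from the Meta-Llama-3-8B config.
HIDDEN = 4096
INTERMEDIATE = 14336
VOCAB = 128256
NUM_KV_HEADS = 8
HEAD_DIM = 128


def params_per_layer() -> int:
    kv_dim = NUM_KV_HEADS * HEAD_DIM  # grouped-query attention: K/V are narrower than Q
    attention = 2 * HIDDEN * HIDDEN + 2 * HIDDEN * kv_dim  # q_proj + o_proj, k_proj + v_proj
    mlp = 3 * HIDDEN * INTERMEDIATE  # gate_proj, up_proj, down_proj
    norms = 2 * HIDDEN  # input and post-attention RMSNorm weights
    return attention + mlp + norms


def total_params(num_layers: int) -> int:
    embeddings = VOCAB * HIDDEN  # input token embeddings (not pruned)
    lm_head = VOCAB * HIDDEN  # untied output projection (not pruned)
    final_norm = HIDDEN
    return num_layers * params_per_layer() + embeddings + lm_head + final_norm


if __name__ == "__main__":
    full, pruned = total_params(32), total_params(20)
    print(f"32 layers: {full / 1e9:.2f}B")
    print(f"20 layers: {pruned / 1e9:.2f}B")
    print(f"ratio: {pruned / full:.1%}")
```

Under these assumptions it prints 8.03B for 32 layers, 5.41B for 20 layers, and a ratio of 67.4%.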

Healing / Finetune

I healed the model with a full-weight DPO finetune on 139k samples (3.15 epochs), followed by a LoRA finetune (r=128, alpha=256) on 73k samples (1.67 epochs). Both used an 8k sequence length.
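For readers unfamiliar with the two healing stages: DPO trains the model to raise the log-probability of a preferred response relative to a frozen reference model, and a LoRA with r=128, alpha=256 adds low-rank weight updates scaled by alpha/r = 2. The plain-Python sketch below illustrates both quantities. It is not the training code used here: the card does not say which trainer, DPO beta, or LoRA target modules were used, so beta=0.1 and the q_proj shape in the usage lines are assumptions for illustration.

```python
import math


def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """DPO loss for one preference pair, given summed log-probs of each response.

    loss = -log(sigmoid(beta * ((pi_c - ref_c) - (pi_r - ref_r))))
    beta=0.1 is an assumed default, not a value reported in this card.
    """
    z = beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))
    # -log(sigmoid(z)) == softplus(-z), computed in a numerically stable way
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))


def lora_scaling(r: int, alpha: int) -> float:
    """LoRA scales the low-rank update B @ A by alpha / r."""
    return alpha / r


def lora_params(d_in: int, d_out: int, r: int) -> int:
    """Trainable parameters for one adapted linear layer: A is r x d_in, B is d_out x r."""
    return r * (d_in + d_out)


if __name__ == "__main__":
    print(lora_scaling(r=128, alpha=256))  # 2.0
    # Hypothetical example: adapting a 4096 -> 4096 projection such as q_proj
    print(lora_params(4096, 4096, r=128))  # 1048576
    # Policy prefers the chosen response more than the reference does, so loss < log(2)
    print(dpo_loss(-50.0, -60.0, -52.0, -58.0))
```

The last line prints roughly 0.513; the loss falls below log(2) ≈ 0.693 whenever the policy favors the chosen response more strongly than the reference model does.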

Prior to healing, the model returned absolute gibberish to any prompt, rarely producing two real words in a row. For example, given "2+2=" it might return "Mahmisan Pannpyout Na RMITa CMI TTi GP BP GP RSi TBi DD PS..."

The results are pretty good! The model has issues, but could have legitimate uses. It can carry on a conversation. It's certainly usable, if not useful.

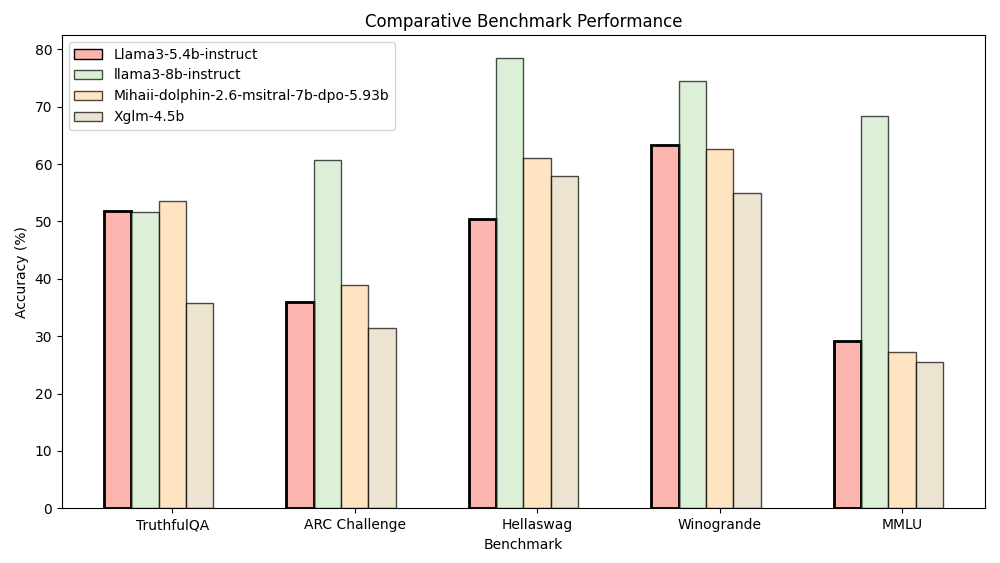

Truthfulness and commonsense reasoning suffered the least from the prune / were healed the best. Knowledge and complex reasoning suffered the most. This model has 67% of the original's parameters, and achieves:

- ~100% of the original's TruthfulQA score

- ~60% of the ARC Challenge score

- ~65% of the HellaSwag score

- ~85% of the Winogrande score

- ~45% of the MMLU score

That is an average of 69% of the original's benchmark scores for 67% of the parameters. Not bad! (Note: I had issues running the GSM8K and BBH benchmarks.) I believe it could be much better if the pruning were done in stages (say, 4 layers at a time) with some healing in between, followed by longer healing at the end on a more diverse dataset.

Benchmarks

Figure 1: Benchmark results for the pruned model, the original 8B model, and other models of similar size. Truthfulness and commonsense reasoning suffered the least from the prune / were healed the best. Knowledge and complex reasoning suffered the most.

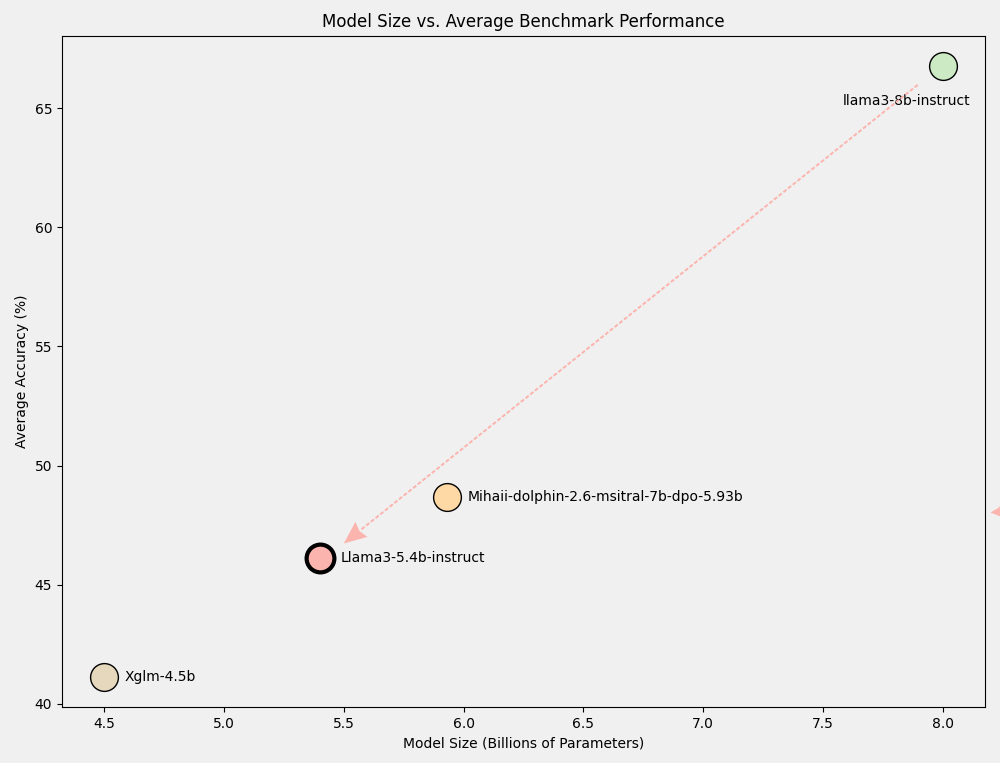

Figure 2: Model size vs average benchmark performance. Llama3-5.4b-instruct may not be fully healed, but its performance scales linearly with its size.

Why 5.4B?

This size should allow for:

- bf16 inference on 24GB VRAM

- Q8 or Q6 inference on 6GB VRAM

- Q5 inference on 4GB VRAM

- Fine-tuning on ... well, with less VRAM than an 8B model

And of course, as stated, it was a test of significant pruning, and of pruning and healing an instruct-tuned model. As a test, I think it's definitely successful.
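A rough way to sanity-check those VRAM targets is to estimate the size of the weights alone at each precision. The sketch below does that under stated assumptions: it uses the card's approximate 5.4B parameter count, treats every tensor as stored at one uniform precision, and ignores the KV cache, activations, and runtime overhead. The bits-per-weight figures for Q8_0, Q6_K, and Q4_0 follow llama.cpp's block layouts; the Q5_K_M value is an assumed average, since that format mixes quantization types.

```python
# Rough weight-only memory estimate; ignores KV cache, activations and runtime overhead.
PARAMS = 5.4e9  # approximate parameter count from this card

# Approximate bits per weight. Q5_K_M mixes quant types, so 5.7 is an assumed average.
BITS_PER_WEIGHT = {
    "bf16": 16.0,
    "Q8_0": 8.5,     # 32 int8 weights + one fp16 scale per block
    "Q6_K": 6.5625,  # 210 bytes per 256-weight super-block
    "Q5_K_M": 5.7,
    "Q4_0": 4.5,     # 32 4-bit weights + one fp16 scale per block
}


def weight_gib(bits_per_weight: float, params: float = PARAMS) -> float:
    """Size of the weights in GiB at the given precision."""
    return params * bits_per_weight / 8 / 2**30


if __name__ == "__main__":
    for name, bpw in BITS_PER_WEIGHT.items():
        print(f"{name:>7}: {weight_gib(bpw):5.2f} GiB")
```

By this estimate the bf16 weights take about 10 GiB, Q8_0 about 5.3 GiB, and Q5_K_M about 3.6 GiB, so the 6GB and 4GB cases leave little headroom; context length and KV cache size will decide whether they fit in practice.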

Mergekit Details

Merge Method

This model was merged using the passthrough merge method.

Models Merged

The following models were included in the merge:

- meta-llama/Meta-Llama-3-8B-Instruct

Configuration

The following YAML configuration was used to produce this model:

```yaml
dtype: bfloat16
merge_method: passthrough
slices:
- sources:
  - layer_range: [0, 16]
    model: meta-llama/Meta-Llama-3-8B-Instruct
- sources:
  - layer_range: [20, 21]
    model: meta-llama/Meta-Llama-3-8B-Instruct
- sources:
  - layer_range: [29, 32]
    model: meta-llama/Meta-Llama-3-8B-Instruct
```
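These slices keep a contiguous block at the bottom of the network plus a few layers near the top, and drop two blocks in the middle. The short Python sketch below enumerates the kept and removed layer indices. It reads each `layer_range` as half-open (end-exclusive), which is consistent with the "32 layers down to 20" stated above; `kept_layers` is a hypothetical helper, not part of mergekit.

```python
# Which of the original 32 decoder layers survive the passthrough slices above.
# layer_range is read as half-open [start, end), matching the 32 -> 20 layer count.
NUM_ORIGINAL_LAYERS = 32
SLICES = [(0, 16), (20, 21), (29, 32)]  # copied from the YAML config


def kept_layers(slices):
    """Original layer indices, in order, as they appear in the merged model."""
    kept = []
    for start, end in slices:
        kept.extend(range(start, end))
    return kept


if __name__ == "__main__":
    kept = kept_layers(SLICES)
    removed = sorted(set(range(NUM_ORIGINAL_LAYERS)) - set(kept))
    print(len(kept), kept)
    print(len(removed), removed)
```

This yields 20 kept layers (0 to 15, 20, and 29 to 31) and 12 removed layers (16 to 19 and 21 to 28).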

Weights & Biases Logs

Here are the logs for the full weight fine tune:

- https://wandb.ai/haileycollet/llama3-5b/runs/ryyqhc97

- https://wandb.ai/haileycollet/llama3-5b/runs/fpj2sct3

- https://wandb.ai/haileycollet/llama3-5b/runs/k9z6n9em

- https://wandb.ai/haileycollet/llama3-5b/runs/r3xqyhm2

And the LoRA logs:

Evaluation results

- TruthfulQA (0-Shot) on truthfulqa_mc2: 0.518 (self-reported)

- AI2 Reasoning Challenge (25-Shot) on ai2_arc: 0.360 (self-reported)

- HellaSwag (10-Shot) on hellaswag: 0.503 (self-reported)

- Winogrande (5-Shot) on winogrande: 0.634 (self-reported)

- MMLU (5-Shot) on mmlu: 0.291 (self-reported)