Model Card

📖 Technical report | 🏠 Code | 🐰 Demo

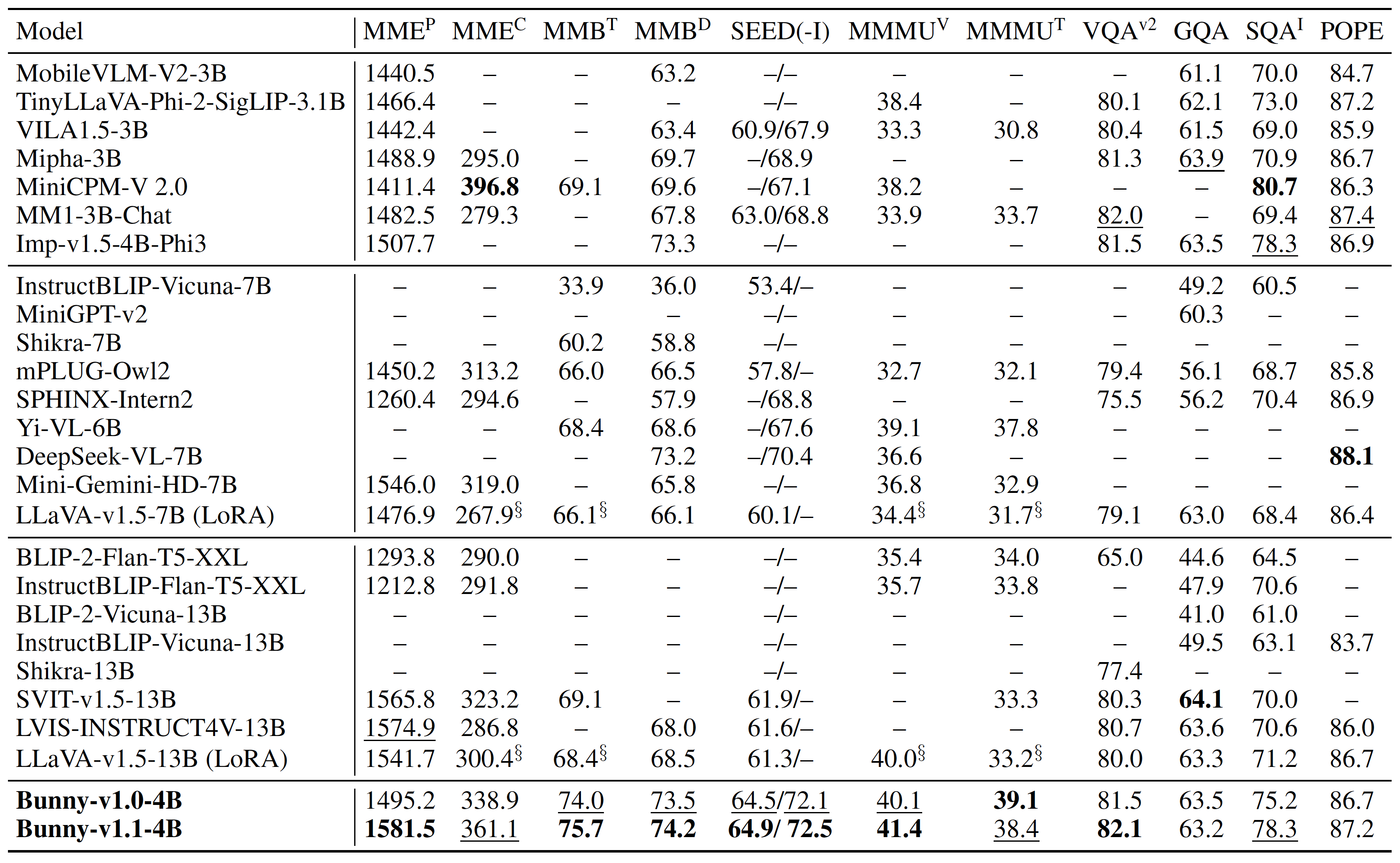

This is Bunny-v1.1-4B.

Bunny is a family of lightweight but powerful multimodal models. It offers multiple plug-and-play vision encoders, such as EVA-CLIP and SigLIP, and language backbones, including Phi-3-mini, Llama-3-8B, Phi-1.5, StableLM-2 and Phi-2. To compensate for the smaller model size, we construct more informative training data through curated selection from a broader data source.

We provide Bunny-v1.1-4B, which is built upon SigLIP and Phi-3-mini-4k-instruct with S²-Wrapper and supports 1152x1152 resolution. More details about this model can be found on GitHub.

Quickstart

The snippet below shows how to use the model with transformers.

Before running the snippet, you need to install the following dependencies:

pip install torch transformers accelerate pillow

If a single GPU has enough memory to hold the model, the snippet runs faster when the process is restricted to that GPU by setting CUDA_VISIBLE_DEVICES=0.

Users, especially those in mainland China, may want to use a Hugging Face mirror site.
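As a minimal sketch, the variable can also be set from inside Python rather than in the shell. This assumes the assignment runs before CUDA is initialized, which in practice means placing it before `import torch`; the `visible` name is only for illustration.

```python
import os

# Set this before `import torch`: CUDA reads the variable when it initializes,
# so changing it afterwards has no effect on the current process.
os.environ["CUDA_VISIBLE_DEVICES"] = "0"  # expose only the first GPU

# Illustrative check of what the process will be allowed to see.
visible = os.environ["CUDA_VISIBLE_DEVICES"].split(",")
print(f"GPUs visible to this process: {visible}")
```

With only one device visible, `device_map='auto'` has no second GPU to spread the model across.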
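One common way to point downloads at a mirror is the `HF_ENDPOINT` environment variable, which `huggingface_hub` reads when it is imported. The sketch below assumes the mirror serves the same API as the Hub; `MIRROR_URL` is a placeholder, not a real endpoint, and should be replaced with the mirror you actually use.

```python
import os

# Placeholder URL (an assumption for illustration): substitute your mirror here.
MIRROR_URL = "https://hf-mirror.example"

# Set this before importing transformers or huggingface_hub,
# because the endpoint is read once at import time.
os.environ["HF_ENDPOINT"] = MIRROR_URL
```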

import torch
import transformers
from transformers import AutoModelForCausalLM, AutoTokenizer
from PIL import Image
import warnings

# disable some warnings
transformers.logging.set_verbosity_error()
transformers.logging.disable_progress_bar()
warnings.filterwarnings('ignore')

# set device
device = 'cuda'  # or cpu
torch.set_default_device(device)

# create model
model = AutoModelForCausalLM.from_pretrained(
    'BAAI/Bunny-v1_1-4B',
    torch_dtype=torch.float16,  # float32 for cpu
    device_map='auto',
    trust_remote_code=True)
tokenizer = AutoTokenizer.from_pretrained(
    'BAAI/Bunny-v1_1-4B',
    trust_remote_code=True)

# text prompt
prompt = 'Why is the image funny?'
text = f"A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: <image>\n{prompt} ASSISTANT:"
text_chunks = [tokenizer(chunk).input_ids for chunk in text.split('<image>')]
input_ids = torch.tensor(text_chunks[0] + [-200] + text_chunks[1][1:], dtype=torch.long).unsqueeze(0).to(device)

# image, sample images can be found in images folder
image = Image.open('example_2.png')
image_tensor = model.process_images([image], model.config).to(dtype=model.dtype, device=device)

# generate
output_ids = model.generate(
    input_ids,
    images=image_tensor,
    max_new_tokens=100,
    use_cache=True,
    repetition_penalty=1.0  # increase this to avoid chattering
)[0]

print(tokenizer.decode(output_ids[input_ids.shape[1]:], skip_special_tokens=True).strip())
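The prompt construction in the snippet above is compact, so here is a small standalone sketch of the same splice using a toy tokenizer. It assumes the real tokenizer prepends one leading special token to every chunk, which is what the `[1:]` slice suggests. `splice_image_token` and `toy_tokenize` are hypothetical names made up for this illustration and are not part of the model's API.

```python
IMAGE_TOKEN_INDEX = -200  # sentinel id the snippet above puts in the image slot


def splice_image_token(text, tokenize, image_token="<image>"):
    """Tokenize the text around a single image placeholder and join the halves."""
    before, after = text.split(image_token)
    before_ids = tokenize(before)
    after_ids = tokenize(after)
    # Each chunk starts with its own leading special token;
    # keep it on the first chunk only and drop it from the second.
    return before_ids + [IMAGE_TOKEN_INDEX] + after_ids[1:]


def toy_tokenize(s):
    """Hypothetical tokenizer: a leading id of 1, then one id per character."""
    return [1] + [ord(c) for c in s]


ids = splice_image_token("USER: <image>\nhi", toy_tokenize)
print(ids)
```

The sentinel is not a real vocabulary id. It only marks the position where the model substitutes the image features, which is why the image is passed to `generate` separately through `images=`.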
