Model Card for Antibody Generator (Based on ProGen2)

Model Details

- Model Name: Antibody Generator

- Version: 1.0

- Release Date: 12/15/2023

- Model Developer: Joesph Roberts, David Noble, Rahul Suresh, Neel Patel

- Model Type: Protein Generation, based on the ProGen2 architecture.

- License: Apache 2.0

- Code Repository: https://github.com/joethequant/docker_protein_generator, https://github.com/joethequant/docker_streamlit_antibody_protein_generation

- Baseline Model Reference: ProGen2 Paper

Model Overview

The Antibody Generator is a specialized protein generation model developed for creating therapeutic antibodies. It is based on the ProGen2 model, an advanced language model developed by Salesforce. ProGen2, an enhancement of the original ProGen model launched in 2020, is pre-trained on a vast dataset of over 280 million protein sequences. With up to 6.4B parameters, ProGen2 demonstrates state-of-the-art performance in generating novel, viable protein sequences and predicting protein fitness.

Intended Use

- Primary Use Case: Generation of therapeutic antibody sequences for use in immunology, vaccine development, and medical treatments.

- Target Users: Researchers and practitioners in bioinformatics, molecular biology, and related fields.

Training Data

- Baseline Model Data: ProGen2 was trained on a large collection of protein sequences from genomic, metagenomic, and immune repertoire databases, totaling over 280 million samples.

- Fine-tuning Data: For fine-tuning, the Structural Antibody Database was used, comprising approximately 5,000 experimentally-resolved crystal structures of antibodies and their antigens.

Model Variants

Models are labeled as progen2_

- Size: This refers to the size of the progen2 base model that was used. There are 4 variants available:

- Small: 151M params

- Medium: 764M params

- Large: 2.7B params

- xLarge: 6.4B params

- Finetuning_type: This refers to how the base model was finetuned. 3 options are supported:

- No finetuning

- Simple finetuning: The base model is finetuned on approximately 5,000 experimentally-resolved crystal structures of antibodies and their antigens using the hyperparameters below.

- Frozen layer finetuning: The base model is finetuned on the same data with the same hyperparameters, but all layers except the last 3 are frozen to avoid overfitting.

- Prompting_type: This refers to whether the model was provided with any prompting during inference.

- Prompted: Use prompt engineering for generating therapeutic antibody sequences.

- Zeroshot: No prompting is provided.
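The frozen layer variant can be illustrated with a short sketch. This is not the training code used for this model: it assumes that "layers" means transformer blocks, that block parameters are named like `transformer.h.<index>.<...>` (the GPT-style naming we expect from the ProGen2 code base), and that everything outside the last 3 blocks, including embeddings and the output head, stays frozen. The helper names `layer_index`, `select_trainable`, and `freeze_all_but_last` are hypothetical.

```python
import re

# Assumed parameter naming: "transformer.h.<block index>.<rest>"
LAYER_RE = re.compile(r"\.h\.(\d+)\.")


def layer_index(param_name):
    """Return the transformer block index encoded in a parameter name, or None."""
    match = LAYER_RE.search(param_name)
    return int(match.group(1)) if match else None


def select_trainable(param_names, n_unfrozen=3):
    """Return the names of parameters that belong to the last n_unfrozen blocks."""
    indices = [i for i in map(layer_index, param_names) if i is not None]
    if not indices:
        return set()
    cutoff = max(indices) - n_unfrozen + 1
    return {
        name for name in param_names
        if layer_index(name) is not None and layer_index(name) >= cutoff
    }


def freeze_all_but_last(model, n_unfrozen=3):
    """Set requires_grad so only the last n_unfrozen blocks of a PyTorch model train."""
    names = [name for name, _ in model.named_parameters()]
    keep = select_trainable(names, n_unfrozen)
    for name, param in model.named_parameters():
        param.requires_grad = name in keep
    return keep
```

Calling `freeze_all_but_last(model)` before building the optimizer, and passing only parameters with `requires_grad=True` to it, keeps the frozen weights at their pre-trained values.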

Model Hyperparameters

- Batch size: 40

- Epochs: 10

- Learning rate: 0.00001
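To give a sense of scale, the sketch below turns these hyperparameters into a number of optimizer steps. It assumes all of the roughly 5,000 fine-tuning examples are used for training, with no gradient accumulation and a single device; the actual split and setup are not stated here. `FinetuneConfig` and `optimizer_steps` are illustrative names, not part of the released code.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FinetuneConfig:
    """Hyperparameters listed in this model card."""
    batch_size: int = 40
    epochs: int = 10
    learning_rate: float = 1e-5


def optimizer_steps(num_examples, cfg):
    """Return (steps per epoch, total steps) for a plain mini-batch loop."""
    steps_per_epoch = math.ceil(num_examples / cfg.batch_size)
    return steps_per_epoch, steps_per_epoch * cfg.epochs


cfg = FinetuneConfig()
per_epoch, total = optimizer_steps(5000, cfg)  # 5000 is the approximate dataset size
print(per_epoch, total)  # 125 1250
```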

Evaluation and Performance

Evaluation Tools:

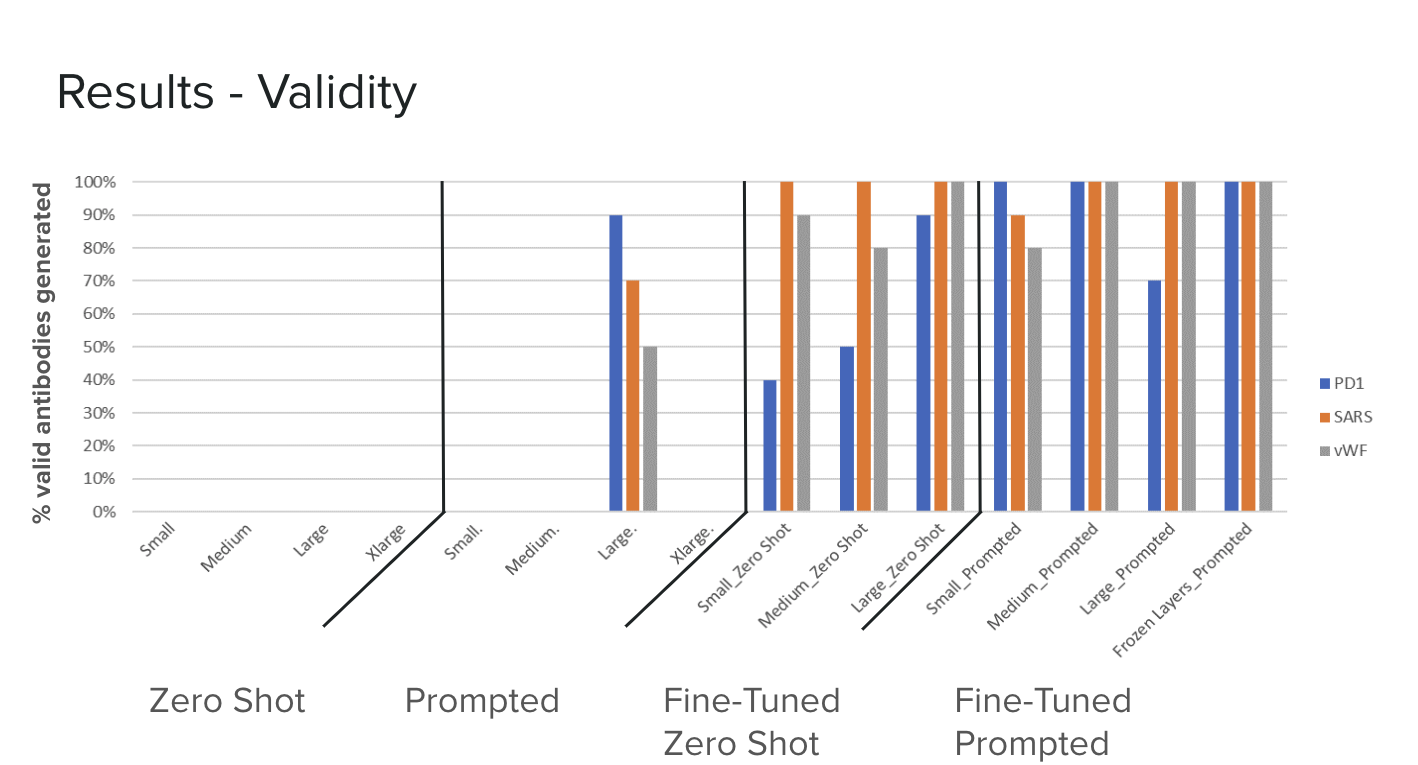

- ANARCI: The model is evaluated using ANARCI, a tool for antibody numbering and receptor classification. ANARCI is used to check whether the generated sequences conform to known antibody sequence patterns and frameworks, which helps assess the structural viability of the model's outputs. This evaluation is crucial to ensure that the generated sequences are not only novel but also biologically relevant and potentially functional.
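One possible way to turn this check into a number is the fraction of generated sequences that ANARCI can number at all. The sketch below is an assumption about how such a check could look, not the evaluation script used for this model. It relies on the `anarci` function from the ANARCI Python package (which also needs HMMER installed) and on its documented behavior of returning `None` in the numbering list for sequences with no recognized antibody domain. `number_with_anarci` and `numbered_fraction` are hypothetical helper names.

```python
def number_with_anarci(named_sequences, scheme="imgt"):
    """Run ANARCI on a list of (name, sequence) pairs and return its numbering list.

    The import is local so the rest of this sketch works without ANARCI installed.
    """
    from anarci import anarci  # assumption: ANARCI package and HMMER are available

    numbering, _alignment_details, _hit_tables = anarci(
        named_sequences, scheme=scheme, output=False
    )
    return numbering


def numbered_fraction(numbering):
    """Fraction of sequences for which ANARCI found at least one antibody domain."""
    if not numbering:
        return 0.0
    return sum(entry is not None for entry in numbering) / len(numbering)
```

For example, `numbered_fraction(number_with_anarci([("cand_1", seq_1), ("cand_2", seq_2)]))` would report the share of candidates that ANARCI recognizes as antibody variable domains.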

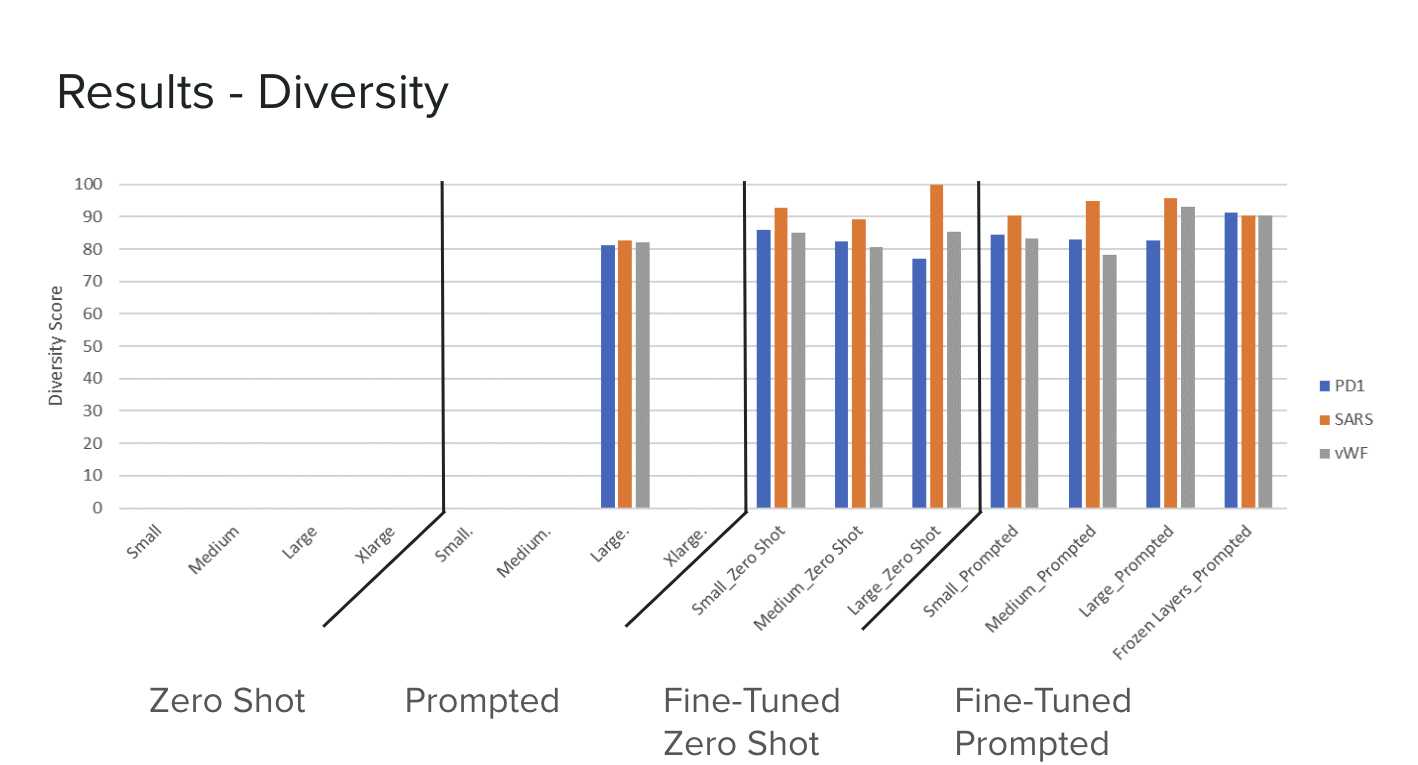

- Diversity Score: We measure how diverse each model’s outputs are by computing the sequence similarity for every pair of generated candidates. The mean of this distribution indicates how widely the outputs vary (a lower mean similarity means more diverse outputs), which is useful for downstream evaluation of the candidates. We compute the mean similarity over the entire variable sequence and over the HCDR3 region alone.
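The pairwise computation can be sketched as follows. The similarity measure used for the reported scores is not specified here, so this example substitutes the ratio from Python's `difflib.SequenceMatcher` as a stand-in; an alignment-based identity score could be swapped in. `similarity` and `mean_pairwise_similarity` are illustrative names.

```python
from difflib import SequenceMatcher
from itertools import combinations
from statistics import mean


def similarity(seq_a, seq_b):
    """Stand-in similarity in [0, 1]; 1.0 means the two sequences are identical."""
    return SequenceMatcher(None, seq_a, seq_b, autojunk=False).ratio()


def mean_pairwise_similarity(sequences):
    """Average similarity over every unordered pair of sequences."""
    pairs = list(combinations(sequences, 2))
    if not pairs:
        raise ValueError("need at least two sequences to compare")
    return mean(similarity(a, b) for a, b in pairs)
```

The same function can be applied to full variable sequences or to HCDR3 substrings only, provided the HCDR3 regions have been extracted beforehand (for example from a numbering tool's output).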

Performance and analytics:

Ethical Considerations

- Use Case Limitations: Generated antibodies should be validated experimentally before clinical or research applications.

- Misuse Potential: Users should be aware of the potential misuse of generated sequences in harmful applications.

How to Use

Instructions on how to use the model, including example prompts and API documentation, are available in the Code Repository.

Example Code

from models.progen.modeling_progen import ProGenForCausalLM

import torch

from tokenizers import Tokenizer

# Define the model identifier from Hugging Face's model hub

model_path = 'AntibodyGeneration/fine-tuned-progen2-small'

# Load the model and tokenizer

model = ProGenForCausalLM.from_pretrained(model_path)

tokenizer = Tokenizer.from_file('tokenizer.json')

# Define your sequence and other parameters

target_sequence = 'MQIPQAPWPVVWAVLQLGWRPGWFLDSPDRPWNPPTFSPALLVVTEGDNATFTCSFSNTSESFVLNWYRMSPSNQTDKLAAFPEDRSQPGQDCRFRVTQLPNGRDFHMSVVRARRNDSGTYLCGAISLAPKAQIKESLRAELRVTERRAEVPTAHPSPSPRPAGQFQTLVVGVVGGLLGSLVLLVWVLAVICSRAARGTIGARRTGQPLKEDPSAVPVFSVDYGELDFQWREKTPEPPVPCVPEQTEYATIVFPSGMGTSSPARRGSADGPRSAQPLRPEDGHCSWPL'

number_of_sequences = 2

# Tokenize the sequence. tokenizers.Tokenizer is not callable, so encode explicitly

# and wrap the token ids in a tensor of shape (1, sequence_length)

tokenized_sequence = torch.tensor(tokenizer.encode(target_sequence).ids).unsqueeze(0)

# Move model and tensors to CUDA if available

device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

model = model.to(device)

tokenized_sequence = tokenized_sequence.to(device)

# Padding token id; 0 is an assumption based on the tokenizer.json distributed with ProGen2

pad_token_id = 0

# Generate sequences

with torch.no_grad():
    output = model.generate(tokenized_sequence, max_length=1024, pad_token_id=pad_token_id, do_sample=True, top_p=0.9, temperature=0.8, num_return_sequences=number_of_sequences)

# Decode the output token ids to get the generated sequences

generated_sequences = [tokenizer.decode(output_seq.tolist(), skip_special_tokens=True) for output_seq in output]

Additional Resources and Links

Limitations and Future Work

- Predictions require experimental validation for practical use.

- Future improvements will focus on incorporating diverse training data and enhancing prediction accuracy for the efficacy of generated antibodies.

Contact Information

For questions or feedback regarding this model, please contact [XYZ].
