---
license: llama2
datasets:
- wyt2000/InverseCoder-CL-13B-Evol-Instruct-90K
- ise-uiuc/Magicoder-Evol-Instruct-110K
library_name: transformers
pipeline_tag: text-generation
tags:
- code
model-index:
- name: InverseCoder-CL-13B
  results:
  - task:
      type: text-generation
    dataset:
      type: openai_humaneval
      name: HumanEval
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.799
      verified: false
  - task:
      type: text-generation
    dataset:
      type: openai_humaneval
      name: HumanEval(+)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.744
      verified: false
  - task:
      type: text-generation
    dataset:
      type: mbpp
      name: MBPP
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.746
      verified: false
  - task:
      type: text-generation
    dataset:
      type: mbpp
      name: MBPP(+)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.63
      verified: false
  - task:
      type: text-generation
    dataset:
      type: ds1000
      name: DS-1000 (Overall Completion)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.431
      verified: false
  - task:
      type: text-generation
    dataset:
      type: nuprl/MultiPL-E
      name: MultiPL-HumanEval (Java)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.545
      verified: false
  - task:
      type: text-generation
    dataset:
      type: nuprl/MultiPL-E
      name: MultiPL-HumanEval (JavaScript)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.654
      verified: false
  - task:
      type: text-generation
    dataset:
      type: nuprl/MultiPL-E
      name: MultiPL-HumanEval (C++)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.581
      verified: false
  - task:
      type: text-generation
    dataset:
      type: nuprl/MultiPL-E
      name: MultiPL-HumanEval (PHP)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.553
      verified: false
  - task:
      type: text-generation
    dataset:
      type: nuprl/MultiPL-E
      name: MultiPL-HumanEval (Swift)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.525
      verified: false
  - task:
      type: text-generation
    dataset:
      type: nuprl/MultiPL-E
      name: MultiPL-HumanEval (Rust)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.556
      verified: false
  - task:
      type: text-generation
    dataset:
      type: nuprl/MultiPL-E
      name: MultiPL-HumanEval (Average for non-python languages)
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.569
      verified: false
---

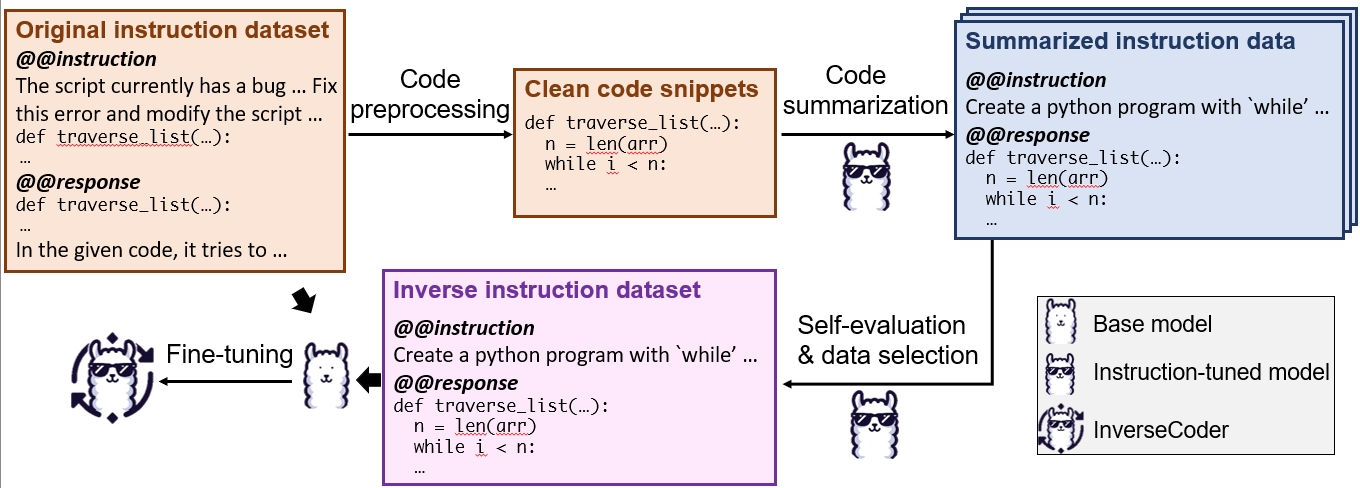

InverseCoder: Unleashing the Power of Instruction-Tuned Code LLMs with Inverse-Instruct

InverseCoder is a series of code LLMs that are instruction-tuned on data they generate from themselves through Inverse-Instruct.

Models and Datasets

Usage

As with Magicoder-S-DS-6.7B, use the code below to get started with the model. Make sure you have installed the transformers library.

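from transformers import pipeline
import torch

# Prompt template the model expects; {instruction} is filled in below.
INVERSECODER_PROMPT = """You are an exceptionally intelligent coding assistant that consistently delivers accurate and reliable responses to user instructions.

@@ Instruction
{instruction}

@@ Response
"""

# Replace the placeholder with your own coding instruction.
instruction = "<Your code instruction here>"

prompt = INVERSECODER_PROMPT.format(instruction=instruction)

# Load the model in bfloat16 and place it on the available devices automatically.
generator = pipeline(
    model="wyt2000/InverseCoder-CL-13B",
    task="text-generation",
    torch_dtype=torch.bfloat16,
    device_map="auto",
)

# Greedy (deterministic) decoding.
result = generator(prompt, max_length=1024, num_return_sequences=1, do_sample=False)

# The generated text contains the prompt followed by the model's response.
print(result[0]["generated_text"])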
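
By default, the text-generation pipeline returns the prompt followed by the completion, so the printed text begins with the system line and the instruction. The sketch below shows one way to build prompts and keep only the model's answer. The helper names `build_prompt` and `extract_response` are hypothetical and not part of the InverseCoder release; the template is assumed to match the one in the usage code.

```python
# Illustrative helpers only; these names are assumptions, not an official API.
INVERSECODER_PROMPT = """You are an exceptionally intelligent coding assistant that consistently delivers accurate and reliable responses to user instructions.

@@ Instruction
{instruction}

@@ Response
"""


def build_prompt(instruction: str) -> str:
    """Fill the prompt template with a single user instruction."""
    return INVERSECODER_PROMPT.format(instruction=instruction.strip())


def extract_response(prompt: str, generated_text: str) -> str:
    """Drop the echoed prompt from the pipeline output, if it is present."""
    if generated_text.startswith(prompt):
        return generated_text[len(prompt):].strip()
    return generated_text.strip()
```

With the `generator` from the usage code, this could be called as `extract_response(prompt, result[0]["generated_text"])`. Passing `return_full_text=False` to the pipeline call is another way to get only the completion.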

Paper

arXiv: https://arxiv.org/abs/2407.05700

Please cite the paper if you use the models or datasets from InverseCoder.

@misc{wu2024inversecoderunleashingpowerinstructiontuned,
      title={InverseCoder: Unleashing the Power of Instruction-Tuned Code LLMs with Inverse-Instruct},
      author={Yutong Wu and Di Huang and Wenxuan Shi and Wei Wang and Lingzhe Gao and Shihao Liu and Ziyuan Nan and Kaizhao Yuan and Rui Zhang and Xishan Zhang and Zidong Du and Qi Guo and Yewen Pu and Dawei Yin and Xing Hu and Yunji Chen},
      year={2024},
      eprint={2407.05700},
      archivePrefix={arXiv},
      primaryClass={cs.CL},
      url={https://arxiv.org/abs/2407.05700},
}

Code

Official code repo for Inverse-Instruct (under development).

Acknowledgements

- Magicoder: Training code, original datasets and data decontamination

- DeepSeek-Coder: Base model for InverseCoder-DS

- CodeLlama: Base model for InverseCoder-CL

- AutoMathText: Self-evaluation and data selection method