license: llama3

datasets:

- taddeusb90/finbro-v0.1.0

language:

- en

library_name: transformers

tags:

- finance

Fibro v0.1.0 Llama 3 8B Model with 1Million token context window

Model Description

The Fibro Llama 3 8B model is language model optimized for financial applications. This model aims to enhance financial analysis, automate data extraction, and improve financial literacy across various user expertise levels. It utilizes a massive 1 million token context window. This is just a sneak peek into what's coming, and future releases will be done periodically consistently improving it's performance.

Training:

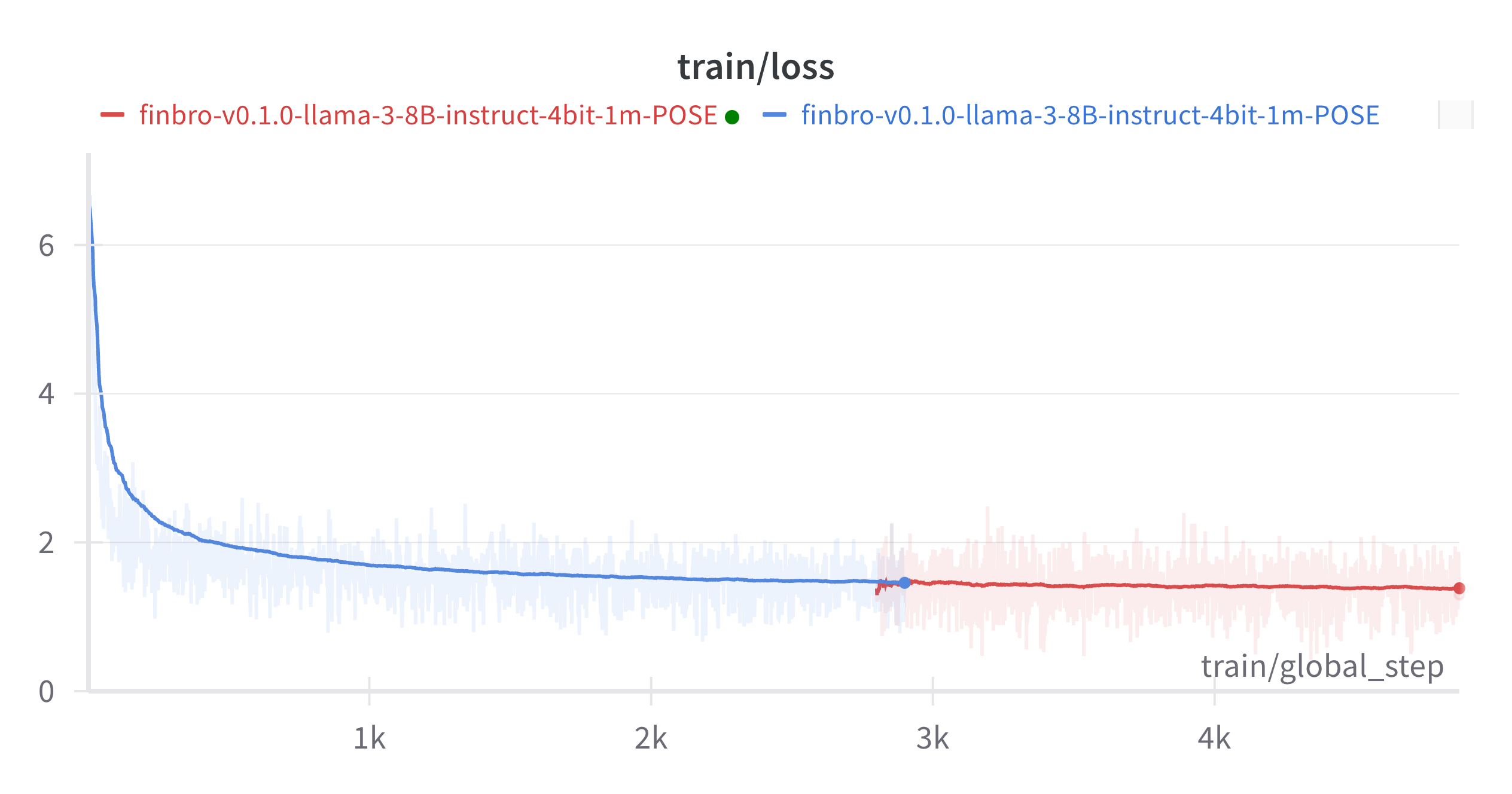

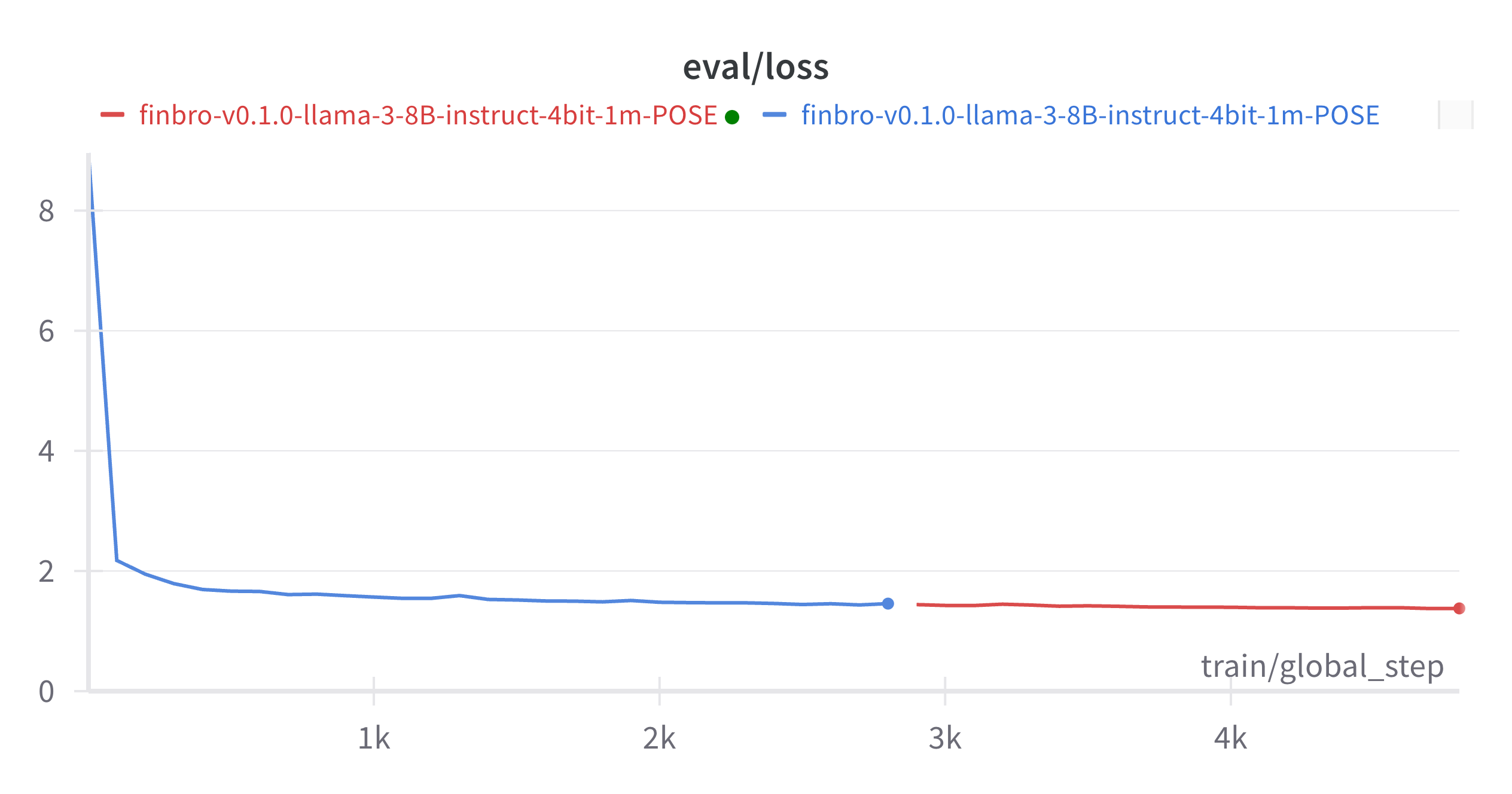

The model is still training, I will be sharing new incremental releases while it's improving so you have time to play around with it.

What's Next?

- Extended Capability: Continue training on the 8B model as it hasn't converged yet as I only scratched the surface here and transitioning to scale up with a 70B model for deeper insights and broader financial applications.

- Dataset Expansion: Continuous enhancement by integrating more diverse and comprehensive real and synthetic financial data.

- Advanced Financial Analysis: Future versions will support complex financial decision-making processes by interpreting and analyzing financial data within agentive workflows.

- Incremental Improvements: Regular updates are made to increase the model's efficiency and accuracy and extend it's capabilities in financial tasks.

Model Applications

- Information Extraction: Automates the process of extracting valuable data from unstructured financial documents.

- Financial Literacy: Provides explanations of financial documents at various levels, making financial knowledge more accessible.

How to Use

Here is how to load and use the model in your Python projects:

from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "taddeusb90/finbro-v0.1.0-llama-3-8B-instruct-1m-POSE"

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = AutoModelForCausalLM.from_pretrained(model_name)

text = "Your financial query here"

inputs = tokenizer(text, return_tensors="pt")

outputs = model.generate(inputs['input_ids'])

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

Training Data

The Fibro Llama 3 8B model was trained on the Finbro Dataset, an extensive compilation of over 300,000 entries sourced from Investopedia and Sujet Finance. This dataset includes structured Q&A pairs, financial reports, and a variety of financial tasks pooled from multiple datasets.

The dataset can be found here

This dataset will be extended to contain real and synthetic data on a wide range of financial tasks such as:

- Investment valuation

- Value investing

- Security analysis

- Derivatives

- Asset and portfolio management

- Financial information extraction

- Quantitative finance

- Econometrics

- Applied computer science in finance and much more

Notice

Please exercise caution and use it at your own risk. I assume no responsibility for any losses incurred if used.

Licensing

This model is released under the META LLAMA 3 COMMUNITY LICENSE AGREEMENT.

Citation

If you use this model in your research, please cite it as follows:

@misc{

finbro_v0.1.0-llama-3-8B-1m-POSE,

author = {Taddeus Buica}, title = {Fibro Llama 3 8B Model for Financial Analysis},

year = {2024},

journal = {Hugging Face repository},

howpublished = {\url{https://huggingface.co/taddeusb90/finbro-v0.1.0-llama-3-8B-instruct-1m-POSE}}

}

Special thanks to the folks from AI@Meta for powering this project with their awesome models.

References

[1] Llama 3 Model Card by AI@Meta, Year: 2024

[2] Sujet Finance Dataset

[3] Dataset Card for investopedia-instruction-tuning