File size: 5,014 Bytes

8c0a5f0 683660e cfe5c70 8c0a5f0 683660e 9ec7436 08440f3 9ec7436 683660e d8f496e 683660e d61934d 5157c54 d61934d 683660e 3c41f7b 686095f cfe5c70 |

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 |

---

license: cc-by-4.0

model-index:

- name: piccolo-8x7b

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 69.62

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 86.98

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 64.13

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 64.17

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 79.87

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 72.02

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

---

# Piccolo-8x7b

**In loving memory of my dog Klaus (Piccolo)**

_~ Piccolo (Italian): the little one ~_

Based on mlabonne/NeuralBeagle-7b

Quants are available [here](https://huggingface.co/macadeliccc/piccolo-8x7b-GGUF)

# Code Example

Inference and Evaluation colab available [here](https://colab.research.google.com/drive/1ZqLNvVvtFHC_4v2CgcMVh7pP9Fvx0SbI?usp=sharing)

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

def generate_response(prompt):

"""

Generate a response from the model based on the input prompt.

Args:

prompt (str): Prompt for the model.

Returns:

str: The generated response from the model.

"""

inputs = tokenizer(prompt, return_tensors="pt")

outputs = model.generate(**inputs, max_new_tokens=256, eos_token_id=tokenizer.eos_token_id, pad_token_id=tokenizer.pad_token_id)

response = tokenizer.decode(outputs[0], skip_special_tokens=True)

return response

model_id = "macadeliccc/piccolo-8x7b"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(model_id,load_in_4bit=True)

prompt = "What is the best way to train Cane Corsos?"

print("Response:")

print(generate_response(prompt), "\n")

```

The model is capable of quality code, math, and logical reasoning. Try whatever questions you think of.

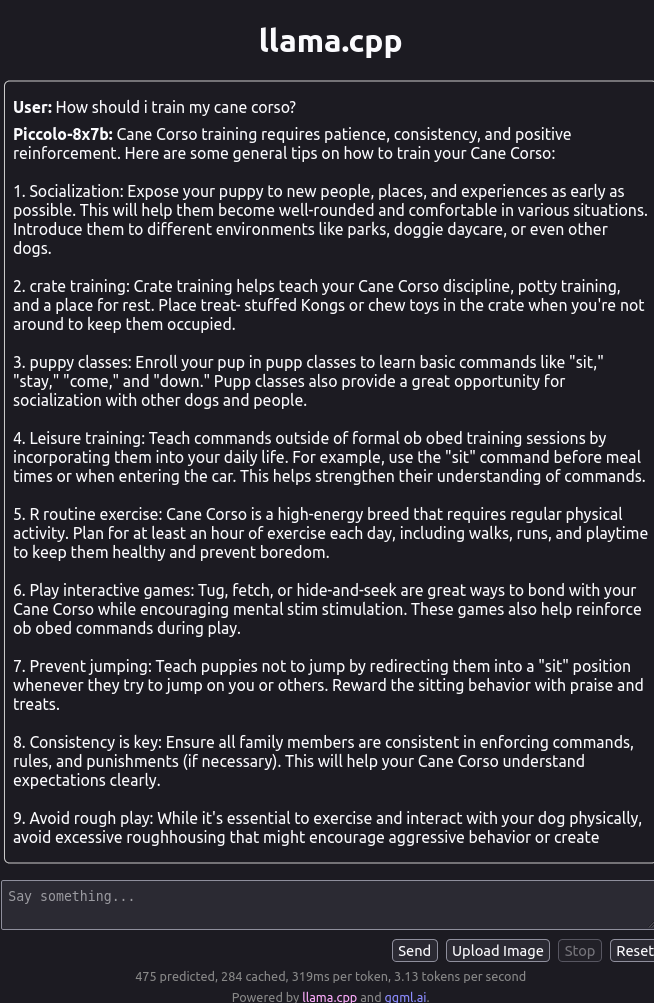

## Example output

# Evaluations

https://huggingface.co/datasets/open-llm-leaderboard/details_macadeliccc__piccolo-8x7b

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_macadeliccc__piccolo-8x7b)

| Metric |Value|

|---------------------------------|----:|

|Avg. |72.80|

|AI2 Reasoning Challenge (25-Shot)|69.62|

|HellaSwag (10-Shot) |86.98|

|MMLU (5-Shot) |64.13|

|TruthfulQA (0-shot) |64.17|

|Winogrande (5-shot) |79.87|

|GSM8k (5-shot) |72.02|

|