Model Card for Model ID

We explore the benefits of unsupervised pretraining of wav2vec 2.0 (W2V2) on large-scale unlabeled home recordings collected with LittleBeats (LB) and LENA (Language Environment Analysis) devices. LittleBeats is a new multi-modal infant wearable device that we developed; it simultaneously records audio, infant movement, and heart-rate variability. We use W2V2 to advance the LB audio pipeline so that it automatically provides reliable speaker diarization and vocalization classification labels for family members at home, including infants, parents, and siblings. We show that W2V2 pretrained on thousands of hours of unlabeled home audio outperforms the oracle W2V2 pretrained on 960 hours of Librispeech, released by Facebook/Meta, on automatic family audio analysis tasks.

For more details about LittleBeats, check out https://littlebeats.hdfs.illinois.edu/

Model Sources

For more information about this model, please check out our paper (cited below).

Model Description

Two W2V2 models pretrained with fairseq are available:

- LB_1100/checkpoint_best.pt: pretrained on 1100 hours of LB home recordings collected from 110 families with children under 5 years old

- LL_4300/checkpoint_best.pt: pretrained on 1100 hours of LB home recordings collected from 110 families plus 3200 hours of LENA home recordings from 275 families with children under 5 years old
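The checkpoint names follow from the hour counts listed above. As a quick arithmetic check, the sketch below adds up the pretraining audio per checkpoint and the average hours per family for each source. The dictionary layout and variable names are illustrative only and are not part of the release.

```python
# Pretraining data as stated in the list above.
# The dictionary layout and names are illustrative, not part of the release.
pretraining_data = {
    "LB": {"hours": 1100, "families": 110},
    "LENA": {"hours": 3200, "families": 275},
}

# LB_1100 uses only the LittleBeats recordings.
lb_hours = pretraining_data["LB"]["hours"]

# LL_4300 uses the LittleBeats and LENA recordings together.
ll_hours = sum(source["hours"] for source in pretraining_data.values())

print(f"LB_1100 pretraining audio: {lb_hours} hours")
print(f"LL_4300 pretraining audio: {ll_hours} hours")

# Average amount of audio contributed by each family, per source.
for name, source in pretraining_data.items():
    per_family = source["hours"] / source["families"]
    print(f"{name}: about {per_family:.1f} hours per family")
```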

One W2V2 model fine-tuned on labeled LB and LENA data with SpeechBrain is available:

- LL_4300_fine_tuned: initialized from the LL_4300 checkpoint, then fine-tuned on labeled LB and LENA home recordings plus labeled lab recordings, with data augmentation

Two pretrained ECAPA-TDNN speaker embedding models are available:

- ECAPA_TDNN_LB/embedding_model.ckpt: pretrained on 12 hours of labeled LB home recordings collected from 22 families with infants under 14 months old

- ECAPA_TDNN_LB_LENA/embedding_model.ckpt: pretrained on 12 hours of labeled LB home recordings collected from 22 families plus 18 hours of labeled LENA home recordings from 30 families with infants under 14 months old

Uses

We developed our complete fine-tuning recipe using the SpeechBrain toolkit, available at

Quick Start

To extract features from a pretrained W2V2 model, first install the fairseq and SpeechBrain frameworks:

pip install fairseq

pip install speechbrain

from fairseq_wav2vec import FairseqWav2Vec2

import torch

inputs = torch.rand([10, 6000]) # input wav B x T

save_path = "your/path/to/LL_4300/checkpoint_best.pt"

# To extract features from all transformer layers

model = FairseqWav2Vec2(save_path) # Output all features from 12 transformer layers with shapes of 12 x B x T' x D

# To extract features from a certain transformer layer

# model = FairseqWav2Vec2(save_path, output_all_hiddens = False, tgt_layer = [1]) # Output features from the first transformer layer

# To load the W2V2 model fine-tuned on LENA and LB audio data

fine_tuned_path = "your/path/to/LL_4300_fine_tuned/save/CKPT+2022-11-26+14-06-17+00/wav2vec2.ckpt"

model._load_sb_pretrained_w2v2_parameters(fine_tuned_path)

# To extract wav2vec features

outputs = model(inputs)

print(outputs.shape)
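To sanity-check the printed shape, the number of output frames T' can be estimated from the input length T. The sketch below assumes the checkpoints keep the standard W2V2-base convolutional feature encoder (seven unpadded 1-D convolutions with the kernel sizes and strides shown); this card does not state the configuration, so treat the numbers as an estimate. The helper name `num_output_frames` is hypothetical.

```python
# Estimate T' (frames per utterance) after the wav2vec 2.0 feature encoder.
# ASSUMPTION: the checkpoints use the standard W2V2-base front end, i.e.
# (kernel, stride) = (10, 5), then (3, 2) four times, then (2, 2) twice,
# all without padding. The card itself does not confirm this.
CONV_LAYERS = [(10, 5), (3, 2), (3, 2), (3, 2), (3, 2), (2, 2), (2, 2)]


def num_output_frames(num_samples: int) -> int:
    """Return the sequence length left after every conv layer (hypothetical helper)."""
    length = num_samples
    for kernel, stride in CONV_LAYERS:
        # Output length of an unpadded 1-D convolution.
        length = (length - kernel) // stride + 1
    return length


batch_size, num_samples = 10, 6000  # same B and T as the Quick Start input
frames = num_output_frames(num_samples)
print(f"T = {num_samples} samples -> T' = {frames} frames")
```

Under these assumptions, and with the 768-dimensional hidden size of the standard W2V2-base architecture, the Quick Start input would yield features of shape 12 x 10 x 18 x 768 when all layers are returned.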

Evaluation

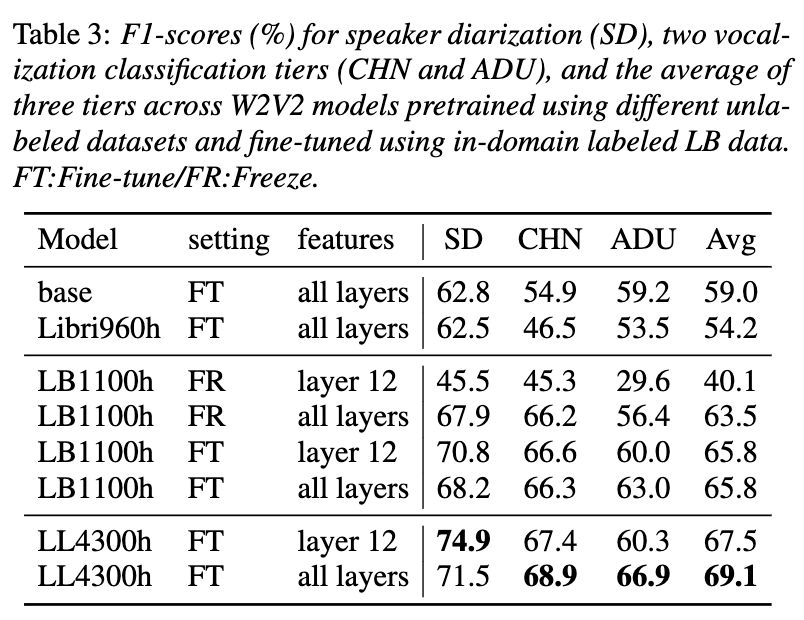

We evaluate four W2V2-base model variants:

- base (oracle version): originally released version pretrained on ~960 hours of unlabeled Librispeech audio

- Libri960h: oracle version fine-tuned on 960 hours of Librispeech

- LB1100h: W2V2 pretrained on 1100 hours of LB home recordings

- LL4300h: W2V2 pretrained on 4300 hours of LB+LENA home recordings

We then fine-tune the pretrained models on 11.7 hours of labeled LB home recordings. The F1 scores across the three tasks are shown in the figure above.

Additionally, we improve model performance by adding relevant labeled home recordings and applying data augmentation: SpecAugment and noise/reverberation corruption. For more details on the experiments and results, please refer to our paper.
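To illustrate the masking idea behind SpecAugment-style augmentation, here is a minimal pure-Python sketch that zeroes one random band of frames and one random band of frequency bins in a toy spectrogram. It is not the authors' recipe: the function name, mask widths, and list-of-lists layout are hypothetical, time warping is omitted, and the noise/reverberation corruption is not shown.

```python
import random


def spec_augment(spec, max_time_mask=2, max_freq_mask=2, rng=None):
    """Zero out one random time band and one random frequency band.

    spec is a list of frames, each a list of frequency-bin values.
    The mask widths are hypothetical defaults, not the authors' settings.
    """
    rng = rng or random.Random()
    num_frames, num_bins = len(spec), len(spec[0])
    out = [row[:] for row in spec]  # copy so the input stays untouched

    # Time mask: blank a run of consecutive frames.
    t_width = rng.randint(0, min(max_time_mask, num_frames))
    t_start = rng.randint(0, num_frames - t_width)
    for t in range(t_start, t_start + t_width):
        out[t] = [0.0] * num_bins

    # Frequency mask: blank a run of consecutive bins in every frame.
    f_width = rng.randint(0, min(max_freq_mask, num_bins))
    f_start = rng.randint(0, num_bins - f_width)
    for row in out:
        for f in range(f_start, f_start + f_width):
            row[f] = 0.0
    return out


toy_spec = [[1.0] * 6 for _ in range(8)]  # 8 frames x 6 bins, all ones
augmented = spec_augment(toy_spec, rng=random.Random(0))
for row in augmented:
    print(row)
```

Passing a seeded `random.Random` makes the masks reproducible, which is convenient when debugging an augmentation pipeline.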

Paper/BibTex Citation

If you find this model helpful, please cite us as:

@inproceedings{li23e_interspeech,

author={Jialu Li and Mark Hasegawa-Johnson and Nancy L. McElwain},

title={{Towards Robust Family-Infant Audio Analysis Based on Unsupervised Pretraining of Wav2vec 2.0 on Large-Scale Unlabeled Family Audio}},

year=2023,

booktitle={Proc. INTERSPEECH 2023},

pages={1035--1039},

doi={10.21437/Interspeech.2023-460}

}

Model Card Contact

Jialu Li, Ph.D. (she, her, hers)

E-mail: jialuli3@illinois.edu

Homepage: https://sites.google.com/view/jialuli/