
Access SeamlessExpressive on Hugging Face

To access SeamlessExpressive on Hugging Face:

- Please fill out the Meta request form and accept the license terms and acceptable use policy BEFORE submitting this form. Requests will be processed in 1-2 days.

- Submit this form on Hugging Face afterwards.

Your Hugging Face account email address MUST match the email you provide on the Meta website, or your request will not be approved.


SeamlessExpressive

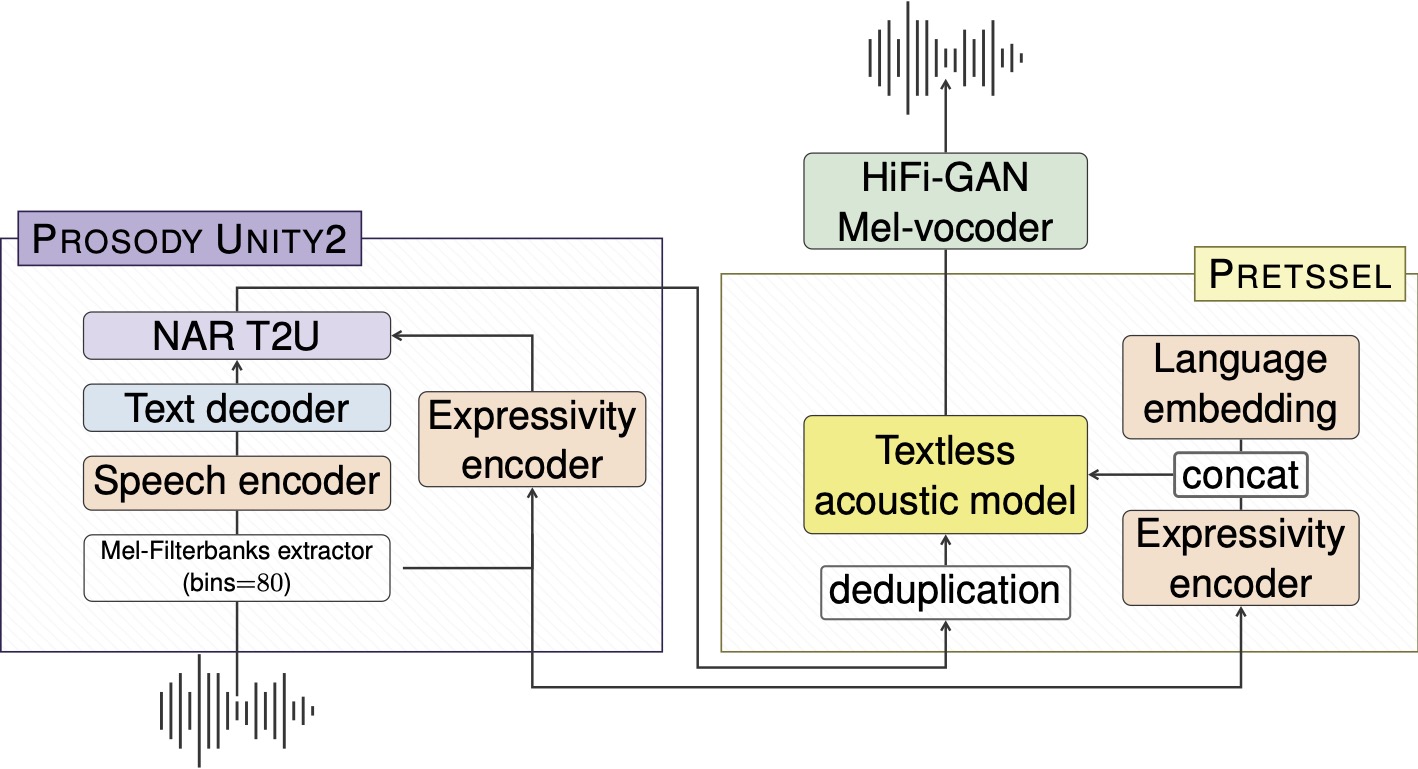

The SeamlessExpressive model consists of two main modules: (1) Prosody UnitY2, a prosody-aware speech-to-unit translation model based on the UnitY2 architecture; and (2) PRETSSEL, a unit-to-speech model featuring cross-lingual expressivity preservation.

Prosody UnitY2

Prosody UnitY2 is an expressive speech-to-unit translation model that injects the expressivity embedding from PRETSSEL into unit generation. This lets it transfer phrase-level prosody such as speech rate and pauses.

PRETSSEL

Paralinguistic REpresentation-based TextleSS acoustic modEL (PRETSSEL) is an expressive unit-to-speech generator that efficiently disentangles the semantic and expressivity components of speech. It transfers utterance-level expressivity, such as the style of the speaker's voice.
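To make the dataflow between the two modules concrete, here is a purely hypothetical sketch. The class and method names (`PretsselStub.embed`, `PretsselStub.synthesize`, `ProsodyUnitY2Stub.translate_to_units`) are invented for illustration and are not the seamless_communication API; the stubs return placeholder values instead of running models. We also assume the expressivity embedding is computed once from the source speech and shared by both stages.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ExpressiveOutput:
    units: List[int]        # discrete target units from the speech-to-unit stage
    waveform: List[float]   # samples from the unit-to-speech stage


class PretsselStub:
    """Hypothetical stand-in for PRETSSEL; returns placeholders, not model output."""

    def embed(self, source_speech: List[float]) -> List[float]:
        # A real model extracts an expressivity embedding from the source speech.
        n = len(source_speech)
        return [sum(source_speech) / n, max(source_speech), min(source_speech)]

    def synthesize(self, units: List[int], expressivity: List[float]) -> List[float]:
        # A real model generates speech from units conditioned on the embedding.
        return [float(u) + expressivity[0] for u in units]


class ProsodyUnitY2Stub:
    """Hypothetical stand-in for Prosody UnitY2; returns placeholder units."""

    def translate_to_units(self, source_speech: List[float],
                           expressivity: List[float]) -> List[int]:
        # A real model translates speech into target units, with the expressivity
        # embedding injected into unit generation (phrase-level prosody).
        return list(range(len(source_speech)))


def expressive_s2st(source_speech: List[float]) -> ExpressiveOutput:
    if not source_speech:
        raise ValueError("source_speech must be non-empty")
    pretssel, unity2 = PretsselStub(), ProsodyUnitY2Stub()
    expressivity = pretssel.embed(source_speech)                    # from PRETSSEL
    units = unity2.translate_to_units(source_speech, expressivity)  # module (1)
    # Assumed: the same embedding conditions synthesis (utterance-level style).
    waveform = pretssel.synthesize(units, expressivity)             # module (2)
    return ExpressiveOutput(units=units, waveform=waveform)
```

The point of the sketch is the ordering: the embedding is produced before unit generation, so both phrase-level and utterance-level expressivity can be conditioned on it.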

Running inference

The following script runs efficient batched inference.

```bash
export MODEL_DIR="/path/to/SeamlessExpressive/model"
export TEST_SET_TSV="input.tsv" # Your dataset in a TSV file, with headers "id", "audio"
export TGT_LANG="spa" # Target language to translate into; other options include "fra", "deu", "eng" ("cmn" and "ita" are experimental)
export OUTPUT_DIR="tmp/" # Output directory for generated text/unit/waveform
export TGT_TEXT_COL="tgt_text" # Column in ${TEST_SET_TSV} holding the reference target text used to calculate the BLEU score. You can skip this argument.
export DFACTOR="1.0" # Duration factor that scales the predicted duration at each position (preddur=DFACTOR*preddur), which affects output speech rate. A greater value means a slower speech rate (default: 1.0). See the expressive evaluation README for details on the duration factors we used.

python src/seamless_communication/cli/expressivity/evaluate/pretssel_inference.py \
  ${TEST_SET_TSV} --gated-model-dir ${MODEL_DIR} --task s2st --tgt_lang ${TGT_LANG} \
  --audio_root_dir "" --output_path ${OUTPUT_DIR} --ref_field ${TGT_TEXT_COL} \
  --model_name seamless_expressivity --vocoder_name vocoder_pretssel \
  --text_unk_blocking True --duration_factor ${DFACTOR}
```
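The inference script expects a TSV file with "id" and "audio" headers, plus an optional reference-text column for BLEU. A minimal sketch for producing such a file follows. The helper names, the example rows, and the use of "tgt_text" (matching TGT_TEXT_COL above) are illustrative assumptions; we also assume the "audio" column holds audio file paths, and since the script passes --audio_root_dir "", absolute paths are the safest choice.

```python
from typing import Iterable, List, Optional, Tuple

Row = Tuple[str, str, Optional[str]]  # (id, audio path, reference text or None)


def _check(field: str) -> str:
    # A plain TSV field cannot contain tabs or newlines.
    if "\t" in field or "\n" in field:
        raise ValueError(f"field contains a tab or newline: {field!r}")
    return field


def format_manifest(rows: Iterable[Row], ref_column: str = "tgt_text") -> str:
    """Return TSV text with "id", "audio" and, if any row has one, a reference column."""
    rows = list(rows)
    include_ref = any(ref is not None for _, _, ref in rows)
    header = ["id", "audio"] + ([ref_column] if include_ref else [])
    lines: List[str] = ["\t".join(header)]
    for utt_id, audio, ref in rows:
        fields = [utt_id, audio] + ([ref or ""] if include_ref else [])
        lines.append("\t".join(_check(f) for f in fields))
    return "\n".join(lines) + "\n"


def write_manifest(path: str, rows: Iterable[Row], ref_column: str = "tgt_text") -> None:
    # newline="" keeps "\n" line endings on every platform.
    with open(path, "w", encoding="utf-8", newline="") as f:
        f.write(format_manifest(rows, ref_column))


if __name__ == "__main__":
    print(format_manifest([
        ("utt0001", "/data/audio/utt0001.wav", "Hola, ¿cómo estás?"),
        ("utt0002", "/data/audio/utt0002.wav", None),
    ]), end="")
```

Write the file with `write_manifest("input.tsv", rows)` and point TEST_SET_TSV at it. If no row has a reference, the column is omitted and --ref_field can be skipped.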

Benchmark Datasets

mExpresso (Multilingual Expresso)

mExpresso is an expressive S2ST dataset that includes seven styles of read speech (i.e., default, happy, sad, confused, enunciated, whisper and laughing) between English and five other languages: French, German, Italian, Mandarin and Spanish. We create the dataset by expanding a subset of the read speech in the Expresso dataset. We first translate the English transcriptions into the other languages, including the emphasis markers in the transcriptions, and then gender-matched bilingual speakers read the translations in the style suggested by the markers.

We are currently open-sourcing the text translations in the other languages to enable evaluation in the English-to-other directions. We will open-source the audio files in the near future.

The text translations in the other languages can be downloaded.

Statistics of mExpresso

| language pair | subset | # items | English duration (hr) | # speakers |
|---|---|---|---|---|
| eng-cmn | dev | 2369 | 2.1 | 1 |
| eng-cmn | test | 5003 | 4.8 | 2 |
| eng-deu | dev | 4420 | 3.9 | 2 |
| eng-deu | test | 5733 | 5.6 | 2 |
| eng-fra | dev | 4770 | 4.2 | 2 |
| eng-fra | test | 5742 | 5.6 | 2 |
| eng-ita | dev | 4413 | 3.9 | 2 |
| eng-ita | test | 5756 | 5.7 | 2 |
| eng-spa | dev | 4758 | 4.2 | 2 |
| eng-spa | test | 5693 | 5.5 | 2 |

Create mExpresso S2T dataset by downloading and combining with English Expresso

Run the following command to create the English-to-other-languages speech-to-text dataset from scratch. It first downloads the English Expresso dataset, downsamples the audio to 16 kHz, and joins it with the text translations to form the manifest.

```bash
python3 -m seamless_communication.cli.expressivity.data.prepare_mexpresso \
  <OUTPUT_FOLDER>
```

The output manifest will be located at <OUTPUT_FOLDER>/{dev,test}_mexpresso_eng_{spa,fra,deu,ita,cmn}.tsv
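The brace pattern above expands to ten manifests, one per partition and target language. A small sketch that enumerates the expected paths, for example to check that the preparation step finished, might look like this; `expected_manifests` and `missing_manifests` are hypothetical helpers, not part of seamless_communication.

```python
from itertools import product
from pathlib import Path
from typing import List

PARTITIONS = ("dev", "test")
TGT_LANGS = ("spa", "fra", "deu", "ita", "cmn")


def expected_manifests(output_folder: str) -> List[Path]:
    """Paths matching <OUTPUT_FOLDER>/{dev,test}_mexpresso_eng_{spa,fra,deu,ita,cmn}.tsv."""
    root = Path(output_folder)
    return [root / f"{split}_mexpresso_eng_{lang}.tsv"
            for split, lang in product(PARTITIONS, TGT_LANGS)]


def missing_manifests(output_folder: str) -> List[Path]:
    # Expected manifests that do not (yet) exist on disk.
    return [p for p in expected_manifests(output_folder) if not p.is_file()]
```

Calling `missing_manifests("<OUTPUT_FOLDER>")` after the command completes should return an empty list.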

Automatic evaluation

Python package dependencies (on top of seamless_communication, coming from stopes pipelines):

- Unidecode

- scipy

- phonemizer

- s3prl

- syllables

- ipapy

- pkuseg

- nltk

- fire

- inflect

```bash
pip install Unidecode scipy phonemizer s3prl syllables ipapy pkuseg nltk fire inflect
```
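Before running the evaluation, you may want to confirm that these packages are importable. The sketch below uses `importlib.util.find_spec` from the standard library; the mapping from pip package names to import names (for example, Unidecode is imported as `unidecode`) is our assumption and may need adjusting for your environment.

```python
import importlib.util
from typing import Dict, List

# pip package name -> top-level module name (assumed mapping; adjust if needed)
EVAL_DEPENDENCIES: Dict[str, str] = {
    "Unidecode": "unidecode",
    "scipy": "scipy",
    "phonemizer": "phonemizer",
    "s3prl": "s3prl",
    "syllables": "syllables",
    "ipapy": "ipapy",
    "pkuseg": "pkuseg",
    "nltk": "nltk",
    "fire": "fire",
    "inflect": "inflect",
}


def missing_packages(deps: Dict[str, str] = EVAL_DEPENDENCIES) -> List[str]:
    """Return the pip names whose top-level module cannot be found."""
    return [pip_name for pip_name, module in deps.items()
            if importlib.util.find_spec(module) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("pip install " + " ".join(missing))
    else:
        print("All evaluation dependencies are importable.")
```

`find_spec` only locates a module without importing it, so the check is cheap even for heavy packages such as s3prl.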

As described in Section 4.3, we use the following automatic metrics:

- ASR-BLEU: refer to /src/seamless_communication/cli/eval_utils to see how the OpenAI Whisper ASR model is used to extract transcriptions from the generated audio.

- Vocal Style Similarity: refer to stopes/eval/vocal_style_similarity for implementation details.

- AutoPCP: refer to stopes/eval/auto_pcp for implementation details.

- Pause and Rate scores: refer to stopes/eval/local_prosody for implementation details. The Rate score is the Spearman correlation of syllable speech rate between the source and predicted speech. The Pause score is the weighted mean joint score produced by the stopes/eval/local_prosody/compare_utterances.py script from the stopes repo.
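To make the Rate definition concrete: given per-utterance syllable speech rates for the source and the generated speech, the Rate score is their Spearman rank correlation. The dependency-free sketch below computes it as the Pearson correlation of average ranks (tied values share a rank). It illustrates the statistic only and is not the stopes implementation; in practice `scipy.stats.spearmanr` computes the same quantity.

```python
from math import sqrt
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values receive the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(vx * vy)


def spearman(src_rates: Sequence[float], tgt_rates: Sequence[float]) -> float:
    """Spearman rank correlation between source and generated speech rates."""
    if len(src_rates) != len(tgt_rates) or len(src_rates) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    return pearson(average_ranks(src_rates), average_ranks(tgt_rates))
```

Because only ranks matter, a model that is uniformly slower than the source still scores 1.0 as long as it preserves which utterances are faster or slower.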

Evaluation results: mExpresso

Please see the mExpresso section above for how to download the evaluation data.

Important Notes:

We used empirically chosen duration factors for each target language to obtain the best perceptual quality: 1.0 (default) for cmn, spa and ita; 1.1 for deu; 1.2 for fra. The same settings were used for the results reported in the "Seamless: Multilingual Expressive and Streaming Speech Translation" paper.

The results here differ slightly from those shown in the paper due to several discrepancies in the pipeline: the results reported here use a pipeline with the fairseq2 backend for model inference, and this pipeline includes watermarking.
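The per-language duration factors above can be captured in a small lookup, together with the scaling rule from the DFACTOR comment in the inference script (preddur = DFACTOR * preddur). This is an illustrative sketch: the helper names are hypothetical, the fallback to 1.0 for unlisted languages is our assumption, and how the model itself applies or rounds the scaled durations is not shown here.

```python
from typing import Dict, List

# Duration factors reported in the notes above (1.0 is the default).
DURATION_FACTORS: Dict[str, float] = {
    "cmn": 1.0,
    "spa": 1.0,
    "ita": 1.0,
    "deu": 1.1,
    "fra": 1.2,
}


def duration_factor(tgt_lang: str) -> float:
    # Assumed fallback: use the default 1.0 for languages not listed above.
    return DURATION_FACTORS.get(tgt_lang, 1.0)


def scale_durations(pred_durations: List[float], dfactor: float) -> List[float]:
    """Apply preddur = DFACTOR * preddur at every position.

    A factor above 1.0 lengthens each unit, i.e. slower output speech.
    """
    if dfactor <= 0:
        raise ValueError("duration factor must be positive")
    return [dfactor * d for d in pred_durations]


if __name__ == "__main__":
    for lang in ("cmn", "deu", "fra", "ita", "spa"):
        print(f'TGT_LANG="{lang}" DFACTOR="{duration_factor(lang)}"')
```

The printed pairs can be pasted into the exports of the inference script to reproduce the per-language settings.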

| Language | Partition | ASR-BLEU | Vocal Style Similarity | AutoPCP | Pause | Rate |
|---|---|---|---|---|---|---|
| eng_cmn | dev | 26.080 | 0.207 | 3.168 | 0.236 | 0.538 |
| eng_deu | dev | 36.940 | 0.261 | 3.298 | 0.319 | 0.717 |
| eng_fra | dev | 37.780 | 0.231 | 3.285 | 0.331 | 0.682 |
| eng_ita | dev | 40.170 | 0.226 | 3.322 | 0.388 | 0.734 |
| eng_spa | dev | 42.400 | 0.228 | 3.379 | 0.332 | 0.702 |
| eng_cmn | test | 23.320 | 0.249 | 2.984 | 0.385 | 0.522 |
| eng_deu | test | 27.780 | 0.290 | 3.117 | 0.483 | 0.717 |
| eng_fra | test | 38.360 | 0.270 | 3.117 | 0.506 | 0.663 |
| eng_ita | test | 38.020 | 0.274 | 3.130 | 0.523 | 0.686 |
| eng_spa | test | 42.920 | 0.274 | 3.183 | 0.508 | 0.675 |

Step-by-step evaluation

Prerequisite: all steps described here assume that generation/inference has already been completed following the steps in Running inference above.

For stopes installation, please refer to stopes/eval.

Set the following environment variables to describe the directory of generated outputs:

```bash
export SPLIT="dev_mexpresso_eng_spa" # example, change for your split
export TGT_LANG="spa"
export SRC_LANG="eng"
export GENERATED_DIR="path_to_generated_output_for_given_data_split"
export GENERATED_TSV="generate-${SPLIT}.tsv"
export STOPES_ROOT="path_to_stopes_code_repo"
export SC_ROOT="path_to_this_repo"
```

ASR-BLEU evaluation

```bash
python ${SC_ROOT}/src/seamless_communication/cli/expressivity/evaluate/run_asr_bleu.py \
  --generation_dir_path=${GENERATED_DIR} \
  --generate_tsv_filename=generate-${SPLIT}.tsv \
  --tgt_lang=${TGT_LANG}
```

generate-${SPLIT}.tsv is the expected output of the inference step described in the prerequisite.

After completion, the resulting ASR-BLEU score is written to ${GENERATED_DIR}/s2st_asr_bleu_normalized.json.

Vocal Style Similarity

To reproduce our vocal style similarity evaluation, download the fine-tuned WavLM checkpoint and set its path as ${SPEECH_ENCODER_MODEL_PATH}, as described in the stopes README.

```bash
python -m stopes.modules +vocal_style_similarity=base \
  launcher.cluster=local \
  vocal_style_similarity.model_type=valle \
  +vocal_style_similarity.model_path=${SPEECH_ENCODER_MODEL_PATH} \
  +vocal_style_similarity.input_file=${GENERATED_DIR}/${GENERATED_TSV} \
  +vocal_style_similarity.output_file=${GENERATED_DIR}/vocal_style_sim_result.txt \
  vocal_style_similarity.named_columns=true \
  vocal_style_similarity.src_audio_column=src_audio \
  vocal_style_similarity.tgt_audio_column=hypo_audio
```

- We report the average of all per-utterance scores written in ${GENERATED_DIR}/vocal_style_sim_result.txt.

AutoPCP

```bash
python -m stopes.modules +compare_audios=AutoPCP_multilingual_v2 \
  launcher.cluster=local \
  +compare_audios.input_file=${GENERATED_DIR}/${GENERATED_TSV} \
  compare_audios.src_audio_column=src_audio \
  compare_audios.tgt_audio_column=hypo_audio \
  +compare_audios.named_columns=true \
  +compare_audios.output_file=${GENERATED_DIR}/autopcp_result.txt
```

- We report the average of all per-utterance scores written in ${GENERATED_DIR}/autopcp_result.txt.
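Both the vocal style similarity and the AutoPCP results are reported as the average of per-utterance scores. A minimal sketch of that averaging follows. It assumes each result file holds one numeric score per line and skips lines that do not parse as a number; that file layout is our assumption, so check the actual output of your stopes version before relying on it.

```python
import os
from pathlib import Path
from statistics import mean
from typing import Iterable, List


def parse_scores(lines: Iterable[str]) -> List[float]:
    """Collect one float per line, ignoring blank or non-numeric lines (assumed layout)."""
    scores: List[float] = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            scores.append(float(line))
        except ValueError:
            continue  # e.g. a header line
    return scores


def average_score(path: str) -> float:
    scores = parse_scores(Path(path).read_text(encoding="utf-8").splitlines())
    if not scores:
        raise ValueError(f"no numeric scores found in {path}")
    return mean(scores)


if __name__ == "__main__":
    generated_dir = os.environ.get("GENERATED_DIR", ".")
    for name in ("vocal_style_sim_result.txt", "autopcp_result.txt"):
        path = os.path.join(generated_dir, name)
        if os.path.isfile(path):
            print(f"{name}: {average_score(path):.3f}")
```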

Pause and Rate

This stage includes three steps: (1) source language annotation, (2) target language annotation, and (3) pairwise comparison.

```bash
# src lang pause&rate annotation
python ${STOPES_ROOT}/stopes/eval/local_prosody/annotate_utterances.py \
  +data_path=${GENERATED_DIR}/${GENERATED_TSV} \
  +result_path=${GENERATED_DIR}/${SRC_LANG}_speech_rate_pause_annotation.tsv \
  +audio_column=src_audio \
  +text_column=src_text \
  +speech_units=[syllable] \
  +vad=true \
  +net=true \
  +lang=$SRC_LANG \
  +forced_aligner=fairseq2_nar_t2u_aligner

# tgt lang pause&rate annotation
python ${STOPES_ROOT}/stopes/eval/local_prosody/annotate_utterances.py \
  +data_path=${GENERATED_DIR}/${GENERATED_TSV} \
  +result_path=${GENERATED_DIR}/${TGT_LANG}_speech_rate_pause_annotation.tsv \
  +audio_column=hypo_audio \
  +text_column=s2t_out \
  +speech_units=[syllable] \
  +vad=true \
  +net=true \
  +lang=$TGT_LANG \
  +forced_aligner=fairseq2_nar_t2u_aligner

# pairwise comparison
python ${STOPES_ROOT}/stopes/eval/local_prosody/compare_utterances.py \
  +src_path=${GENERATED_DIR}/${SRC_LANG}_speech_rate_pause_annotation.tsv \
  +tgt_path=${GENERATED_DIR}/${TGT_LANG}_speech_rate_pause_annotation.tsv \
  +result_path=${GENERATED_DIR}/${SRC_LANG}_${TGT_LANG}_pause_scores.tsv \
  +pause_min_duration=0.1
```

For Rate reporting, see the aggregation function get_rate in ${SC_ROOT}/src/seamless_communication/cli/expressivity/evaluate/post_process_pauserate.py; for Pause reporting, see the aggregation function get_pause in the same file.
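If you run the pause and rate stage often, the three commands can be driven from Python. The sketch below rebuilds the same arguments as the shell commands in this section from the same environment variable names and runs them in order with `subprocess.run`. The function names are hypothetical, and it uses the current interpreter (`sys.executable`) in place of `python`, assuming stopes is installed there.

```python
import os
import subprocess
import sys
from typing import Dict, List


def pause_rate_commands(env: Dict[str, str]) -> List[List[str]]:
    """Build the src annotation, tgt annotation and comparison commands, in order."""
    scripts = os.path.join(env["STOPES_ROOT"], "stopes", "eval", "local_prosody")
    gen_dir, tsv = env["GENERATED_DIR"], env["GENERATED_TSV"]
    src, tgt = env["SRC_LANG"], env["TGT_LANG"]
    src_ann = os.path.join(gen_dir, f"{src}_speech_rate_pause_annotation.tsv")
    tgt_ann = os.path.join(gen_dir, f"{tgt}_speech_rate_pause_annotation.tsv")
    scores = os.path.join(gen_dir, f"{src}_{tgt}_pause_scores.tsv")

    def annotate(result_path: str, audio_col: str, text_col: str, lang: str) -> List[str]:
        return [
            sys.executable, os.path.join(scripts, "annotate_utterances.py"),
            f"+data_path={os.path.join(gen_dir, tsv)}",
            f"+result_path={result_path}",
            f"+audio_column={audio_col}",
            f"+text_column={text_col}",
            "+speech_units=[syllable]",  # passed literally; no shell globbing
            "+vad=true",
            "+net=true",
            f"+lang={lang}",
            "+forced_aligner=fairseq2_nar_t2u_aligner",
        ]

    compare = [
        sys.executable, os.path.join(scripts, "compare_utterances.py"),
        f"+src_path={src_ann}",
        f"+tgt_path={tgt_ann}",
        f"+result_path={scores}",
        "+pause_min_duration=0.1",
    ]
    return [
        annotate(src_ann, "src_audio", "src_text", src),
        annotate(tgt_ann, "hypo_audio", "s2t_out", tgt),
        compare,
    ]


def run_pause_rate(env: Dict[str, str]) -> None:
    for cmd in pause_rate_commands(env):
        subprocess.run(cmd, check=True)  # stop at the first failing step
```

With the variables exported as above, call `run_pause_rate(dict(os.environ))`. Passing arguments as a list avoids shell quoting issues with values such as `[syllable]`.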