X-CLIP

Overview

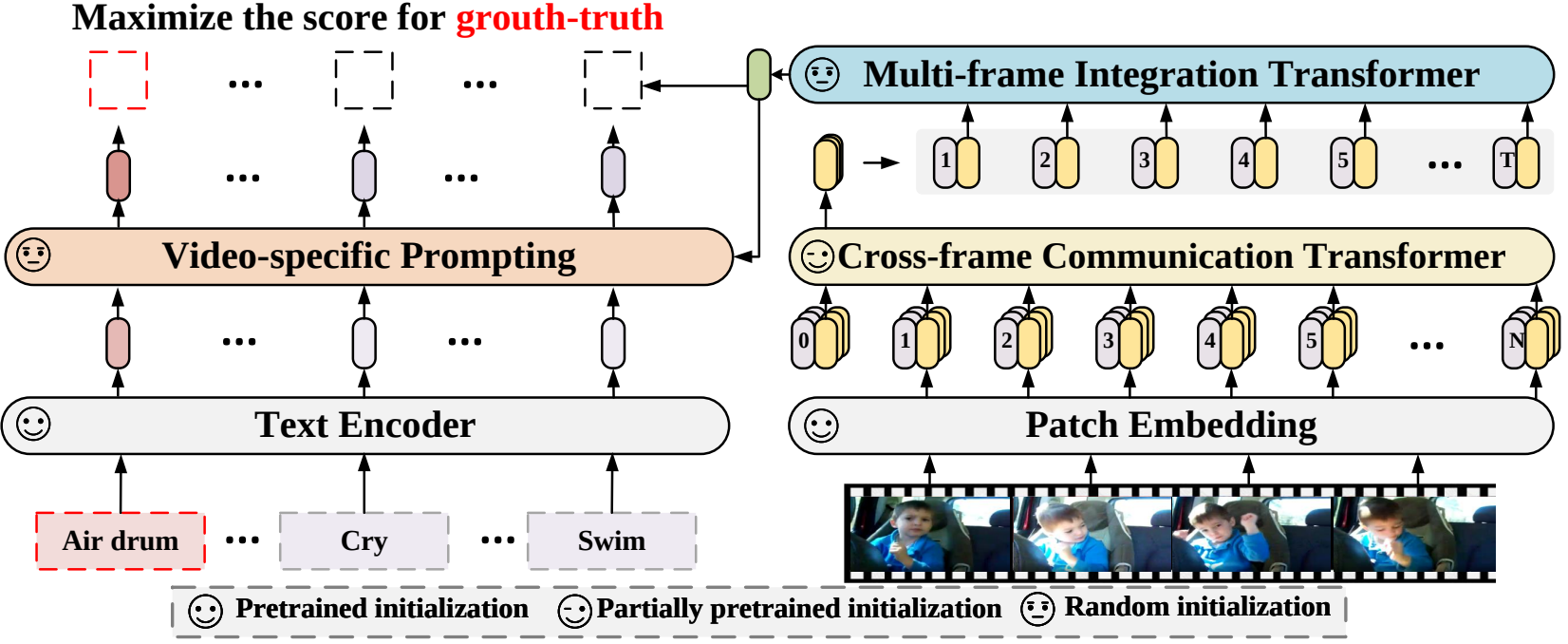

The X-CLIP model was proposed in Expanding Language-Image Pretrained Models for General Video Recognition by Bolin Ni, Houwen Peng, Minghao Chen, Songyang Zhang, Gaofeng Meng, Jianlong Fu, Shiming Xiang, Haibin Ling. X-CLIP is a minimal extension of CLIP for video. The model consists of a text encoder, a cross-frame vision encoder, a multi-frame integration Transformer, and a video-specific prompt generator.

The abstract from the paper is the following:

Contrastive language-image pretraining has shown great success in learning visual-textual joint representation from web-scale data, demonstrating remarkable “zero-shot” generalization ability for various image tasks. However, how to effectively expand such new language-image pretraining methods to video domains is still an open problem. In this work, we present a simple yet effective approach that adapts the pretrained language-image models to video recognition directly, instead of pretraining a new model from scratch. More concretely, to capture the long-range dependencies of frames along the temporal dimension, we propose a cross-frame attention mechanism that explicitly exchanges information across frames. Such module is lightweight and can be plugged into pretrained language-image models seamlessly. Moreover, we propose a video-specific prompting scheme, which leverages video content information for generating discriminative textual prompts. Extensive experiments demonstrate that our approach is effective and can be generalized to different video recognition scenarios. In particular, under fully-supervised settings, our approach achieves a top-1 accuracy of 87.1% on Kinetics-400, while using 12 times fewer FLOPs compared with Swin-L and ViViT-H. In zero-shot experiments, our approach surpasses the current state-of-the-art methods by +7.6% and +14.9% in terms of top-1 accuracy under two popular protocols. In few-shot scenarios, our approach outperforms previous best methods by +32.1% and +23.1% when the labeled data is extremely limited.

Tips:

- Usage of X-CLIP is identical to CLIP.

X-CLIP architecture. Taken from the original paper.

This model was contributed by nielsr. The original code can be found here.

Resources

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with X-CLIP.

- Demo notebooks for X-CLIP can be found here.

If you’re interested in submitting a resource to be included here, please feel free to open a Pull Request and we’ll review it! The resource should ideally demonstrate something new instead of duplicating an existing resource.

XCLIPProcessor

class transformers.XCLIPProcessor

< source >( image_processor = None tokenizer = None **kwargs )

Parameters

- image_processor (VideoMAEImageProcessor, optional) — The image processor is a required input.

- tokenizer (CLIPTokenizerFast, optional) — The tokenizer is a required input.

Constructs an X-CLIP processor which wraps a VideoMAE image processor and a CLIP tokenizer into a single processor.

XCLIPProcessor offers all the functionalities of VideoMAEImageProcessor and CLIPTokenizerFast. See __call__() and decode() for more information.

batch_decode

This method forwards all its arguments to CLIPTokenizerFast’s batch_decode(). Please refer to the docstring of this method for more information.

decode

This method forwards all its arguments to CLIPTokenizerFast’s decode(). Please refer to the docstring of this method for more information.

XCLIPConfig

class transformers.XCLIPConfig

< source >( text_config = None vision_config = None projection_dim = 512 prompt_layers = 2 prompt_alpha = 0.1 prompt_hidden_act = 'quick_gelu' prompt_num_attention_heads = 8 prompt_attention_dropout = 0.0 prompt_projection_dropout = 0.0 logit_scale_init_value = 2.6592 **kwargs )

Parameters

- text_config (dict, optional) — Dictionary of configuration options used to initialize XCLIPTextConfig.

- vision_config (dict, optional) — Dictionary of configuration options used to initialize XCLIPVisionConfig.

- projection_dim (int, optional, defaults to 512) — Dimensionality of text and vision projection layers.

- prompt_layers (int, optional, defaults to 2) — Number of layers in the video-specific prompt generator.

- prompt_alpha (float, optional, defaults to 0.1) — Alpha value to use in the video-specific prompt generator.

- prompt_hidden_act (str or function, optional, defaults to "quick_gelu") — The non-linear activation function (function or string) in the video-specific prompt generator. If string, "gelu", "relu", "selu", "gelu_new" and "quick_gelu" are supported.

- prompt_num_attention_heads (int, optional, defaults to 8) — Number of attention heads in the cross-attention of the video-specific prompt generator.

- prompt_attention_dropout (float, optional, defaults to 0.0) — The dropout probability for the attention layers in the video-specific prompt generator.

- prompt_projection_dropout (float, optional, defaults to 0.0) — The dropout probability for the projection layers in the video-specific prompt generator.

- logit_scale_init_value (float, optional, defaults to 2.6592) — The initial value of the logit_scale parameter. Default is used as per the original XCLIP implementation.

- kwargs (optional) — Dictionary of keyword arguments.

XCLIPConfig is the configuration class to store the configuration of an XCLIPModel. It is used to instantiate an X-CLIP model according to the specified arguments, defining the text model and vision model configs. Instantiating a configuration with the defaults will yield a similar configuration to that of the X-CLIP microsoft/xclip-base-patch32 architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.
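The default logit_scale_init_value of 2.6592 is easier to interpret once exponentiated. The short calculation below is a sketch under one assumption that the text above does not state: that, as in CLIP, the parameter is stored on a log scale and exponentiated before it multiplies the similarity scores.

```python
import math

# Default taken from the XCLIPConfig signature above.
logit_scale_init_value = 2.6592

# Assumption (CLIP convention): the stored value is a log-scale, so the
# multiplier applied to the similarity scores is exp(value).
scale = math.exp(logit_scale_init_value)

# The corresponding softmax temperature is the reciprocal of the multiplier.
temperature = 1.0 / scale

print(f"scale = {scale:.2f}, temperature = {temperature:.3f}")
```

Under that assumption the default corresponds to a temperature of roughly 0.07.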

from_text_vision_configs

< source >( text_config: XCLIPTextConfig vision_config: XCLIPVisionConfig **kwargs ) → XCLIPConfig

Instantiate an XCLIPConfig (or a derived class) from an X-CLIP text model configuration and an X-CLIP vision model configuration.
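A minimal sketch of composing a configuration from two sub-configurations follows. It uses the dictionary arguments documented for XCLIPConfig rather than from_text_vision_configs itself, on the assumption that both routes produce the same result. The overridden values (num_hidden_layers=6, patch_size=16) are illustrative only and do not correspond to any released checkpoint.

```python
from transformers import XCLIPConfig, XCLIPTextConfig, XCLIPVisionConfig

# Sub-configurations; the overridden values are made up for illustration.
text_config = XCLIPTextConfig(num_hidden_layers=6)
vision_config = XCLIPVisionConfig(patch_size=16)

# XCLIPConfig accepts the sub-configurations as plain dictionaries.
config = XCLIPConfig(
    text_config=text_config.to_dict(),
    vision_config=vision_config.to_dict(),
    projection_dim=512,
)

# The composite config exposes the sub-configurations as attributes.
print(config.text_config.num_hidden_layers)
print(config.vision_config.patch_size)
```

No weights are downloaded here; only configuration objects are created.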

XCLIPTextConfig

class transformers.XCLIPTextConfig

< source >( vocab_size = 49408 hidden_size = 512 intermediate_size = 2048 num_hidden_layers = 12 num_attention_heads = 8 max_position_embeddings = 77 hidden_act = 'quick_gelu' layer_norm_eps = 1e-05 attention_dropout = 0.0 initializer_range = 0.02 initializer_factor = 1.0 pad_token_id = 1 bos_token_id = 0 eos_token_id = 2 **kwargs )

Parameters

- vocab_size (int, optional, defaults to 49408) — Vocabulary size of the X-CLIP text model. Defines the number of different tokens that can be represented by the input_ids passed when calling XCLIPModel.

- hidden_size (int, optional, defaults to 512) — Dimensionality of the encoder layers and the pooler layer.

- intermediate_size (int, optional, defaults to 2048) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the Transformer encoder.

- num_hidden_layers (int, optional, defaults to 12) — Number of hidden layers in the Transformer encoder.

- num_attention_heads (int, optional, defaults to 8) — Number of attention heads for each attention layer in the Transformer encoder.

- max_position_embeddings (int, optional, defaults to 77) — The maximum sequence length that this model might ever be used with. Typically set this to something large just in case (e.g., 512 or 1024 or 2048).

- hidden_act (str or function, optional, defaults to "quick_gelu") — The non-linear activation function (function or string) in the encoder and pooler. If string, "gelu", "relu", "selu", "gelu_new" and "quick_gelu" are supported.

- layer_norm_eps (float, optional, defaults to 1e-5) — The epsilon used by the layer normalization layers.

- attention_dropout (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- initializer_factor (float, optional, defaults to 1) — A factor for initializing all weight matrices (should be kept to 1, used internally for initialization testing).

This is the configuration class to store the configuration of an XCLIPModel. It is used to instantiate an X-CLIP model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the X-CLIP microsoft/xclip-base-patch32 architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.

Example:

>>> from transformers import XCLIPTextModel, XCLIPTextConfig

>>> # Initializing a XCLIPTextConfig with microsoft/xclip-base-patch32 style configuration
>>> configuration = XCLIPTextConfig()

>>> # Initializing a XCLIPTextModel (with random weights) from the microsoft/xclip-base-patch32 style configuration
>>> model = XCLIPTextModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

XCLIPVisionConfig

class transformers.XCLIPVisionConfig

< source >( hidden_size = 768 intermediate_size = 3072 num_hidden_layers = 12 num_attention_heads = 12 mit_hidden_size = 512 mit_intermediate_size = 2048 mit_num_hidden_layers = 1 mit_num_attention_heads = 8 num_channels = 3 image_size = 224 patch_size = 32 num_frames = 8 hidden_act = 'quick_gelu' layer_norm_eps = 1e-05 attention_dropout = 0.0 initializer_range = 0.02 initializer_factor = 1.0 drop_path_rate = 0.0 **kwargs )

Parameters

- hidden_size (int, optional, defaults to 768) — Dimensionality of the encoder layers and the pooler layer.

- intermediate_size (int, optional, defaults to 3072) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the Transformer encoder.

- num_hidden_layers (int, optional, defaults to 12) — Number of hidden layers in the Transformer encoder.

- num_attention_heads (int, optional, defaults to 12) — Number of attention heads for each attention layer in the Transformer encoder.

- mit_hidden_size (int, optional, defaults to 512) — Dimensionality of the encoder layers of the Multiframe Integration Transformer (MIT).

- mit_intermediate_size (int, optional, defaults to 2048) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the Multiframe Integration Transformer (MIT).

- mit_num_hidden_layers (int, optional, defaults to 1) — Number of hidden layers in the Multiframe Integration Transformer (MIT).

- mit_num_attention_heads (int, optional, defaults to 8) — Number of attention heads for each attention layer in the Multiframe Integration Transformer (MIT).

- image_size (int, optional, defaults to 224) — The size (resolution) of each image.

- patch_size (int, optional, defaults to 32) — The size (resolution) of each patch.

- hidden_act (str or function, optional, defaults to "quick_gelu") — The non-linear activation function (function or string) in the encoder and pooler. If string, "gelu", "relu", "selu", "gelu_new" and "quick_gelu" are supported.

- layer_norm_eps (float, optional, defaults to 1e-5) — The epsilon used by the layer normalization layers.

- attention_dropout (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- initializer_factor (float, optional, defaults to 1) — A factor for initializing all weight matrices (should be kept to 1, used internally for initialization testing).

- drop_path_rate (float, optional, defaults to 0.0) — Stochastic depth rate.

This is the configuration class to store the configuration of an XCLIPModel. It is used to instantiate an X-CLIP model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the X-CLIP microsoft/xclip-base-patch32 architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.
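To make the image_size, patch_size and num_frames defaults concrete, the arithmetic below works out how many patches each frame is split into. It is a sketch only: the extra class token per frame is an assumption carried over from CLIP-style vision transformers, and any additional tokens the cross-frame attention may add are not counted.

```python
# Defaults taken from the XCLIPVisionConfig signature above.
image_size = 224
patch_size = 32
num_frames = 8

# Each frame is cut into a square grid of non-overlapping patches.
patches_per_side = image_size // patch_size
patches_per_frame = patches_per_side ** 2

# Assumption: one class token is prepended to every frame's patch sequence.
tokens_per_frame = patches_per_frame + 1

# Total number of patches across all frames of one clip.
patches_per_clip = num_frames * patches_per_frame

print(patches_per_side, patches_per_frame, tokens_per_frame, patches_per_clip)
# 7 49 50 392
```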

Example:

>>> from transformers import XCLIPVisionModel, XCLIPVisionConfig

>>> # Initializing a XCLIPVisionConfig with microsoft/xclip-base-patch32 style configuration
>>> configuration = XCLIPVisionConfig()

>>> # Initializing a XCLIPVisionModel (with random weights) from the microsoft/xclip-base-patch32 style configuration
>>> model = XCLIPVisionModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

XCLIPModel

class transformers.XCLIPModel

< source >( config: XCLIPConfig )

Parameters

- config (XCLIPConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

This model is a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

< source >( input_ids: typing.Optional[torch.LongTensor] = None pixel_values: typing.Optional[torch.FloatTensor] = None attention_mask: typing.Optional[torch.Tensor] = None position_ids: typing.Optional[torch.LongTensor] = None return_loss: typing.Optional[bool] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None interpolate_pos_encoding: bool = False return_dict: typing.Optional[bool] = None ) → transformers.models.x_clip.modeling_x_clip.XCLIPOutput or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, sequence_length)) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default should you provide it. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of the position of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using AutoImageProcessor. See CLIPImageProcessor.__call__() for details.

- return_loss (bool, optional) — Whether or not to return the contrastive loss.

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- interpolate_pos_encoding (bool, optional, defaults to False) — Whether to interpolate the pre-trained position encodings.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.
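The attention_mask convention above (1 for real tokens, 0 for padding) can be illustrated without a tokenizer. The helper below is a hypothetical sketch, not part of the library: pad_and_mask, the token ids and pad_id=0 are all made up for illustration. In practice the tokenizer builds both tensors when called with padding=True.

```python
def pad_and_mask(sequences, pad_id=0):
    """Pad token-id lists to a common length and build the matching mask."""
    max_len = max(len(seq) for seq in sequences)
    input_ids, attention_mask = [], []
    for seq in sequences:
        n_pad = max_len - len(seq)
        # Real tokens first, then padding up to the longest sequence.
        input_ids.append(list(seq) + [pad_id] * n_pad)
        # 1 marks a real token, 0 marks a padding position.
        attention_mask.append([1] * len(seq) + [0] * n_pad)
    return input_ids, attention_mask


# Two made-up token-id sequences of different lengths.
ids, mask = pad_and_mask([[5, 6, 7, 8], [5, 9]])
print(ids)   # [[5, 6, 7, 8], [5, 9, 0, 0]]
print(mask)  # [[1, 1, 1, 1], [1, 1, 0, 0]]
```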

Returns

transformers.models.x_clip.modeling_x_clip.XCLIPOutput or tuple(torch.FloatTensor)

A transformers.models.x_clip.modeling_x_clip.XCLIPOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (XCLIPConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when return_loss is True) — Contrastive loss for video-text similarity.

- logits_per_video (torch.FloatTensor of shape (video_batch_size, text_batch_size)) — The scaled dot product scores between video_embeds and text_embeds. This represents the video-text similarity scores.

- logits_per_text (torch.FloatTensor of shape (text_batch_size, video_batch_size)) — The scaled dot product scores between text_embeds and video_embeds. This represents the text-video similarity scores.

- text_embeds (torch.FloatTensor of shape (batch_size, output_dim)) — The text embeddings obtained by applying the projection layer to the pooled output of XCLIPTextModel.

- video_embeds (torch.FloatTensor of shape (batch_size, output_dim)) — The video embeddings obtained by applying the projection layer to the pooled output of XCLIPVisionModel.

- text_model_output (BaseModelOutputWithPooling) — The output of the XCLIPTextModel.

- vision_model_output (BaseModelOutputWithPooling) — The output of the XCLIPVisionModel.

- mit_output (BaseModelOutputWithPooling) — The output of XCLIPMultiframeIntegrationTransformer (MIT for short).
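The relationship between the embeddings and the two logits tensors can be sketched in NumPy with random stand-in embeddings. This is a simplified CLIP-style illustration, not the model's actual code: it assumes L2-normalized embeddings and an exponentiated logit scale, and it ignores any per-video adjustment of the text embeddings by the video-specific prompt generator.

```python
import numpy as np

rng = np.random.default_rng(0)

# Random stand-ins for projected embeddings: 2 videos, 3 text prompts.
video_embeds = rng.normal(size=(2, 512))
text_embeds = rng.normal(size=(3, 512))


def l2_normalize(x):
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


# Assumption: the scale is exp(logit_scale_init_value), as in CLIP.
logit_scale = np.exp(2.6592)

# Scaled similarity of every video with every text prompt.
logits_per_video = logit_scale * l2_normalize(video_embeds) @ l2_normalize(text_embeds).T
# The text-to-video scores are the same numbers, transposed.
logits_per_text = logits_per_video.T

# Numerically stable softmax over the text axis gives per-video label probabilities.
shifted = logits_per_video - logits_per_video.max(axis=1, keepdims=True)
probs = np.exp(shifted) / np.exp(shifted).sum(axis=1, keepdims=True)

print(logits_per_video.shape, logits_per_text.shape)  # (2, 3) (3, 2)
```

Each row of probs sums to 1, matching the softmax applied to logits_per_video in the usage example.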

The XCLIPModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> import av
>>> import torch
>>> import numpy as np

>>> from transformers import AutoProcessor, AutoModel
>>> from huggingface_hub import hf_hub_download

>>> np.random.seed(0)


>>> def read_video_pyav(container, indices):
...     '''
...     Decode the video with PyAV decoder.
...     Args:
...         container (`av.container.input.InputContainer`): PyAV container.
...         indices (`List[int]`): List of frame indices to decode.
...     Returns:
...         result (np.ndarray): np array of decoded frames of shape (num_frames, height, width, 3).
...     '''
...     frames = []
...     container.seek(0)
...     start_index = indices[0]
...     end_index = indices[-1]
...     for i, frame in enumerate(container.decode(video=0)):
...         if i > end_index:
...             break
...         if i >= start_index and i in indices:
...             frames.append(frame)
...     return np.stack([x.to_ndarray(format="rgb24") for x in frames])


>>> def sample_frame_indices(clip_len, frame_sample_rate, seg_len):
...     '''
...     Sample a given number of frame indices from the video.
...     Args:
...         clip_len (`int`): Total number of frames to sample.
...         frame_sample_rate (`int`): Sample every n-th frame.
...         seg_len (`int`): Maximum allowed index of sample's last frame.
...     Returns:
...         indices (`List[int]`): List of sampled frame indices
...     '''
...     converted_len = int(clip_len * frame_sample_rate)
...     end_idx = np.random.randint(converted_len, seg_len)
...     start_idx = end_idx - converted_len
...     indices = np.linspace(start_idx, end_idx, num=clip_len)
...     indices = np.clip(indices, start_idx, end_idx - 1).astype(np.int64)
...     return indices


>>> # video clip consists of 300 frames (10 seconds at 30 FPS)
>>> file_path = hf_hub_download(
...     repo_id="nielsr/video-demo", filename="eating_spaghetti.mp4", repo_type="dataset"
... )
>>> container = av.open(file_path)

>>> # sample 8 frames
>>> indices = sample_frame_indices(clip_len=8, frame_sample_rate=1, seg_len=container.streams.video[0].frames)
>>> video = read_video_pyav(container, indices)

>>> processor = AutoProcessor.from_pretrained("microsoft/xclip-base-patch32")
>>> model = AutoModel.from_pretrained("microsoft/xclip-base-patch32")

>>> inputs = processor(
...     text=["playing sports", "eating spaghetti", "go shopping"],
...     videos=list(video),
...     return_tensors="pt",
...     padding=True,
... )

>>> # forward pass
>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> logits_per_video = outputs.logits_per_video  # this is the video-text similarity score
>>> probs = logits_per_video.softmax(dim=1)  # we can take the softmax to get the label probabilities
>>> print(probs)
tensor([[1.9496e-04, 9.9960e-01, 2.0825e-04]])

get_text_features

< source >( input_ids: typing.Optional[torch.Tensor] = None attention_mask: typing.Optional[torch.Tensor] = None position_ids: typing.Optional[torch.Tensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → text_features (torch.FloatTensor of shape (batch_size, output_dim))

Parameters

- input_ids (torch.LongTensor of shape (batch_size, sequence_length)) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default should you provide it. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of the position of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

text_features (torch.FloatTensor of shape (batch_size, output_dim))

The text embeddings obtained by applying the projection layer to the pooled output of XCLIPTextModel.

The XCLIPModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> from transformers import AutoTokenizer, AutoModel

>>> tokenizer = AutoTokenizer.from_pretrained("microsoft/xclip-base-patch32")
>>> model = AutoModel.from_pretrained("microsoft/xclip-base-patch32")

>>> inputs = tokenizer(["a photo of a cat", "a photo of a dog"], padding=True, return_tensors="pt")
>>> text_features = model.get_text_features(**inputs)

get_video_features

< source >( pixel_values: typing.Optional[torch.FloatTensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → video_features (torch.FloatTensor of shape (batch_size, output_dim))

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using AutoImageProcessor. See CLIPImageProcessor.__call__() for details.

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- interpolate_pos_encoding (bool, optional, defaults to False) — Whether to interpolate the pre-trained position encodings.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

video_features (torch.FloatTensor of shape (batch_size, output_dim))

The video embeddings obtained by applying the projection layer to the pooled output of XCLIPVisionModel and XCLIPMultiframeIntegrationTransformer.

The XCLIPModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> import av
>>> import torch
>>> import numpy as np

>>> from transformers import AutoProcessor, AutoModel
>>> from huggingface_hub import hf_hub_download

>>> np.random.seed(0)


>>> def read_video_pyav(container, indices):
...     '''
...     Decode the video with PyAV decoder.
...     Args:
...         container (`av.container.input.InputContainer`): PyAV container.
...         indices (`List[int]`): List of frame indices to decode.
...     Returns:
...         result (np.ndarray): np array of decoded frames of shape (num_frames, height, width, 3).
...     '''
...     frames = []
...     container.seek(0)
...     start_index = indices[0]
...     end_index = indices[-1]
...     for i, frame in enumerate(container.decode(video=0)):
...         if i > end_index:
...             break
...         if i >= start_index and i in indices:
...             frames.append(frame)
...     return np.stack([x.to_ndarray(format="rgb24") for x in frames])


>>> def sample_frame_indices(clip_len, frame_sample_rate, seg_len):
...     '''
...     Sample a given number of frame indices from the video.
...     Args:
...         clip_len (`int`): Total number of frames to sample.
...         frame_sample_rate (`int`): Sample every n-th frame.
...         seg_len (`int`): Maximum allowed index of sample's last frame.
...     Returns:
...         indices (`List[int]`): List of sampled frame indices
...     '''
...     converted_len = int(clip_len * frame_sample_rate)
...     end_idx = np.random.randint(converted_len, seg_len)
...     start_idx = end_idx - converted_len
...     indices = np.linspace(start_idx, end_idx, num=clip_len)
...     indices = np.clip(indices, start_idx, end_idx - 1).astype(np.int64)
...     return indices


>>> # video clip consists of 300 frames (10 seconds at 30 FPS)
>>> file_path = hf_hub_download(
...     repo_id="nielsr/video-demo", filename="eating_spaghetti.mp4", repo_type="dataset"
... )
>>> container = av.open(file_path)

>>> # sample 8 frames
>>> indices = sample_frame_indices(clip_len=8, frame_sample_rate=1, seg_len=container.streams.video[0].frames)
>>> video = read_video_pyav(container, indices)

>>> processor = AutoProcessor.from_pretrained("microsoft/xclip-base-patch32")
>>> model = AutoModel.from_pretrained("microsoft/xclip-base-patch32")

>>> inputs = processor(videos=list(video), return_tensors="pt")

>>> video_features = model.get_video_features(**inputs)

XCLIPTextModel

forward

< source >( input_ids: typing.Optional[torch.Tensor] = None attention_mask: typing.Optional[torch.Tensor] = None position_ids: typing.Optional[torch.Tensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

Parameters

- input_ids (torch.LongTensor of shape (batch_size, sequence_length)) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default should you provide it. Indices can be obtained using AutoTokenizer. See PreTrainedTokenizer.encode() and PreTrainedTokenizer.__call__() for details.

- attention_mask (torch.Tensor of shape (batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in [0, 1]:

  - 1 for tokens that are not masked,
  - 0 for tokens that are masked.

- position_ids (torch.LongTensor of shape (batch_size, sequence_length), optional) — Indices of the position of each input sequence token in the position embeddings. Selected in the range [0, config.max_position_embeddings - 1].

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

A transformers.modeling_outputs.BaseModelOutputWithPooling or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (XCLIPTextConfig) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

- pooler_output (torch.FloatTensor of shape (batch_size, hidden_size)) — Last layer hidden-state of the first token of the sequence (classification token) after further processing through the layers used for the auxiliary pretraining task. E.g. for BERT-family of models, this returns the classification token after processing through a linear layer and a tanh activation function. The linear layer weights are trained from the next sentence prediction (classification) objective during pretraining.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.
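The tuple lengths and tensor shapes described above follow directly from the configuration. The helper below is a hypothetical bookkeeping sketch (expected_output_shapes is not a library function); its defaults are the XCLIPTextConfig defaults, and it assumes the embedding output is included in hidden_states.

```python
def expected_output_shapes(batch_size, seq_len, hidden_size=512,
                           num_hidden_layers=12, num_attention_heads=8):
    """Return the shapes expected in hidden_states and attentions."""
    # One entry for the embedding output plus one per encoder layer.
    hidden_states = [(batch_size, seq_len, hidden_size)] * (num_hidden_layers + 1)
    # One attention map per encoder layer.
    attentions = [(batch_size, num_attention_heads, seq_len, seq_len)] * num_hidden_layers
    return hidden_states, attentions


# Example: a batch of 2 prompts, each padded to 7 tokens.
hs, att = expected_output_shapes(batch_size=2, seq_len=7)
print(len(hs), hs[0])    # 13 (2, 7, 512)
print(len(att), att[0])  # 12 (2, 8, 7, 7)
```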

The XCLIPTextModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> from transformers import AutoTokenizer, XCLIPTextModel

>>> model = XCLIPTextModel.from_pretrained("microsoft/xclip-base-patch32")
>>> tokenizer = AutoTokenizer.from_pretrained("microsoft/xclip-base-patch32")

>>> inputs = tokenizer(["a photo of a cat", "a photo of a dog"], padding=True, return_tensors="pt")

>>> outputs = model(**inputs)
>>> last_hidden_state = outputs.last_hidden_state
>>> pooled_output = outputs.pooler_output  # pooled (EOS token) states

XCLIPVisionModel

forward

< source >( pixel_values: typing.Optional[torch.FloatTensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using AutoImageProcessor. See CLIPImageProcessor.__call__() for details.

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- interpolate_pos_encoding (bool, optional, defaults to False) — Whether to interpolate the pre-trained position encodings.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.modeling_outputs.BaseModelOutputWithPooling or tuple(torch.FloatTensor)

A transformers.modeling_outputs.BaseModelOutputWithPooling or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (XCLIPVisionConfig) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

- pooler_output (torch.FloatTensor of shape (batch_size, hidden_size)) — Last layer hidden-state of the first token of the sequence (classification token) after further processing through the layers used for the auxiliary pretraining task. E.g. for BERT-family of models, this returns the classification token after processing through a linear layer and a tanh activation function. The linear layer weights are trained from the next sentence prediction (classification) objective during pretraining.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size).

  Hidden-states of the model at the output of each layer plus the optional initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length).

  Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.
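For orientation, the sequence_length of the hidden states above can be estimated from the image and patch size. The sketch below assumes a ViT-style patch embedding (non-overlapping square patches plus one class token); the helper name vit_sequence_length is hypothetical and not part of the library, and the sketch covers the hidden states only.

```python
def vit_sequence_length(image_size: int, patch_size: int) -> int:
    """Token count for a square image cut into non-overlapping square patches, plus one class token.

    Assumption: ViT-style embedding; this helper is illustrative, not a library function.
    """
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side * patches_per_side + 1


# 224x224 input with 32x32 patches: 7 * 7 patches + 1 class token
print(vit_sequence_length(224, 32))  # 50
# 224x224 input with 16x16 patches: 14 * 14 patches + 1 class token
print(vit_sequence_length(224, 16))  # 197
```

Under these assumptions, a patch-32 model at 224x224 resolution would produce 50 tokens per frame.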

The XCLIPVisionModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> import av
>>> import torch
>>> import numpy as np

>>> from transformers import AutoProcessor, XCLIPVisionModel
>>> from huggingface_hub import hf_hub_download

>>> np.random.seed(0)


>>> def read_video_pyav(container, indices):
...     '''
...     Decode the video with PyAV decoder.
...     Args:
...         container (`av.container.input.InputContainer`): PyAV container.
...         indices (`List[int]`): List of frame indices to decode.
...     Returns:
...         result (np.ndarray): np array of decoded frames of shape (num_frames, height, width, 3).
...     '''
...     frames = []
...     container.seek(0)
...     start_index = indices[0]
...     end_index = indices[-1]
...     for i, frame in enumerate(container.decode(video=0)):
...         if i > end_index:
...             break
...         if i >= start_index and i in indices:
...             frames.append(frame)
...     return np.stack([x.to_ndarray(format="rgb24") for x in frames])


>>> def sample_frame_indices(clip_len, frame_sample_rate, seg_len):
...     '''
...     Sample a given number of frame indices from the video.
...     Args:
...         clip_len (`int`): Total number of frames to sample.
...         frame_sample_rate (`int`): Sample every n-th frame.
...         seg_len (`int`): Maximum allowed index of sample's last frame.
...     Returns:
...         indices (`List[int]`): List of sampled frame indices
...     '''
...     converted_len = int(clip_len * frame_sample_rate)
...     end_idx = np.random.randint(converted_len, seg_len)
...     start_idx = end_idx - converted_len
...     indices = np.linspace(start_idx, end_idx, num=clip_len)
...     indices = np.clip(indices, start_idx, end_idx - 1).astype(np.int64)
...     return indices


>>> # video clip consists of 300 frames (10 seconds at 30 FPS)
>>> file_path = hf_hub_download(
...     repo_id="nielsr/video-demo", filename="eating_spaghetti.mp4", repo_type="dataset"
... )
>>> container = av.open(file_path)

>>> # sample 8 frames
>>> indices = sample_frame_indices(clip_len=8, frame_sample_rate=1, seg_len=container.streams.video[0].frames)
>>> video = read_video_pyav(container, indices)

>>> processor = AutoProcessor.from_pretrained("microsoft/xclip-base-patch32")
>>> model = XCLIPVisionModel.from_pretrained("microsoft/xclip-base-patch32")

>>> pixel_values = processor(videos=list(video), return_tensors="pt").pixel_values

>>> # fold the frame axis into the batch axis before calling the vision model
>>> batch_size, num_frames, num_channels, height, width = pixel_values.shape
>>> pixel_values = pixel_values.reshape(-1, num_channels, height, width)

>>> outputs = model(pixel_values)
>>> last_hidden_state = outputs.last_hidden_state
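To see what sample_frame_indices yields, the sketch below repeats its arithmetic with the random draw replaced by a fixed end_idx argument, so the result is reproducible without a video file. The function name frame_indices_for and the chosen end_idx values are assumptions made for illustration.

```python
import numpy as np


def frame_indices_for(clip_len, frame_sample_rate, end_idx):
    # Same arithmetic as sample_frame_indices, with end_idx passed in instead of drawn at random.
    converted_len = int(clip_len * frame_sample_rate)
    start_idx = end_idx - converted_len
    indices = np.linspace(start_idx, end_idx, num=clip_len)
    # Clipping to end_idx - 1 keeps the last index inside the window; the integer cast truncates.
    return np.clip(indices, start_idx, end_idx - 1).astype(np.int64)


print(frame_indices_for(clip_len=8, frame_sample_rate=1, end_idx=18).tolist())
# [10, 11, 12, 13, 14, 15, 16, 17]
print(frame_indices_for(clip_len=8, frame_sample_rate=4, end_idx=40).tolist())
# [8, 12, 17, 21, 26, 30, 35, 39]
```

With frame_sample_rate=1 the window covers exactly clip_len consecutive frames. With larger rates the spacing is roughly, not exactly, the sample rate, because np.linspace spreads clip_len points across the whole window including its endpoint.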