Load adapters with 🤗 PEFT

Parameter-Efficient Fine-Tuning (PEFT) methods freeze the pretrained model parameters during fine-tuning and add a small number of trainable parameters (the adapters) on top of them. The adapters are trained to learn task-specific information. This approach has been shown to be very memory-efficient with lower compute usage while producing results comparable to a fully fine-tuned model.

Adapters trained with PEFT are also usually an order of magnitude smaller than the full model, making it convenient to share, store, and load them.
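To make the size claim concrete, here is a back-of-the-envelope sketch. It assumes a LoRA-style adapter (one of the methods covered later in this guide), which adds two low-rank matrices of shapes (r, d_in) and (d_out, r) next to a frozen weight of shape (d_out, d_in). The dimensions and rank are illustrative assumptions, not values taken from a specific model.

```python
def full_param_count(d_in: int, d_out: int) -> int:
    """Parameters in a dense weight matrix of shape (d_out, d_in)."""
    return d_in * d_out


def lora_param_count(d_in: int, d_out: int, r: int) -> int:
    """Parameters added by a rank-r update: A is (r, d_in), B is (d_out, r)."""
    return r * d_in + d_out * r


# Illustrative values (assumptions): a 1024 x 1024 projection and rank 8.
d_in, d_out, r = 1024, 1024, 8
full = full_param_count(d_in, d_out)
lora = lora_param_count(d_in, d_out, r)
ratio = full / lora
print(f"full: {full:,}  lora: {lora:,}  ratio: {ratio:.0f}x")
```

For this single matrix the adapter holds 16,384 parameters against 1,048,576 in the frozen weight. The overall ratio for a real model depends on which modules are adapted and on the chosen rank.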

If you’re interested in learning more about the 🤗 PEFT library, check out the documentation.

Setup

Get started by installing 🤗 PEFT:

pip install peft

If you want to try out the brand new features, you might be interested in installing the library from source:

pip install git+https://github.com/huggingface/peft.git
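After installing, it can be handy to confirm which PEFT version is present, since some features mentioned later on this page need a minimum version. The sketch below uses only the standard library; the helper names (`installed_version`, `numeric_parts`, `meets_minimum`) are hypothetical, and the comparison deliberately ignores pre-release suffixes such as `.dev0`.

```python
import re
from importlib import metadata
from typing import Optional, Tuple


def installed_version(package: str) -> Optional[str]:
    """Return the installed version string of `package`, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def numeric_parts(version: str, width: int = 3) -> Tuple[int, ...]:
    """Parse the leading numeric components, e.g. '0.13.1.dev0' -> (0, 13, 1)."""
    parts = []
    for piece in version.split(".")[:width]:
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    # Pad with zeros so that '0.13' and '0.13.0' compare as equal.
    parts += [0] * (width - len(parts))
    return tuple(parts)


def meets_minimum(installed: str, minimum: str) -> bool:
    """True if `installed` is at least `minimum` (pre-release suffixes are ignored)."""
    return numeric_parts(installed) >= numeric_parts(minimum)


version = installed_version("peft")
if version is None:
    print("peft is not installed")
else:
    print(f"peft {version} is installed")
```

For example, `meets_minimum(version, "0.13.0")` checks the requirement stated for the `low_cpu_mem_usage` option of `load_adapter`.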

Supported PEFT models

🤗 Transformers natively supports some PEFT methods, meaning you can load adapter weights stored locally or on the Hub and easily run or train them with a few lines of code. The following methods are supported:

- Low Rank Adapters (LoRA)

- IA3

- AdaLoRA

If you want to use other PEFT methods, such as prompt learning or prompt tuning, or learn about the 🤗 PEFT library in general, please refer to the documentation.

Load a PEFT adapter

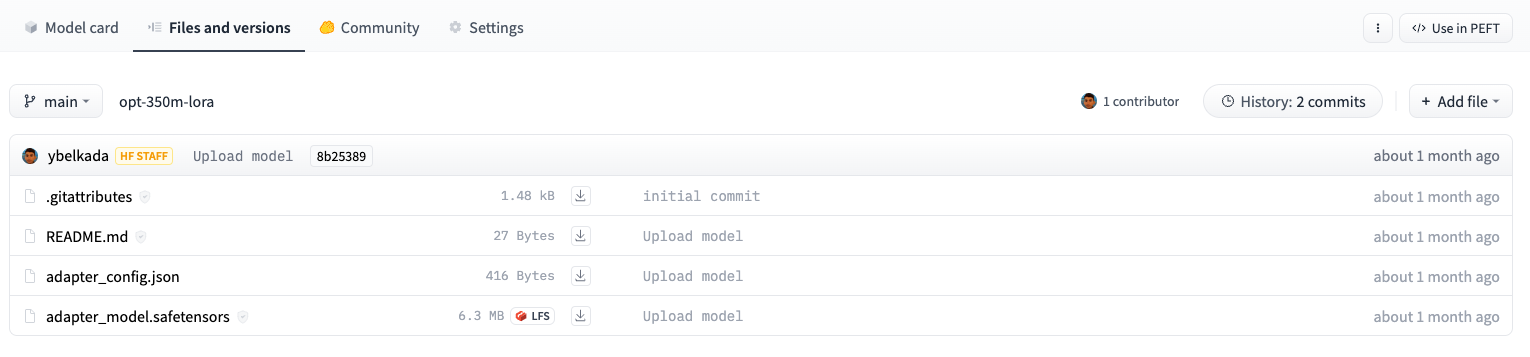

To load and use a PEFT adapter model from 🤗 Transformers, make sure the Hub repository or local directory contains an adapter_config.json file and the adapter weights, as shown in the example image above. Then you can load the PEFT adapter model using the AutoModelFor class. For example, to load a PEFT adapter model for causal language modeling:

- specify the PEFT model id

- pass it to the AutoModelForCausalLM class

from transformers import AutoModelForCausalLM, AutoTokenizer

peft_model_id = "ybelkada/opt-350m-lora"

model = AutoModelForCausalLM.from_pretrained(peft_model_id)

You can load a PEFT adapter with either an AutoModelFor class or the base model class like OPTForCausalLM or LlamaForCausalLM.
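When loading from a local directory, a quick pre-check of the required files can save a confusing error. The sketch below is a hypothetical helper, not part of either library. `adapter_config.json` is the file named in this section; the two weight file names are the defaults PEFT writes and are treated here as an assumption.

```python
from pathlib import Path

# adapter_config.json comes from the text above; the weight file names are
# PEFT's usual defaults and are an assumption of this sketch.
CONFIG_NAME = "adapter_config.json"
WEIGHT_NAMES = ("adapter_model.safetensors", "adapter_model.bin")


def looks_like_adapter_dir(path) -> bool:
    """Return True if `path` holds an adapter config and at least one weights file."""
    directory = Path(path)
    has_config = (directory / CONFIG_NAME).is_file()
    has_weights = any((directory / name).is_file() for name in WEIGHT_NAMES)
    return has_config and has_weights
```

The check only looks at file names. It does not validate the config contents or confirm that the weights match the base model.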

You can also load a PEFT adapter by calling the load_adapter method:

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "facebook/opt-350m"

peft_model_id = "ybelkada/opt-350m-lora"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

Check out the API documentation section below for more details.

Load in 8bit or 4bit

The bitsandbytes integration supports 8bit and 4bit precision data types, which are useful for loading large models because they save memory (see the bitsandbytes integration guide to learn more). Pass a BitsAndBytesConfig with load_in_8bit=True or load_in_4bit=True as the quantization_config argument of from_pretrained(), and set device_map="auto" to distribute the model across your available hardware.
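As a rough illustration of the memory saving, the sketch below estimates the memory taken by the weights alone at different precisions. It is simple arithmetic under stated assumptions: the parameter count is hypothetical, and the estimate ignores quantization constants, layers that stay in higher precision, activations, and any framework overhead.

```python
# Bits used to store one weight in each format (weights only).
BITS_PER_WEIGHT = {"float32": 32, "float16": 16, "int8": 8, "4bit": 4}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Approximate memory for the weights alone, in GiB."""
    total_bytes = num_params * BITS_PER_WEIGHT[dtype] / 8
    return total_bytes / 1024**3


# Hypothetical 7-billion-parameter model, chosen only for illustration.
num_params = 7_000_000_000
for dtype in BITS_PER_WEIGHT:
    print(f"{dtype:>8}: {weight_memory_gib(num_params, dtype):6.2f} GiB")
```

Halving the bits per weight halves the estimate, which is why 8bit and 4bit loading make larger models fit on the same hardware. The loading call itself is shown next.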

from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

peft_model_id = "ybelkada/opt-350m-lora"

model = AutoModelForCausalLM.from_pretrained(peft_model_id, quantization_config=BitsAndBytesConfig(load_in_8bit=True), device_map="auto")

Add a new adapter

You can use add_adapter() to add a new adapter to a model with an existing adapter, as long as the new adapter is the same type as the current one. For example, if you have an existing LoRA adapter attached to a model:

from transformers import AutoModelForCausalLM, OPTForCausalLM, AutoTokenizer

from peft import LoraConfig

model_id = "facebook/opt-350m"

model = AutoModelForCausalLM.from_pretrained(model_id)

lora_config = LoraConfig(

target_modules=["q_proj", "k_proj"],

init_lora_weights=False

)

model.add_adapter(lora_config, adapter_name="adapter_1")

To add a new adapter:

# attach new adapter with same config

model.add_adapter(lora_config, adapter_name="adapter_2")

Now you can use set_adapter() to set which adapter to use:

# tokenize a prompt to generate from

tokenizer = AutoTokenizer.from_pretrained(model_id)

inputs = tokenizer("Hello", return_tensors="pt")

# use adapter_1

model.set_adapter("adapter_1")

output_adapter_1 = model.generate(**inputs)

print(tokenizer.decode(output_adapter_1[0], skip_special_tokens=True))

# use adapter_2

model.set_adapter("adapter_2")

output_adapter_2 = model.generate(**inputs)

print(tokenizer.decode(output_adapter_2[0], skip_special_tokens=True))

Enable and disable adapters

Once you’ve added an adapter to a model, you can enable or disable the adapter module. To enable the adapter module:

from transformers import AutoModelForCausalLM, OPTForCausalLM, AutoTokenizer

from peft import PeftConfig

model_id = "facebook/opt-350m"

adapter_model_id = "ybelkada/opt-350m-lora"

tokenizer = AutoTokenizer.from_pretrained(model_id)

text = "Hello"

inputs = tokenizer(text, return_tensors="pt")

model = AutoModelForCausalLM.from_pretrained(model_id)

peft_config = PeftConfig.from_pretrained(adapter_model_id)

# initialize the adapter with random weights

peft_config.init_lora_weights = False

model.add_adapter(peft_config)

model.enable_adapters()

output = model.generate(**inputs)

To disable the adapter module:

model.disable_adapters()

output = model.generate(**inputs)
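Because disabling is a global switch on the model, it is easy to forget to turn the adapters back on. One way to guard against that is a small context manager, sketched below. `adapters_disabled` and `generate_with_and_without_adapters` are hypothetical helpers built only on the `enable_adapters`, `disable_adapters`, and `generate` calls shown above, and the sketch assumes the adapters were enabled before entering the block.

```python
from contextlib import contextmanager


@contextmanager
def adapters_disabled(model):
    """Temporarily run `model` without its adapters, then turn them back on."""
    model.disable_adapters()
    try:
        yield model
    finally:
        # Re-enable even if generation raises, so the model is not left
        # silently running as the base model.
        model.enable_adapters()


def generate_with_and_without_adapters(model, inputs):
    """Return (adapter_output, base_output) for the same inputs."""
    model.enable_adapters()
    adapter_output = model.generate(**inputs)
    with adapters_disabled(model):
        base_output = model.generate(**inputs)
    return adapter_output, base_output
```

With the `model` and `inputs` from the example above, `generate_with_and_without_adapters(model, inputs)` returns both generations so they can be decoded and compared side by side.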

Train a PEFT adapter

PEFT adapters are supported by the Trainer class so that you can train an adapter for your specific use case. It only requires adding a few more lines of code. For example, to train a LoRA adapter:

If you aren’t familiar with fine-tuning a model with Trainer, take a look at the Fine-tune a pretrained model tutorial.

- Define your adapter configuration with the task type and hyperparameters (see LoraConfig for more details about what the hyperparameters do).

from peft import LoraConfig

peft_config = LoraConfig(

lora_alpha=16,

lora_dropout=0.1,

r=64,

bias="none",

task_type="CAUSAL_LM",

)

- Add adapter to the model.

model.add_adapter(peft_config)

- Now you can pass the model to Trainer!

trainer = Trainer(model=model, ...)

trainer.train()
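Before starting a training run, it can be reassuring to check how many parameters will actually be updated. The sketch below counts them using the standard PyTorch module interface (`parameters()`, `requires_grad`, `numel()`); the helper names are hypothetical. If the base weights are frozen as described at the top of this guide, the trainable count should be a small fraction of the total.

```python
def count_parameters(model):
    """Return (trainable, total) parameter counts for a PyTorch module."""
    trainable = 0
    total = 0
    for param in model.parameters():
        total += param.numel()
        if param.requires_grad:
            trainable += param.numel()
    return trainable, total


def describe_trainable(model) -> str:
    """Format the trainable share as a short, human-readable summary."""
    trainable, total = count_parameters(model)
    share = 100 * trainable / total if total else 0.0
    return f"trainable: {trainable:,} / {total:,} ({share:.2f}%)"
```

Calling `print(describe_trainable(model))` after `model.add_adapter(peft_config)` gives a quick sanity check on the adapter configuration.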

To save your trained adapter and load it back:

model.save_pretrained(save_dir)

model = AutoModelForCausalLM.from_pretrained(save_dir)

Add additional trainable layers to a PEFT adapter

You can also fine-tune additional modules alongside the adapter by passing modules_to_save in your PEFT config. The listed modules are kept trainable and saved together with the adapter weights. For example, if you want to also fine-tune the lm_head on top of a model with a LoRA adapter:

from transformers import AutoModelForCausalLM, OPTForCausalLM, AutoTokenizer

from peft import LoraConfig

model_id = "facebook/opt-350m"

model = AutoModelForCausalLM.from_pretrained(model_id)

lora_config = LoraConfig(

target_modules=["q_proj", "k_proj"],

modules_to_save=["lm_head"],

)

model.add_adapter(lora_config)

API docs

PeftAdapterMixin

A class containing all functions for loading and using adapter weights that are supported in the PEFT library. For more details about adapters and injecting them into a transformer-based model, check out the documentation of the PEFT library: https://huggingface.co/docs/peft/index

Currently supported PEFT methods are all non-prefix tuning methods. Below is the list of supported PEFT methods that anyone can load, train and run with this mixin class:

- Low Rank Adapters (LoRA): https://huggingface.co/docs/peft/conceptual_guides/lora

- IA3: https://huggingface.co/docs/peft/conceptual_guides/ia3

- AdaLoRA: https://arxiv.org/abs/2303.10512

Other PEFT methods, such as prompt tuning and prompt learning, are out of scope because these adapters are not “injectable” into a torch module. To use these methods, please refer to the usage guide of the PEFT library.

With this mixin, if the correct PEFT version is installed, it is possible to:

- Load an adapter stored on a local path or in a remote Hub repository, and inject it in the model

- Attach new adapters to the model and train them with Trainer or on your own.

- Attach multiple adapters and iteratively activate / deactivate them

- Activate / deactivate all adapters from the model.

- Get the state_dict of the active adapter.

load_adapter

( peft_model_id: typing.Optional[str] = None, adapter_name: typing.Optional[str] = None, revision: typing.Optional[str] = None, token: typing.Optional[str] = None, device_map: typing.Optional[str] = 'auto', max_memory: typing.Optional[str] = None, offload_folder: typing.Optional[str] = None, offload_index: typing.Optional[int] = None, peft_config: typing.Dict[str, typing.Any] = None, adapter_state_dict: typing.Optional[typing.Dict[str, torch.Tensor]] = None, low_cpu_mem_usage: bool = False, is_trainable: bool = False, adapter_kwargs: typing.Optional[typing.Dict[str, typing.Any]] = None )

Parameters

- peft_model_id (str, optional) — The identifier of the model to look for on the Hub, or a local path to the saved adapter config file and adapter weights.

- adapter_name (str, optional) — The adapter name to use. If not set, will use the default adapter.

- revision (str, optional, defaults to "main") — The specific model version to use. It can be a branch name, a tag name, or a commit id, since we use a git-based system for storing models and other artifacts on huggingface.co, so revision can be any identifier allowed by git. To test a pull request you made on the Hub, you can pass revision="refs/pr/<pr_number>".

- token (str, optional) — The authentication token to use when loading the remote folder. Useful for loading private repositories on the Hugging Face Hub. You might need to call huggingface-cli login and paste your token to cache it.

- device_map (str or Dict[str, Union[int, str, torch.device]] or int or torch.device, optional) — A map that specifies where each submodule should go. It doesn’t need to be refined to each parameter/buffer name; once a given module name is inside, every submodule of it will be sent to the same device. If we only pass the device (e.g., "cpu", "cuda:1", "mps", or a GPU ordinal rank like 1) on which the model will be allocated, the device map will map the entire model to this device. Passing device_map=0 means putting the whole model on GPU 0. To have Accelerate compute the most optimized device_map automatically, set device_map="auto". For more information about each option, see designing a device map.

- max_memory (Dict, optional) — A dictionary mapping device identifiers to maximum memory. Will default to the maximum memory available for each GPU and the available CPU RAM if unset.

- offload_folder (str or os.PathLike, optional) — If the device_map contains any value "disk", the folder where we will offload weights.

- offload_index (int, optional) — offload_index argument to be passed to the accelerate.dispatch_model method.

- peft_config (Dict[str, Any], optional) — The configuration of the adapter to add; supported adapters are non-prefix tuning and adaption prompts methods. This argument is used in case users directly pass PEFT state dicts.

- adapter_state_dict (Dict[str, torch.Tensor], optional) — The state dict of the adapter to load. This argument is used in case users directly pass PEFT state dicts.

- low_cpu_mem_usage (bool, optional, defaults to False) — Reduce memory usage while loading the PEFT adapter. This should also speed up the loading process. Requires PEFT version 0.13.0 or higher.

- is_trainable (bool, optional, defaults to False) — Whether the adapter should be trainable or not. If False, the adapter will be frozen and can only be used for inference.

- adapter_kwargs (Dict[str, Any], optional) — Additional keyword arguments passed along to the from_pretrained method of the adapter config and the find_adapter_config_file method.

Load adapter weights from a file or a remote Hub folder. If you are not familiar with adapters and PEFT methods, we invite you to read more about them in the official PEFT documentation: https://huggingface.co/docs/peft

Requires peft as a backend to load the adapter weights.
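As a usage sketch for the parameters above, the hypothetical helper below loads several adapters onto one model, giving each its own `adapter_name`. It relies only on the `load_adapter(peft_model_id, adapter_name=...)` call documented here and forwards any extra keyword arguments (for example `revision` or `token`) unchanged.

```python
from typing import Dict, List


def load_named_adapters(model, adapters: Dict[str, str], **load_kwargs) -> List[str]:
    """Load several adapters onto `model`, one per name.

    `adapters` maps an adapter name to a Hub id or a local path.
    Extra keyword arguments are forwarded to `model.load_adapter`.
    """
    loaded = []
    for adapter_name, peft_model_id in adapters.items():
        model.load_adapter(peft_model_id, adapter_name=adapter_name, **load_kwargs)
        loaded.append(adapter_name)
    return loaded
```

For instance, `load_named_adapters(model, {"adapter_1": "ybelkada/opt-350m-lora"})` uses the repository id from the examples earlier on this page. Whether several adapters can coexist still depends on them being of the same type, as noted in the section on adding a new adapter.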

add_adapter

Adds a new adapter to the current model for training purposes. If no adapter name is passed, a default name is assigned to the adapter to follow the convention of the PEFT library (in PEFT, “default” is the default adapter name).

set_adapter

( adapter_name: typing.Union[typing.List[str], str] )

Sets a specific adapter by forcing the model to use that adapter and disabling the other adapters.
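A common use of this method is comparing the outputs of several attached adapters on the same prompt. The sketch below is a hypothetical helper that uses only calls appearing elsewhere on this page (`set_adapter`, `generate`, and the tokenizer's call and `decode` methods). It assumes the named adapters are already attached to the model.

```python
from typing import Dict, Iterable


def generate_per_adapter(model, tokenizer, prompt: str, adapter_names: Iterable[str]) -> Dict[str, str]:
    """Activate each adapter in turn and decode one generation per adapter."""
    inputs = tokenizer(prompt, return_tensors="pt")
    outputs = {}
    for name in adapter_names:
        model.set_adapter(name)  # only `name` is active from here on
        generated = model.generate(**inputs)
        outputs[name] = tokenizer.decode(generated[0], skip_special_tokens=True)
    return outputs
```

Note that the last adapter in `adapter_names` stays active after the function returns, so call `set_adapter` again if a different one is needed afterwards.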

disable_adapters

Disables all adapters that are attached to the model, so that inference runs with the base model only.

enable_adapters

Enables the adapters that are attached to the model.

active_adapters

Gets the current active adapters of the model. In case of multi-adapter inference (combining multiple adapters for inference), returns the list of all active adapters so that users can deal with them accordingly.

For previous PEFT versions (that do not support multi-adapter inference), module.active_adapter will return a single string.
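Since the value can be either a list of names or a single string depending on the PEFT version, downstream code may want to normalize it first. The helper below is a minimal, hypothetical sketch of that normalization and does not call into either library.

```python
from typing import List, Union


def as_adapter_list(active: Union[str, List[str]]) -> List[str]:
    """Normalize a single adapter name or a list of names into a list."""
    if isinstance(active, str):
        return [active]
    return list(active)
```

Code that iterates over active adapters can then treat both cases the same way.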

get_adapter_state_dict

( adapter_name: typing.Optional[str] = None )

Gets the adapter state dict, which should only contain the weight tensors of the specified adapter_name adapter. If no adapter_name is passed, the active adapter is used.
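One simple use of the returned state dict is checking how large an adapter is. The sketch below is a hypothetical helper that assumes the values are tensors exposing `numel()`, as PyTorch tensors do.

```python
from typing import Dict, Tuple


def summarize_state_dict(state_dict: Dict[str, object]) -> Tuple[int, int]:
    """Return (number of tensors, total number of elements) in a state dict."""
    num_tensors = len(state_dict)
    num_elements = sum(tensor.numel() for tensor in state_dict.values())
    return num_tensors, num_elements
```

For example, `summarize_state_dict(model.get_adapter_state_dict())` reports the size of the active adapter, and passing a name to `get_adapter_state_dict` does the same for a specific adapter.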