Conditional DETR

Overview

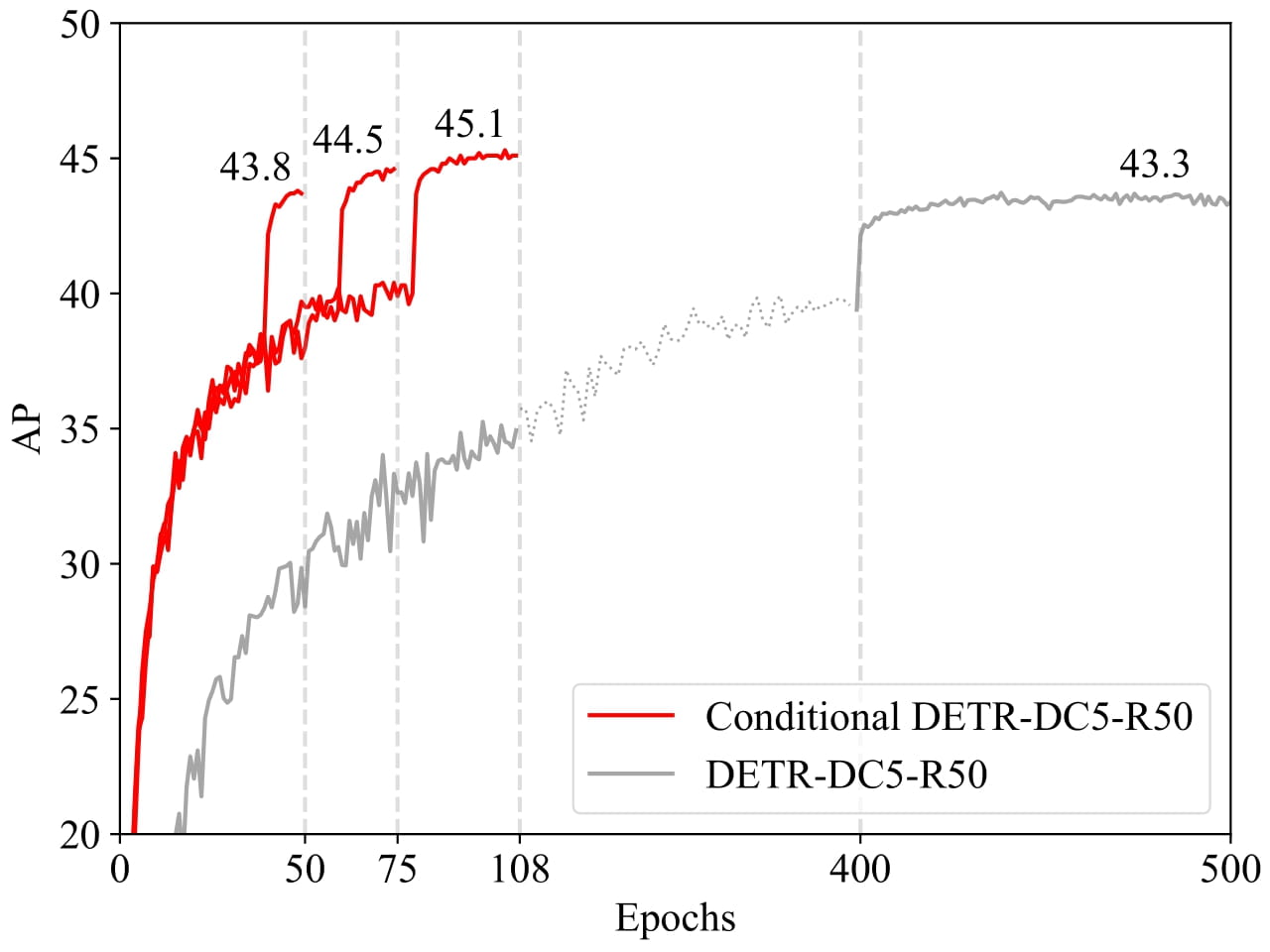

The Conditional DETR model was proposed in Conditional DETR for Fast Training Convergence by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang. Conditional DETR presents a conditional cross-attention mechanism for fast DETR training. Conditional DETR converges 6.7× to 10× faster than DETR.

The abstract from the paper is the following:

The recently-developed DETR approach applies the transformer encoder and decoder architecture to object detection and achieves promising performance. In this paper, we handle the critical issue, slow training convergence, and present a conditional cross-attention mechanism for fast DETR training. Our approach is motivated by that the cross-attention in DETR relies highly on the content embeddings for localizing the four extremities and predicting the box, which increases the need for high-quality content embeddings and thus the training difficulty. Our approach, named conditional DETR, learns a conditional spatial query from the decoder embedding for decoder multi-head cross-attention. The benefit is that through the conditional spatial query, each cross-attention head is able to attend to a band containing a distinct region, e.g., one object extremity or a region inside the object box. This narrows down the spatial range for localizing the distinct regions for object classification and box regression, thus relaxing the dependence on the content embeddings and easing the training. Empirical results show that conditional DETR converges 6.7× faster for the backbones R50 and R101 and 10× faster for stronger backbones DC5-R50 and DC5-R101. Code is available at https://github.com/Atten4Vis/ConditionalDETR.

Conditional DETR shows much faster convergence compared to the original DETR. Taken from the original paper.

This model was contributed by DepuMeng. The original code can be found at https://github.com/Atten4Vis/ConditionalDETR.

ConditionalDetrConfig

class transformers.ConditionalDetrConfig

( num_channels = 3, num_queries = 300, max_position_embeddings = 1024, encoder_layers = 6, encoder_ffn_dim = 2048, encoder_attention_heads = 8, decoder_layers = 6, decoder_ffn_dim = 2048, decoder_attention_heads = 8, encoder_layerdrop = 0.0, decoder_layerdrop = 0.0, is_encoder_decoder = True, activation_function = 'relu', d_model = 256, dropout = 0.1, attention_dropout = 0.0, activation_dropout = 0.0, init_std = 0.02, init_xavier_std = 1.0, classifier_dropout = 0.0, scale_embedding = False, auxiliary_loss = False, position_embedding_type = 'sine', backbone = 'resnet50', use_pretrained_backbone = True, dilation = False, class_cost = 2, bbox_cost = 5, giou_cost = 2, mask_loss_coefficient = 1, dice_loss_coefficient = 1, cls_loss_coefficient = 2, bbox_loss_coefficient = 5, giou_loss_coefficient = 2, focal_alpha = 0.25, **kwargs )

Parameters

- num_channels (int, optional, defaults to 3) — The number of input channels.
- num_queries (int, optional, defaults to 300) — Number of object queries, i.e. detection slots. This is the maximal number of objects ConditionalDetrModel can detect in a single image. For COCO, we recommend 100 queries.
- d_model (int, optional, defaults to 256) — Dimension of the layers.
- encoder_layers (int, optional, defaults to 6) — Number of encoder layers.
- decoder_layers (int, optional, defaults to 6) — Number of decoder layers.
- encoder_attention_heads (int, optional, defaults to 8) — Number of attention heads for each attention layer in the Transformer encoder.
- decoder_attention_heads (int, optional, defaults to 8) — Number of attention heads for each attention layer in the Transformer decoder.
- decoder_ffn_dim (int, optional, defaults to 2048) — Dimension of the "intermediate" (often named feed-forward) layer in the decoder.
- encoder_ffn_dim (int, optional, defaults to 2048) — Dimension of the "intermediate" (often named feed-forward) layer in the encoder.
- activation_function (str or function, optional, defaults to "relu") — The non-linear activation function (function or string) in the encoder and pooler. If string, "gelu", "relu", "silu" and "gelu_new" are supported.
- dropout (float, optional, defaults to 0.1) — The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.
- attention_dropout (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.
- activation_dropout (float, optional, defaults to 0.0) — The dropout ratio for activations inside the fully connected layer.
- init_std (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.
- init_xavier_std (float, optional, defaults to 1) — The scaling factor used for the Xavier initialization gain in the HM Attention map module.
- encoder_layerdrop (float, optional, defaults to 0.0) — The LayerDrop probability for the encoder. See the LayerDrop paper (https://arxiv.org/abs/1909.11556) for more details.
- decoder_layerdrop (float, optional, defaults to 0.0) — The LayerDrop probability for the decoder. See the LayerDrop paper (https://arxiv.org/abs/1909.11556) for more details.
- auxiliary_loss (bool, optional, defaults to False) — Whether auxiliary decoding losses (loss at each decoder layer) are to be used.
- position_embedding_type (str, optional, defaults to "sine") — Type of position embeddings to be used on top of the image features. One of "sine" or "learned".
- backbone (str, optional, defaults to "resnet50") — Name of convolutional backbone to use. Supports any convolutional backbone from the timm package. For a list of all available models, see this page.
- use_pretrained_backbone (bool, optional, defaults to True) — Whether to use pretrained weights for the backbone.
- dilation (bool, optional, defaults to False) — Whether to replace stride with dilation in the last convolutional block (DC5).
- class_cost (float, optional, defaults to 2) — Relative weight of the classification error in the Hungarian matching cost.
- bbox_cost (float, optional, defaults to 5) — Relative weight of the L1 error of the bounding box coordinates in the Hungarian matching cost.
- giou_cost (float, optional, defaults to 2) — Relative weight of the generalized IoU loss of the bounding box in the Hungarian matching cost.
- mask_loss_coefficient (float, optional, defaults to 1) — Relative weight of the Focal loss in the panoptic segmentation loss.
- dice_loss_coefficient (float, optional, defaults to 1) — Relative weight of the DICE/F-1 loss in the panoptic segmentation loss.
- bbox_loss_coefficient (float, optional, defaults to 5) — Relative weight of the L1 bounding box loss in the object detection loss.
- giou_loss_coefficient (float, optional, defaults to 2) — Relative weight of the generalized IoU loss in the object detection loss.
- eos_coefficient (float, optional, defaults to 0.1) — Relative classification weight of the 'no-object' class in the object detection loss.
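The three matching weights (class_cost, bbox_cost, giou_cost) combine into one cost per prediction/ground-truth pair, and the Hungarian matcher then picks the assignment with the lowest total. The following is a minimal sketch in plain Python, not the library's matcher. The helper names `giou` and `matching_cost` are hypothetical, boxes are assumed to be in (x0, y0, x1, y1) form for both terms, and the classification term is assumed to be the negative probability of the target class (the actual implementation may use a different classification cost).

```python
def box_area(box):
    # Area of an (x0, y0, x1, y1) box; degenerate boxes count as 0.
    x0, y0, x1, y1 = box
    return max(0.0, x1 - x0) * max(0.0, y1 - y0)


def giou(a, b):
    # Generalized IoU: IoU minus the fraction of the enclosing box not covered by the union.
    inter = box_area((max(a[0], b[0]), max(a[1], b[1]), min(a[2], b[2]), min(a[3], b[3])))
    union = box_area(a) + box_area(b) - inter
    hull = box_area((min(a[0], b[0]), min(a[1], b[1]), max(a[2], b[2]), max(a[3], b[3])))
    return inter / union - (hull - union) / hull


def matching_cost(prob_of_target_class, pred_box, target_box,
                  class_cost=2.0, bbox_cost=5.0, giou_cost=2.0):
    # Hypothetical helper: weighted sum of the three cost terms for one pair.
    l1 = sum(abs(p - t) for p, t in zip(pred_box, target_box))
    return (class_cost * -prob_of_target_class
            + bbox_cost * l1
            + giou_cost * -giou(pred_box, target_box))


# A confident prediction on the right box is cheaper than a shifted, unsure one.
good = matching_cost(0.9, (0.1, 0.1, 0.5, 0.5), (0.1, 0.1, 0.5, 0.5))
bad = matching_cost(0.2, (0.4, 0.4, 0.9, 0.9), (0.1, 0.1, 0.5, 0.5))
print(good < bad)  # True
```

Raising bbox_cost relative to class_cost makes the matcher favor geometric agreement over class confidence when pairing predictions with targets.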

This is the configuration class to store the configuration of a ConditionalDetrModel. It is used to instantiate a Conditional DETR model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the Conditional DETR microsoft/conditional-detr-resnet-50 architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.

Examples:

>>> from transformers import ConditionalDetrModel, ConditionalDetrConfig

>>> # Initializing a Conditional DETR microsoft/conditional-detr-resnet-50 style configuration
>>> configuration = ConditionalDetrConfig()

>>> # Initializing a model from the microsoft/conditional-detr-resnet-50 style configuration
>>> model = ConditionalDetrModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

ConditionalDetrFeatureExtractor

class transformers.ConditionalDetrFeatureExtractor

( format = 'coco_detection', do_resize = True, size = 800, max_size = 1333, do_normalize = True, image_mean = None, image_std = None, **kwargs )

Parameters

- format (str, optional, defaults to "coco_detection") — Data format of the annotations. One of "coco_detection" or "coco_panoptic".
- do_resize (bool, optional, defaults to True) — Whether to resize the input to a certain size.
- size (int, optional, defaults to 800) — Resize the input to the given size. Only has an effect if do_resize is set to True. If size is a sequence like (width, height), output size will be matched to this. If size is an int, the smaller edge of the image will be matched to this number, i.e., if height > width, then the image will be rescaled to (size * height / width, size).
- max_size (int, optional, defaults to 1333) — The largest size an image dimension can have (otherwise it's capped). Only has an effect if do_resize is set to True.
- do_normalize (bool, optional, defaults to True) — Whether or not to normalize the input with mean and standard deviation.
- image_mean (List[float], optional, defaults to [0.485, 0.456, 0.406]) — The sequence of means for each channel, to be used when normalizing images. Defaults to the ImageNet mean.
- image_std (List[float], optional, defaults to [0.229, 0.224, 0.225]) — The sequence of standard deviations for each channel, to be used when normalizing images. Defaults to the ImageNet std.
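The interaction between size and max_size can be sketched as follows. This is an illustrative reimplementation in plain Python under the assumption that size is an int; the function name `resized_shape` is hypothetical and is not part of the library.

```python
def resized_shape(height, width, size=800, max_size=1333):
    """Return the (height, width) after matching the shorter edge to `size`.

    If scaling the shorter edge to `size` would push the longer edge past
    `max_size`, the target for the shorter edge is reduced first.
    """
    short, long = min(height, width), max(height, width)
    if max_size is not None and long / short * size > max_size:
        size = int(round(max_size * short / long))
    if height <= width:
        return size, int(size * width / height)
    return int(size * height / width), size


print(resized_shape(480, 640))  # (800, 1066)
print(resized_shape(640, 480))  # (1066, 800)
```

In both calls the shorter edge becomes 800 and the aspect ratio is preserved, which matches the (size * height / width, size) rule described for height > width.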

Constructs a Conditional DETR feature extractor.

This feature extractor inherits from FeatureExtractionMixin which contains most of the main methods. Users should refer to this superclass for more information regarding those methods.

__call__

( images: typing.Union[PIL.Image.Image, numpy.ndarray, ForwardRef('torch.Tensor'), typing.List[PIL.Image.Image], typing.List[numpy.ndarray], typing.List[ForwardRef('torch.Tensor')]], annotations: typing.Union[typing.List[typing.Dict], typing.List[typing.List[typing.Dict]]] = None, return_segmentation_masks: typing.Optional[bool] = False, masks_path: typing.Optional[pathlib.Path] = None, pad_and_return_pixel_mask: typing.Optional[bool] = True, return_tensors: typing.Union[str, transformers.utils.generic.TensorType, NoneType] = None, **kwargs ) → BatchFeature

Parameters

- images (PIL.Image.Image, np.ndarray, torch.Tensor, List[PIL.Image.Image], List[np.ndarray], List[torch.Tensor]) — The image or batch of images to be prepared. Each image can be a PIL image, NumPy array or PyTorch tensor. In case of a NumPy array/PyTorch tensor, each image should be of shape (C, H, W), where C is the number of channels, and H and W are the image height and width.
- annotations (Dict, List[Dict], optional) — The corresponding annotations in COCO format.
  In case ConditionalDetrFeatureExtractor was initialized with format = "coco_detection", the annotations for each image should have the following format: {'image_id': int, 'annotations': [annotation]}, with the annotations being a list of COCO object annotations.
  In case ConditionalDetrFeatureExtractor was initialized with format = "coco_panoptic", the annotations for each image should have the following format: {'image_id': int, 'file_name': str, 'segments_info': [segment_info]}, with segments_info being a list of COCO panoptic annotations.
- return_segmentation_masks (bool, optional, defaults to False) — Whether to also include instance segmentation masks as part of the labels in case format = "coco_detection".
- masks_path (pathlib.Path, optional) — Path to the directory containing the PNG files that store the class-agnostic image segmentations. Only relevant in case ConditionalDetrFeatureExtractor was initialized with format = "coco_panoptic".
- pad_and_return_pixel_mask (bool, optional, defaults to True) — Whether or not to pad images up to the largest image in a batch and create a pixel mask. If left to the default, will return a pixel mask that is:
  - 1 for pixels that are real (i.e. not masked),
  - 0 for pixels that are padding (i.e. masked).
- return_tensors (str or TensorType, optional) — If set, will return tensors instead of NumPy arrays. If set to 'pt', return PyTorch torch.Tensor objects.

Returns

A BatchFeature with the following fields:

- pixel_values — Pixel values to be fed to a model.
- pixel_mask — Pixel mask to be fed to a model (when pad_and_return_pixel_mask=True or if "pixel_mask" is in self.model_input_names).
- labels — Optional labels to be fed to a model (when annotations are provided).

Main method to prepare for the model one or several image(s) and optional annotations. Images are by default padded up to the largest image in a batch, and a pixel mask is created that indicates which pixels are real/which are padding.

NumPy arrays and PyTorch tensors are converted to PIL images when resizing, so passing PIL images is the most efficient option.

pad_and_create_pixel_mask

( pixel_values_list: typing.List[ForwardRef('torch.Tensor')], return_tensors: typing.Union[str, transformers.utils.generic.TensorType, NoneType] = None ) → BatchFeature

Parameters

- pixel_values_list (List[torch.Tensor]) — List of images (pixel values) to be padded. Each image should be a tensor of shape (C, H, W).
- return_tensors (str or TensorType, optional) — If set, will return tensors instead of NumPy arrays. If set to 'pt', return PyTorch torch.Tensor objects.

Returns

A BatchFeature with the following fields:

- pixel_values — Pixel values to be fed to a model.
- pixel_mask — Pixel mask to be fed to a model (when pad_and_return_pixel_mask=True or if "pixel_mask" is in self.model_input_names).

Pad images up to the largest image in a batch and create a corresponding pixel_mask.
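To make the padding and mask semantics concrete, here is a small sketch in plain Python. It is a simplification, not the library's code: images are single-channel nested lists rather than (C, H, W) tensors, padding is assumed to go on the bottom and right, and the function name `pad_with_mask` is hypothetical.

```python
def pad_with_mask(images):
    """Zero-pad 2D images to a common size and build matching pixel masks.

    Mask values follow the convention above: 1 for real pixels, 0 for padding.
    """
    max_h = max(len(img) for img in images)
    max_w = max(len(img[0]) for img in images)
    padded, masks = [], []
    for img in images:
        h, w = len(img), len(img[0])
        padded.append([[img[r][c] if r < h and c < w else 0 for c in range(max_w)]
                       for r in range(max_h)])
        masks.append([[1 if r < h and c < w else 0 for c in range(max_w)]
                      for r in range(max_h)])
    return padded, masks


small = [[5, 5], [5, 5]]   # 2 x 2 image
wide = [[7, 7, 7]]         # 1 x 3 image
padded, masks = pad_with_mask([small, wide])
print(masks[0])  # [[1, 1, 0], [1, 1, 0]]
print(masks[1])  # [[1, 1, 1], [0, 0, 0]]
```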

post_process

( outputs, target_sizes ) → List[Dict]

Parameters

- outputs (ConditionalDetrObjectDetectionOutput) — Raw outputs of the model.
- target_sizes (torch.Tensor of shape (batch_size, 2)) — Tensor containing the size (h, w) of each image of the batch. For evaluation, this must be the original image size (before any data augmentation). For visualization, this should be the image size after data augmentation, but before padding.

Returns

List[Dict]

A list of dictionaries, each dictionary containing the scores, labels and boxes for an image in the batch as predicted by the model.

Converts the output of ConditionalDetrForObjectDetection into the format expected by the COCO API. Only supports PyTorch.
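The box part of this conversion can be illustrated in plain Python. The sketch below covers only the geometry: turning normalized (center_x, center_y, width, height) predictions into absolute (x0, y0, x1, y1) corners for a given target size. It does not reproduce how scores and labels are derived from the logits, and the helper names `center_to_corners` and `scale_box` are hypothetical.

```python
def center_to_corners(box):
    # (center_x, center_y, width, height) -> (x0, y0, x1, y1), still normalized.
    cx, cy, w, h = box
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)


def scale_box(box, target_size):
    # target_size is (height, width), the same order as a row of target_sizes.
    img_h, img_w = target_size
    x0, y0, x1, y1 = center_to_corners(box)
    return (x0 * img_w, y0 * img_h, x1 * img_w, y1 * img_h)


# A box covering the central quarter of a 480 x 640 (h x w) image.
print(scale_box((0.5, 0.5, 0.5, 0.5), (480, 640)))  # (160.0, 120.0, 480.0, 360.0)
```

Note that x coordinates are scaled by the width and y coordinates by the height, so passing target_sizes in (w, h) order would silently produce wrong boxes.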

post_process_segmentation

( outputs, target_sizes, threshold = 0.9, mask_threshold = 0.5 ) → List[Dict]

Parameters

- outputs (ConditionalDetrSegmentationOutput) — Raw outputs of the model.
- target_sizes (torch.Tensor of shape (batch_size, 2) or List[Tuple] of length batch_size) — Torch Tensor (or list) corresponding to the requested final size (h, w) of each prediction.
- threshold (float, optional, defaults to 0.9) — Threshold to use to filter out queries.
- mask_threshold (float, optional, defaults to 0.5) — Threshold to use when turning the predicted masks into binary values.

Returns

List[Dict]

A list of dictionaries, each dictionary containing the scores, labels, and masks for an image in the batch as predicted by the model.

Converts the output of ConditionalDetrForSegmentation into image segmentation predictions. Only supports PyTorch.
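The roles of threshold and mask_threshold can be sketched as two independent filters. The example below is a plain-Python illustration under stated assumptions: each query already has a single confidence score and a mask of per-pixel probabilities, and the function name `filter_and_binarize` is hypothetical. The library method additionally handles labels, resizing to target_sizes, and tensor inputs.

```python
def filter_and_binarize(scores, mask_probs, threshold=0.9, mask_threshold=0.5):
    """Keep queries scoring above `threshold` and binarize their masks."""
    kept = []
    for score, mask in zip(scores, mask_probs):
        if score > threshold:
            binary = [[1 if p > mask_threshold else 0 for p in row] for row in mask]
            kept.append((score, binary))
    return kept


scores = [0.95, 0.40]
mask_probs = [[[0.7, 0.2]], [[0.9, 0.9]]]  # one 1 x 2 mask per query
print(filter_and_binarize(scores, mask_probs))  # [(0.95, [[1, 0]])]
```

The second query is dropped by threshold even though its mask probabilities are high, which shows that the two thresholds act at different levels: one per query, one per pixel.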

post_process_panoptic

( outputs, processed_sizes, target_sizes = None, is_thing_map = None, threshold = 0.85 ) → List[Dict]

Parameters

- outputs (ConditionalDetrSegmentationOutput) — Raw outputs of the model.
- processed_sizes (torch.Tensor of shape (batch_size, 2) or List[Tuple] of length batch_size) — Torch Tensor (or list) containing the size (h, w) of each image of the batch, i.e. the size after data augmentation but before batching.
- target_sizes (torch.Tensor of shape (batch_size, 2) or List[Tuple] of length batch_size, optional) — Torch Tensor (or list) corresponding to the requested final size (h, w) of each prediction. If left to None, it will default to the processed_sizes.
- is_thing_map (Dict[int, bool], optional) — Dictionary mapping class indices to either True or False, depending on whether or not they are a thing. If not set, defaults to the is_thing_map of COCO panoptic.
- threshold (float, optional, defaults to 0.85) — Threshold to use to filter out queries.

Returns

List[Dict]

A list of dictionaries, each dictionary containing a PNG string and segments_info values for an image in the batch as predicted by the model.

Converts the output of ConditionalDetrForSegmentation into actual panoptic predictions. Only supports PyTorch.

ConditionalDetrModel

class transformers.ConditionalDetrModel

( config: ConditionalDetrConfig )

Parameters

- config (ConditionalDetrConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare Conditional DETR Model (consisting of a backbone and encoder-decoder Transformer) outputting raw hidden-states without any specific head on top.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values, pixel_mask = None, decoder_attention_mask = None, encoder_outputs = None, inputs_embeds = None, decoder_inputs_embeds = None, output_attentions = None, output_hidden_states = None, return_dict = None ) → transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using ConditionalDetrFeatureExtractor. See ConditionalDetrFeatureExtractor.__call__() for details.
- pixel_mask (torch.LongTensor of shape (batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in [0, 1]:
  - 1 for pixels that are real (i.e. not masked),
  - 0 for pixels that are padding (i.e. masked).
- decoder_attention_mask (torch.LongTensor of shape (batch_size, num_queries), optional) — Not used by default. Can be used to mask object queries.
- encoder_outputs (tuple(tuple(torch.FloatTensor)), optional) — Tuple consisting of (last_hidden_state, optional: hidden_states, optional: attentions). last_hidden_state, of shape (batch_size, sequence_length, hidden_size), is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder.
- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing the flattened feature map (output of the backbone + projection layer), you can choose to directly pass a flattened representation of an image.
- decoder_inputs_embeds (torch.FloatTensor of shape (batch_size, num_queries, hidden_size), optional) — Optionally, instead of initializing the queries with a tensor of zeros, you can choose to directly pass an embedded representation.
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrModelOutput or tuple(torch.FloatTensor)

A transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ConditionalDetrConfig) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the decoder of the model.
- decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the decoder at the output of each layer plus the initial embedding outputs.
- decoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder's cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.
- encoder_last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.
- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the encoder at the output of each layer plus the initial embedding outputs.
- encoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the encoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
- intermediate_hidden_states (torch.FloatTensor of shape (config.decoder_layers, batch_size, sequence_length, hidden_size), optional, returned when config.auxiliary_loss=True) — Intermediate decoder activations, i.e. the output of each decoder layer, each of them gone through a layernorm.

The ConditionalDetrModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from transformers import AutoFeatureExtractor, AutoModel
>>> from PIL import Image
>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = AutoFeatureExtractor.from_pretrained("microsoft/conditional-detr-resnet-50")
>>> model = AutoModel.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> # prepare image for the model
>>> inputs = feature_extractor(images=image, return_tensors="pt")

>>> # forward pass
>>> outputs = model(**inputs)

>>> # the last hidden states are the final query embeddings of the Transformer decoder
>>> # these are of shape (batch_size, num_queries, hidden_size)
>>> last_hidden_states = outputs.last_hidden_state
>>> list(last_hidden_states.shape)
[1, 300, 256]

ConditionalDetrForObjectDetection

class transformers.ConditionalDetrForObjectDetection

( config: ConditionalDetrConfig )

Parameters

- config (ConditionalDetrConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

Conditional DETR Model (consisting of a backbone and encoder-decoder Transformer) with object detection heads on top, for tasks such as COCO detection.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values, pixel_mask = None, decoder_attention_mask = None, encoder_outputs = None, inputs_embeds = None, decoder_inputs_embeds = None, labels = None, output_attentions = None, output_hidden_states = None, return_dict = None ) → transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrObjectDetectionOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using ConditionalDetrFeatureExtractor. See ConditionalDetrFeatureExtractor.__call__() for details.
- pixel_mask (torch.LongTensor of shape (batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in [0, 1]:
  - 1 for pixels that are real (i.e. not masked),
  - 0 for pixels that are padding (i.e. masked).
- decoder_attention_mask (torch.LongTensor of shape (batch_size, num_queries), optional) — Not used by default. Can be used to mask object queries.
- encoder_outputs (tuple(tuple(torch.FloatTensor)), optional) — Tuple consisting of (last_hidden_state, optional: hidden_states, optional: attentions). last_hidden_state, of shape (batch_size, sequence_length, hidden_size), is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder.
- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing the flattened feature map (output of the backbone + projection layer), you can choose to directly pass a flattened representation of an image.
- decoder_inputs_embeds (torch.FloatTensor of shape (batch_size, num_queries, hidden_size), optional) — Optionally, instead of initializing the queries with a tensor of zeros, you can choose to directly pass an embedded representation.
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.
- labels (List[Dict] of len (batch_size,), optional) — Labels for computing the bipartite matching loss. List of dicts, each dictionary containing at least the following 2 keys: 'class_labels' and 'boxes' (the class labels and bounding boxes of an image in the batch respectively). The class labels themselves should be a torch.LongTensor of len (number of bounding boxes in the image,) and the boxes a torch.FloatTensor of shape (number of bounding boxes in the image, 4).

Returns

transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrObjectDetectionOutput or tuple(torch.FloatTensor)

A transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrObjectDetectionOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ConditionalDetrConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels are provided) — Total loss as a linear combination of a negative log-likelihood (cross-entropy) for class prediction and a bounding box loss. The latter is defined as a linear combination of the L1 loss and the generalized scale-invariant IoU loss.
- loss_dict (Dict, optional) — A dictionary containing the individual losses. Useful for logging.
- logits (torch.FloatTensor of shape (batch_size, num_queries, num_classes + 1)) — Classification logits (including no-object) for all queries.
- pred_boxes (torch.FloatTensor of shape (batch_size, num_queries, 4)) — Normalized box coordinates for all queries, represented as (center_x, center_y, width, height). These values are normalized in [0, 1], relative to the size of each individual image in the batch (disregarding possible padding). You can use ConditionalDetrFeatureExtractor.post_process_object_detection to retrieve the unnormalized bounding boxes.
- auxiliary_outputs (list[Dict], optional) — Optional, only returned when auxiliary losses are activated (i.e. config.auxiliary_loss is set to True) and labels are provided. It is a list of dictionaries containing the two above keys (logits and pred_boxes) for each decoder layer.
- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the decoder of the model.
- decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the decoder at the output of each layer plus the initial embedding outputs.
- decoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder's cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.
- encoder_last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.
- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the encoder at the output of each layer plus the initial embedding outputs.
- encoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the encoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
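The "linear combination" behind the total loss can be sketched with plain Python numbers. The weights below are the configuration defaults (cls_loss_coefficient, bbox_loss_coefficient, giou_loss_coefficient). The loss values are made up for illustration, and the key names "loss_ce", "loss_bbox" and "loss_giou" are an assumption about how the entries of loss_dict are named, so check the keys of the dictionary your model returns.

```python
# Illustrative per-term losses; real values come from outputs.loss_dict.
loss_dict = {"loss_ce": 0.5, "loss_bbox": 0.1, "loss_giou": 0.3}

# Default coefficients from ConditionalDetrConfig (key names are assumed).
weight_dict = {"loss_ce": 2, "loss_bbox": 5, "loss_giou": 2}

# Weighted sum over the terms that have a weight; other entries are log-only.
total_loss = sum(loss_dict[k] * weight_dict[k] for k in loss_dict if k in weight_dict)
print(round(total_loss, 4))  # 2.1
```

Entries of loss_dict without a matching weight are skipped in the sum, which is why the dictionary is described as useful for logging rather than as the quantity being optimized.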

The ConditionalDetrForObjectDetection forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this function, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.
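The difference between calling the module instance and calling forward directly can be seen with a small stand-in module. The sketch below is not Conditional DETR itself: `TinyModel` and `record_call` are hypothetical names, and it assumes that the pre- and post-processing steps mentioned above correspond to PyTorch's registered forward hooks.

```python
import torch
from torch import nn


class TinyModel(nn.Module):
    """Hypothetical stand-in for a model; only here to illustrate hooks."""

    def __init__(self):
        super().__init__()
        self.linear = nn.Linear(4, 2)

    def forward(self, x):
        return self.linear(x)


model = TinyModel()
hook_calls = []


def record_call(module, inputs, output):
    # Forward hook: runs after forward() when the module instance is called.
    hook_calls.append(tuple(output.shape))


handle = model.register_forward_hook(record_call)
x = torch.zeros(3, 4)

model(x)          # goes through __call__, so the hook fires
model.forward(x)  # bypasses __call__, so the hook is skipped

print(hook_calls)  # [(3, 2)]
handle.remove()
```

Only the first call is recorded, which is why the documentation recommends `model(**inputs)` over `model.forward(**inputs)`.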

Examples:

>>> from transformers import AutoFeatureExtractor, AutoModelForObjectDetection
>>> from PIL import Image
>>> import requests
>>> import torch

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = AutoFeatureExtractor.from_pretrained("microsoft/conditional-detr-resnet-50")
>>> model = AutoModelForObjectDetection.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> inputs = feature_extractor(images=image, return_tensors="pt")
>>> outputs = model(**inputs)

>>> # convert outputs (bounding boxes and class logits) to COCO API format
>>> # target_sizes expects (height, width), while PIL's image.size is (width, height)
>>> target_sizes = torch.tensor([image.size[::-1]])
>>> results = feature_extractor.post_process(outputs, target_sizes=target_sizes)[0]

>>> for score, label, box in zip(results["scores"], results["labels"], results["boxes"]):
...     box = [round(i, 2) for i in box.tolist()]
...     # let's only keep detections with score > 0.5
...     if score > 0.5:
...         print(
...             f"Detected {model.config.id2label[label.item()]} with confidence "
...             f"{round(score.item(), 3)} at location {box}"
...         )
Detected remote with confidence 0.833 at location [38.31, 72.1, 177.63, 118.45]
Detected cat with confidence 0.831 at location [9.2, 51.38, 321.13, 469.0]
Detected cat with confidence 0.804 at location [340.3, 16.85, 642.93, 370.95]
Detected remote with confidence 0.683 at location [334.48, 73.49, 366.37, 190.01]
Detected couch with confidence 0.535 at location [0.52, 1.19, 640.35, 475.1]

ConditionalDetrForSegmentation

class transformers.ConditionalDetrForSegmentation

( config: ConditionalDetrConfig )

Parameters

- config (ConditionalDetrConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

Conditional DETR model (consisting of a backbone and an encoder-decoder Transformer) with a segmentation head on top, for tasks such as COCO panoptic segmentation.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads, etc.).

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values, pixel_mask = None, decoder_attention_mask = None, encoder_outputs = None, inputs_embeds = None, decoder_inputs_embeds = None, labels = None, output_attentions = None, output_hidden_states = None, return_dict = None ) → transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrSegmentationOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using ConditionalDetrFeatureExtractor. See ConditionalDetrFeatureExtractor.__call__() for details.

- pixel_mask (torch.LongTensor of shape (batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in [0, 1]:
  - 1 for pixels that are real (i.e. not masked),
  - 0 for pixels that are padding (i.e. masked).

- decoder_attention_mask (torch.LongTensor of shape (batch_size, num_queries), optional) — Not used by default. Can be used to mask object queries.

- encoder_outputs (tuple(tuple(torch.FloatTensor)), optional) — Tuple consisting of (last_hidden_state, optional: hidden_states, optional: attentions). last_hidden_state, of shape (batch_size, sequence_length, hidden_size), is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing the flattened feature map (output of the backbone + projection layer), you can choose to directly pass a flattened representation of an image.

- decoder_inputs_embeds (torch.FloatTensor of shape (batch_size, num_queries, hidden_size), optional) — Optionally, instead of initializing the queries with a tensor of zeros, you can choose to directly pass an embedded representation.

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (List[Dict] of length batch_size, optional) — Labels for computing the bipartite matching loss, DICE/F-1 loss and Focal loss. List of dicts, each dictionary containing at least the following 3 keys: 'class_labels', 'boxes' and 'masks' (the class labels, bounding boxes and segmentation masks of an image in the batch respectively). The class labels themselves should be a torch.LongTensor of shape (number of bounding boxes in the image,), the boxes a torch.FloatTensor of shape (number of bounding boxes in the image, 4) and the masks a torch.FloatTensor of shape (number of bounding boxes in the image, height, width).
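The shapes described for pixel_mask and labels can be sketched with placeholder tensors. This is only an illustration of the expected structure: `make_label` is a hypothetical helper, the sizes and the class-id range of 91 are arbitrary assumptions, and the values are random rather than real annotations.

```python
import torch

batch_size, height, width = 2, 32, 48

# pixel_mask: 1 marks real pixels, 0 marks padding.
pixel_mask = torch.ones(batch_size, height, width, dtype=torch.long)
pixel_mask[1, :, 40:] = 0  # pretend the second image is padded on the right


def make_label(num_boxes):
    """Build one hypothetical per-image label dict with placeholder values."""
    return {
        # one class id per box, as a LongTensor of shape (num_boxes,)
        "class_labels": torch.randint(0, 91, (num_boxes,), dtype=torch.long),
        # one box per row, as a FloatTensor of shape (num_boxes, 4)
        "boxes": torch.rand(num_boxes, 4),
        # one mask per box, as a FloatTensor of shape (num_boxes, height, width)
        "masks": torch.zeros(num_boxes, height, width),
    }


# One dict per image in the batch; images may have different numbers of boxes.
labels = [make_label(3), make_label(1)]

for label in labels:
    print({key: tuple(value.shape) for key, value in label.items()})
```

A list built this way has the structure that the labels argument describes, with one entry per image in the batch.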

Returns

transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrSegmentationOutput or tuple(torch.FloatTensor)

A transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrSegmentationOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ConditionalDetrConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels are provided) — Total loss as a linear combination of a negative log-likelihood (cross-entropy) for class prediction and a bounding box loss. The latter is defined as a linear combination of the L1 loss and the generalized scale-invariant IoU loss.

- loss_dict (Dict, optional) — A dictionary containing the individual losses. Useful for logging.

- logits (torch.FloatTensor of shape (batch_size, num_queries, num_classes + 1)) — Classification logits (including no-object) for all queries.

- pred_boxes (torch.FloatTensor of shape (batch_size, num_queries, 4)) — Normalized box coordinates for all queries, represented as (center_x, center_y, width, height). These values are normalized in [0, 1], relative to the size of each individual image in the batch (disregarding possible padding). You can use ConditionalDetrFeatureExtractor.post_process_object_detection to retrieve the unnormalized bounding boxes.

- pred_masks (torch.FloatTensor of shape (batch_size, num_queries, height/4, width/4)) — Segmentation mask logits for all queries. See also ConditionalDetrFeatureExtractor.post_process_semantic_segmentation, ConditionalDetrFeatureExtractor.post_process_instance_segmentation or ConditionalDetrFeatureExtractor.post_process_panoptic_segmentation to evaluate semantic, instance and panoptic segmentation masks respectively.

- auxiliary_outputs (list[Dict], optional) — Optional, only returned when auxiliary losses are activated (i.e. config.auxiliary_loss is set to True) and labels are provided. It is a list of dictionaries containing the two above keys (logits and pred_boxes) for each decoder layer.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the decoder of the model.

- decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the decoder at the output of each layer plus the initial embedding outputs.

- decoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder, after the attention softmax, used to compute the weighted average in the self-attention heads.

- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder's cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.

- encoder_last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.

- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the encoder at the output of each layer plus the initial embedding outputs.

- encoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the encoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
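The pred_boxes format described above, normalized (center_x, center_y, width, height), can be turned into absolute corner coordinates with a little arithmetic. The sketch below uses plain Python on a single box; `center_to_corners` is a hypothetical helper written for illustration and is not the library's post-processing method.

```python
def center_to_corners(box, image_width, image_height):
    """Convert one normalized (center_x, center_y, width, height) box to
    absolute (x_min, y_min, x_max, y_max) pixel coordinates."""
    cx, cy, w, h = box
    x_min = (cx - w / 2) * image_width
    y_min = (cy - h / 2) * image_height
    x_max = (cx + w / 2) * image_width
    y_max = (cy + h / 2) * image_height
    return (x_min, y_min, x_max, y_max)


# A box centered in a 640x480 image, covering half of each dimension.
corners = center_to_corners((0.5, 0.5, 0.5, 0.5), image_width=640, image_height=480)
print(corners)  # (160.0, 120.0, 480.0, 360.0)
```

In practice the feature extractor's post-processing methods handle this conversion for a whole batch, so the helper is only meant to make the coordinate convention concrete.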

The ConditionalDetrForSegmentation forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this function, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> import io
>>> import requests
>>> from PIL import Image
>>> import torch
>>> import numpy

>>> from transformers import (
...     AutoFeatureExtractor,
...     ConditionalDetrConfig,
...     ConditionalDetrForSegmentation,
... )
>>> from transformers.models.conditional_detr.feature_extraction_conditional_detr import rgb_to_id

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> feature_extractor = AutoFeatureExtractor.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> # randomly initialize all weights of the model
>>> config = ConditionalDetrConfig()
>>> model = ConditionalDetrForSegmentation(config)

>>> # prepare image for the model
>>> inputs = feature_extractor(images=image, return_tensors="pt")

>>> # forward pass
>>> outputs = model(**inputs)

>>> # use the `post_process_panoptic` method of `ConditionalDetrFeatureExtractor` to convert to COCO format
>>> processed_sizes = torch.as_tensor(inputs["pixel_values"].shape[-2:]).unsqueeze(0)
>>> result = feature_extractor.post_process_panoptic(outputs, processed_sizes)[0]

>>> # the segmentation is stored in a special-format png
>>> panoptic_seg = Image.open(io.BytesIO(result["png_string"]))
>>> panoptic_seg = numpy.array(panoptic_seg, dtype=numpy.uint8)

>>> # retrieve the ids corresponding to each mask
>>> panoptic_seg_id = rgb_to_id(panoptic_seg)
>>> panoptic_seg_id.shape
(800, 1066)
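The last step of the example maps each RGB pixel of the special-format PNG to an integer segment id. A minimal sketch of that mapping on a single color is shown below. It assumes the COCO panoptic encoding (id = R + 256 * G + 256² * B); `rgb_to_id_sketch` and `id_to_rgb_sketch` are hypothetical names, and the library's `rgb_to_id` works on whole arrays and may differ in details.

```python
def rgb_to_id_sketch(color):
    """Map one (R, G, B) triple to an integer id: R + 256 * G + 256**2 * B."""
    r, g, b = color
    return r + 256 * g + 256 * 256 * b


def id_to_rgb_sketch(segment_id):
    """Inverse mapping: recover the (R, G, B) triple from an integer id."""
    r = segment_id % 256
    g = (segment_id // 256) % 256
    b = segment_id // (256 * 256)
    return (r, g, b)


segment_id = rgb_to_id_sketch((1, 2, 3))
print(segment_id)                    # 197121
print(id_to_rgb_sketch(segment_id))  # (1, 2, 3)
```

Because three 8-bit channels are packed into one integer, the PNG can represent far more than 256 distinct segments.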