Diffusers documentation

Load community pipelines and components

Load community pipelines and components

Community pipelines

Take a look at GitHub Issue #841 for more context about why we’re adding community pipelines to help everyone easily share their work without being slowed down.

Community pipelines are any DiffusionPipeline class that are different from the original paper implementation (for example, the StableDiffusionControlNetPipeline corresponds to the Text-to-Image Generation with ControlNet Conditioning paper). They provide additional functionality or extend the original implementation of a pipeline.

There are many cool community pipelines like Marigold Depth Estimation or InstantID, and you can find all the official community pipelines here.

There are two types of community pipelines, those stored on the Hugging Face Hub and those stored on Diffusers GitHub repository. Hub pipelines are completely customizable (scheduler, models, pipeline code, etc.) while Diffusers GitHub pipelines are only limited to custom pipeline code.

| GitHub community pipeline | HF Hub community pipeline | |

|---|---|---|

| usage | same | same |

| review process | open a Pull Request on GitHub and undergo a review process from the Diffusers team before merging; may be slower | upload directly to a Hub repository without any review; this is the fastest workflow |

| visibility | included in the official Diffusers repository and documentation | included on your HF Hub profile and relies on your own usage/promotion to gain visibility |

To load a Hugging Face Hub community pipeline, pass the repository id of the community pipeline to the custom_pipeline argument and the model repository where you’d like to load the pipeline weights and components from. For example, the example below loads a dummy pipeline from hf-internal-testing/diffusers-dummy-pipeline and the pipeline weights and components from google/ddpm-cifar10-32:

By loading a community pipeline from the Hugging Face Hub, you are trusting that the code you are loading is safe. Make sure to inspect the code online before loading and running it automatically!

from diffusers import DiffusionPipeline

pipeline = DiffusionPipeline.from_pretrained(

"google/ddpm-cifar10-32", custom_pipeline="hf-internal-testing/diffusers-dummy-pipeline", use_safetensors=True

)Load from a local file

Community pipelines can also be loaded from a local file if you pass a file path instead. The path to the passed directory must contain a pipeline.py file that contains the pipeline class.

pipeline = DiffusionPipeline.from_pretrained(

"stable-diffusion-v1-5/stable-diffusion-v1-5",

custom_pipeline="./path/to/pipeline_directory/",

clip_model=clip_model,

feature_extractor=feature_extractor,

use_safetensors=True,

)Load from a specific version

By default, community pipelines are loaded from the latest stable version of Diffusers. To load a community pipeline from another version, use the custom_revision parameter.

For example, to load from the main branch:

pipeline = DiffusionPipeline.from_pretrained(

"stable-diffusion-v1-5/stable-diffusion-v1-5",

custom_pipeline="clip_guided_stable_diffusion",

custom_revision="main",

clip_model=clip_model,

feature_extractor=feature_extractor,

use_safetensors=True,

)Load with from_pipe

Community pipelines can also be loaded with the from_pipe() method which allows you to load and reuse multiple pipelines without any additional memory overhead (learn more in the Reuse a pipeline guide). The memory requirement is determined by the largest single pipeline loaded.

For example, let’s load a community pipeline that supports long prompts with weighting from a Stable Diffusion pipeline.

import torch

from diffusers import DiffusionPipeline

pipe_sd = DiffusionPipeline.from_pretrained("emilianJR/CyberRealistic_V3", torch_dtype=torch.float16)

pipe_sd.to("cuda")

# load long prompt weighting pipeline

pipe_lpw = DiffusionPipeline.from_pipe(

pipe_sd,

custom_pipeline="lpw_stable_diffusion",

).to("cuda")

prompt = "cat, hiding in the leaves, ((rain)), zazie rainyday, beautiful eyes, macro shot, colorful details, natural lighting, amazing composition, subsurface scattering, amazing textures, filmic, soft light, ultra-detailed eyes, intricate details, detailed texture, light source contrast, dramatic shadows, cinematic light, depth of field, film grain, noise, dark background, hyperrealistic dslr film still, dim volumetric cinematic lighting"

neg_prompt = "(deformed iris, deformed pupils, semi-realistic, cgi, 3d, render, sketch, cartoon, drawing, anime, mutated hands and fingers:1.4), (deformed, distorted, disfigured:1.3), poorly drawn, bad anatomy, wrong anatomy, extra limb, missing limb, floating limbs, disconnected limbs, mutation, mutated, ugly, disgusting, amputation"

generator = torch.Generator(device="cpu").manual_seed(20)

out_lpw = pipe_lpw(

prompt,

negative_prompt=neg_prompt,

width=512,

height=512,

max_embeddings_multiples=3,

num_inference_steps=50,

generator=generator,

).images[0]

out_lpw

Example community pipelines

Community pipelines are a really fun and creative way to extend the capabilities of the original pipeline with new and unique features. You can find all community pipelines in the diffusers/examples/community folder with inference and training examples for how to use them.

This section showcases a couple of the community pipelines and hopefully it’ll inspire you to create your own (feel free to open a PR for your community pipeline and ping us for a review)!

The from_pipe() method is particularly useful for loading community pipelines because many of them don’t have pretrained weights and add a feature on top of an existing pipeline like Stable Diffusion or Stable Diffusion XL. You can learn more about the from_pipe() method in the Load with from_pipe section.

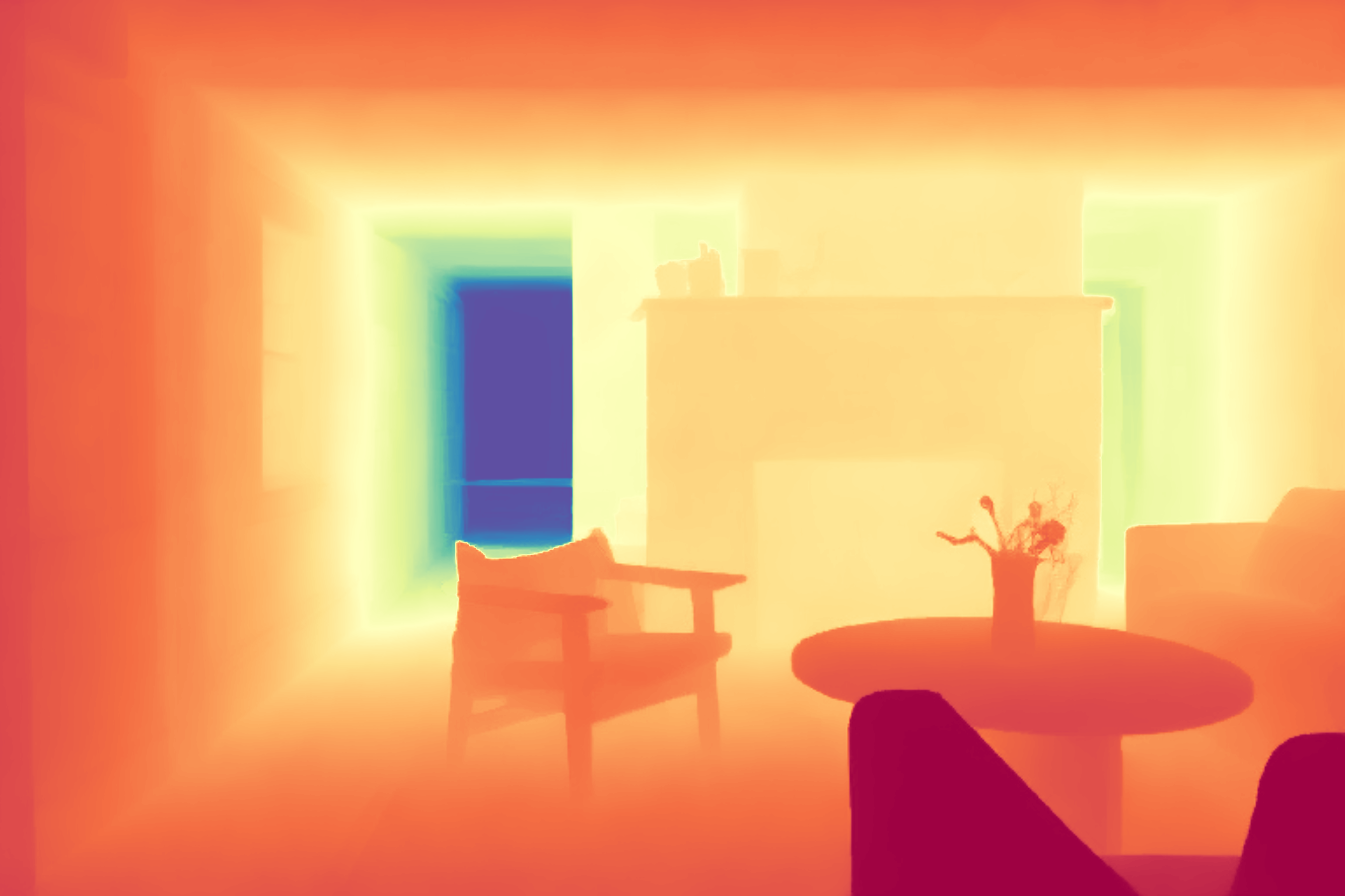

Marigold is a depth estimation diffusion pipeline that uses the rich existing and inherent visual knowledge in diffusion models. It takes an input image and denoises and decodes it into a depth map. Marigold performs well even on images it hasn’t seen before.

import torch

from PIL import Image

from diffusers import DiffusionPipeline

from diffusers.utils import load_image

pipeline = DiffusionPipeline.from_pretrained(

"prs-eth/marigold-lcm-v1-0",

custom_pipeline="marigold_depth_estimation",

torch_dtype=torch.float16,

variant="fp16",

)

pipeline.to("cuda")

image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/community-marigold.png")

output = pipeline(

image,

denoising_steps=4,

ensemble_size=5,

processing_res=768,

match_input_res=True,

batch_size=0,

seed=33,

color_map="Spectral",

show_progress_bar=True,

)

depth_colored: Image.Image = output.depth_colored

depth_colored.save("./depth_colored.png")

Community components

Community components allow users to build pipelines that may have customized components that are not a part of Diffusers. If your pipeline has custom components that Diffusers doesn’t already support, you need to provide their implementations as Python modules. These customized components could be a VAE, UNet, and scheduler. In most cases, the text encoder is imported from the Transformers library. The pipeline code itself can also be customized.

This section shows how users should use community components to build a community pipeline.

You’ll use the showlab/show-1-base pipeline checkpoint as an example.

- Import and load the text encoder from Transformers:

from transformers import T5Tokenizer, T5EncoderModel

pipe_id = "showlab/show-1-base"

tokenizer = T5Tokenizer.from_pretrained(pipe_id, subfolder="tokenizer")

text_encoder = T5EncoderModel.from_pretrained(pipe_id, subfolder="text_encoder")- Load a scheduler:

from diffusers import DPMSolverMultistepScheduler

scheduler = DPMSolverMultistepScheduler.from_pretrained(pipe_id, subfolder="scheduler")- Load an image processor:

from transformers import CLIPImageProcessor

feature_extractor = CLIPImageProcessor.from_pretrained(pipe_id, subfolder="feature_extractor")In steps 4 and 5, the custom UNet and pipeline implementation must match the format shown in their files for this example to work.

Now you’ll load a custom UNet, which in this example, has already been implemented in showone_unet_3d_condition.py for your convenience. You’ll notice the UNet3DConditionModel class name is changed to

ShowOneUNet3DConditionModelbecause UNet3DConditionModel already exists in Diffusers. Any components needed for theShowOneUNet3DConditionModelclass should be placed in showone_unet_3d_condition.py.Once this is done, you can initialize the UNet:

from showone_unet_3d_condition import ShowOneUNet3DConditionModel unet = ShowOneUNet3DConditionModel.from_pretrained(pipe_id, subfolder="unet")Finally, you’ll load the custom pipeline code. For this example, it has already been created for you in pipeline_t2v_base_pixel.py. This script contains a custom

TextToVideoIFPipelineclass for generating videos from text. Just like the custom UNet, any code needed for the custom pipeline to work should go in pipeline_t2v_base_pixel.py.

Once everything is in place, you can initialize the TextToVideoIFPipeline with the ShowOneUNet3DConditionModel:

from pipeline_t2v_base_pixel import TextToVideoIFPipeline

import torch

pipeline = TextToVideoIFPipeline(

unet=unet,

text_encoder=text_encoder,

tokenizer=tokenizer,

scheduler=scheduler,

feature_extractor=feature_extractor

)

pipeline = pipeline.to(device="cuda")

pipeline.torch_dtype = torch.float16Push the pipeline to the Hub to share with the community!

pipeline.push_to_hub("custom-t2v-pipeline")After the pipeline is successfully pushed, you need to make a few changes:

- Change the

_class_nameattribute in model_index.json to"pipeline_t2v_base_pixel"and"TextToVideoIFPipeline". - Upload

showone_unet_3d_condition.pyto the unet subfolder. - Upload

pipeline_t2v_base_pixel.pyto the pipeline repository.

To run inference, add the trust_remote_code argument while initializing the pipeline to handle all the “magic” behind the scenes.

As an additional precaution with trust_remote_code=True, we strongly encourage you to pass a commit hash to the revision parameter in from_pretrained() to make sure the code hasn’t been updated with some malicious new lines of code (unless you fully trust the model owners).

from diffusers import DiffusionPipeline

import torch

pipeline = DiffusionPipeline.from_pretrained(

"<change-username>/<change-id>", trust_remote_code=True, torch_dtype=torch.float16

).to("cuda")

prompt = "hello"

# Text embeds

prompt_embeds, negative_embeds = pipeline.encode_prompt(prompt)

# Keyframes generation (8x64x40, 2fps)

video_frames = pipeline(

prompt_embeds=prompt_embeds,

negative_prompt_embeds=negative_embeds,

num_frames=8,

height=40,

width=64,

num_inference_steps=2,

guidance_scale=9.0,

output_type="pt"

).framesAs an additional reference, take a look at the repository structure of stabilityai/japanese-stable-diffusion-xl which also uses the trust_remote_code feature.

from diffusers import DiffusionPipeline

import torch

pipeline = DiffusionPipeline.from_pretrained(

"stabilityai/japanese-stable-diffusion-xl", trust_remote_code=True

)

pipeline.to("cuda")