url

stringlengths 58

61

| repository_url

stringclasses 1

value | labels_url

stringlengths 72

75

| comments_url

stringlengths 67

70

| events_url

stringlengths 65

68

| html_url

stringlengths 46

51

| id

int64 599M

1.5B

| node_id

stringlengths 18

32

| number

int64 1

5.38k

| title

stringlengths 1

276

| user

dict | labels

list | state

stringclasses 2

values | locked

bool 1

class | assignee

dict | assignees

list | milestone

dict | comments

sequence | created_at

stringlengths 20

20

| updated_at

stringlengths 20

20

| closed_at

stringlengths 20

20

⌀ | author_association

stringclasses 3

values | active_lock_reason

null | draft

bool 2

classes | pull_request

dict | body

stringlengths 0

228k

⌀ | reactions

dict | timeline_url

stringlengths 67

70

| performed_via_github_app

null | state_reason

stringclasses 3

values | is_pull_request

bool 1

class |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5379 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5379/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5379/comments | https://api.github.com/repos/huggingface/datasets/issues/5379/events | https://github.com/huggingface/datasets/pull/5379 | 1,504,010,639 | PR_kwDODunzps5F1r2k | 5,379 | feat: depth estimation dataset guide. | {

"avatar_url": "https://avatars.githubusercontent.com/u/22957388?v=4",

"events_url": "https://api.github.com/users/sayakpaul/events{/privacy}",

"followers_url": "https://api.github.com/users/sayakpaul/followers",

"following_url": "https://api.github.com/users/sayakpaul/following{/other_user}",

"gists_url": "https://api.github.com/users/sayakpaul/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/sayakpaul",

"id": 22957388,

"login": "sayakpaul",

"node_id": "MDQ6VXNlcjIyOTU3Mzg4",

"organizations_url": "https://api.github.com/users/sayakpaul/orgs",

"received_events_url": "https://api.github.com/users/sayakpaul/received_events",

"repos_url": "https://api.github.com/users/sayakpaul/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/sayakpaul/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sayakpaul/subscriptions",

"type": "User",

"url": "https://api.github.com/users/sayakpaul"

} | [] | open | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/22957388?v=4",

"events_url": "https://api.github.com/users/sayakpaul/events{/privacy}",

"followers_url": "https://api.github.com/users/sayakpaul/followers",

"following_url": "https://api.github.com/users/sayakpaul/following{/other_user}",

"gists_url": "https://api.github.com/users/sayakpaul/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/sayakpaul",

"id": 22957388,

"login": "sayakpaul",

"node_id": "MDQ6VXNlcjIyOTU3Mzg4",

"organizations_url": "https://api.github.com/users/sayakpaul/orgs",

"received_events_url": "https://api.github.com/users/sayakpaul/received_events",

"repos_url": "https://api.github.com/users/sayakpaul/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/sayakpaul/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sayakpaul/subscriptions",

"type": "User",

"url": "https://api.github.com/users/sayakpaul"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/22957388?v=4",

"events_url": "https://api.github.com/users/sayakpaul/events{/privacy}",

"followers_url": "https://api.github.com/users/sayakpaul/followers",

"following_url": "https://api.github.com/users/sayakpaul/following{/other_user}",

"gists_url": "https://api.github.com/users/sayakpaul/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/sayakpaul",

"id": 22957388,

"login": "sayakpaul",

"node_id": "MDQ6VXNlcjIyOTU3Mzg4",

"organizations_url": "https://api.github.com/users/sayakpaul/orgs",

"received_events_url": "https://api.github.com/users/sayakpaul/received_events",

"repos_url": "https://api.github.com/users/sayakpaul/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/sayakpaul/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sayakpaul/subscriptions",

"type": "User",

"url": "https://api.github.com/users/sayakpaul"

}

] | null | [] | 2022-12-20T05:32:11Z | 2022-12-20T05:42:02Z | null | CONTRIBUTOR | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5379.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5379",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5379.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5379"

} | This PR adds a guide for prepping datasets for depth estimation.

PR to add documentation images is up here: https://huggingface.co/datasets/huggingface/documentation-images/discussions/22 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5379/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5379/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5378 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5378/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5378/comments | https://api.github.com/repos/huggingface/datasets/issues/5378/events | https://github.com/huggingface/datasets/issues/5378 | 1,503,887,508 | I_kwDODunzps5Zo4CU | 5,378 | The dataset "the_pile", subset "enron_emails" , load_dataset() failure | {

"avatar_url": "https://avatars.githubusercontent.com/u/52023469?v=4",

"events_url": "https://api.github.com/users/shaoyuta/events{/privacy}",

"followers_url": "https://api.github.com/users/shaoyuta/followers",

"following_url": "https://api.github.com/users/shaoyuta/following{/other_user}",

"gists_url": "https://api.github.com/users/shaoyuta/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/shaoyuta",

"id": 52023469,

"login": "shaoyuta",

"node_id": "MDQ6VXNlcjUyMDIzNDY5",

"organizations_url": "https://api.github.com/users/shaoyuta/orgs",

"received_events_url": "https://api.github.com/users/shaoyuta/received_events",

"repos_url": "https://api.github.com/users/shaoyuta/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/shaoyuta/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/shaoyuta/subscriptions",

"type": "User",

"url": "https://api.github.com/users/shaoyuta"

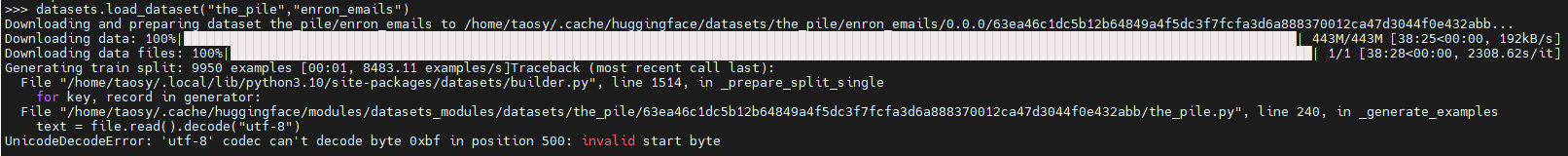

} | [] | closed | false | null | [] | null | [] | 2022-12-20T02:19:13Z | 2022-12-20T07:52:54Z | 2022-12-20T07:52:54Z | NONE | null | null | null | ### Describe the bug

When run

"datasets.load_dataset("the_pile","enron_emails")" failure

### Steps to reproduce the bug

Run below code in python cli:

>>> import datasets

>>> datasets.load_dataset("the_pile","enron_emails")

### Expected behavior

Load dataset "the_pile", "enron_emails" successfully.

### Environment info

Copy-and-paste the text below in your GitHub issue.

- `datasets` version: 2.7.1

- Platform: Linux-5.15.0-53-generic-x86_64-with-glibc2.35

- Python version: 3.10.6

- PyArrow version: 10.0.0

- Pandas version: 1.4.3

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5378/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5378/timeline | null | completed | true |

https://api.github.com/repos/huggingface/datasets/issues/5377 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5377/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5377/comments | https://api.github.com/repos/huggingface/datasets/issues/5377/events | https://github.com/huggingface/datasets/pull/5377 | 1,503,477,833 | PR_kwDODunzps5Fz5lw | 5,377 | Add a parallel implementation of to_tf_dataset() | {

"avatar_url": "https://avatars.githubusercontent.com/u/12866554?v=4",

"events_url": "https://api.github.com/users/Rocketknight1/events{/privacy}",

"followers_url": "https://api.github.com/users/Rocketknight1/followers",

"following_url": "https://api.github.com/users/Rocketknight1/following{/other_user}",

"gists_url": "https://api.github.com/users/Rocketknight1/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Rocketknight1",

"id": 12866554,

"login": "Rocketknight1",

"node_id": "MDQ6VXNlcjEyODY2NTU0",

"organizations_url": "https://api.github.com/users/Rocketknight1/orgs",

"received_events_url": "https://api.github.com/users/Rocketknight1/received_events",

"repos_url": "https://api.github.com/users/Rocketknight1/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Rocketknight1/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Rocketknight1/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Rocketknight1"

} | [] | open | false | null | [] | null | [] | 2022-12-19T19:40:27Z | 2022-12-19T19:45:06Z | null | MEMBER | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5377.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5377",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5377.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5377"

} | Hey all! Here's a first draft of the PR to add a multiprocessing implementation for `to_tf_dataset()`. It worked in some quick testing for me, but obviously I need to do some much more rigorous testing/benchmarking, and add some proper library tests.

The core idea is that we do everything using `multiprocessing` and `numpy`, and just wrap a `tf.data.Dataset` around the output. We could also rewrite the existing single-threaded implementation based on this code, which might simplify it a bit.

Checklist:

- [X] Add initial draft

- [x] Check that it works regardless of whether the `collate_fn` or dataset returns `tf` or `np` arrays

- [ ] Check that it works with `tf.string` return data

- [ ] Check indices are correctly reshuffled each epoch

- [ ] Check `fit()` with multiple epochs works fine and that the progress bar is correct

- [ ] Check there are no memory leaks or zombie processes

- [ ] Benchmark performance

- [ ] Tweak params for dataset inference - can we speed things up there a bit?

- [ ] Add tests to the library

- [ ] Add a PR to `transformers` to expose the `num_workers` argument via `prepare_tf_dataset` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5377/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5377/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5376 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5376/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5376/comments | https://api.github.com/repos/huggingface/datasets/issues/5376/events | https://github.com/huggingface/datasets/pull/5376 | 1,502,730,559 | PR_kwDODunzps5FxWkM | 5,376 | set dev version | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | closed | false | null | [] | null | [] | 2022-12-19T10:56:56Z | 2022-12-19T11:01:55Z | 2022-12-19T10:57:16Z | MEMBER | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5376.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5376",

"merged_at": "2022-12-19T10:57:16Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5376.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5376"

} | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5376/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5376/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5375 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5375/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5375/comments | https://api.github.com/repos/huggingface/datasets/issues/5375/events | https://github.com/huggingface/datasets/pull/5375 | 1,502,720,404 | PR_kwDODunzps5FxUbG | 5,375 | Release: 2.8.0 | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | closed | false | null | [] | null | [] | 2022-12-19T10:48:26Z | 2022-12-19T10:55:43Z | 2022-12-19T10:53:15Z | MEMBER | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5375.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5375",

"merged_at": "2022-12-19T10:53:15Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5375.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5375"

} | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5375/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5375/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5374 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5374/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5374/comments | https://api.github.com/repos/huggingface/datasets/issues/5374/events | https://github.com/huggingface/datasets/issues/5374 | 1,501,872,945 | I_kwDODunzps5ZhMMx | 5,374 | Using too many threads results in: Got disconnected from remote data host. Retrying in 5sec | {

"avatar_url": "https://avatars.githubusercontent.com/u/62820084?v=4",

"events_url": "https://api.github.com/users/Muennighoff/events{/privacy}",

"followers_url": "https://api.github.com/users/Muennighoff/followers",

"following_url": "https://api.github.com/users/Muennighoff/following{/other_user}",

"gists_url": "https://api.github.com/users/Muennighoff/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Muennighoff",

"id": 62820084,

"login": "Muennighoff",

"node_id": "MDQ6VXNlcjYyODIwMDg0",

"organizations_url": "https://api.github.com/users/Muennighoff/orgs",

"received_events_url": "https://api.github.com/users/Muennighoff/received_events",

"repos_url": "https://api.github.com/users/Muennighoff/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Muennighoff/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Muennighoff/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Muennighoff"

} | [] | open | false | null | [] | null | [] | 2022-12-18T11:38:58Z | 2022-12-19T16:33:31Z | null | CONTRIBUTOR | null | null | null | ### Describe the bug

`streaming_download_manager` seems to disconnect if too many runs access the same underlying dataset 🧐

The code works fine for me if I have ~100 runs in parallel, but disconnects once scaling to 200.

Possibly related:

- https://github.com/huggingface/datasets/pull/3100

- https://github.com/huggingface/datasets/pull/3050

### Steps to reproduce the bug

Running

```python

c4 = datasets.load_dataset("c4", "en", split="train", streaming=True).skip(args.start).take(args.end-args.start)

df = pd.DataFrame(c4, index=None)

```

with different start & end arguments on 200 CPUs in parallel yields:

```

WARNING:datasets.load:Using the latest cached version of the module from /users/muennighoff/.cache/huggingface/modules/datasets_modules/datasets/c4/df532b158939272d032cc63ef19cd5b83e9b4d00c922b833e4cb18b2e9869b01 (last modified on Mon Dec 12 10:45:02 2022) since it couldn't be found locally at c4.

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [1/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [2/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [3/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [4/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [5/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [6/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [7/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [8/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [9/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [10/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [11/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [12/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [13/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [14/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [15/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [16/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [17/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [18/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [19/20]

WARNING:datasets.download.streaming_download_manager:Got disconnected from remote data host. Retrying in 5sec [20/20]

╭───────────────────── Traceback (most recent call last) ──────────────────────╮

│ /pfs/lustrep4/scratch/project_462000119/muennighoff/dec-2022-tasky/inference │

│ _c4.py:68 in <module> │

│ │

│ 65 │ model.eval() │

│ 66 │ │

│ 67 │ c4 = datasets.load_dataset("c4", "en", split="train", streaming=Tru │

│ ❱ 68 │ df = pd.DataFrame(c4, index=None) │

│ 69 │ texts = df["text"].to_list() │

│ 70 │ preds = batch_inference(texts, batch_size=args.batch_size) │

│ 71 │

│ │

│ /opt/cray/pe/python/3.9.12.1/lib/python3.9/site-packages/pandas/core/frame.p │

│ y:684 in __init__ │

│ │

│ 681 │ │ # For data is list-like, or Iterable (will consume into list │

│ 682 │ │ elif is_list_like(data): │

│ 683 │ │ │ if not isinstance(data, (abc.Sequence, ExtensionArray)): │

│ ❱ 684 │ │ │ │ data = list(data) │

│ 685 │ │ │ if len(data) > 0: │

│ 686 │ │ │ │ if is_dataclass(data[0]): │

│ 687 │ │ │ │ │ data = dataclasses_to_dicts(data) │

│ │

│ /pfs/lustrep4/scratch/project_462000119/muennighoff/nov-2022-bettercom/venv/ │

│ lib/python3.9/site-packages/datasets/iterable_dataset.py:751 in __iter__ │

│ │

│ 748 │ │ yield from ex_iterable.shard_data_sources(shard_idx) │

│ 749 │ │

│ 750 │ def __iter__(self): │

│ ❱ 751 │ │ for key, example in self._iter(): │

│ 752 │ │ │ if self.features: │

│ 753 │ │ │ │ # `IterableDataset` automatically fills missing colum │

│ 754 │ │ │ │ # This is done with `_apply_feature_types`. │

│ │

│ /pfs/lustrep4/scratch/project_462000119/muennighoff/nov-2022-bettercom/venv/ │

│ lib/python3.9/site-packages/datasets/iterable_dataset.py:741 in _iter │

│ │

│ 738 │ │ │ ex_iterable = self._ex_iterable.shuffle_data_sources(self │

│ 739 │ │ else: │

│ 740 │ │ │ ex_iterable = self._ex_iterable │

│ ❱ 741 │ │ yield from ex_iterable │

│ 742 │ │

│ 743 │ def _iter_shard(self, shard_idx: int): │

│ 744 │ │ if self._shuffling: │

│ │

│ /pfs/lustrep4/scratch/project_462000119/muennighoff/nov-2022-bettercom/venv/ │

│ lib/python3.9/site-packages/datasets/iterable_dataset.py:617 in __iter__ │

│ │

│ 614 │ │ self.n = n │

│ 615 │ │

│ 616 │ def __iter__(self): │

│ ❱ 617 │ │ yield from islice(self.ex_iterable, self.n) │

│ 618 │ │

│ 619 │ def shuffle_data_sources(self, generator: np.random.Generator) -> │

│ 620 │ │ """Doesn't shuffle the wrapped examples iterable since it wou │

│ │

│ /pfs/lustrep4/scratch/project_462000119/muennighoff/nov-2022-bettercom/venv/ │

│ lib/python3.9/site-packages/datasets/iterable_dataset.py:594 in __iter__ │

│ │

│ 591 │ │

│ 592 │ def __iter__(self): │

│ 593 │ │ #ex_iterator = iter(self.ex_iterable) │

│ ❱ 594 │ │ yield from islice(self.ex_iterable, self.n, None) │

│ 595 │ │ #for _ in range(self.n): │

│ 596 │ │ # next(ex_iterator) │

│ 597 │ │ #yield from islice(ex_iterator, self.n, None) │

│ │

│ /pfs/lustrep4/scratch/project_462000119/muennighoff/nov-2022-bettercom/venv/ │

│ lib/python3.9/site-packages/datasets/iterable_dataset.py:106 in __iter__ │

│ │

│ 103 │ │ self.kwargs = kwargs │

│ 104 │ │

│ 105 │ def __iter__(self): │

│ ❱ 106 │ │ yield from self.generate_examples_fn(**self.kwargs) │

│ 107 │ │

│ 108 │ def shuffle_data_sources(self, generator: np.random.Generator) -> │

│ 109 │ │ return ShardShuffledExamplesIterable(self.generate_examples_f │

│ │

│ /users/muennighoff/.cache/huggingface/modules/datasets_modules/datasets/c4/d │

│ f532b158939272d032cc63ef19cd5b83e9b4d00c922b833e4cb18b2e9869b01/c4.py:89 in │

│ _generate_examples │

│ │

│ 86 │ │ for filepath in filepaths: │

│ 87 │ │ │ logger.info("generating examples from = %s", filepath) │

│ 88 │ │ │ with gzip.open(open(filepath, "rb"), "rt", encoding="utf-8" │

│ ❱ 89 │ │ │ │ for line in f: │

│ 90 │ │ │ │ │ if line: │

│ 91 │ │ │ │ │ │ example = json.loads(line) │

│ 92 │ │ │ │ │ │ yield id_, example │

│ │

│ /opt/cray/pe/python/3.9.12.1/lib/python3.9/gzip.py:313 in read1 │

│ │

│ 310 │ │ │

│ 311 │ │ if size < 0: │

│ 312 │ │ │ size = io.DEFAULT_BUFFER_SIZE │

│ ❱ 313 │ │ return self._buffer.read1(size) │

│ 314 │ │

│ 315 │ def peek(self, n): │

│ 316 │ │ self._check_not_closed() │

│ │

│ /opt/cray/pe/python/3.9.12.1/lib/python3.9/_compression.py:68 in readinto │

│ │

│ 65 │ │

│ 66 │ def readinto(self, b): │

│ 67 │ │ with memoryview(b) as view, view.cast("B") as byte_view: │

│ ❱ 68 │ │ │ data = self.read(len(byte_view)) │

│ 69 │ │ │ byte_view[:len(data)] = data │

│ 70 │ │ return len(data) │

│ 71 │

│ │

│ /opt/cray/pe/python/3.9.12.1/lib/python3.9/gzip.py:493 in read │

│ │

│ 490 │ │ │ │ self._new_member = False │

│ 491 │ │ │ │

│ 492 │ │ │ # Read a chunk of data from the file │

│ ❱ 493 │ │ │ buf = self._fp.read(io.DEFAULT_BUFFER_SIZE) │

│ 494 │ │ │ │

│ 495 │ │ │ uncompress = self._decompressor.decompress(buf, size) │

│ 496 │ │ │ if self._decompressor.unconsumed_tail != b"": │

│ │

│ /opt/cray/pe/python/3.9.12.1/lib/python3.9/gzip.py:96 in read │

│ │

│ 93 │ │ │ read = self._read │

│ 94 │ │ │ self._read = None │

│ 95 │ │ │ return self._buffer[read:] + \ │

│ ❱ 96 │ │ │ │ self.file.read(size-self._length+read) │

│ 97 │ │

│ 98 │ def prepend(self, prepend=b''): │

│ 99 │ │ if self._read is None: │

│ │

│ /pfs/lustrep4/scratch/project_462000119/muennighoff/nov-2022-bettercom/venv/ │

│ lib/python3.9/site-packages/datasets/download/streaming_download_manager.py: │

│ 365 in read_with_retries │

│ │

│ 362 │ │ │ │ ) │

│ 363 │ │ │ │ time.sleep(config.STREAMING_READ_RETRY_INTERVAL) │

│ 364 │ │ else: │

│ ❱ 365 │ │ │ raise ConnectionError("Server Disconnected") │

│ 366 │ │ return out │

│ 367 │ │

│ 368 │ file_obj.read = read_with_retries │

╰──────────────────────────────────────────────────────────────────────────────╯

ConnectionError: Server Disconnected

```

### Expected behavior

There should be no disconnect I think.

### Environment info

```

datasets=2.7.0

Python 3.9.12

``` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5374/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5374/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5373 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5373/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5373/comments | https://api.github.com/repos/huggingface/datasets/issues/5373/events | https://github.com/huggingface/datasets/pull/5373 | 1,501,484,197 | PR_kwDODunzps5FtRU4 | 5,373 | Simplify skipping | {

"avatar_url": "https://avatars.githubusercontent.com/u/62820084?v=4",

"events_url": "https://api.github.com/users/Muennighoff/events{/privacy}",

"followers_url": "https://api.github.com/users/Muennighoff/followers",

"following_url": "https://api.github.com/users/Muennighoff/following{/other_user}",

"gists_url": "https://api.github.com/users/Muennighoff/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Muennighoff",

"id": 62820084,

"login": "Muennighoff",

"node_id": "MDQ6VXNlcjYyODIwMDg0",

"organizations_url": "https://api.github.com/users/Muennighoff/orgs",

"received_events_url": "https://api.github.com/users/Muennighoff/received_events",

"repos_url": "https://api.github.com/users/Muennighoff/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Muennighoff/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Muennighoff/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Muennighoff"

} | [] | closed | false | null | [] | null | [] | 2022-12-17T17:23:52Z | 2022-12-18T21:43:31Z | 2022-12-18T21:40:21Z | CONTRIBUTOR | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5373.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5373",

"merged_at": "2022-12-18T21:40:21Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5373.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5373"

} | Was hoping to find a way to speed up the skipping as I'm running into bottlenecks skipping 100M examples on C4 (it takes 12 hours to skip), but didn't find anything better than this small change :(

Maybe there's a way to directly skip whole shards to speed it up? 🧐 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5373/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5373/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5372 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5372/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5372/comments | https://api.github.com/repos/huggingface/datasets/issues/5372/events | https://github.com/huggingface/datasets/pull/5372 | 1,501,377,802 | PR_kwDODunzps5Fs9w5 | 5,372 | Fix xpandas_read_excel | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

} | [] | open | false | null | [] | null | [] | 2022-12-17T12:58:52Z | 2022-12-19T09:33:03Z | null | MEMBER | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5372.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5372",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5372.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5372"

} | This PR fixes `xpandas_read_excel`:

- Support passing a path string, besides a file-like object

- Support passing `use_auth_token`

- First assumes the host server supports HTTP range requests; only if a ValueError is thrown (Cannot seek streaming HTTP file), then if preserves previous behavior (see [#3355](https://github.com/huggingface/datasets/pull/3355)).

Fix https://huggingface.co/datasets/bigbio/meqsum/discussions/1

Fix:

- https://github.com/bigscience-workshop/biomedical/issues/801

Related to:

- #3355 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5372/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5372/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5371 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5371/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5371/comments | https://api.github.com/repos/huggingface/datasets/issues/5371/events | https://github.com/huggingface/datasets/issues/5371 | 1,501,369,036 | I_kwDODunzps5ZfRLM | 5,371 | Add a robustness benchmark dataset for vision | {

"avatar_url": "https://avatars.githubusercontent.com/u/22957388?v=4",

"events_url": "https://api.github.com/users/sayakpaul/events{/privacy}",

"followers_url": "https://api.github.com/users/sayakpaul/followers",

"following_url": "https://api.github.com/users/sayakpaul/following{/other_user}",

"gists_url": "https://api.github.com/users/sayakpaul/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/sayakpaul",

"id": 22957388,

"login": "sayakpaul",

"node_id": "MDQ6VXNlcjIyOTU3Mzg4",

"organizations_url": "https://api.github.com/users/sayakpaul/orgs",

"received_events_url": "https://api.github.com/users/sayakpaul/received_events",

"repos_url": "https://api.github.com/users/sayakpaul/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/sayakpaul/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sayakpaul/subscriptions",

"type": "User",

"url": "https://api.github.com/users/sayakpaul"

} | [

{

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset",

"id": 2067376369,

"name": "dataset request",

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request"

}

] | open | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/22957388?v=4",

"events_url": "https://api.github.com/users/sayakpaul/events{/privacy}",

"followers_url": "https://api.github.com/users/sayakpaul/followers",

"following_url": "https://api.github.com/users/sayakpaul/following{/other_user}",

"gists_url": "https://api.github.com/users/sayakpaul/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/sayakpaul",

"id": 22957388,

"login": "sayakpaul",

"node_id": "MDQ6VXNlcjIyOTU3Mzg4",

"organizations_url": "https://api.github.com/users/sayakpaul/orgs",

"received_events_url": "https://api.github.com/users/sayakpaul/received_events",

"repos_url": "https://api.github.com/users/sayakpaul/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/sayakpaul/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sayakpaul/subscriptions",

"type": "User",

"url": "https://api.github.com/users/sayakpaul"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/22957388?v=4",

"events_url": "https://api.github.com/users/sayakpaul/events{/privacy}",

"followers_url": "https://api.github.com/users/sayakpaul/followers",

"following_url": "https://api.github.com/users/sayakpaul/following{/other_user}",

"gists_url": "https://api.github.com/users/sayakpaul/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/sayakpaul",

"id": 22957388,

"login": "sayakpaul",

"node_id": "MDQ6VXNlcjIyOTU3Mzg4",

"organizations_url": "https://api.github.com/users/sayakpaul/orgs",

"received_events_url": "https://api.github.com/users/sayakpaul/received_events",

"repos_url": "https://api.github.com/users/sayakpaul/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/sayakpaul/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sayakpaul/subscriptions",

"type": "User",

"url": "https://api.github.com/users/sayakpaul"

}

] | null | [] | 2022-12-17T12:35:13Z | 2022-12-20T06:21:41Z | null | CONTRIBUTOR | null | null | null | ### Name

ImageNet-C

### Paper

Benchmarking Neural Network Robustness to Common Corruptions and Perturbations

### Data

https://github.com/hendrycks/robustness

### Motivation

It's a known fact that vision models are brittle when they meet with slightly corrupted and perturbed data. This is also correlated to the robustness aspects of vision models.

Researchers use different benchmark datasets to evaluate the robustness aspects of vision models. ImageNet-C is one of them.

Having this dataset in 🤗 Datasets would allow researchers to evaluate and study the robustness aspects of vision models. Since the metric associated with these evaluations is top-1 accuracy, researchers should be able to easily take advantage of the evaluation benchmarks on the Hub and perform comprehensive reporting.

ImageNet-C is a large dataset. Once it's in, it can act as a reference and we can also reach out to the authors of the other robustness benchmark datasets in vision, such as ObjectNet, WILDS, Metashift, etc. These datasets cater to different aspects. For example, ObjectNet is related to assessing how well a model performs under sub-population shifts.

Related thread: https://huggingface.slack.com/archives/C036H4A5U8Z/p1669994598060499 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 2,

"total_count": 2,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5371/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5371/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5369 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5369/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5369/comments | https://api.github.com/repos/huggingface/datasets/issues/5369/events | https://github.com/huggingface/datasets/pull/5369 | 1,500,622,276 | PR_kwDODunzps5Fqaj- | 5,369 | Distributed support | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | open | false | null | [] | null | [] | 2022-12-16T17:43:47Z | 2022-12-16T18:21:30Z | null | MEMBER | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5369.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5369",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5369.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5369"

} | To split your dataset across your training nodes, you can use the new [`datasets.distributed.split_dataset_by_node`]:

```python

import os

from datasets.distributed import split_dataset_by_node

ds = split_dataset_by_node(ds, rank=int(os.environ["RANK"]), world_size=int(os.environ["WORLD_SIZE"]))

```

This works for both map-style datasets and iterable datasets.

The dataset is split for the node at rank `rank` in a pool of nodes of size `world_size`.

For map-style datasets:

Each node is assigned a chunk of data, e.g. rank 0 is given the first chunk of the dataset.

For iterable datasets:

If the dataset has a number of shards that is a factor of `world_size` (i.e. if `dataset.n_shards % world_size == 0`),

then the shards are evenly assigned across the nodes, which is the most optimized.

Otherwise, each node keeps 1 example out of `world_size`, skipping the other examples.

This can also be combined with a `torch.utils.data.DataLoader` if you want each node to use multiple workers to load the data.

This also supports shuffling. At each epoch, the iterable dataset shards are reshuffled across all the nodes - you just have to call `iterable_ds.set_epoch(epoch_number)`.

TODO:

- [x] docs for usage in PyTorch

- [x] unit tests

- [x] integration tests with torch.distributed.launch

Related to https://github.com/huggingface/transformers/issues/20770

Close https://github.com/huggingface/datasets/issues/5360 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5369/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5369/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5368 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5368/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5368/comments | https://api.github.com/repos/huggingface/datasets/issues/5368/events | https://github.com/huggingface/datasets/pull/5368 | 1,500,322,973 | PR_kwDODunzps5FpZyx | 5,368 | Align remove columns behavior and input dict mutation in `map` with previous behavior | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko"

} | [] | closed | false | null | [] | null | [] | 2022-12-16T14:28:47Z | 2022-12-16T16:28:08Z | 2022-12-16T16:25:12Z | CONTRIBUTOR | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5368.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5368",

"merged_at": "2022-12-16T16:25:12Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5368.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5368"

} | Align the `remove_columns` behavior and input dict mutation in `map` with the behavior before https://github.com/huggingface/datasets/pull/5252. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5368/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5368/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5367 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5367/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5367/comments | https://api.github.com/repos/huggingface/datasets/issues/5367/events | https://github.com/huggingface/datasets/pull/5367 | 1,499,174,749 | PR_kwDODunzps5FlevK | 5,367 | Fix remove columns from lazy dict | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | closed | false | null | [] | null | [] | 2022-12-15T22:04:12Z | 2022-12-15T22:27:53Z | 2022-12-15T22:24:50Z | MEMBER | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5367.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5367",

"merged_at": "2022-12-15T22:24:50Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5367.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5367"

} | This was introduced in https://github.com/huggingface/datasets/pull/5252 and causing the transformers CI to break: https://app.circleci.com/pipelines/github/huggingface/transformers/53886/workflows/522faf2e-a053-454c-94f8-a617fde33393/jobs/648597

Basically this code should return a dataset with only one column:

```python

from datasets import *

ds = Dataset.from_dict({"a": range(5)})

def f(x):

x["b"] = x["a"]

return x

ds = ds.map(f, remove_columns=["a"])

assert ds.column_names == ["b"]

``` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5367/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5367/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5366 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5366/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5366/comments | https://api.github.com/repos/huggingface/datasets/issues/5366/events | https://github.com/huggingface/datasets/pull/5366 | 1,498,530,851 | PR_kwDODunzps5FjSFl | 5,366 | ExamplesIterable fixes | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | closed | false | null | [] | null | [] | 2022-12-15T14:23:05Z | 2022-12-15T14:44:47Z | 2022-12-15T14:41:45Z | MEMBER | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5366.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5366",

"merged_at": "2022-12-15T14:41:45Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5366.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5366"

} | fix typing and ExamplesIterable.shard_data_sources | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5366/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5366/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5365 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5365/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5365/comments | https://api.github.com/repos/huggingface/datasets/issues/5365/events | https://github.com/huggingface/datasets/pull/5365 | 1,498,422,466 | PR_kwDODunzps5Fi6ZD | 5,365 | fix: image array should support other formats than uint8 | {

"avatar_url": "https://avatars.githubusercontent.com/u/30353?v=4",

"events_url": "https://api.github.com/users/vigsterkr/events{/privacy}",

"followers_url": "https://api.github.com/users/vigsterkr/followers",

"following_url": "https://api.github.com/users/vigsterkr/following{/other_user}",

"gists_url": "https://api.github.com/users/vigsterkr/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/vigsterkr",

"id": 30353,

"login": "vigsterkr",

"node_id": "MDQ6VXNlcjMwMzUz",

"organizations_url": "https://api.github.com/users/vigsterkr/orgs",

"received_events_url": "https://api.github.com/users/vigsterkr/received_events",

"repos_url": "https://api.github.com/users/vigsterkr/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/vigsterkr/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/vigsterkr/subscriptions",

"type": "User",

"url": "https://api.github.com/users/vigsterkr"

} | [] | open | false | null | [] | null | [] | 2022-12-15T13:17:50Z | 2022-12-15T13:22:15Z | null | CONTRIBUTOR | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5365.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5365",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5365.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5365"

} | Currently images that are provided as ndarrays, but not in `uint8` format are going to loose data. Namely, for example in a depth image where the data is in float32 format, the type-casting to uint8 will basically make the whole image blank.

`PIL.Image.fromarray` [does support mode `F`](https://pillow.readthedocs.io/en/stable/handbook/concepts.html#concept-modes).

although maybe some further metadata could be supplied via the [Image](https://huggingface.co/docs/datasets/v2.7.1/en/package_reference/main_classes#datasets.Image) object. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5365/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5365/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5364 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5364/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5364/comments | https://api.github.com/repos/huggingface/datasets/issues/5364/events | https://github.com/huggingface/datasets/pull/5364 | 1,498,360,628 | PR_kwDODunzps5Fiss1 | 5,364 | Support for writing arrow files directly with BeamWriter | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko"

} | [] | open | false | null | [] | null | [] | 2022-12-15T12:38:05Z | 2022-12-19T17:05:00Z | null | CONTRIBUTOR | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5364.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5364",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5364.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5364"

} | Make it possible to write Arrow files directly with `BeamWriter` rather than converting from Parquet to Arrow, which is sub-optimal, especially for big datasets for which Beam is primarily used. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5364/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5364/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5363 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5363/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5363/comments | https://api.github.com/repos/huggingface/datasets/issues/5363/events | https://github.com/huggingface/datasets/issues/5363 | 1,498,171,317 | I_kwDODunzps5ZTEe1 | 5,363 | Dataset.from_generator() crashes on simple example | {

"avatar_url": "https://avatars.githubusercontent.com/u/2743060?v=4",

"events_url": "https://api.github.com/users/villmow/events{/privacy}",

"followers_url": "https://api.github.com/users/villmow/followers",

"following_url": "https://api.github.com/users/villmow/following{/other_user}",

"gists_url": "https://api.github.com/users/villmow/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/villmow",

"id": 2743060,

"login": "villmow",

"node_id": "MDQ6VXNlcjI3NDMwNjA=",

"organizations_url": "https://api.github.com/users/villmow/orgs",

"received_events_url": "https://api.github.com/users/villmow/received_events",

"repos_url": "https://api.github.com/users/villmow/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/villmow/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/villmow/subscriptions",

"type": "User",

"url": "https://api.github.com/users/villmow"

} | [] | closed | false | null | [] | null | [] | 2022-12-15T10:21:28Z | 2022-12-15T11:51:33Z | 2022-12-15T11:51:33Z | NONE | null | null | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5363/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5363/timeline | null | completed | true |

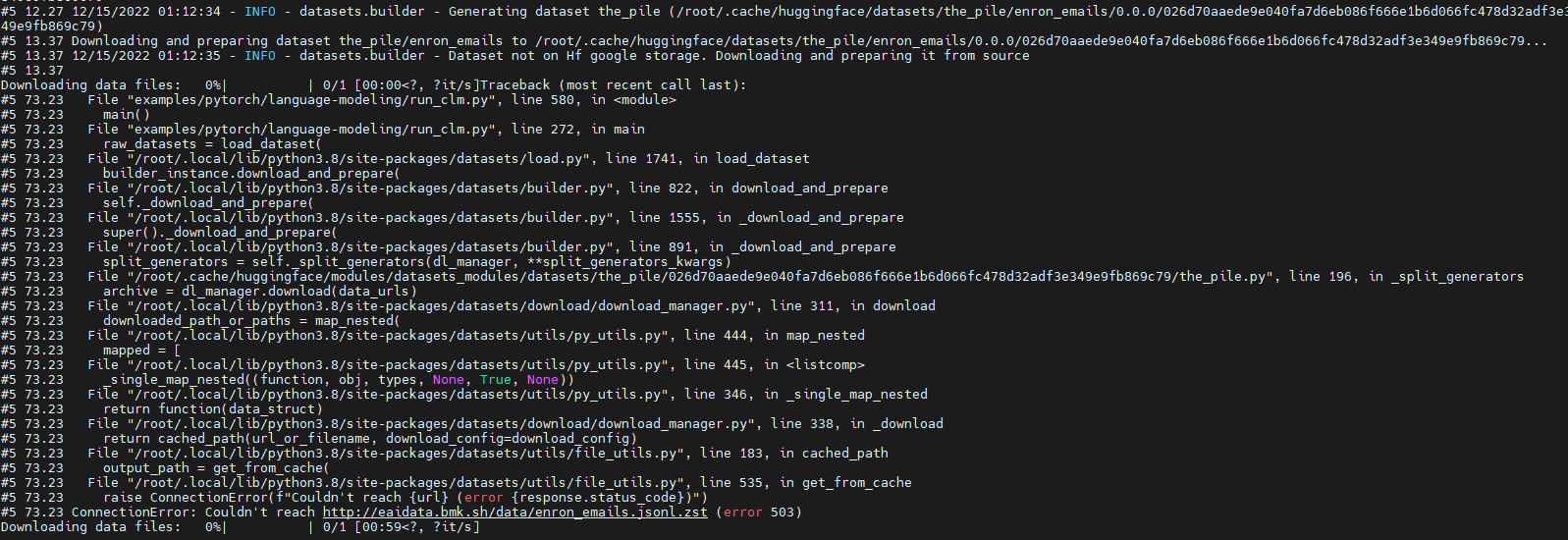

https://api.github.com/repos/huggingface/datasets/issues/5362 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5362/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5362/comments | https://api.github.com/repos/huggingface/datasets/issues/5362/events | https://github.com/huggingface/datasets/issues/5362 | 1,497,643,744 | I_kwDODunzps5ZRDrg | 5,362 | Run 'GPT-J' failure due to download dataset fail (' ConnectionError: Couldn't reach http://eaidata.bmk.sh/data/enron_emails.jsonl.zst ' ) | {

"avatar_url": "https://avatars.githubusercontent.com/u/52023469?v=4",

"events_url": "https://api.github.com/users/shaoyuta/events{/privacy}",

"followers_url": "https://api.github.com/users/shaoyuta/followers",

"following_url": "https://api.github.com/users/shaoyuta/following{/other_user}",

"gists_url": "https://api.github.com/users/shaoyuta/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/shaoyuta",

"id": 52023469,

"login": "shaoyuta",

"node_id": "MDQ6VXNlcjUyMDIzNDY5",

"organizations_url": "https://api.github.com/users/shaoyuta/orgs",

"received_events_url": "https://api.github.com/users/shaoyuta/received_events",

"repos_url": "https://api.github.com/users/shaoyuta/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/shaoyuta/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/shaoyuta/subscriptions",

"type": "User",

"url": "https://api.github.com/users/shaoyuta"

} | [] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

] | null | [] | 2022-12-15T01:23:03Z | 2022-12-15T07:45:54Z | 2022-12-15T07:45:53Z | NONE | null | null | null | ### Describe the bug

Run model "GPT-J" with dataset "the_pile" fail.

The fail out is as below:

Looks like which is due to "http://eaidata.bmk.sh/data/enron_emails.jsonl.zst" unreachable .

### Steps to reproduce the bug

Steps to reproduce this issue:

git clone https://github.com/huggingface/transformers

cd transformers

python examples/pytorch/language-modeling/run_clm.py --model_name_or_path EleutherAI/gpt-j-6B --dataset_name the_pile --dataset_config_name enron_emails --do_eval --output_dir /tmp/output --overwrite_output_dir

### Expected behavior

This issue looks like due to "http://eaidata.bmk.sh/data/enron_emails.jsonl.zst " couldn't be reached.

Is there another way to download the dataset "the_pile" ?

Is there another way to cache the dataset "the_pile" but not let the hg to download it when runtime ?

### Environment info

huggingface_hub version: 0.11.1

Platform: Linux-5.15.0-52-generic-x86_64-with-glibc2.35

Python version: 3.9.12

Running in iPython ?: No

Running in notebook ?: No

Running in Google Colab ?: No

Token path ?: /home/taosy/.huggingface/token

Has saved token ?: False

Configured git credential helpers:

FastAI: N/A

Tensorflow: N/A

Torch: N/A

Jinja2: N/A

Graphviz: N/A

Pydot: N/A | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5362/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5362/timeline | null | completed | true |

https://api.github.com/repos/huggingface/datasets/issues/5361 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5361/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5361/comments | https://api.github.com/repos/huggingface/datasets/issues/5361/events | https://github.com/huggingface/datasets/issues/5361 | 1,497,153,889 | I_kwDODunzps5ZPMFh | 5,361 | How concatenate `Audio` elements using batch mapping | {

"avatar_url": "https://avatars.githubusercontent.com/u/43239645?v=4",

"events_url": "https://api.github.com/users/bayartsogt-ya/events{/privacy}",

"followers_url": "https://api.github.com/users/bayartsogt-ya/followers",

"following_url": "https://api.github.com/users/bayartsogt-ya/following{/other_user}",

"gists_url": "https://api.github.com/users/bayartsogt-ya/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/bayartsogt-ya",

"id": 43239645,

"login": "bayartsogt-ya",

"node_id": "MDQ6VXNlcjQzMjM5NjQ1",

"organizations_url": "https://api.github.com/users/bayartsogt-ya/orgs",

"received_events_url": "https://api.github.com/users/bayartsogt-ya/received_events",

"repos_url": "https://api.github.com/users/bayartsogt-ya/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/bayartsogt-ya/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/bayartsogt-ya/subscriptions",

"type": "User",

"url": "https://api.github.com/users/bayartsogt-ya"

} | [] | open | false | null | [] | null | [] | 2022-12-14T18:13:55Z | 2022-12-15T10:53:28Z | null | NONE | null | null | null | ### Describe the bug

I am trying to do concatenate audios in a dataset e.g. `google/fleurs`.

```python

print(dataset)

# Dataset({

# features: ['path', 'audio'],

# num_rows: 24

# })

def mapper_function(batch):

# to merge every 3 audio

# np.concatnate(audios[i: i+3]) for i in range(i, len(batch), 3)

dataset = dataset.map(mapper_function, batch=True, batch_size=24)

print(dataset)

# Expected output:

# Dataset({

# features: ['path', 'audio'],

# num_rows: 8

# })

```

I tried to construct `result={}` dictionary inside the mapper function, I just found it will not work because it needs `byte` also needed :((

I'd appreciate if your share any use cases similar to my problem or any solutions really. Thanks!

cc: @lhoestq

### Steps to reproduce the bug

1. load audio dataset

2. try to merge every k audios and return as one

### Expected behavior

Merged dataset with a fewer rows. If we merge every 3 rows, then `n // 3` number of examples.

### Environment info

- `datasets` version: 2.1.0

- Platform: Linux-5.15.65+-x86_64-with-debian-bullseye-sid

- Python version: 3.7.12

- PyArrow version: 8.0.0

- Pandas version: 1.3.5 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5361/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5361/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5360 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5360/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5360/comments | https://api.github.com/repos/huggingface/datasets/issues/5360/events | https://github.com/huggingface/datasets/issues/5360 | 1,496,947,177 | I_kwDODunzps5ZOZnp | 5,360 | IterableDataset returns duplicated data using PyTorch DDP | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | open | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

}

] | null | [] | 2022-12-14T16:06:19Z | 2022-12-16T17:47:20Z | null | MEMBER | null | null | null | As mentioned in https://github.com/huggingface/datasets/issues/3423, when using PyTorch DDP the dataset ends up with duplicated data. We already check for the PyTorch `worker_info` for single node, but we should also check for `torch.distributed.get_world_size()` and `torch.distributed.get_rank()` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5360/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5360/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5359 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5359/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5359/comments | https://api.github.com/repos/huggingface/datasets/issues/5359/events | https://github.com/huggingface/datasets/pull/5359 | 1,495,297,857 | PR_kwDODunzps5FYHWm | 5,359 | Raise error if ClassLabel names is not python list | {

"avatar_url": "https://avatars.githubusercontent.com/u/1475568?v=4",

"events_url": "https://api.github.com/users/freddyheppell/events{/privacy}",

"followers_url": "https://api.github.com/users/freddyheppell/followers",

"following_url": "https://api.github.com/users/freddyheppell/following{/other_user}",

"gists_url": "https://api.github.com/users/freddyheppell/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/freddyheppell",

"id": 1475568,

"login": "freddyheppell",

"node_id": "MDQ6VXNlcjE0NzU1Njg=",

"organizations_url": "https://api.github.com/users/freddyheppell/orgs",

"received_events_url": "https://api.github.com/users/freddyheppell/received_events",

"repos_url": "https://api.github.com/users/freddyheppell/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/freddyheppell/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/freddyheppell/subscriptions",

"type": "User",

"url": "https://api.github.com/users/freddyheppell"

} | [] | open | false | null | [] | null | [] | 2022-12-13T23:04:06Z | 2022-12-16T14:06:26Z | null | NONE | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5359.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5359",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5359.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5359"

} | Checks type of names provided to ClassLabel to avoid easy and hard to debug errors (closes #5332 - see for discussion) | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5359/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5359/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5358 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5358/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5358/comments | https://api.github.com/repos/huggingface/datasets/issues/5358/events | https://github.com/huggingface/datasets/pull/5358 | 1,495,270,822 | PR_kwDODunzps5FYBcq | 5,358 | Fix `fs.open` resource leaks | {

"avatar_url": "https://avatars.githubusercontent.com/u/297847?v=4",

"events_url": "https://api.github.com/users/tkukurin/events{/privacy}",

"followers_url": "https://api.github.com/users/tkukurin/followers",

"following_url": "https://api.github.com/users/tkukurin/following{/other_user}",

"gists_url": "https://api.github.com/users/tkukurin/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/tkukurin",

"id": 297847,

"login": "tkukurin",

"node_id": "MDQ6VXNlcjI5Nzg0Nw==",

"organizations_url": "https://api.github.com/users/tkukurin/orgs",

"received_events_url": "https://api.github.com/users/tkukurin/received_events",

"repos_url": "https://api.github.com/users/tkukurin/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/tkukurin/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/tkukurin/subscriptions",

"type": "User",

"url": "https://api.github.com/users/tkukurin"

} | [] | open | false | null | [] | null | [] | 2022-12-13T22:35:51Z | 2022-12-15T17:09:22Z | null | NONE | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5358.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5358",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5358.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5358"

} | Invoking `{load,save}_from_dict` results in resource leak warnings, this should fix.

Introduces no significant logic changes. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5358/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5358/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5357 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5357/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5357/comments | https://api.github.com/repos/huggingface/datasets/issues/5357/events | https://github.com/huggingface/datasets/pull/5357 | 1,495,029,602 | PR_kwDODunzps5FXNyR | 5,357 | Support torch dataloader without torch formatting | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",